一、简易识别

用最简单的已训练好的模型对20类目标做检测。

你电脑的tensorflow + CUDA + CUDNN环境都是OK的, 同时python需要安装cv2库

{ 'aeroplane' 'bicycle' 'bird' 'boat' 'bottle' 'bus' 'car' 'cat' 'chair' 'cow' 'diningtable' 'dog' 'horse' 'motorbike' 'person' 'pottedplant' 'sheep' 'sofa' 'train' 'tvmonitor' }

下载好代码后,在checkpoints文件夹下面由ssd_300_vgg.ckpt,直接解压到当前文件夹。

找到notebooks文件夹里的ssd_notebook.ipynb,用jupyter打开。

将读入的图片改为自己的图片就行了(更改path或者将自己的图片放到demo文件夹下面),然后运行所有cells。

- path为你想要进行测试的图片目录(代码中只对该目录下的最后一个文件夹进行测试,如果要想测试多幅图片或者做成视频的方式,需大家自行修改代码)

二、demo2

在notebooks文件夹下,建立demo_test.py文件,在demo_test.py文件内写入如下代码后,直接运行demo_test.py(以下代码也是notebooks文件夹ssd_tests.ipynb内的代码,可以用notebook读取;我只是做了一些小改动)

# -*- coding:utf-8 -*- # -*- author:zzZ_CMing CSDN address:https://blog.csdn.net/zzZ_CMing # -*- 2018/07/14; 15:19 # -*- python3.5 """ address: https://blog.csdn.net/qq_35608277/article/details/78660469 本文代码来自于github中微软官方仓库 """ import os import cv2 import math import random import tensorflow as tf import matplotlib.pyplot as plt import matplotlib.cm as mpcm import matplotlib.image as mpimg from notebooks import visualization from nets import ssd_vgg_300, ssd_common, np_methods from preprocessing import ssd_vgg_preprocessing import sys # 当引用模块和运行的脚本不在同一个目录下,需在脚本开头添加如下代码: sys.path.append('./SSD-Tensorflow/') slim = tf.contrib.slim # TensorFlow session gpu_options = tf.GPUOptions(allow_growth=True) config = tf.ConfigProto(log_device_placement=False, gpu_options=gpu_options) isess = tf.InteractiveSession(config=config) l_VOC_CLASS = ['aeroplane', 'bicycle', 'bird', 'boat', 'bottle', 'bus', 'car', 'cat', 'chair', 'cow', 'diningTable', 'dog', 'horse', 'motorbike', 'person', 'pottedPlant', 'sheep', 'sofa', 'train', 'TV'] # 定义数据格式,设置占位符 net_shape = (300, 300) # 预处理,以Tensorflow backend, 将输入图片大小改成 300x300,作为下一步输入 img_input = tf.placeholder(tf.uint8, shape=(None, None, 3)) # 输入图像的通道排列形式,'NHWC'表示 [batch_size,height,width,channel] data_format = 'NHWC' # 数据预处理,将img_input输入的图像resize为300大小,labels_pre,bboxes_pre,bbox_img待解析 image_pre, labels_pre, bboxes_pre, bbox_img = ssd_vgg_preprocessing.preprocess_for_eval( img_input, None, None, net_shape, data_format, resize=ssd_vgg_preprocessing.Resize.WARP_RESIZE) # 拓展为4维变量用于输入 image_4d = tf.expand_dims(image_pre, 0) # 定义SSD模型 # 是否复用,目前我们没有在训练所以为None reuse = True if 'ssd_net' in locals() else None # 调出基于VGG神经网络的SSD模型对象,注意这是一个自定义类对象 ssd_net = ssd_vgg_300.SSDNet() # 得到预测类和预测坐标的Tensor对象,这两个就是神经网络模型的计算流程 with slim.arg_scope(ssd_net.arg_scope(data_format=data_format)): predictions, localisations, _, _ = ssd_net.net(image_4d, is_training=False, reuse=reuse) # 导入官方给出的 SSD 模型参数 ckpt_filename = '../checkpoints/ssd_300_vgg.ckpt' # ckpt_filename = '../checkpoints/VGG_VOC0712_SSD_300x300_ft_iter_120000.ckpt' isess.run(tf.global_variables_initializer()) saver = tf.train.Saver() saver.restore(isess, ckpt_filename) # 在网络模型结构中,提取搜索网格的位置 # 根据模型超参数,得到每个特征层(这里用了6个特征层,分别是4,7,8,9,10,11)的anchors_boxes ssd_anchors = ssd_net.anchors(net_shape) """ 每层的anchors_boxes包含4个arrayList,前两个List分别是该特征层下x,y坐标轴对于原图(300x300)大小的映射 第三,四个List为anchor_box的长度和宽度,同样是经过归一化映射的,根据每个特征层box数量的不同,这两个List元素 个数会变化。其中,长宽的值根据超参数anchor_sizes和anchor_ratios制定。 """ # 加载辅助作图函数 def colors_subselect(colors, num_classes=21): dt = len(colors) // num_classes sub_colors = [] for i in range(num_classes): color = colors[i * dt] if isinstance(color[0], float): sub_colors.append([int(c * 255) for c in color]) else: sub_colors.append([c for c in color]) return sub_colors def bboxes_draw_on_img(img, classes, scores, bboxes, colors, thickness=2): shape = img.shape for i in range(bboxes.shape[0]): bbox = bboxes[i] color = colors[classes[i]] # Draw bounding box... p1 = (int(bbox[0] * shape[0]), int(bbox[1] * shape[1])) p2 = (int(bbox[2] * shape[0]), int(bbox[3] * shape[1])) cv2.rectangle(img, p1[::-1], p2[::-1], color, thickness) # Draw text... s = '%s/%.3f' % (l_VOC_CLASS[int(classes[i]) - 1], scores[i]) p1 = (p1[0] - 5, p1[1]) # cv2.putText(img, s, p1[::-1], cv2.FONT_HERSHEY_DUPLEX, 1.5, color, 3) colors_plasma = colors_subselect(mpcm.plasma.colors, num_classes=21) # 主流程函数 def process_image(img, case, select_threshold=0.15, nms_threshold=.1, net_shape=(300, 300)): # select_threshold:box阈值——每个像素的box分类预测数据的得分会与box阈值比较,高于一个box阈值则认为这个box成功框到了一个对象 # nms_threshold:重合度阈值——同一对象的两个框的重合度高于该阈值,则运行下面去重函数 # 执行SSD模型,得到4维输入变量,分类预测,坐标预测,rbbox_img参数为最大检测范围,本文固定为[0,0,1,1]即全图 rimg, rpredictions, rlocalisations, rbbox_img = isess.run([image_4d, predictions, localisations, bbox_img], feed_dict={img_input: img}) # ssd_bboxes_select()函数根据每个特征层的分类预测分数,归一化后的映射坐标, # ancohor_box的大小,通过设定一个阈值计算得到每个特征层检测到的对象以及其分类和坐标 rclasses, rscores, rbboxes = np_methods.ssd_bboxes_select(rpredictions, rlocalisations, ssd_anchors, select_threshold=select_threshold, img_shape=net_shape, num_classes=21, decode=True) """ 这个函数做的事情比较多,这里说的细致一些: 首先是输入,输入的数据为每个特征层(一共6个,见上文)的: rpredictions: 分类预测数据, rlocalisations: 坐标预测数据, ssd_anchors: anchors_box数据 其中: 分类预测数据为当前特征层中每个像素的每个box的分类预测 坐标预测数据为当前特征层中每个像素的每个box的坐标预测 anchors_box数据为当前特征层中每个像素的每个box的修正数据 函数根据坐标预测数据和anchors_box数据,计算得到每个像素的每个box的中心和长宽,这个中心坐标和长宽会根据一个算法进行些许的修正, 从而得到一个更加准确的box坐标;修正的算法会在后文中详细解释,如果只是为了理解算法流程也可以不必深究这个,因为这个修正算法属于经验算 法,并没有太多逻辑可循。 修正完box和中心后,函数会计算每个像素的每个box的分类预测数据的得分,当这个分数高于一个阈值(这里是0.5)则认为这个box成功 框到了一个对象,然后将这个box的坐标数据,所属分类和分类得分导出,从而得到: rclasses:所属分类 rscores:分类得分 rbboxes:坐标 最后要注意的是,同一个目标可能会在不同的特征层都被检测到,并且他们的box坐标会有些许不同,这里并没有去掉重复的目标,而是在下文 中专门用了一个函数来去重 """ # 检测有没有超出检测边缘 rbboxes = np_methods.bboxes_clip(rbbox_img, rbboxes) rclasses, rscores, rbboxes = np_methods.bboxes_sort(rclasses, rscores, rbboxes, top_k=400) # 去重,将重复检测到的目标去掉 rclasses, rscores, rbboxes = np_methods.bboxes_nms(rclasses, rscores, rbboxes, nms_threshold=nms_threshold) # 将box的坐标重新映射到原图上(上文所有的坐标都进行了归一化,所以要逆操作一次) rbboxes = np_methods.bboxes_resize(rbbox_img, rbboxes) if case == 1: bboxes_draw_on_img(img, rclasses, rscores, rbboxes, colors_plasma, thickness=8) return img else: return rclasses, rscores, rbboxes """ # 只做目标定位,不做预测分析 case = 1 img = cv2.imread("../demo/person.jpg") img = cv2.cvtColor(img, cv2.COLOR_BGR2RGB) plt.imshow(process_image(img, case)) plt.show() """ # 做目标定位,同时做预测分析 case = 2 path = '../demo/person.jpg' # 读取图片 img = mpimg.imread(path) # 执行主流程函数 rclasses, rscores, rbboxes = process_image(img, case) # visualization.bboxes_draw_on_img(img, rclasses, rscores, rbboxes, visualization.colors_plasma) # 显示分类结果图 visualization.plt_bboxes(img, rclasses, rscores, rbboxes), rscores, rbboxes

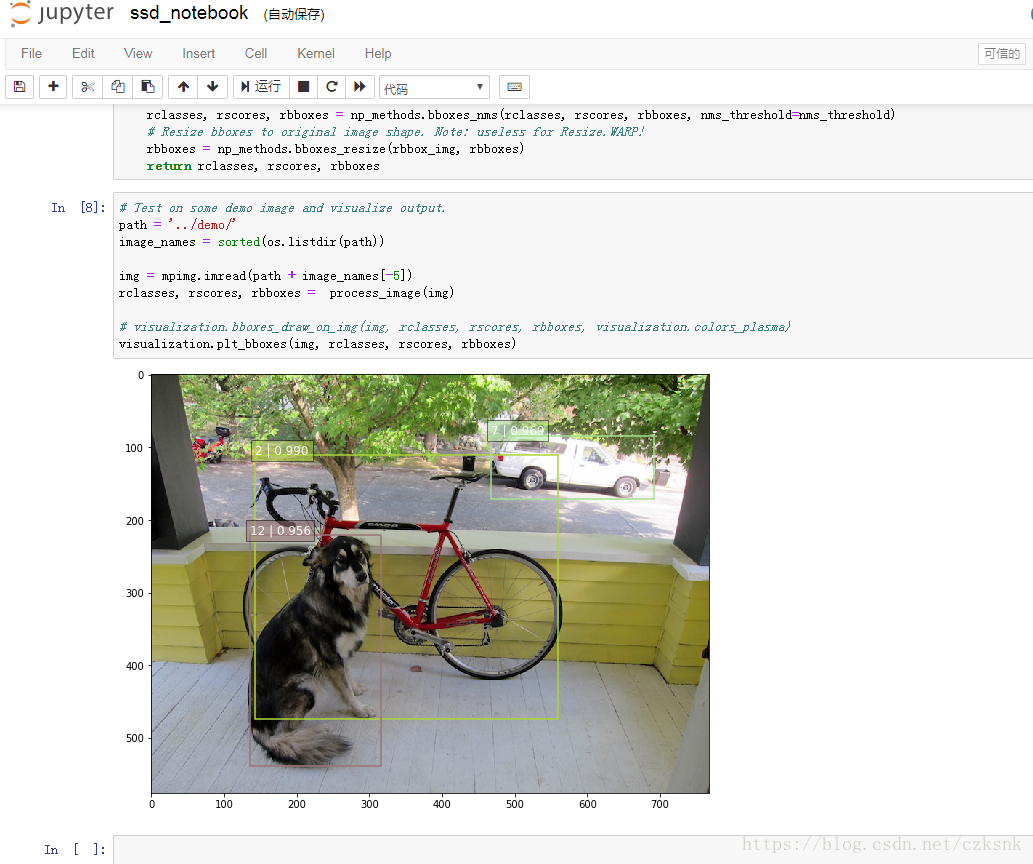

会得到如下图示,如图已经成功的把物体标注出来,每个标记框中前一个数是标签项,后一个是预测的准确率;

# 标签项与其对应的标签内容

dict = {1:'aeroplane', 2:'bicycle', 3:'bird', 4:'boat', 5:'bottle',

6:'bus', 7:'car', 8:'cat', 9:'chair', 10:'cow',

11:'diningTable', 12:'dog', 13:'horse', 14:'motorbike', 15:'person',

16:'pottedPlant', 17:'sheep', 18:'sofa', 19:'train', 20:'TV'}

三、demo3 视频定位检测

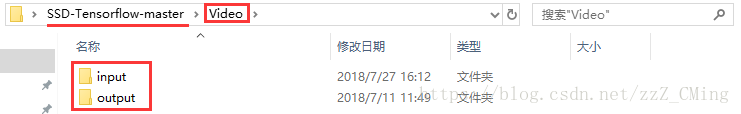

以上demo文件夹内都只是图片,如果你想在视频中标记物体——首先你需要拍一段视频,建议不要太长不然你要跑很久,然后需要在主目录下建立Video文件夹,在其下建立input、output文件夹,如下图所示:

再将拍摄的视频存入input文件夹下,注意视频的名称哦!最后在主目录下建立demo_Video.py文件,存入如下代码,运行demo_Video.py

请注意:166行的文件名要与文件夹视频名一致

请注意:166行的文件名要与文件夹视频名一致

1 # -*- coding:utf-8 -*- 2 # -*- author:zzZ_CMing CSDN address:https://blog.csdn.net/zzZ_CMing 3 # -*- 2018/07/09; 15:19 4 # -*- python3.5 5 import os 6 import cv2 7 import math 8 import random 9 import tensorflow as tf 10 import matplotlib.pyplot as plt 11 import matplotlib.cm as mpcm 12 import matplotlib.image as mpimg 13 from notebooks import visualization 14 from nets import ssd_vgg_300, ssd_common, np_methods 15 from preprocessing import ssd_vgg_preprocessing 16 import sys 17 18 # 当引用模块和运行的脚本不在同一个目录下,需在脚本开头添加如下代码: 19 sys.path.append('./SSD-Tensorflow/') 20 21 slim = tf.contrib.slim 22 23 # TensorFlow session 24 gpu_options = tf.GPUOptions(allow_growth=True) 25 config = tf.ConfigProto(log_device_placement=False, gpu_options=gpu_options) 26 isess = tf.InteractiveSession(config=config) 27 28 l_VOC_CLASS = ['aeroplane', 'bicycle', 'bird', 'boat', 'bottle', 29 'bus', 'car', 'cat', 'chair', 'cow', 30 'diningTable', 'dog', 'horse', 'motorbike', 'person', 31 'pottedPlant', 'sheep', 'sofa', 'train', 'TV'] 32 33 # 定义数据格式,设置占位符 34 net_shape = (300, 300) 35 # 预处理,以Tensorflow backend, 将输入图片大小改成 300x300,作为下一步输入 36 img_input = tf.placeholder(tf.uint8, shape=(None, None, 3)) 37 # 输入图像的通道排列形式,'NHWC'表示 [batch_size,height,width,channel] 38 data_format = 'NHWC' 39 40 # 数据预处理,将img_input输入的图像resize为300大小,labels_pre,bboxes_pre,bbox_img待解析 41 image_pre, labels_pre, bboxes_pre, bbox_img = ssd_vgg_preprocessing.preprocess_for_eval( 42 img_input, None, None, net_shape, data_format, 43 resize=ssd_vgg_preprocessing.Resize.WARP_RESIZE) 44 # 拓展为4维变量用于输入 45 image_4d = tf.expand_dims(image_pre, 0) 46 47 # 定义SSD模型 48 # 是否复用,目前我们没有在训练所以为None 49 reuse = True if 'ssd_net' in locals() else None 50 # 调出基于VGG神经网络的SSD模型对象,注意这是一个自定义类对象 51 ssd_net = ssd_vgg_300.SSDNet() 52 # 得到预测类和预测坐标的Tensor对象,这两个就是神经网络模型的计算流程 53 with slim.arg_scope(ssd_net.arg_scope(data_format=data_format)): 54 predictions, localisations, _, _ = ssd_net.net(image_4d, is_training=False, reuse=reuse) 55 56 # 导入官方给出的 SSD 模型参数 57 ckpt_filename = '../checkpoints/ssd_300_vgg.ckpt' 58 # ckpt_filename = '../checkpoints/VGG_VOC0712_SSD_300x300_ft_iter_120000.ckpt' 59 isess.run(tf.global_variables_initializer()) 60 saver = tf.train.Saver() 61 saver.restore(isess, ckpt_filename) 62 63 # 在网络模型结构中,提取搜索网格的位置 64 # 根据模型超参数,得到每个特征层(这里用了6个特征层,分别是4,7,8,9,10,11)的anchors_boxes 65 ssd_anchors = ssd_net.anchors(net_shape) 66 """ 67 每层的anchors_boxes包含4个arrayList,前两个List分别是该特征层下x,y坐标轴对于原图(300x300)大小的映射 68 第三,四个List为anchor_box的长度和宽度,同样是经过归一化映射的,根据每个特征层box数量的不同,这两个List元素 69 个数会变化。其中,长宽的值根据超参数anchor_sizes和anchor_ratios制定。 70 """ 71 72 73 # 加载辅助作图函数 74 def colors_subselect(colors, num_classes=21): 75 dt = len(colors) // num_classes 76 sub_colors = [] 77 for i in range(num_classes): 78 color = colors[i * dt] 79 if isinstance(color[0], float): 80 sub_colors.append([int(c * 255) for c in color]) 81 else: 82 sub_colors.append([c for c in color]) 83 return sub_colors 84 85 86 def bboxes_draw_on_img(img, classes, scores, bboxes, colors, thickness=2): 87 shape = img.shape 88 for i in range(bboxes.shape[0]): 89 bbox = bboxes[i] 90 color = colors[classes[i]] 91 # Draw bounding box... 92 p1 = (int(bbox[0] * shape[0]), int(bbox[1] * shape[1])) 93 p2 = (int(bbox[2] * shape[0]), int(bbox[3] * shape[1])) 94 cv2.rectangle(img, p1[::-1], p2[::-1], color, thickness) 95 # Draw text... 96 s = '%s/%.3f' % (l_VOC_CLASS[int(classes[i]) - 1], scores[i]) 97 p1 = (p1[0] - 5, p1[1]) 98 # cv2.putText(img, s, p1[::-1], cv2.FONT_HERSHEY_DUPLEX, 1.5, color, 3) 99 100 101 colors_plasma = colors_subselect(mpcm.plasma.colors, num_classes=21) 102 103 104 # 主流程函数 105 def process_image(img, select_threshold=0.2, nms_threshold=.1, net_shape=(300, 300)): 106 # select_threshold:box阈值——每个像素的box分类预测数据的得分会与box阈值比较,高于一个box阈值则认为这个box成功框到了一个对象 107 # nms_threshold:重合度阈值——同一对象的两个框的重合度高于该阈值,则运行下面去重函数 108 109 # 执行SSD模型,得到4维输入变量,分类预测,坐标预测,rbbox_img参数为最大检测范围,本文固定为[0,0,1,1]即全图 110 rimg, rpredictions, rlocalisations, rbbox_img = isess.run([image_4d, predictions, localisations, bbox_img], 111 feed_dict={img_input: img}) 112 113 # ssd_bboxes_select函数根据每个特征层的分类预测分数,归一化后的映射坐标, 114 # ancohor_box的大小,通过设定一个阈值计算得到每个特征层检测到的对象以及其分类和坐标 115 rclasses, rscores, rbboxes = np_methods.ssd_bboxes_select(rpredictions, rlocalisations, ssd_anchors, 116 select_threshold=select_threshold, 117 img_shape=net_shape, 118 num_classes=21, decode=True) 119 120 """ 121 这个函数做的事情比较多,这里说的细致一些: 122 首先是输入,输入的数据为每个特征层(一共6个,见上文)的: 123 分类预测数据(rpredictions), 124 坐标预测数据(rlocalisations), 125 anchors_box数据(ssd_anchors) 126 其中: 127 分类预测数据为当前特征层中每个像素的每个box的分类预测 128 坐标预测数据为当前特征层中每个像素的每个box的坐标预测 129 anchors_box数据为当前特征层中每个像素的每个box的修正数据 130 131 函数根据坐标预测数据和anchors_box数据,计算得到每个像素的每个box的中心和长宽,这个中心坐标和长宽会根据一个算法进行些许的修正, 132 从而得到一个更加准确的box坐标;修正的算法会在后文中详细解释,如果只是为了理解算法流程也可以不必深究这个,因为这个修正算法属于经验算 133 法,并没有太多逻辑可循。 134 修正完box和中心后,函数会计算每个像素的每个box的分类预测数据的得分,当这个分数高于一个阈值(这里是0.5)则认为这个box成功 135 框到了一个对象,然后将这个box的坐标数据,所属分类和分类得分导出,从而得到: 136 rclasses:所属分类 137 rscores:分类得分 138 rbboxes:坐标 139 140 最后要注意的是,同一个目标可能会在不同的特征层都被检测到,并且他们的box坐标会有些许不同,这里并没有去掉重复的目标,而是在下文 141 中专门用了一个函数来去重 142 """ 143 144 # 检测有没有超出检测边缘 145 rbboxes = np_methods.bboxes_clip(rbbox_img, rbboxes) 146 rclasses, rscores, rbboxes = np_methods.bboxes_sort(rclasses, rscores, rbboxes, top_k=400) 147 # 去重,将重复检测到的目标去掉 148 rclasses, rscores, rbboxes = np_methods.bboxes_nms(rclasses, rscores, rbboxes, nms_threshold=nms_threshold) 149 # 将box的坐标重新映射到原图上(上文所有的坐标都进行了归一化,所以要逆操作一次) 150 rbboxes = np_methods.bboxes_resize(rbbox_img, rbboxes) 151 152 bboxes_draw_on_img(img, rclasses, rscores, rbboxes, colors_plasma, thickness=8) 153 return img 154 155 156 # 视频物体定位 157 import imageio 158 imageio.plugins.ffmpeg.download() 159 from moviepy.editor import VideoFileClip 160 161 def process_video (input_path, output_path): 162 video = VideoFileClip(input_path) 163 result = video.fl_image(process_image) 164 result.write_videofile(output_path, fps=40) 165 166 video_name = "3.mp4" 167 input_path = "./Video/input/" + video_name 168 output_path = "./Video/output/output_" + video_name 169 process_video(input_path,output_path )

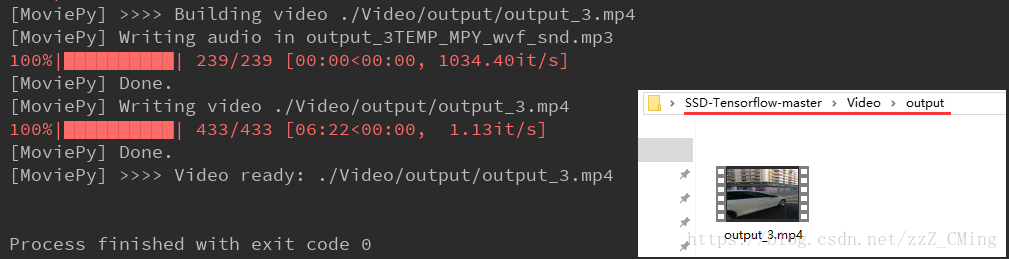

经过一段时间的等待,终于跑完程序;

打开Video/input文件夹,查看输出的视频是什么样子的吧!

四、demo4-视频(显示标签)

notebook目录下新建ssd_notebook_camera.py:

# coding: utf-8 import os import math import random import numpy as np import tensorflow as tf import cv2 slim = tf.contrib.slim # get_ipython().magic('matplotlib inline') import matplotlib.pyplot as plt import matplotlib.image as mpimg import sys sys.path.append('../') from nets import ssd_vgg_300, ssd_common, np_methods from preprocessing import ssd_vgg_preprocessing from notebooks import visualization_camera # visualization # TensorFlow session: grow memory when needed. TF, DO NOT USE ALL MY GPU MEMORY!!! gpu_options = tf.GPUOptions(allow_growth=True) config = tf.ConfigProto(log_device_placement=False, gpu_options=gpu_options) isess = tf.InteractiveSession(config=config) # ## SSD 300 Model # # The SSD 300 network takes 300x300 image inputs. In order to feed any image, the latter is resize to this input shape (i.e.`Resize.WARP_RESIZE`). Note that even though it may change the ratio width / height, the SSD model performs well on resized images (and it is the default behaviour in the original Caffe implementation). # # SSD anchors correspond to the default bounding boxes encoded in the network. The SSD net output provides offset on the coordinates and dimensions of these anchors. # Input placeholder. net_shape = (300, 300) data_format = 'NHWC' img_input = tf.placeholder(tf.uint8, shape=(None, None, 3)) # Evaluation pre-processing: resize to SSD net shape. image_pre, labels_pre, bboxes_pre, bbox_img = ssd_vgg_preprocessing.preprocess_for_eval( img_input, None, None, net_shape, data_format, resize=ssd_vgg_preprocessing.Resize.WARP_RESIZE) image_4d = tf.expand_dims(image_pre, 0) # Define the SSD model. reuse = True if 'ssd_net' in locals() else None ssd_net = ssd_vgg_300.SSDNet() with slim.arg_scope(ssd_net.arg_scope(data_format=data_format)): predictions, localisations, _, _ = ssd_net.net(image_4d, is_training=False, reuse=reuse) # Restore SSD model. ckpt_filename = 'E:/SSD/initial_SSD/SSD-Tensorflow-master/checkpoints/ssd_300_vgg.ckpt' # 可更改为自己的模型路径 # ckpt_filename = '../checkpoints/VGG_VOC0712_SSD_300x300_ft_iter_120000.ckpt' isess.run(tf.global_variables_initializer()) saver = tf.train.Saver() saver.restore(isess, ckpt_filename) # SSD default anchor boxes. ssd_anchors = ssd_net.anchors(net_shape) # ## Post-processing pipeline # # The SSD outputs need to be post-processed to provide proper detections. Namely, we follow these common steps: # # * Select boxes above a classification threshold; # * Clip boxes to the image shape; # * Apply the Non-Maximum-Selection algorithm: fuse together boxes whose Jaccard score > threshold; # * If necessary, resize bounding boxes to original image shape. # Main image processing routine. def process_image(img, select_threshold=0.5, nms_threshold=.45, net_shape=(300, 300)): # Run SSD network. rimg, rpredictions, rlocalisations, rbbox_img = isess.run([image_4d, predictions, localisations, bbox_img], feed_dict={img_input: img}) # Get classes and bboxes from the net outputs. rclasses, rscores, rbboxes = np_methods.ssd_bboxes_select( rpredictions, rlocalisations, ssd_anchors, select_threshold=select_threshold, img_shape=net_shape, num_classes=21, decode=True) rbboxes = np_methods.bboxes_clip(rbbox_img, rbboxes) rclasses, rscores, rbboxes = np_methods.bboxes_sort(rclasses, rscores, rbboxes, top_k=400) rclasses, rscores, rbboxes = np_methods.bboxes_nms(rclasses, rscores, rbboxes, nms_threshold=nms_threshold) # Resize bboxes to original image shape. Note: useless for Resize.WARP! rbboxes = np_methods.bboxes_resize(rbbox_img, rbboxes) return rclasses, rscores, rbboxes # # Test on some demo image and visualize output. # path = '../demo/' # image_names = sorted(os.listdir(path)) # img = mpimg.imread(path + image_names[-5]) # rclasses, rscores, rbboxes = process_image(img) # # visualization.bboxes_draw_on_img(img, rclasses, rscores, rbboxes, visualization.colors_plasma) # visualization.plt_bboxes(img, rclasses, rscores, rbboxes) ##### following are added for camera demo#### cap = cv2.VideoCapture(r'E:/SSD/initial_SSD/SSD-Tensorflow-master/demo_video/01.mp4') fps = cap.get(cv2.CAP_PROP_FPS) size = (int(cap.get(cv2.CAP_PROP_FRAME_WIDTH)), int(cap.get(cv2.CAP_PROP_FRAME_HEIGHT))) fourcc = cap.get(cv2.CAP_PROP_FOURCC) # fourcc = cv2.CAP_PROP_FOURCC(*'CVID') print('fps=%d,size=%r,fourcc=%r' % (fps, size, fourcc)) delay = 30 / int(fps) while (cap.isOpened()): ret, frame = cap.read() if ret == True: # image = Image.open(image_path) # gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY) image = frame # the array based representation of the image will be used later in order to prepare the # result image with boxes and labels on it. image_np = image # image_np = load_image_into_numpy_array(image) # Expand dimensions since the model expects images to have shape: [1, None, None, 3] image_np_expanded = np.expand_dims(image_np, axis=0) # Actual detection. rclasses, rscores, rbboxes = process_image(image_np) # Visualization of the results of a detection. visualization_camera.bboxes_draw_on_img(image_np, rclasses, rscores, rbboxes) # plt.figure(figsize=IMAGE_SIZE) # plt.imshow(image_np) cv2.imshow('frame', image_np) cv2.waitKey(np.uint(delay)) print('Ongoing...') else: break cap.release() cv2.destroyAllWindows()

此外还要新建visualization.py:

1 # Copyright 2017 Paul Balanca. All Rights Reserved. 2 # 3 # Licensed under the Apache License, Version 2.0 (the "License"); 4 # you may not use this file except in compliance with the License. 5 # You may obtain a copy of the License at 6 # 7 # http://www.apache.org/licenses/LICENSE-2.0 8 # 9 # Unless required by applicable law or agreed to in writing, software 10 # distributed under the License is distributed on an "AS IS" BASIS, 11 # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. 12 # See the License for the specific language governing permissions and 13 # limitations under the License. 14 # ============================================================================== 15 import cv2 16 import random 17 18 import matplotlib.pyplot as plt 19 import matplotlib.image as mpimg 20 import matplotlib.cm as mpcm 21 #这是一个mixin类,用于支持标量数据到RGBA映射。ScalarMappable在从给定的colormap返回RGBA颜色之前使用数据规范化。 22 23 def num2class(n): 24 import datasets.pascalvoc_2007 as pas 25 x = pas.pascalvoc_common.VOC_LABELS.items() 26 for name, item in x: 27 if n in item: 28 # print(name) 29 return name 30 # =========================================================================== # 31 # Some colormaps. 32 # =========================================================================== # 33 def colors_subselect(colors, num_classes=21): 34 dt = len(colors) // num_classes 35 sub_colors = [] 36 for i in range(num_classes): 37 color = colors[i * dt] 38 if isinstance(color[0], float): 39 sub_colors.append([int(c * 255) for c in color]) 40 else: 41 sub_colors.append([c for c in color]) 42 return sub_colors 43 44 45 colors_plasma = colors_subselect(mpcm.plasma.colors, num_classes=21) 46 colors_tableau = [(255, 255, 255), (31, 119, 180), (174, 199, 232), (255, 127, 14), (255, 187, 120), 47 (44, 160, 44), (152, 223, 138), (214, 39, 40), (255, 152, 150), 48 (148, 103, 189), (197, 176, 213), (140, 86, 75), (196, 156, 148), 49 (227, 119, 194), (247, 182, 210), (127, 127, 127), (199, 199, 199), 50 (188, 189, 34), (219, 219, 141), (23, 190, 207), (158, 218, 229)] 51 52 53 # =========================================================================== # 54 # OpenCV drawing. 55 # =========================================================================== # 56 def draw_lines(img, lines, color=[255, 0, 0], thickness=2): 57 """Draw a collection of lines on an image. 58 """ 59 for line in lines: 60 for x1, y1, x2, y2 in line: 61 cv2.line(img, (x1, y1), (x2, y2), color, thickness) 62 63 64 def draw_rectangle(img, p1, p2, color=[255, 0, 0], thickness=2): 65 cv2.rectangle(img, p1[::-1], p2[::-1], color, thickness) 66 67 68 def draw_bbox(img, bbox, shape, label, color=[255, 0, 0], thickness=2): 69 p1 = (int(bbox[0] * shape[0]), int(bbox[1] * shape[1])) 70 p2 = (int(bbox[2] * shape[0]), int(bbox[3] * shape[1])) 71 cv2.rectangle(img, p1[::-1], p2[::-1], color, thickness) 72 p1 = (p1[0] + 15, p1[1]) 73 cv2.putText(img, str(label), p1[::-1], cv2.FONT_HERSHEY_DUPLEX, 0.5, color, 1) 74 75 76 def bboxes_draw_on_img(img, classes, scores, bboxes, colors=dict(), thickness=2): 77 shape = img.shape 78 ####add 20180516##### 79 # colors=dict() 80 ####add ############# 81 for i in range(bboxes.shape[0]): 82 bbox = bboxes[i] 83 if classes[i] not in colors: 84 colors[classes[i]] = (random.random(), random.random(), random.random()) 85 p1 = (int(bbox[0] * shape[0]), int(bbox[1] * shape[1])) 86 p2 = (int(bbox[2] * shape[0]), int(bbox[3] * shape[1])) 87 cv2.rectangle(img, p1[::-1], p2[::-1], colors[classes[i]], thickness) 88 s = '%s/%.3f' % (num2class(classes[i]), scores[i]) 89 p1 = (p1[0] - 5, p1[1]) 90 cv2.putText(img, s, p1[::-1], cv2.FONT_HERSHEY_DUPLEX, 0.4, colors[classes[i]], 1) 91 92 # =========================================================================== # 93 94 95 # Matplotlib show... 96 # =========================================================================== # 97 def plt_bboxes(img, classes, scores, bboxes, figsize=(10, 10), linewidth=1.5): 98 """Visualize bounding boxes. Largely inspired by SSD-MXNET! 99 """ 100 fig = plt.figure(figsize=figsize) 101 plt.imshow(img) 102 height = img.shape[0] 103 width = img.shape[1] 104 colors = dict() 105 for i in range(classes.shape[0]): 106 cls_id = int(classes[i]) 107 if cls_id >= 0: 108 score = scores[i] 109 if cls_id not in colors: 110 colors[cls_id] = (random.random(), random.random(), random.random()) 111 ymin = int(bboxes[i, 0] * height) 112 xmin = int(bboxes[i, 1] * width) 113 ymax = int(bboxes[i, 2] * height) 114 xmax = int(bboxes[i, 3] * width) 115 rect = plt.Rectangle((xmin, ymin), xmax - xmin, 116 ymax - ymin, fill=False, 117 edgecolor=colors[cls_id], 118 linewidth=linewidth) 119 plt.gca().add_patch(rect) 120 ##class_name = str(cls_id) #commented 20180516 121 #### added 20180516##### 122 class_name = num2class(cls_id) 123 #### added end ######### 124 plt.gca().text(xmin, ymin - 2, 125 '{:s} | {:.3f}'.format(class_name, score), 126 bbox=dict(facecolor=colors[cls_id], alpha=0.5), 127 fontsize=12, color='white') 128 plt.show()