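This script uses BeautifulSoup to scrape a site: it builds the URL of each page in turn, parses the page, and appends the extracted titles to a text file: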

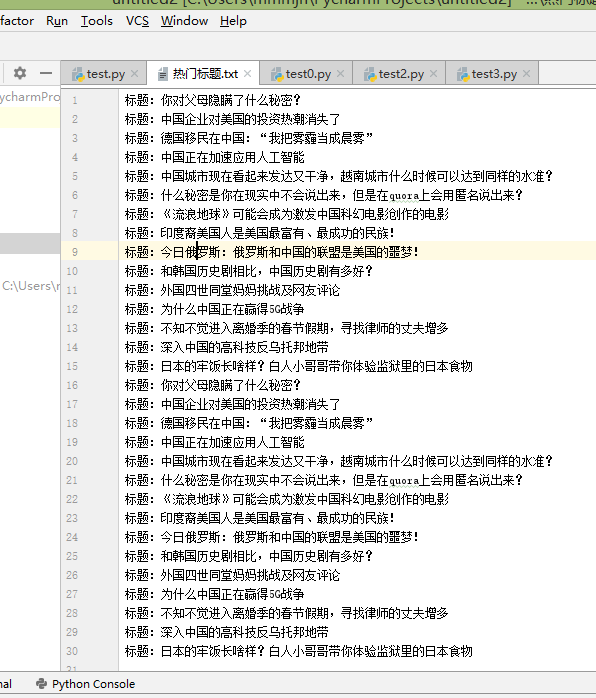

Result:

Source code:

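    from urllib.request import urlopen

    from bs4 import BeautifulSoup

    # Append the scraped titles to a UTF-8 text file
    # (the file name means "popular titles").
    with open("热门标题.txt", "a", encoding="utf-8") as f:
        for i in range(2):  # pages wtfy-0.html and wtfy-1.html
            url = "http://www.ltaaa.com/wtfy-{}.html".format(i)
            html = urlopen(url).read()
            soup = BeautifulSoup(html, "html.parser")
            # CSS selector: every <a> inside <div class="dtop">
            titles = soup.select("div[class='dtop'] a")
            for title in titles:
                # Tag text and the value of the href attribute
                print(title.get_text(), title.get("href"))
                # "标题" means "title"; write one title per line
                f.write("标题:{}\n".format(title.get_text()))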