1. Forward pass:

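```cpp
template <typename Dtype>
void SoftmaxLayer<Dtype>::Forward_cpu(const vector<Blob<Dtype>*>& bottom,
    const vector<Blob<Dtype>*>& top) {
  const Dtype* bottom_data = bottom[0]->cpu_data();
  Dtype* top_data = top[0]->mutable_cpu_data();
  Dtype* scale_data = scale_.mutable_cpu_data();  // scratch buffer for intermediate results
  int channels = bottom[0]->shape(softmax_axis_);  // size of the softmax axis (number of classes)
  // dim = channels * inner_num_: the number of elements in one outer slice.
  // count() is the total number of elements in the blob; outer_num_ is the
  // product of the dimensions before softmax_axis_ (e.g. the batch size N).
  int dim = bottom[0]->count() / outer_num_;
  caffe_copy(bottom[0]->count(), bottom_data, top_data);  // first copy the input into the output buffer
  // We need to subtract the max to avoid numerical issues, compute the exp,
  // and then normalize.
  for (int i = 0; i < outer_num_; ++i) {
    // Initialize scale_data with the first channel plane, then take the
    // per-position max over all channels.
    caffe_copy(inner_num_, bottom_data + i * dim, scale_data);
    for (int j = 0; j < channels; j++) {
      for (int k = 0; k < inner_num_; k++) {
        scale_data[k] = std::max(scale_data[k],
            bottom_data[i * dim + j * inner_num_ + k]);
      }
    }
    // Subtract the max from every channel of the current output slice (rank-1 GEMM):
    // top_data (channels x inner_num_) -= sum_multiplier_ (channels x 1, all ones) * scale_data (1 x inner_num_)
    caffe_cpu_gemm<Dtype>(CblasNoTrans, CblasNoTrans, channels, inner_num_,
        1, -1., sum_multiplier_.cpu_data(), scale_data, 1., top_data);
    // exponentiation
    caffe_exp<Dtype>(dim, top_data, top_data);
    // sum after exp: scale_data (inner_num_) = top_data^T (inner_num_ x channels) * ones (channels)
    caffe_cpu_gemv<Dtype>(CblasTrans, channels, inner_num_, 1.,
        top_data, sum_multiplier_.cpu_data(), 0., scale_data);
    // division: normalize each channel plane; this loop also advances
    // top_data to the start of the next outer slice
    for (int j = 0; j < channels; j++) {
      caffe_div(inner_num_, top_data, scale_data, top_data);
      top_data += inner_num_;
    }
  }
}
```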
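
To make the indexing easier to follow outside Caffe, here is a minimal standalone sketch that mirrors the loop structure of `Forward_cpu`, with plain loops in place of `caffe_cpu_gemm` and `caffe_cpu_gemv`. The function name `softmax_forward` and the use of `std::vector<double>` are assumptions for illustration, not Caffe API. Subtracting the per-position max $m$ does not change the result, since $e^{z_i-m}/\sum_c e^{z_c-m} = e^{z_i}/\sum_c e^{z_c}$, but it keeps every exponent at or below zero, so `exp` cannot overflow.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <vector>

// Hypothetical standalone sketch (not Caffe API) of SoftmaxLayer::Forward_cpu.
// The input is viewed as [outer_num][channels][inner_num]; softmax runs over
// the channel axis independently at every (outer, inner) position.
std::vector<double> softmax_forward(const std::vector<double>& bottom,
                                    int outer_num, int channels, int inner_num) {
  const int dim = channels * inner_num;  // elements per outer slice
  assert(static_cast<int>(bottom.size()) == outer_num * dim);
  std::vector<double> top(bottom);       // like caffe_copy(bottom -> top)
  std::vector<double> scale(inner_num);  // scratch buffer, like scale_
  for (int i = 0; i < outer_num; ++i) {
    double* slice = top.data() + i * dim;
    // Per-position max over channels, starting from the first channel plane.
    std::copy(bottom.begin() + i * dim, bottom.begin() + i * dim + inner_num,
              scale.begin());
    for (int j = 0; j < channels; ++j)
      for (int k = 0; k < inner_num; ++k)
        scale[k] = std::max(scale[k], bottom[i * dim + j * inner_num + k]);
    // What the rank-1 GEMM does: slice[j][k] -= 1.0 * scale[k] for every j.
    for (int j = 0; j < channels; ++j)
      for (int k = 0; k < inner_num; ++k)
        slice[j * inner_num + k] -= scale[k];
    // Exponentiate the whole slice (like caffe_exp over dim elements).
    for (int e = 0; e < dim; ++e) slice[e] = std::exp(slice[e]);
    // What the GEMV does: scale[k] = sum over channels j of slice[j][k].
    std::fill(scale.begin(), scale.end(), 0.0);
    for (int j = 0; j < channels; ++j)
      for (int k = 0; k < inner_num; ++k)
        scale[k] += slice[j * inner_num + k];
    // Normalize every channel plane (like the caffe_div loop).
    for (int j = 0; j < channels; ++j)
      for (int k = 0; k < inner_num; ++k)
        slice[j * inner_num + k] /= scale[k];
  }
  return top;
}
```

For example, `softmax_forward({1000.0, 0.0, 1000.0, 0.0}, 1, 2, 2)` (two channels, two positions) returns `{0.5, 0.5, 0.5, 0.5}`, whereas evaluating `std::exp(1000.0)` directly would overflow a `double` to infinity.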

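By convention, in Caffe layers that carry labels (data layers, and loss layers such as SoftmaxWithLoss), index 0 holds the data and index 1 holds the labels, and the same applies to bottom. The plain Softmax layer takes exactly one bottom and produces exactly one top, so only bottom[0] and top[0] appear above.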

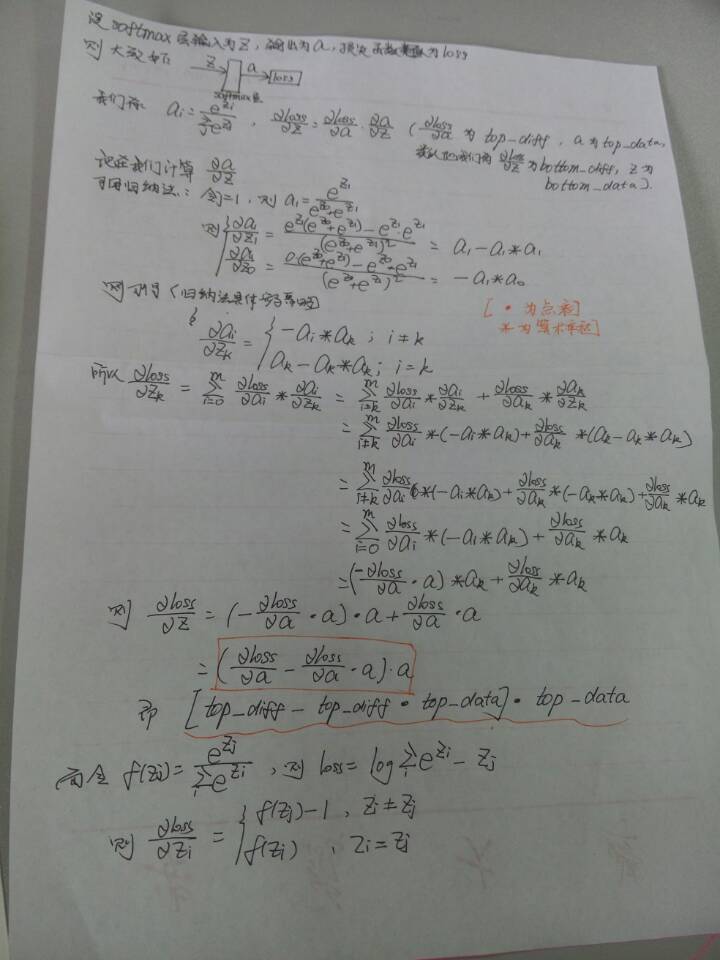

2. Backward pass:

```cpp
template <typename Dtype>
void SoftmaxLayer<Dtype>::Backward_cpu(const vector<Blob<Dtype>*>& top,
    const vector<bool>& propagate_down,
    const vector<Blob<Dtype>*>& bottom) {
  const Dtype* top_diff = top[0]->cpu_diff();
  const Dtype* top_data = top[0]->cpu_data();
  Dtype* bottom_diff = bottom[0]->mutable_cpu_diff();
  Dtype* scale_data = scale_.mutable_cpu_data();
  int channels = top[0]->shape(softmax_axis_);
  int dim = top[0]->count() / outer_num_;
  caffe_copy(top[0]->count(), top_diff, bottom_diff);  // initialize bottom_diff with top_diff
  for (int i = 0; i < outer_num_; ++i) {
    // Compute the dot product of top_diff (still held in bottom_diff) and
    // top_data along the channel axis (stride inner_num_) for every inner
    // position, then subtract it from bottom_diff.
    for (int k = 0; k < inner_num_; ++k) {
      scale_data[k] = caffe_cpu_strided_dot<Dtype>(channels,
          bottom_diff + i * dim + k, inner_num_,
          top_data + i * dim + k, inner_num_);
    }
    // subtraction: bottom_diff (channels x inner_num_) -= ones (channels x 1) * scale_data (1 x inner_num_)
    caffe_cpu_gemm<Dtype>(CblasNoTrans, CblasNoTrans, channels, inner_num_, 1,
        -1., sum_multiplier_.cpu_data(), scale_data, 1., bottom_diff + i * dim);
  }
  // elementwise multiplication: bottom_diff = (top_diff - dot) .* top_data
  caffe_mul(top[0]->count(), bottom_diff, top_data, bottom_diff);
}
```
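
A matching standalone sketch of `Backward_cpu`, again with plain loops instead of `caffe_cpu_strided_dot`, `caffe_cpu_gemm` and `caffe_mul`. The name `softmax_backward` and the `std::vector<double>` interface are assumptions for illustration, not Caffe API. For every (outer, inner) position it computes `bottom_diff[j] = top_data[j] * (top_diff[j] - sum_c top_diff[c] * top_data[c])`. Unlike the Caffe code, it subtracts each dot product as soon as it is computed; this gives the same result because the dot for position `k` only reads entries at position `k`.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical standalone sketch (not Caffe API) of SoftmaxLayer::Backward_cpu.
// top_data holds the softmax output y and top_diff holds dL/dy, both viewed as
// [outer_num][channels][inner_num]. Returns dL/dz in the same layout.
std::vector<double> softmax_backward(const std::vector<double>& top_data,
                                     const std::vector<double>& top_diff,
                                     int outer_num, int channels, int inner_num) {
  const int dim = channels * inner_num;
  assert(top_data.size() == top_diff.size());
  assert(static_cast<int>(top_data.size()) == outer_num * dim);
  std::vector<double> bottom_diff(top_diff);  // like caffe_copy(top_diff -> bottom_diff)
  for (int i = 0; i < outer_num; ++i) {
    for (int k = 0; k < inner_num; ++k) {
      // Strided dot over channels (stride inner_num), like caffe_cpu_strided_dot.
      double dot = 0.0;
      for (int j = 0; j < channels; ++j) {
        const int idx = i * dim + j * inner_num + k;
        dot += bottom_diff[idx] * top_data[idx];
      }
      // Subtract the dot from every channel at this position (the rank-1 GEMM).
      for (int j = 0; j < channels; ++j)
        bottom_diff[i * dim + j * inner_num + k] -= dot;
    }
  }
  // Elementwise multiplication by y (like caffe_mul).
  for (std::size_t e = 0; e < bottom_diff.size(); ++e)
    bottom_diff[e] *= top_data[e];
  return bottom_diff;
}
```

For example, with `y = {0.2, 0.3, 0.5}` and `top_diff = {1, 0, 0}` (one position, three channels) the dot product is 0.2, so the result is `{0.16, -0.06, -0.1}`. A `top_diff` that is constant across channels yields a zero gradient, because adding a constant to every input leaves the softmax output unchanged.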

Explanation:

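Addendum: in the last part of the explanation, the $Z_i \neq Z_j$ and $Z_i = Z_j$ cases (that is, the $i \neq j$ and $i = j$ cases of the derivative) are written the wrong way round; please keep this in mind.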
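
For reference, here is the standard softmax Jacobian with the two cases in their correct places, together with the gradient it implies. The notation is chosen here to match the code rather than taken from the figure: $z$ is the input (`bottom_data`), $y$ the softmax output (`top_data`), and $L$ the loss, so $\partial L/\partial y$ is `top_diff` and $\partial L/\partial z$ is `bottom_diff`; $i$, $j$, $c$ index the channel axis at one fixed (outer, inner) position.

$$
y_i = \frac{e^{z_i}}{\sum_{c} e^{z_c}}, \qquad
\frac{\partial y_i}{\partial z_j} =
\begin{cases}
y_i\,(1 - y_i), & i = j,\\
-\,y_i\,y_j, & i \neq j,
\end{cases}
\;=\; y_i\,(\delta_{ij} - y_j)
$$

$$
\frac{\partial L}{\partial z_j}
= \sum_i \frac{\partial L}{\partial y_i}\,\frac{\partial y_i}{\partial z_j}
= \sum_i \frac{\partial L}{\partial y_i}\,y_i\,(\delta_{ij} - y_j)
= y_j\left(\frac{\partial L}{\partial y_j} - \sum_i \frac{\partial L}{\partial y_i}\,y_i\right)
$$

Read term by term, this is exactly what `Backward_cpu` computes: $\sum_i \frac{\partial L}{\partial y_i} y_i$ is the strided dot product stored in `scale_data`, the GEMM subtracts it from every channel, and `caffe_mul` multiplies the result by $y_j$.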