(1) Add the project JAR

File -> Project Structure

Under Libraries, add the JAR jna-4.0.0.jar

(2) Copy the Data folder into the ICTCLAS2015 folder

(3) Declare the interface used to call the segmenter, as follows:

import com.sun.jna.Library;

import com.sun.jna.Native;

// Define the interface CLibrary, which extends com.sun.jna.Library

public interface CLibrary extends Library{

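// Define and initialize the interface's static field; this statement loads the DLL, so check the path to the DLL file.

// The path may be absolute or relative; give only the DLL's file name, without the extension.

// Backslashes must be escaped as \\ inside a Java string literal.

// Load NLPIR.dll

CLibrary Instance =(CLibrary) Native.loadLibrary(

"C:\\jars\\nlpir\\bin\\ICTCLAS2015\\NLPIR",CLibrary.class);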

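// Declaration of the initialization function

public int NLPIR_Init(String sDataPath, int encoding, String sLicenceCode);

// Declaration of the segmentation function

public String NLPIR_ParagraphProcess(String sSrc, int bPOSTagged);

// Declaration of the keyword extraction function

public String NLPIR_GetKeyWords(String sLine, int nMaxKeyLimit, boolean bWeightOut);

// Declaration of the function that extracts keywords from a file

public String NLPIR_GetFileKeyWords(String sLine, int nMaxKeyLimit, boolean bWeightOut);

// Declaration of the function that adds a word to the user dictionary

public int NLPIR_AddUserWord(String sWord);//add by qp 2008.11.10

// Declaration of the function that deletes a word from the user dictionary

public int NLPIR_DelUsrWord(String sWord);//add by qp 2008.11.10

// Get the description of the last error

public String NLPIR_GetLastErrorMsg();

// Declaration of the exit function

public void NLPIR_Exit();

// Declaration of the file segmentation function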

public void NLPIR_FileProcess(String utf8File, String utf8FileResult, int i);

}
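The path handed to the loader is the part of this interface that is easiest to get wrong: inside a Java string literal a Windows path needs doubled backslashes (forward slashes also work), and the library name is given without the `.dll` suffix. The sketch below only illustrates the string handling with plain Java. The class `LibraryPathDemo` and the helper `libraryPath` are hypothetical names made up for this example and are not part of JNA or NLPIR.

```java
class LibraryPathDemo {
    // Hypothetical helper: joins an install directory and a library base name
    // (no ".dll" suffix), adding a separator only when the directory lacks one.
    static String libraryPath(String dir, String baseName) {
        boolean hasSeparator = dir.endsWith("\\") || dir.endsWith("/");
        return hasSeparator ? dir + baseName : dir + "\\" + baseName;
    }

    public static void main(String[] args) {
        // "\\" in source code is a single backslash in the resulting string.
        String dir = "C:\\jars\\nlpir\\bin\\ICTCLAS2015";
        System.out.println(dir);
        System.out.println(libraryPath(dir, "NLPIR"));
    }
}
```

The resulting string is what would be passed as the first argument of `Native.loadLibrary` in the interface above.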

(4) Segmenter usage example:

String system_charset="UTF-8";

// Directory that contains the segmenter's bin files (backslashes escaped for Java)

String dir="C:\\jars\\nlpir\\bin\\ICTCLAS2015";

int charset_type=1;

String utf8File="C:\\utf8file.txt";

String utf8FileResult ="C:\\utf8result.txt";

// Initialize the segmenter

int init_flag =CLibrary.Instance.NLPIR_Init(dir,charset_type,"0");

String nativeBytes=null;

if(init_flag==0){

nativeBytes=CLibrary.Instance.NLPIR_GetLastErrorMsg();

System.out.println("Initialization failed: "+nativeBytes);

return;

}

String sinput="去年开始,打开百度李毅吧,满屏的帖子大多含有“屌丝”二字,一般网友不仅不懂这词什么意思,更难理解这个词为什么会这么火。然而从下半年开始,“屌丝”已经覆盖网络各个角落,人人争说屌丝,人人争当屌丝。 " +

"从遭遇恶搞到群体自嘲,“屌丝”名号横空出世";

try{

// Argument 0 means no POS tags; argument 1 means POS tags are included

nativeBytes=CLibrary.Instance.NLPIR_ParagraphProcess(sinput,0);

System.out.println("Segmentation result: "+nativeBytes);

// Add words to the user dictionary

CLibrary.Instance.NLPIR_AddUserWord("满屏的帖子 n");

CLibrary.Instance.NLPIR_AddUserWord("更难理解 n");

// Run segmentation

nativeBytes=CLibrary.Instance.NLPIR_ParagraphProcess(sinput,1);

System.out.println("Segmentation result after adding user dictionary words: " +nativeBytes);

// Delete the user-defined word 更难理解

CLibrary.Instance.NLPIR_DelUsrWord("更难理解");

// Run segmentation

nativeBytes=CLibrary.Instance.NLPIR_ParagraphProcess(sinput,1);

System.out.println("Segmentation result after deleting the user dictionary word: "+nativeBytes);

// Read text from the file at utf8File, segment it, and write the result to the utf8FileResult path, keeping the POS tags

CLibrary.Instance.NLPIR_FileProcess(utf8File,utf8FileResult,1);

// Extract keywords from sinput, at most 3 of them

nativeBytes=CLibrary.Instance.NLPIR_GetKeyWords(sinput,3,false);

System.out.println("Keyword extraction result: "+nativeBytes);

// Extract keywords from the file

nativeBytes=CLibrary.Instance.NLPIR_GetFileKeyWords(utf8File,10,false);

System.out.println("Keyword extraction result for the file: "+nativeBytes);

}catch (Exception e){

e.printStackTrace();

}
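When POS tagging is switched on, the result string is easier to work with once it is split into (word, tag) pairs. The sketch below assumes the output consists of whitespace-separated `word/tag` tokens, which is the format the Spark code later in this post relies on; it is not taken from the NLPIR documentation. `TaggedTokenParser` and `parse` are hypothetical names for this example, and the sample string is hand-written rather than real segmenter output.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

class TaggedTokenParser {
    // Splits "word/tag word/tag ..." into (word, tag) pairs.
    // Tokens without a usable "/" separator are kept with an empty tag.
    static List<Map.Entry<String, String>> parse(String tagged) {
        List<Map.Entry<String, String>> pairs = new ArrayList<>();
        for (String token : tagged.trim().split("\\s+")) {
            if (token.isEmpty()) {
                continue; // happens only when the input is blank
            }
            // Use the last "/" so a word that itself contains "/" stays intact.
            int idx = token.lastIndexOf('/');
            if (idx <= 0) {
                pairs.add(new SimpleEntry<>(token, ""));
            } else {
                pairs.add(new SimpleEntry<>(token.substring(0, idx), token.substring(idx + 1)));
            }
        }
        return pairs;
    }

    public static void main(String[] args) {
        // Hand-written sample in the assumed format.
        for (Map.Entry<String, String> pair : parse("spark/n runs/v  fast/a")) {
            System.out.println(pair.getKey() + "\t" + pair.getValue());
        }
    }
}
```

Splitting on the last `/` rather than calling `split("/")` avoids an out-of-range index when a token has no tag or is itself a slash.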

(5) Implementing a String encoding conversion function:

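import java.io.UnsupportedEncodingException;

// Convert a String's encoding: encode it to bytes with ori_encoding, then decode those bytes as new_encoding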

public static String transString(String str,String ori_encoding,String new_encoding){

try{

return new String(str.getBytes(ori_encoding),new_encoding);

}catch (UnsupportedEncodingException e){

e.printStackTrace();

}

return null;

}
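A function of this shape is mainly useful for repairing text whose bytes were decoded with the wrong charset. The following sketch shows that round trip with the standard library only. `RecodeDemo` and `recode` are hypothetical names for this example; `recode` follows the same logic as `transString` but takes `Charset` constants, so no checked exception is involved.

```java
import java.nio.charset.Charset;
import java.nio.charset.StandardCharsets;

class RecodeDemo {
    // Encode the string with `from`, then decode the same bytes with `to`.
    static String recode(String s, Charset from, Charset to) {
        return new String(s.getBytes(from), to);
    }

    public static void main(String[] args) {
        String original = "\u5206\u8bcd"; // the two characters of "分词"

        // Simulate the mistake: UTF-8 bytes decoded as ISO-8859-1.
        String garbled = new String(original.getBytes(StandardCharsets.UTF_8),
                                    StandardCharsets.ISO_8859_1);

        // Undo it: recover the bytes via ISO-8859-1, decode them as UTF-8.
        String repaired = recode(garbled, StandardCharsets.ISO_8859_1, StandardCharsets.UTF_8);

        System.out.println(repaired.equals(original)); // prints "true"
    }
}
```

The repair works here because ISO-8859-1 maps every byte value to exactly one character, so no bytes are lost in the wrong decoding step. With a lossy intermediate charset the original text may not be recoverable.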

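II. Spark project

Note that steps (1), (2), and (3) from Part I are still required!

With the plain Java version working, the next step was to run the segmenter on Spark, which led to the project below. The goal is to turn the text into a PairRDD of (word, POS tag). The implementation follows; since it builds on the code above, it is not explained again in detail.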

SparkConf conf =new SparkConf().setAppName("test").setMaster("local");

JavaSparkContext sc =new JavaSparkContext(conf);

JavaRDD<String> inputs =sc.textFile("C:\\utf8file.txt");

JavaRDD<String> source=inputs.filter(

new Function<String, Boolean>() {

public Boolean call(String v1) throws Exception {

return !v1.trim().isEmpty();

}

}

);

JavaRDD<String> transmit = source.mapPartitions(

new FlatMapFunction<Iterator<String>, String>() {

public Iterable<String> call(Iterator<String> it) throws Exception {

List<String> list=new ArrayList<String>();

String dir="C:\\jars\\nlpir\\bin\\ICTCLAS2015";

int charset_type=1;

int init_flag =CLibrary.Instance.NLPIR_Init(dir,charset_type,"0");

if(init_flag==0){

throw new RuntimeException(CLibrary.Instance.NLPIR_GetLastErrorMsg());

}

try{

while(it.hasNext()){

list.add(CLibrary.Instance.NLPIR_ParagraphProcess(it.next(),1));

}

}catch (Exception e){

e.printStackTrace();

}

return list;

}

}

).filter(

new Function<String, Boolean>() {

public Boolean call(String v1) throws Exception {

return !v1.trim().isEmpty();

}

}

);

JavaRDD<String> words = transmit.flatMap(

new FlatMapFunction<String, String>() {

public Iterable<String> call(String s) throws Exception {

return Arrays.asList(s.split(" "));

}

}

).filter(

new Function<String, Boolean>() {

public Boolean call(String v1) throws Exception {

return !v1.trim().isEmpty();

}

}

);

JavaPairRDD<String, String> result = words.mapToPair(

new PairFunction<String, String, String>() {

public Tuple2<String, String> call(String s) throws Exception {

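// Split on the last "/" so tokens without a tag do not cause an index-out-of-bounds error

int idx = s.lastIndexOf('/');

return idx <= 0 ? new Tuple2<String, String>(s, "") : new Tuple2<String, String>(s.substring(0, idx), s.substring(idx + 1));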

}

}

);

for(Tuple2<String,String> t:result.collect())

System.out.println(t._1()+" "+t._2());

sc.stop();

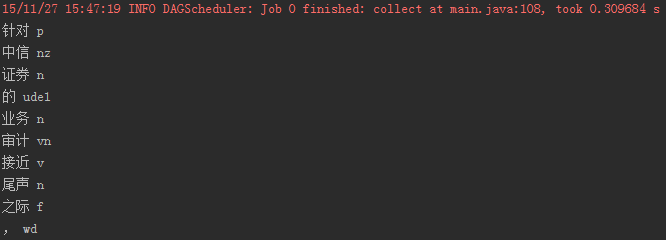

程序执行结果:

Scala version

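import scala.collection.mutable.ArrayBuffer

def ok(f:Iterator[String]):Iterator[String] ={

val dir="C:\\jars\\nlpir\\bin\\ICTCLAS2015"

val charset_type=1

val init_flag=CLibrary.Instance.NLPIR_Init(dir,charset_type,"0")

// Fail fast: a bare `null` expression here would be discarded and processing would continue

if(init_flag==0)

throw new RuntimeException(CLibrary.Instance.NLPIR_GetLastErrorMsg())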

val buf = new ArrayBuffer[String]

for(it<-f){

buf+=(CLibrary.Instance.NLPIR_ParagraphProcess(it,0))

}

buf.iterator

}

def main(args:Array[String]): Unit = {

val conf = new SparkConf().setAppName("test").setMaster("local")

val sc = new SparkContext(conf)

val inputs = sc.textFile("C:\\utf8file.txt")

val results=inputs.mapPartitions(

ok

)

results.collect().foreach(println)

sc.stop()

}
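Both the Java and the Scala version call `NLPIR_Init` inside `mapPartitions` rather than once per record, so the setup cost is paid once per partition. The sketch below imitates that pattern in plain Java without Spark or NLPIR. Every name in it is hypothetical: `init` stands in for `NLPIR_Init`, `segment` stands in for `NLPIR_ParagraphProcess`, and the nested lists stand in for RDD partitions.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Iterator;
import java.util.List;

class PartitionInitDemo {
    static int initCalls = 0;

    // Stand-in for the expensive native initialization.
    static void init() {
        initCalls++;
    }

    // Stand-in for segmentation: here it only normalizes whitespace.
    static String segment(String line) {
        return line.trim().replaceAll("\\s+", " ");
    }

    // Mirrors the body of the mapPartitions function: initialize once,
    // then process every record of the partition.
    static List<String> processPartition(Iterator<String> records) {
        init();
        List<String> out = new ArrayList<>();
        while (records.hasNext()) {
            out.add(segment(records.next()));
        }
        return out;
    }

    public static void main(String[] args) {
        List<List<String>> partitions = Arrays.asList(
                Arrays.asList("a  b", "c d"),
                Arrays.asList("e   f"));
        for (List<String> partition : partitions) {
            System.out.println(processPartition(partition.iterator()));
        }
        // Three records in two partitions: init ran twice, not three times.
        System.out.println("init calls: " + initCalls);
    }
}
```

In a real job, whether repeated initialization within one JVM is safe depends on the native library, so this only illustrates where the call sits, not how NLPIR behaves.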