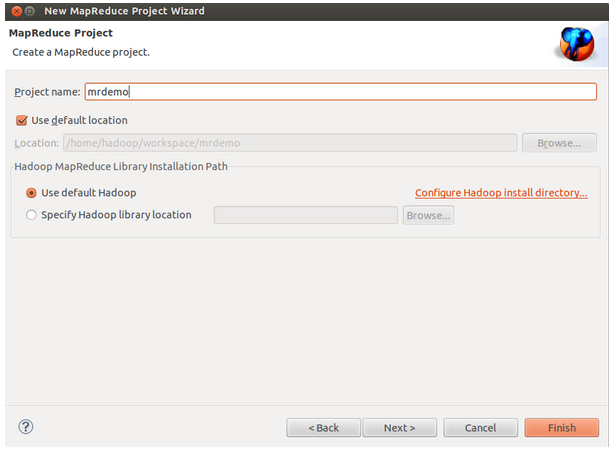

1、新建MR工程

依次点击 File → New → Ohter… 选择 “Map/Reduce Project”,然后输入项目名称:mrdemo,创建新项目:

2、(这步在以后的开发中可能会用到,但是现在不用,现在直接新建一个class文件即可)创建Mapper和Reducer

依次点击 File → New → Ohter… 选择Mapper,自动继承Mapper<KEYIN, VALUEIN, KEYOUT, VALUEOUT>

依次点击 File → New → Ohter… 选择Mapper,自动继承Mapper<KEYIN, VALUEIN, KEYOUT, VALUEOUT>

创建Reducer的过程同Mapper,具体的业务逻辑自己实现即可。

3、新建一个class文件,包名为com.mrdemo,类名为WordCount,按finish。

4、编写map函数、reduce函数和主函数。本文就以官方自带的WordCount为例进行测试(将下面的源码复制到eclipse中):

package com.mrdemo; import java.io.IOException; import java.util.StringTokenizer; import org.apache.hadoop.conf.Configuration; import org.apache.hadoop.fs.Path; import org.apache.hadoop.io.IntWritable; import org.apache.hadoop.io.Text; import org.apache.hadoop.mapreduce.Job; import org.apache.hadoop.mapreduce.Mapper; import org.apache.hadoop.mapreduce.Reducer; import org.apache.hadoop.mapreduce.lib.input.FileInputFormat; import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat; import org.apache.hadoop.util.GenericOptionsParser; public class WordCount { public static class TokenizerMapper extends Mapper<Object, Text, Text, IntWritable>{ private final static IntWritable one = new IntWritable(1); private Text word = new Text(); public void map(Object key, Text value, Context context ) throws IOException, InterruptedException { StringTokenizer itr = new StringTokenizer(value.toString()); while (itr.hasMoreTokens()) { word.set(itr.nextToken()); context.write(word, one); } } } public static class IntSumReducer extends Reducer<Text,IntWritable,Text,IntWritable> { private IntWritable result = new IntWritable(); public void reduce(Text key, Iterable<IntWritable> values, Context context ) throws IOException, InterruptedException { int sum = 0; for (IntWritable val : values) { sum += val.get(); } result.set(sum); context.write(key, result); } } public static void main(String[] args) throws Exception { Configuration conf = new Configuration(); String[] otherArgs = new GenericOptionsParser(conf, args).getRemainingArgs(); if (otherArgs.length != 2) { System.err.println("Usage: wordcount <in> <out>"); System.exit(2); } //conf.set("fs.defaultFS", "hdfs://192.168.6.77:9000"); Job job = new Job(conf, "word count"); job.setJarByClass(WordCount.class); job.setMapperClass(TokenizerMapper.class); job.setCombinerClass(IntSumReducer.class); job.setReducerClass(IntSumReducer.class); job.setOutputKeyClass(Text.class); job.setOutputValueClass(IntWritable.class); FileInputFormat.addInputPath(job, new Path(otherArgs[0])); FileOutputFormat.setOutputPath(job, new Path(otherArgs[1])); System.exit(job.waitForCompletion(true) ? 0 : 1); } }

5、准备测试数据。

在hdfs中新建一个input01文件夹,然后将/home/hadoop/Documents文件夹下新建的hello文件上传到hdfs中的input01文件夹中。

测试数据:

hello world!

hello hadoop

jobtracker

maptracker

reducetracker

task

namenode

datanode

block

beautiful world

hadoop:

HDFS

MapReduce

hello hadoop

jobtracker

maptracker

reducetracker

task

namenode

datanode

block

beautiful world

hadoop:

HDFS

MapReduce

hadoop@hadoop-ThinkPad:~$ hadoop fs -mkdir input01

hadoop@hadoop-ThinkPad:~$ cd /home/hadoop/Documents

hadoop@hadoop-ThinkPad:~/Documents$ hadoop fs -copyFromLocal hello input01

hdfs://localhost:9000/user/yyq/input01

hdfs://localhost:9000/user/yyq/output01

hdfs://localhost:9000/user/yyq/output01

6、配置运行参数

Run As → Run Configurations… ,在Arguments中配置运行参数,例如程序的输入参数:

Run As → Run Configurations… ,在Arguments中配置运行参数,例如程序的输入参数:

7、运行

Run As -> Run on Hadoop ,执行完成后可以看到如下信息:![]()

Run As -> Run on Hadoop ,执行完成后可以看到如下信息:

到此Eclipse中调用Hadoop-1.0.3本地伪分布式模式执行MR演示成功。

参考博客:

http://www.aboutyun.com/forum.php?mod=viewthread&tid=7541&