1、环境安装

(1)安装Anaconda:

下载地址:https://www.anaconda.com/products/individual

(2)安装:scrapy

(3)安装:Pycharm

(4)安装:Xpath helper

教程参考:

获取插件:https://blog.csdn.net/weixin_41010318/article/details/86472643

配置过程:https://www.cnblogs.com/pfeiliu/p/13483562.html

2、爬取过程

(1)网页分析:

网址:

每个房源页网址都是:https://bj.lianjia.com/ershoufang/ 下的房源标题链接;

上次爬取了每个房源页链接的链接信息,并保存到了json文件中,可以逐个调用文件中的链接信息进行所有页面的爬取;

利用xpath helper 分析出需要爬取的字段:

(2)详细代码:

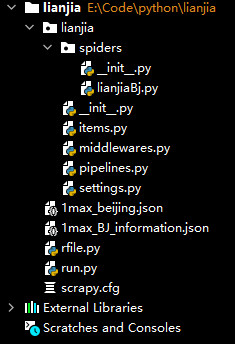

目录:

创建项目:

# 打开Pycharm,并打开Terminal,执行以下命令

scrapy startproject lianjia

cd lianjia

scrapy genspider lianjiaBj bj.lianjia.com

在scrapy.cfg同级目录,创建run.py,用于启动Scrapy项目,内容如下

# !/usr/bin/python3 # -*- coding: utf-8 -*- # 在项目根目录下新建:run.py from scrapy.cmdline import execute # 第三个参数是:爬虫程序名 # --nolog什么意思? execute(['scrapy', 'crawl', 'lianjiaBj', '--nolog'])

lianjiaBj.py

import scrapy # -*- coding: utf-8 -*- from lianjia.items import LianjiaItem from selenium.webdriver import ChromeOptions from selenium.webdriver import Chrome import json # 引入文件1max_beijing.json,利用文件内的url网址信息 with open('1max_beijing.json', 'r') as file: str = file.read() data = json.loads(str) class LianjiabjSpider(scrapy.Spider): name = 'lianjiaBj' # allowed_domains = ['bj.lianjia.com'] start_urls = data # 实例化一个浏览器对象 def __init__(self): # 防止网站识别Selenium代码 # selenium启动配置参数接收是ChromeOptions类,创建方式如下: options = ChromeOptions() # 添加启动参数 # 浏览器不提供可视化页面. linux下如果系统不支持可视化不加这条会启动失败 options.add_argument("--headless") # => 为Chrome配置无头模式 # 添加实验性质的设置参数 # 设置开发者模式启动,该模式下webdriver属性为正常值 options.add_experimental_option('excludeSwitches', ['enable-automation']) options.add_experimental_option('useAutomationExtension', False) # 禁用浏览器弹窗 self.browser = Chrome(options=options) # 最新解决navigator.webdriver=true的方法 self.browser.execute_cdp_cmd("Page.addScriptToEvaluateOnNewDocument", { "source": """ Object.defineProperty(navigator, 'webdriver', { get: () => undefined }) """ }) super().__init__() def start_requests(self): print("开始爬虫") url = self.start_urls print("开始接触url") print(type(url)) count = 0 # 逐个引入1max_beijing.json文件中url信息 for i in url: count += 1 alone_url = i['BeiJingFangYuan_Id'] # dataNum为 str类型的地址 # print(alone_url) # print("url", url) # 访问下一页,有返回时,调用self.parse_details方法 response = scrapy.Request(url=alone_url, callback=self.parse_details) yield response print("本次爬取数据: %s条" % count) # 一个Request对象表示一个HTTP请求,它通常是在爬虫生成,并由下载执行,从而生成Response # url(string) - 此请求的网址 # callback(callable) - 将使用此请求的响应(一旦下载)作为其第一个参数调用的函数。 # 如果请求没有指定回调,parse()将使用spider的 方法。请注意,如果在处理期间引发异常,则会调用errback。 # 仍有许多参数:method='GET', headers, body, cookies, meta, encoding='utf-8', priority=0, dont_filter=False, errback # response = scrapy.Request(url=url, callback=self.parse_index) # # yield response # 整个爬虫结束后关闭浏览器 def close(self, spider): print("关闭爬虫") self.browser.quit() ''' # 访问主页的url, 拿到对应板块的response def parse_index(self, response): print("访问主页") for i in range(0, 2970): # 下一页url url = url[i]['BeiJingFangYuan_Id'] i += 1 print("url", url) # 访问下一页,有返回时,调用self.parse_details方法 yield scrapy.Request(url=url, callback=self.parse_details)''' def parse_details(self, response): # 获取页面中要抓取的信息在网页中位置节点 node_list = response.xpath('//div[@class="introContent"]') try: # 基本属性 # 房屋户型 fangWuHuXing = node_list.xpath('./div[@class="base"]//li[1]/text()').extract() if not fangWuHuXing: fangWuHuXing = "房屋户型为空" print("fangWuHuXing", fangWuHuXing) # 交易属性 # 挂牌时间 guaPaiShiJian = node_list.xpath('./div[@class="transaction"]//li[1]/span[2]/text()').extract() if not guaPaiShiJian: guaPaiShiJian = "挂牌时间为空" print("guaPaiShiJian", guaPaiShiJian) # item item = LianjiaItem() item['fangWuHuXing'] = fangWuHuXing item['guaPaiShiJian'] = guaPaiShiJian yield item except Exception as e: print(e)

items.py

# Define here the models for your scraped items # # See documentation in: # https://docs.scrapy.org/en/latest/topics/items.html import scrapy class LianjiaItem(scrapy.Item): # define the fields for your item here like: # name = scrapy.Field() # 基本属性 # 房屋户型 fangWuHuXing = scrapy.Field() # 交易属性 # 挂牌时间 guaPaiShiJian = scrapy.Field() # pass

pipelines.py

# Define your item pipelines here # # Don't forget to add your pipeline to the ITEM_PIPELINES setting # See: https://docs.scrapy.org/en/latest/topics/item-pipeline.html # useful for handling different item types with a single interface from itemadapter import ItemAdapter import codecs,json class LianjiaPipeline(object): def __init__(self): # python3保存文件 必须需要'wb' 保存为json格式 # self.f = open("1max_BJ_information.json", 'wb') self.file = codecs.open('1max_BJ_information.json', 'w', encoding="utf-8") def process_item(self, item, spider): # 读取item中的数据 并换行处理 content = json.dumps(dict(item), ensure_ascii=False) + ', ' self.file.write(content) return item def close_spider(self, spider): # 关闭文件 self.file.close()

修改settings.py,应用pipelines

# Scrapy settings for lianjia project # # For simplicity, this file contains only settings considered important or # commonly used. You can find more settings consulting the documentation: # # https://docs.scrapy.org/en/latest/topics/settings.html # https://docs.scrapy.org/en/latest/topics/downloader-middleware.html # https://docs.scrapy.org/en/latest/topics/spider-middleware.html BOT_NAME = 'lianjia' SPIDER_MODULES = ['lianjia.spiders'] NEWSPIDER_MODULE = 'lianjia.spiders' # Crawl responsibly by identifying yourself (and your website) on the user-agent #USER_AGENT = 'lianjia (+http://www.yourdomain.com)' # Obey robots.txt rules ROBOTSTXT_OBEY = False # Configure maximum concurrent requests performed by Scrapy (default: 16) #CONCURRENT_REQUESTS = 32 # Configure a delay for requests for the same website (default: 0) # See https://docs.scrapy.org/en/latest/topics/settings.html#download-delay # See also autothrottle settings and docs #DOWNLOAD_DELAY = 3 # The download delay setting will honor only one of: #CONCURRENT_REQUESTS_PER_DOMAIN = 16 #CONCURRENT_REQUESTS_PER_IP = 16 # Disable cookies (enabled by default) #COOKIES_ENABLED = False # Disable Telnet Console (enabled by default) #TELNETCONSOLE_ENABLED = False # Override the default request headers: #DEFAULT_REQUEST_HEADERS = { # 'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8', # 'Accept-Language': 'en', #} # Enable or disable spider middlewares # See https://docs.scrapy.org/en/latest/topics/spider-middleware.html #SPIDER_MIDDLEWARES = { # 'lianjia.middlewares.LianjiaSpiderMiddleware': 543, #} # Enable or disable downloader middlewares # See https://docs.scrapy.org/en/latest/topics/downloader-middleware.html #DOWNLOADER_MIDDLEWARES = { # 'lianjia.middlewares.LianjiaDownloaderMiddleware': 543, #} # Enable or disable extensions # See https://docs.scrapy.org/en/latest/topics/extensions.html #EXTENSIONS = { # 'scrapy.extensions.telnet.TelnetConsole': None, #} # Configure item pipelines # See https://docs.scrapy.org/en/latest/topics/item-pipeline.html ITEM_PIPELINES = { 'lianjia.pipelines.LianjiaPipeline': 300, } # Enable and configure the AutoThrottle extension (disabled by default) # See https://docs.scrapy.org/en/latest/topics/autothrottle.html #AUTOTHROTTLE_ENABLED = True # The initial download delay #AUTOTHROTTLE_START_DELAY = 5 # The maximum download delay to be set in case of high latencies #AUTOTHROTTLE_MAX_DELAY = 60 # The average number of requests Scrapy should be sending in parallel to # each remote server #AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0 # Enable showing throttling stats for every response received: #AUTOTHROTTLE_DEBUG = False # Enable and configure HTTP caching (disabled by default) # See https://docs.scrapy.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings #HTTPCACHE_ENABLED = True #HTTPCACHE_EXPIRATION_SECS = 0 #HTTPCACHE_DIR = 'httpcache' #HTTPCACHE_IGNORE_HTTP_CODES = [] #HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage'

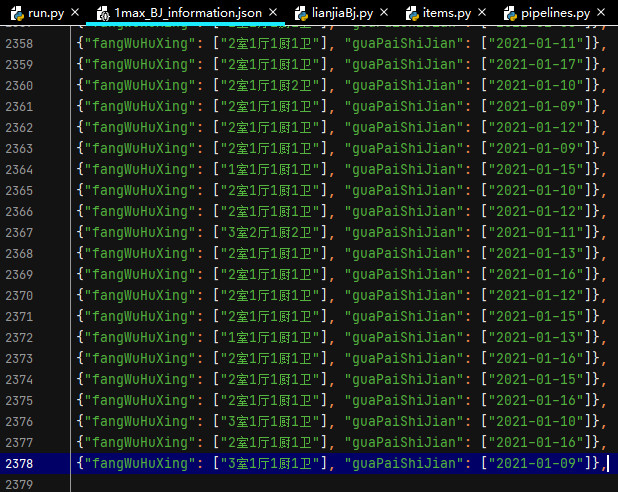

执行run.py,启动爬虫项目,效果如下:

3、总结

json文件中有2970个url房源链接网址,理论上应该爬取2970条信息,当时上面文件中仅有2378条信息,少了600多条信息;

原因可能是:页面格式不全是一种类型。