一、Linear regression

- Build a regression model from the data, y = w1x1 + w2x2 + ... + b. Define a loss that measures the error between the true values and the predicted values, then use gradient descent to find the weights and bias that minimize that loss. Once these parameters are determined, the model can be used for prediction.

二、Linear regression steps

- Construct the model: y = w1x1 + w2x2 + ... + b.

- Construct the loss function: mean squared error.

- Optimize the loss: use gradient descent to minimize the loss. The weights and bias at the minimum loss are the final model parameters.
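
The three steps can be written out as formulas. This is a sketch under assumed notation that does not appear in the original: $m$ is the number of samples, $n$ the number of features, $\eta$ the learning rate, and $\hat{y}$ the predicted value.

```latex
% Step 1: model
\hat{y} = w_1 x_1 + w_2 x_2 + \dots + w_n x_n + b

% Step 2: mean squared error over m samples
L(w, b) = \frac{1}{m} \sum_{i=1}^{m} \left( \hat{y}^{(i)} - y^{(i)} \right)^2

% Step 3: gradient descent updates with learning rate \eta
w_j \leftarrow w_j - \eta \, \frac{2}{m} \sum_{i=1}^{m} \left( \hat{y}^{(i)} - y^{(i)} \right) x_j^{(i)}
\qquad
b \leftarrow b - \eta \, \frac{2}{m} \sum_{i=1}^{m} \left( \hat{y}^{(i)} - y^{(i)} \right)
```

The update terms are the partial derivatives of $L$ with respect to $w_j$ and $b$, so each step moves the parameters in the direction that reduces the loss.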

三、Design

1.Prepare data

- The data follows y = 0.8*x + 0.7. The true relationship is fixed in advance so that the parameters obtained by training can be compared against the true parameters (0.8 and 0.7) to judge how accurate the training is.

X = tf.random_normal(shape=[100,1])

y_true = tf.matmul(X,[[0.8]])+0.7

2.Build model

- The model should satisfy y = weight*x + bias. The data x has shape (100,1), and the result of multiplying by the weight must also have shape (100,1), so the weight must have shape (1,1). The bias can have the same shape as the weight or be a scalar.

weight = tf.Variable(initial_value=tf.random_normal(shape=[1,1]))

bias = tf.Variable(initial_value=tf.random_normal(shape=[1,1]))

y_predict = tf.matmul(X,weight)+bias
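
The shape argument above can be checked without TensorFlow. The following is a minimal pure-Python sketch; the helpers `matmul` and `shape` are hypothetical names introduced only for this illustration and are not part of the original code.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    inner = len(b)
    assert all(len(row) == inner for row in a), "inner dimensions must match"
    cols = len(b[0])
    return [[sum(row[k] * b[k][j] for k in range(inner)) for j in range(cols)]
            for row in a]


def shape(m):
    """Return (rows, columns) of a list-of-rows matrix."""
    return (len(m), len(m[0]))


X = [[float(i)] for i in range(100)]  # shape (100, 1)
weight = [[0.8]]                      # shape (1, 1)
bias = 0.7                            # scalar, added to every row

product = matmul(X, weight)           # (100, 1) x (1, 1) -> (100, 1)
y_predict = [[v + bias for v in row] for row in product]

print(shape(X), shape(weight), shape(y_predict))
# prints: (100, 1) (1, 1) (100, 1)
```

A weight of any other shape, for example (2,1), would fail the inner-dimension check, which is why (1,1) is the only choice for a single input feature.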

3.Build loss function

- The linear regression loss function is the mean squared error.

error = tf.reduce_mean(tf.square(y_predict - y_true))
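
To make the loss concrete, here is a small worked example in plain Python. It is only a sketch: `mean_squared_error` is a hypothetical helper that mirrors what `tf.reduce_mean(tf.square(...))` computes, and the three sample values are made up for illustration.

```python
def mean_squared_error(y_predict, y_true):
    """Average of the squared differences between predictions and targets."""
    assert len(y_predict) == len(y_true) and y_true, "need equal-length, non-empty inputs"
    return sum((p - t) ** 2 for p, t in zip(y_predict, y_true)) / len(y_true)


y_true = [1.0, 2.0, 3.0]      # illustrative targets
y_predict = [1.5, 2.0, 2.0]   # illustrative predictions

# Squared errors are 0.25, 0.0 and 1.0, so the mean is 1.25 / 3.
loss = mean_squared_error(y_predict, y_true)
print(loss)
```

Squaring makes every error non-negative and penalizes large errors more heavily than small ones, and the loss is zero only when every prediction matches its target.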

4.Optimize loss

- Use gradient descent optimization.

tf.train.GradientDescentOptimizer(learning_rate=0.1).minimize(error)

Here learning_rate is the learning rate, which controls the size of each update step and is usually a fairly small value between 0 and 1. Because the goal is to minimize the loss, the minimize() method of the gradient descent optimizer is called on the loss.
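
What the optimizer does on each step can be sketched by hand. The code below is an assumption-laden illustration in plain Python, not TensorFlow's implementation: the function `train`, the fixed seed, and the zero starting values are all hypothetical choices made so the example is reproducible. It computes the gradients of the mean squared error analytically and applies the update 100 times to data drawn from y = 0.8*x + 0.7.

```python
import random


def train(learning_rate, steps=100, seed=0):
    """Fit y = w*x + b by gradient descent on the mean squared error."""
    rng = random.Random(seed)
    xs = [rng.gauss(0.0, 1.0) for _ in range(100)]  # 100 normally distributed inputs
    ys = [0.8 * x + 0.7 for x in xs]                # true weight 0.8, true bias 0.7
    w, b = 0.0, 0.0                                 # assumed starting point
    m = len(xs)
    for _ in range(steps):
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Partial derivatives of the mean squared error with respect to w and b
        grad_w = 2.0 / m * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2.0 / m * sum(errors)
        # Move against the gradient, scaled by the learning rate
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


w, b = train(learning_rate=0.1)
w_slow, b_slow = train(learning_rate=0.001)
print("learning_rate=0.1:   w=%.4f, b=%.4f" % (w, b))
print("learning_rate=0.001: w=%.4f, b=%.4f" % (w_slow, b_slow))
```

With a learning rate of 0.1 the parameters should end up close to 0.8 and 0.7 after 100 steps, while a rate of 0.001 leaves them far from the true values in the same number of steps. A rate that is too large can instead make the updates overshoot and diverge.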

四、Code examples

    # Written against the TensorFlow 1.x API (tf.Session, tf.random_normal).
    import os

    # Hide TensorFlow's INFO and WARNING log messages
    os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'

    import tensorflow as tf


    def linear_regression():
        # 1. Prepare data: y = 0.8 * x + 0.7
        X = tf.random_normal(shape=[100, 1])
        y_true = tf.matmul(X, [[0.8]]) + 0.7

        # 2. Build model: create weight and bias as trainable variables
        weight = tf.Variable(initial_value=tf.random_normal(shape=[1, 1]))
        bias = tf.Variable(initial_value=tf.random_normal(shape=[1, 1]))
        y_predict = tf.matmul(X, weight) + bias

        # 3. Build loss function: mean squared error
        error = tf.reduce_mean(tf.square(y_predict - y_true))

        # 4. Optimize loss with gradient descent
        optimizer = tf.train.GradientDescentOptimizer(learning_rate=0.1).minimize(error)

        # Op that initializes all variables
        init = tf.global_variables_initializer()

        # Start a session
        with tf.Session() as sess:
            # Run the variable initializer
            sess.run(init)
            # Fetch all three values in one run so they refer to the same batch
            w, b, loss = sess.run([weight, bias, error])
            print('Model parameters before training: weight: %f, bias: %f, loss: %f' % (w.item(), b.item(), loss))

            # Start training
            for i in range(100):
                sess.run(optimizer)
                w, b, loss = sess.run([weight, bias, error])
                print('Model parameters after training %d times: weight: %f, bias: %f, loss: %f' % (i + 1, w.item(), b.item(), loss))


    if __name__ == '__main__':
        linear_regression()

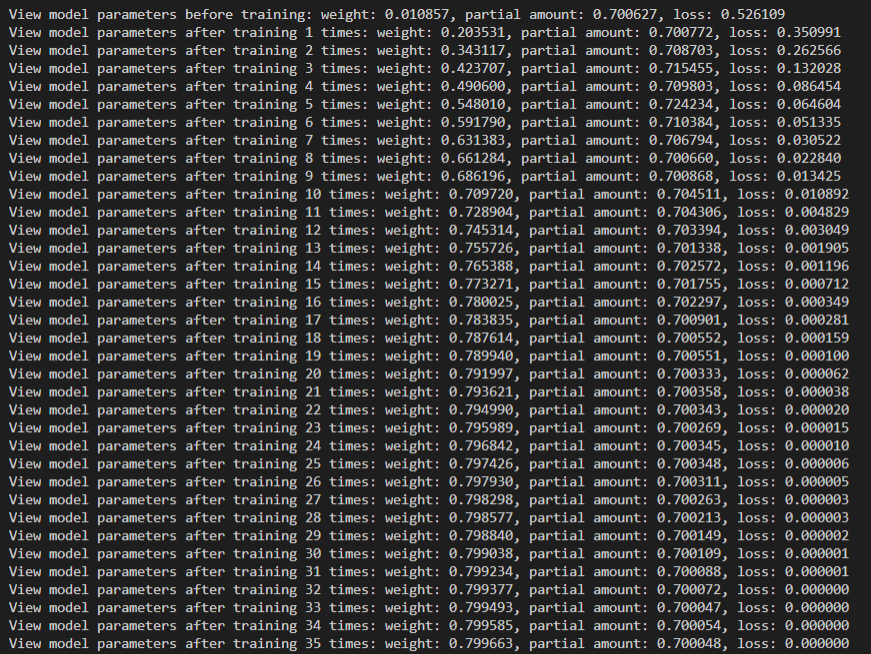

五、Result