    [root@localhost cloud_images]# lsmod | grep vhost_net
    vhost_net             262144  0
    vhost                 262144  1 vhost_net
    tap                   262144  1 vhost_net
    tun                   262144  2 vhost_net
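The same check can be scripted. The following is a minimal sketch, not an official tool: `parse_lsmod` and `vhost_net_ready` are illustrative names, and `SAMPLE` simply mirrors the `lsmod` rows shown above. It assumes the usual `lsmod` column layout (module, size, use count, users).

```python
import os


def parse_lsmod(text):
    """Parse lsmod-style rows into {module: (size, use_count, [users])}."""
    modules = {}
    for line in text.strip().splitlines():
        fields = line.split()
        # Skip the "Module Size Used by" header and malformed rows.
        if len(fields) < 3 or not fields[1].isdigit() or not fields[2].isdigit():
            continue
        users = fields[3].split(",") if len(fields) > 3 else []
        modules[fields[0]] = (int(fields[1]), int(fields[2]), users)
    return modules


def vhost_net_ready(lsmod_text, dev_path="/dev/vhost-net"):
    """True if vhost_net is listed and its character device exists."""
    return "vhost_net" in parse_lsmod(lsmod_text) and os.path.exists(dev_path)


# Rows copied from the lsmod output above.
SAMPLE = """\
vhost_net 262144 0
vhost 262144 1 vhost_net
tap 262144 1 vhost_net
tun 262144 2 vhost_net
"""

if __name__ == "__main__":
    for name, (size, used, users) in parse_lsmod(SAMPLE).items():
        print(name, size, used, users)
```

On a real host, `vhost_net_ready` would be fed the text of `lsmod` (or `/proc/modules`, whose columns differ slightly and would need their own parser).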

By default, the vhost-net backend uses a Linux bridge with a tap device on the host, while QEMU and the guest use a virtio-net virtual NIC.

Step 1: Create the Linux bridge and the tap device (on Fedora, CentOS, Red Hat and similar distributions these virtual devices already exist by default).

    brctl addbr virbr0
    brctl stp virbr0 on
    ip tuntap add name virbr0-nic mode tap
    ip link set dev virbr0-nic up

Step 2: Add the host NIC to virbr0 as a port and assign the IP address to virbr0.

    brctl addif virbr0 eth0
    brctl addif virbr0 virbr0-nic
    dhclient virbr0

Step 3: Start a virtual machine from the command line.

    sudo x86_64-softmmu/qemu-system-x86_64 --enable-kvm -m 5120 -drive file=/home/fang/vm/centos.img,if=virtio -net nic,model=virtio -net tap,ifname=virbr0-nic,script=no -vnc :0

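Using the vhost_net backend driver

As mentioned earlier, the host-side backend for virtio is usually provided by QEMU in user space. If the backend for network I/O requests runs in kernel space instead, it is more efficient: network throughput rises and latency drops. Relatively recent kernels include a driver module called "vhost-net", a kernel-level backend that moves virtio-net backend processing into kernel space.

The bridge-mode network configuration described in Chapter 4 has several virtio-related options, which are covered here.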

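    -net tap[,vnet_hdr=on|off][,vhost=on|off][,vhostfd=h][,vhostforce=on|off]

vnet_hdr=on|off

Controls the IFF_VNET_HDR flag on the TAP device. "vnet_hdr=off" turns the flag off; "vnet_hdr=on" forces it on and raises an error if the flag is not supported. IFF_VNET_HDR is a tun/tap flag: when it is set, each packet carries a virtio-net header, so large packets can be sent and received with only partial checksum verification. Enabling it can improve the throughput of the virtio_net driver.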
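To make the flag concrete, here is a hedged sketch of how a user-space program could request IFF_VNET_HDR when attaching to a tap device. The constants are the values from `<linux/if_tun.h>`; `build_ifreq` and `open_tap` are illustrative helper names, the 40-byte `struct ifreq` layout assumes a 64-bit Linux host, and `open_tap` needs the privileges required to use `/dev/net/tun`. This is not QEMU's own code.

```python
import fcntl
import os
import struct

# Values from <linux/if_tun.h>.
TUNSETIFF = 0x400454CA     # _IOW('T', 202, int)
IFF_TAP = 0x0002           # layer-2 (Ethernet) device
IFF_NO_PI = 0x1000         # no extra packet-information prefix
IFF_VNET_HDR = 0x4000      # prepend a virtio-net header to each packet
IFNAMSIZ = 16


def build_ifreq(name, vnet_hdr=True):
    """Pack a struct ifreq: 16-byte name, short flags, padding to 40 bytes."""
    raw = name.encode()
    if len(raw) >= IFNAMSIZ:
        raise ValueError("interface name too long")
    flags = IFF_TAP | IFF_NO_PI
    if vnet_hdr:
        flags |= IFF_VNET_HDR
    return struct.pack("16sH22x", raw, flags)


def open_tap(name, vnet_hdr=True):
    """Attach to (or create) tap device `name`; returns the tun file descriptor."""
    fd = os.open("/dev/net/tun", os.O_RDWR)
    try:
        fcntl.ioctl(fd, TUNSETIFF, build_ifreq(name, vnet_hdr))
    except OSError:
        os.close(fd)
        raise
    return fd
```

If the kernel rejects the request, `fcntl.ioctl` raises `OSError`, which loosely corresponds to the error QEMU reports when `vnet_hdr=on` cannot be honored.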

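vhost=on|off

Enables or disables vhost-net, the kernel-space backend driver. It only takes effect for virtio guests that use MSI-X interrupts.

vhostforce=on|off

Forces vhost to be used as the backend even for virtio guests that do not use MSI-X interrupts.

vhostfd=h

Connects to an already opened vhost network device, where h is its file descriptor.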

The following command line starts a guest that uses virtio-net as the front-end driver and vhost-net as the backend (the host kernel must support the vhost-net module).

    [root@jay-linux kvm_demo]# qemu-system-x86_64 rhel6u3.img -smp 2 -m 1024 -net nic,model=virtio,macaddr=00:16:3e:22:22:22 -net tap,vnet_hdr=on,vhost=on
    VNC server running on ::1:5900

If no usable ifup script exists at the default path, QEMU fails with:

    qemu-system-aarch64: network script /usr/local/bin/../etc/qemu-ifup failed with status 256

A custom qemu-ifup script that attaches the tap device to virbr0:

    [root@localhost cloud_images]# cat qemu-ifup
    #!/bin/sh
    set -x
    switch=virbr0
    if [ -n "$1" ]; then
        # $1 is the tap interface name passed in by QEMU
        ip tuntap add $1 mode tap user `whoami`
        ip link set $1 up
        sleep 0.5s
        ip link set $1 master $switch
        exit 0
    else
        echo "Error: no interface specified"
        exit 1
    fi

Start the guest with script=qemu-ifup:

    qemu-system-aarch64 -name vm2 -daemonize -enable-kvm -M virt -cpu host -smp 2 -m 4096 \
        -object memory-backend-file,id=mem,size=4096M,mem-path=/mnt/huge,share=on \
        -numa node,memdev=mem -mem-prealloc \
        -global virtio-blk-device.scsi=off -device virtio-scsi-device,id=scsi \
        -kernel vmlinuz-4.18 \
        --append "console=ttyAMA0 root=UUID=6a09973e-e8fd-4a6d-a8c0-1deb9556f477 iommu=pt intel_iommu=on iommu.passthrough=1" \
        -initrd initramfs-4.18 -drive file=vhuser-test1.qcow2 \
        -serial telnet:localhost:4322,server,nowait -monitor telnet:localhost:4321,server,nowait \
        -net nic,model=virtio,macaddr=00:16:3e:22:22:22 \
        -net tap,id=hostnet1,script=qemu-ifup,vnet_hdr=on,vhost=on \
        -vnc :10

Trace printed by qemu-ifup (set -x) when QEMU runs it for tap0. The "Device or resource busy" message most likely appears because QEMU has already created tap0 before calling the script; the script continues and still attaches tap0 to the bridge:

    + switch=virbr0
    + '[' -n tap0 ']'
    ++ whoami
    + ip tuntap add tap0 mode tap user root
    ioctl(TUNSETIFF): Device or resource busy
    + ip link set tap0 up
    + sleep 0.5s
    + ip link set tap0 master virbr0
    + exit 0

    [root@localhost cloud_images]# brctl show
    bridge name     bridge id               STP enabled     interfaces
    virbr0          8000.5254000d3f71       yes             enp125s0f1
                                                            tap0
                                                            virbr0-nic

    [root@localhost cloud_images]# ps -elf | grep vhost
    7 S root 49044 1 9 80 0 - 88035 poll_s 21:56 ? 00:00:11 qemu-system-aarch64 -name vm2 -daemonize -enable-kvm -M virt -cpu host -smp 2 -m 4096 -object memory-backend-file,id=mem,size=4096M,mem-path=/mnt/huge,share=on -numa node,memdev=mem -mem-prealloc -global virtio-blk-device.scsi=off -device virtio-scsi-device,id=scsi -kernel vmlinuz-4.18 --append console=ttyAMA0 root=UUID=6a09973e-e8fd-4a6d-a8c0-1deb9556f477 iommu=pt intel_iommu=on iommu.passthrough=1 -initrd initramfs-4.18 -drive file=vhuser-test1.qcow2 -serial telnet:localhost:4322,server,nowait -monitor telnet:localhost:4321,server,nowait -net nic,model=virtio,macaddr=00:16:3e:22:22:22 -net tap,id=hostnet1,script=qemu-ifup,vnet_hdr=on,vhost=on -vnc :10
    1 S root 49065 2 0 80 0 - 0 vhost_ 21:56 ? 00:00:00 [vhost-49044]

    [root@localhost ~]# cat /proc/49065/stack
    [<ffff000008085ed4>] __switch_to+0x8c/0xa8
    [<ffff000001e507d8>] vhost_worker+0x148/0x170 [vhost]
    [<ffff0000080f8638>] kthread+0x10c/0x138
    [<ffff000008084f54>] ret_from_fork+0x10/0x18
    [<ffffffffffffffff>] 0xffffffffffffffff

- To get the QEMU vCPU thread IDs:
    # ps -eLo ruser,pid,ppid,lwp,args | grep qemu-kvm | grep -v grep
- To get the vhost thread ID:
    # ps -eaf | grep vhost-$pid_of_qemu-kvm | grep -v grep
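The vhost lookup can also be done programmatically. The sketch below is illustrative only: `vhost_workers` is a made-up helper name, and it assumes `ps -elf` style rows in which the PID is the fourth column and the kernel thread appears as `[vhost-<owner pid>]`, as in the output above.

```python
import re

# Kernel worker threads show up in ps as "[vhost-<pid of owning process>]".
VHOST_THREAD = re.compile(r"\[vhost-(\d+)\]")


def vhost_workers(ps_lines, qemu_pid=None):
    """Return [(worker_pid, owner_pid)] for rows naming a vhost kernel thread."""
    found = []
    for line in ps_lines:
        match = VHOST_THREAD.search(line)
        if not match:
            continue
        owner = int(match.group(1))
        if qemu_pid is not None and owner != qemu_pid:
            continue
        fields = line.split()
        # ps -elf columns: F S UID PID PPID ...
        found.append((int(fields[3]), owner))
    return found


# Row copied from the ps output above.
SAMPLE_ROW = "1 S root 49065 2 0 80 0 - 0 vhost_ 21:56 ? 00:00:00 [vhost-49044]"

if __name__ == "__main__":
    print(vhost_workers([SAMPLE_ROW]))
```

Pairing each worker with its owner PID makes it easy to pin or inspect the vhost thread that belongs to one particular guest.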

    [root@localhost cloud_images]# ps -eLo ruser,pid,ppid,lwp,args | grep qemu-system-aarch64 | grep vhost | grep -v grep | wc -l
    20

    [root@localhost cloud_images]# ps -eLo ruser,pid,ppid,lwp,args | grep qemu-system-aarch64 | grep -v grep
    root 48617 1 48617 qemu-system-aarch64 -name vm2 -daemonize -enable-kvm -M virt -cpu host -smp 16 -m 4096 -object memory-backend-file,id=mem,size=4096M,mem-path=/mnt/huge,share=on -numa node,memdev=mem -mem-prealloc -drive file=vhuser-test1.qcow2 -global virtio-blk-device.scsi=off -device virtio-scsi-device,id=scsi -kernel vmlinuz-4.18 --append console=ttyAMA0 root=UUID=6a09973e-e8fd-4a6d-a8c0-1deb9556f477 iommu=pt intel_iommu=on iommu.passthrough=1 -initrd initramfs-4.18 -serial telnet:localhost:4322,server,nowait -monitor telnet:localhost:4321,server,nowait -chardev socket,id=char0,path=/tmp/vhost1,server -netdev type=vhost-user,id=netdev0,chardev=char0,vhostforce -device virtio-net-pci,netdev=netdev0,mac=52:54:00:00:00:01,mrg_rxbuf=on,rx_queue_size=256,tx_queue_size=256 -vnc :10

The remaining rows are identical (RUSER root, PID 48617, PPID 1, same command line) except for the LWP column, which takes these values:

    48618 48619 48672 48674 48676 48677 48678 48679 48680 48681
    48682 48683 48684 48685 48686 48687 48688 48689 48690 48782

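What is vhost

vhost is one backend implementation for virtio. As noted in the virtio introduction, virtio is a paravirtualization scheme that needs drivers on both the guest side and the host side to communicate. The host-side virtio driver is usually implemented in user space inside qemu, whereas vhost is implemented in the kernel as a module, vhost-net.ko. The next section explains what is gained by moving it into the kernel.

Why use vhost

With plain virtio, the guest talks to a user-space hypervisor, which costs several data copies and CPU privilege-level context switches. For example, when the guest sends a packet to the external network, the guest first exits to the host kernel, the host kernel then switches to qemu to handle the request, the hypervisor sends the packet out through a system call and returns to the host kernel, and finally control returns to the guest. Such a long path inevitably costs performance.

vhost was proposed as an improvement in this context. It is a module in the host kernel that communicates with the guest directly: data is exchanged between the guest and the host kernel through virtqueues, and qemu stays out of the data path. qemu does not leave the stage entirely, though; it still handles control-plane work, such as issuing control commands to KVM.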

vhost 的数据流程

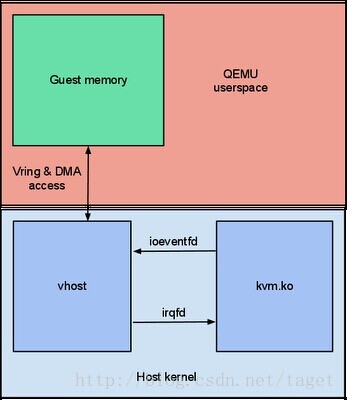

下图左半部分是 vhost 负责将数据发往外部网络的过程, 右半部分是 vhost 大概的数据交互流程图。其中,qemu 还是需要负责 virtio 设备的适配模拟,负责用户空间某些管理控制事件的处理,而 vhost 实现较为纯净,以一个独立的模块完成 guest 和 host kernel 的数据交换过程。

vhost 与 virtio 前端的通信主要采用一种事件驱动 eventfd 的机制来实现,guest 通知 vhost 的事件要借助 kvm.ko 模块来完成,vhost 初始化期间,会启动一个工作线程 work 来监听 eventfd,一旦 guest 发出对 vhost 的 kick event,kvm.ko 触发 ioeventfd 通知到 vhost,vhost 通过 virtqueue 的 avail ring 获取数据,并设置 used ring。同样,从 vhost 工作线程向 guest 通信时,也采用同样的机制,只不过这种情况发的是一个回调的 call envent,kvm.ko 触发 irqfd 通知 guest。

总结

vhost 与 kvm 的事件通信通过 eventfd 机制来实现,主要包括两个方向的 event,一个是 guest 到 vhost 方向的 kick event,通过 ioeventfd 实现;另一个是 vhost 到 guest 方向的 call event,通过 irqfd 实现。
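The eventfd semantics behind kick and call can be tried out in user space. The sketch below is only an analogy under stated assumptions: it uses two ordinary eventfds and a Python thread in place of KVM's ioeventfd/irqfd plumbing and the kernel worker, and the names `new_eventfd`, `signal_event`, `wait_event` and `round_trip` are invented for this example. It requires Linux.

```python
import ctypes
import os
import struct
import threading


def new_eventfd():
    """Create an eventfd with counter 0 (os.eventfd needs Python 3.10+)."""
    if hasattr(os, "eventfd"):
        return os.eventfd(0)
    fd = ctypes.CDLL(None, use_errno=True).eventfd(0, 0)
    if fd < 0:
        raise OSError(ctypes.get_errno(), "eventfd() failed")
    return fd


def signal_event(fd, count=1):
    """Add `count` to the eventfd counter (what a kick or call does)."""
    os.write(fd, struct.pack("=Q", count))


def wait_event(fd):
    """Block until the counter is non-zero, then return it and reset it to 0."""
    return struct.unpack("=Q", os.read(fd, 8))[0]


def round_trip(kicks=3):
    """Guest side kicks; a worker thread consumes the kick and calls back."""
    kick_fd, call_fd = new_eventfd(), new_eventfd()
    seen = []

    def worker():
        seen.append(wait_event(kick_fd))  # stands in for the vhost worker waking up
        signal_event(call_fd)             # stands in for the irqfd "call"

    # Kicks issued before the worker runs coalesce into a single counter value.
    for _ in range(kicks):
        signal_event(kick_fd)
    thread = threading.Thread(target=worker)
    thread.start()
    calls = wait_event(call_fd)
    thread.join()
    os.close(kick_fd)
    os.close(call_fd)
    return seen[0], calls


if __name__ == "__main__":
    print(round_trip())
```

The point to notice is that an eventfd is a counter, not a message queue: several kicks that arrive before the worker wakes up are seen as one wake-up, after which the worker has to drain the ring itself.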

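Vhost overview

The vhost driver in the Linux kernel provides in-kernel emulation of virtio devices for KVM. vhost moves the device emulation code out of QEMU into the Linux kernel, so that code can call kernel subsystems directly instead of trapping into the kernel from user space through system calls, which reduces the performance loss caused by emulated I/O.

vhost-net is the host-side emulation of the network card. Likewise, vhost-blk emulates block devices and vhost-scsi emulates SCSI devices.

The vhost code in the kernel lives in drivers/vhost/vhost.c.

Vhost driver model

The vhost driver creates a character device, /dev/vhost-net, which user space can open and operate on with ioctl commands. When a QEMU process is started with -netdev tap,vhost=on, QEMU issues several ioctl commands on this file descriptor to initialize it and then negotiates features, which sets up the relationship between the host's vhost-net driver and the guest. The QEMU call chain is:

    vhost_net_init -> vhost_dev_init -> vhost_net_ack_features

vhost_net_init calls vhost_dev_init; /dev/vhost-net is opened and the resulting file descriptor serves as the vhost-net backend. The ioctl commands issued by vhost_dev_init include:

    r = ioctl(hdev->control, VHOST_SET_OWNER, NULL);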

The kernel defines it as:

    /* Set current process as the (exclusive) owner of this file descriptor.  This
     * must be called before any other vhost command.  Further calls to
     * VHOST_OWNER_SET fail until VHOST_OWNER_RESET is called. */
    #define VHOST_SET_OWNER _IO(VHOST_VIRTIO, 0x01)

Next, QEMU queries the features that vhost supports:

    r = ioctl(hdev->control, VHOST_GET_FEATURES, &features);

Its kernel definition is:

    /* Features bitmask for forward compatibility.  Transport bits are used for
     * vhost specific features. */
    #define VHOST_GET_FEATURES _IOR(VHOST_VIRTIO, 0x00, __u64)

QEMU represents an open vhost_net instance with the vhost_net structure:

    struct vhost_net {
        struct vhost_dev dev;
        struct vhost_virtqueue vqs[2];
        int backend;
        NetClientState *nc;
    };

After the ioctl setup, QEMU registers the memory_listener callbacks:

    hdev->memory_listener = (MemoryListener) {
        .begin = vhost_begin,
        .commit = vhost_commit,
        .region_add = vhost_region_add,
        .region_del = vhost_region_del,
        .region_nop = vhost_region_nop,
        .log_start = vhost_log_start,
        .log_stop = vhost_log_stop,
        .log_sync = vhost_log_sync,
        .log_global_start = vhost_log_global_start,
        .log_global_stop = vhost_log_global_stop,
        .eventfd_add = vhost_eventfd_add,
        .eventfd_del = vhost_eventfd_del,
        .priority = 10
    };

vhost_region_add exists to map the QEMU guest's address space into the vhost driver.
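What such a mapping is used for can be shown with a small model. The sketch assumes a region table whose entries carry a guest physical address, a size, and the matching QEMU user-space address, mirroring the fields of the kernel's `struct vhost_memory_region`; `MemRegion`, `gpa_to_hva` and the addresses are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MemRegion:
    guest_phys_addr: int   # start of the region as the guest sees it
    memory_size: int       # length in bytes
    userspace_addr: int    # where QEMU has that memory mapped


def gpa_to_hva(regions, gpa):
    """Translate a guest physical address into a host (QEMU) virtual address."""
    for region in regions:
        end = region.guest_phys_addr + region.memory_size
        if region.guest_phys_addr <= gpa < end:
            return region.userspace_addr + (gpa - region.guest_phys_addr)
    raise LookupError(f"guest address {gpa:#x} is not covered by any region")


# Hypothetical layout: 256 MiB of guest RAM starting at 1 GiB.
REGIONS = [MemRegion(0x4000_0000, 0x1000_0000, 0x7F00_0000_0000)]

if __name__ == "__main__":
    print(hex(gpa_to_hva(REGIONS, 0x4000_1000)))
```

Descriptors in a virtqueue hold guest physical addresses, so a backend needs a table like this before it can touch any buffer the guest hands over.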

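Finally, features are negotiated:

    /* Set sane init value. Override when guest acks. */
    vhost_net_ack_features(net, 0);

Meanwhile, the kernel creates a kernel thread to handle I/O events and device emulation. In the kernel code, drivers/vhost/vhost.c, vhost_dev_set_owner creates this worker thread. The thread is named vhost-<PID of the QEMU process>, for example [vhost-49044] for QEMU PID 49044 in the ps output earlier.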

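virtio emulation in the kernel

vhost does not emulate a complete PCI device; it restricts itself to virtqueue operations.

The worker thread waits for data on the virtqueues. For vhost-net, when the tx virtqueue holds data, the worker passes it to the file descriptor of the associated tap device.

In the other direction, the worker thread also polls the tap file descriptor. For vhost-net, when data arrives on the tap file descriptor, the worker thread is woken up and moves the data into the rx queue.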
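The tx half of this loop can be modelled in a few lines. This is a deliberately simplified sketch, not the kernel implementation: descriptors hold whole packets instead of guest addresses, there are no descriptor chains or memory barriers, and the "tap device" is a plain list. `Virtqueue`, `TxWorker` and their methods are invented names.

```python
class Virtqueue:
    """Toy split virtqueue: descriptor table plus avail and used rings."""

    def __init__(self, size=4):
        self.size = size
        self.desc = [b""] * size            # descriptor table (buffers)
        self.avail_ring = [0] * size        # heads offered by the guest
        self.avail_idx = 0                  # free-running, written by the guest
        self.used_ring = [(0, 0)] * size    # (head, bytes written by device)
        self.used_idx = 0                   # free-running, written by the device

    def guest_add(self, head, data):
        """Guest side: fill descriptor `head` and publish it in the avail ring."""
        self.desc[head] = data
        self.avail_ring[self.avail_idx % self.size] = head
        self.avail_idx += 1


class TxWorker:
    """Device side: drains the avail ring into a tap-like sink."""

    def __init__(self, vq, tap):
        self.vq = vq
        self.tap = tap
        self.last_avail_idx = 0             # how far the avail ring has been consumed

    def handle_tx(self):
        vq, handled = self.vq, 0
        while self.last_avail_idx != vq.avail_idx:
            head = vq.avail_ring[self.last_avail_idx % vq.size]
            self.last_avail_idx += 1
            self.tap.append(vq.desc[head])  # "send" the packet to the tap device
            # Nothing is written into a tx buffer, so the used length is 0.
            vq.used_ring[vq.used_idx % vq.size] = (head, 0)
            vq.used_idx += 1
            handled += 1
        return handled


if __name__ == "__main__":
    vq, tap = Virtqueue(), []
    vq.guest_add(2, b"ping")
    print(TxWorker(vq, tap).handle_tx(), tap)
```

The rx direction is symmetric: the guest posts empty buffers in the avail ring, and the worker fills them with data read from the tap device before marking them used.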

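The user-space interface of vhost

Once data is ready, how is the other side notified?

The module dependencies show that vhost does not depend on the kvm module. In theory, any other application that implements the vhost interface can use vhost to accelerate data transfer.

But precisely because vhost is independent of kvm, after a QEMU (KVM) guest places data on the tx virtqueue it has no direct way to tell vhost that the data is ready.

vhost therefore sets up an eventfd file descriptor that is monitored by the worker thread mentioned above, so vhost can be told that data is ready by signalling the eventfd.

QEMU does it as follows: it registers an ioeventfd. When the guest triggers an I/O exit, KVM catches it and signals the eventfd, telling vhost that the guest has data ready. Because the worker thread monitors this eventfd, it learns from the signal that the guest has put data on the tx queue and can start processing it.

In the other direction, vhost raises a guest interrupt through the irqfd provided by KVM to tell the guest that the buffers have been placed on the rx virtqueue. QEMU (KVM) registers this irqfd for the device's PCI interrupt, so the guest learns that the data from kernel space is ready and its driver reads it.

So, in summary, a vhost instance needs to know three things:

- the guest memory mapping, which contains the virtqueues used for data transfer

- the kick eventfd set up by qemu, through which vhost receives notifications from the guest; the worker thread picks these up

- the call event (irqfd), used to notify the guest

All three are prepared when QEMU initializes. The data transfer then completes entirely in kernel space without QEMU's intervention, which is why transferring data through vhost is efficient.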