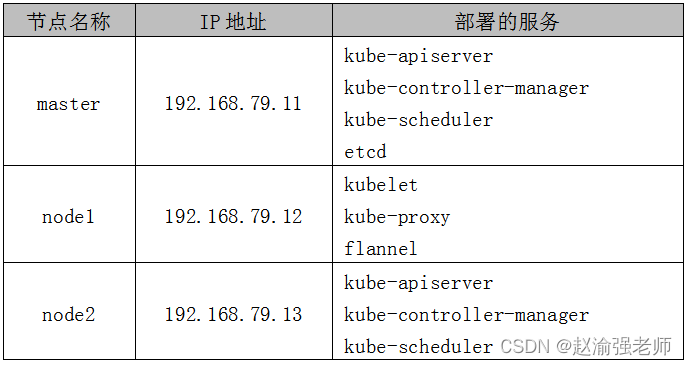

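In some enterprise private environments, access to external networks may be unavailable. To deploy a Kubernetes cluster in such an environment, you can install Kubernetes offline, that is, deploy the cluster from binary packages. The version used here is Kubernetes v1.18.20.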

The following steps demonstrate how to deploy a three-node Kubernetes cluster from binary packages.

1. Deploy ETCD

(1) Download the ETCD binary package "etcd-v3.3.27-linux-amd64.tar.gz" from GitHub.

(2) Download the required files from the official cfssl website and install cfssl.

chmod +x cfssl_linux-amd64 cfssljson_linux-amd64

mv cfssl_linux-amd64 /usr/local/bin/cfssl

mv cfssljson_linux-amd64 /usr/local/bin/cfssljson

Note: cfssl is a command-line toolkit that contains everything needed to run a certificate authority.

(3) Create the configuration files used to generate the CA certificate and private key by running the following commands:

mkdir -p /opt/ssl/etcd

cd /opt/ssl/etcd

cfssl print-defaults config > config.json

cfssl print-defaults csr > csr.json

cat > config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "87600h"

}

}

}

}

EOF

cat > csr.json <<EOF

{

"CN": "etcd",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}]

}

EOF

(4) Generate the CA certificate and private key.

cfssl gencert -initca csr.json | cfssljson -bare etcd

(5) In the directory "/opt/ssl/etcd", add the file "etcd-csr.json", which is used to generate the ETCD certificate and private key, with the following content:

cat > etcd-csr.json <<EOF

{

"CN": "etcd",

"hosts": [

"192.168.79.11"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "etcd",

"OU": "Etcd Security"

}

]

}

EOF

Note: Only one ETCD node is deployed here. To deploy an ETCD cluster, add the addresses of the other ETCD nodes to the "hosts" field.
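As a minimal sketch of the multi-node case mentioned in the note, the helper below prints an etcd-csr.json whose "hosts" array lists every address passed to it. The function name `make_etcd_csr` is hypothetical, and using 192.168.79.12 and 192.168.79.13 as additional ETCD members is an assumption made only for illustration.

```shell
#!/bin/bash
# Hypothetical helper: print an etcd-csr.json whose "hosts" array contains
# every IP address passed as an argument.
make_etcd_csr() {
  local hosts="" ip
  for ip in "$@"; do
    # Join the addresses as quoted, comma-separated JSON strings.
    hosts="${hosts:+$hosts, }\"$ip\""
  done
  cat <<EOF
{
  "CN": "etcd",
  "hosts": [$hosts],
  "key": { "algo": "rsa", "size": 2048 },
  "names": [
    { "C": "CN", "ST": "BeiJing", "L": "BeiJing", "O": "etcd", "OU": "Etcd Security" }
  ]
}
EOF
}

# Example (assumed member addresses):
# make_etcd_csr 192.168.79.11 192.168.79.12 192.168.79.13 > etcd-csr.json
```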

(6) Install ETCD.

tar -zxvf etcd-v3.3.27-linux-amd64.tar.gz

cd etcd-v3.3.27-linux-amd64

cp etcd* /usr/local/bin

mkdir -p /opt/platform/etcd/

(7) Edit the file "/opt/platform/etcd/etcd.conf" and add the ETCD configuration as follows:

ETCD_NAME=k8s-etcd

ETCD_DATA_DIR="/var/lib/etcd/k8s-etcd"

ETCD_LISTEN_PEER_URLS="http://192.168.79.11:2380"

ETCD_LISTEN_CLIENT_URLS="http://127.0.0.1:2379,http://192.168.79.11:2379"

ETCD_INITIAL_ADVERTISE_PEER_URLS="http://192.168.79.11:2380"

ETCD_INITIAL_CLUSTER="k8s-etcd=http://192.168.79.11:2380"

ETCD_INITIAL_CLUSTER_STATE="new"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-test"

ETCD_ADVERTISE_CLIENT_URLS="http://192.168.79.11:2379"

(8) Register ETCD as a system service by editing the file "/usr/lib/systemd/system/etcd.service" with the following content:

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/opt/platform/etcd/etcd.conf

ExecStart=/usr/local/bin/etcd \

--cert-file=/opt/ssl/etcd/etcd.pem \

--key-file=/opt/ssl/etcd/etcd-key.pem \

--peer-cert-file=/opt/ssl/etcd/etcd.pem \

--peer-key-file=/opt/ssl/etcd/etcd-key.pem \

--trusted-ca-file=/opt/ssl/etcd/etcd.pem \

--peer-trusted-ca-file=/opt/ssl/etcd/etcd.pem

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

(9) Create the ETCD data directory, then start the ETCD service.

mkdir -p /opt/platform/etcd/data

chmod 755 /opt/platform/etcd/data

systemctl daemon-reload

systemctl enable etcd.service

systemctl start etcd.service

(10) Verify the ETCD status.

etcdctl cluster-health

The output is as follows:

member fd4d0bd2446259d9 is healthy:

got healthy result from http://192.168.79.11:2379

cluster is healthy

(11) List the ETCD members.

etcdctl member list

The output is as follows:

fd4d0bd2446259d9: name=k8s-etcd peerURLs=http://192.168.79.11:2380 clientURLs=http://192.168.79.11:2379 isLeader=true

Note: Because this is a single-node ETCD deployment, only one member is listed.

(12) Copy the ETCD certificate files to node1 and node2.

cd /opt

scp -r ssl/ root@node1:/opt

scp -r ssl/ root@node2:/opt

2. Deploy the Flannel network

(1) On the master node, write the allocated subnet range into ETCD for Flannel to use:

etcdctl set /coreos.com/network/config '{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'

(2) On the master node, view the Flannel subnet information that was written:

etcdctl get /coreos.com/network/config

The output is as follows:

{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}

(3) On node1, extract the flannel-v0.10.0-linux-amd64.tar.gz package:

tar -zxvf flannel-v0.10.0-linux-amd64.tar.gz

(4) On node1, create the Kubernetes working directories.

mkdir -p /opt/kubernetes/{cfg,bin,ssl}

mv mk-docker-opts.sh flanneld /opt/kubernetes/bin/

(5) On node1, create the Flannel script file "flannel.sh" with the following content:

#!/bin/bash

ETCD_ENDPOINTS=${1}

cat <<EOF >/opt/kubernetes/cfg/flanneld

FLANNEL_OPTIONS="--etcd-endpoints=${ETCD_ENDPOINTS} \

-etcd-cafile=/opt/ssl/etcd/etcd.pem \

-etcd-certfile=/opt/ssl/etcd/etcd.pem \

-etcd-keyfile=/opt/ssl/etcd/etcd-key.pem"

EOF

cat <<EOF >/usr/lib/systemd/system/flanneld.service

[Unit]

Description=Flanneld overlay address etcd agent

After=network-online.target network.target

Before=docker.service

[Service]

Type=notify

EnvironmentFile=/opt/kubernetes/cfg/flanneld

ExecStart=/opt/kubernetes/bin/flanneld --ip-masq \$FLANNEL_OPTIONS

ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable flanneld

systemctl restart flanneld

(6) On node1, enable the Flannel network:

bash flannel.sh http://192.168.79.11:2379

Note: The argument is the address of the ETCD instance deployed on the master node.

(7) On node1, check the status of the Flannel network:

systemctl status flanneld

The output is as follows:

flanneld.service - Flanneld overlay address etcd agent

Loaded: loaded (/usr/lib/systemd/system/flanneld.service; enabled; vendor preset: disabled)

Active: active (running) since Tue 2022-02-08 22:30:46 CST; 6s ago

(8) On node1, edit the file "/usr/lib/systemd/system/docker.service" so that Docker on node1 connects to the Flannel network. Add the following line to the file:

... ...

EnvironmentFile=/run/flannel/subnet.env

... ...
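The EnvironmentFile line only loads variables into the Docker unit. For dockerd to actually use them, its command line typically also has to reference $DOCKER_NETWORK_OPTIONS, the key that flannel.sh passes to mk-docker-opts.sh with -k. The sketch below applies both changes to a unit file. It assumes GNU sed and an ExecStart line that invokes dockerd; the function name `patch_docker_unit` is hypothetical, and it is safer to try it on a copy of the unit file first.

```shell
#!/bin/bash
# Hypothetical helper: make a docker.service file load the Flannel
# environment file and pass $DOCKER_NETWORK_OPTIONS to dockerd.
patch_docker_unit() {
  local unit_file=$1
  # Add the EnvironmentFile line right after [Service] if it is missing.
  if ! grep -q '^EnvironmentFile=/run/flannel/subnet.env' "$unit_file"; then
    sed -i '/^\[Service\]/a EnvironmentFile=/run/flannel/subnet.env' "$unit_file"
  fi
  # Insert $DOCKER_NETWORK_OPTIONS after "dockerd" unless it is already there.
  if ! grep -q '^ExecStart=.*DOCKER_NETWORK_OPTIONS' "$unit_file"; then
    sed -i 's|^\(ExecStart=.*dockerd\)\(.*\)$|\1 $DOCKER_NETWORK_OPTIONS\2|' "$unit_file"
  fi
}

# Example: patch_docker_unit /usr/lib/systemd/system/docker.service
```

The function is idempotent, so running it twice leaves a single EnvironmentFile line and a single $DOCKER_NETWORK_OPTIONS reference.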

(9) On node1, restart the Docker service.

systemctl daemon-reload

systemctl restart docker.service

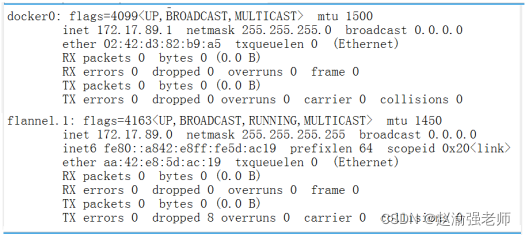

(10) View the Flannel network information on node1, as shown in Figure 13-3:

ifconfig

(11) Configure the Flannel network on node2 by repeating steps (3) through (10).

3. Deploy the master node

(1) Create the certificate directory for the Kubernetes cluster.

mkdir -p /opt/ssl/k8s

cd /opt/ssl/k8s

(2) Create the script file "k8s-cert.sh", which generates the certificates for the Kubernetes cluster, with the following content:

cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "87600h"

}

}

}

}

EOF

cat > ca-csr.json <<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}]

}

EOF

cfssl gencert -initca ca-csr.json | cfssljson -bare ca

cat >server-csr.json<<EOF

{

"CN": "kubernetes",

"hosts": [

"192.168.79.11",

"127.0.0.1",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem \

-config=ca-config.json -profile=kubernetes \

server-csr.json | cfssljson -bare server

cat >admin-csr.json <<EOF

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem \

-config=ca-config.json -profile=kubernetes \

admin-csr.json | cfssljson -bare admin

cat > kube-proxy-csr.json <<EOF

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem \

-config=ca-config.json -profile=kubernetes \

kube-proxy-csr.json | cfssljson -bare kube-proxy

(3) Run the script file "k8s-cert.sh".

bash k8s-cert.sh
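Before copying the certificates, it can help to confirm that the script produced everything the later steps rely on. The sketch below checks for the certificate and key of each of the four names that k8s-cert.sh passes to `cfssljson -bare`; the function name `missing_certs` is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: list the certificate files that k8s-cert.sh is
# expected to produce but that are missing from the given directory.
missing_certs() {
  local dir=$1 name suffix
  for name in ca server admin kube-proxy; do
    # cfssljson -bare NAME writes NAME.pem and NAME-key.pem.
    for suffix in .pem -key.pem; do
      [ -f "$dir/$name$suffix" ] || echo "$name$suffix"
    done
  done
}

# Example: missing_certs /opt/ssl/k8s   (prints nothing if all files exist)
```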

(4) Copy the certificates.

mkdir -p /opt/kubernetes/ssl/

mkdir -p /opt/kubernetes/logs/

cp ca*pem server*pem /opt/kubernetes/ssl/

(5) Extract the Kubernetes archive.

tar -zxvf kubernetes-server-linux-amd64.tar.gz

(6) Copy the key binaries.

mkdir -p /opt/kubernetes/bin/

cd kubernetes/server/bin/

cp kube-apiserver kube-scheduler kube-controller-manager \

/opt/kubernetes/bin

cp kubectl /usr/local/bin/

(7) Generate a random token string.

mkdir -p /opt/kubernetes/cfg

head -c 16 /dev/urandom | od -An -t x | tr -d ' '

The output is as follows:

05cd8031b0c415de2f062503b0cd4ee6

(8) Create the file "/opt/kubernetes/cfg/token.csv" with the following content:

05cd8031b0c415de2f062503b0cd4ee6,kubelet-bootstrap,10001,"system:node-bootstrapper"
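Steps (7) and (8) can be combined so that the token never has to be copied by hand. This is a minimal sketch: the function names are hypothetical, and the record layout (token, user name, UID, quoted group) simply mirrors the line shown above.

```shell
#!/bin/bash
# Generate a 32-character hexadecimal token with the same pipeline as step (7),
# additionally stripping the trailing newline.
new_bootstrap_token() {
  head -c 16 /dev/urandom | od -An -t x | tr -d ' \n'
}

# Print one token.csv record for the kubelet-bootstrap user.
token_csv_line() {
  printf '%s,kubelet-bootstrap,10001,"system:node-bootstrapper"\n' "$1"
}

# Example:
# token=$(new_bootstrap_token)
# token_csv_line "$token" > /opt/kubernetes/cfg/token.csv
```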

(9) Create the API Server configuration file "/opt/kubernetes/cfg/kube-apiserver.conf" with the following content:

KUBE_APISERVER_OPTS="--logtostderr=false \

--v=2 \

--log-dir=/opt/kubernetes/logs \

--etcd-servers=http://192.168.79.11:2379 \

--bind-address=192.168.79.11 \

--secure-port=6443 \

--advertise-address=192.168.79.11 \

--allow-privileged=true \

--service-cluster-ip-range=10.0.0.0/24 \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \

--authorization-mode=RBAC,Node \

--enable-bootstrap-token-auth=true \

--token-auth-file=/opt/kubernetes/cfg/token.csv \

--service-node-port-range=30000-32767 \

--kubelet-client-certificate=/opt/kubernetes/ssl/server.pem \

--kubelet-client-key=/opt/kubernetes/ssl/server-key.pem \

--tls-cert-file=/opt/kubernetes/ssl/server.pem \

--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \

--client-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \

--etcd-cafile=/opt/ssl/etcd/etcd.pem \

--etcd-certfile=/opt/ssl/etcd/etcd.pem \

--etcd-keyfile=/opt/ssl/etcd/etcd-key.pem \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--audit-log-path=/opt/kubernetes/logs/k8s-audit.log"

(10) Manage the API Server with systemd by running the following command:

cat > /usr/lib/systemd/system/kube-apiserver.service << EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-apiserver.conf

ExecStart=/opt/kubernetes/bin/kube-apiserver \$KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

(11) Start the API Server.

systemctl daemon-reload

systemctl start kube-apiserver

systemctl enable kube-apiserver

(12) Check the status of the API Server.

systemctl status kube-apiserver.service

The output is as follows:

kube-apiserver.service - Kubernetes API Server

Loaded: loaded (/usr/lib/systemd/system/kube-apiserver.service; enabled; vendor preset: disabled)

Active: active (running) since Tue 2022-02-08 21:11:47 CST; 24min ago

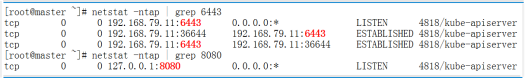

(13) Check that ports 6443 and 8080 are listening, as shown in Figure 13-4.

netstat -ntap | grep 6443

netstat -ntap | grep 8080

(14) Authorize the kubelet-bootstrap user to request certificates.

kubectl create clusterrolebinding kubelet-bootstrap \

--clusterrole=system:node-bootstrapper \

--user=kubelet-bootstrap

(15) Create the kube-controller-manager configuration file by running the following command:

cat > /opt/kubernetes/cfg/kube-controller-manager.conf << EOF

KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=false \

--v=2 \

--log-dir=/opt/kubernetes/logs \

--leader-elect=true \

--master=127.0.0.1:8080 \

--bind-address=127.0.0.1 \

--allocate-node-cidrs=true \

--cluster-cidr=10.244.0.0/16 \

--service-cluster-ip-range=10.0.0.0/24 \

--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \

--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \

--root-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \

--experimental-cluster-signing-duration=87600h0m0s"

EOF

(16) Manage kube-controller-manager with systemd by running the following command:

cat > /usr/lib/systemd/system/kube-controller-manager.service << EOF

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-controller-manager.conf

ExecStart=/opt/kubernetes/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

(17) Start kube-controller-manager.

systemctl daemon-reload

systemctl start kube-controller-manager

systemctl enable kube-controller-manager

(18) Check the status of kube-controller-manager.

systemctl status kube-controller-manager

The output is as follows:

kube-controller-manager.service - Kubernetes Controller Manager

Loaded: loaded (/usr/lib/systemd/system/kube-controller-manager.service; enabled; vendor preset: disabled)

Active: active (running) since Tue 2022-02-08 20:42:08 CST; 1h 2min ago

(19) Create the kube-scheduler configuration file by running the following command:

cat > /opt/kubernetes/cfg/kube-scheduler.conf << EOF

KUBE_SCHEDULER_OPTS="--logtostderr=false \

--v=2 \

--log-dir=/opt/kubernetes/logs \

--leader-elect \

--master=127.0.0.1:8080 \

--bind-address=127.0.0.1"

EOF

(20) Manage kube-scheduler with systemd by running the following command:

cat > /usr/lib/systemd/system/kube-scheduler.service << EOF

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-scheduler.conf

ExecStart=/opt/kubernetes/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

(21) Start kube-scheduler.

systemctl daemon-reload

systemctl start kube-scheduler

systemctl enable kube-scheduler

(22) Check the status of kube-scheduler.

systemctl status kube-scheduler.service

The output is as follows:

kube-scheduler.service - Kubernetes Scheduler

Loaded: loaded (/usr/lib/systemd/system/kube-scheduler.service; enabled; vendor preset: disabled)

Active: active (running) since Tue 2022-02-08 20:43:01 CST; 1h 8min ago

(23) Check the status of the components on the master node.

kubectl get cs

The output is as follows:

NAME STATUS MESSAGE ERROR

etcd-0 Healthy {"health":"true"}

controller-manager Healthy ok

scheduler Healthy ok
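When this check is scripted rather than read by eye, the same output can be parsed. The sketch below assumes the column layout shown above (NAME first, STATUS second); the function name `all_components_healthy` is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: read `kubectl get cs` output on stdin and succeed only
# if at least one component is listed and every STATUS column reads "Healthy".
all_components_healthy() {
  awk 'NR > 1 && NF > 0 { seen = 1; if ($2 != "Healthy") bad = 1 }
       END { exit (seen && !bad) ? 0 : 1 }'
}

# Example:
# kubectl get cs | all_components_healthy && echo "control plane is healthy"
```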

4. Deploy the worker nodes

(1) On the master node, create the script file "kubeconfig" with the following content:

APISERVER=${1}

SSL_DIR=${2}

# Create the kubelet bootstrapping kubeconfig

export KUBE_APISERVER="https://$APISERVER:6443"

# Set the cluster parameters

kubectl config set-cluster kubernetes \

--certificate-authority=$SSL_DIR/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=bootstrap.kubeconfig

# Set the client authentication parameters

# Note: the token here must match the token in the token.csv file.

kubectl config set-credentials kubelet-bootstrap \

--token=05cd8031b0c415de2f062503b0cd4ee6 \

--kubeconfig=bootstrap.kubeconfig

# Set the context parameters

kubectl config set-context default \

--cluster=kubernetes \

--user=kubelet-bootstrap \

--kubeconfig=bootstrap.kubeconfig

# Set the default context

kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

#----------------------

# Create the kube-proxy kubeconfig file

kubectl config set-cluster kubernetes \

--certificate-authority=$SSL_DIR/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy \

--client-certificate=$SSL_DIR/kube-proxy.pem \

--client-key=$SSL_DIR/kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-proxy \

--kubeconfig=kube-proxy.kubeconfig

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig
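Because the script hard-codes the bootstrap token, it has to be edited whenever token.csv changes. One way to avoid that, sketched here under the assumption that token.csv keeps the layout created earlier (token in the first field, user name in the second), is to read the token from the file; the function name `bootstrap_token_from_csv` is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: print the token of the kubelet-bootstrap user from a
# token.csv file whose fields are token,user,uid,"group".
bootstrap_token_from_csv() {
  awk -F, '$2 == "kubelet-bootstrap" { print $1; exit }' "$1"
}

# Example use inside the kubeconfig script:
# --token=$(bootstrap_token_from_csv /opt/kubernetes/cfg/token.csv)
```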

(2) Run the script file "kubeconfig".

bash kubeconfig 192.168.79.11 /opt/ssl/k8s/

The output is as follows:

Cluster "kubernetes" set.

User "kubelet-bootstrap" set.

Context "default" created.

Switched to context "default".

Cluster "kubernetes" set.

User "kube-proxy" set.

Context "default" created.

Switched to context "default".

(3) Copy the configuration files generated on the master node to node1 and node2.

scp bootstrap.kubeconfig kube-proxy.kubeconfig \

root@node1:/opt/kubernetes/cfg/

scp bootstrap.kubeconfig kube-proxy.kubeconfig \

root@node2:/opt/kubernetes/cfg/

(4) On node1, extract the file "kubernetes-node-linux-amd64.tar.gz".

tar -zxvf kubernetes-node-linux-amd64.tar.gz

(5) On node1, copy kubelet and kube-proxy to the directory "/opt/kubernetes/bin/".

cd kubernetes/node/bin/

cp kubelet kube-proxy /opt/kubernetes/bin/

(6) On node1, create the script file "kubelet.sh" with the following content:

#!/bin/bash

NODE_ADDRESS=$1

DNS_SERVER_IP=${2:-"10.0.0.2"}

cat <<EOF >/opt/kubernetes/cfg/kubelet

KUBELET_OPTS="--logtostderr=true \\

--v=4 \\

--hostname-override=${NODE_ADDRESS} \\

--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \\

--bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \\

--config=/opt/kubernetes/cfg/kubelet.config \\

--cert-dir=/opt/kubernetes/ssl \\

--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"

EOF

cat <<EOF >/opt/kubernetes/cfg/kubelet.config

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: ${NODE_ADDRESS}

port: 10250

readOnlyPort: 10255

cgroupDriver: systemd

clusterDNS:

- ${DNS_SERVER_IP}

clusterDomain: cluster.local.

failSwapOn: false

authentication:

anonymous:

enabled: true

EOF

cat <<EOF >/usr/lib/systemd/system/kubelet.service

[Unit]

Description=Kubernetes Kubelet

After=docker.service

Requires=docker.service

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kubelet

ExecStart=/opt/kubernetes/bin/kubelet \$KUBELET_OPTS

Restart=on-failure

KillMode=process

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kubelet

systemctl restart kubelet

(7) On node1, run the script file "kubelet.sh".

bash kubelet.sh 192.168.79.12

Note: The argument is the IP address of node1.
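kubelet.sh also accepts an optional second argument, the cluster DNS address, which defaults to 10.0.0.2. That address is expected to fall inside the range given to the API Server with --service-cluster-ip-range (10.0.0.0/24 in this deployment), because the cluster DNS Service takes its address from that range. The sketch below checks this with plain shell arithmetic; both function names are hypothetical and only IPv4 is handled.

```shell
#!/bin/bash
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  # Split the address on "." and combine the four octets.
  local IFS=.
  set -- $1
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Succeed if the address in $1 lies inside the CIDR block in $2.
ip_in_cidr() {
  local ip=$1 net=${2%/*} bits=${2#*/}
  # Build the network mask from the prefix length.
  local mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  [ $(( $(ip_to_int "$ip") & mask )) -eq $(( $(ip_to_int "$net") & mask )) ]
}

# Example:
# ip_in_cidr 10.0.0.2 10.0.0.0/24 && echo "DNS address is inside the service range"
```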

(8) On node1, check the status of the kubelet.

systemctl status kubelet

The output is as follows:

kubelet.service - Kubernetes Kubelet

Loaded: loaded (/usr/lib/systemd/system/kubelet.service; enabled; vendor preset: disabled)

Active: active (running) since Tue 2022-02-08 23:23:52 CST; 3min 18s ago

(9) On node1, create the script file "proxy.sh" with the following content:

#!/bin/bash

NODE_ADDRESS=$1

cat <<EOF >/opt/kubernetes/cfg/kube-proxy

KUBE_PROXY_OPTS="--logtostderr=true \\

--v=4 \\

--hostname-override=${NODE_ADDRESS} \\

--cluster-cidr=10.0.0.0/24 \\

--proxy-mode=ipvs \\

--kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig"

EOF

cat <<EOF >/usr/lib/systemd/system/kube-proxy.service

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-proxy

ExecStart=/opt/kubernetes/bin/kube-proxy \$KUBE_PROXY_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-proxy

systemctl restart kube-proxy

(10) On node1, run the script file "proxy.sh".

bash proxy.sh 192.168.79.12

(11) On node1, check the status of kube-proxy.

systemctl status kube-proxy.service

The output is as follows:

kube-proxy.service - Kubernetes Proxy

Loaded: loaded (/usr/lib/systemd/system/kube-proxy.service; enabled; vendor preset: disabled)

Active: active (running) since Tue 2022-02-08 23:30:51 CST; 9s ago

(12) On the master node, check the request from node1 to join the cluster:

kubectl get csr

The output is as follows:

NAME ... CONDITION

node-csr-Qc2wKIo6AIWh6AXKW6tNwAvUqpxEIXFPHkkIe1jzSBE ... Pending

(13) On the master node, approve the request from node1:

kubectl certificate approve \

node-csr-Qc2wKIo6AIWh6AXKW6tNwAvUqpxEIXFPHkkIe1jzSBE
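Copying the long request name by hand is error-prone. As a sketch, the pending names can be extracted from the `kubectl get csr` output, assuming CONDITION is the last column as shown above; the function name `pending_csr_names` is hypothetical. Review the list before approving, since this approves every pending request.

```shell
#!/bin/bash
# Hypothetical helper: read `kubectl get csr` output on stdin and print the
# names of the requests whose last column (CONDITION) is "Pending".
pending_csr_names() {
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Example:
# for name in $(kubectl get csr | pending_csr_names); do
#   kubectl certificate approve "$name"
# done
```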

(14) On the master node, view the nodes in the Kubernetes cluster:

kubectl get node

The output is as follows:

NAME STATUS ROLES AGE VERSION

192.168.79.12 Ready <none> 85s v1.18.20

Note: node1 has now successfully joined the Kubernetes cluster.

(15) On node2, repeat steps (4) through (14) to add node2 to the cluster in the same way.

(16) On the master node, view the nodes in the Kubernetes cluster:

kubectl get node

The output is as follows:

NAME STATUS ROLES AGE VERSION

192.168.79.12 Ready <none> 5m47s v1.18.20

192.168.79.13 Ready <none> 11s v1.18.20
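For an automated final check, the number of Ready nodes can be counted from the same output. This sketch assumes STATUS is the second column, as shown above; the function name `nodes_ready_count` is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: read `kubectl get node` output on stdin and print how
# many nodes report the status "Ready".
nodes_ready_count() {
  awk 'NR > 1 && $2 == "Ready" { n++ } END { print n + 0 }'
}

# Example: expect both worker nodes to be Ready.
# [ "$(kubectl get node | nodes_ready_count)" -eq 2 ] && echo "all nodes are Ready"
```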

This completes the deployment of a three-node Kubernetes cluster from binary packages.