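Load Balancing Tomcat with Nginx Using Docker Compose

How the nginx reverse proxy works

nginx fronts a Tomcat cluster of two or more instances: it accepts client requests on a single port and forwards each one to a Tomcat container in the upstream group, so clients never address a Tomcat instance directly.

Pull the tomcat image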

docker-compose.yml

    version: "3"
    services:
      nginx:
        image: nginx
        container_name: c_nginxtomcat
        ports:
          - "80:2438"
        volumes:
          - ./nginx/default.conf:/etc/nginx/conf.d/default.conf # mount the nginx config file
        depends_on:
          - tomcat1
          - tomcat2
          - tomcat3
      tomcat1:
        image: tomcat
        container_name: c_tomcat1 # container name; must match the entries in default.conf
        volumes:
          - ./tomcat1:/usr/local/tomcat/webapps/ROOT
      tomcat2:
        image: tomcat
        container_name: c_tomcat2
        volumes:
          - ./tomcat2:/usr/local/tomcat/webapps/ROOT
      tomcat3:
        image: tomcat
        container_name: c_tomcat3
        volumes:
          - ./tomcat3:/usr/local/tomcat/webapps/ROOT

default.conf

    upstream tomcats {
        server c_tomcat1:8080; # container name; must match docker-compose.yml
        server c_tomcat2:8080; # 8080 is Tomcat's default port
        server c_tomcat3:8080; # round-robin is the default strategy
    }

    server {
        listen 2438;
        server_name localhost;

        location / {
            proxy_pass http://tomcats; # forward requests to the tomcats upstream
        }
    }

tomcat1/index.html

    tomcat1

Create tomcat2/index.html and tomcat3/index.html the same way as tomcat1, each containing its own instance name.
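The three pages can also be generated with a short script instead of by hand. This is a minimal sketch, assuming the directories sit next to docker-compose.yml as in the volume mounts above; `write_index_pages` is a hypothetical helper name, not something from the original setup.

```python
from pathlib import Path


def write_index_pages(base_dir=".", names=("tomcat1", "tomcat2", "tomcat3")):
    """Create <base_dir>/<name>/index.html whose body is just the instance name."""
    created = []
    for name in names:
        page = Path(base_dir) / name / "index.html"
        page.parent.mkdir(parents=True, exist_ok=True)  # create tomcatN/ if missing
        page.write_text(name + "\n", encoding="utf-8")
        created.append(page)
    return created
```

Calling `write_index_pages()` from the project directory before `docker-compose up -d` gives each Tomcat container a page that identifies which instance served the request.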

Run docker-compose

    docker-compose up -d

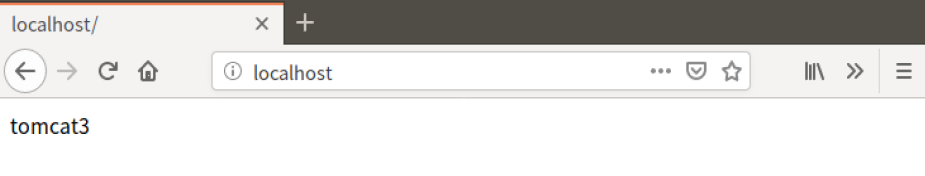

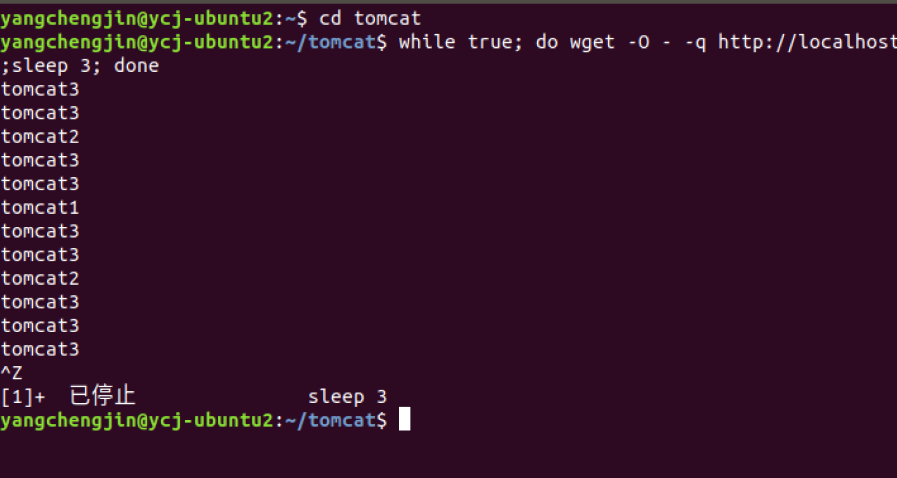

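Refresh the browser a few times. With the default round-robin strategy, successive requests should be served by tomcat1, tomcat2, and tomcat3 in turn.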
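Refreshing by hand works, but counting the responses makes the distribution easier to see. The sketch below assumes the stack is running, that nginx is reachable at `http://localhost/` (host port 80 in docker-compose.yml), and that each index.html contains only its instance name. `fetch_body` and `tally_backends` are illustrative names.

```python
from collections import Counter
from urllib.request import urlopen


def fetch_body(url="http://localhost/"):
    """Return the stripped response body of one GET request."""
    with urlopen(url, timeout=5) as response:
        return response.read().decode("utf-8").strip()


def tally_backends(n_requests, fetch=fetch_body):
    """Send n_requests requests and count how often each body came back."""
    return Counter(fetch() for _ in range(n_requests))
```

With the containers up, `print(tally_backends(30))` should show roughly equal counts for the three instances under round-robin. The `fetch` parameter exists so the counting logic can be exercised without a live server.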

Testing load balancing with the weight strategy

Modify default.conf

    upstream tomcats {
        server c_tomcat1:8080 weight=1; # container name; must match docker-compose.yml
        server c_tomcat2:8080 weight=2; # 8080 is Tomcat's default port
        server c_tomcat3:8080 weight=7; # use the weight strategy
    }

    server {
        listen 2438;
        server_name localhost;

        location / {
            proxy_pass http://tomcats; # forward requests to the tomcats upstream
        }
    }

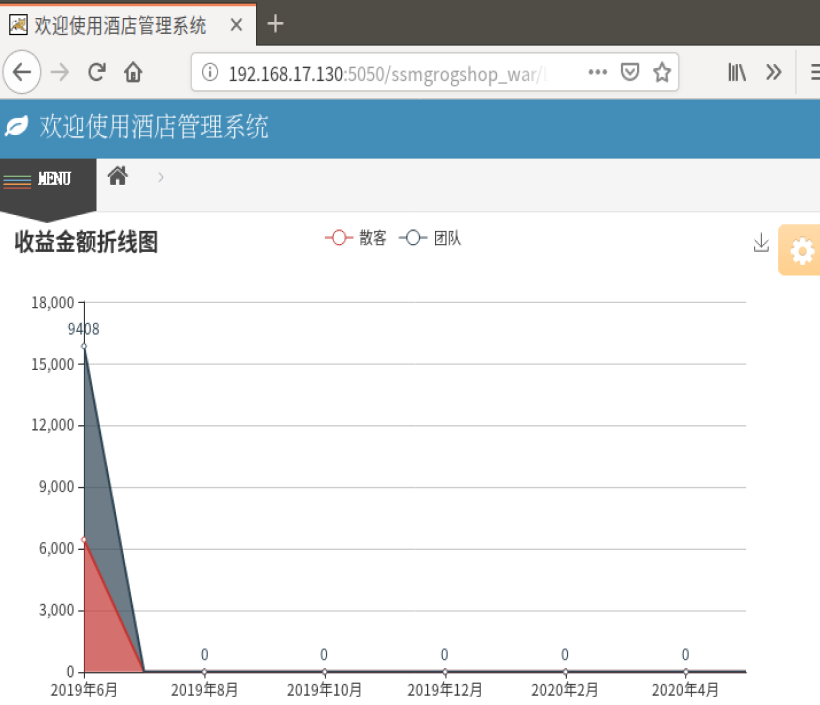
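To see what weights of 1, 2, and 7 mean for the request distribution, the selection can be simulated offline. The sketch below follows the smooth weighted round-robin idea, where each server accumulates its weight every round and the winner is reduced by the total. Treat it as an illustration of the 1:2:7 split rather than nginx's exact implementation; `weighted_round_robin` is a hypothetical name.

```python
def weighted_round_robin(weights, n_requests):
    """Return the server chosen for each of n_requests requests.

    weights maps server name -> configured weight. Every round each server's
    running score grows by its weight; the highest score wins and is then
    reduced by the total weight, which spreads the picks out evenly.
    """
    total = sum(weights.values())
    current = {name: 0 for name in weights}
    picks = []
    for _ in range(n_requests):
        for name, weight in weights.items():
            current[name] += weight
        chosen = max(current, key=current.get)
        current[chosen] -= total
        picks.append(chosen)
    return picks


picks = weighted_round_robin({"c_tomcat1": 1, "c_tomcat2": 2, "c_tomcat3": 7}, 10)
print({name: picks.count(name) for name in sorted(set(picks))})
# prints {'c_tomcat1': 1, 'c_tomcat2': 2, 'c_tomcat3': 7}
```

Out of every 10 requests, tomcat3 receives 7, tomcat2 receives 2, and tomcat1 receives 1, which is the proportion to look for when refreshing the browser.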

Deploying a Java web runtime environment with Docker Compose

docker-compose.yml

    version: '2'
    services:
      tomcat:
        image: tomcat
        hostname: hostname
        container_name: c_tomcat_javaweb
        ports:
          - "5050:8080"
        volumes:
          - "./webapps:/usr/local/tomcat/webapps"
          - ./wait-for-it.sh:/wait-for-it.sh
        networks:
          webnet:
            ipv4_address: 15.22.0.15
      mysql:
        build: . # build MySQL from the MySQL Dockerfile
        image: mysql
        container_name: c_mysql_javaweb
        ports:
          - "3309:3306"
        command:
          - '--character-set-server=utf8mb4'
          - '--collation-server=utf8mb4_unicode_ci'
        environment:
          MYSQL_ROOT_PASSWORD: "123456"
        networks:
          webnet:
            ipv4_address: 15.22.0.6
      nginx:
        image: nginx
        container_name: c_nginx_javaweb
        ports:
          - "8080:8080"
        volumes:
          - ./default.conf:/etc/nginx/conf.d/default.conf # mount the nginx config file
        networks:
          webnet:
            ipv4_address: 15.22.0.7
    networks:
      webnet:
        driver: bridge
        ipam:
          config:
            - subnet: 15.22.0.0/24
              gateway: 15.22.0.2
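Because every service pins a static `ipv4_address`, each address has to fall inside the `subnet` declared for `webnet`, or the container will not start on that network. A quick sanity check, as a sketch using Python's standard `ipaddress` module (`addresses_outside_subnet` is an illustrative helper name):

```python
import ipaddress


def addresses_outside_subnet(subnet, addresses):
    """Return the names whose address does not fall inside subnet."""
    network = ipaddress.ip_network(subnet)
    return [name for name, addr in addresses.items()
            if ipaddress.ip_address(addr) not in network]


# Values copied from the compose file above.
static_ips = {
    "tomcat": "15.22.0.15",
    "mysql": "15.22.0.6",
    "nginx": "15.22.0.7",
    "gateway": "15.22.0.2",
}
print(addresses_outside_subnet("15.22.0.0/24", static_ips))  # prints []
```

An empty list means all addresses are consistent with the subnet; any name that is printed points at an entry to correct.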

default.conf

    upstream tomcat123 {
        server c_tomcat_javaweb:8080;
    }

    server {
        listen 8080;
        server_name localhost;

        location / {
            proxy_pass http://tomcat123;
        }
    }

Modify jdbc.properties

Run docker-compose up -d --build, then check the result.
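The compose file mounts wait-for-it.sh into the Tomcat container but never invokes it, so nothing guarantees the services are ready when you first open the page. As a stand-in run from the host, the sketch below polls a TCP port until it accepts connections. `wait_for_port` is a hypothetical helper, and the ports in the usage note are the host-side ports from the compose file.

```python
import socket
import time


def wait_for_port(host, port, timeout=30.0, interval=0.5):
    """Return True once a TCP connection to host:port succeeds, False on timeout."""
    deadline = time.monotonic() + timeout
    while True:
        try:
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            if time.monotonic() >= deadline:
                return False
            time.sleep(interval)  # back off briefly before the next attempt
```

For example, `all(wait_for_port("localhost", p) for p in (5050, 3309, 8080))` checks Tomcat, MySQL, and nginx in turn. A successful TCP connection only shows that the port is open, not that the application has finished starting.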

Building a big data cluster environment with Docker

Create the Hadoop environment

Dockerfile

source.list

Build the image and run the container

    sudo docker build -t ubuntu:18.04 .
    sudo docker run -it --name ubuntu ubuntu:18.04

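Initialize the container

    apt-get update
    apt-get install vim   # for editing configuration files
    apt-get install ssh   # the distributed Hadoop nodes connect to each other over ssh
    vim ~/.bashrc         # append the line below at the end of the file so sshd starts automatically whenever you enter the Ubuntu container

    /etc/init.d/ssh start

Set up passwordless ssh login

    mkdir -p ~/.ssh && cd ~/.ssh        # create the directory first if it does not exist yet
    ssh-keygen -t rsa                   # press Enter at every prompt
    cat id_rsa.pub >> authorized_keys   # run this inside ~/.ssh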

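Install the JDK

    apt install openjdk-8-jdk
    vim ~/.bashrc   # append the following two lines at the end of the file to set the Java environment variables:

    export JAVA_HOME=/usr/lib/jvm/java-8-openjdk-amd64/
    export PATH=$PATH:$JAVA_HOME/bin

    source ~/.bashrc
    java -version   # check that the installation succeeded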

Install Hadoop

    docker cp ./build/hadoop-3.1.3.tar.gz <container ID>:/root/build   # run on the host
    cd /root/build                                                    # from here on, inside the container
    tar -zxvf hadoop-3.1.3.tar.gz -C /usr/local
    vim ~/.bashrc   # append the following lines

    export HADOOP_HOME=/usr/local/hadoop-3.1.3
    export CLASSPATH=.:$JAVA_HOME/lib:$JRE_HOME/lib
    export PATH=$PATH:$HADOOP_HOME/sbin:$HADOOP_HOME/bin:$JAVA_HOME/bin

    source ~/.bashrc   # apply the changes to .bashrc
    hadoop version

Configure the Hadoop cluster

Change to the following directory

    cd /usr/local/hadoop-3.1.3/etc/hadoop

Edit hadoop-env.sh

    export JAVA_HOME=/usr/lib/jvm/java-8-openjdk-amd64/ # add anywhere in the file

Edit core-site.xml

    <configuration>
      <property>
        <name>hadoop.tmp.dir</name>
        <value>file:/usr/local/hadoop-3.1.3/tmp</value>
        <description>Abase for other temporary directories.</description>
      </property>
      <property>
        <name>fs.defaultFS</name>
        <value>hdfs://master:9000</value>
      </property>
    </configuration>
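A typo in one of these XML files usually only surfaces when the daemons fail to start, so it can help to parse the file first and read back the values that matter. This is a minimal sketch using the standard library: `read_properties` is an illustrative helper, and the embedded text mirrors the core-site.xml shown above.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse


def read_properties(xml_text):
    """Return {name: value} for every <property> in a Hadoop *-site.xml document."""
    root = ET.fromstring(xml_text)
    return {prop.findtext("name"): prop.findtext("value")
            for prop in root.iter("property")}


CORE_SITE = """
<configuration>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>file:/usr/local/hadoop-3.1.3/tmp</value>
  </property>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://master:9000</value>
  </property>
</configuration>
"""

props = read_properties(CORE_SITE)
default_fs = urlparse(props["fs.defaultFS"])
print(default_fs.hostname, default_fs.port)  # prints master 9000
```

The hostname printed here is the one that must resolve on every node, so it has to match the name given to the master container and its /etc/hosts entry.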

Edit hdfs-site.xml

    <configuration>
      <property>
        <name>dfs.replication</name>
        <value>1</value>
      </property>
      <property>
        <name>dfs.namenode.name.dir</name>
        <value>file:/usr/local/hadoop-3.1.3/tmp/dfs/name</value>
      </property>
      <property>
        <name>dfs.datanode.data.dir</name>
        <value>file:/usr/local/hadoop-3.1.3/tmp/dfs/data</value>
      </property>
      <property>
        <name>dfs.permissions.enabled</name>
        <value>false</value>
      </property>
    </configuration>

Edit mapred-site.xml

    <configuration>
      <property>
        <name>mapreduce.framework.name</name>
        <value>yarn</value>
      </property>
      <property>
        <name>yarn.app.mapreduce.am.env</name>
        <value>HADOOP_MAPRED_HOME=/usr/local/hadoop-3.1.3</value>
      </property>
      <property>
        <name>mapreduce.map.env</name>
        <value>HADOOP_MAPRED_HOME=/usr/local/hadoop-3.1.3</value>
      </property>
      <property>
        <name>mapreduce.reduce.env</name>
        <value>HADOOP_MAPRED_HOME=/usr/local/hadoop-3.1.3</value>
      </property>
    </configuration>

Edit yarn-site.xml

    <?xml version="1.0" ?>
    <configuration>
      <!-- Site specific YARN configuration properties -->
      <property>
        <name>yarn.nodemanager.aux-services</name>
        <value>mapreduce_shuffle</value>
      </property>
      <property>
        <name>yarn.resourcemanager.hostname</name>
        <value>master</value>
      </property>
      <!-- Ratio of virtual to physical memory; without this property jobs may fail to run -->
      <property>
        <name>yarn.nodemanager.vmem-pmem-ratio</name>
        <value>2.5</value>
      </property>
    </configuration>

Change to the scripts directory

    cd /usr/local/hadoop-3.1.3/sbin

Edit start-dfs.sh and stop-dfs.sh and add the following parameters

    HDFS_DATANODE_USER=root
    HADOOP_SECURE_DN_USER=hdfs
    HDFS_NAMENODE_USER=root
    HDFS_SECONDARYNAMENODE_USER=root

Edit start-yarn.sh and stop-yarn.sh and add the following parameters

    YARN_RESOURCEMANAGER_USER=root
    HADOOP_SECURE_DN_USER=yarn
    YARN_NODEMANAGER_USER=root

Build the image

    docker commit <container ID> ubuntu/hadoop

Start the three hosts from the committed image, running one command in each of three terminals:

    # Terminal 1
    docker run -it -h master --name master ubuntu/hadoop

    # Terminal 2
    docker run -it -h slave01 --name slave01 ubuntu/hadoop

    # Terminal 3
    docker run -it -h slave02 --name slave02 ubuntu/hadoop

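In each of the three terminals, open /etc/hosts and edit it to list all three nodes, using the containers' actual IP addresses. For example:

    172.17.0.3 master
    172.17.0.4 slave01
    172.17.0.5 slave02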
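The addresses above depend on the order in which the containers started, so it is safer to look them up than to copy them. The sketch below is meant to run on the Docker host and assumes the `docker` CLI is on the PATH and that the containers are named `master`, `slave01`, and `slave02` as in the `docker run` commands. `container_ip` and `hosts_lines` are illustrative names.

```python
import subprocess


def container_ip(name):
    """Ask Docker for the container's IP address (requires the docker CLI)."""
    template = "{{range .NetworkSettings.Networks}}{{.IPAddress}}{{end}}"
    result = subprocess.run(["docker", "inspect", "-f", template, name],
                            capture_output=True, text=True, check=True)
    return result.stdout.strip()


def hosts_lines(ip_by_name):
    """Format {hostname: ip} as /etc/hosts lines, in the order given."""
    return [f"{ip} {name}" for name, ip in ip_by_name.items()]
```

For example, `print("\n".join(hosts_lines({n: container_ip(n) for n in ("master", "slave01", "slave02")})))` prints the three lines to paste into /etc/hosts on each node.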

From the master node, test the connection to the slaves

    ssh slave01
    ssh slave02
    exit   # log out of the slave

On the master host, edit workers

    vim /usr/local/hadoop-3.1.3/etc/hadoop/workers

    slave01
    slave02

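Test the Hadoop cluster

    # run on master
    cd /usr/local/hadoop-3.1.3
    bin/hdfs namenode -format   # Hadoop must be formatted before its first start
    sbin/start-all.sh           # start all services
    jps                         # run in each of the three terminals to check the daemons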
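When reading the `jps` output, it helps to know what to look for on each node. The sketch below assumes the default layout for this configuration: NameNode, SecondaryNameNode, and ResourceManager on master, and DataNode plus NodeManager on each host listed in workers. The PIDs in the sample are made up, and `missing_daemons` is a hypothetical helper.

```python
EXPECTED = {
    "master": {"NameNode", "SecondaryNameNode", "ResourceManager"},
    "worker": {"DataNode", "NodeManager"},
}


def missing_daemons(role, jps_output):
    """Return the expected daemons for role that do not appear in jps output."""
    running = set()
    for line in jps_output.splitlines():
        parts = line.split()
        if len(parts) == 2:  # jps prints "<pid> <class name>"
            running.add(parts[1])
    return sorted(EXPECTED[role] - running)


# Hypothetical jps output captured on the master node.
sample = """\
1234 NameNode
1456 SecondaryNameNode
1789 ResourceManager
2001 Jps
"""
print(missing_daemons("master", sample))  # prints []
print(missing_daemons("worker", sample))  # prints ['DataNode', 'NodeManager']
```

An empty list for the node's role means every expected daemon is up. Anything listed is a process to look for in the Hadoop logs.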

Run a Hadoop example

    bin/hdfs dfs -mkdir -p /user/hadoop/input
    bin/hdfs dfs -put ./etc/hadoop/*.xml /user/hadoop/input
    bin/hdfs dfs -ls /user/hadoop/input
    bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-3.1.3.jar grep /user/hadoop/input output 'dfs[a-z.]+'
    bin/hdfs dfs -cat output/*
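The `grep` example extracts every string that matches the regular expression from the input files and counts how often each one occurs. A single-machine equivalent makes the expected shape of `output/*` easier to recognize. This is a sketch on a made-up sample, not the MapReduce implementation, and the tie-breaking order is my own choice.

```python
import re
from collections import Counter


def grep_count(text, pattern=r"dfs[a-z.]+"):
    """Count each distinct regex match, most frequent first."""
    counts = Counter(re.findall(pattern, text))
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical input resembling lines from the Hadoop XML files.
sample = """
<name>dfs.replication</name>
<name>dfs.namenode.name.dir</name>
<value>file:/usr/local/hadoop-3.1.3/tmp/dfs/name</value>
<description>dfs.replication controls the block copies</description>
"""
for match, count in grep_count(sample):
    print(count, match)
# prints:
# 2 dfs.replication
# 1 dfs.namenode.name.dir
```

Note that `tmp/dfs/name` does not match, because the pattern requires at least one lowercase letter or dot directly after `dfs`.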

Summary

Problems encountered

Solution:

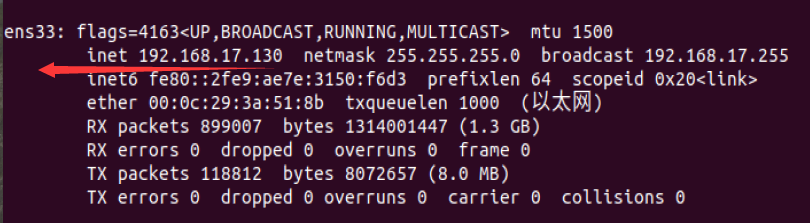

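The first time I used 192.168.17.130 and hit the problem shown above. The second time I switched to 192.168.17.2 and could not connect at all. The third time I asked a classmate, who told me to switch back to 192.168.17.130 and try again, and this time it worked... I was dumbfounded. Is this experiment just a matter of luck?

Time spent

4 h studying + 6 h on the experiment + 1 h writing the blog post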