1. Create the Scrapy project

    # scrapy startproject <project name>
    scrapy startproject demo3

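2. Enter the project directory and generate a spider file from a spider template

    # scrapy genspider -l    # list the available templates
    # scrapy genspider -t <template name> <spider file name> <allowed domain>
    scrapy genspider -t crawl test sohu.com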

3. Set up an IP pool or user agent pool (middlewares.py)

    # -*- coding: utf-8 -*-
    # Used to pick a random entry from each pool
    import random
    # Built-in middleware related to proxies (IP pool)
    from scrapy.downloadermiddlewares.httpproxy import HttpProxyMiddleware
    # Built-in middleware related to the User-Agent header
    from scrapy.downloadermiddlewares.useragent import UserAgentMiddleware


    # IP pool middleware
    class HTTPPROXY(HttpProxyMiddleware):
        # Initialization; note that the parameter must be ip=''
        def __init__(self, ip=''):
            self.ip = ip

        def process_request(self, request, spider):
            # Pick a random proxy for every outgoing request
            item = random.choice(IPPOOL)
            try:
                print("Current IP: " + item["ipaddr"])
                request.meta["proxy"] = "http://" + item["ipaddr"]
            except Exception as e:
                print(e)


    # IP pool entries (sample addresses; replace them with working proxies)
    IPPOOL = [
        {"ipaddr": "182.117.102.10:8118"},
        {"ipaddr": "121.31.102.215:8123"},
        # NOTE: 1222 is not a valid IPv4 octet; kept as in the original post
        {"ipaddr": "1222.94.128.49:8118"}
    ]


    # User agent middleware
    class USERAGENT(UserAgentMiddleware):
        # Initialization; note that the parameter must be user_agent=''
        def __init__(self, user_agent=''):
            self.user_agent = user_agent

        def process_request(self, request, spider):
            # Pick a random User-Agent for every outgoing request
            item = random.choice(UPPOOL)
            try:
                print("Current User-Agent: " + item)
                # setdefault leaves an already-set User-Agent header untouched
                request.headers.setdefault('User-Agent', item)
            except Exception as e:
                print(e)


    # User agent pool entries
    UPPOOL = [
        "Mozilla/5.0 (Windows NT 10.0; WOW64; rv:52.0) Gecko/20100101 Firefox/52.0",
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/59.0.3071.115 Safari/537.36",
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/51.0.2704.79 Safari/537.36 Edge/14.14393"
    ]
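Both middlewares come down to one random.choice call per request. The following is a minimal sketch of that rotation without Scrapy, using plain dicts in place of request.meta and request.headers. The helper names pick_proxy, pick_user_agent and apply_rotation are hypothetical, and the pool entries are placeholder documentation-range addresses, not working proxies.

```python
import random

# Placeholder pools for illustration only (assumption: not real proxies).
IPPOOL = [
    {"ipaddr": "192.0.2.10:8118"},
    {"ipaddr": "192.0.2.20:8123"},
]
UAPOOL = [
    "Mozilla/5.0 (Windows NT 10.0; WOW64; rv:52.0) Gecko/20100101 Firefox/52.0",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/59.0.3071.115 Safari/537.36",
]


def pick_proxy(pool, rng=random):
    """Return a proxy URL in the form stored under meta["proxy"]."""
    return "http://" + rng.choice(pool)["ipaddr"]


def pick_user_agent(pool, rng=random):
    """Return one User-Agent string chosen at random from the pool."""
    return rng.choice(pool)


def apply_rotation(meta, headers, rng=random):
    """Mimic the two process_request methods on plain dicts."""
    meta["proxy"] = pick_proxy(IPPOOL, rng)
    # setdefault keeps a User-Agent that is already present.
    headers.setdefault("User-Agent", pick_user_agent(UAPOOL, rng))
    return meta, headers


if __name__ == "__main__":
    meta, headers = apply_rotation({}, {})
    print(meta["proxy"])
    print(headers["User-Agent"])
```

Because the header is written with setdefault, a request that already carries a User-Agent keeps it; only requests without one receive a random value from the pool.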

4. Configure settings.py

    #========================================

    # IP pool and user agent settings

    # Disable local cookies
    COOKIES_ENABLED = False

    # Downloader middleware configuration (note: the project name here is "demo3")
    DOWNLOADER_MIDDLEWARES = {
        # 'scrapy.downloadermiddlewares.httpproxy.HttpProxyMiddleware': 123,
        # 'demo3.middlewares.HTTPPROXY': 125,
        'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware': 2,
        'demo3.middlewares.USERAGENT': 1
    }

    # Item pipeline configuration (note: the project name here is "demo3")
    ITEM_PIPELINES = {
        'demo3.pipelines.Demo3Pipeline': 300,
    }

    #============================================
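The numbers in DOWNLOADER_MIDDLEWARES are ordering values: process_request is called on the middleware with the smallest number first, and an entry set to None is disabled. The sketch below illustrates only that ordering rule. The helper active_middlewares is hypothetical, and it ignores the merge with Scrapy's built-in defaults that the real framework also performs.

```python
def active_middlewares(config):
    """Return enabled middleware paths sorted by ascending order value.

    Entries whose value is None are treated as disabled and dropped.
    """
    enabled = {path: order for path, order in config.items() if order is not None}
    return sorted(enabled, key=enabled.get)


# Same two entries as in the settings of this post.
DOWNLOADER_MIDDLEWARES = {
    "scrapy.downloadermiddlewares.useragent.UserAgentMiddleware": 2,
    "demo3.middlewares.USERAGENT": 1,
}

if __name__ == "__main__":
    for path in active_middlewares(DOWNLOADER_MIDDLEWARES):
        print(path)
```

With these values the custom USERAGENT middleware sees each request before the built-in one, so its setdefault call wins. A common alternative is to set the built-in UserAgentMiddleware entry to None, which disables it entirely.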

5. Define the data to scrape (items.py)

    # -*- coding: utf-8 -*-
    import scrapy

    # Define here the models for your scraped items
    #
    # See documentation in:
    # http://doc.scrapy.org/en/latest/topics/items.html


    class Demo3Item(scrapy.Item):
        name = scrapy.Field()  # headline text
        link = scrapy.Field()  # headline URL

6. Write the spider file (test.py)

    # -*- coding: utf-8 -*-
    import scrapy
    from scrapy.linkextractors import LinkExtractor
    from scrapy.spiders import CrawlSpider, Rule
    from demo3.items import Demo3Item


    class TestSpider(CrawlSpider):
        name = 'test'
        allowed_domains = ['sohu.com']
        start_urls = ['http://www.sohu.com/']

        rules = (
            # Follow only links that match the news subdomain
            Rule(LinkExtractor(allow=('http://news.sohu.com'), allow_domains=('sohu.com')), callback='parse_item', follow=False),
            #Rule(LinkExtractor(allow=('.*?/n.*?shtml'),allow_domains=('sohu.com')), callback='parse_item', follow=False),
        )

        def parse_item(self, response):
            i = Demo3Item()
            # Each field holds a list of all matches on the page
            i['name'] = response.xpath('//div[@class="news"]/h1/a/text()').extract()
            i['link'] = response.xpath('//div[@class="news"]/h1/a/@href').extract()
            return i

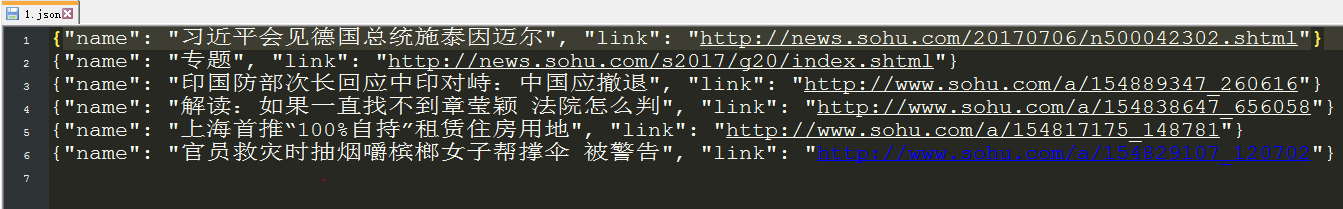
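The allow argument of the rule is a regular expression that decides which extracted links are followed. The sketch below shows that filtering step with the standard re module, assuming the pattern is searched anywhere in the absolute URL. The helper is_allowed and the sample URLs are hypothetical, and the dots are escaped here so that they match only literal dots.

```python
import re

# Same pattern as in the rule, with the dots escaped (assumption for strictness).
ALLOW = re.compile(r"http://news\.sohu\.com")


def is_allowed(url, pattern=ALLOW):
    """Return True when the pattern is found anywhere in the URL."""
    return pattern.search(url) is not None


if __name__ == "__main__":
    # Hypothetical URLs, used only to exercise the filter.
    samples = [
        "http://news.sohu.com/a/1.shtml",
        "http://sports.sohu.com/",
        "http://www.sohu.com/",
    ]
    for url in samples:
        print(url, is_allowed(url))
```

Only the first sample URL passes the filter, which is why the spider's callback runs on news pages and not on the other sohu.com subdomains.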

7. Write the pipeline file (pipelines.py)

    # -*- coding: utf-8 -*-
    import codecs
    import json

    # Define your item pipelines here
    #
    # Don't forget to add your pipeline to the ITEM_PIPELINES setting
    # See: http://doc.scrapy.org/en/latest/topics/item-pipeline.html


    class Demo3Pipeline(object):
        def __init__(self):
            # Output file; adjust this path to your own environment
            self.file = codecs.open("E:/workspace/PyCharm/codeSpace/books/python_web_crawler_book/chapter17/demo3/1.json", "wb", encoding='utf-8')

        def process_item(self, item, spider):
            # item["name"] and item["link"] are parallel lists; zip pairs them up
            for name, link in zip(item["name"], item["link"]):
                datas = {"name": name, "link": link}
                # ensure_ascii=False keeps Chinese text readable in the output
                i = json.dumps(dict(datas), ensure_ascii=False)
                # One JSON object per line
                line = i + '\n'
                self.file.write(line)
            return item

        def close_spider(self, spider):
            self.file.close()
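When every record is written as one JSON object per line, the output can be read back line by line. The following sketch shows the write and read round trip on an in-memory buffer in place of the real file. The helpers write_items and read_items and the sample values are hypothetical.

```python
import io
import json


def write_items(fileobj, names, links):
    """Write one JSON object per line, pairing each name with its link."""
    for name, link in zip(names, links):
        record = {"name": name, "link": link}
        # ensure_ascii=False keeps non-ASCII text readable in the file.
        fileobj.write(json.dumps(record, ensure_ascii=False) + "\n")


def read_items(fileobj):
    """Parse the lines back into a list of dicts, skipping blank lines."""
    return [json.loads(line) for line in fileobj if line.strip()]


if __name__ == "__main__":
    buf = io.StringIO()
    write_items(buf, ["标题一", "Title two"],
                ["http://example.com/1", "http://example.com/2"])
    buf.seek(0)
    for record in read_items(buf):
        print(record["name"], record["link"])
```

Using zip means that a page where the two XPath queries return lists of different lengths yields only the complete pairs and does not raise an IndexError.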

8. Test: running scrapy crawl test generates the file 1.json