Environment

spark-2.2.0

kafka_2.11-0.10.0.1

jdk1.8

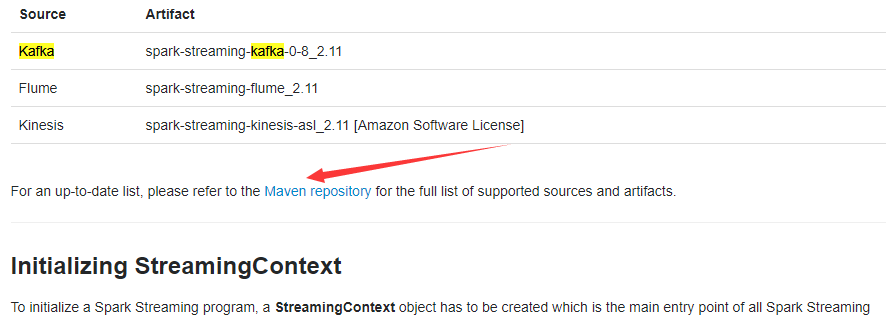

Set up the JDK, create a project, and add the Kafka and Spark jars to it. You also need the spark-streaming-kafka-*****.jar connector; I used spark-streaming-kafka-0-10_2.11-2.2.0.jar here, which you can download from the Spark website.
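The connector jar name encodes three things: the Kafka integration line (`0-10`), the Scala binary version (`2.11`), and the Spark version (`2.2.0`). As a rough sketch of that naming pattern, the helper below assembles the file name from the two versions that vary. The class and method names are made up for illustration and are not part of Spark.

```java
// Illustrative only: KafkaConnectorJarName is a hypothetical helper, not a Spark API.
final class KafkaConnectorJarName {

    /**
     * Builds the file name of the Kafka 0.10 connector jar for the given
     * Scala binary version (e.g. "2.11") and Spark version (e.g. "2.2.0").
     */
    static String of(String scalaBinaryVersion, String sparkVersion) {
        return "spark-streaming-kafka-0-10_" + scalaBinaryVersion + "-" + sparkVersion + ".jar";
    }

    public static void main(String[] args) {
        // Versions taken from the environment listed at the top of this post.
        System.out.println(of("2.11", "2.2.0"));
    }
}
```

The Scala and Spark parts should match the Spark build you run against; mixing Scala binary versions typically fails at class-loading time.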

```java
import java.util.Arrays;
import java.util.Collection;
import java.util.HashMap;
import java.util.Map;

import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.apache.kafka.common.serialization.StringDeserializer;
import org.apache.spark.SparkConf;
import org.apache.spark.api.java.JavaPairRDD;
import org.apache.spark.api.java.function.PairFunction;
import org.apache.spark.api.java.function.VoidFunction;
import org.apache.spark.streaming.Durations;
import org.apache.spark.streaming.api.java.JavaInputDStream;
import org.apache.spark.streaming.api.java.JavaPairDStream;
import org.apache.spark.streaming.api.java.JavaStreamingContext;
import org.apache.spark.streaming.kafka010.ConsumerStrategies;
import org.apache.spark.streaming.kafka010.KafkaUtils;
import org.apache.spark.streaming.kafka010.LocationStrategies;

import scala.Tuple2;

public class SparkStreamingFromkafka {

    public static void main(String[] args) throws Exception {
        // Run locally on all available cores, with a 1-second batch interval.
        SparkConf sparkConf = new SparkConf().setMaster("local[*]").setAppName("SparkStreamingFromkafka");
        JavaStreamingContext streamingContext = new JavaStreamingContext(sparkConf, Durations.seconds(1));

        Map<String, Object> kafkaParams = new HashMap<>();
        // Separate multiple broker addresses with ","
        kafkaParams.put("bootstrap.servers", "192.168.246.134:9092");
        kafkaParams.put("key.deserializer", StringDeserializer.class);
        kafkaParams.put("value.deserializer", StringDeserializer.class);
        kafkaParams.put("group.id", "sparkStreaming");
        // Topics to subscribe to; the list may contain several
        Collection<String> topics = Arrays.asList("video");

        // Direct stream: Spark reads from the Kafka partitions without a receiver.
        JavaInputDStream<ConsumerRecord<String, String>> javaInputDStream = KafkaUtils.createDirectStream(
                streamingContext,
                LocationStrategies.PreferConsistent(),
                ConsumerStrategies.Subscribe(topics, kafkaParams));

        // Turn each Kafka record into a (key, value) pair.
        JavaPairDStream<String, String> javaPairDStream = javaInputDStream.mapToPair(
                new PairFunction<ConsumerRecord<String, String>, String, String>() {
                    private static final long serialVersionUID = 1L;

                    @Override
                    public Tuple2<String, String> call(ConsumerRecord<String, String> consumerRecord) throws Exception {
                        return new Tuple2<>(consumerRecord.key(), consumerRecord.value());
                    }
                });

        // Print the value of every record in each batch.
        javaPairDStream.foreachRDD(new VoidFunction<JavaPairRDD<String, String>>() {
            @Override
            public void call(JavaPairRDD<String, String> javaPairRDD) throws Exception {
                javaPairRDD.foreach(new VoidFunction<Tuple2<String, String>>() {
                    @Override
                    public void call(Tuple2<String, String> tuple2) throws Exception {
                        System.out.println(tuple2._2);
                    }
                });
            }
        });

        // Start the job and block until it is stopped.
        streamingContext.start();
        streamingContext.awaitTermination();
    }
}
```
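The code comment on `bootstrap.servers` says that several broker addresses can be given, separated by commas. A minimal sketch of how that map might be built from a list of brokers follows. `KafkaParamsSketch` and its `build` method are hypothetical names, and the second broker address in `main` is invented for illustration. The deserializers are given as class-name strings so the snippet compiles without the Kafka jars; as far as I know the Kafka consumer accepts either the `Class` object or its fully qualified name for these settings.

```java
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Illustrative only: KafkaParamsSketch is a hypothetical helper, not part of Spark or Kafka.
final class KafkaParamsSketch {

    /** Builds consumer settings equivalent to the kafkaParams map used in the listing. */
    static Map<String, Object> build(List<String> brokers, String groupId) {
        if (brokers.isEmpty()) {
            throw new IllegalArgumentException("at least one broker address is required");
        }
        Map<String, Object> params = new HashMap<>();
        // bootstrap.servers is a single comma-separated string of host:port entries.
        params.put("bootstrap.servers", String.join(",", brokers));
        // Class name given as a string (assumption: resolved by Kafka at runtime).
        String stringDeserializer = "org.apache.kafka.common.serialization.StringDeserializer";
        params.put("key.deserializer", stringDeserializer);
        params.put("value.deserializer", stringDeserializer);
        params.put("group.id", groupId);
        return params;
    }

    public static void main(String[] args) {
        // The second address is made up for this example.
        List<String> brokers = Arrays.asList("192.168.246.134:9092", "192.168.246.135:9092");
        System.out.println(build(brokers, "sparkStreaming").get("bootstrap.servers"));
    }
}
```

The resulting map can be passed to `ConsumerStrategies.Subscribe(topics, kafkaParams)` in place of the hand-built one.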