
1. Introduction

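This setup (Spark SQL with Hive support, often loosely called "Hive On Spark") has little to do with the Hive engine itself. It only adopts Hive's standards: HQL, the metastore, UDFs, and the serialization/deserialization (SerDe) mechanism. Queries are executed by Spark itself as RDD (DataFrame) operations on the Spark cluster.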

2. Configuring and using Hive metadata from the shell

2.1 Configuration file

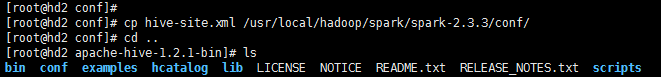

The configuration is the same as Hive's, so all we need to do is copy Hive's configuration file, hive-site.xml, into Spark's conf directory.

Command:

cp hive-site.xml /usr/local/hadoop/spark/spark-2.3.3/conf/

2.2 Loading the driver JAR

Because Spark connects to MySQL to read the metadata, the MySQL JDBC driver JAR must be loaded.
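To confirm that the driver is actually visible to the JVM, a quick classpath check can help. This is a minimal sketch: the object name `DriverCheck` is hypothetical, and the driver class name assumes MySQL Connector/J 5.1.x (as used in this article); Connector/J 8.x renamed it to `com.mysql.cj.jdbc.Driver`.

```scala
// Hypothetical helper: reports whether a JDBC driver class can be loaded
// from the current classpath (e.g. the JAR passed via --driver-class-path).
object DriverCheck {
  // Driver class shipped with MySQL Connector/J 5.1.x (assumption: 5.1.x is used).
  val MySqlDriver: String = "com.mysql.jdbc.Driver"

  def isOnClasspath(className: String): Boolean =
    try {
      Class.forName(className) // loads the class; JDBC drivers register themselves here
      true
    } catch {
      case _: ClassNotFoundException => false
    }

  def main(args: Array[String]): Unit = {
    if (isOnClasspath(MySqlDriver)) println(s"$MySqlDriver is on the classpath")
    else println(s"$MySqlDriver not found; check the driver JAR path")
  }
}
```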

2.3 Starting from the command line

./spark-sql --master spark://hd1:7077 --driver-class-path /usr/local/hadoop/hive/apache-hive-1.2.1-bin/lib/mysql-connector-java-5.1.39.jar

Parameters:

--driver-class-path: path to the MySQL driver JAR

--master: URL of the Spark master to connect to (here the standalone master spark://hd1:7077)

3. Using Hive metadata programmatically in IDEA

3.1 Adding dependencies and the configuration file

Dependencies: note that in addition to the Hive dependency, the MySQL driver dependency must also be added.

Full pom.xml:

    <?xml version="1.0" encoding="UTF-8"?>
    <project xmlns="http://maven.apache.org/POM/4.0.0"
             xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
             xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
      <modelVersion>4.0.0</modelVersion>

      <groupId>cn.edu360.sparkLearn</groupId>
      <artifactId>sparkTest</artifactId>
      <version>1.0-SNAPSHOT</version>

      <properties>
        <maven.compiler.source>1.8</maven.compiler.source>
        <maven.compiler.target>1.8</maven.compiler.target>
        <scala.version>2.11.8</scala.version>
        <spark.version>2.3.3</spark.version>
        <hadoop.version>2.8.5</hadoop.version>
        <encoding>UTF-8</encoding>
      </properties>

      <dependencies>
        <!-- Scala library -->
        <dependency>
          <groupId>org.scala-lang</groupId>
          <artifactId>scala-library</artifactId>
          <version>${scala.version}</version>
        </dependency>
        <!-- Spark core -->
        <dependency>
          <groupId>org.apache.spark</groupId>
          <artifactId>spark-core_2.11</artifactId>
          <version>${spark.version}</version>
        </dependency>
        <!-- MySQL JDBC driver, used to read the metastore -->
        <dependency>
          <groupId>mysql</groupId>
          <artifactId>mysql-connector-java</artifactId>
          <version>5.1.38</version>
        </dependency>
        <dependency>
          <groupId>org.apache.spark</groupId>
          <artifactId>spark-sql_2.11</artifactId>
          <version>${spark.version}</version>
        </dependency>
        <!-- hadoop-client API version -->
        <dependency>
          <groupId>org.apache.hadoop</groupId>
          <artifactId>hadoop-client</artifactId>
          <version>${hadoop.version}</version>
        </dependency>
        <dependency>
          <groupId>org.apache.spark</groupId>
          <artifactId>spark-hive_2.11</artifactId>
          <version>${spark.version}</version>
        </dependency>
      </dependencies>

      <build>
        <pluginManagement>
          <plugins>
            <!-- Scala compiler plugin -->
            <plugin>
              <groupId>net.alchim31.maven</groupId>
              <artifactId>scala-maven-plugin</artifactId>
              <version>3.2.2</version>
            </plugin>
            <!-- Java compiler plugin -->
            <plugin>
              <groupId>org.apache.maven.plugins</groupId>
              <artifactId>maven-compiler-plugin</artifactId>
              <version>3.5.1</version>
            </plugin>
          </plugins>
        </pluginManagement>
        <plugins>
          <plugin>
            <groupId>net.alchim31.maven</groupId>
            <artifactId>scala-maven-plugin</artifactId>
            <executions>
              <execution>
                <id>scala-compile-first</id>
                <phase>process-resources</phase>
                <goals>
                  <goal>add-source</goal>
                  <goal>compile</goal>
                </goals>
              </execution>
              <execution>
                <id>scala-test-compile</id>
                <phase>process-test-resources</phase>
                <goals>
                  <goal>testCompile</goal>
                </goals>
              </execution>
            </executions>
          </plugin>
          <plugin>
            <groupId>org.apache.maven.plugins</groupId>
            <artifactId>maven-compiler-plugin</artifactId>
            <executions>
              <execution>
                <phase>compile</phase>
                <goals>
                  <goal>compile</goal>
                </goals>
              </execution>
            </executions>
          </plugin>
          <!-- Plugin for building the (shaded) jar -->
          <plugin>
            <groupId>org.apache.maven.plugins</groupId>
            <artifactId>maven-shade-plugin</artifactId>
            <version>2.4.3</version>
            <executions>
              <execution>
                <phase>package</phase>
                <goals>
                  <goal>shade</goal>
                </goals>
                <configuration>
                  <filters>
                    <filter>
                      <artifact>*:*</artifact>
                      <excludes>
                        <exclude>META-INF/*.SF</exclude>
                        <exclude>META-INF/*.DSA</exclude>
                        <exclude>META-INF/*.RSA</exclude>
                      </excludes>
                    </filter>
                  </filters>
                </configuration>
              </execution>
            </executions>
          </plugin>
        </plugins>
      </build>
    </project>

The Hive-specific dependency is:

    <dependency>
      <groupId>org.apache.spark</groupId>
      <artifactId>spark-hive_2.11</artifactId>
      <version>${spark.version}</version>
    </dependency>

Configuration file:

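Add the hive-site.xml configuration file to the project. In a Maven project it normally goes under src/main/resources so that it ends up on the classpath.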
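If hive-site.xml is not on the classpath, Spark's Hive support does not fail; it silently falls back to a local embedded Derby metastore (a metastore_db directory in the working directory), so "show databases" would list only `default`. A quick check like the following can rule that out. It is a minimal sketch; the object name `HiveSiteCheck` is hypothetical.

```scala
// Hypothetical helper: checks whether hive-site.xml is visible on the classpath,
// e.g. after placing it under src/main/resources.
object HiveSiteCheck {
  // Returns the URL of the resource if the class loader can find it.
  def find(resource: String = "hive-site.xml"): Option[java.net.URL] =
    Option(getClass.getClassLoader.getResource(resource))

  def main(args: Array[String]): Unit =
    find() match {
      case Some(url) => println(s"hive-site.xml found at $url")
      case None =>
        println("hive-site.xml not on classpath; Spark would use a local Derby metastore")
    }
}
```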

3.2 Example program

    package cn.edu360.spark08

    import org.apache.spark.sql.{DataFrame, SparkSession}

    object HiveTest {
      def main(args: Array[String]): Unit = {
        // enableHiveSupport() makes Spark use the metastore configured in hive-site.xml
        val spark: SparkSession = SparkSession.builder()
          .appName("HiveTest")
          .master("local[*]")
          .enableHiveSupport()
          .getOrCreate()

        // List the databases registered in the Hive metastore
        val result: DataFrame = spark.sql("show databases")
        result.show()

        spark.stop()
      }
    }
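The same metadata can also be read through Spark's Catalog API (`spark.catalog`) instead of SQL strings. This is a minimal sketch, assuming the same dependencies and hive-site.xml as above; the object name `CatalogTest` and its helper methods are hypothetical.

```scala
import org.apache.spark.sql.SparkSession

// Hypothetical example: list metastore databases and their tables via spark.catalog
object CatalogTest {
  // Names of all databases known to the catalog (the Hive metastore when Hive support is on)
  def databaseNames(spark: SparkSession): Seq[String] =
    spark.catalog.listDatabases().collect().map(_.name).toSeq

  // Names of the tables (and temporary views) visible in the given database
  def tableNames(spark: SparkSession, db: String): Seq[String] =
    spark.catalog.listTables(db).collect().map(_.name).toSeq

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("CatalogTest")
      .master("local[*]")
      .enableHiveSupport()
      .getOrCreate()

    // Print each database followed by its tables
    databaseNames(spark).foreach { db =>
      println(s"$db: ${tableNames(spark, db).mkString(", ")}")
    }
    spark.stop()
  }
}
```

Compared with `spark.sql("show databases")`, the Catalog API returns typed `Database`/`Table` objects, which is convenient when the names are processed further in code.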