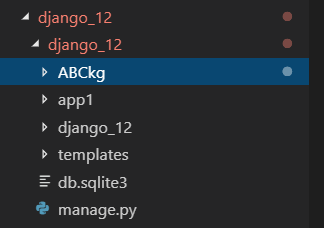

1. 在django项目根目录位置创建scrapy项目,django_12是django项目,ABCkg是scrapy爬虫项目,app1是django的子应用

2.在Scrapy的settings.py中加入以下代码

import os

import sys

sys.path.append(os.path.dirname(os.path.abspath('.')))

os.environ['DJANGO_SETTINGS_MODULE'] = 'django_12.settings' # 项目名.settings

import django

django.setup()

3.编写爬虫,下面代码以ABCkg为例,abckg.py

# -*- coding: utf-8 -*-

import scrapy

from ABCkg.items import AbckgItem

class AbckgSpider(scrapy.Spider):

name = 'abckg' #爬虫名称

allowed_domains = ['www.abckg.com'] # 允许爬取的范围

start_urls = ['http://www.abckg.com/'] # 第一次请求的地址

def parse(self, response):

print('返回内容:{}'.format(response))

"""

解析函数

:param response: 响应内容

:return:

"""

listtile = response.xpath('//*[@id="container"]/div/div/h2/a/text()').extract()

listurl = response.xpath('//*[@id="container"]/div/div/h2/a/@href').extract()

for index in range(len(listtile)):

item = AbckgItem()

item['title'] = listtile[index]

item['url'] = listurl[index]

yield scrapy.Request(url=listurl[index],callback=self.parse_content,method='GET',dont_filter=True,meta={'item':item})

# 获取下一页

nextpage = response.xpath('//*[@id="container"]/div[1]/div[10]/a[last()]/@href').extract_first()

print('即将请求:{}'.format(nextpage))

yield scrapy.Request(url=nextpage,callback=self.parse,method='GET',dont_filter=True)

# 获取详情页

def parse_content(self,response):

item = response.meta['item']

item['content'] = response.xpath('//*[@id="post-1192"]/dd/p').extract()

print('内容为:{}'.format(item))

yield item

4.scrapy中item.py 中引入django模型类

pip install scrapy-djangoitem

from app1 import models from scrapy_djangoitem import DjangoItem class AbckgItem(DjangoItem): # define the fields for your item here like: # name = scrapy.Field() # 普通scrapy爬虫写法 # title = scrapy.Field() # url = scrapy.Field() # content = scrapy.Field() django_model = models.ABCkg # 注入django项目的固定写法,必须起名为django_model =django中models.ABCkg表

5.pipelines.py中调用save()

import json from pymongo import MongoClient # 用于接收parse函数发过来的item class AbckgPipeline(object): # i = 0 def open_spider(self,spider): # print('打开文件') if spider.name == 'abckg': self.f = open('abckg.json',mode='w') def process_item(self, item, spider): # # print('ABC管道接收:{}'.format(item)) # if spider.name == 'abckg': # self.f.write(json.dumps(dict(item),ensure_ascii=False)) # # elif spider.name == 'cctv': # # img = requests.get(item['img']) # # if img != '': # # with open('图片\%d.png'%self.i,mode='wb')as f: # # f.write(img.content) # # self.i += 1 item.save() return item # 将item传给下一个管道执行 def close_spider(self,spider): # print('关闭文件') self.f.close()

6.在django中models.py中一个模型类,字段对应爬取到的数据,选择适当的类型与长度

class ABCkg(models.Model): title = models.CharField(max_length=30,verbose_name='标题') url = models.CharField(max_length=100,verbose_name='网址') content = models.CharField(max_length=200,verbose_name='内容') class Meta: verbose_name_plural = '爬虫ABCkg' def __str__(self): return self.title

7.通过命令启动爬虫:scrapy crawl 爬虫名称

8.django进入admin后台即可看到爬取到的数据。