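Introduction

Kafka has come up a lot in the projects I have been working on recently. To keep my notes organized, I am starting a series of posts about Kafka today, and the natural first topic is how to install it.

Apache Kafka is a distributed publish-subscribe messaging system.

Compared with traditional messaging systems, Apache Kafka differs in the following ways:

- It is designed as a distributed system that is easy to scale out;

- It offers high throughput for both publishing and subscribing;

- It supports multiple subscribers and automatically rebalances consumers when one fails;

- It persists messages to disk, so it can serve batch consumption such as ETL as well as real-time applications.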

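Installing Kafka

Download URL: http://mirrors.tuna.tsinghua.edu.cn/apache/kafka/2.3.0/kafka_2.11-2.3.0.tgz

wget http://mirrors.tuna.tsinghua.edu.cn/apache/kafka/2.3.0/kafka_2.11-2.3.0.tgz

Extract the archive and enter the installation directory (the paths below assume the archive was downloaded into /usr/local):

tar -zxvf kafka_2.11-2.3.0.tgz
cd /usr/local/kafka_2.11-2.3.0/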

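Edit the Kafka broker configuration file:

vi /usr/local/kafka_2.11-2.3.0/config/server.properties

Change the following settings:

broker.id=1
log.dirs=/data/kafka/logs-1

These changes are optional; for a quick demo the default configuration works as is.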
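If you would rather script the edit than open an editor, the two settings can be changed with a small helper. This is only a sketch: `set_prop` is a hypothetical function name, and it assumes the file uses plain `key=value` lines, as the stock server.properties does (which is assumed here to ship with `broker.id=0` and `log.dirs=/tmp/kafka-logs`).

```shell
# set_prop FILE KEY VALUE  (hypothetical helper, not part of Kafka)
# Rewrites the line that starts with "KEY=" in FILE, or appends
# "KEY=VALUE" when no such line exists.
set_prop() {
    file=$1 key=$2 value=$3
    tmp="$file.tmp.$$"
    awk -v k="$key" -v v="$value" '
        index($0, k "=") == 1 { print k "=" v; found = 1; next }
        { print }
        END { if (!found) print k "=" v }
    ' "$file" > "$tmp" && mv "$tmp" "$file"
}

# Example (run from the Kafka installation directory):
# set_prop config/server.properties broker.id 1
# set_prop config/server.properties log.dirs /data/kafka/logs-1
```

The helper writes to a temporary file and then renames it, so it does not depend on `sed -i`, which behaves differently across platforms.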

Common Operations

1. Start ZooKeeper

Start a single-node ZooKeeper instance with the script bundled in the distribution:

sh bin/zookeeper-server-start.sh -daemon config/zookeeper.properties

2. Start the Kafka broker

Start the Kafka broker with kafka-server-start.sh:

sh bin/kafka-server-start.sh config/server.properties

Startup log

[2019-11-23 18:07:36,462] INFO Registered kafka:type=kafka.Log4jController MBean (kafka.utils.Log4jControllerRegistration$)
[2019-11-23 18:07:37,095] INFO Registered signal handlers for TERM, INT, HUP (org.apache.kafka.common.utils.LoggingSignalHandler)
[2019-11-23 18:07:37,095] INFO starting (kafka.server.KafkaServer)
[2019-11-23 18:07:37,096] INFO Connecting to zookeeper on localhost:2181 (kafka.server.KafkaServer)
[2019-11-23 18:07:37,119] INFO [ZooKeeperClient Kafka server] Initializing a new session to localhost:2181. (kafka.zookeeper.ZooKeeperClient)
[2019-11-23 18:07:37,124] INFO Client environment:zookeeper.version=3.4.14-4c25d480e66aadd371de8bd2fd8da255ac140bcf, built on 03/06/2019 16:18 GMT (org.apache.zookeeper.ZooKeeper)
[2019-11-23 18:07:37,124] INFO Client environment:host.name=localhost (org.apache.zookeeper.ZooKeeper)
[2019-11-23 18:07:37,124] INFO Client environment:java.version=1.8.0_212 (org.apache.zookeeper.ZooKeeper)
[2019-11-23 18:07:37,124] INFO Client environment:java.vendor=Oracle Corporation (org.apache.zookeeper.ZooKeeper)
[2019-11-23 18:07:37,124] INFO Client environment:java.home=/usr/java/jdk1.8.0_212-amd64/jre (org.apache.zookeeper.ZooKeeper)
[2019-11-23 18:07:37,124] INFO Client environment:java.class.path=.:/usr/java/default/lib/dt.jar:/usr/java/default/lib/tools.jar:/usr/local/kafka_2.11-2.3.0/bin/../libs/activation-1.1.1.jar:...:/usr/local/kafka_2.11-2.3.0/bin/../libs/zstd-jni-1.4.0-1.jar (org.apache.zookeeper.ZooKeeper)
[2019-11-23 18:07:37,124] INFO Client environment:java.library.path=/usr/java/packages/lib/amd64:/usr/lib64:/lib64:/lib:/usr/lib (org.apache.zookeeper.ZooKeeper)
[2019-11-23 18:07:37,124] INFO Client environment:java.io.tmpdir=/tmp (org.apache.zookeeper.ZooKeeper)
[2019-11-23 18:07:37,124] INFO Client environment:java.compiler=<NA> (org.apache.zookeeper.ZooKeeper)
[2019-11-23 18:07:37,124] INFO Client environment:os.name=Linux (org.apache.zookeeper.ZooKeeper)
[2019-11-23 18:07:37,124] INFO Client environment:os.arch=amd64 (org.apache.zookeeper.ZooKeeper)
[2019-11-23 18:07:37,125] INFO Client environment:os.version=3.10.0-123.el7.x86_64 (org.apache.zookeeper.ZooKeeper)
[2019-11-23 18:07:37,125] INFO Client environment:user.name=root (org.apache.zookeeper.ZooKeeper)
[2019-11-23 18:07:37,125] INFO Client environment:user.home=/root (org.apache.zookeeper.ZooKeeper)
[2019-11-23 18:07:37,125] INFO Client environment:user.dir=/usr/local/kafka_2.11-2.3.0/bin (org.apache.zookeeper.ZooKeeper)
[2019-11-23 18:07:37,126] INFO Initiating client connection, connectString=localhost:2181 sessionTimeout=6000 watcher=kafka.zookeeper.ZooKeeperClient$ZooKeeperClientWatcher$@6302bbb1 (org.apache.zookeeper.ZooKeeper)
[2019-11-23 18:07:37,153] INFO Opening socket connection to server localhost/0:0:0:0:0:0:0:1:2181. Will not attempt to authenticate using SASL (unknown error) (org.apache.zookeeper.ClientCnxn)
[2019-11-23 18:07:37,172] INFO Socket connection established to localhost/0:0:0:0:0:0:0:1:2181, initiating session (org.apache.zookeeper.ClientCnxn)
[2019-11-23 18:07:37,184] INFO [ZooKeeperClient Kafka server] Waiting until connected. (kafka.zookeeper.ZooKeeperClient)
[2019-11-23 18:07:37,217] INFO Session establishment complete on server localhost/0:0:0:0:0:0:0:1:2181, sessionid = 0x1000016e9450000, negotiated timeout = 6000 (org.apache.zookeeper.ClientCnxn)
[2019-11-23 18:07:37,220] INFO [ZooKeeperClient Kafka server] Connected. (kafka.zookeeper.ZooKeeperClient)
[2019-11-23 18:07:37,594] INFO Cluster ID = SWLs93NzQPekqvdj7KmbmQ (kafka.server.KafkaServer)
[2019-11-23 18:07:37,598] WARN No meta.properties file under dir /data/kafka/logs-1/meta.properties (kafka.server.BrokerMetadataCheckpoint)
[2019-11-23 18:07:37,815] INFO KafkaConfig values:
    advertised.host.name = null
    ...
    broker.id = 1
    ...
    log.dir = /tmp/kafka-logs
    log.dirs = /data/kafka/logs-1
    ...
    port = 9092
    ...
    zookeeper.connect = localhost:2181
    ...
    zookeeper.sync.time.ms = 2000
 (kafka.server.KafkaConfig)
[2019-11-23 18:07:37,831] INFO KafkaConfig values: ... (kafka.server.KafkaConfig)
[2019-11-23 18:07:37,884] INFO [ThrottledChannelReaper-Fetch]: Starting (kafka.server.ClientQuotaManager$ThrottledChannelReaper)
[2019-11-23 18:07:37,887] INFO [ThrottledChannelReaper-Produce]: Starting (kafka.server.ClientQuotaManager$ThrottledChannelReaper)
[2019-11-23 18:07:37,890] INFO [ThrottledChannelReaper-Request]: Starting (kafka.server.ClientQuotaManager$ThrottledChannelReaper)
[2019-11-23 18:07:37,914] INFO Log directory /data/kafka/logs-1 not found, creating it. (kafka.log.LogManager)
[2019-11-23 18:07:37,928] INFO Loading logs. (kafka.log.LogManager)
[2019-11-23 18:07:37,943] INFO Logs loading complete in 15 ms. (kafka.log.LogManager)
[2019-11-23 18:07:37,957] INFO Starting log cleanup with a period of 300000 ms. (kafka.log.LogManager)
[2019-11-23 18:07:37,960] INFO Starting log flusher with a default period of 9223372036854775807 ms. (kafka.log.LogManager)
[2019-11-23 18:07:39,104] INFO Awaiting socket connections on 0.0.0.0:9092. (kafka.network.Acceptor)
[2019-11-23 18:07:39,217] INFO [SocketServer brokerId=1] Created data-plane acceptor and processors for endpoint : EndPoint(null,9092,ListenerName(PLAINTEXT),PLAINTEXT) (kafka.network.SocketServer)
[2019-11-23 18:07:39,218] INFO [SocketServer brokerId=1] Started 1 acceptor threads for data-plane (kafka.network.SocketServer)
[2019-11-23 18:07:39,268] INFO [ExpirationReaper-1-Produce]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)
[2019-11-23 18:07:39,271] INFO [ExpirationReaper-1-Fetch]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)
[2019-11-23 18:07:39,275] INFO [ExpirationReaper-1-DeleteRecords]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)
[2019-11-23 18:07:39,278] INFO [ExpirationReaper-1-ElectPreferredLeader]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)
[2019-11-23 18:07:39,304] INFO [LogDirFailureHandler]: Starting (kafka.server.ReplicaManager$LogDirFailureHandler)
[2019-11-23 18:07:39,362] INFO Creating /brokers/ids/1 (is it secure? false) (kafka.zk.KafkaZkClient)
[2019-11-23 18:07:39,384] INFO Stat of the created znode at /brokers/ids/1 is: 24,24,1574561259376,1574561259376,1,0,0,72057692440821760,188,0,24 (kafka.zk.KafkaZkClient)
[2019-11-23 18:07:39,384] INFO Registered broker 1 at path /brokers/ids/1 with addresses: ArrayBuffer(EndPoint(localhost,9092,ListenerName(PLAINTEXT),PLAINTEXT)), czxid (broker epoch): 24 (kafka.zk.KafkaZkClient)
[2019-11-23 18:07:39,387] WARN No meta.properties file under dir /data/kafka/logs-1/meta.properties (kafka.server.BrokerMetadataCheckpoint)
[2019-11-23 18:07:39,526] INFO [ExpirationReaper-1-topic]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)
[2019-11-23 18:07:39,550] INFO [ExpirationReaper-1-Heartbeat]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)
[2019-11-23 18:07:39,579] INFO [ExpirationReaper-1-Rebalance]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)
[2019-11-23 18:07:39,630] INFO Successfully created /controller_epoch with initial epoch 0 (kafka.zk.KafkaZkClient)
[2019-11-23 18:07:39,650] INFO [GroupCoordinator 1]: Starting up. (kafka.coordinator.group.GroupCoordinator)
[2019-11-23 18:07:39,678] INFO [GroupCoordinator 1]: Startup complete. (kafka.coordinator.group.GroupCoordinator)
[2019-11-23 18:07:39,705] INFO [GroupMetadataManager brokerId=1] Removed 0 expired offsets in 28 milliseconds. (kafka.coordinator.group.GroupMetadataManager)
[2019-11-23 18:07:39,719] INFO [ProducerId Manager 1]: Acquired new producerId block (brokerId:1,blockStartProducerId:0,blockEndProducerId:999) by writing to Zk with path version 1 (kafka.coordinator.transaction.ProducerIdManager)
[2019-11-23 18:07:39,792] INFO [TransactionCoordinator id=1] Starting up. (kafka.coordinator.transaction.TransactionCoordinator)
[2019-11-23 18:07:39,816] INFO [Transaction Marker Channel Manager 1]: Starting (kafka.coordinator.transaction.TransactionMarkerChannelManager)
[2019-11-23 18:07:39,819] INFO [TransactionCoordinator id=1] Startup complete. (kafka.coordinator.transaction.TransactionCoordinator)
[2019-11-23 18:07:39,968] INFO [/config/changes-event-process-thread]: Starting (kafka.common.ZkNodeChangeNotificationListener$ChangeEventProcessThread)
[2019-11-23 18:07:40,062] INFO [SocketServer brokerId=1] Started data-plane processors for 1 acceptors (kafka.network.SocketServer)
[2019-11-23 18:07:40,092] INFO Kafka version: 2.3.0 (org.apache.kafka.common.utils.AppInfoParser)
[2019-11-23 18:07:40,092] INFO Kafka commitId: fc1aaa116b661c8a (org.apache.kafka.common.utils.AppInfoParser)
[2019-11-23 18:07:40,092] INFO Kafka startTimeMs: 1574561260062 (org.apache.kafka.common.utils.AppInfoParser)
[2019-11-23 18:07:40,095] INFO [KafkaServer id=1] started (kafka.server.KafkaServer)
[2019-11-23 18:12:30,042] INFO [ReplicaFetcherManager on broker 1] Removed fetcher for partitions Set(test-0) (kafka.server.ReplicaFetcherManager)
[2019-11-23 18:12:30,141] INFO [Log partition=test-0, dir=/data/kafka/logs-1] Loading producer state till offset 0 with message format version 2 (kafka.log.Log)
[2019-11-23 18:12:30,147] INFO [Log partition=test-0, dir=/data/kafka/logs-1] Completed load of log with 1 segments, log start offset 0 and log end offset 0 in 55 ms (kafka.log.Log)
[2019-11-23 18:12:30,149] INFO Created log for partition test-0 in /data/kafka/logs-1 with properties {compression.type -> producer, message.downconversion.enable -> true, min.insync.replicas -> 1, segment.jitter.ms -> 0, cleanup.policy -> [delete], flush.ms -> 9223372036854775807, segment.bytes -> 1073741824, retention.ms -> 604800000, flush.messages -> 9223372036854775807, message.format.version -> 2.3-IV1, file.delete.delay.ms -> 60000, max.compaction.lag.ms -> 9223372036854775807, max.message.bytes -> 1000012, min.compaction.lag.ms -> 0, message.timestamp.type -> CreateTime, preallocate -> false, min.cleanable.dirty.ratio -> 0.5, index.interval.bytes -> 4096, unclean.leader.election.enable -> false, retention.bytes -> -1, delete.retention.ms -> 86400000, segment.ms -> 604800000, message.timestamp.difference.max.ms -> 9223372036854775807, segment.index.bytes -> 10485760}. (kafka.log.LogManager)
[2019-11-23 18:12:30,150] INFO [Partition test-0 broker=1] No checkpointed highwatermark is found for partition test-0 (kafka.cluster.Partition)
[2019-11-23 18:12:30,151] INFO Replica loaded for partition test-0 with initial high watermark 0 (kafka.cluster.Replica)
[2019-11-23 18:12:30,153] INFO [Partition test-0 broker=1] test-0 starts at Leader Epoch 0 from offset 0. Previous Leader Epoch was: -1 (kafka.cluster.Partition)
[2019-11-23 18:17:39,655] INFO [GroupMetadataManager brokerId=1] Removed 0 expired offsets in 0 milliseconds. (kafka.coordinator.group.GroupMetadataManager)
[2019-11-23 18:27:39,652] INFO [GroupMetadataManager brokerId=1] Removed 0 expired offsets in 0 milliseconds. (kafka.coordinator.group.GroupMetadataManager)
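The line to look for in all of this output is `[KafkaServer id=1] started`. A script can check for it instead of scrolling through the log by eye. The sketch below assumes the broker output has been captured in a file; `broker_started` is a hypothetical helper name, and the `logs/server.log` path in the usage comment is an assumption about where the broker writes its log when started with `-daemon`.

```shell
# broker_started LOGFILE BROKER_ID  (hypothetical helper)
# Succeeds if LOGFILE contains the "[KafkaServer id=N] started" line.
broker_started() {
    # -F treats the pattern as a fixed string, so the brackets are literal.
    grep -F -q "[KafkaServer id=$2] started" "$1"
}

# Example:
# broker_started logs/server.log 1 && echo "broker 1 is up"
```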

3. Create a topic

Use kafka-topics.sh to create a topic named test with one partition and one replica:

sh bin/kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic test

Result:

Created topic test.

List the topics:

bin/kafka-topics.sh --list --zookeeper localhost:2181

Result:

test

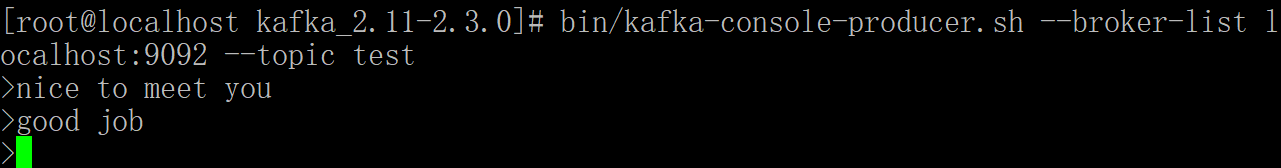
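Running the create command a second time fails because the topic already exists. One way around that in a script is to check the `--list` output first. This is a minimal sketch; `topic_exists` is a hypothetical helper that only inspects text on stdin and does not talk to Kafka itself.

```shell
# topic_exists NAME  (hypothetical helper)
# Reads a topic list (one name per line) on stdin and succeeds only on
# an exact, whole-line match.
topic_exists() {
    grep -F -x -q -- "$1"
}

# Example: create the topic only when it is missing.
# if ! bin/kafka-topics.sh --list --zookeeper localhost:2181 | topic_exists test; then
#     bin/kafka-topics.sh --create --zookeeper localhost:2181 \
#         --replication-factor 1 --partitions 1 --topic test
# fi
```

The whole-line match matters: a plain `grep test` would also match a topic called `test2`.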

4. Produce messages

Send messages with kafka-console-producer.sh:

sh bin/kafka-console-producer.sh --broker-list localhost:9092 --topic test

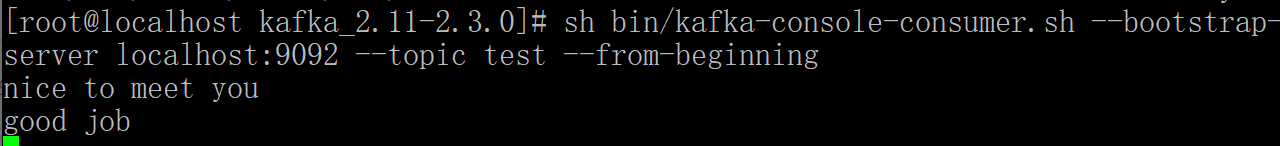
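The console producer reads standard input and sends each line as one message, so test data can be piped in rather than typed. A small sketch, where `gen_messages` and the `message-N` payload format are made up for illustration:

```shell
# gen_messages N  (hypothetical helper)
# Prints N lines, "message-1" through "message-N".
gen_messages() {
    i=1
    while [ "$i" -le "$1" ]; do
        printf 'message-%d\n' "$i"
        i=$((i + 1))
    done
}

# Example: send three messages to the topic and exit.
# gen_messages 3 | sh bin/kafka-console-producer.sh --broker-list localhost:9092 --topic test
```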

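5. Consume messages

Use kafka-console-consumer.sh to receive messages and print them to the terminal:

sh bin/kafka-console-consumer.sh --zookeeper localhost:2181 --topic test --from-beginning

Open a new terminal window and run the command above to view the messages.

It fails with:

zookeeper is not a recognized option

Cause: the console consumer in this Kafka version (2.3.0) no longer accepts the --zookeeper option; it connects to the broker directly through --bootstrap-server. Use the following command instead:

sh bin/kafka-console-consumer.sh --bootstrap-server localhost:9092 --topic test --from-beginning

This time all of the published messages are received.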

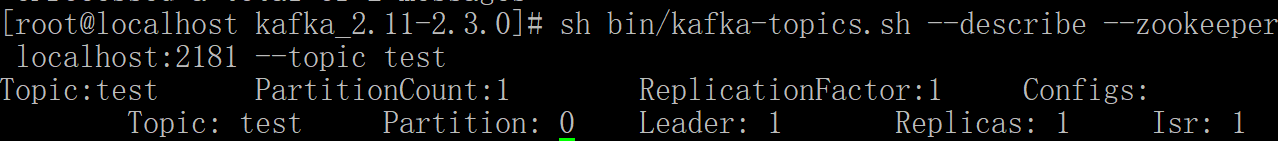

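6. Describe a topic

sh bin/kafka-topics.sh --describe --zookeeper localhost:2181 --topic test

Result:

Explanation

The first line gives a summary of all partitions, and each additional line gives information about one partition. Since this topic has only one partition, there is only one such line.

"Leader": the node responsible for all reads and writes for the given partition. Each node is the leader for a randomly selected portion of the partitions.

"Replicas": the list of nodes that replicate the log for this partition, regardless of whether they are the leader or whether they are currently alive.

"Isr": the set of "in-sync" replicas. This is the subset of the replicas list that is currently alive and caught up to the leader.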
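To make the relationship between Replicas and Isr concrete, here is a sketch that pulls the fields out of a partition line and flags a partition whose Isr list is shorter than its Replicas list. The helper names are hypothetical, and the sample line in the comment is an assumed example of the tab-separated `Name: value` layout that `--describe` prints, not output captured from the cluster above.

```shell
# describe_field NAME  (hypothetical helper)
# Reads partition lines on stdin and prints the value that follows "NAME:".
describe_field() {
    sed -n "s/.*$1: *\([^[:space:]]*\).*/\1/p"
}

# count_ids LIST: number of broker ids in a comma-separated list.
count_ids() {
    printf '%s\n' "$1" | tr ',' '\n' | grep -c .
}

# is_under_replicated LINE: succeeds when fewer replicas are in sync
# than are assigned to the partition.
is_under_replicated() {
    replicas=$(printf '%s\n' "$1" | describe_field Replicas)
    isr=$(printf '%s\n' "$1" | describe_field Isr)
    [ "$(count_ids "$replicas")" -gt "$(count_ids "$isr")" ]
}

# Example with an assumed line for a 3-replica partition where broker 3
# has fallen behind:
# Topic: test  Partition: 0  Leader: 1  Replicas: 1,2,3  Isr: 1,2
```

For the single-broker setup in this post, Replicas and Isr both contain only broker 1, so the check would not fire.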

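Cluster Configuration

A Kafka cluster can be set up in two ways: by running multiple broker instances on a single machine, or by running brokers on multiple machines.

The single-machine, multi-broker setup is described first; it only needs the configuration below.

Single-machine multi-broker cluster

Run several brokers on one node, giving each broker its own id, listener port, and log directory. For example:

cp config/server.properties config/server-2.properties
cp config/server.properties config/server-3.properties
vim config/server-2.properties
vim config/server-3.properties

In config/server-2.properties, set:

broker.id=2
listeners=PLAINTEXT://your.host.name:9093
log.dirs=/data/kafka/logs-2

And in config/server-3.properties, set:

broker.id=3
listeners=PLAINTEXT://your.host.name:9094
log.dirs=/data/kafka/logs-3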
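The per-broker values follow a simple pattern: broker N listens on port 9092 + (N - 1) and writes to /data/kafka/logs-N. With more than a couple of brokers it may be easier to generate the files than to edit each by hand. The sketch below is one possible way to do that; `make_broker_config` is a hypothetical helper, `your.host.name` is the same placeholder used above, and `log.dirs` is assumed to be the directory key defined in the stock server.properties.

```shell
# make_broker_config TEMPLATE ID OUTFILE  (hypothetical helper)
# Copies TEMPLATE to OUTFILE without its broker.id / listeners / log.dir(s)
# lines (active or commented out), then appends the per-broker values.
make_broker_config() {
    template=$1 id=$2 out=$3
    port=$((9092 + id - 1))
    awk '!/^#?(broker\.id|listeners|log\.dirs?)[ \t]*=/' "$template" > "$out"
    {
        printf 'broker.id=%s\n' "$id"
        printf 'listeners=PLAINTEXT://your.host.name:%s\n' "$port"
        printf 'log.dirs=/data/kafka/logs-%s\n' "$id"
    } >> "$out"
}

# Example: generate the configs for brokers 2 and 3.
# for id in 2 3; do
#     make_broker_config config/server.properties "$id" "config/server-$id.properties"
# done
```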

Start the Kafka brokers:

bin/kafka-server-start.sh config/server-2.properties &
bin/kafka-server-start.sh config/server-3.properties &

That completes the single-machine, multi-broker cluster setup.

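Multi-machine multi-broker cluster

Install Kafka on each node as described above, and configure and start multiple ZooKeeper instances.

Suppose the three machines have the IP addresses 192.168.153.135, 192.168.153.136, and 192.168.153.137.

Configure the Kafka broker on each machine with a distinct broker.id, and set zookeeper.connect as follows:

vim config/server.properties

Change the zookeeper.connect entry to:

zookeeper.connect=192.168.153.135:2181,192.168.153.136:2181,192.168.153.137:2181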
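The value is simply a comma-separated list of host:port pairs, one per ZooKeeper node. If the node list lives in a script, the string can be assembled rather than typed out. A small sketch, with `zk_connect` as a hypothetical helper name:

```shell
# zk_connect PORT HOST...  (hypothetical helper)
# Joins HOST:PORT pairs with commas, the format zookeeper.connect uses.
zk_connect() {
    port=$1
    shift
    out=
    for host in "$@"; do
        # ${out:+$out,} adds the separating comma only after the first host.
        out="${out:+$out,}$host:$port"
    done
    printf '%s\n' "$out"
}

# Example:
# echo "zookeeper.connect=$(zk_connect 2181 192.168.153.135 192.168.153.136 192.168.153.137)"
```

Every broker in the cluster should use the same zookeeper.connect value so that they all register in the same ZooKeeper ensemble.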