小结:

1、When pipelining is used, many commands are usually read with a single read() system call, and multiple replies are delivered with a single write() system call.

Using pipelining to speedup Redis queries – Redis https://redis.io/topics/pipelining

Using pipelining to speedup Redis queries

Request/Response protocols and RTT

Redis is a TCP server using the client-server model and what is called a Request/Response protocol.

This means that usually a request is accomplished with the following steps:

- The client sends a query to the server, and reads from the socket, usually in a blocking way, for the server response.

- The server processes the command and sends the response back to the client.

So for instance a four commands sequence is something like this:

- Client: INCR X

- Server: 1

- Client: INCR X

- Server: 2

- Client: INCR X

- Server: 3

- Client: INCR X

- Server: 4

Clients and Servers are connected via a networking link. Such a link can be very fast (a loopback interface) or very slow (a connection established over the Internet with many hops between the two hosts). Whatever the network latency is, there is a time for the packets to travel from the client to the server, and back from the server to the client to carry the reply.

This time is called RTT (Round Trip Time). It is very easy to see how this can affect the performances when a client needs to perform many requests in a row (for instance adding many elements to the same list, or populating a database with many keys). For instance if the RTT time is 250 milliseconds (in the case of a very slow link over the Internet), even if the server is able to process 100k requests per second, we'll be able to process at max four requests per second.

If the interface used is a loopback interface, the RTT is much shorter (for instance my host reports 0,044 milliseconds pinging 127.0.0.1), but it is still a lot if you need to perform many writes in a row.

Fortunately there is a way to improve this use case.

Redis Pipelining

A Request/Response server can be implemented so that it is able to process new requests even if the client didn't already read the old responses. This way it is possible to send multiple commands to the server without waiting for the replies at all, and finally read the replies in a single step.

This is called pipelining, and is a technique widely in use since many decades. For instance many POP3 protocol implementations already supported this feature, dramatically speeding up the process of downloading new emails from the server.

Redis has supported pipelining since the very early days, so whatever version you are running, you can use pipelining with Redis. This is an example using the raw netcat utility:

$ (printf "PING

PING

PING

"; sleep 1) | nc localhost 6379

+PONG

+PONG

+PONG

This time we are not paying the cost of RTT for every call, but just one time for the three commands.

To be very explicit, with pipelining the order of operations of our very first example will be the following:

- Client: INCR X

- Client: INCR X

- Client: INCR X

- Client: INCR X

- Server: 1

- Server: 2

- Server: 3

- Server: 4

IMPORTANT NOTE: While the client sends commands using pipelining, the server will be forced to queue the replies, using memory. So if you need to send a lot of commands with pipelining, it is better to send them as batches having a reasonable number, for instance 10k commands, read the replies, and then send another 10k commands again, and so forth. The speed will be nearly the same, but the additional memory used will be at max the amount needed to queue the replies for these 10k commands.

It's not just a matter of RTT

Pipelining is not just a way in order to reduce the latency cost due to the round trip time, it actually improves by a huge amount the total operations you can perform per second in a given Redis server. This is the result of the fact that, without using pipelining, serving each command is very cheap from the point of view of accessing the data structures and producing the reply, but it is very costly from the point of view of doing the socket I/O. This involves calling the read() and write() syscall, that means going from user land to kernel land. The context switch is a huge speed penalty.

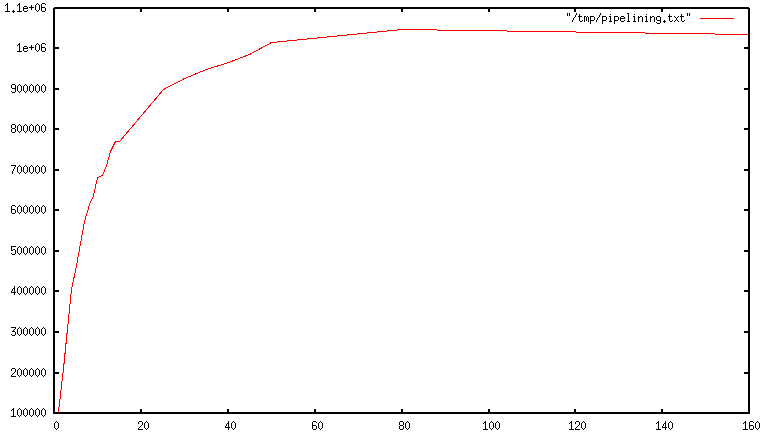

When pipelining is used, many commands are usually read with a single read() system call, and multiple replies are delivered with a single write() system call. Because of this, the number of total queries performed per second initially increases almost linearly with longer pipelines, and eventually reaches 10 times the baseline obtained not using pipelining, as you can see from the following graph:

Some real world code example

In the following benchmark we'll use the Redis Ruby client, supporting pipelining, to test the speed improvement due to pipelining:

require 'rubygems'

require 'redis'

def bench(descr)

start = Time.now

yield

puts "#{descr} #{Time.now-start} seconds"

end

def without_pipelining

r = Redis.new

10000.times {

r.ping

}

end

def with_pipelining

r = Redis.new

r.pipelined {

10000.times {

r.ping

}

}

end

bench("without pipelining") {

without_pipelining

}

bench("with pipelining") {

with_pipelining

}

Running the above simple script will provide the following figures in my Mac OS X system, running over the loopback interface, where pipelining will provide the smallest improvement as the RTT is already pretty low:

without pipelining 1.185238 seconds

with pipelining 0.250783 seconds

As you can see, using pipelining, we improved the transfer by a factor of five.

Pipelining VS Scripting

Using Redis scripting (available in Redis version 2.6 or greater) a number of use cases for pipelining can be addressed more efficiently using scripts that perform a lot of the work needed at the server side. A big advantage of scripting is that it is able to both read and write data with minimal latency, making operations like read, compute, write very fast (pipelining can't help in this scenario since the client needs the reply of the read command before it can call the write command).

Sometimes the application may also want to send EVAL or EVALSHA commands in a pipeline. This is entirely possible and Redis explicitly supports it with the SCRIPT LOAD command (it guarantees that EVALSHA can be called without the risk of failing).

Appendix: Why are busy loops slow even on the loopback interface?

Even with all the background covered in this page, you may still wonder why a Redis benchmark like the following (in pseudo code), is slow even when executed in the loopback interface, when the server and the client are running in the same physical machine:

FOR-ONE-SECOND:

Redis.SET("foo","bar")

END

After all if both the Redis process and the benchmark are running in the same box, isn't this just messages copied via memory from one place to another without any actual latency and actual networking involved?

The reason is that processes in a system are not always running, actually it is the kernel scheduler that let the process run, so what happens is that, for instance, the benchmark is allowed to run, reads the reply from the Redis server (related to the last command executed), and writes a new command. The command is now in the loopback interface buffer, but in order to be read by the server, the kernel should schedule the server process (currently blocked in a system call) to run, and so forth. So in practical terms the loopback interface still involves network-alike latency, because of how the kernel scheduler works.

Basically a busy loop benchmark is the silliest thing that can be done when metering performances in a networked server. The wise thing is just avoiding benchmarking in this way.

https://mp.weixin.qq.com/s/Yv3FJghKtmZ-RKUORuxpMw

云测评 | Redis-pipeline对于性能的提升究竟有多大?

Redis 更加友好的 Pipeline API · Issue #20 · go-kratos/kratos https://github.com/go-kratos/kratos/issues/20

Redis pipeline - 知乎 https://zhuanlan.zhihu.com/p/58608323

mq消息合并:由于mq请求发出到响应的时间,即往返时间, RTT(Round Time Trip),每次提交都要消耗RTT,不支持类似redis的pipeline机制。

Redis pipeline

关于 Redis pipeline

为什么需要 pipeline ?

Redis 的工作过程是基于 请求/响应 模式的。正常情况下,客户端发送一个命令,等待 Redis 应答;Redis 接收到命令,处理后应答。请求发出到响应的时间叫做往返时间,即 RTT(Round Time Trip)。在这种情况下,如果需要执行大量的命令,就需要等待上一条命令应答后再执行。这中间不仅仅多了许多次 RTT,而且还频繁的调用系统 IO,发送网络请求。为了提升效率,pipeline 出现了,它允许客户端可以一次发送多条命令,而不等待上一条命令执行的结果。

实现思路

客户端首先将执行的命令写入到缓冲区中,最后再一次性发送 Redis。但是有一种情况就是,缓冲区的大小是有限制的:如果命令数据太大,可能会有多次发送的过程,但是仍不会处理 Redis 的应答。

实现原理

要支持 pipeline,既要服务端的支持,也要客户端支持。对于服务端来说,所需要的是能够处理一个客户端通过同一个 TCP 连接发来的多个命令。可以理解为,这里将多个命令切分,和处理单个命令一样。对于客户端,则是要将多个命令缓存起来,缓冲区满了就发送,然后再写缓冲,最后才处理 Redis 的应答。

Redis pipeline 的参考资料

在 SpringBoot 中使用 Redis pipeline

基于 SpringBoot 的自动配置,在使用 Redis 时只需要在 pom 文件中给出 spring-boot-starter-data-redis 依赖,就可以直接使用 Spring Data Redis。关于 Redis pipeline 的使用方法,可以阅读 Spring Data Redis 给出的解释。下面,我给出一个简单的例子:

import com.imooc.ad.Application;

import org.junit.Test;

import org.junit.runner.RunWith;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.boot.test.context.SpringBootTest;

import org.springframework.dao.DataAccessException;

import org.springframework.data.redis.connection.StringRedisConnection;

import org.springframework.data.redis.core.RedisCallback;

import org.springframework.data.redis.core.RedisOperations;

import org.springframework.data.redis.core.SessionCallback;

import org.springframework.data.redis.core.StringRedisTemplate;

import org.springframework.test.context.junit4.SpringRunner;

/**

* <h1>Redis Pipeline 功能测试用例</h1>

* 参考: https://docs.spring.io/spring-data/redis/docs/1.8.1.RELEASE/reference/html/#redis:template

* Created by Qinyi.

*/

@RunWith(SpringRunner.class)

@SpringBootTest(classes = {Application.class}, webEnvironment = SpringBootTest.WebEnvironment.NONE)

public class RedisPipelineTest {

/** 注入 StringRedisTemplate, 使用默认配置 */

@Autowired

private StringRedisTemplate stringRedisTemplate;

@Test

@SuppressWarnings("all")

public void testExecutePipelined() {

// 使用 RedisCallback 把命令放在 pipeline 中

RedisCallback<Object> redisCallback = connection -> {

StringRedisConnection stringRedisConn = (StringRedisConnection) connection;

for (int i = 0; i != 10; ++i) {

stringRedisConn.set(String.valueOf(i), String.valueOf(i));

}

return null; // 这里必须要返回 null

};

// 使用 SessionCallback 把命令放在 pipeline

SessionCallback<Object> sessionCallback = new SessionCallback<Object>() {

@Override

public Object execute(RedisOperations operations) throws DataAccessException {

operations.opsForValue().set("name", "qinyi");

operations.opsForValue().set("gender", "male");

operations.opsForValue().set("age", "19");

return null;

}

};

System.out.println(stringRedisTemplate.executePipelined(redisCallback));

System.out.println(stringRedisTemplate.executePipelined(sessionCallback));

}

}总结:Redis pipeline 的特性以及使用时需要注意的地方

- pipeline 减少了 RTT,也减少了IO调用次数(IO 调用涉及到用户态到内核态之间的切换)

- 如果某一次需要执行大量的命令,不能放到一个 pipeline 中执行。数据量过多,网络传输延迟会增加,且会消耗 Redis 大量的内存。应该将大量的命令切分为多个 pipeline 分别执行。