How to rename downloaded images when saving them locally. Straight to the code (the original code has been modified somewhat).

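Summary: the key is to override one of the image pipeline's built-in methods so that what gets saved is modified before it is stored. Most of the work is in the pipelines configuration.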
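As background for why renaming is needed: Scrapy's stock `ImagesPipeline` names each stored file after the SHA-1 hash of the image URL. The sketch below imitates both naming schemes in plain Python without importing Scrapy; `default_style_path` and `named_path` are hypothetical helper names used only for illustration.

```python
import hashlib


def default_style_path(url):
    # Mimics the stock naming scheme: full/<sha1 of the URL>.jpg
    digest = hashlib.sha1(url.encode("utf-8")).hexdigest()
    return "full/{}.jpg".format(digest)


def named_path(product_name):
    # The naming scheme this post switches to: full/<product name>.jpg
    return "full/{}.jpg".format(product_name)


if __name__ == "__main__":
    print(default_style_path("http://www.hm5988.com/example.jpg"))
    print(named_path("example product"))
```

The hash-based name is stable and collision-resistant but unreadable; the name-based path is readable but depends on product names being unique.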

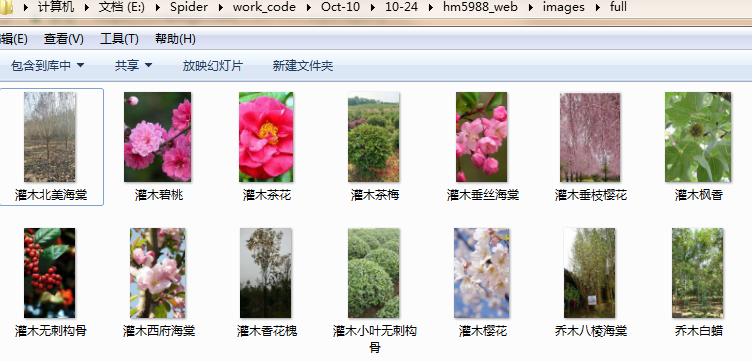

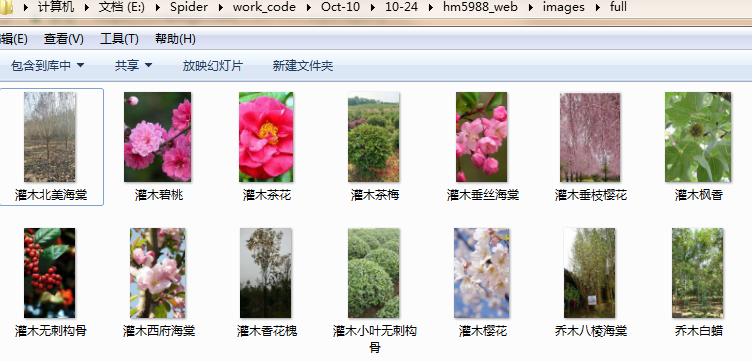

The result is as shown in the figures.

hm5988.py

# -*- coding: utf-8 -*-
import scrapy

from hm5988_web.items import Hm5988WebItem


class Hm5988Spider(scrapy.Spider):
    name = 'hm5988'
    allowed_domains = ['www.hm5988.com']
    start_urls = ['http://www.hm5988.com/goods/CATE0.html?page=1']

    # Per-spider settings; these take precedence over settings.py.
    custom_settings = {
        'DOWNLOAD_DELAY': 0.5,
        "ITEM_PIPELINES": {
            'hm5988_web.pipelines.ImagePipeline': 300,
            'hm5988_web.pipelines.MysqlPipeline': 302,
        },
        "DOWNLOADER_MIDDLEWARES": {
            'hm5988_web.middlewares.Hm5988WebDownloaderMiddleware': 500,
        },
    }

    def parse(self, response):
        # Page 1 is already downloaded, so extract its product links here;
        # the original only followed pages 2..N and skipped page 1.
        for request in self.parse_detail(response):
            yield request
        # Read the total page count from the "last page" link.
        p_url = response.xpath("//li[@class='last']/a/@href").extract_first()
        page_num = p_url.split('=')[1] if p_url else 1
        # print(page_num)
        for i in range(2, int(page_num) + 1):
            url = 'http://www.hm5988.com/goods/CATE0.html?page={}'.format(i)
            yield scrapy.Request(url=url, callback=self.parse_detail)

    def parse_detail(self, response):
        # Follow every product link found on a listing page.
        link_urls = response.xpath("//li[@class='mr10 mb20']/a/@href").extract()
        for link_url in link_urls:
            # print(link_url)
            # urljoin leaves absolute URLs unchanged and resolves relative ones.
            yield scrapy.Request(url=response.urljoin(link_url), callback=self.parse_detail2)

    def parse_detail2(self, response):
        item = Hm5988WebItem()
        # Category
        _type = response.xpath("//div[@class='current']/a[6]/text()").extract_first()
        item['_type'] = _type
        # Product name
        name = response.xpath("//div[@class='txt-t fl']/li[@class='fs22 gc3 fw']/text()").extract_first()
        item['name'] = name
        # Detail image
        pic = response.xpath("//div[@class='pic-box fl p10']/img[@class='pic-big']/@src").extract_first()
        item['pic'] = pic
        yield item
        print('*' * 100)
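The spider reads the page count with `p_url.split('=')[1]`, which only works while `page` is the sole query parameter. A slightly more defensive variant could parse the query string instead. This is a minimal sketch; `last_page_number` and its `default` argument are hypothetical names, not part of the original project.

```python
from urllib.parse import parse_qs, urlparse


def last_page_number(href, default=1):
    """Return the value of the `page` query parameter as an int."""
    if not href:
        # No "last page" link was found on the page.
        return default
    values = parse_qs(urlparse(href).query).get("page")
    if not values:
        return default
    try:
        return int(values[0])
    except ValueError:
        # The parameter was present but not numeric.
        return default


if __name__ == "__main__":
    print(last_page_number("/goods/CATE0.html?page=37"))
```

Inside `parse`, the result could be fed straight into `range(2, last_page_number(p_url) + 1)`.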

items.py

# -*- coding: utf-8 -*-
# Define here the models for your scraped items
#
# See documentation in:
# https://doc.scrapy.org/en/latest/topics/items.html
import scrapy


class Hm5988WebItem(scrapy.Item):
    # define the fields for your item here like:
    # name = scrapy.Field()
    # Category
    _type = scrapy.Field()
    # Product name
    name = scrapy.Field()
    # Detail image
    pic = scrapy.Field()

middlewares.py

# -*- coding: utf-8 -*-
# Define here the models for your spider middleware
#
# See documentation in:
# https://doc.scrapy.org/en/latest/topics/spider-middleware.html
from scrapy import signals


class Hm5988WebSpiderMiddleware(object):
    # Not all methods need to be defined. If a method is not defined,
    # scrapy acts as if the spider middleware does not modify the
    # passed objects.

    @classmethod
    def from_crawler(cls, crawler):
        # This method is used by Scrapy to create your spiders.
        s = cls()
        crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)
        return s

    def process_spider_input(self, response, spider):
        # Called for each response that goes through the spider
        # middleware and into the spider.
        # Should return None or raise an exception.
        return None

    def process_spider_output(self, response, result, spider):
        # Called with the results returned from the Spider, after
        # it has processed the response.
        # Must return an iterable of Request, dict or Item objects.
        for i in result:
            yield i

    def process_spider_exception(self, response, exception, spider):
        # Called when a spider or process_spider_input() method
        # (from other spider middleware) raises an exception.
        # Should return either None or an iterable of Response, dict
        # or Item objects.
        pass

    def process_start_requests(self, start_requests, spider):
        # Called with the start requests of the spider, and works
        # similarly to the process_spider_output() method, except
        # that it doesn’t have a response associated.
        # Must return only requests (not items).
        for r in start_requests:
            yield r

    def spider_opened(self, spider):
        spider.logger.info('Spider opened: %s' % spider.name)


class Hm5988WebDownloaderMiddleware(object):
    # Not all methods need to be defined. If a method is not defined,
    # scrapy acts as if the downloader middleware does not modify the
    # passed objects.

    @classmethod
    def from_crawler(cls, crawler):
        # This method is used by Scrapy to create your spiders.
        s = cls()
        crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)
        return s

    def process_request(self, request, spider):
        # Called for each request that goes through the downloader
        # middleware.
        # Must either:
        # - return None: continue processing this request
        # - or return a Response object
        # - or return a Request object
        # - or raise IgnoreRequest: process_exception() methods of
        #   installed downloader middleware will be called
        return None

    def process_response(self, request, response, spider):
        # Called with the response returned from the downloader.
        # Must either;
        # - return a Response object
        # - return a Request object
        # - or raise IgnoreRequest
        return response

    def process_exception(self, request, exception, spider):
        # Called when a download handler or a process_request()
        # (from other downloader middleware) raises an exception.
        # Must either:
        # - return None: continue processing this exception
        # - return a Response object: stops process_exception() chain
        # - return a Request object: stops process_exception() chain
        pass

    def spider_opened(self, spider):
        spider.logger.info('Spider opened: %s' % spider.name)

pipelines.py

# -*- coding: utf-8 -*-
# Define your item pipelines here
#
# Don't forget to add your pipeline to the ITEM_PIPELINES setting
# See: https://doc.scrapy.org/en/latest/topics/item-pipeline.html
import pymysql
from scrapy import Request
from scrapy.exceptions import DropItem
from scrapy.pipelines.images import ImagesPipeline


class Hm5988WebPipeline(object):
    def process_item(self, item, spider):
        return item


# Save items to MySQL
class MysqlPipeline(object):
    def open_spider(self, spider):
        # Read settings from the spider; the old `from scrapy.conf import settings`
        # import is no longer available in newer Scrapy releases.
        settings = spider.settings
        self.host = settings.get('MYSQL_HOST')
        self.port = settings.getint('MYSQL_PORT')
        self.user = settings.get('MYSQL_USER')
        self.password = settings.get('MYSQL_PASSWORD')
        self.db = settings.get('MYSQL_DB')
        self.table = settings.get('TABLE')
        self.client = pymysql.connect(host=self.host, user=self.user, password=self.password,
                                      port=self.port, db=self.db, charset='utf8')

    def process_item(self, item, spider):
        item_dict = dict(item)
        values = ','.join(['%s'] * len(item_dict))
        keys = ','.join(item_dict.keys())
        sql = 'INSERT INTO {table}({keys}) VALUES ({values})'.format(
            table=self.table, keys=keys, values=values)
        try:
            # The with block closes the cursor when it exits.
            with self.client.cursor() as cursor:
                # First argument is the SQL statement, second is the tuple of values.
                if cursor.execute(sql, tuple(item_dict.values())):
                    spider.logger.info('Row inserted into MySQL')
            self.client.commit()
        except Exception as e:
            spider.logger.error(e)
            self.client.rollback()
        return item

    def close_spider(self, spider):
        self.client.close()


class ImagePipeline(ImagesPipeline):
    def item_completed(self, results, item, info):
        # Drop the item if none of its images downloaded successfully.
        image_paths = [x['path'] for ok, x in results if ok]
        if not image_paths:
            raise DropItem('Image Downloaded Failed')
        return item

    def get_media_requests(self, item, info):
        # Pass the item along in meta so file_path() can read the product name.
        yield Request(item['pic'], meta={'item': item})

    def file_path(self, request, response=None, info=None, *, item=None):
        # Override the built-in naming hook: save as full/<product name>.jpg
        # instead of the default hash-based name. The keyword-only `item`
        # argument is accepted because newer Scrapy releases pass it.
        item = request.meta['item']
        filename = u'full/{}.jpg'.format(item['name'])
        return filename
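One caveat with building the path from `item['name']`: a scraped product name may be missing, or may contain characters such as `/` or `:` that are not safe in file names. The following is a minimal sketch of a cleaning step, assuming the name is only needed for display; `safe_filename`, `fallback`, and `max_length` are hypothetical names, not part of the original pipeline.

```python
import re


def safe_filename(name, fallback="unnamed", max_length=100):
    """Replace characters that are unsafe in file names with underscores."""
    # Path separators, Windows-reserved characters, and control characters.
    cleaned = re.sub(r'[\\/:*?"<>|\x00-\x1f]', "_", name or "")
    # Leading/trailing spaces and dots cause trouble on some file systems.
    cleaned = cleaned.strip(" .")
    return cleaned[:max_length] or fallback


if __name__ == "__main__":
    print(safe_filename("A/B: C"))
```

In `file_path`, the format call could then become `u'full/{}.jpg'.format(safe_filename(item['name']))`. Two products with the same name would still overwrite each other, so appending an ID may be worth considering.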

settings.py

# -*- coding: utf-8 -*-

# Scrapy settings for hm5988_web project

#

# For simplicity, this file contains only settings considered important or

# commonly used. You can find more settings consulting the documentation:

#

# https://doc.scrapy.org/en/latest/topics/settings.html

# https://doc.scrapy.org/en/latest/topics/downloader-middleware.html

# https://doc.scrapy.org/en/latest/topics/spider-middleware.html

BOT_NAME = 'hm5988_web'

SPIDER_MODULES = ['hm5988_web.spiders']

NEWSPIDER_MODULE = 'hm5988_web.spiders'

IMAGES_STORE = './images'

# Crawl responsibly by identifying yourself (and your website) on the user-agent

#USER_AGENT = 'hm5988_web (+http://www.yourdomain.com)'

# Obey robots.txt rules


ROBOTSTXT_OBEY = False

# MySQL connection parameters

MYSQL_HOST = "172.16.10.157"

MYSQL_PORT = 3306

MYSQL_USER = "root"

MYSQL_PASSWORD = "123456"

MYSQL_DB = 'web_datas'

TABLE = "hm5988_2"

# Configure maximum concurrent requests performed by Scrapy (default: 16)

#CONCURRENT_REQUESTS = 32

# Configure a delay for requests for the same website (default: 0)

# See https://doc.scrapy.org/en/latest/topics/settings.html#download-delay

# See also autothrottle settings and docs

#DOWNLOAD_DELAY = 3

# The download delay setting will honor only one of:

#CONCURRENT_REQUESTS_PER_DOMAIN = 16

#CONCURRENT_REQUESTS_PER_IP = 16

# Disable cookies (enabled by default)

#COOKIES_ENABLED = False

# Disable Telnet Console (enabled by default)

#TELNETCONSOLE_ENABLED = False

# Override the default request headers:

#DEFAULT_REQUEST_HEADERS = {

# 'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',

# 'Accept-Language': 'en',

#}

# Enable or disable spider middlewares

# See https://doc.scrapy.org/en/latest/topics/spider-middleware.html

#SPIDER_MIDDLEWARES = {

# 'hm5988_web.middlewares.Hm5988WebSpiderMiddleware': 543,

#}

# Enable or disable downloader middlewares

# See https://doc.scrapy.org/en/latest/topics/downloader-middleware.html

DOWNLOADER_MIDDLEWARES = {
    'hm5988_web.middlewares.Hm5988WebDownloaderMiddleware': 543,
}

# Enable or disable extensions

# See https://doc.scrapy.org/en/latest/topics/extensions.html

#EXTENSIONS = {

# 'scrapy.extensions.telnet.TelnetConsole': None,

#}

# Configure item pipelines

# See https://doc.scrapy.org/en/latest/topics/item-pipeline.html

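# Note: the spider's custom_settings overrides this dict and enables
# MysqlPipeline in place of Hm5988WebPipeline.
ITEM_PIPELINES = {
    'hm5988_web.pipelines.ImagePipeline': 300,
    'hm5988_web.pipelines.Hm5988WebPipeline': 302,
}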

# Enable and configure the AutoThrottle extension (disabled by default)

# See https://doc.scrapy.org/en/latest/topics/autothrottle.html

#AUTOTHROTTLE_ENABLED = True

# The initial download delay

#AUTOTHROTTLE_START_DELAY = 5

# The maximum download delay to be set in case of high latencies

#AUTOTHROTTLE_MAX_DELAY = 60

# The average number of requests Scrapy should be sending in parallel to

# each remote server

#AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0

# Enable showing throttling stats for every response received:

#AUTOTHROTTLE_DEBUG = False

# Enable and configure HTTP caching (disabled by default)

# See https://doc.scrapy.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings

#HTTPCACHE_ENABLED = True

#HTTPCACHE_EXPIRATION_SECS = 0

#HTTPCACHE_DIR = 'httpcache'

#HTTPCACHE_IGNORE_HTTP_CODES = []

#HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage'
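The MySQL parameters in settings.py are hard-coded, including the password. One possible alternative is to read them from environment variables and fall back to defaults. This is only a sketch: the helper name `mysql_settings_from_env`, the environment variable names, and the default values are all assumptions chosen for illustration.

```python
import os


def mysql_settings_from_env(environ=None):
    """Build the MySQL settings dict from environment variables."""
    env = os.environ if environ is None else environ
    return {
        "MYSQL_HOST": env.get("MYSQL_HOST", "127.0.0.1"),
        # Environment values are strings, so the port is converted to int.
        "MYSQL_PORT": int(env.get("MYSQL_PORT", "3306")),
        "MYSQL_USER": env.get("MYSQL_USER", "root"),
        "MYSQL_PASSWORD": env.get("MYSQL_PASSWORD", ""),
        "MYSQL_DB": env.get("MYSQL_DB", "web_datas"),
    }


if __name__ == "__main__":
    print(mysql_settings_from_env({"MYSQL_HOST": "db.local"}))
```

In settings.py the values could then be assigned from the returned dict, for example `MYSQL_HOST = mysql_settings_from_env()["MYSQL_HOST"]`.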