
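Hi everyone, I'm cv君. Plenty of model-training "alchemists" working on student innovation projects, competitions, engineering work, and research have asked me how to tackle the recognition tasks above and how to choose a framework. Today I'll walk through a few options: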

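With single-frame object detection, the semantic link between consecutive frames is weak (optimized variants do exist), and the results fall short of what real projects need, especially when it comes to false positives. Object detection also needs a large amount of data to fit, so the labeling effort is huge.

Combining pose estimation with object detection works quite well, but two-stage-style pipelines like this tend to be slow (although many do run in real time), and they still leave some problems unsolved because they lack temporal context.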

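For example, fall detection: normally there should be a falling motion before we call something a fall. A person who is lying down has not necessarily fallen (a weakness of pure object detection).

Sports detection: take pull-ups, high-knee counting, and ball sports. What goes wrong if we use object detection? For pull-ups, it cannot judge whether the movement is done properly (you can post-process to check whether the chin passes the bar's box, but that is hardly "AI"). For high-knee counting, object detection cannot count at all. For recognizing ball sports, it produces a lot of false positives: the first option, detecting the ball, has a high false-positive rate; the second, detecting the playing gesture, fails as soon as the person occludes the ball, and it also needs a lot of labeled data…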
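To make the counting point concrete, here is a minimal sketch of how a stream of per-frame action labels could be turned into a rep count once temporal context is available. The label names `"up"` and `"down"`, the function name `count_reps`, and the `min_frames` debounce are all assumptions made for illustration; none of them come from the project's code.

```python
def count_reps(frame_labels, up_label="up", down_label="down", min_frames=3):
    """Count down -> up transitions in a sequence of per-frame labels.

    A state only becomes "confirmed" after it has been seen for
    `min_frames` consecutive frames, so a single-frame misclassification
    does not add a rep. All names here are hypothetical.
    """
    confirmed = None   # last state that lasted long enough to trust
    candidate = None   # label of the current run of identical frames
    run_length = 0
    reps = 0
    for label in frame_labels:
        if label == candidate:
            run_length += 1
        else:
            candidate = label
            run_length = 1
        is_tracked = candidate in (up_label, down_label)
        if run_length == min_frames and is_tracked and candidate != confirmed:
            if confirmed == down_label and candidate == up_label:
                reps += 1
            confirmed = candidate
    return reps


if __name__ == "__main__":
    labels = ["down"] * 5 + ["up"] * 4 + ["down"] * 5 + ["up"] + ["down"] * 3 + ["up"] * 3
    print(count_reps(labels))
```

The isolated single `"up"` frame in the example is ignored because it never reaches `min_frames`, which is exactly the kind of decision a single-frame detector has no way to make.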

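All this lead-up is just so I can recommend a freshly released video-sequence detection method. The code is open source on Github: https://github.com/xiaobingchan/CV-Action and stars are welcome~

Come take a look. It needs very little training data to fit! Try it if you don't believe me~ A handful of training videos is enough.

The network is realtimenet, a real-time action-sequence classification network that was open-sourced in the last two months.

My github will collect video data for all the action sequences mentioned above and train models that are usable for real detection tasks. So far gesture-control detection and a few others are implemented. Follow my official account (公众号) and I'll keep posting updates.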

Getting started

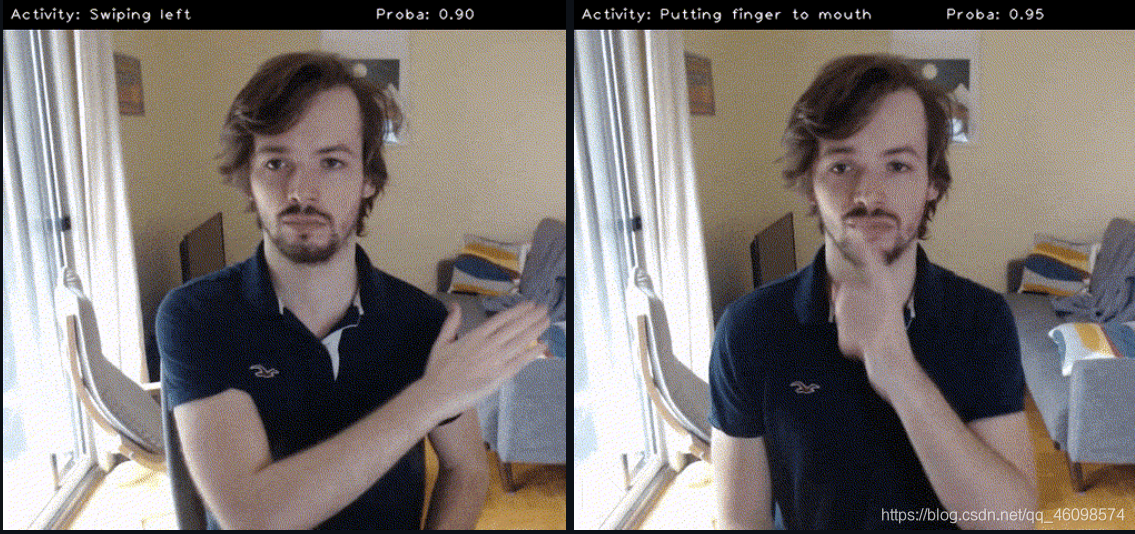

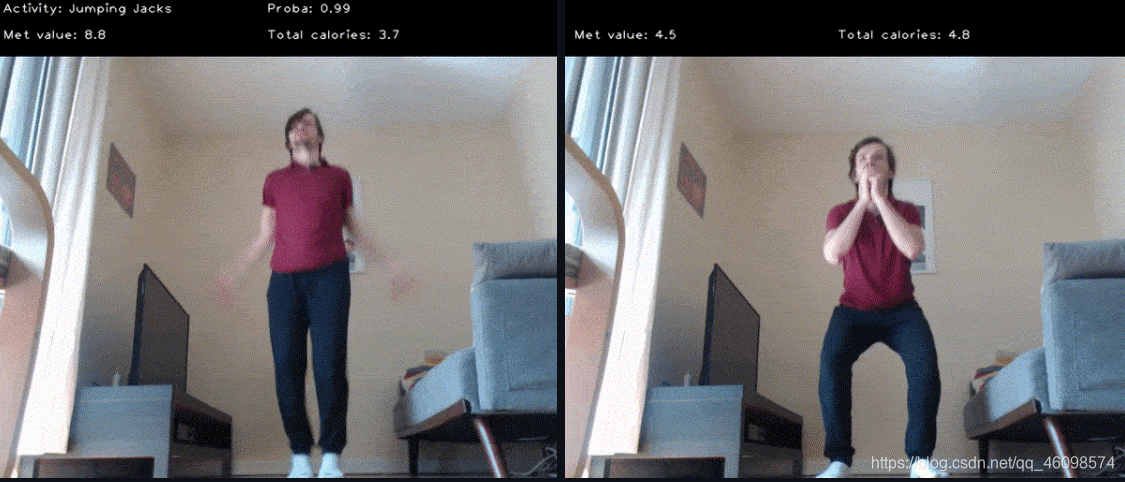

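For now I use gesture and sports recognition as the examples, because I don't have much data, haha.

Project demo:

I didn't convert my own results to gif, so have a look at the other demo images instead; they are almost identical to mine~ Only the training data differs.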

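1. Basic process and idea

The basic idea is to convert the videos and class labels in the dataset into images (video frames) with their corresponding class labels. You can also skip frame-level annotation and simply assign one class label to each short clip. A CNN is then trained and tested on the images, which turns the video classification problem into an image classification problem. The steps are: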

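(1) Extract frames (jpegs) from every video (training and test) at a fixed FPS and save them as the training and test sets. The classification performance on the images is taken as the classification performance on the corresponding video.

(2) Train a feature-extraction model for people and similar features, and use a model-fusion strategy: one feature extractor plus one classifier. The feature part is generic to human actions; for the classifier, you only need to train one on your own classes.

(3) After training, load the model and evaluate it on all video frames in the test set, reporting top-1 and top-5 accuracy over the whole test set.

(4) Real-time detection.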
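The frame-extraction and evaluation steps above can be sketched in plain Python. This is only an illustration under stated assumptions: `sample_frame_indices` and `top_k_accuracy` are hypothetical helper names, not functions from the repository, and the sketch only decides which frames to keep and how to score predictions; decoding the frames themselves would still need a video library.

```python
def sample_frame_indices(total_frames, source_fps, target_fps):
    """Return the indices of the frames to keep when resampling a video.

    If the target FPS is higher than the source FPS, every frame is kept
    (this sketch never duplicates frames).
    """
    if total_frames < 0 or source_fps <= 0 or target_fps <= 0:
        raise ValueError("frame count must be >= 0 and both FPS values > 0")
    step = max(source_fps / target_fps, 1.0)
    indices = []
    i = 0
    while int(i * step) < total_frames:
        indices.append(int(i * step))
        i += 1
    return indices


def top_k_accuracy(scores, labels, k):
    """Fraction of samples whose true label is among the k highest scores.

    `scores` is a list of per-class score lists, `labels` a list of
    integer class indices.
    """
    if not labels or len(scores) != len(labels):
        raise ValueError("scores and labels must be non-empty and equal in length")
    hits = 0
    for row, label in zip(scores, labels):
        ranked = sorted(range(len(row)), key=lambda c: row[c], reverse=True)
        if label in ranked[:k]:
            hits += 1
    return hits / len(labels)


if __name__ == "__main__":
    print(sample_frame_indices(total_frames=10, source_fps=30, target_fps=10))
    scores = [[0.1, 0.7, 0.2], [0.5, 0.3, 0.2], [0.2, 0.3, 0.5]]
    print(top_k_accuracy(scores, labels=[1, 1, 0], k=1))
    print(top_k_accuracy(scores, labels=[1, 1, 0], k=2))
```

With only a few classes, top-5 accuracy is trivially 1.0, so top-1 is the number worth watching on small custom datasets.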

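2. Other good frameworks for video understanding

The first is my github repo. It's convenient, but I wouldn't dare rank it near the top because it doesn't integrate much.

Then there is MMAction, a full video-understanding framework. As everyone knows, that team's work is excellent.

Next are some of the frameworks from Facebook.

Beyond those, there really aren't many comprehensive, easy-to-use real-time frameworks.

OK, so let's start with how I use mine.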

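3. Trying it out

Try some of the official models (I've already put the models in the repo)

Place the models here:

First, try the demos provided. In the sense/examples directory you will find 3 Python scripts: run_gesture_recognition.py (gesture recognition), run_fitness_tracker.py (fitness tracker), and run_calorie_estimation.py (calorie estimation). Launching each demo is as simple as running the script in a terminal, as described below.

Gesture recognition: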

cd examples/

python run_gesture_recognition.py

Fitness tracker:

Calorie estimation:

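4. Steps for training on your own dataset

First, clone my github repo or the original author's.

Then record a few videos yourself. For example, I recorded several videos for a class called capture, saved as MP4 or avi, and then recorded some more for another class. The folder name is used as the class name.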

Then

This step will show:

Then open this URL:

This takes you to the front-end interface.

Click start new project.

Fill it in like this

Then click create project to create the data.

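However, the official way of creating the data has serious bugs~ So what should we do?

After my modifications, you can do it like this!

Read this part carefully:

Inside the sense_studio folder, create a new folder; I call mine cvdemo1.

Then create two folders inside it: videos_train and videos_valid. Each one contains a folder per class, named after the class: capture holds the training videos for capture and click holds the training videos for click. videos_valid holds the validation set in the same way.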
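If you'd rather not create these folders by hand, a small script along the following lines could do it. This is a sketch, not part of the repository: `create_project_layout` is a hypothetical helper, and the class names `capture` and `click` are simply the ones used in this post.

```python
from pathlib import Path


def create_project_layout(root, project_name, class_names):
    """Create <root>/<project_name>/{videos_train,videos_valid}/<class>/.

    Existing folders are left untouched, so the function is safe to re-run.
    Returns the path of the project folder.
    """
    project_dir = Path(root) / project_name
    for split in ("videos_train", "videos_valid"):
        for class_name in class_names:
            (project_dir / split / class_name).mkdir(parents=True, exist_ok=True)
    return project_dir


if __name__ == "__main__":
    # Assumes the script is run from the repository root.
    project = create_project_layout("sense_studio", "cvdemo1", ["capture", "click"])
    for folder in sorted(p for p in project.rglob("*") if p.is_dir()):
        print(folder)
```

After running it, copy your recorded clips into the matching class folders of each split.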

Create project_config.json in the cvdemo1 folder. What goes in it? You can copy my code below:

Just change name in it to your folder name.

It's that simple!
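The only edit described here is setting `name` to the folder name, and that step can be scripted. The sketch below assumes a project_config.json already exists in the project folder and that it is ordinary JSON with a top-level `name` key; every other field is left exactly as it was. `set_project_name` is a hypothetical helper, not something shipped with the repository.

```python
import json
from pathlib import Path


def set_project_name(project_dir):
    """Set the "name" field of project_config.json to the folder's own name.

    All other keys in the file are preserved. Returns the updated config.
    """
    project_dir = Path(project_dir)
    config_path = project_dir / "project_config.json"
    config = json.loads(config_path.read_text(encoding="utf-8"))
    config["name"] = project_dir.name
    config_path.write_text(
        json.dumps(config, indent=2, ensure_ascii=False), encoding="utf-8"
    )
    return config


if __name__ == "__main__":
    # Assumes sense_studio/cvdemo1/project_config.json already exists.
    print(set_project_name("sense_studio/cvdemo1")["name"])
```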

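Then you can train:

python train_classifier.py (you can edit its main a little).

Change path_in to the location of your training data. Not much else needs changing; just follow my version.

Training is very fast, about 10 minutes.

Then you can run run_custom_classifier.py

Modify the path in the same way.

After that you get real-time detection.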

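Walkthrough of the original code

We use EfficientNet for feature extraction. You can also switch to MobileNet, and there is sample code for it: use MobileNet for training and for detection as well. Only a few lines of code need to change.

EfficientNet feature-extraction code:

InvertedResidual is defined here:

Fine-tuning on our own dataset

Building the dataloader for the data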
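Before any dataloader can be built, the class folders have to be turned into (video, label) pairs. The following is a minimal sketch of that indexing step only, assuming the videos_train/<class>/ layout described earlier and MP4 or avi files. `list_labeled_videos` is a hypothetical name, and this is not the repository's own loader.

```python
from pathlib import Path

VIDEO_SUFFIXES = {".mp4", ".avi"}


def list_labeled_videos(split_dir):
    """Index a split folder that holds one sub-folder per class.

    Returns (samples, label_of) where samples is a list of
    (video_path, class_index) pairs and label_of maps class name to index.
    Class indices follow the alphabetical order of the folder names.
    """
    split_dir = Path(split_dir)
    class_names = sorted(p.name for p in split_dir.iterdir() if p.is_dir())
    label_of = {name: index for index, name in enumerate(class_names)}
    samples = []
    for name in class_names:
        for video in sorted((split_dir / name).iterdir()):
            # Skip anything that is not a video, e.g. notes or thumbnails.
            if video.suffix.lower() in VIDEO_SUFFIXES:
                samples.append((video, label_of[name]))
    return samples, label_of


if __name__ == "__main__":
    samples, label_of = list_labeled_videos("sense_studio/cvdemo1/videos_train")
    print(label_of)
    print(len(samples), "videos")
```

Sorting the class names keeps the label indices stable between training and inference, which matters when the classifier is reloaded later.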

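How is the video sequence detected in real time?

This is mainly done by combining the frames within a time window into a sequence and feeding that sequence to the network for classification. The relevant parameters can be found in many places, for example in display.py.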
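The windowing idea can be sketched with a fixed-size buffer. To be clear, this is not the code from display.py or anywhere else in the repository: `FrameWindow`, `window_size`, and `step` are hypothetical names, and the frames here are plain integers standing in for images.

```python
from collections import deque


class FrameWindow:
    """Keep the most recent frames and emit a full window every `step` frames."""

    def __init__(self, window_size, step):
        if window_size < 1 or step < 1:
            raise ValueError("window_size and step must be >= 1")
        self.frames = deque(maxlen=window_size)  # oldest frame drops out automatically
        self.step = step
        self.frames_since_emit = 0

    def push(self, frame):
        """Add one frame; return a list of frames when a window is ready, else None."""
        self.frames.append(frame)
        self.frames_since_emit += 1
        window_full = len(self.frames) == self.frames.maxlen
        if window_full and self.frames_since_emit >= self.step:
            self.frames_since_emit = 0
            return list(self.frames)
        return None


if __name__ == "__main__":
    window = FrameWindow(window_size=4, step=2)
    for frame_id in range(8):
        clip = window.push(frame_id)
        if clip is not None:
            # In a real pipeline this clip would be passed to the classifier.
            print(clip)
```

A larger `window_size` gives the classifier more temporal context at the cost of latency, while a smaller `step` makes predictions update more often at the cost of compute.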

About 5 videos per class are enough to get decent results~

Stars on github are welcome~


For any program errors, technical questions, or anything else you need answered, scan the QR code to add the author on WeChat: 1755337994

![image 3](https://img-blog.csdnimg.cn/20210302162352210.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L3FxXzQ2MDk4NTc0,size_16,color_FFFFFF,t_70#pic_center)

![image 4](https://img-blog.csdnimg.cn/2021030215233270.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L3FxXzQ2MDk4NTc0,size_16,color_FFFFFF,t_70#pic_center)

![image 5](https://img-blog.csdnimg.cn/20210302152340386.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L3FxXzQ2MDk4NTc0,size_16,color_FFFFFF,t_70#pic_center)

![image 6](https://img-blog.csdnimg.cn/20210302152350164.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L3FxXzQ2MDk4NTc0,size_16,color_FFFFFF,t_70#pic_center)

![image 7](https://img-blog.csdnimg.cn/20210302152400224.png?x-oss-process=image/watermark,type_ZmFuZ3poZW5naGVpdGk,shadow_10,text_aHR0cHM6Ly9ibG9nLmNzZG4ubmV0L3FxXzQ2MDk4NTc0,size_16,color_FFFFFF,t_70#pic_center)