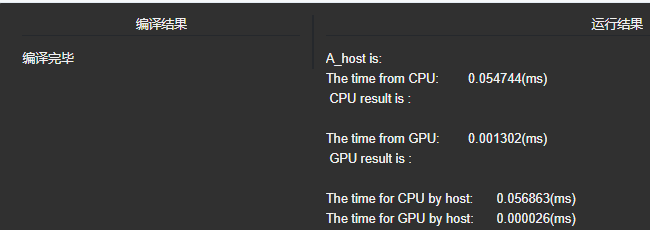

1.两个一维数组相加,求和

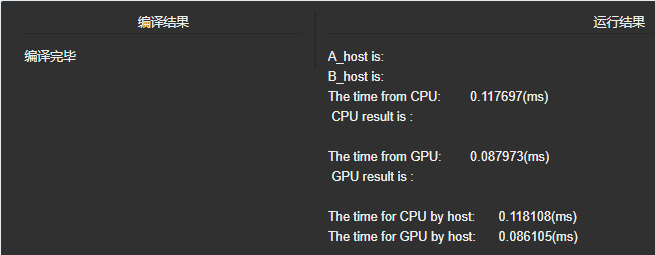

1 #include <stdio.h> 2 #include <stdlib.h> 3 #include <time.h> 4 #include "cuda_runtime.h" 5 #include "device_launch_parameters.h" 6 void initial(float *list,int size) 7 { 8 float *num = list; 9 //srand((unsigned)time(NULL)); 10 for (int i=0; i<size; i++) 11 { 12 num[i] = rand()%10; 13 } 14 15 } 16 void sumMatrix(float* MatA, float* MatB,float *MatC,int nx,int ny) 17 { 18 float* a=MatA; 19 float* b=MatB; 20 float* c=MatC; 21 for(int j=0; j<ny;j++) 22 { 23 for(int i=0; i<nx;i++) 24 { 25 c[i] = a[i] + b[i]; 26 } 27 c += nx; 28 b += nx; 29 a += nx; 30 } 31 } 32 33 34 //核函数 35 __global__ void GPUsumMatrix(float* MatA,float* MatB,float* MatC,int nx,int ny) 36 { 37 int ix = threadIdx.x + blockDim.x * blockIdx.x; 38 int iy = threadIdx.y + blockDim.y * blockIdx.y; 39 int idx = ix + iy * ny; 40 if(ix<nx && iy<ny) 41 { 42 MatC[idx] = MatA[idx] + MatB[idx]; 43 // printf(" C: %f ",MatC[idx]); 44 } 45 } 46 47 void printList(float* A,int size) 48 { 49 for (int i=0;i<size;i++) 50 { 51 printf(" %f ",A[i]); 52 } 53 } 54 int main(int argc, char** argv) 55 { 56 //CPU计时方法 57 float time_cpu, time_gpu; 58 clock_t start_cpu, stop_cpu, start_gpu, stop_gpu; 59 //GPU计时方法 60 float time_CPU, time_GPU; 61 cudaEvent_t start_GPU, stop_GPU, start_CPU, stop_CPU; 62 63 //输入二维矩阵 64 int nx = 1<<12; 65 int ny = 1<<12; 66 int nBytes = nx * ny *sizeof(float); 67 //开辟主机内存 68 float *A_host = (float*)malloc(nBytes); 69 float *B_host = (float*)malloc(nBytes); 70 float *C_host = (float*)malloc(nBytes); 71 float *C_from_gpu = (float*)malloc(nBytes); 72 initial(A_host,nx*ny); 73 printf("A_host is:"); 74 // printList(A_host,nx*ny); 75 initial(B_host,nx*ny); 76 printf(" B_host is:"); 77 // printList(B_host,nx*ny); 78 79 80 //记录当前时间 81 start_cpu = clock(); 82 sumMatrix(A_host,B_host,C_host,nx,ny); 83 stop_cpu = clock(); 84 printf(" The time from CPU: %f(ms) ", (float) (stop_cpu-start_cpu) / CLOCKS_PER_SEC); 85 86 //输出结果 87 printf(" CPU result is : "); 88 // printList(C_host,nx*ny); 89 90 //开辟设备内存 91 float* A_dev = NULL; 92 float* B_dev = NULL; 93 float* C_dev = NULL; 94 cudaMalloc((void**)&A_dev,nBytes); 95 cudaMalloc((void**)&B_dev,nBytes); 96 cudaMalloc((void**)&C_dev,nBytes); 97 98 //输入数据,从hostTO device 99 cudaMemcpy(A_dev,A_host,nBytes,cudaMemcpyHostToDevice); 100 cudaMemcpy(B_dev,B_host,nBytes,cudaMemcpyHostToDevice); 101 dim3 block(2,2); 102 dim3 grid((nx-1)/block.x+1,(ny-1)/block.y+1); 103 // 创建Event 104 cudaEventCreate(&start_GPU); 105 cudaEventCreate(&stop_GPU); 106 //记录当前时间 107 cudaEventRecord(start_GPU,0); 108 start_gpu = clock(); 109 110 GPUsumMatrix<<<grid,block>>>(A_dev,B_dev,C_dev,nx,ny); 111 sumMatrix(A_host,B_host,C_host,nx,ny); 112 113 stop_gpu = clock(); 114 cudaEventRecord(stop_GPU,0); 115 cudaEventSynchronize(start_GPU); 116 cudaEventSynchronize(stop_GPU); 117 //计算时间差 118 cudaEventElapsedTime(&time_GPU, start_GPU,stop_GPU); 119 printf(" The time from GPU: %f(ms) ", time_GPU/1000); 120 //消除Event 121 cudaEventDestroy(start_GPU); 122 cudaEventDestroy(stop_GPU); 123 cudaMemcpy(C_from_gpu,C_dev,nBytes,cudaMemcpyDeviceToHost); 124 //输出结果 125 printf(" GPU result is : "); 126 // printList(C_from_gpu,nx*ny); 127 128 cudaFree(A_dev); 129 cudaFree(B_dev); 130 cudaFree(C_dev); 131 free(A_host); 132 free(B_host); 133 free(C_host); 134 135 time_cpu = (float) (stop_cpu-start_cpu) / CLOCKS_PER_SEC; 136 time_gpu = (float) (stop_gpu-start_gpu) / CLOCKS_PER_SEC; 137 printf(" The time for CPU by host: %f(ms) ", time_cpu); 138 printf("The time for GPU by host: %f(ms) ", time_gpu); 139 140 141 cudaDeviceReset(); 142 return 0; 143 }

2.

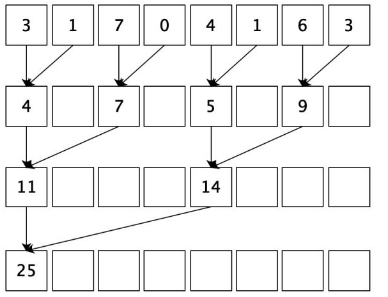

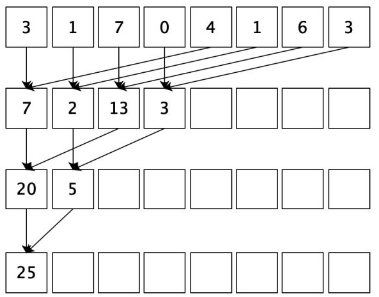

由于加法的交换律和结合律,数组可以以任意顺序求和。所以我们会自然而然产生这样的思路:首先把输入数组划分为更小的数据块,之后用一个线程计算一个数据块的部分和,最后把所有部分和再求和得出最终结果。

依照以上的思路,我们可以按下图这样计算。就上面的输入例子而言,首先需要我们开辟一个8个int的存储空间,图中一行的数据代表我们开辟的存储空间。计算时首先将相邻的两数相加(也称相邻配对),结果写入第一个数的存储空间内。第二轮迭代时我们再将第一次的结果两两相加得出下一级结果,一直重复这个过程最后直到我们得到最终的结果,空白方框里面存储的内容是我们不需要的。这个过程相当于,每一轮迭代后,选取被加数的跨度翻倍,后面我们核函数就是这样实现的。

1 #include <stdio.h> 2 #include <stdlib.h> 3 #include <time.h> 4 #include "cuda_runtime.h" 5 #include "device_launch_parameters.h" 6 void initial(float *list,int size) 7 { 8 float *num = list; 9 //srand((unsigned)time(NULL)); 10 for (int i=0; i<size; i++) 11 { 12 num[i] = rand()%10; 13 } 14 15 } 16 void sumMatrix(float* MatA, float* MatB,int size) 17 { 18 float* a=MatA; 19 float* b=MatB; 20 int i = 0; 21 for(int j=0; j<size;j++) 22 { 23 b[i] += a[j]; 24 } 25 26 } 27 28 29 //核函数 30 __global__ void GPUreduceNeighbored(float* g_idata,float* g_odata, unsigned int n) 31 { 32 //set thread ID 33 unsigned int tid = threadIdx.x; 34 if(tid >= n) return; 35 float *idata = g_idata + blockIdx.x * blockDim.x; 36 for(int stride = 1; stride < blockDim.x; stride*=2) 37 { 38 if((tid%(2*stride))==0) 39 { 40 idata[tid] += idata[tid+stride]; 41 } 42 __syncthreads(); 43 } 44 if(tid == 0) 45 { 46 g_odata[blockIdx.x] = idata[0]; 47 } 48 49 } 50 void printList(float* A,int size) 51 { 52 for (int i=0;i<size;i++) 53 { 54 printf(" %f ",A[i]); 55 } 56 } 57 int main(int argc, char** argv) 58 { 59 //CPU计时方法 60 float time_cpu, time_gpu; 61 clock_t start_cpu, stop_cpu, start_gpu, stop_gpu; 62 //GPU计时方法 63 float time_CPU, time_GPU; 64 cudaEvent_t start_GPU, stop_GPU, start_CPU, stop_CPU; 65 66 //输入一维数组 67 int size = 1<<24; 68 69 int nBytes = size *sizeof(float); 70 //开辟主机内存 71 float *A_host = (float*)malloc(nBytes); 72 float *B_host = (float*)malloc(nBytes); 73 float *C_from_gpu = (float*)malloc(nBytes); 74 75 initial(A_host,size); 76 printf("A_host is:"); 77 // printList(A_host,size); 78 79 80 //记录当前时间 81 start_cpu = clock(); 82 83 sumMatrix(A_host,B_host,size); 84 85 stop_cpu = clock(); 86 87 printf(" The time from CPU: %f(ms) ", (float) (stop_cpu-start_cpu) / CLOCKS_PER_SEC); 88 89 //输出结果 90 printf(" CPU result is : "); 91 // printList(B_host,1); 92 93 //开辟设备内存 94 float* A_dev = NULL; 95 float* B_dev = NULL; 96 97 cudaMalloc((void**)&A_dev,nBytes); 98 cudaMalloc((void**)&B_dev,nBytes); 99 // cudaMalloc((void**)&C_dev,nBytes); 100 101 //输入数据,从hostTO device 102 cudaMemcpy(A_dev,A_host,nBytes,cudaMemcpyHostToDevice); 103 //cudaMemcpy(B_dev,B_host,nBytes,cudaMemcpyHostToDevice); 104 dim3 block(1024,1); 105 dim3 grid((size-1)/block.x+1,1); 106 // 创建Event 107 cudaEventCreate(&start_GPU); 108 cudaEventCreate(&stop_GPU); 109 //记录当前时间 110 cudaEventRecord(start_GPU,0); 111 start_gpu = clock(); 112 113 GPUreduceNeighbored<<<grid,block>>>(A_dev,B_dev,size); 114 115 stop_gpu = clock(); 116 cudaEventRecord(stop_GPU,0); 117 cudaEventSynchronize(start_GPU); 118 cudaEventSynchronize(stop_GPU); 119 //计算时间差 120 cudaEventElapsedTime(&time_GPU, start_GPU,stop_GPU); 121 printf(" The time from GPU: %f(ms) ", time_GPU/1000); 122 //消除Event 123 cudaEventDestroy(start_GPU); 124 cudaEventDestroy(stop_GPU); 125 cudaMemcpy(C_from_gpu,B_dev,nBytes,cudaMemcpyDeviceToHost); 126 //输出结果 127 printf(" GPU result is : "); 128 // printList(C_from_gpu,1); 129 130 cudaFree(A_dev); 131 cudaFree(B_dev); 132 133 free(A_host); 134 free(B_host); 135 136 137 time_cpu = (float) (stop_cpu-start_cpu) / CLOCKS_PER_SEC; 138 time_gpu = (float) (stop_gpu-start_gpu) / CLOCKS_PER_SEC; 139 printf(" The time for CPU by host: %f(ms) ", time_cpu); 140 printf("The time for GPU by host: %f(ms) ", time_gpu); 141 142 143 cudaDeviceReset(); 144 return 0; 145 }

3.

线程束分化

首先我们来回忆一下上个程序的实现思路,我们是利用stride变量来实现每轮迭代时的被加数选择,这造成线程束的分化,也就是每个线程束中只有部分线程是活跃的,但由于硬件设计,调度会以一整个线程束为单位进行,所以影响了程序的效率。

这种情况下,我们可以通过重新组织线程索引来解决线程束分化的问题。我们把核函数的代码进行修改:

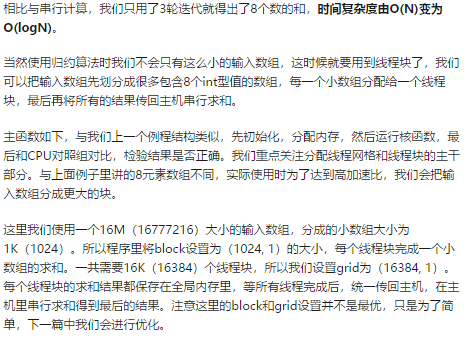

相比于之前的初版代码,我们现在使用线程ID来生成一个数组访问索引,这种方式有效地避免了线程束的分化。可能会有点迷惑,我们更具体说说,在每个线程块有1024个线程(32个线程束)时,在第一轮迭代,前16个线程束执行计算,后16个线程束什么都不做;而原来的代码中,32个线程束都执行计算,但只有偶数标号的线程活跃。第二轮迭代时,前8个线程束执行计算,后24个线程束执行计算;而原来的代码中,32个线程束都执行计算,但只有标号是4的倍数的线程活跃。这样重新组织线程ID后,线程束分化就被避免掉了。我们来实际运行一下,看看效果。

可见尽量避免线程束的分化十分重要。这给了我们一点的启发,看似不起眼的细节,在CUDA编程中却会产生不小的影响,这也是我们需要了解底层硬件运行机制的一个重要原因。

1 #include <stdio.h> 2 #include <stdlib.h> 3 #include <time.h> 4 #include "cuda_runtime.h" 5 #include "device_launch_parameters.h" 6 void initial(float *list,int size) 7 { 8 float *num = list; 9 //srand((unsigned)time(NULL)); 10 for (int i=0; i<size; i++) 11 { 12 num[i] = rand()%10; 13 } 14 15 } 16 void sumMatrix(float* MatA, float* MatB,int size) 17 { 18 float* a=MatA; 19 float* b=MatB; 20 int i = 0; 21 for(int j=0; j<size;j++) 22 { 23 b[i] += a[j]; 24 } 25 26 } 27 28 29 //核函数 30 __global__ void GPUreduceNeighbored(float* g_idata,float* g_odata, unsigned int n) 31 { 32 unsigned int tid = threadIdx.x; 33 unsigned idx = blockIdx.x*blockDim.x + threadIdx.x; 34 // convert global data pointer to the local point of this block 35 float *idata = g_idata + blockIdx.x*blockDim.x; 36 if (idx > n) 37 return; 38 //in-place reduction in global memory 39 for (int stride = 1; stride < blockDim.x; stride *= 2) 40 { 41 //convert tid into local array index 42 int index = 2 * stride *tid; 43 if (index < blockDim.x) 44 { 45 idata[index] += idata[index + stride]; 46 } 47 __syncthreads(); 48 } 49 //write result for this block to global men 50 if (tid == 0) 51 g_odata[blockIdx.x] = idata[0]; 52 53 } 54 void printList(float* A,int size) 55 { 56 for (int i=0;i<size;i++) 57 { 58 printf(" %f ",A[i]); 59 } 60 } 61 int main(int argc, char** argv) 62 { 63 //CPU计时方法 64 float time_cpu, time_gpu; 65 clock_t start_cpu, stop_cpu, start_gpu, stop_gpu; 66 //GPU计时方法 67 float time_CPU, time_GPU; 68 cudaEvent_t start_GPU, stop_GPU, start_CPU, stop_CPU; 69 70 //输入一维数组 71 int size = 1<<24; 72 73 int nBytes = size *sizeof(float); 74 //开辟主机内存 75 float *A_host = (float*)malloc(nBytes); 76 float *B_host = (float*)malloc(nBytes); 77 float *C_from_gpu = (float*)malloc(nBytes); 78 79 initial(A_host,size); 80 printf("A_host is:"); 81 // printList(A_host,size); 82 83 84 //记录当前时间 85 start_cpu = clock(); 86 87 sumMatrix(A_host,B_host,size); 88 89 stop_cpu = clock(); 90 91 printf(" The time from CPU: %f(ms) ", (float) (stop_cpu-start_cpu) / CLOCKS_PER_SEC); 92 //输出结果 93 printf(" CPU result is : "); 94 // printList(B_host,1); 95 96 //开辟设备内存 97 float* A_dev = NULL; 98 float* B_dev = NULL; 99 100 cudaMalloc((void**)&A_dev,nBytes); 101 cudaMalloc((void**)&B_dev,nBytes); 102 // cudaMalloc((void**)&C_dev,nBytes); 103 104 //输入数据,从hostTO device 105 cudaMemcpy(A_dev,A_host,nBytes,cudaMemcpyHostToDevice); 106 //cudaMemcpy(B_dev,B_host,nBytes,cudaMemcpyHostToDevice); 107 dim3 block(1024,1); 108 dim3 grid((size-1)/block.x+1,1); 109 // 创建Event 110 cudaEventCreate(&start_GPU); 111 cudaEventCreate(&stop_GPU); 112 //记录当前时间 113 cudaEventRecord(start_GPU,0); 114 start_gpu = clock(); 115 116 GPUreduceNeighbored<<<grid,block>>>(A_dev,B_dev,size); 117 118 stop_gpu = clock(); 119 cudaEventRecord(stop_GPU,0); 120 cudaEventSynchronize(start_GPU); 121 cudaEventSynchronize(stop_GPU); 122 //计算时间差 123 cudaEventElapsedTime(&time_GPU, start_GPU,stop_GPU); 124 printf(" The time from GPU: %f(ms) ", time_GPU/1000); 125 //消除Event 126 cudaEventDestroy(start_GPU); 127 cudaEventDestroy(stop_GPU); 128 cudaMemcpy(C_from_gpu,B_dev,nBytes,cudaMemcpyDeviceToHost); 129 //输出结果 130 printf(" GPU result is : "); 131 // printList(C_from_gpu,1); 132 133 cudaFree(A_dev); 134 cudaFree(B_dev); 135 136 free(A_host); 137 free(B_host); 138 139 140 time_cpu = (float) (stop_cpu-start_cpu) / CLOCKS_PER_SEC; 141 time_gpu = (float) (stop_gpu-start_gpu) / CLOCKS_PER_SEC; 142 printf(" The time for CPU by host: %f(ms) ", time_cpu); 143 printf("The time for GPU by host: %f(ms) ", time_gpu); 144 145 146 cudaDeviceReset(); 147 return 0; 148 }

4.

内存组织

在CPU编程中,我们学过空间局部性与时间局部性,这在CUDA编程中对我们也是很有启发的。之前我们采用相邻配对进行求和,这种方法最为直观,但会造成在第二轮之后内存访问的不连续(因为使用了stride变量作为跨度)。为了缓解这种现象,我们重新组织一下配对方法,让对内存的访问更加集中,如下图,这种新的配对方法我们称为交错配对。

1 #include <stdio.h> 2 #include <stdlib.h> 3 #include <time.h> 4 #include "cuda_runtime.h" 5 #include "device_launch_parameters.h" 6 void initial(float *list,int size) 7 { 8 float *num = list; 9 //srand((unsigned)time(NULL)); 10 for (int i=0; i<size; i++) 11 { 12 num[i] = rand()%10; 13 } 14 15 } 16 void sumMatrix(float* MatA, float* MatB,int size) 17 { 18 float* a=MatA; 19 float* b=MatB; 20 int i = 0; 21 for(int j=0; j<size;j++) 22 { 23 b[i] += a[j]; 24 } 25 26 } 27 28 29 //核函数 30 __global__ void GPUreduceNeighbored(float* g_idata,float* g_odata, unsigned int n) 31 { 32 unsigned int tid = threadIdx.x; 33 unsigned idx = blockIdx.x*blockDim.x + threadIdx.x; 34 // convert global data pointer to the local point of this block 35 float *idata = g_idata + blockIdx.x*blockDim.x; 36 if (idx >= n) 37 return; 38 //in-place reduction in global memory 39 for (int stride = blockDim.x/2; stride >0; stride >>=1) 40 { 41 42 if (tid <stride) 43 { 44 idata[tid] += idata[tid + stride]; 45 } 46 __syncthreads(); 47 } 48 //write result for this block to global men 49 if (tid == 0) 50 g_odata[blockIdx.x] = idata[0]; 51 52 } 53 void printList(float* A,int size) 54 { 55 for (int i=0;i<size;i++) 56 { 57 printf(" %f ",A[i]); 58 } 59 } 60 int main(int argc, char** argv) 61 { 62 //CPU计时方法 63 float time_cpu, time_gpu; 64 clock_t start_cpu, stop_cpu, start_gpu, stop_gpu; 65 //GPU计时方法 66 float time_CPU, time_GPU; 67 cudaEvent_t start_GPU, stop_GPU, start_CPU, stop_CPU; 68 69 //输入一维数组 70 int size = 1<<24; 71 72 int nBytes = size *sizeof(float); 73 //开辟主机内存 74 float *A_host = (float*)malloc(nBytes); 75 float *B_host = (float*)malloc(nBytes); 76 float *C_from_gpu = (float*)malloc(nBytes); 77 78 initial(A_host,size); 79 printf("A_host is:"); 80 // printList(A_host,size); 81 82 83 //记录当前时间 84 start_cpu = clock(); 85 86 sumMatrix(A_host,B_host,size); 87 88 stop_cpu = clock(); 89 90 printf(" The time from CPU: %f(ms) ", (float) (stop_cpu-start_cpu) / CLOCKS_PER_SEC); 91 92 //输出结果 93 printf(" CPU result is : "); 94 // printList(B_host,1); 95 96 //开辟设备内存 97 float* A_dev = NULL; 98 float* B_dev = NULL; 99 100 cudaMalloc((void**)&A_dev,nBytes); 101 cudaMalloc((void**)&B_dev,nBytes); 102 // cudaMalloc((void**)&C_dev,nBytes); 103 104 //输入数据,从hostTO device 105 cudaMemcpy(A_dev,A_host,nBytes,cudaMemcpyHostToDevice); 106 //cudaMemcpy(B_dev,B_host,nBytes,cudaMemcpyHostToDevice); 107 dim3 block(1024,1); 108 dim3 grid((size-1)/block.x+1,1); 109 // 创建Event 110 cudaEventCreate(&start_GPU); 111 cudaEventCreate(&stop_GPU); 112 //记录当前时间 113 cudaEventRecord(start_GPU,0); 114 start_gpu = clock(); 115 116 GPUreduceNeighbored<<<grid,block>>>(A_dev,B_dev,size); 117 118 stop_gpu = clock(); 119 cudaEventRecord(stop_GPU,0); 120 cudaEventSynchronize(start_GPU); 121 cudaEventSynchronize(stop_GPU); 122 //计算时间差 123 cudaEventElapsedTime(&time_GPU, start_GPU,stop_GPU); 124 printf(" The time from GPU: %f(ms) ", time_GPU/1000); 125 //消除Event 126 cudaEventDestroy(start_GPU); 127 cudaEventDestroy(stop_GPU); 128 cudaMemcpy(C_from_gpu,B_dev,nBytes,cudaMemcpyDeviceToHost); 129 //输出结果 130 printf(" GPU result is : "); 131 // printList(C_from_gpu,1); 132 133 cudaFree(A_dev); 134 cudaFree(B_dev); 135 136 free(A_host); 137 free(B_host); 138 139 140 time_cpu = (float) (stop_cpu-start_cpu) / CLOCKS_PER_SEC; 141 time_gpu = (float) (stop_gpu-start_gpu) / CLOCKS_PER_SEC; 142 printf(" The time for CPU by host: %f(ms) ", time_cpu); 143 printf("The time for GPU by host: %f(ms) ", time_gpu); 144 145 146 cudaDeviceReset(); 147 return 0; 148 }

这给我们的启发是,对全局内存的访问要尽量进行合并访问与存储,这样才能达到最大的带宽。

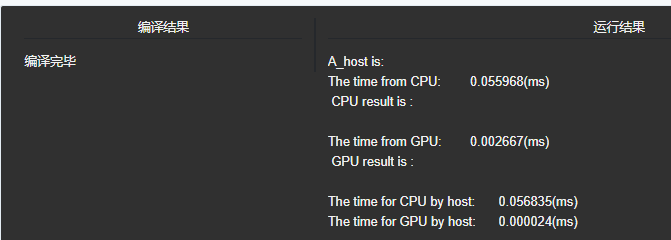

程序从2.48ms加速到1.36ms,累计获得了1.8倍的加速比。而这两点,恰恰对应了系列开篇中我们提到的CUDA编程的特点,线程组织和内存组织,这也是我们在CUDA编程中需要随时注意的。

5.

展开循环以隐藏延时

(1)现在使用高级语言编程时,我们已经不会刻意去进行循环展开了,因为这件事会由编译器帮我们完成。但在CUDA编程中,循环展开具有很重要的意义,它能给线程束调度器提供更多可用的线程束,以帮助我们有效地隐藏延时。

于是我们可以使用循环展开的方法,对并行归约的程序再来一波优化。

我们之前只是用一个线程块来处理一个小数组,我们称其为一个数据块。如果我们使用一个线程块手动展开两个数据块的处理,那会怎样呢?先给个结论,这样通过减少指令消耗和增加更多的独立调度指令,更多的并发操作被添加到流水线上,以产生了更高的指令和内存带宽。反映在宏观上就是程序执行的总体时间变少了。

(2)从概念上来讲,可以把它作为归约循环的一个迭代,此循环可在数据块间进行归约。

如果每个线程处理两个数据块,那么我们需要的线程块总量会变为原来的一半,因此主函数也要对应修改。

看上去,这样处理后线程块减少了,与我们之前要使用尽量多线程块的理论不符。但实际我们通过这种方式,让一个线程中有更多的独立内存加载/存储操作,这样可以更好地隐藏内存延时,更好地使用设备内存读取吞吐量的指标,以产生更好的性能。所以我们编程时,各个策略要针对实际情况结合使用。

#include <stdio.h>

#include <stdlib.h>

#include <time.h>

#include "cuda_runtime.h"

#include "device_launch_parameters.h"

void initial(int *list,int size)

{

int *num = list;

for (int i=0; i<size; i++)

{

num[i] = rand()%10;

}

}

void sumMatrix(int* MatA, int* MatB,int size)

{

int* a=MatA;

int* b=MatB;

int i = 0;

b[0] = 0;

for(int j=0; j<size;j++)

{

b[i] += a[j];

}

}

//核函数

__global__ void GPUreduceNeighbored(int* g_idata,int* g_odata, unsigned int n)

{

//set thread ID

unsigned int tid = threadIdx.x;

unsigned int idx = blockDim.x*blockIdx.x*2+threadIdx.x;

if (tid >= n) return;

int *idata = g_idata + blockIdx.x*blockDim.x*2;

if(idx+blockDim.x<n)

{

g_idata[idx]+=g_idata[idx+blockDim.x];

}

__syncthreads();

for (int stride = blockDim.x/2; stride>0 ; stride >>=1)

{

if (tid <stride)

{

idata[tid] += idata[tid + stride];

}

__syncthreads();

}

if (tid == 0)

g_odata[blockIdx.x] = idata[0];

}

void printList(int* A,int size)

{

for (int i=0;i<size;i++)

{

printf(" %d ",A[i]);

}

}

int main(int argc, char** argv)

{

//CPU计时方法

float time_cpu, time_gpu;

clock_t start_cpu, stop_cpu, start_gpu, stop_gpu;

//GPU计时方法

float time_CPU, time_GPU;

cudaEvent_t start_GPU, stop_GPU, start_CPU, stop_CPU;

//输入一维数组

int size = 1<<24;

size_t nBytes = size * sizeof(int);

//开辟主机内存

int *A_host = (int*)malloc(nBytes);

int *B_host = (int*)malloc(nBytes);

int *C_from_gpu = (int*)malloc(nBytes);

initial(A_host,size);

printf("A_host is:");

start_cpu = clock();

sumMatrix(A_host,B_host,size);

stop_cpu = clock();

printf("

The time from CPU: %f(ms)

", (float) (stop_cpu-start_cpu) / CLOCKS_PER_SEC);

printf(" CPU result is :

");

printList(B_host,1);

int blocksize = 1024;

dim3 block(blocksize, 1);

dim3 grid((size - 1) / block.x + 1, 1);

printf("

grid %d block %d

", grid.x, block.x);

int* idata_dev = NULL;

int* odata_dev = NULL;

cudaMalloc((void**)&idata_dev,nBytes);

cudaMalloc((void**)&odata_dev,grid.x * sizeof(int));

int gpu_sum=0;

cudaMemcpy(idata_dev,A_host,nBytes,cudaMemcpyHostToDevice);

cudaDeviceSynchronize();

// 创建Event

cudaEventCreate(&start_GPU);

cudaEventCreate(&stop_GPU);

//记录当前时间

cudaEventRecord(start_GPU,0);

start_gpu = clock();

GPUreduceNeighbored<<<grid.x/2,block>>>(idata_dev,odata_dev,size);

stop_gpu = clock();

cudaEventRecord(stop_GPU,0);

cudaEventSynchronize(start_GPU);

cudaEventSynchronize(stop_GPU);

cudaEventElapsedTime(&time_GPU, start_GPU,stop_GPU);

printf("

The time from GPU: %f(ms)

", time_GPU/1000);

cudaDeviceSynchronize();

cudaEventDestroy(start_GPU);

cudaEventDestroy(stop_GPU);

cudaMemcpy(C_from_gpu,odata_dev,grid.x * sizeof(int),cudaMemcpyDeviceToHost);

for(int i=0;i<grid.x/2;i++)

{

gpu_sum += C_from_gpu[i];

}

printf(" GPU result is :%d

",gpu_sum);

// printList(C_from_gpu,1);

cudaFree(idata_dev);

cudaFree(odata_dev);

free(A_host);

free(B_host);

time_cpu = (float) (stop_cpu-start_cpu) / CLOCKS_PER_SEC;

time_gpu = (float) (stop_gpu-start_gpu) / CLOCKS_PER_SEC;

printf("

The time for CPU by host: %f(ms)

", time_cpu);

printf("The time for GPU by host: %f(ms)

", time_gpu);

cudaDeviceReset();

return 0;

}

既然一个线程块处理2个数据块能获得这么高的加速比,那么处理4个,8个呢?

随着处理数据块数量的增多,处理时间不断降低。不过随着设备内存吞吐量逐渐到达极限,这个时间就不会继续降低了。

总结一下,为了隐藏延时,我们需要合理地增加一个线程块中需要处理的数据量,以便线程束调度器进行调度。

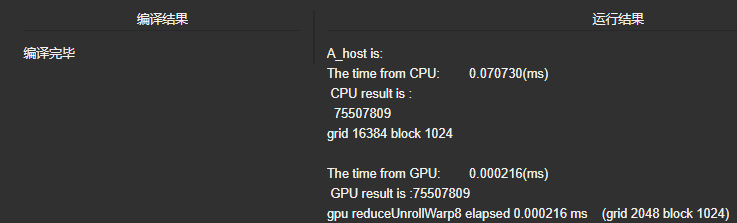

6.

展开线程

我们可以再进一步想想,当执行到最后几次迭代时,当只需要32个或更少线程时,每次迭代后还需要进行线程束同步。为了加速,我们可以把这最后6次迭代进行展开,使用下面的语句:

if(tid<32)

{

volatile int *vmem = idata;

vmem[tid]+=vmem[tid+32];

vmem[tid]+=vmem[tid+16];

vmem[tid]+=vmem[tid+8];

vmem[tid]+=vmem[tid+4];

vmem[tid]+=vmem[tid+2];

vmem[tid]+=vmem[tid+1];

}这样就避免了执行控制循环逻辑和线程同步逻辑的时间。

1 #include <stdio.h> 2 #include <stdlib.h> 3 #include <time.h> 4 #include "cuda_runtime.h" 5 #include "device_launch_parameters.h" 6 7 8 void initial(int *list,int size) 9 { 10 int *num = list; 11 for (int i=0; i<size; i++) 12 { 13 num[i] = rand()%10; 14 } 15 16 } 17 18 void sumMatrix(int* MatA, int* MatB,int size) 19 { 20 int* a=MatA; 21 int* b=MatB; 22 int i = 0; 23 b[0] = 0; 24 for(int j=0; j<size;j++) 25 { 26 b[i] += a[j]; 27 } 28 29 } 30 31 32 //核函数 33 __global__ void GPUreduceNeighbored(int* g_idata,int* g_odata, unsigned int n) 34 { 35 //set thread ID 36 unsigned int tid = threadIdx.x; 37 unsigned int idx = blockDim.x*blockIdx.x*8+threadIdx.x; 38 //boundary check 39 if (tid >= n) return; 40 //convert global data pointer to the 41 int *idata = g_idata + blockIdx.x*blockDim.x*8; 42 //unrolling 8; 43 if(idx+7 * blockDim.x<n) 44 { 45 int a1=g_idata[idx]; 46 int a2=g_idata[idx+blockDim.x]; 47 int a3=g_idata[idx+2*blockDim.x]; 48 int a4=g_idata[idx+3*blockDim.x]; 49 int a5=g_idata[idx+4*blockDim.x]; 50 int a6=g_idata[idx+5*blockDim.x]; 51 int a7=g_idata[idx+6*blockDim.x]; 52 int a8=g_idata[idx+7*blockDim.x]; 53 g_idata[idx]=a1+a2+a3+a4+a5+a6+a7+a8; 54 55 } 56 __syncthreads(); 57 //in-place reduction in global memory 58 for (int stride = blockDim.x/2; stride>32; stride >>=1) 59 { 60 if (tid <stride) 61 { 62 idata[tid] += idata[tid + stride]; 63 } 64 //synchronize within block 65 __syncthreads(); 66 } 67 //write result for this block to global mem 68 if(tid<32) 69 { 70 volatile int *vmem = idata; 71 vmem[tid]+=vmem[tid+32]; 72 vmem[tid]+=vmem[tid+16]; 73 vmem[tid]+=vmem[tid+8]; 74 vmem[tid]+=vmem[tid+4]; 75 vmem[tid]+=vmem[tid+2]; 76 vmem[tid]+=vmem[tid+1]; 77 78 } 79 80 if (tid == 0) 81 g_odata[blockIdx.x] = idata[0]; 82 83 } 84 85 void printList(int* A,int size) 86 { 87 for (int i=0;i<size;i++) 88 { 89 printf(" %d ",A[i]); 90 } 91 } 92 93 int main(int argc, char** argv) 94 { 95 //CPU计时方法 96 float time_cpu, time_gpu; 97 clock_t start_cpu, stop_cpu, start_gpu, stop_gpu; 98 //GPU计时方法 99 float time_CPU, time_GPU; 100 cudaEvent_t start_GPU, stop_GPU, start_CPU, stop_CPU; 101 102 //输入一维数组 103 int size = 1<<24; 104 size_t nBytes = size * sizeof(int); 105 //开辟主机内存 106 int *A_host = (int*)malloc(nBytes); 107 int *B_host = (int*)malloc(nBytes); 108 int *C_from_gpu = (int*)malloc(nBytes); 109 110 initial(A_host,size); 111 printf("A_host is:"); 112 113 start_cpu = clock(); 114 115 sumMatrix(A_host,B_host,size); 116 117 stop_cpu = clock(); 118 119 printf(" The time from CPU: %f(ms) ", (float) (stop_cpu-start_cpu) / CLOCKS_PER_SEC); 120 121 122 printf(" CPU result is : "); 123 printList(B_host,1); 124 125 int blocksize = 1024; 126 dim3 block(blocksize, 1); 127 dim3 grid((size - 1) / block.x + 1, 1); 128 printf(" grid %d block %d ", grid.x, block.x); 129 130 int* idata_dev = NULL; 131 int* odata_dev = NULL; 132 cudaMalloc((void**)&idata_dev,nBytes); 133 cudaMalloc((void**)&odata_dev,grid.x * sizeof(int)); 134 int gpu_sum=0; 135 cudaMemcpy(idata_dev,A_host,nBytes,cudaMemcpyHostToDevice); 136 cudaDeviceSynchronize(); 137 // 创建Event 138 cudaEventCreate(&start_GPU); 139 cudaEventCreate(&stop_GPU); 140 //记录当前时间 141 cudaEventRecord(start_GPU,0); 142 143 start_gpu = clock(); 144 145 GPUreduceNeighbored<<<grid.x/8,block>>>(idata_dev,odata_dev,size); 146 147 stop_gpu = clock(); 148 149 cudaEventRecord(stop_GPU,0); 150 cudaEventSynchronize(start_GPU); 151 cudaEventSynchronize(stop_GPU); 152 cudaEventElapsedTime(&time_GPU, start_GPU,stop_GPU); 153 printf(" The time from GPU: %f(ms) ", time_GPU/1000); 154 cudaDeviceSynchronize(); 155 cudaEventDestroy(start_GPU); 156 cudaEventDestroy(stop_GPU); 157 158 cudaMemcpy(C_from_gpu,odata_dev,grid.x * sizeof(int),cudaMemcpyDeviceToHost); 159 for(int i=0;i<grid.x/8;i++) 160 { 161 gpu_sum += C_from_gpu[i]; 162 } 163 printf(" GPU result is :%d ",gpu_sum); 164 printf("gpu reduceUnrollWarp8 elapsed %lf ms (grid %d block %d) ", 165 time_GPU/1000, grid.x/8, block.x); 166 // printList(C_from_gpu,1); 167 168 cudaFree(idata_dev); 169 cudaFree(odata_dev); 170 free(A_host); 171 free(B_host); 172 time_cpu = (float) (stop_cpu-start_cpu) / CLOCKS_PER_SEC; 173 time_gpu = (float) (stop_gpu-start_gpu) / CLOCKS_PER_SEC; 174 printf(" The time for CPU by host: %f(ms) ", time_cpu); 175 printf("The time for GPU by host: %f(ms) ", time_gpu); 176 177 cudaDeviceReset(); 178 return 0; 179 }

7.

完全展开

理论上如果编译时已知一个循环中的迭代次数,就可以把循环完全展开。因为我们的核函数中的循环迭代次数是基于一个线程块维度的,而且一个线程块支持最大1024个线程,所以我们可以将这个循环进行完全展开。核函数代码如下:

1 #include <stdio.h> 2 #include <stdlib.h> 3 #include <time.h> 4 #include "cuda_runtime.h" 5 #include "device_launch_parameters.h" 6 7 8 void initial(int *list,int size) 9 { 10 int *num = list; 11 for (int i=0; i<size; i++) 12 { 13 num[i] = rand()%10; 14 } 15 16 } 17 18 void sumMatrix(int* MatA, int* MatB,int size) 19 { 20 int* a=MatA; 21 int* b=MatB; 22 int i = 0; 23 b[0] = 0; 24 for(int j=0; j<size;j++) 25 { 26 b[i] += a[j]; 27 } 28 29 } 30 31 32 //核函数 33 __global__ void GPUreduceNeighbored(int* g_idata,int* g_odata, unsigned int n) 34 { 35 //set thread ID 36 unsigned int tid = threadIdx.x; 37 unsigned int idx = blockDim.x*blockIdx.x*8+threadIdx.x; 38 //boundary check 39 if (tid >= n) return; 40 //convert global data pointer to the 41 int *idata = g_idata + blockIdx.x*blockDim.x*8; 42 if(idx+7 * blockDim.x<n) 43 { 44 int a1=g_idata[idx]; 45 int a2=g_idata[idx+blockDim.x]; 46 int a3=g_idata[idx+2*blockDim.x]; 47 int a4=g_idata[idx+3*blockDim.x]; 48 int a5=g_idata[idx+4*blockDim.x]; 49 int a6=g_idata[idx+5*blockDim.x]; 50 int a7=g_idata[idx+6*blockDim.x]; 51 int a8=g_idata[idx+7*blockDim.x]; 52 g_idata[idx]=a1+a2+a3+a4+a5+a6+a7+a8; 53 54 } 55 __syncthreads(); 56 //in-place reduction in global memory 57 if(blockDim.x>=1024 && tid <512) 58 idata[tid]+=idata[tid+512]; 59 __syncthreads(); 60 if(blockDim.x>=512 && tid <256) 61 idata[tid]+=idata[tid+256]; 62 __syncthreads(); 63 if(blockDim.x>=256 && tid <128) 64 idata[tid]+=idata[tid+128]; 65 __syncthreads(); 66 if(blockDim.x>=128 && tid <64) 67 idata[tid]+=idata[tid+64]; 68 __syncthreads(); 69 //write result for this block to global mem 70 if(tid<32) 71 { 72 volatile int *vmem = idata; 73 vmem[tid]+=vmem[tid+32]; 74 vmem[tid]+=vmem[tid+16]; 75 vmem[tid]+=vmem[tid+8]; 76 vmem[tid]+=vmem[tid+4]; 77 vmem[tid]+=vmem[tid+2]; 78 vmem[tid]+=vmem[tid+1]; 79 80 } 81 82 if (tid == 0) 83 g_odata[blockIdx.x] = idata[0]; 84 85 } 86 87 void printList(int* A,int size) 88 { 89 for (int i=0;i<size;i++) 90 { 91 printf(" %d ",A[i]); 92 } 93 } 94 95 int main(int argc, char** argv) 96 { 97 //CPU计时方法 98 float time_cpu, time_gpu; 99 clock_t start_cpu, stop_cpu, start_gpu, stop_gpu; 100 //GPU计时方法 101 float time_CPU, time_GPU; 102 cudaEvent_t start_GPU, stop_GPU, start_CPU, stop_CPU; 103 104 //输入一维数组 105 int size = 1<<24; 106 size_t nBytes = size * sizeof(int); 107 //开辟主机内存 108 int *A_host = (int*)malloc(nBytes); 109 int *B_host = (int*)malloc(nBytes); 110 int *C_from_gpu = (int*)malloc(nBytes); 111 112 initial(A_host,size); 113 printf("A_host is:"); 114 115 start_cpu = clock(); 116 117 sumMatrix(A_host,B_host,size); 118 119 stop_cpu = clock(); 120 121 printf(" The time from CPU: %f(ms) ", (float) (stop_cpu-start_cpu) / CLOCKS_PER_SEC); 122 123 124 printf(" CPU result is : "); 125 printList(B_host,1); 126 127 int blocksize = 1024; 128 dim3 block(blocksize, 1); 129 dim3 grid((size - 1) / block.x + 1, 1); 130 printf(" grid %d block %d ", grid.x, block.x); 131 132 int* idata_dev = NULL; 133 int* odata_dev = NULL; 134 cudaMalloc((void**)&idata_dev,nBytes); 135 cudaMalloc((void**)&odata_dev,grid.x * sizeof(int)); 136 int gpu_sum=0; 137 cudaMemcpy(idata_dev,A_host,nBytes,cudaMemcpyHostToDevice); 138 cudaDeviceSynchronize(); 139 // 创建Event 140 cudaEventCreate(&start_GPU); 141 cudaEventCreate(&stop_GPU); 142 //记录当前时间 143 cudaEventRecord(start_GPU,0); 144 145 start_gpu = clock(); 146 147 GPUreduceNeighbored<<<grid.x/8,block>>>(idata_dev,odata_dev,size); 148 149 stop_gpu = clock(); 150 151 cudaEventRecord(stop_GPU,0); 152 cudaEventSynchronize(start_GPU); 153 cudaEventSynchronize(stop_GPU); 154 cudaEventElapsedTime(&time_GPU, start_GPU,stop_GPU); 155 printf(" The time from GPU: %f(ms) ", time_GPU/1000); 156 cudaDeviceSynchronize(); 157 cudaEventDestroy(start_GPU); 158 cudaEventDestroy(stop_GPU); 159 160 cudaMemcpy(C_from_gpu,odata_dev,grid.x * sizeof(int),cudaMemcpyDeviceToHost); 161 for(int i=0;i<grid.x/8;i++) 162 { 163 gpu_sum += C_from_gpu[i]; 164 } 165 printf(" GPU result is :%d ",gpu_sum); 166 printf("gpu reduceUnrollWarp8 elapsed %lf ms (grid %d block %d) ", 167 time_GPU/1000, grid.x/8, block.x); 168 // printList(C_from_gpu,1); 169 170 cudaFree(idata_dev); 171 cudaFree(odata_dev); 172 free(A_host); 173 free(B_host); 174 time_cpu = (float) (stop_cpu-start_cpu) / CLOCKS_PER_SEC; 175 time_gpu = (float) (stop_gpu-start_gpu) / CLOCKS_PER_SEC; 176 printf(" The time for CPU by host: %f(ms) ", time_cpu); 177 printf("The time for GPU by host: %f(ms) ", time_gpu); 178 179 cudaDeviceReset(); 180 return 0; 181 }

注:其中并行规约看的是知乎文章:https://zhuanlan.zhihu.com/p/98463812