Introduction

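This article discusses the kNN algorithm, a classification method in supervised learning. Supervised versus unsupervised learning refers to whether the training data carry class labels: if they do, the learning is supervised; if not, it is unsupervised. Supervised learning fits a model to the input (training) data so that it can make predictions for later inputs. The input and output variables may be continuous or discrete. When both the input and output variables are continuous, the task is called regression; when the output variable takes a finite number of discrete values, it is called classification; when both the input and the output are sequences of variables, it is called tagging (sequence labeling).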

Supervised classification learning

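The kNN algorithm has roughly three basic elements:

1. The choice of K (how many training instances to take as neighbors of a new input instance)

2. The distance metric (Euclidean distance, Manhattan distance, and so on)

3. The classification decision rule (commonly, the class that occurs most often among the k nearest training instances becomes the class of the new input instance)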

How to use them:

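The value of K strongly affects the result. A small K means that only training instances close to the input instance influence the prediction, which makes overfitting likely. A large K reduces the estimation error of learning but increases the approximation error, because training instances far from the input instance also influence the prediction and can make it wrong. In practice K is usually set to a fairly small value, and cross-validation is typically used to select the optimal K. As the number of training instances tends to infinity with K = 1, the error rate does not exceed twice the Bayes error rate; if K also tends to infinity (while growing more slowly than the number of training instances), the error rate tends to the Bayes error rate.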

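Distance is usually measured with the Lp distance; p = 2 gives the Euclidean distance. Before measuring, the values of each attribute should be normalized, which keeps attributes with a large initial range from outweighing attributes with a smaller initial range.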
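The effect of normalization on distances can be seen in a small sketch. The data, the query point, and the helper name `min_max_scale` below are made up for illustration; they are not taken from the article's data set.

```python
import numpy as np

# Hypothetical toy data: column 0 spans tens of thousands,
# column 1 spans less than 1.
train = np.array([[40000.0, 0.2],
                  [41000.0, 0.9],
                  [10000.0, 0.25]])
query = np.array([40500.0, 0.25])

def min_max_scale(data, lo, hi):
    """Rescale each column to [0, 1] using the given column minima and maxima."""
    return (data - lo) / (hi - lo)

# Raw Euclidean distances: the first attribute dominates, so rows 0 and 1
# look almost equally close even though their second attribute differs a lot.
raw_dist = np.sqrt(((train - query) ** 2).sum(axis=1))

# Scale with statistics taken from the training data, then measure again.
lo, hi = train.min(axis=0), train.max(axis=0)
scaled_dist = np.sqrt(((min_max_scale(train, lo, hi)
                        - min_max_scale(query, lo, hi)) ** 2).sum(axis=1))

print(raw_dist)     # rows 0 and 1 are nearly tied
print(scaled_dist)  # row 0 is now clearly the nearest
```

After scaling, both attributes contribute on the same footing, so the difference in the second attribute is no longer drowned out.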

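The classification decision rule is usually majority voting: the class of the input instance is determined by the majority class among its K nearest training instances.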

Overview

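The kNN classification algorithm is a theoretically mature method and one of the simplest machine learning algorithms.

The idea is: if most of the k samples most similar to a given sample (that is, its nearest neighbors in feature space) belong to one class, then the sample belongs to that class too.

In kNN, the neighbors considered are all objects that have already been correctly classified. The class decision for a sample depends only on the classes of its nearest one or few neighbors.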

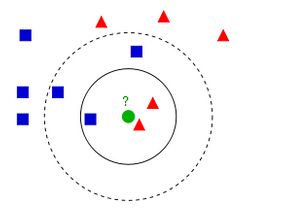

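One of the clearest illustrations of the kNN algorithm is this figure:

The blue squares and red triangles are already-classified data. The task is to classify the green circle, deciding whether it belongs with the blue squares or the red triangles.

The result depends on the value of K:

If K = 3 (solid circle), red triangles make up 2/3 of the neighbors, so the point is classified as a red triangle;

If K = 5 (dashed circle), blue squares make up 3/5 of the neighbors, so the point is classified as a blue square.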
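The flip between K = 3 and K = 5 can be reproduced with a few points. The coordinates below are invented so that the neighbor counts match the figure's description (2 of 3 red, then 3 of 5 blue); they are not read off the figure.

```python
from collections import Counter
import numpy as np

# Hypothetical coordinates, chosen only to reproduce the neighbor counts.
points = np.array([[0.5, 0.0], [0.0, 0.6], [-0.7, 0.0], [0.0, -0.9],
                   [1.0, 0.0], [2.0, 2.0], [-2.0, -2.0], [3.0, 0.0]])
labels = ["red", "red", "blue", "blue", "blue", "red", "blue", "red"]
green = np.array([0.0, 0.0])  # the point to classify

def majority_vote(query, k):
    """Return the most common label among the k points nearest to query."""
    dist = np.sqrt(((points - query) ** 2).sum(axis=1))
    nearest = np.argsort(dist)[:k]
    votes = Counter(labels[i] for i in nearest)
    return votes.most_common(1)[0][0]

print(majority_vote(green, 3))  # red: 2 of the 3 nearest are red
print(majority_vote(green, 5))  # blue: 3 of the 5 nearest are blue
```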

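This shows that kNN needs no training phase at all; it computes directly at prediction time. Training time is 0, and the time to classify one instance grows in proportion to the training-set size n, because the distance to every training instance has to be computed.

The main weakness of kNN in classification appears when the classes are imbalanced: if one class has many samples and the others have few, the K neighbors of a new input sample may be dominated by samples of the large class.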

Algorithm implementation

1. Function

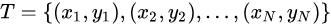

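Input: the training data set $T = \{(x_1, y_1), (x_2, y_2), \dots, (x_N, y_N)\}$, where $x_i \in \mathcal{X} \subseteq \mathbf{R}^n$ is the feature vector of a training instance and $y_i \in \mathcal{Y} = \{c_1, c_2, \dots, c_K\}$ is the class of that instance (the class corresponding to $x_i$), $i = 1, 2, \dots, N$.

Output: the class $y$ to which the new input instance $x$ belongs.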

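1. Using the given distance metric, find the k points in the training set $T$ nearest to $x$; the neighborhood of $x$ covering these k points is denoted $N_k(x)$ (the data inside the solid circle in the earlier figure).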

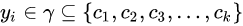

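2. Within $N_k(x)$, determine the class $y$ of $x$ by the classification decision rule (such as majority voting), that is, count which class occurs most often in $N_k(x)$ and take that class:

$$y = \arg\max_{c_j} \sum_{x_i \in N_k(x)} I(y_i = c_j), \quad i = 1, 2, \dots, N;\ j = 1, 2, \dots, K$$

Here $I$ is the indicator function: $I(\cdot)$ is 1 only when $y_i = c_j$ and 0 otherwise. (The arg max returns the value of $c_j$ at which the sum is largest, which is exactly what the formula is after. $x_i$ ranges over the points in $N_k(x)$, and $c_j$ ranges over the classes present in the neighborhood. For $c_j = c_1$, substitute each $x_i \in N_k(x)$ into the sum to get a count; the $x_i$ are known data, so repeating this as $c_j$ varies and taking the largest count gives the final value of $y$.)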
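The two steps can be sketched directly in NumPy. This is a minimal illustration rather than the article's implementation; the name `knn_predict` and the toy data are assumptions, and ties are broken in favor of the class that sorts first.

```python
import numpy as np

def knn_predict(train_x, train_y, x, k):
    """Classify x by majority vote among its k nearest training points."""
    # Step 1: find N_k(x), the indices of the k training points nearest to x
    # (Euclidean distance is assumed here).
    dist = np.linalg.norm(train_x - x, axis=1)
    neighborhood = np.argsort(dist, kind="stable")[:k]
    # Step 2: for every candidate class c_j, sum the indicator I(y_i == c_j)
    # over the neighborhood, then take the arg max over c_j.
    classes = np.unique(train_y)
    counts = np.array([(train_y[neighborhood] == c).sum() for c in classes])
    return classes[np.argmax(counts)]

# Hypothetical one-dimensional training set with classes 1 and 2.
train_x = np.array([[0.0], [1.0], [2.0], [10.0], [11.0]])
train_y = np.array([1, 1, 2, 2, 2])
print(knn_predict(train_x, train_y, np.array([0.5]), k=3))  # 1
```

With k = 3 the neighborhood holds the labels 1, 1, 2, so the indicator sums are 2 for class 1 and 1 for class 2.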

2. Distance metrics

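The most commonly used distance metric is the Euclidean distance; the more general $L_p$ distance, also called the Minkowski distance, can be used as well.

Let the feature space $\mathcal{X}$ be the n-dimensional real vector space $\mathbf{R}^n$, with $x_i, x_j \in \mathcal{X}$, $x_i = (x_i^{(1)}, x_i^{(2)}, \dots, x_i^{(n)})^T$ and $x_j = (x_j^{(1)}, x_j^{(2)}, \dots, x_j^{(n)})^T$. The $L_p$ distance between $x_i$ and $x_j$ can be defined as:

Minkowski distance:

$$L_p(x_i, x_j) = \left( \sum_{l=1}^{n} \left| x_i^{(l)} - x_j^{(l)} \right|^p \right)^{1/p}, \quad p \ge 1$$

Euclidean distance, p = 2:

$$L_2(x_i, x_j) = \left( \sum_{l=1}^{n} \left| x_i^{(l)} - x_j^{(l)} \right|^2 \right)^{1/2}$$

Manhattan distance, p = 1:

$$L_1(x_i, x_j) = \sum_{l=1}^{n} \left| x_i^{(l)} - x_j^{(l)} \right|$$

p = ∞:

$$L_\infty(x_i, x_j) = \max_{l} \left| x_i^{(l)} - x_j^{(l)} \right|$$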
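The Lp family of distances can be computed with one small function. The name `lp_distance` and the two sample vectors are illustrative assumptions.

```python
import numpy as np

def lp_distance(xi, xj, p):
    """Minkowski (Lp) distance between two vectors, for p >= 1 or p = infinity."""
    diff = np.abs(np.asarray(xi, dtype=float) - np.asarray(xj, dtype=float))
    if np.isinf(p):
        return diff.max()  # the limit p -> infinity is the largest coordinate difference
    return (diff ** p).sum() ** (1.0 / p)

a, b = [1.0, 1.0], [4.0, 5.0]     # coordinate differences are 3 and 4
print(lp_distance(a, b, 1))       # 7.0  (Manhattan: 3 + 4)
print(lp_distance(a, b, 2))       # 5.0  (Euclidean: sqrt(9 + 16))
print(lp_distance(a, b, np.inf))  # 4.0  (maximum of 3 and 4)
```

The same pair of points gets a different distance under each p, so the set of nearest neighbors can change with the metric.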

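3. Choosing K

Usually one starts with a fairly small k and then uses cross-validation to select the optimal k. With a small k the overall model becomes more complex and is sensitive to the nearby training instances, so overfitting is likely. With a large k the model becomes simpler, and more distant training instances also take part in the prediction, so underfitting is likely. In particular, when k = N (the number of training instances), every input is predicted to belong to the most frequent class in the training set, whatever the input is.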
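The k = N special case is easy to check. In the sketch below the training points are random and the labels are invented so that class "a" is the largest; the helper name `vote` is an assumption.

```python
from collections import Counter
import numpy as np

rng = np.random.default_rng(0)
train_x = rng.normal(size=(9, 2))            # hypothetical training points
train_y = ["a"] * 5 + ["b"] * 3 + ["c"] * 1  # "a" is the most frequent class

def vote(x, k):
    """Majority vote among the k training points nearest to x."""
    nearest = np.argsort(np.linalg.norm(train_x - x, axis=1))[:k]
    return Counter(train_y[i] for i in nearest).most_common(1)[0][0]

# With k = N every training point votes, so the input no longer matters.
queries = rng.normal(size=(20, 2)) * 10
print({vote(q, len(train_x)) for q in queries})  # {'a'}
```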

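4. Classification decision rule

The kNN learning model: given the input $X$, learning yields a decision function that outputs the class $Y = f(X)$.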

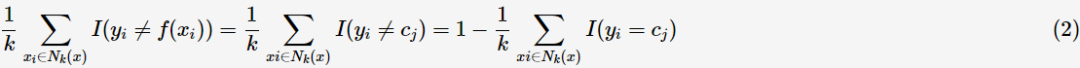

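Assume the classification loss is the 0-1 loss: the loss is 0 when the classification is correct and 1 when it is wrong. Suppose the class predicted for $N_k(x)$ is $c_j$, that is, $f(X) = c_j$. By formula (1), the majority voting rule, the sample points in the k-neighborhood contribute equally to the learning model, so the misclassification rate of the kNN model is

$$\frac{1}{k} \sum_{x_i \in N_k(x)} I(y_i \ne c_j) = 1 - \frac{1}{k} \sum_{x_i \in N_k(x)} I(y_i = c_j)$$

To minimize the misclassification rate, one should maximize

$$\sum_{x_i \in N_k(x)} I(y_i = c_j)$$

So the majority voting rule is equivalent to empirical risk minimization.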

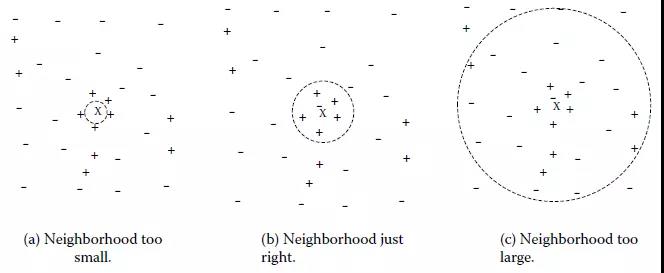

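The choice of k has a large effect on the kNN model. If k is too small, the prediction becomes extremely sensitive to noisy sample points. In particular, when k equals 1, kNN reduces to the nearest-neighbor algorithm, with no explicit learning process. If k is too large, a larger neighborhood of training samples is used for prediction, which reduces the influence of noisy sample points; but training samples that are farther away also contribute to the prediction, which can make it wrong. The figures below show how the choice of k affects the prediction: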

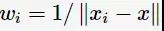

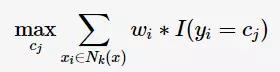

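As noted earlier, the sample points in the k-neighborhood contribute equally to the prediction; but closer sample points are more similar to the input, so they should contribute more than farther ones. A weight $w_i$ that decreases with distance (for example $w_i = 1 / \lVert x_i - x \rVert$) can be added as a correction, and the majority voting rule becomes:

$$\max_{c_j} \sum_{x_i \in N_k(x)} w_i \, I(y_i = c_j)$$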
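A distance-weighted vote might look like the sketch below. The inverse-distance weight, the small constant `eps` that guards against division by zero, the name `weighted_knn_predict`, and the toy data are all assumptions made for illustration.

```python
from collections import defaultdict
import numpy as np

def weighted_knn_predict(train_x, train_y, x, k, eps=1e-12):
    """Classify x by a vote in which each neighbor counts 1 / distance."""
    dist = np.linalg.norm(train_x - x, axis=1)
    nearest = np.argsort(dist)[:k]
    scores = defaultdict(float)
    for i in nearest:
        # Closer neighbors get larger weights; eps avoids dividing by zero.
        scores[train_y[i]] += 1.0 / (dist[i] + eps)
    return max(scores, key=scores.get)

# Hypothetical data: one very close "a" and two more distant "b" points.
train_x = np.array([[0.1], [1.0], [1.2]])
train_y = ["a", "b", "b"]
print(weighted_knn_predict(train_x, train_y, np.array([0.0]), k=3))  # a
```

An unweighted vote over the same three neighbors would return "b" (two votes to one); with weights, the single close neighbor scores about 10 against about 1.83 for the two distant ones.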

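Application example

Run the algorithm on the data in data2.txt: use the first 10% of the data for validation and the remaining 90% for training, compute the error rate for different values of K, and select the best K. The data, in which each record is three numeric feature values followed by a class label (1, 2, or 3), are: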

40920 8.326976 0.953952 3 14488 7.153469 1.673904 2 26052 1.441871 0.805124 1 75136 13.147394 0.428964 1 38344 1.669788 0.134296 1 72993 10.141740 1.032955 1 35948 6.830792 1.213192 3 42666 13.276369 0.543880 3 67497 8.631577 0.749278 1 35483 12.273169 1.508053 3 50242 3.723498 0.831917 1 63275 8.385879 1.669485 1 5569 4.875435 0.728658 2 51052 4.680098 0.625224 1 77372 15.299570 0.331351 1 43673 1.889461 0.191283 1 61364 7.516754 1.269164 1 69673 14.239195 0.261333 1 15669 0.000000 1.250185 2 28488 10.528555 1.304844 3 6487 3.540265 0.822483 2 37708 2.991551 0.833920 1 22620 5.297865 0.638306 2 28782 6.593803 0.187108 3 19739 2.816760 1.686209 2 36788 12.458258 0.649617 3 5741 0.000000 1.656418 2 28567 9.968648 0.731232 3 6808 1.364838 0.640103 2 41611 0.230453 1.151996 1 36661 11.865402 0.882810 3 43605 0.120460 1.352013 1 15360 8.545204 1.340429 3 63796 5.856649 0.160006 1 10743 9.665618 0.778626 2 70808 9.778763 1.084103 1 72011 4.932976 0.632026 1 5914 2.216246 0.587095 2 14851 14.305636 0.632317 3 33553 12.591889 0.686581 3 44952 3.424649 1.004504 1 17934 0.000000 0.147573 2 27738 8.533823 0.205324 3 29290 9.829528 0.238620 3 42330 11.492186 0.263499 3 36429 3.570968 0.832254 1 39623 1.771228 0.207612 1 32404 3.513921 0.991854 1 27268 4.398172 0.975024 1 5477 4.276823 1.174874 2 14254 5.946014 1.614244 2 68613 13.798970 0.724375 1 41539 10.393591 1.663724 3 7917 3.007577 0.297302 2 21331 1.031938 0.486174 2 8338 4.751212 0.064693 2 5176 3.692269 1.655113 2 18983 10.448091 0.267652 3 68837 10.585786 0.329557 1 13438 1.604501 0.069064 2 48849 3.679497 0.961466 1 12285 3.795146 0.696694 2 7826 2.531885 1.659173 2 5565 9.733340 0.977746 2 10346 6.093067 1.413798 2 1823 7.712960 1.054927 2 9744 11.470364 0.760461 3 16857 2.886529 0.934416 2 39336 10.054373 1.138351 3 65230 9.972470 0.881876 1 2463 2.335785 1.366145 2 27353 11.375155 1.528626 3 16191 0.000000 0.605619 2 12258 4.126787 0.357501 2 42377 6.319522 1.058602 1 25607 8.680527 0.086955 3 77450 14.856391 
1.129823 1 58732 2.454285 0.222380 1 46426 7.292202 0.548607 3 32688 8.745137 0.857348 3 64890 8.579001 0.683048 1 8554 2.507302 0.869177 2 28861 11.415476 1.505466 3 42050 4.838540 1.680892 1 32193 10.339507 0.583646 3 64895 6.573742 1.151433 1 2355 6.539397 0.462065 2 0 2.209159 0.723567 2 70406 11.196378 0.836326 1 57399 4.229595 0.128253 1 41732 9.505944 0.005273 3 11429 8.652725 1.348934 3 75270 17.101108 0.490712 1 5459 7.871839 0.717662 2 73520 8.262131 1.361646 1 40279 9.015635 1.658555 3 21540 9.215351 0.806762 3 17694 6.375007 0.033678 2 22329 2.262014 1.022169 1 46570 5.677110 0.709469 1 42403 11.293017 0.207976 3 33654 6.590043 1.353117 1 9171 4.711960 0.194167 2 28122 8.768099 1.108041 3 34095 11.502519 0.545097 3 1774 4.682812 0.578112 2 40131 12.446578 0.300754 3 13994 12.908384 1.657722 3 77064 12.601108 0.974527 1 11210 3.929456 0.025466 2 6122 9.751503 1.182050 3 15341 3.043767 0.888168 2 44373 4.391522 0.807100 1 28454 11.695276 0.679015 3 63771 7.879742 0.154263 1 9217 5.613163 0.933632 2 69076 9.140172 0.851300 1 24489 4.258644 0.206892 1 16871 6.799831 1.221171 2 39776 8.752758 0.484418 3 5901 1.123033 1.180352 2 40987 10.833248 1.585426 3 7479 3.051618 0.026781 2 38768 5.308409 0.030683 3 4933 1.841792 0.028099 2 32311 2.261978 1.605603 1 26501 11.573696 1.061347 3 37433 8.038764 1.083910 3 23503 10.734007 0.103715 3 68607 9.661909 0.350772 1 27742 9.005850 0.548737 3 11303 0.000000 0.539131 2 0 5.757140 1.062373 2 32729 9.164656 1.624565 3 24619 1.318340 1.436243 1 42414 14.075597 0.695934 3 20210 10.107550 1.308398 3 33225 7.960293 1.219760 3 54483 6.317292 0.018209 1 18475 12.664194 0.595653 3 33926 2.906644 0.581657 1 43865 2.388241 0.913938 1 26547 6.024471 0.486215 3 44404 7.226764 1.255329 3 16674 4.183997 1.275290 2 8123 11.850211 1.096981 3 42747 11.661797 1.167935 3 56054 3.574967 0.494666 1 10933 0.000000 0.107475 2 18121 7.937657 0.904799 3 11272 3.365027 1.014085 2 16297 0.000000 0.367491 2 28168 13.860672 1.293270 3 40963 
10.306714 1.211594 3 31685 7.228002 0.670670 3 55164 4.508740 1.036192 1 17595 0.366328 0.163652 2 1862 3.299444 0.575152 2 57087 0.573287 0.607915 1 63082 9.183738 0.012280 1 51213 7.842646 1.060636 3 6487 4.750964 0.558240 2 4805 11.438702 1.556334 3 30302 8.243063 1.122768 3 68680 7.949017 0.271865 1 17591 7.875477 0.227085 2 74391 9.569087 0.364856 1 37217 7.750103 0.869094 3 42814 0.000000 1.515293 1 14738 3.396030 0.633977 2 19896 11.916091 0.025294 3 14673 0.460758 0.689586 2 32011 13.087566 0.476002 3 58736 4.589016 1.672600 1 54744 8.397217 1.534103 1 29482 5.562772 1.689388 1 27698 10.905159 0.619091 3 11443 1.311441 1.169887 2 56117 10.647170 0.980141 3 39514 0.000000 0.481918 1 26627 8.503025 0.830861 3 16525 0.436880 1.395314 2 24368 6.127867 1.102179 1 22160 12.112492 0.359680 3 6030 1.264968 1.141582 2 6468 6.067568 1.327047 2 22945 8.010964 1.681648 3 18520 3.791084 0.304072 2 34914 11.773195 1.262621 3 6121 8.339588 1.443357 2 38063 2.563092 1.464013 1 23410 5.954216 0.953782 1 35073 9.288374 0.767318 3 52914 3.976796 1.043109 1 16801 8.585227 1.455708 3 9533 1.271946 0.796506 2 16721 0.000000 0.242778 2 5832 0.000000 0.089749 2 44591 11.521298 0.300860 3 10143 1.139447 0.415373 2 21609 5.699090 1.391892 2 23817 2.449378 1.322560 1 15640 0.000000 1.228380 2 8847 3.168365 0.053993 2 50939 10.428610 1.126257 3 28521 2.943070 1.446816 1 32901 10.441348 0.975283 3 42850 12.478764 1.628726 3 13499 5.856902 0.363883 2 40345 2.476420 0.096075 1 43547 1.826637 0.811457 1 70758 4.324451 0.328235 1 19780 1.376085 1.178359 2 44484 5.342462 0.394527 1 54462 11.835521 0.693301 3 20085 12.423687 1.424264 3 42291 12.161273 0.071131 3 47550 8.148360 1.649194 3 11938 1.531067 1.549756 2 40699 3.200912 0.309679 1 70908 8.862691 0.530506 1 73989 6.370551 0.369350 1 11872 2.468841 0.145060 2 48463 11.054212 0.141508 3 15987 2.037080 0.715243 2 70036 13.364030 0.549972 1 32967 10.249135 0.192735 3 63249 10.464252 1.669767 1 42795 9.424574 0.013725 3 14459 4.458902 
0.268444 2 19973 0.000000 0.575976 2 5494 9.686082 1.029808 3 67902 13.649402 1.052618 1 25621 13.181148 0.273014 3 27545 3.877472 0.401600 1 58656 1.413952 0.451380 1 7327 4.248986 1.430249 2 64555 8.779183 0.845947 1 8998 4.156252 0.097109 2 11752 5.580018 0.158401 2 76319 15.040440 1.366898 1 27665 12.793870 1.307323 3 67417 3.254877 0.669546 1 21808 10.725607 0.588588 3 15326 8.256473 0.765891 2 20057 8.033892 1.618562 3 79341 10.702532 0.204792 1 15636 5.062996 1.132555 2 35602 10.772286 0.668721 3 28544 1.892354 0.837028 1 57663 1.019966 0.372320 1 78727 15.546043 0.729742 1 68255 11.638205 0.409125 1 14964 3.427886 0.975616 2 21835 11.246174 1.475586 3 7487 0.000000 0.645045 2 8700 0.000000 1.424017 2 26226 8.242553 0.279069 3 65899 8.700060 0.101807 1 6543 0.812344 0.260334 2 46556 2.448235 1.176829 1 71038 13.230078 0.616147 1 47657 0.236133 0.340840 1 19600 11.155826 0.335131 3 37422 11.029636 0.505769 3 1363 2.901181 1.646633 2 26535 3.924594 1.143120 1 47707 2.524806 1.292848 1 38055 3.527474 1.449158 1 6286 3.384281 0.889268 2 10747 0.000000 1.107592 2 44883 11.898890 0.406441 3 56823 3.529892 1.375844 1 68086 11.442677 0.696919 1 70242 10.308145 0.422722 1 11409 8.540529 0.727373 2 67671 7.156949 1.691682 1 61238 0.720675 0.847574 1 17774 0.229405 1.038603 2 53376 3.399331 0.077501 1 30930 6.157239 0.580133 1 28987 1.239698 0.719989 1 13655 6.036854 0.016548 2 7227 5.258665 0.933722 2 40409 12.393001 1.571281 3 13605 9.627613 0.935842 2 26400 11.130453 0.597610 3 13491 8.842595 0.349768 3 30232 10.690010 1.456595 3 43253 5.714718 1.674780 3 55536 3.052505 1.335804 1 8807 0.000000 0.059025 2 25783 9.945307 1.287952 3 22812 2.719723 1.142148 1 77826 11.154055 1.608486 1 38172 2.687918 0.660836 1 31676 10.037847 0.962245 3 74038 12.404762 1.112080 1 44738 10.237305 0.633422 3 17410 4.745392 0.662520 2 5688 4.639461 1.569431 2 36642 3.149310 0.639669 1 29956 13.406875 1.639194 3 60350 6.068668 0.881241 1 23758 9.477022 0.899002 3 25780 3.897620 0.560201 2 
11342 5.463615 1.203677 2 36109 3.369267 1.575043 1 14292 5.234562 0.825954 2 11160 0.000000 0.722170 2 23762 12.979069 0.504068 3 39567 5.376564 0.557476 1 25647 13.527910 1.586732 3 14814 2.196889 0.784587 2 73590 10.691748 0.007509 1 35187 1.659242 0.447066 1 49459 8.369667 0.656697 3 31657 13.157197 0.143248 3 6259 8.199667 0.908508 2 33101 4.441669 0.439381 3 27107 9.846492 0.644523 3 17824 0.019540 0.977949 2 43536 8.253774 0.748700 3 67705 6.038620 1.509646 1 35283 6.091587 1.694641 3 71308 8.986820 1.225165 1 31054 11.508473 1.624296 3 52387 8.807734 0.713922 3 40328 0.000000 0.816676 1 34844 8.889202 1.665414 3 11607 3.178117 0.542752 2 64306 7.013795 0.139909 1 32721 9.605014 0.065254 3 33170 1.230540 1.331674 1 37192 10.412811 0.890803 3 13089 0.000000 0.567161 2 66491 9.699991 0.122011 1 15941 0.000000 0.061191 2 4272 4.455293 0.272135 2 48812 3.020977 1.502803 1 28818 8.099278 0.216317 3 35394 1.157764 1.603217 1 71791 10.105396 0.121067 1 40668 11.230148 0.408603 3 39580 9.070058 0.011379 3 11786 0.566460 0.478837 2 19251 0.000000 0.487300 2 56594 8.956369 1.193484 3 54495 1.523057 0.620528 1 11844 2.749006 0.169855 2 45465 9.235393 0.188350 3 31033 10.555573 0.403927 3 16633 6.956372 1.519308 2 13887 0.636281 1.273984 2 52603 3.574737 0.075163 1 72000 9.032486 1.461809 1 68497 5.958993 0.023012 1 35135 2.435300 1.211744 1 26397 10.539731 1.638248 3 7313 7.646702 0.056513 2 91273 20.919349 0.644571 1 24743 1.424726 0.838447 1 31690 6.748663 0.890223 3 15432 2.289167 0.114881 2 58394 5.548377 0.402238 1 33962 6.057227 0.432666 1 31442 10.828595 0.559955 3 31044 11.318160 0.271094 3 29938 13.265311 0.633903 3 9875 0.000000 1.496715 2 51542 6.517133 0.402519 3 11878 4.934374 1.520028 2 69241 10.151738 0.896433 1 37776 2.425781 1.559467 1 68997 9.778962 1.195498 1 67416 12.219950 0.657677 1 59225 7.394151 0.954434 1 29138 8.518535 0.742546 3 5962 2.798700 0.662632 2 10847 0.637930 0.617373 2 70527 10.750490 0.097415 1 9610 0.625382 0.140969 2 64734 
10.027968 0.282787 1 25941 9.817347 0.364197 3 2763 0.646828 1.266069 2 55601 3.347111 0.914294 1 31128 11.816892 0.193798 3 5181 0.000000 1.480198 2 69982 10.945666 0.993219 1 52440 10.244706 0.280539 3 57350 2.579801 1.149172 1 57869 2.630410 0.098869 1 56557 11.746200 1.695517 3 42342 8.104232 1.326277 3 15560 12.409743 0.790295 3 34826 12.167844 1.328086 3 8569 3.198408 0.299287 2 77623 16.055513 0.541052 1 78184 7.138659 0.158481 1 7036 4.831041 0.761419 2 69616 10.082890 1.373611 1 21546 10.066867 0.788470 3 36715 8.129538 0.329913 3 20522 3.012463 1.138108 2 42349 3.720391 0.845974 1 9037 0.773493 1.148256 2 26728 10.962941 1.037324 3 587 0.177621 0.162614 2 48915 3.085853 0.967899 1 9824 8.426781 0.202558 2 4135 1.825927 1.128347 2 9666 2.185155 1.010173 2 59333 7.184595 1.261338 1 36198 0.000000 0.116525 1 34909 8.901752 1.033527 3 47516 2.451497 1.358795 1 55807 3.213631 0.432044 1 14036 3.974739 0.723929 2 42856 9.601306 0.619232 3 64007 8.363897 0.445341 1 59428 6.381484 1.365019 1 13730 0.000000 1.403914 2 41740 9.609836 1.438105 3 63546 9.904741 0.985862 1 30417 7.185807 1.489102 3 69636 5.466703 1.216571 1 64660 0.000000 0.915898 1 14883 4.575443 0.535671 2 7965 3.277076 1.010868 2 68620 10.246623 1.239634 1 8738 2.341735 1.060235 2 7544 3.201046 0.498843 2 6377 6.066013 0.120927 2 36842 8.829379 0.895657 3 81046 15.833048 1.568245 1 67736 13.516711 1.220153 1 32492 0.664284 1.116755 1 39299 6.325139 0.605109 3 77289 8.677499 0.344373 1 33835 8.188005 0.964896 3 71890 9.414263 0.384030 1 32054 9.196547 1.138253 3 38579 10.202968 0.452363 3 55984 2.119439 1.481661 1 72694 13.635078 0.858314 1 42299 0.083443 0.701669 1 26635 9.149096 1.051446 3 8579 1.933803 1.374388 2 37302 14.115544 0.676198 3 22878 8.933736 0.943352 3 4364 2.661254 0.946117 2 4985 0.988432 1.305027 2 37068 2.063741 1.125946 1 41137 2.220590 0.690754 1 67759 6.424849 0.806641 1 11831 1.156153 1.613674 2 34502 3.032720 0.601847 1 4088 3.076828 0.952089 2 15199 0.000000 0.318105 2 
17309 7.750480 0.554015 3 42816 10.958135 1.482500 3 43751 10.222018 0.488678 3 58335 2.367988 0.435741 1 75039 7.686054 1.381455 1 42878 11.464879 1.481589 3 42770 11.075735 0.089726 3 8848 3.543989 0.345853 2 31340 8.123889 1.282880 3 41413 4.331769 0.754467 3 12731 0.120865 1.211961 2 22447 6.116109 0.701523 3 33564 7.474534 0.505790 3 48907 8.819454 0.649292 3 8762 6.802144 0.615284 2 46696 12.666325 0.931960 3 36851 8.636180 0.399333 3 67639 11.730991 1.289833 1 171 8.132449 0.039062 2 26674 10.296589 1.496144 3 8739 7.583906 1.005764 2 66668 9.777806 0.496377 1 68732 8.833546 0.513876 1 69995 4.907899 1.518036 1 82008 8.362736 1.285939 1 25054 9.084726 1.606312 3 33085 14.164141 0.560970 3 41379 9.080683 0.989920 3 39417 6.522767 0.038548 3 12556 3.690342 0.462281 2 39432 3.563706 0.242019 1 38010 1.065870 1.141569 1 69306 6.683796 1.456317 1 38000 1.712874 0.243945 1 46321 13.109929 1.280111 3 66293 11.327910 0.780977 1 22730 4.545711 1.233254 1 5952 3.367889 0.468104 2 72308 8.326224 0.567347 1 60338 8.978339 1.442034 1 13301 5.655826 1.582159 2 27884 8.855312 0.570684 3 11188 6.649568 0.544233 2 56796 3.966325 0.850410 1 8571 1.924045 1.664782 2 4914 6.004812 0.280369 2 10784 0.000000 0.375849 2 39296 9.923018 0.092192 3 13113 2.389084 0.119284 2 70204 13.663189 0.133251 1 46813 11.434976 0.321216 3 11697 0.358270 1.292858 2 44183 9.598873 0.223524 3 2225 6.375275 0.608040 2 29066 11.580532 0.458401 3 4245 5.319324 1.598070 2 34379 4.324031 1.603481 1 44441 2.358370 1.273204 1 2022 0.000000 1.182708 2 26866 12.824376 0.890411 3 57070 1.587247 1.456982 1 32932 8.510324 1.520683 3 51967 10.428884 1.187734 3 44432 8.346618 0.042318 3 67066 7.541444 0.809226 1 17262 2.540946 1.583286 2 79728 9.473047 0.692513 1 14259 0.352284 0.474080 2 6122 0.000000 0.589826 2 76879 12.405171 0.567201 1 11426 4.126775 0.871452 2 2493 0.034087 0.335848 2 19910 1.177634 0.075106 2 10939 0.000000 0.479996 2 17716 0.994909 0.611135 2 31390 11.053664 1.180117 3 20375 0.000000 
1.679729 2 26309 2.495011 1.459589 1 33484 11.516831 0.001156 3 45944 9.213215 0.797743 3 4249 5.332865 0.109288 2 6089 0.000000 1.689771 2 7513 0.000000 1.126053 2 27862 12.640062 1.690903 3 39038 2.693142 1.317518 1 19218 3.328969 0.268271 2 62911 7.193166 1.117456 1 77758 6.615512 1.521012 1 27940 8.000567 0.835341 3 2194 4.017541 0.512104 2 37072 13.245859 0.927465 3 15585 5.970616 0.813624 2 25577 11.668719 0.886902 3 8777 4.283237 1.272728 2 29016 10.742963 0.971401 3 21910 12.326672 1.592608 3 12916 0.000000 0.344622 2 10976 0.000000 0.922846 2 79065 10.602095 0.573686 1 36759 10.861859 1.155054 3 50011 1.229094 1.638690 1 1155 0.410392 1.313401 2 71600 14.552711 0.616162 1 30817 14.178043 0.616313 3 54559 14.136260 0.362388 1 29764 0.093534 1.207194 1 69100 10.929021 0.403110 1 47324 11.432919 0.825959 3 73199 9.134527 0.586846 1 44461 5.071432 1.421420 1 45617 11.460254 1.541749 3 28221 11.620039 1.103553 3 7091 4.022079 0.207307 2 6110 3.057842 1.631262 2 79016 7.782169 0.404385 1 18289 7.981741 0.929789 3 43679 4.601363 0.268326 1 22075 2.595564 1.115375 1 23535 10.049077 0.391045 3 25301 3.265444 1.572970 2 32256 11.780282 1.511014 3 36951 3.075975 0.286284 1 31290 1.795307 0.194343 1 38953 11.106979 0.202415 3 35257 5.994413 0.800021 1 25847 9.706062 1.012182 3 32680 10.582992 0.836025 3 62018 7.038266 1.458979 1 9074 0.023771 0.015314 2 33004 12.823982 0.676371 3 44588 3.617770 0.493483 1 32565 8.346684 0.253317 3 38563 6.104317 0.099207 1 75668 16.207776 0.584973 1 9069 6.401969 1.691873 2 53395 2.298696 0.559757 1 28631 7.661515 0.055981 3 71036 6.353608 1.645301 1 71142 10.442780 0.335870 1 37653 3.834509 1.346121 1 76839 10.998587 0.584555 1 9916 2.695935 1.512111 2 38889 3.356646 0.324230 1 39075 14.677836 0.793183 3 48071 1.551934 0.130902 1 7275 2.464739 0.223502 2 41804 1.533216 1.007481 1 35665 12.473921 0.162910 3 67956 6.491596 0.032576 1 41892 10.506276 1.510747 3 38844 4.380388 0.748506 1 74197 13.670988 1.687944 1 14201 8.317599 0.390409 
2 3908 0.000000 0.556245 2 2459 0.000000 0.290218 2 32027 10.095799 1.188148 3 12870 0.860695 1.482632 2 9880 1.557564 0.711278 2 72784 10.072779 0.756030 1 17521 0.000000 0.431468 2 50283 7.140817 0.883813 3 33536 11.384548 1.438307 3 9452 3.214568 1.083536 2 37457 11.720655 0.301636 3 17724 6.374475 1.475925 3 43869 5.749684 0.198875 3 264 3.871808 0.552602 2 25736 8.336309 0.636238 3 39584 9.710442 1.503735 3 31246 1.532611 1.433898 1 49567 9.785785 0.984614 3 7052 2.633627 1.097866 2 35493 9.238935 0.494701 3 10986 1.205656 1.398803 2 49508 3.124909 1.670121 1 5734 7.935489 1.585044 2 65479 12.746636 1.560352 1 77268 10.732563 0.545321 1 28490 3.977403 0.766103 1 13546 4.194426 0.450663 2 37166 9.610286 0.142912 3 16381 4.797555 1.260455 2 10848 1.615279 0.093002 2 35405 4.614771 1.027105 1 15917 0.000000 1.369726 2 6131 0.608457 0.512220 2 67432 6.558239 0.667579 1 30354 12.315116 0.197068 3 69696 7.014973 1.494616 1 33481 8.822304 1.194177 3 43075 10.086796 0.570455 3 38343 7.241614 1.661627 3 14318 4.602395 1.511768 2 5367 7.434921 0.079792 2 37894 10.467570 1.595418 3 36172 9.948127 0.003663 3 40123 2.478529 1.568987 1 10976 5.938545 0.878540 2 12705 0.000000 0.948004 2 12495 5.559181 1.357926 2 35681 9.776654 0.535966 3 46202 3.092056 0.490906 1 11505 0.000000 1.623311 2 22834 4.459495 0.538867 1 49901 8.334306 1.646600 3 71932 11.226654 0.384686 1 13279 3.904737 1.597294 2 49112 7.038205 1.211329 3 77129 9.836120 1.054340 1 37447 1.990976 0.378081 1 62397 9.005302 0.485385 1 0 1.772510 1.039873 2 15476 0.458674 0.819560 2 40625 10.003919 0.231658 3 36706 0.520807 1.476008 1 28580 10.678214 1.431837 3 25862 4.425992 1.363842 1 63488 12.035355 0.831222 1 33944 10.606732 1.253858 3 30099 1.568653 0.684264 1 13725 2.545434 0.024271 2 36768 10.264062 0.982593 3 64656 9.866276 0.685218 1 14927 0.142704 0.057455 2 43231 9.853270 1.521432 3 66087 6.596604 1.653574 1 19806 2.602287 1.321481 2 41081 10.411776 0.664168 3 10277 7.083449 0.622589 2 7014 2.080068 
1.254441 2 17275 0.522844 1.622458 2 31600 10.362000 1.544827 3 59956 3.412967 1.035410 1 42181 6.796548 1.112153 3 51743 4.092035 0.075804 1 5194 2.763811 1.564325 2 30832 12.547439 1.402443 3 7976 5.708052 1.596152 2 14602 4.558025 0.375806 2 41571 11.642307 0.438553 3 55028 3.222443 0.121399 1 5837 4.736156 0.029871 2 39808 10.839526 0.836323 3 20944 4.194791 0.235483 2 22146 14.936259 0.888582 3 42169 3.310699 1.521855 1 7010 2.971931 0.034321 2 3807 9.261667 0.537807 2 29241 7.791833 1.111416 3 52696 1.480470 1.028750 1 42545 3.677287 0.244167 1 24437 2.202967 1.370399 1 16037 5.796735 0.935893 2 8493 3.063333 0.144089 2 68080 11.233094 0.492487 1 59016 1.965570 0.005697 1 11810 8.616719 0.137419 2 68630 6.609989 1.083505 1 7629 1.712639 1.086297 2 71992 10.117445 1.299319 1 13398 0.000000 1.104178 2 26241 9.824777 1.346821 3 11160 1.653089 0.980949 2 76701 18.178822 1.473671 1 32174 6.781126 0.885340 3 45043 8.206750 1.549223 3 42173 10.081853 1.376745 3 69801 6.288742 0.112799 1 41737 3.695937 1.543589 1 46979 6.726151 1.069380 3 79267 12.969999 1.568223 1 4615 2.661390 1.531933 2 32907 7.072764 1.117386 3 37444 9.123366 1.318988 3 569 3.743946 1.039546 2 8723 2.341300 0.219361 2 6024 0.541913 0.592348 2 52252 2.310828 1.436753 1 8358 6.226597 1.427316 2 26166 7.277876 0.489252 3 18471 0.000000 0.389459 2 3386 7.218221 1.098828 2 41544 8.777129 1.111464 3 10480 2.813428 0.819419 2 5894 2.268766 1.412130 2 7273 6.283627 0.571292 2 22272 7.520081 1.626868 3 31369 11.739225 0.027138 3 10708 3.746883 0.877350 2 69364 12.089835 0.521631 1 37760 12.310404 0.259339 3 13004 0.000000 0.671355 2 37885 2.728800 0.331502 1 52555 10.814342 0.607652 3 38997 12.170268 0.844205 3 69698 6.698371 0.240084 1 11783 3.632672 1.643479 2 47636 10.059991 0.892361 3 15744 1.887674 0.756162 2 69058 8.229125 0.195886 1 33057 7.817082 0.476102 3 28681 12.277230 0.076805 3 34042 10.055337 1.115778 3 29928 3.596002 1.485952 1 9734 2.755530 1.420655 2 7344 7.780991 0.513048 2 7387 
0.093705 0.391834 2 33957 8.481567 0.520078 3 9936 3.865584 0.110062 2 36094 9.683709 0.779984 3 39835 10.617255 1.359970 3 64486 7.203216 1.624762 1 0 7.601414 1.215605 2 39539 1.386107 1.417070 1 66972 9.129253 0.594089 1 15029 1.363447 0.620841 2 44909 3.181399 0.359329 1 38183 13.365414 0.217011 3 37372 4.207717 1.289767 1 0 4.088395 0.870075 2 17786 3.327371 1.142505 2 39055 1.303323 1.235650 1 37045 7.999279 1.581763 3 6435 2.217488 0.864536 2 72265 7.751808 0.192451 1 28152 14.149305 1.591532 3 25931 8.765721 0.152808 3 7538 3.408996 0.184896 2 1315 1.251021 0.112340 2 12292 6.160619 1.537165 2 49248 1.034538 1.585162 1 9025 0.000000 1.034635 2 13438 2.355051 0.542603 2 69683 6.614543 0.153771 1 25374 10.245062 1.450903 3 55264 3.467074 1.231019 1 38324 7.487678 1.572293 3 69643 4.624115 1.185192 1 44058 8.995957 1.436479 3 41316 11.564476 0.007195 3 29119 3.440948 0.078331 1 51656 1.673603 0.732746 1 3030 4.719341 0.699755 2 35695 10.304798 1.576488 3 1537 2.086915 1.199312 2 9083 6.338220 1.131305 2 47744 8.254926 0.710694 3 71372 16.067108 0.974142 1 37980 1.723201 0.310488 1 42385 3.785045 0.876904 1 22687 2.557561 0.123738 1 39512 9.852220 1.095171 3 11885 3.679147 1.557205 2 4944 9.789681 0.852971 2 73230 14.958998 0.526707 1 17585 11.182148 1.288459 3 68737 7.528533 1.657487 1 13818 5.253802 1.378603 2 31662 13.946752 1.426657 3 86686 15.557263 1.430029 1 43214 12.483550 0.688513 3 24091 2.317302 1.411137 1 52544 10.069724 0.766119 3 61861 5.792231 1.615483 1 47903 4.138435 0.475994 1 37190 12.929517 0.304378 3 6013 9.378238 0.307392 2 27223 8.361362 1.643204 3 69027 7.939406 1.325042 1 78642 10.735384 0.705788 1 30254 11.592723 0.286188 3 21704 10.098356 0.704748 3 34985 9.299025 0.545337 3 31316 11.158297 0.218067 3 76368 16.143900 0.558388 1 27953 10.971700 1.221787 3 152 0.000000 0.681478 2 9146 3.178961 1.292692 2 75346 17.625350 0.339926 1 26376 1.995833 0.267826 1 35255 10.640467 0.416181 3 19198 9.628339 0.985462 3 12518 4.662664 0.495403 2 
25453 5.754047 1.382742 2 12530 0.000000 0.037146 2 62230 9.334332 0.198118 1 9517 3.846162 0.619968 2 71161 10.685084 0.678179 1 1593 4.752134 0.359205 2 33794 0.697630 0.966786 1 39710 10.365836 0.505898 3 16941 0.461478 0.352865 2 69209 11.339537 1.068740 1 4446 5.420280 0.127310 2 9347 3.469955 1.619947 2 55635 8.517067 0.994858 3 65889 8.306512 0.413690 1 10753 2.628690 0.444320 2 7055 0.000000 0.802985 2 7905 0.000000 1.170397 2 53447 7.298767 1.582346 3 9194 7.331319 1.277988 2 61914 9.392269 0.151617 1 15630 5.541201 1.180596 2 79194 15.149460 0.537540 1 12268 5.515189 0.250562 2 33682 7.728898 0.920494 3 26080 11.318785 1.510979 3 19119 3.574709 1.531514 2 30902 7.350965 0.026332 3 63039 7.122363 1.630177 1 51136 1.828412 1.013702 1 35262 10.117989 1.156862 3 42776 11.309897 0.086291 3 64191 8.342034 1.388569 1 15436 0.241714 0.715577 2 14402 10.482619 1.694972 2 6341 9.289510 1.428879 2 14113 4.269419 0.134181 2 6390 0.000000 0.189456 2 8794 0.817119 0.143668 2 43432 1.508394 0.652651 1 38334 9.359918 0.052262 3 34068 10.052333 0.550423 3 30819 11.111660 0.989159 3 22239 11.265971 0.724054 3 28725 10.383830 0.254836 3 57071 3.878569 1.377983 1 72420 13.679237 0.025346 1 28294 10.526846 0.781569 3 9896 0.000000 0.924198 2 65821 4.106727 1.085669 1 7645 8.118856 1.470686 2 71289 7.796874 0.052336 1 5128 2.789669 1.093070 2 13711 6.226962 0.287251 2 22240 10.169548 1.660104 3 15092 0.000000 1.370549 2 5017 7.513353 0.137348 2 10141 8.240793 0.099735 2 35570 14.612797 1.247390 3 46893 3.562976 0.445386 1 8178 3.230482 1.331698 2 55783 3.612548 1.551911 1 1148 0.000000 0.332365 2 10062 3.931299 0.487577 2 74124 14.752342 1.155160 1 66603 10.261887 1.628085 1 11893 2.787266 1.570402 2 50908 15.112319 1.324132 3 39891 5.184553 0.223382 3 65915 3.868359 0.128078 1 65678 3.507965 0.028904 1 62996 11.019254 0.427554 1 36851 3.812387 0.655245 1 36669 11.056784 0.378725 3 38876 8.826880 1.002328 3 26878 11.173861 1.478244 3 46246 11.506465 0.421993 3 12761 7.798138 
0.147917 3 35282 10.155081 1.370039 3 68306 10.645275 0.693453 1 31262 9.663200 1.521541 3 34754 10.790404 1.312679 3 13408 2.810534 0.219962 2 30365 9.825999 1.388500 3 10709 1.421316 0.677603 2 24332 11.123219 0.809107 3 45517 13.402206 0.661524 3 6178 1.212255 0.836807 2 10639 1.568446 1.297469 2 29613 3.343473 1.312266 1 22392 5.400155 0.193494 1 51126 3.818754 0.590905 1 53644 7.973845 0.307364 3 51417 9.078824 0.734876 3 24859 0.153467 0.766619 1 61732 8.325167 0.028479 1 71128 7.092089 1.216733 1 27276 5.192485 1.094409 3 30453 10.340791 1.087721 3 18670 2.077169 1.019775 2 70600 10.151966 0.993105 1 12683 0.046826 0.809614 2 81597 11.221874 1.395015 1 69959 14.497963 1.019254 1 8124 3.554508 0.533462 2 18867 3.522673 0.086725 2 80886 14.531655 0.380172 1 55895 3.027528 0.885457 1 31587 1.845967 0.488985 1 10591 10.226164 0.804403 3 70096 10.965926 1.212328 1 53151 2.129921 1.477378 1 11992 0.000000 1.606849 2 33114 9.489005 0.827814 3 7413 0.000000 1.020797 2 10583 0.000000 1.270167 2 58668 6.556676 0.055183 1 35018 9.959588 0.060020 3 70843 7.436056 1.479856 1 14011 0.404888 0.459517 2 35015 9.952942 1.650279 3 70839 15.600252 0.021935 1 3024 2.723846 0.387455 2 5526 0.513866 1.323448 2 5113 0.000000 0.861859 2 20851 7.280602 1.438470 2 40999 9.161978 1.110180 3 15823 0.991725 0.730979 2 35432 7.398380 0.684218 3 53711 12.149747 1.389088 3 64371 9.149678 0.874905 1 9289 9.666576 1.370330 2 60613 3.620110 0.287767 1 18338 5.238800 1.253646 2 22845 14.715782 1.503758 3 74676 14.445740 1.211160 1 34143 13.609528 0.364240 3 14153 3.141585 0.424280 2 9327 0.000000 0.120947 2 18991 0.454750 1.033280 2 9193 0.510310 0.016395 2 2285 3.864171 0.616349 2 9493 6.724021 0.563044 2 2371 4.289375 0.012563 2 13963 0.000000 1.437030 2 2299 3.733617 0.698269 2 5262 2.002589 1.380184 2 4659 2.502627 0.184223 2 17582 6.382129 0.876581 2 27750 8.546741 0.128706 3 9868 2.694977 0.432818 2 18333 3.951256 0.333300 2 3780 9.856183 0.329181 2 18190 2.068962 0.429927 2 11145 
3.410627 0.631838 2 68846 9.974715 0.669787 1 26575 10.650102 0.866627 3 48111 9.134528 0.728045 3 43757 7.882601 1.332446 3

Code:

import numpy as np

import operator

import matplotlib.pyplot as plt

def knn_classifier(inX, train_data, labels, k):
    """
    Core implementation of the k-nearest-neighbor classifier.
    :param inX: the instance to classify
    :param train_data: the training data set
    :param labels: the labels of the training data set
    :param k: the number of nearest neighbors that vote
    :return: the predicted class
    """
    train_data_size = train_data.shape[0]  # number of training instances
    diff_matrix = np.tile(inX, (train_data_size, 1)) - train_data  # difference between inX and every training instance
    lp_diff_matrix = diff_matrix**2  # Euclidean distance is used here
    lp_distances = lp_diff_matrix.sum(axis=1)
    distances = lp_distances**0.5
    sorted_dist_indicies = np.argsort(distances)  # indices ordered by increasing Euclidean distance
    class_count = {}
    for i in range(k):
        vote_I_label = labels[sorted_dist_indicies[i]]  # label of the i-th nearest neighbor
        class_count[vote_I_label] = class_count.get(vote_I_label, 0) + 1  # tally the votes per class in a dict
    sorted_class_count = sorted(class_count.items(), key=operator.itemgetter(1), reverse=True)  # sort classes by vote count
    return sorted_class_count[0][0]  # the class with the most votes is the class of inX

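def file_to_matrix(filename):
    '''
    Convert the data file into a feature matrix and a list of labels.
    :param filename: path to a text file with one record per line
    :return: the feature matrix and the list of class labels
    '''
    with open(filename) as f:  # the file is closed automatically
        lines = [line.strip() for line in f if line.strip()]  # skip blank lines
    lines_number = len(lines)
    feature = len(lines[0].split())  # number of fields per record
    matrix = np.zeros((lines_number, feature - 1))
    class_label_vector = []
    index = 0
    for line in lines:
        item = line.split()  # split on any whitespace
        matrix[index, :] = item[0:feature - 1]  # every field except the last is a feature
        class_label_vector.append(item[-1])  # the last field is the class label (kept as a string)
        index += 1
    return matrix, class_label_vector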

def auto_norm(data_set):
    """
    Min-max normalization: removes the effect of attributes that have
    different units and widely different magnitudes.
    Formula: newValue = (oldValue - min) / (max - min), giving values in [0, 1].
    :param data_set: feature matrix, one row per instance
    :return: the normalized matrix, the per-column ranges, and the per-column minima
    """
    min_vals = data_set.min(0)
    max_vals = data_set.max(0)
    ranges = max_vals - min_vals
    m = data_set.shape[0]
    data_set = data_set - np.tile(min_vals, (m, 1))
    data_set = data_set / np.tile(ranges, (m, 1))
    return data_set, ranges, min_vals

def dating_class_test():
    """
    Test kNN classification on the dating data set data2.txt.
    :return: None
    """
    ho_ratio = 0.1
    dating_matrix, dating_labels = file_to_matrix('data2.txt')  # read the data
    norm_matrix, ranges, min_vals = auto_norm(dating_matrix)  # normalize the data
    m = norm_matrix.shape[0]  # number of rows
    num_test_vecs = int(m * ho_ratio)  # hold out the first 10% as test data
    error_count = 0.0  # number of misclassified test instances
    for i in range(num_test_vecs):
        classifier = knn_classifier(norm_matrix[i, :], norm_matrix[num_test_vecs:m, :], dating_labels[num_test_vecs:m], 4)  # predict with kNN, k = 4
        print("the classifier came back with: " + classifier + " the real answer is: " + dating_labels[i])
        if classifier != dating_labels[i]:
            error_count += 1.0
    print('the total error rate is: %f' % (error_count / float(num_test_vecs)))

if __name__ == "__main__":
    dating_class_test()
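The code above evaluates a single value, k = 4, while the stated task is to compare error rates across several K values. One way to do that is sketched below as a self-contained example; the names `holdout_error` and `best_k` are hypothetical, and both the features and the labels are assumed to be NumPy arrays (the features already normalized).

```python
import numpy as np

def holdout_error(features, labels, k, ho_ratio=0.1):
    """Error rate when the first ho_ratio of the rows are classified from the rest."""
    n_test = int(len(features) * ho_ratio)
    train_x, train_y = features[n_test:], labels[n_test:]
    errors = 0
    for x, y in zip(features[:n_test], labels[:n_test]):
        nearest = np.argsort(np.linalg.norm(train_x - x, axis=1))[:k]
        values, counts = np.unique(train_y[nearest], return_counts=True)
        predicted = values[np.argmax(counts)]  # majority class among the k neighbors
        errors += int(predicted != y)
    return errors / n_test

def best_k(features, labels, candidates, ho_ratio=0.1):
    """Return the candidate k with the lowest hold-out error, plus all error rates."""
    rates = {k: holdout_error(features, labels, k, ho_ratio) for k in candidates}
    return min(rates, key=rates.get), rates
```

With the functions defined earlier, a call such as `best_k(norm_matrix, np.array(dating_labels), range(1, 21))` would return the K with the lowest hold-out error together with the error rate for each candidate. When several K values tie, the first candidate in the list wins.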