Installing Oracle 11gR2 RAC on Red Hat 5.4 (32-bit)

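1 Planning

1.1 Overall plan

1.2 Topology diagram

2 Pre-installation configuration

2.1 Check physical memory on both machines

2.2 Check swap and /tmp on both machines

2.3 Verify the OS version and architecture

2.4 Check the date and time on each node

2.5 Check and install the required packages

2.6 Configure the network and the hosts file

2.7 Create the grid and oracle users and the required groups

2.8 Set environment variables for the grid and oracle users

2.9 Configure SSH mutual trust for the grid user

2.9.1 Configure SSH mutual trust for the grid user

2.9.2 Test SSH mutual trust for the grid user

2.10 Configure SSH mutual trust for the oracle user

2.10.1 Configure SSH mutual trust for the oracle user

2.10.2 Test SSH mutual trust for the oracle user

2.11 Configure kernel parameters and Oracle-related shell limits

2.12 Disable SELinux

2.13 Configure time synchronization

2.14 Configure ASM disks

2.14.1 Install the ASM packages

2.14.2 Partition the shared disks

2.14.3 Configure the ASM driver

2.14.4 Create the ASM disk groups

2.14.5 Scan for the disks on the nodes

2.15 Install the cvuqdisk package for Linux

3 Pre-installation checks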

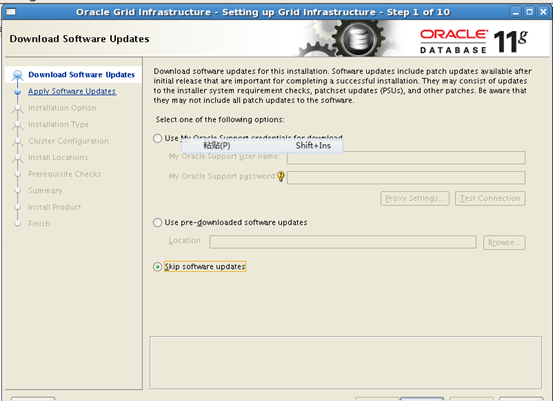

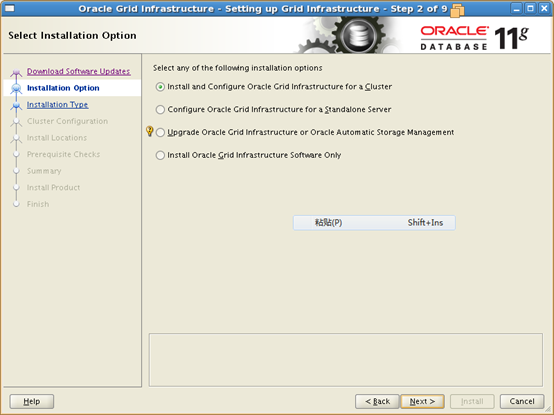

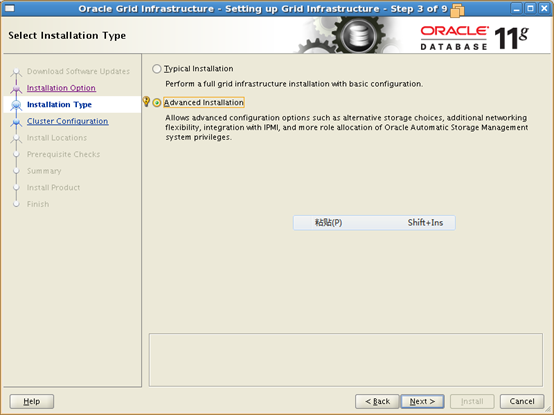

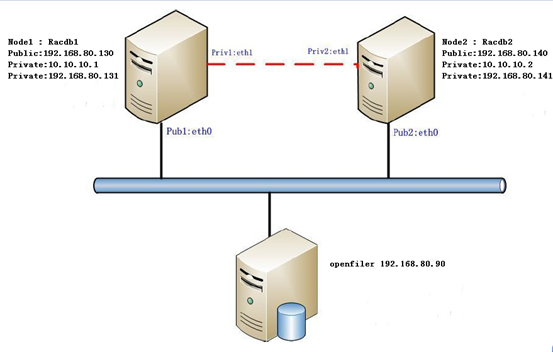

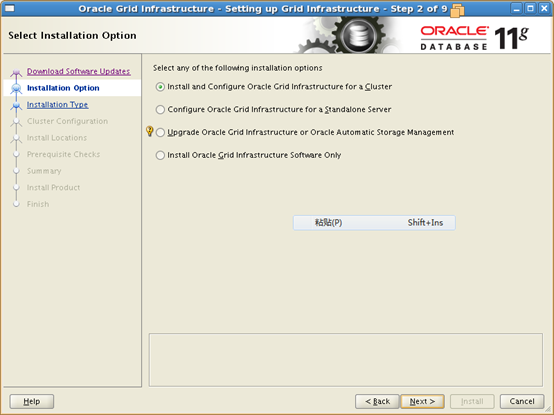

4 Install Oracle Grid Infrastructure for a cluster 51

4.1运行 ./runInstaller进入安装界面 51

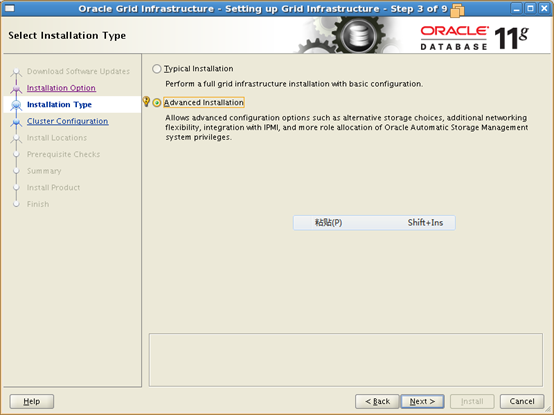

4.2选择"Advanced Installation" 52

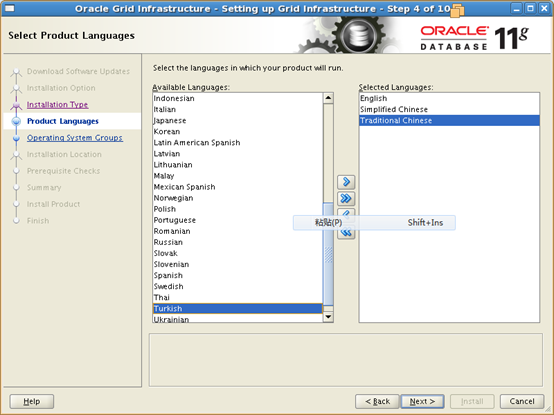

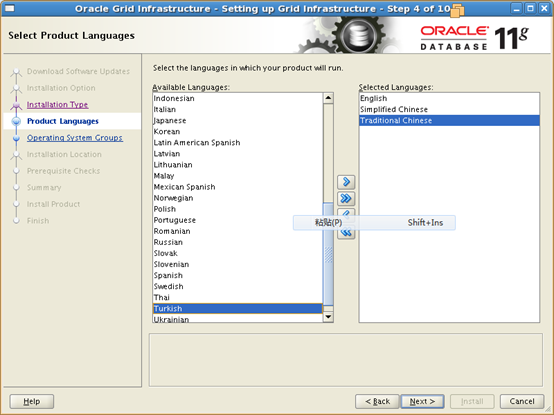

4.3选择安装语言,默认的 next 53

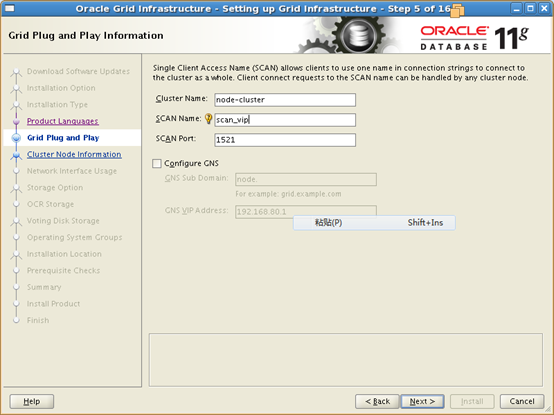

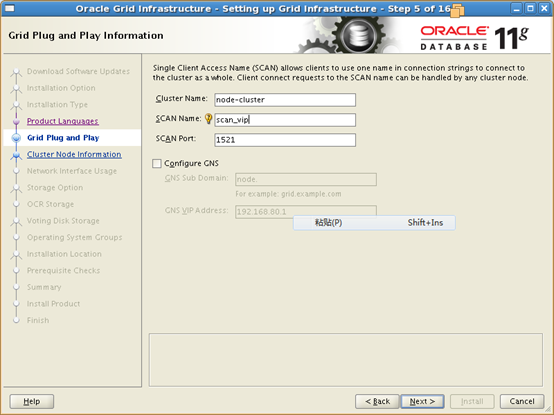

4.4Grid Plug and Play Information 54

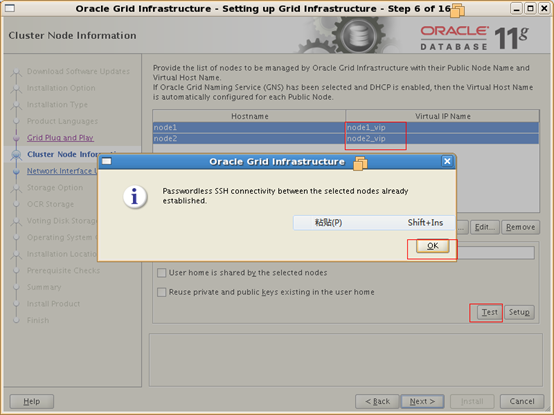

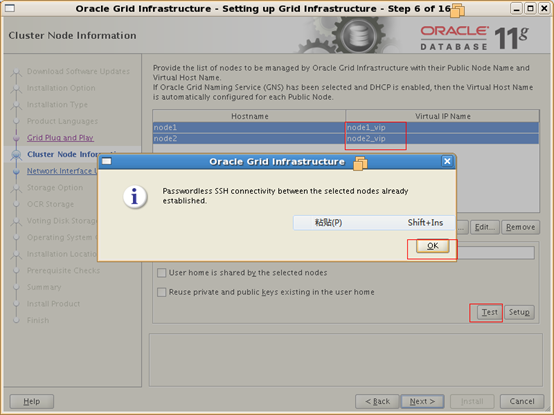

4.5Cluster Node Information 55

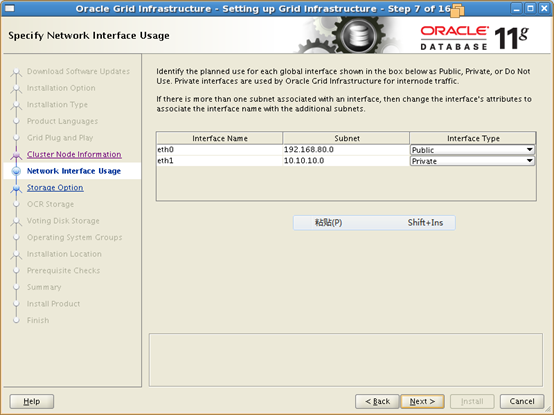

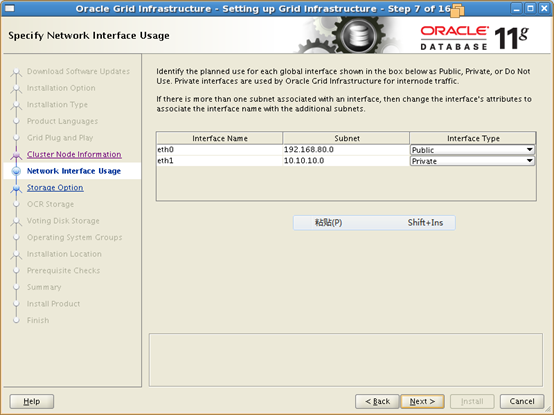

4.6Specify Network Interface Usage 56

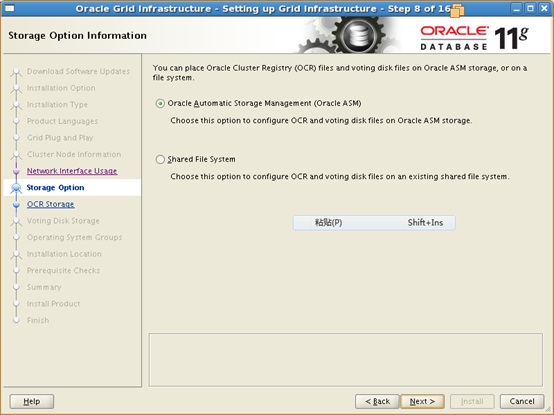

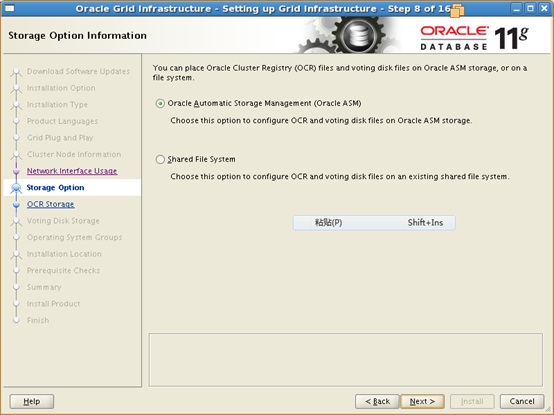

4.7Storage Option Information 57

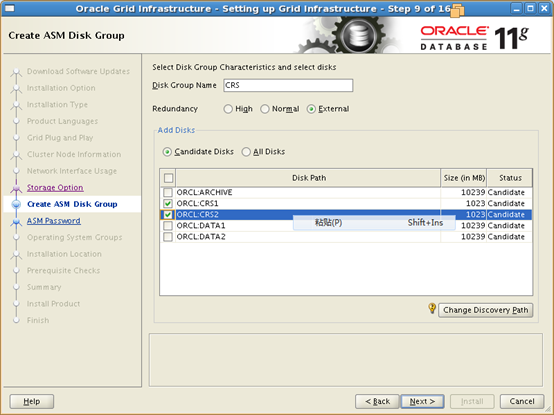

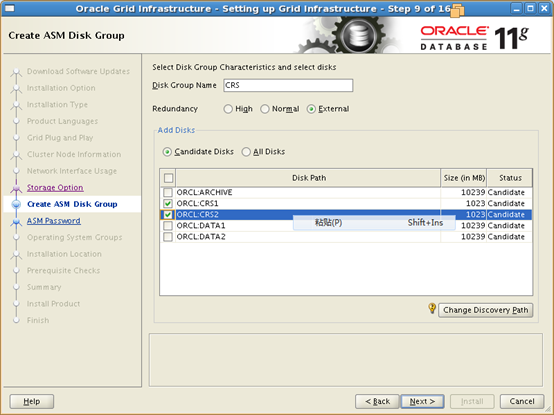

4.8Create ASM Disk Group 58

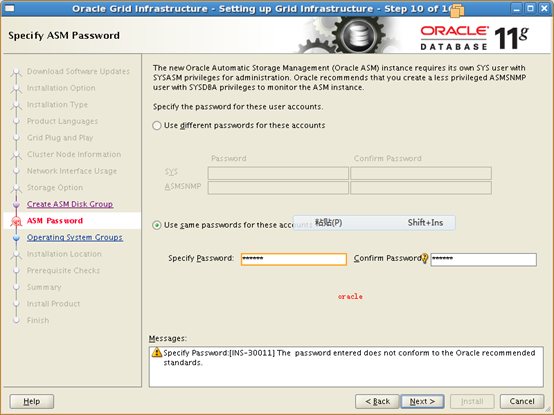

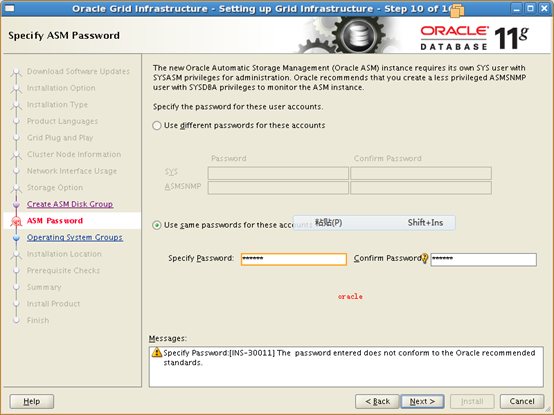

4.9ASM Password 59

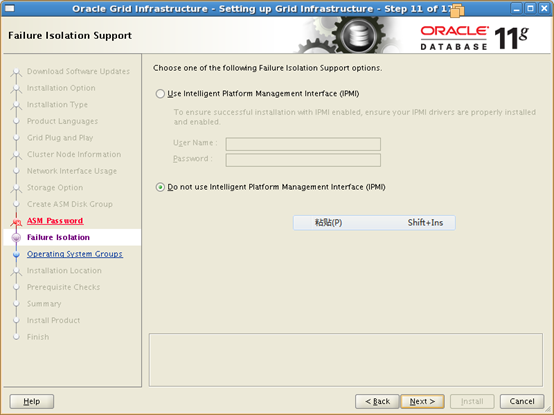

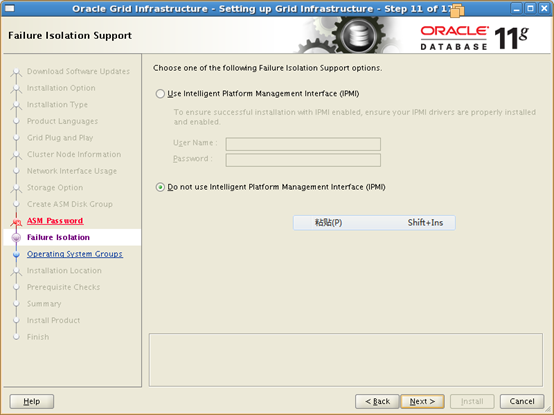

4.10Failure Isolation Support 60

4.11Privileged Operating System Groups 61

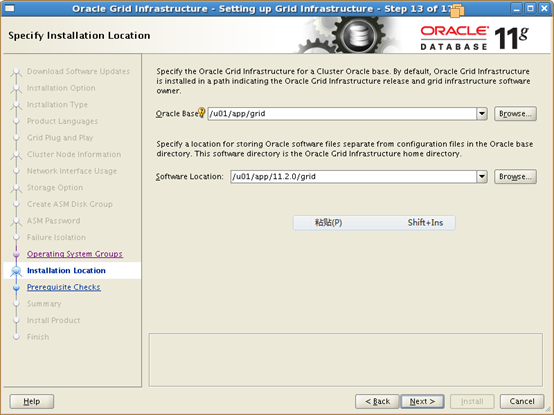

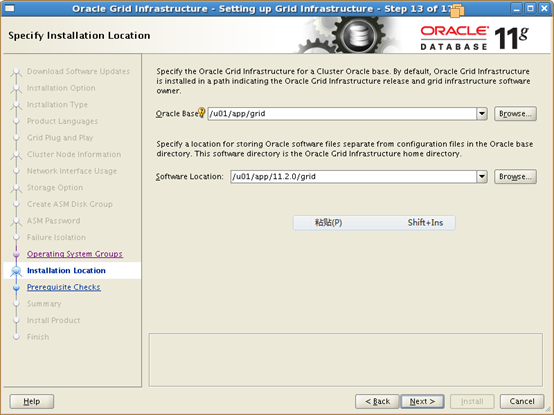

4.12Specify Installation Location 61

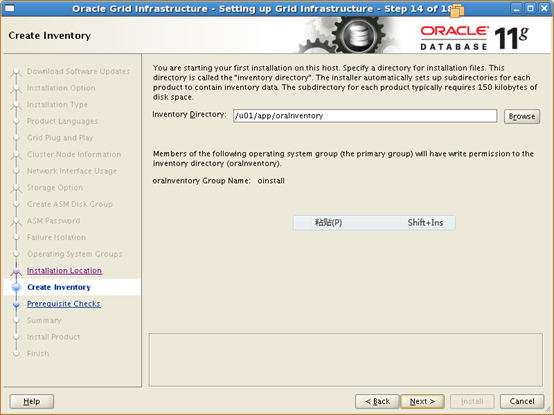

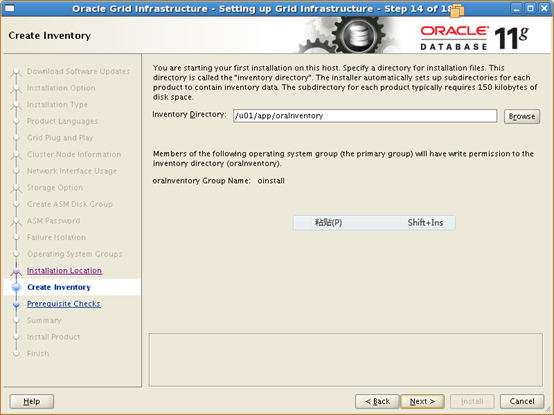

4.13Create Inventory 63

4.14Perform Prerequisite Checks 64

4.15Summary Informations 65

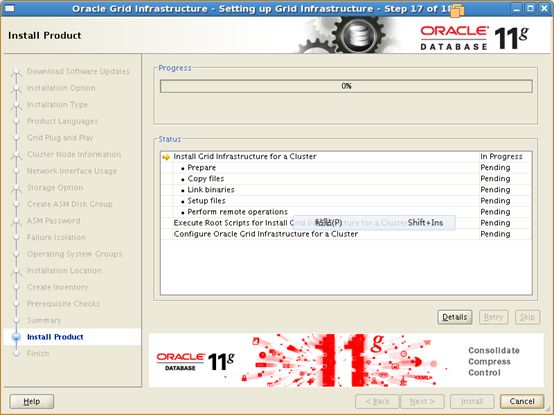

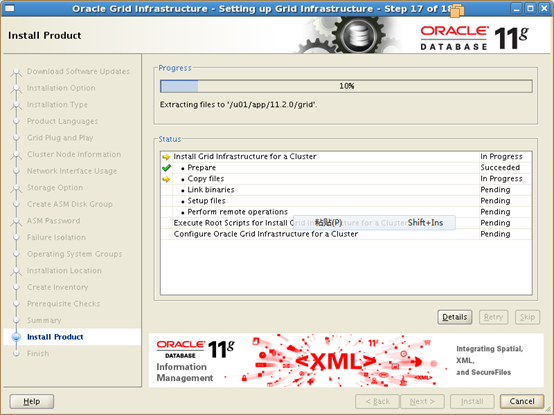

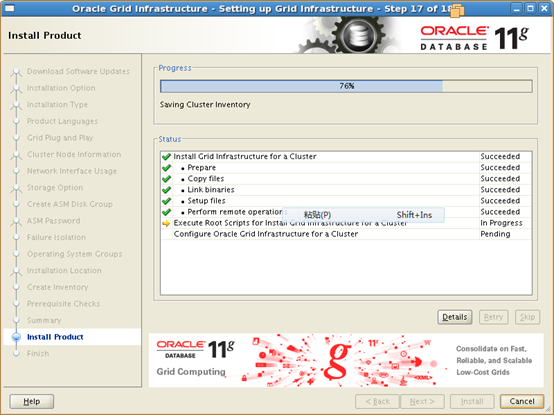

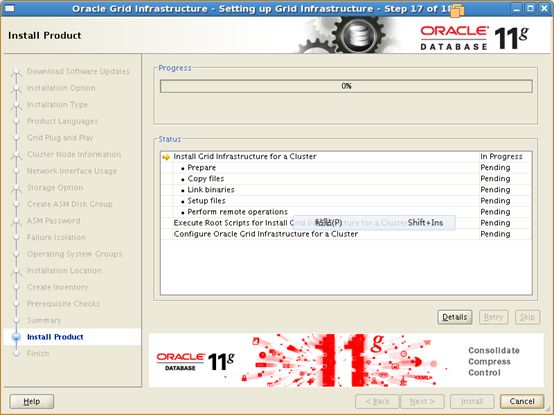

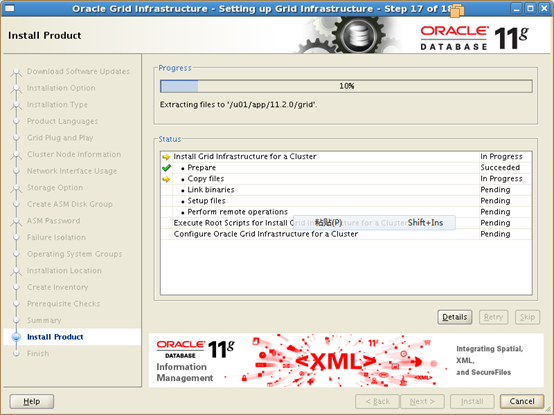

4.16Setup 66

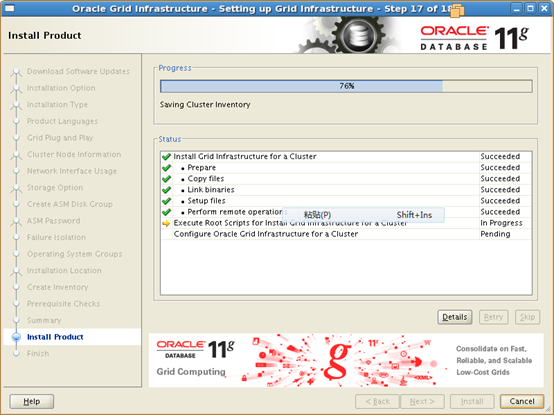

4.17RAC Nodes通过root用户执行对应的脚本 67

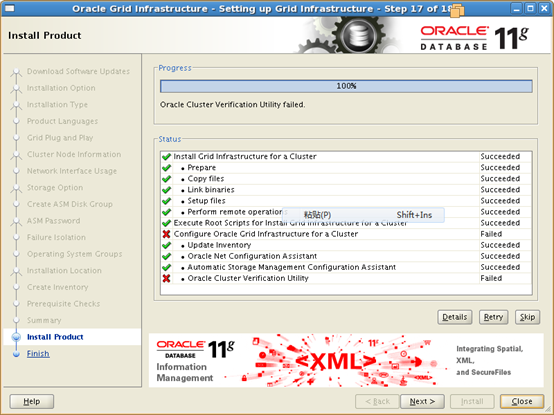

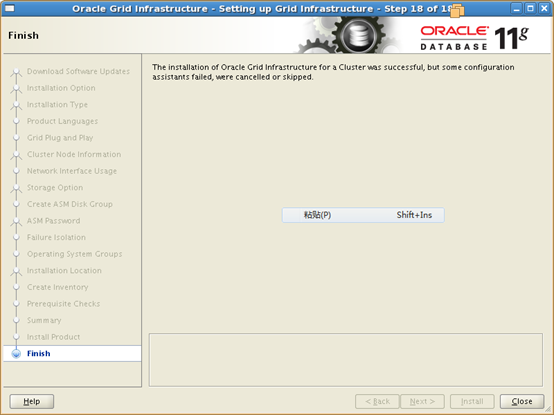

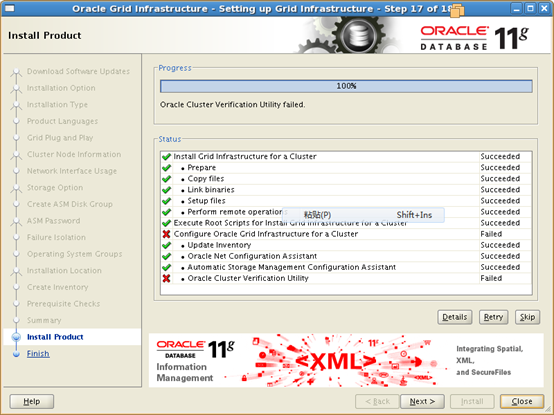

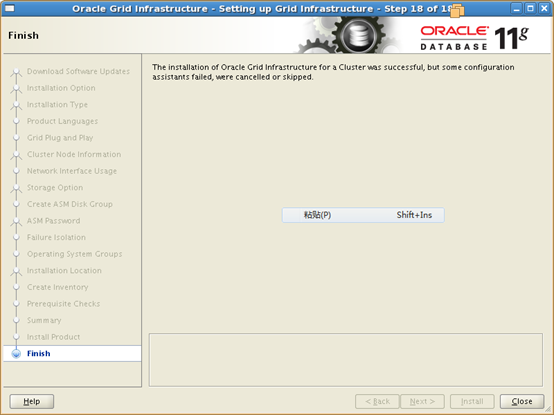

4.18所有组件安装完成 72

4.19Oracle CVU检查没有错误, 整个过程安装成功close窗口 73

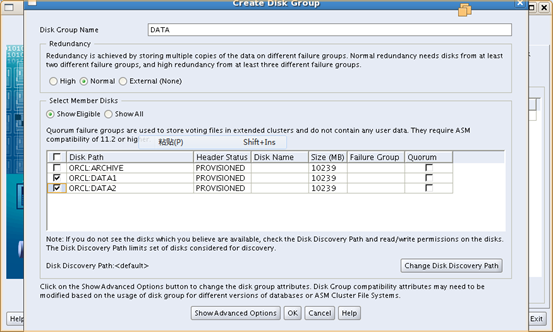

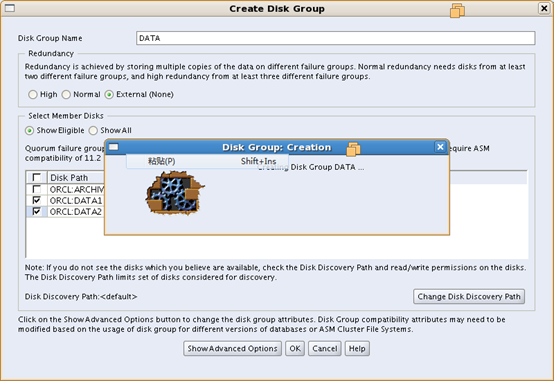

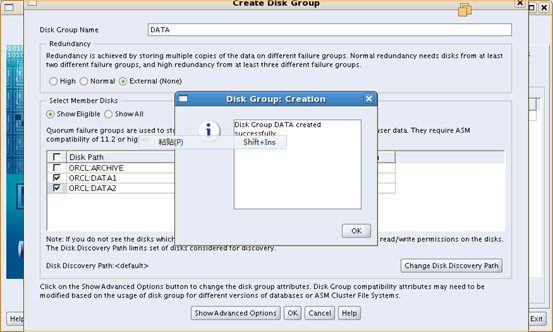

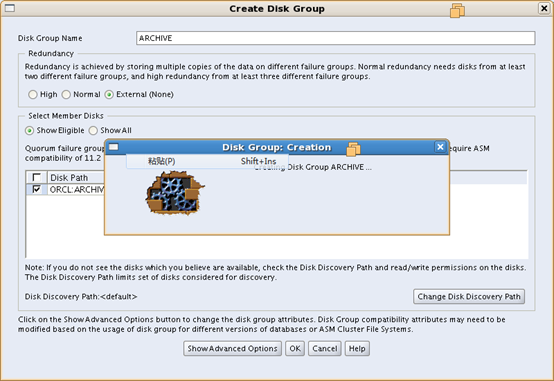

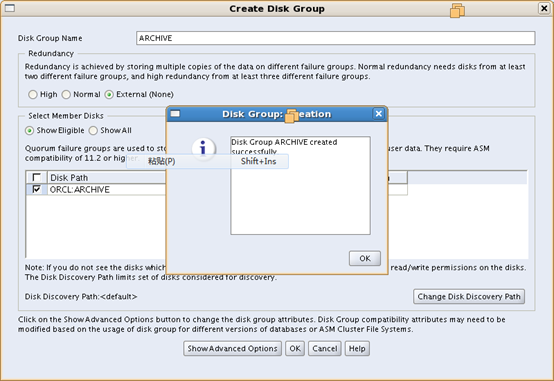

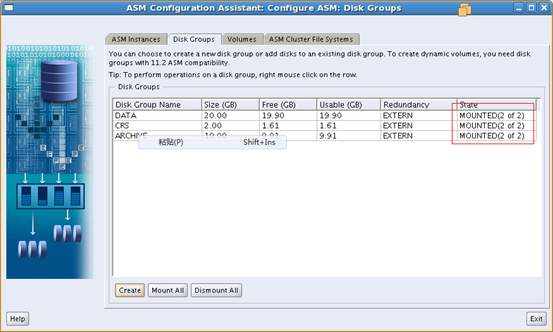

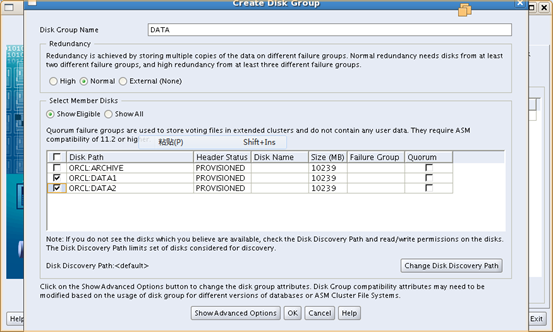

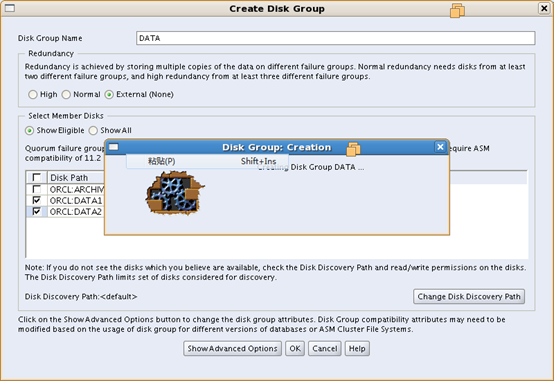

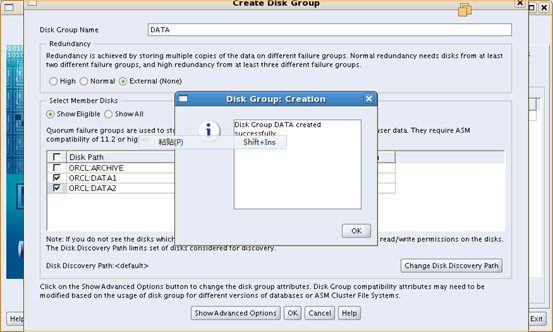

5 配置另外两个ASM Disk Group 73

5.1以grid用户在任意一节点运行asmca,进入配置界面 73

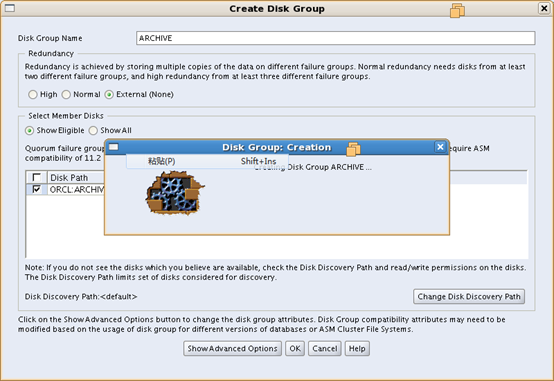

5.2点击create创建磁盘组 74

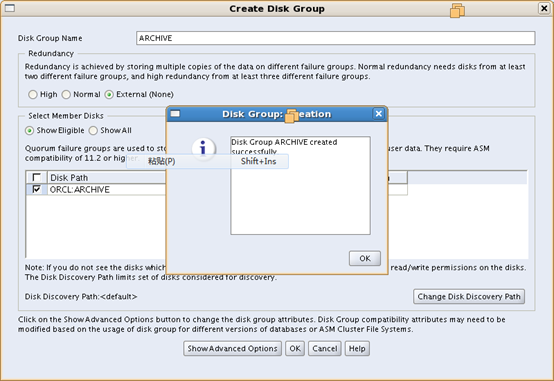

5.3浏览已经创建好的磁盘组 77

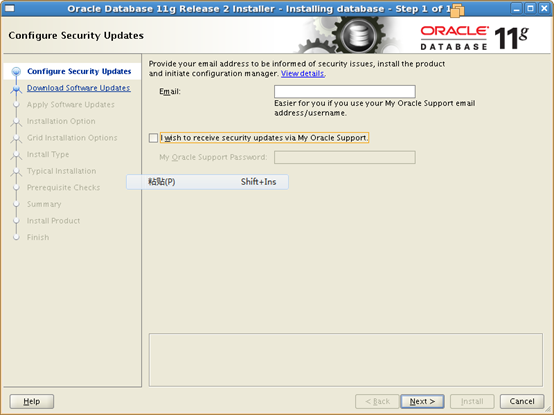

6 Oracle RAC安装 77

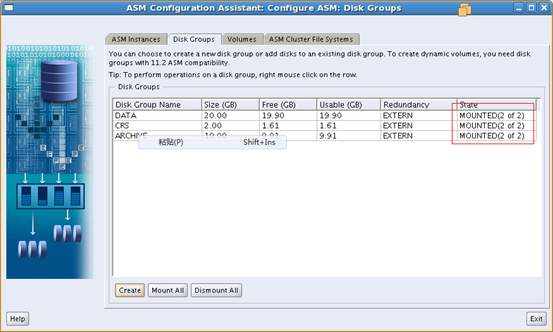

6.1以 Oracle用户在任意一节点运行./runInstaller 77

6.2Configrue Security Updates 78

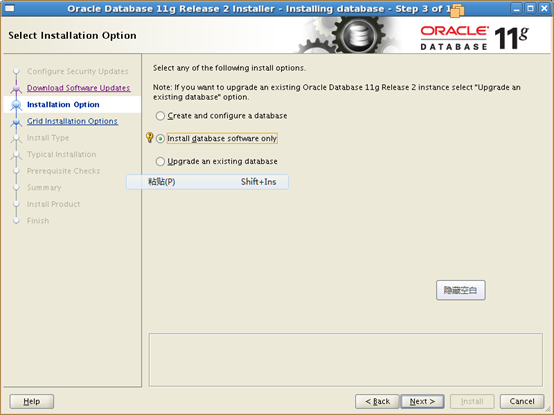

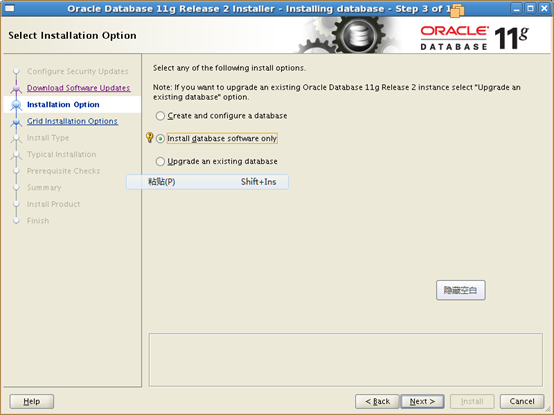

6.3Select Installation Option 79

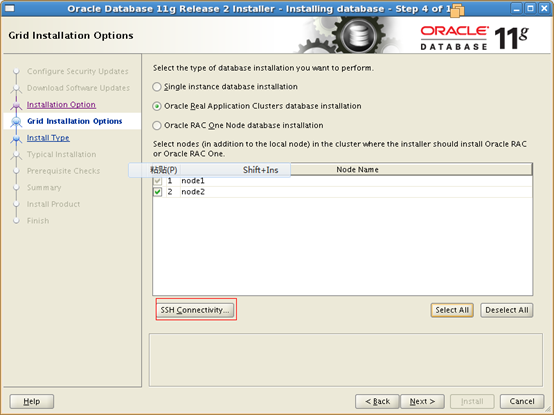

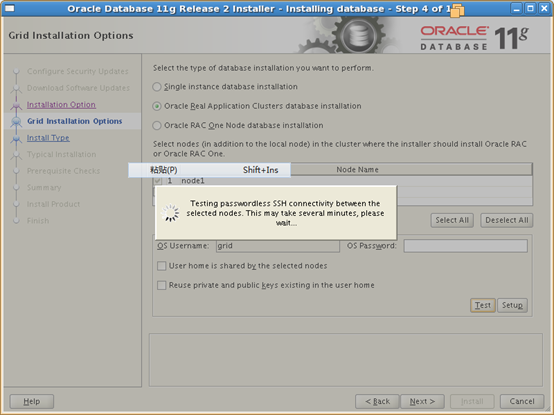

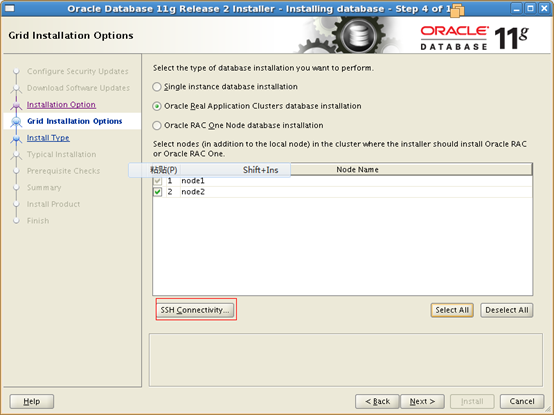

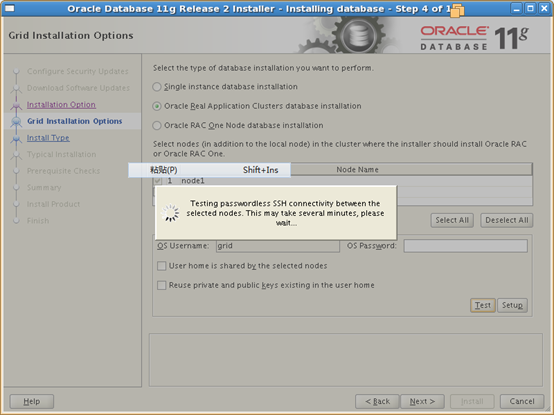

6.4Nodes Selection 80

6.5Select Product Languages 81

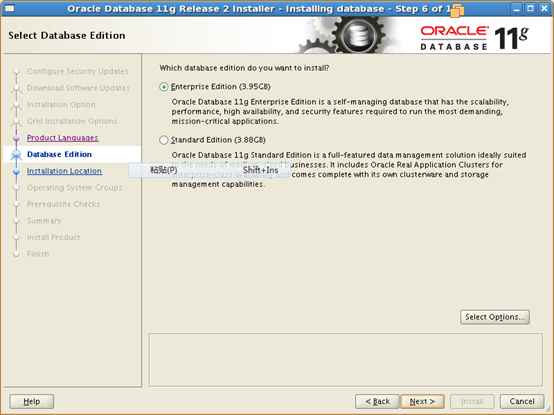

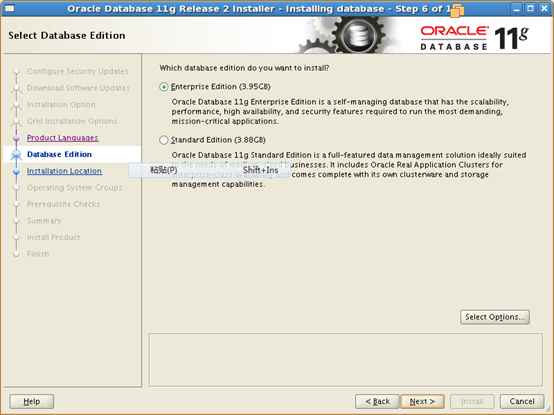

6.6Select Database Edition 82

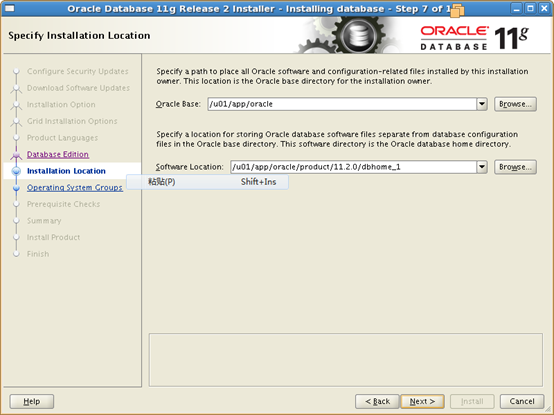

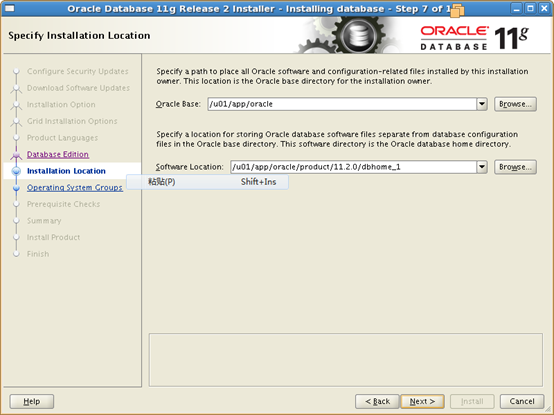

6.7Specify Installation Location 83

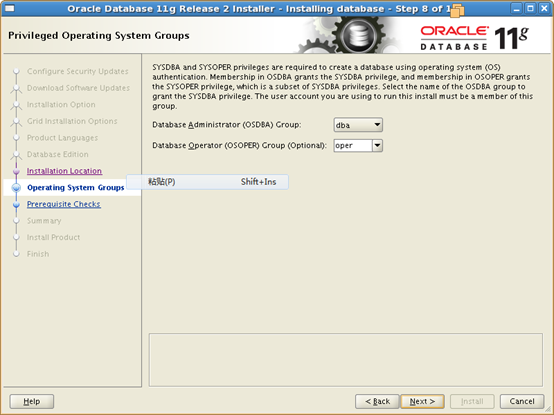

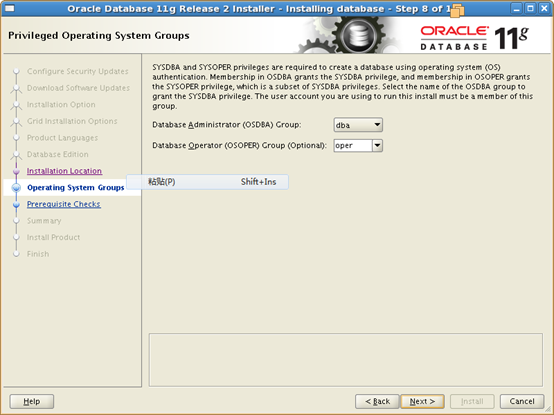

6.8Privilege Operating System Groups 84

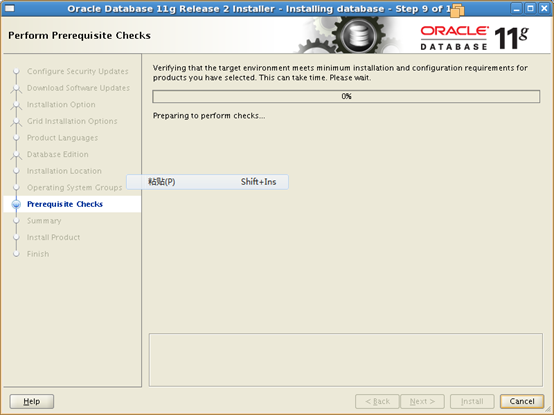

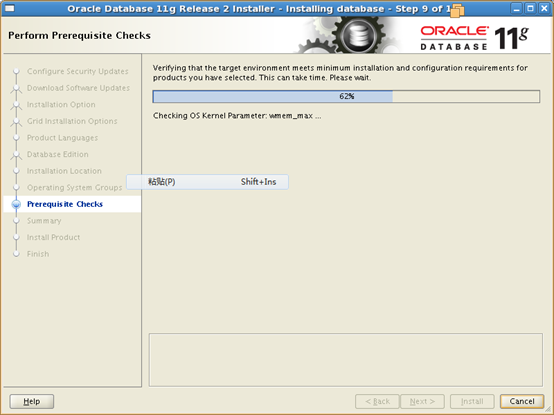

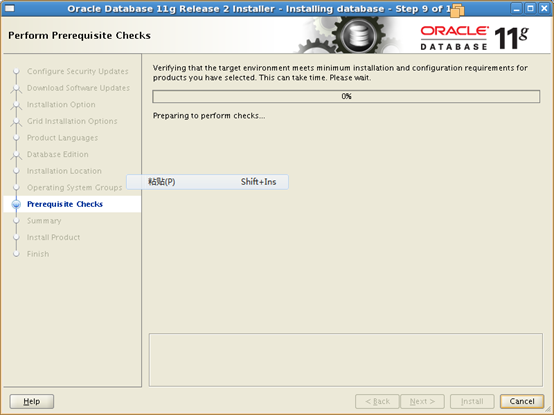

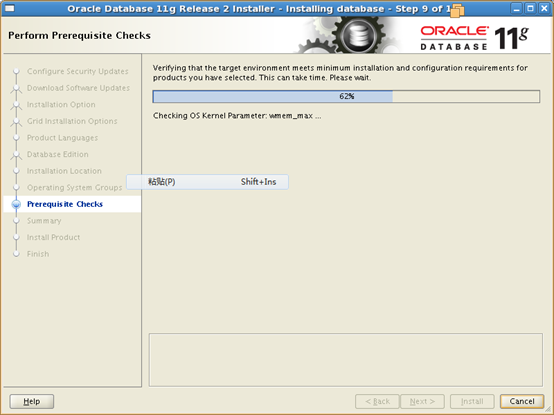

6.9Perform Prerequisite Checks 85

6.10Summary Informations 86

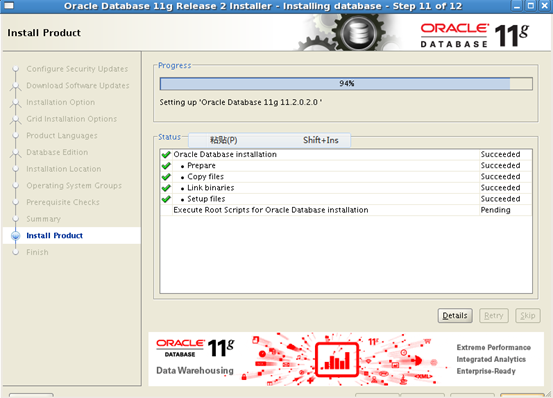

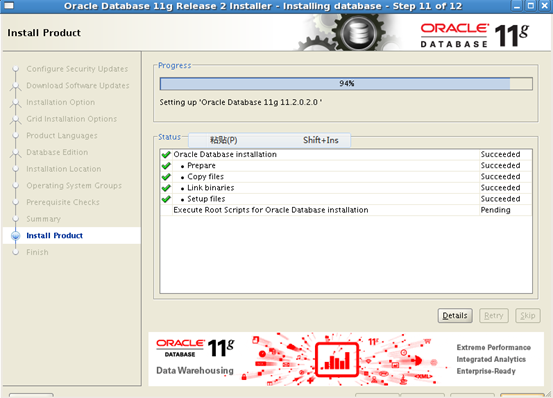

6.11Install Product 87

6.12以root用户在两个节点运行root.sh脚本 88

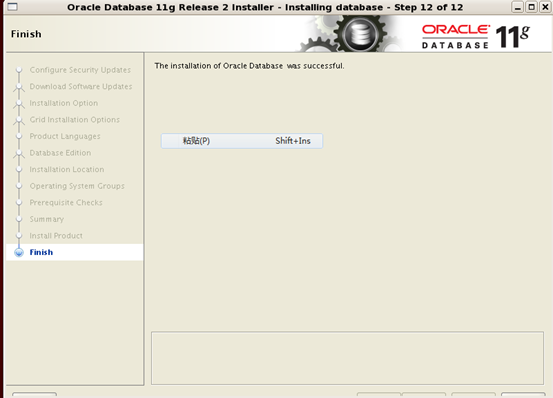

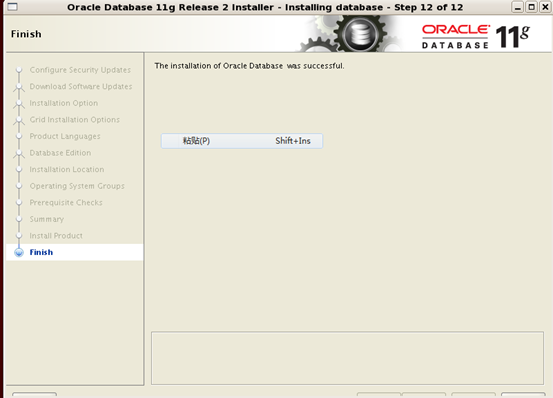

6.13安装成功,关闭窗口 89

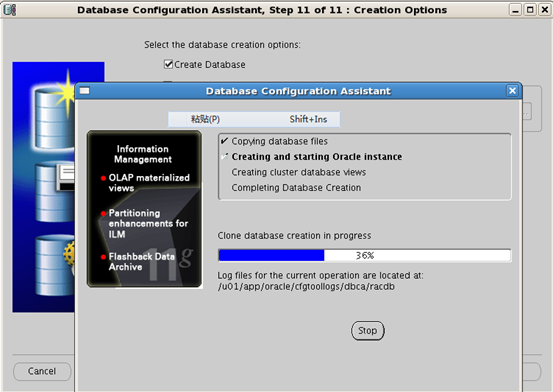

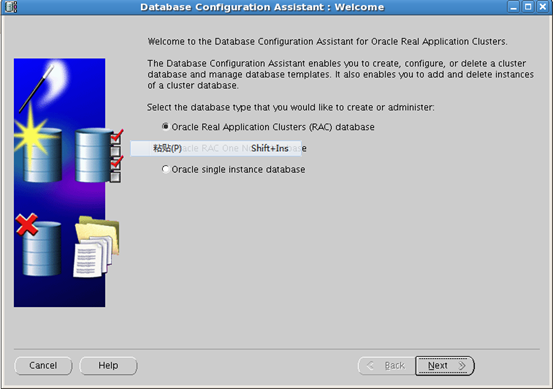

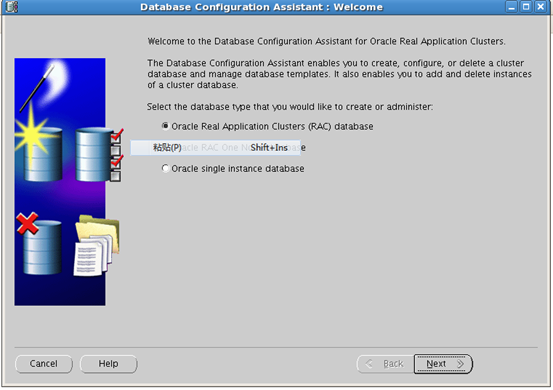

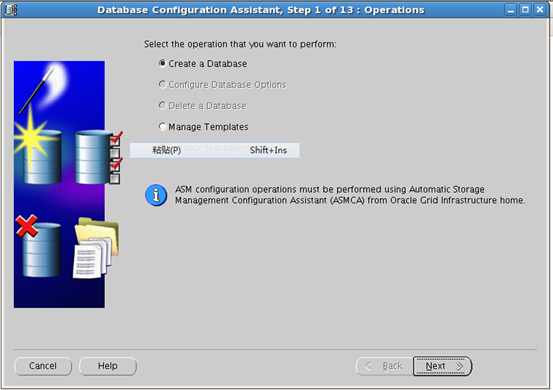

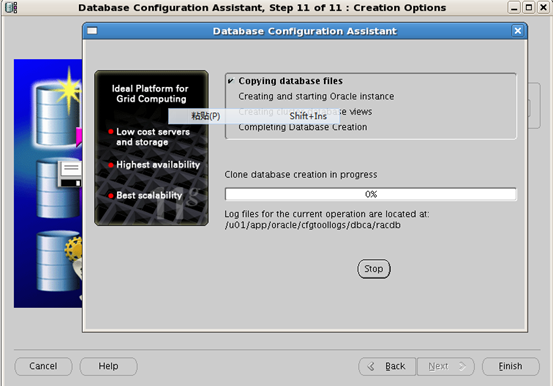

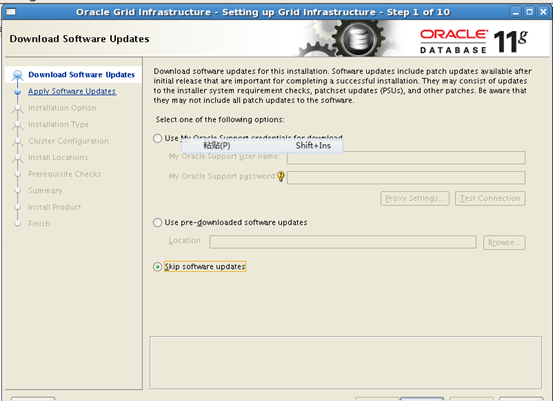

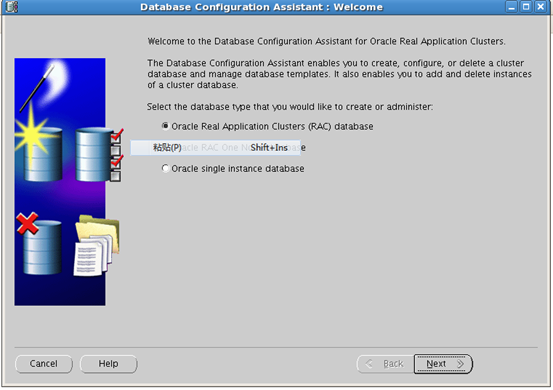

7创建集群数据库 90

7.1以Oracle用户在任意节点运行dbca 90

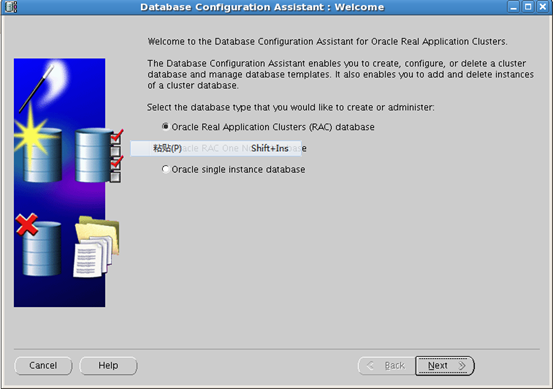

7.2选择"Oracle Real Application Clusters Database" 91

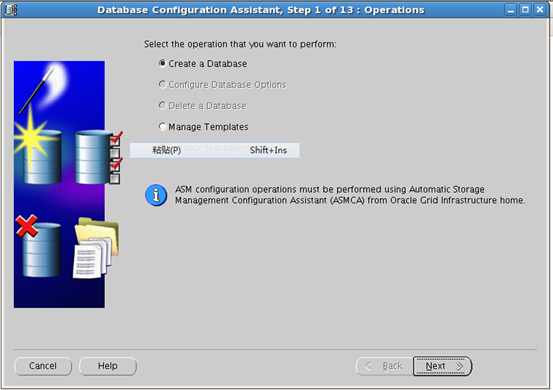

7.3选择"Create a Database" 91

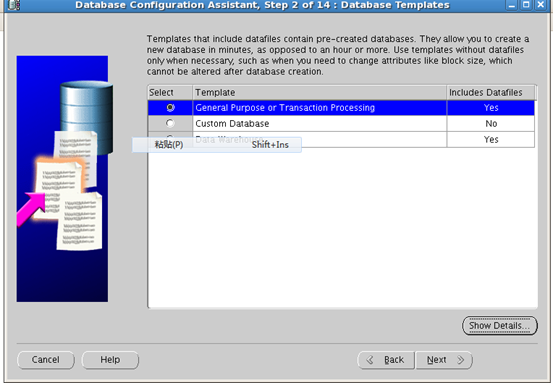

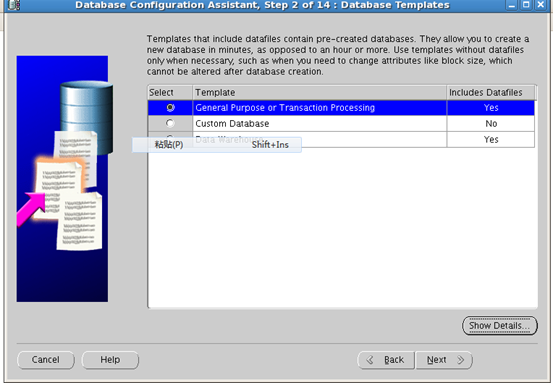

7.4选择"Genera Purpose or Transaction Processing" 92

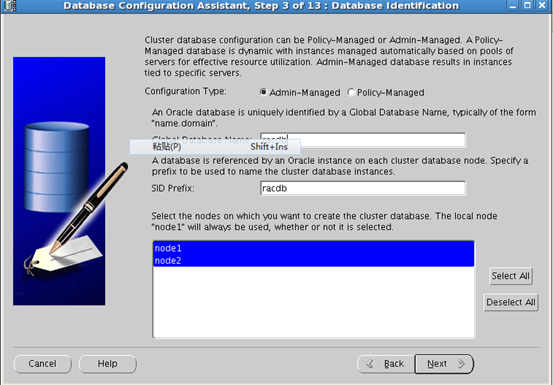

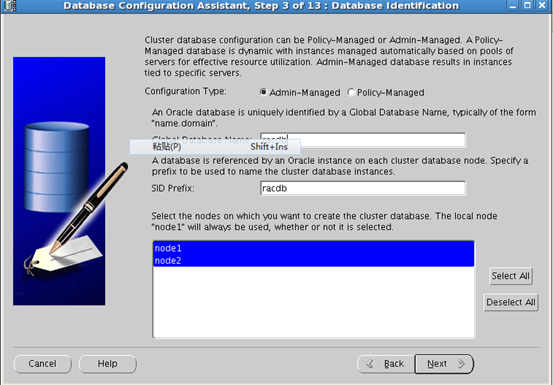

7.5Database Identitification 92

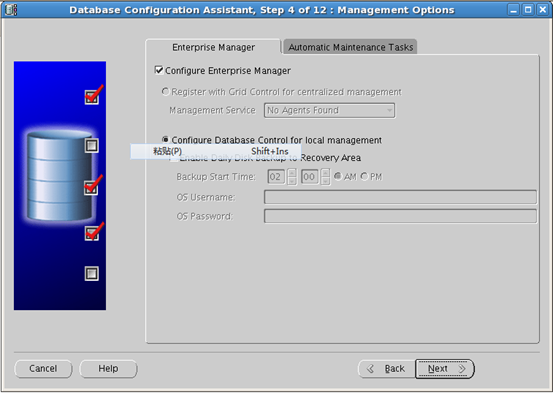

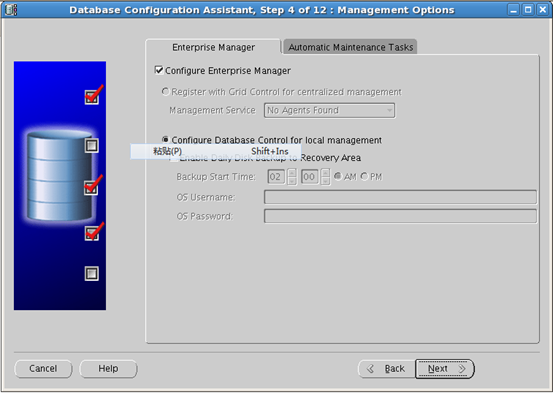

7.6选择配置EM 93

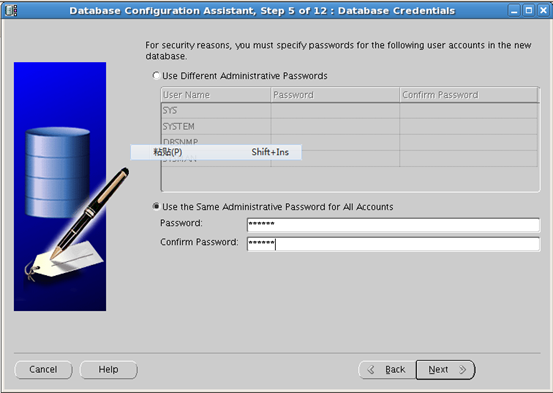

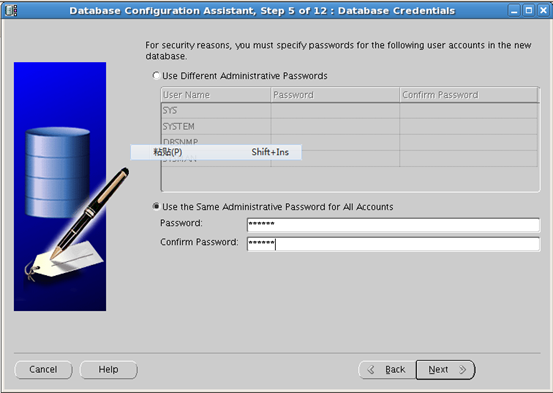

7.7数据库密码 93

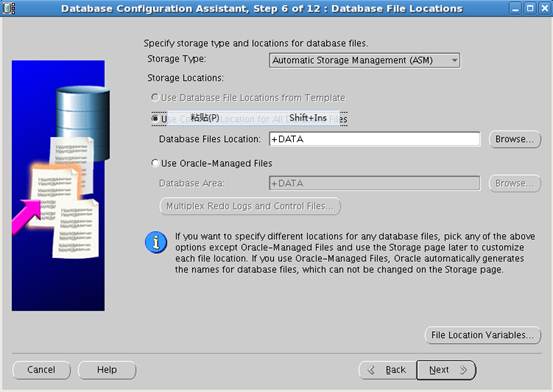

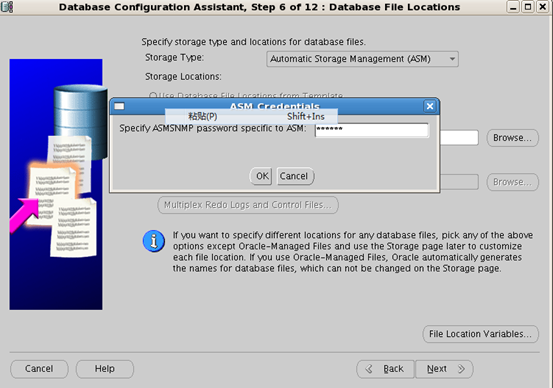

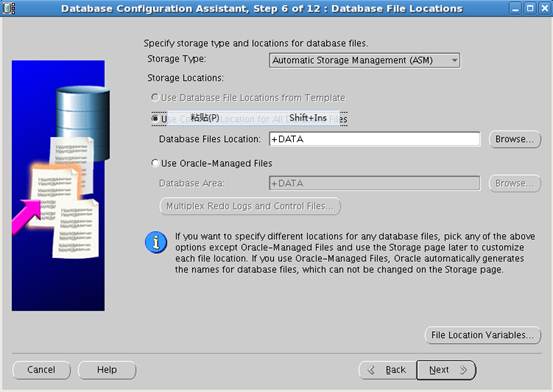

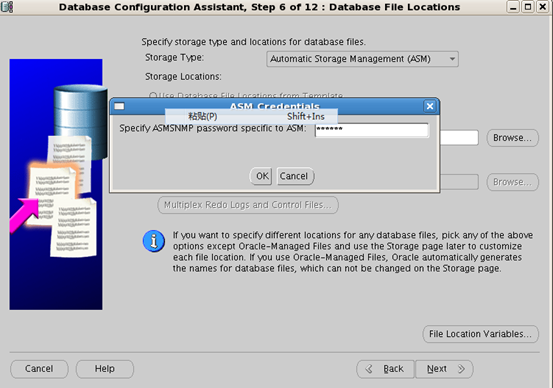

7.8设置数据库文件存储位置 94

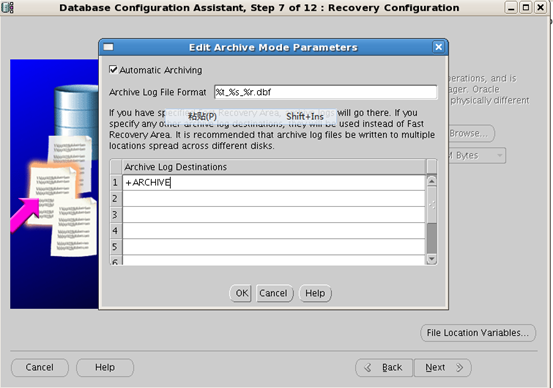

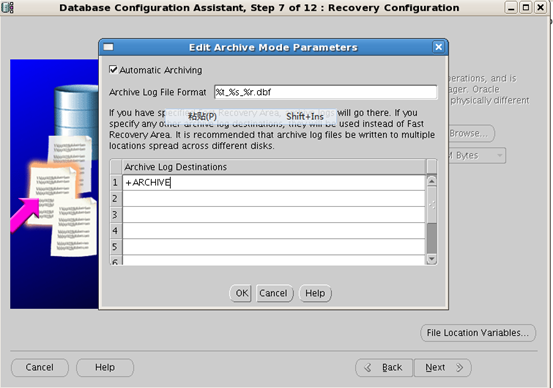

7.9归档配置 95

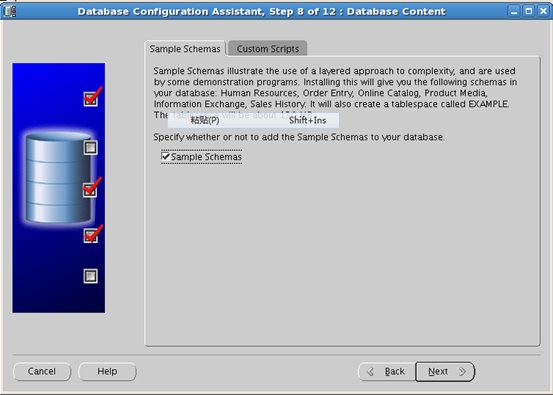

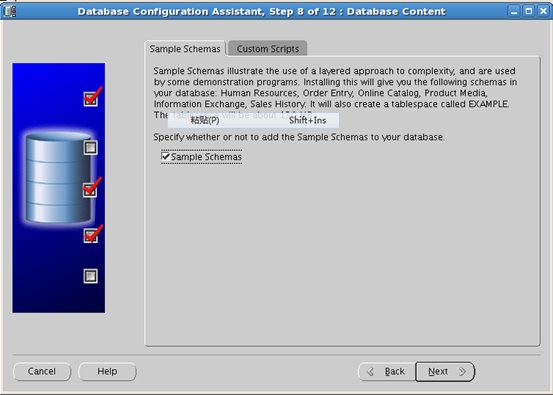

7.10勾选Sample(也可以不选) 95

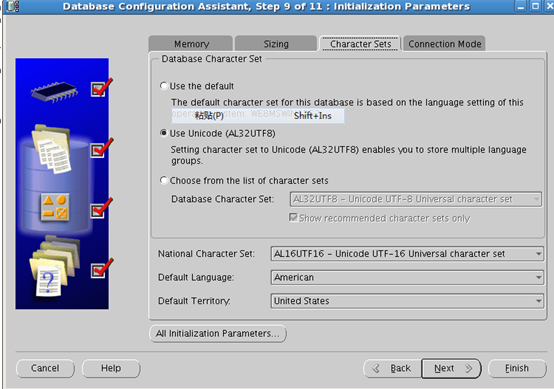

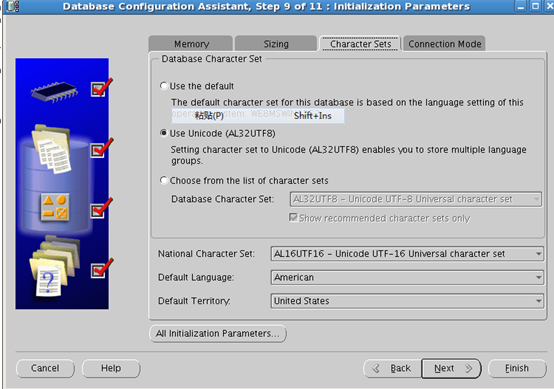

7.11配置所有初始化参数 96

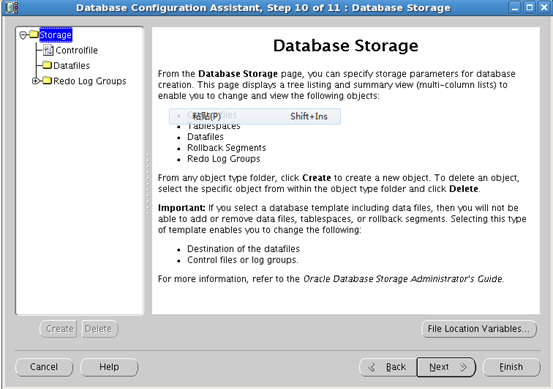

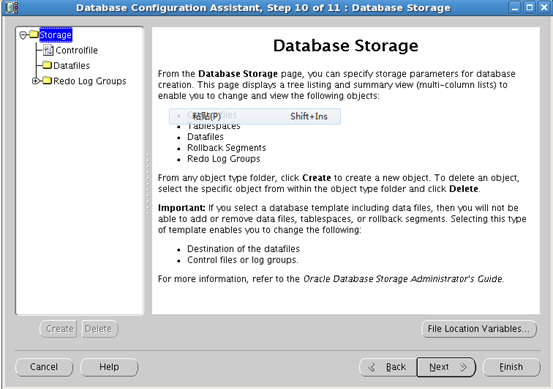

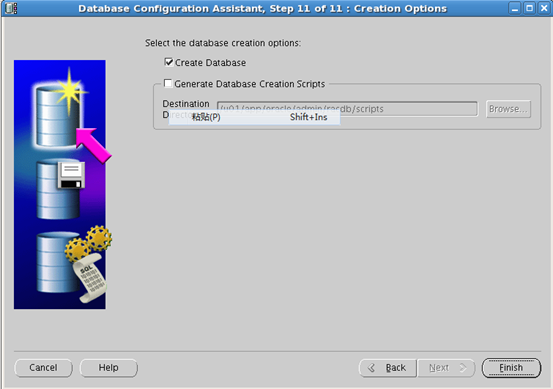

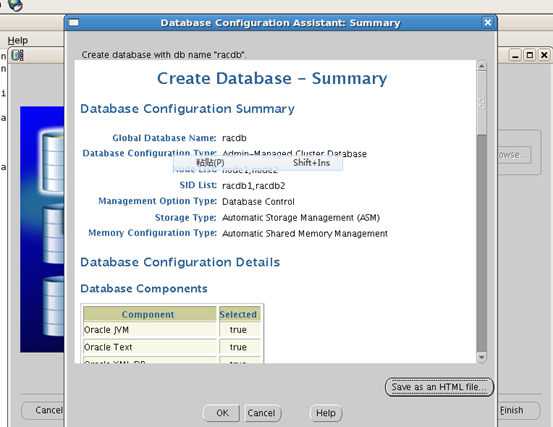

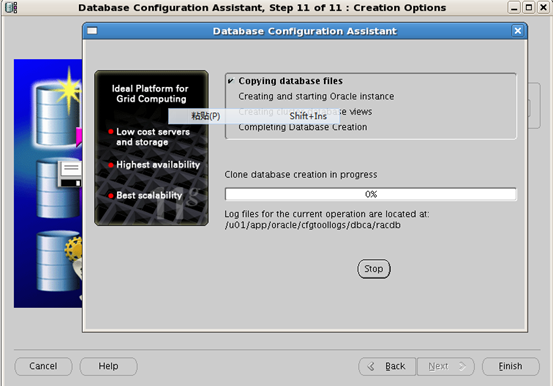

7.12开始创建数据库 97

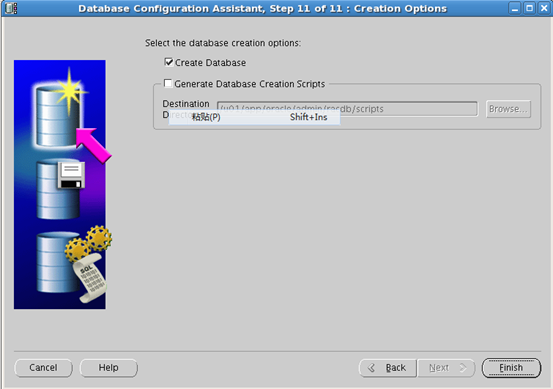

7.13统计信息 98

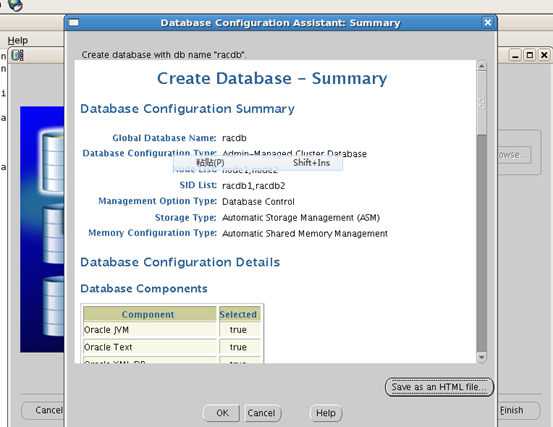

7.14数据库已经创建完毕 99

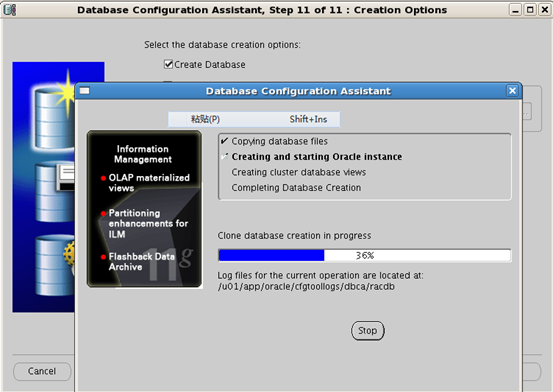

1 Planning

1.1 Overall plan

Oracle RAC/Storage Server nodes

| Node name | Instance name | Database name | RAM | OS |
| --- | --- | --- | --- | --- |
| Node1 | racdb1 | racdb | 800M | Redhat5.4 32bit |
| Node2 | racdb2 | racdb | 800M | Redhat5.4 32bit |
| openfiler | | | 1G | 32bit |

Nodes Network Configuration

| Identity | Name | Type | IP Address | Resolved By |
| --- | --- | --- | --- | --- |
| Node1 Public | Node1-public | Public | 192.168.80.130 | /etc/hosts |
| Node1 Private | Node1-private | Private | 10.10.10.1 | /etc/hosts |
| Node1 VIP | Node1-vip | Virtual | 192.168.80.131 | /etc/hosts |
| Node2 Public | Node2-public | Public | 192.168.80.140 | /etc/hosts |
| Node2 Private | Node2-private | Private | 10.10.10.2 | /etc/hosts |
| Node2 VIP | Node2-vip | Virtual | 192.168.80.141 | /etc/hosts |
| SCAN VIP | Scan_vip | Virtual | 192.168.80.150 | /etc/hosts |

Oracle Software Components

| Software Components | Grid Infrastructure | Oracle RAC |
| --- | --- | --- |
| OS User | grid | oracle |
| Primary Group | oinstall | oinstall |
| Supplementary Groups | asmadmin,asmdba,asmoper,dba | dba,oper,asmdba |
| Home Directory | /home/grid | /home/oracle |
| Oracle Base | /u01/app/grid | /u01/app/oracle |
| Oracle Home | /u01/app/11.2.0/grid | /u01/app/11.2.0/oracle |

Storage Components

| Storage item | File system | Size | ASM disk group | Redundancy |
| --- | --- | --- | --- | --- |
| OCR/Voting | ASM | 1G | CRS | External |
| Datafile | ASM | 20G | DATA | External |
| Archive | ASM | 10G | ARCHIVE | External |

1.2 Topology diagram

2 Pre-installation configuration

2.1 Check physical memory on both machines

[root@node1 ~]# top | grep Mem

Mem: 807512k total, 449292k used, 358220k free, 28976k buffers

[root@node2 ~]# top | grep Mem

Mem: 807512k total, 439164k used, 368348k free, 28912k buffers

2.2 Check swap and /tmp on both machines

[root@node1 ~]# top | grep Swap

Swap: 2096472k total, 0k used, 2096472k free, 358600k cached

[root@node2 ~]# top | grep Swap

Swap: 2096472k total, 0k used, 2096472k free, 353620k cached

[root@node1 ~]# df -h /tmp/

Filesystem Size Used Avail Use% Mounted on

/dev/sda3 28G 3.8G 22G 15% /

[root@node2 ~]# df -h /tmp/

Filesystem Size Used Avail Use% Mounted on

/dev/sda3 28G 3.8G 22G 15% /
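The memory and swap checks above can also be scripted instead of being read off `top`. The following is a minimal sketch, assuming a Linux `/proc/meminfo`; the function names `meminfo_kb` and `check_min_kb` and the example thresholds are illustrative and not part of the original procedure.

```shell
#!/bin/sh
# Print the value (in kB) of field $1 from /proc/meminfo-style text on stdin.
meminfo_kb() {
    awk -v key="$1:" '$1 == key { print $2; exit }'
}

# Succeed when field $1 in file $2 is at least $3 kB.
check_min_kb() {
    actual=$(meminfo_kb "$1" < "$2")
    [ -n "$actual" ] && [ "$actual" -ge "$3" ]
}

# Example calls (the thresholds are placeholders; take the real minimums
# from the Oracle installation guide for your release):
#   check_min_kb MemTotal  /proc/meminfo 786432  && echo "RAM ok"
#   check_min_kb SwapTotal /proc/meminfo 1572864 && echo "swap ok"
```

Reading `/proc/meminfo` avoids the interactive `top` session, so the same check can be run on every node from a loop.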

2.3 Verify the OS version and architecture

[root@node1 ~]# uname -a

Linux node1 2.6.18-164.el5 #1 SMP Tue Aug 18 15:51:54 EDT 2009 i686 i686 i386 GNU/Linux

[root@node1 ~]# cat /etc/redhat-release

Red Hat Enterprise Linux Server release 5.4 (Tikanga)

[root@node2 ~]# uname -a

Linux node2 2.6.18-164.el5 #1 SMP Tue Aug 18 15:51:54 EDT 2009 i686 i686 i386 GNU/Linux

[root@node2 ~]# cat /etc/redhat-release

Red Hat Enterprise Linux Server release 5.4 (Tikanga)

2.4 Check the date and time on each node

[root@node1 ~]# date

Mon Jan 14 20:26:49 CST 2013

[root@node2 ~]# date

Mon Jan 14 20:26:52 CST 2013
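Comparing the two `date` outputs by eye works for two nodes; a scripted comparison is a possible alternative. This sketch assumes GNU `date` (for `+%s`) and a tolerance chosen by the reader; `clock_skew_ok` is a hypothetical helper name.

```shell
#!/bin/sh
# Succeed when two epoch timestamps ($1 and $2) differ by at most $3 seconds.
clock_skew_ok() {
    diff=$(( $1 - $2 ))
    if [ "$diff" -lt 0 ]; then
        diff=$(( 0 - diff ))
    fi
    [ "$diff" -le "$3" ]
}

# Example call (the 5-second tolerance is an assumption, and the remote
# command will prompt for a password until SSH trust is configured):
#   clock_skew_ok "$(date +%s)" "$(ssh node2_public date +%s)" 5 \
#       && echo "clocks agree" || echo "clocks differ"
```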

2.5 Check and install the required packages

[root@node1 ~]# rpm -qa | grep binutils-2.17.50.0.6

binutils-2.17.50.0.6-12.el5

[root@node1 ~]# rpm -qa | grep compat-libstdc++-33-3.2.3

compat-libstdc++-33-3.2.3-61

[root@node1 ~]# rpm -qa | grep elfutils-libelf

elfutils-libelf-0.137-3.el5

elfutils-libelf-devel-0.137-3.el5

elfutils-libelf-devel-static-0.137-3.el5

[root@node1 ~]# rpm -qa | grep elfutils-libelf-devel

elfutils-libelf-devel-0.137-3.el5

elfutils-libelf-devel-static-0.137-3.el5

[root@node1 ~]# rpm -qa | grep gcc-4.1.2

libgcc-4.1.2-46.el5

gcc-4.1.2-46.el5

[root@node1 ~]# rpm -qa | grep gcc-c++-4.1.2

gcc-c++-4.1.2-46.el5

[root@node1 ~]# rpm -qa | grep glibc

glibc-2.5-42

glibc-devel-2.5-42

compat-glibc-headers-2.3.4-2.26

glibc-headers-2.5-42

glibc-common-2.5-42

compat-glibc-2.3.4-2.26

[root@node1 ~]# rpm -qa | grep glibc-common-2.5

glibc-common-2.5-42

[root@node1 ~]# rpm -qa | grep glibc-devel-2.5

glibc-devel-2.5-42

[root@node1 ~]# rpm -qa | grep glibc-headers-2.5

glibc-headers-2.5-42

[root@node1 ~]# rpm -qa | grep ksh

ksh-20080202-14.el5

[root@node1 ~]# rpm -qa | grep libaio-0.3.106

libaio-0.3.106-3.2

[root@node1 ~]# rpm -qa | grep libaio-devel

[root@node1 ~]# rpm -qa | grep libgcc-4.1.2

libgcc-4.1.2-46.el5

[root@node1 ~]# rpm -qa | grep libstdc++-4.1.2

libstdc++-4.1.2-46.el5

[root@node1 ~]# rpm -qa | grep libstdc++-devel

libstdc++-devel-4.1.2-46.el5

[root@node1 ~]# rpm -qa | grep make-3.81

make-3.81-3.el5

[root@node1 ~]# rpm -qa | grep numactl-devel

[root@node1 ~]# rpm -qa | grep sysstat

[root@node1 ~]# rpm -qa | grep unixODBC

[root@node1 ~]# rpm -qa | grep unixODBC-devel

[root@node2 ~]# rpm -qa | grep binutils-2.17.50.0.6

binutils-2.17.50.0.6-12.el5

[root@node2 ~]# rpm -qa | grep compat-libstdc++-33-3.2.3

compat-libstdc++-33-3.2.3-61

[root@node2 ~]# rpm -qa | grep elfutils-libelf

elfutils-libelf-0.137-3.el5

elfutils-libelf-devel-0.137-3.el5

elfutils-libelf-devel-static-0.137-3.el5

[root@node2 ~]# rpm -qa | grep elfutils-libelf-devel

elfutils-libelf-devel-0.137-3.el5

elfutils-libelf-devel-static-0.137-3.el5

[root@node2 ~]# rpm -qa | grep gcc-4.1.2

libgcc-4.1.2-46.el5

gcc-4.1.2-46.el5

[root@node2 ~]# rpm -qa | grep gcc-c++-4.1.2

gcc-c++-4.1.2-46.el5

[root@node2 ~]# rpm -qa | grep glibc

glibc-2.5-42

glibc-devel-2.5-42

compat-glibc-headers-2.3.4-2.26

glibc-headers-2.5-42

glibc-common-2.5-42

compat-glibc-2.3.4-2.26

[root@node2 ~]# rpm -qa | grep glibc-common-2.5

glibc-common-2.5-42

[root@node2 ~]# rpm -qa | grep glibc-devel-2.5

glibc-devel-2.5-42

[root@node2 ~]# rpm -qa | grep glibc-headers-2.5

glibc-headers-2.5-42

[root@node2 ~]# rpm -qa | grep ksh

ksh-20080202-14.el5

[root@node2 ~]# rpm -qa | grep libaio-0.3.106

libaio-0.3.106-3.2

[root@node2 ~]# rpm -qa | grep libaio-devel

[root@node2 ~]# rpm -qa | grep libgcc-4.1.2

libgcc-4.1.2-46.el5

[root@node2 ~]# rpm -qa | grep libstdc++-4.1.2

libstdc++-4.1.2-46.el5

[root@node2 ~]# rpm -qa | grep libstdc++-devel

libstdc++-devel-4.1.2-46.el5

[root@node2 ~]# rpm -qa | grep make-3.81

make-3.81-3.el5

[root@node2 ~]# rpm -qa | grep numactl-devel

[root@node2 ~]# rpm -qa | grep sysstat

[root@node2 ~]# rpm -qa | grep unixODBC

[root@node2 ~]# rpm -qa | grep unixODBC-devel
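The per-package `rpm -qa | grep` calls above can be condensed into one pass that prints only what is missing. This is a sketch rather than the author's method: `missing_packages` is a hypothetical helper, and it matches package names exactly, so `glibc` does not also match `compat-glibc`.

```shell
#!/bin/sh
# Read installed package names (one per line) on stdin and print every
# required name, given as an argument, that is not in that list.
missing_packages() {
    installed=$(cat)
    for pkg in "$@"; do
        printf '%s\n' "$installed" | grep -Fqx -- "$pkg" || printf '%s\n' "$pkg"
    done
}

# Example call with the package names checked in this section:
#   rpm -qa --qf '%{NAME}\n' | missing_packages binutils compat-libstdc++-33 \
#       elfutils-libelf elfutils-libelf-devel gcc gcc-c++ glibc glibc-common \
#       glibc-devel glibc-headers ksh libaio libaio-devel libgcc libstdc++ \
#       libstdc++-devel make numactl-devel sysstat unixODBC unixODBC-devel
```

The function only compares names; the version numbers still have to be checked against the transcript above.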

Install the missing packages

[root@node1 Server]# rpm -ivh libaio-devel-0.3.106-3.2.i386.rpm numactl-devel-0.9.8-8.el5.i386.rpm sysstat-7.0.2-3.el5.i386.rpm unixODBC-2.2.11-7.1.i386.rpm unixODBC-devel-2.2.11-7.1.i386.rpm

warning: libaio-devel-0.3.106-3.2.i386.rpm: Header V3 DSA signature: NOKEY, key ID 37017186

Preparing... ########################################### [100%]

1:numactl-devel ########################################### [ 20%]

2:libaio-devel ########################################### [ 40%]

3:unixODBC ########################################### [ 60%]

4:sysstat ########################################### [ 80%]

5:unixODBC-devel ########################################### [100%]

[root@node2 Server]# rpm -ivh libaio-devel-0.3.106-3.2.i386.rpm numactl-devel-0.9.8-8.el5.i386.rpm sysstat-7.0.2-3.el5.i386.rpm unixODBC-2.2.11-7.1.i386.rpm unixODBC-devel-2.2.11-7.1.i386.rpm

warning: libaio-devel-0.3.106-3.2.i386.rpm: Header V3 DSA signature: NOKEY, key ID 37017186

Preparing... ########################################### [100%]

1:numactl-devel ########################################### [ 20%]

2:libaio-devel ########################################### [ 40%]

3:unixODBC ########################################### [ 60%]

4:sysstat ########################################### [ 80%]

5:unixODBC-devel ########################################### [100%]

2.6 Configure the network and the hosts file

[root@node1 ~]# ifconfig -a

eth0 Link encap:Ethernet HWaddr 00:0C:29:DF:B0:D3

inet addr:192.168.80.130 Bcast:192.168.80.255 Mask:255.255.255.0

inet6 addr: fe80::20c:29ff:fedf:b0d3/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:227 errors:0 dropped:0 overruns:0 frame:0

TX packets:128 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:31236 (30.5 KiB) TX bytes:21377 (20.8 KiB)

Interrupt:67 Base address:0x2024

eth1 Link encap:Ethernet HWaddr 00:0C:29:DF:B0:DD

inet addr:10.10.10.1 Bcast:10.10.10.255 Mask:255.255.255.0

inet6 addr: fe80::20c:29ff:fedf:b0dd/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:528 errors:0 dropped:0 overruns:0 frame:0

TX packets:400 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:54982 (53.6 KiB) TX bytes:30939 (30.2 KiB)

Interrupt:67 Base address:0x20a4

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:16436 Metric:1

RX packets:9066 errors:0 dropped:0 overruns:0 frame:0

TX packets:9066 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:16258892 (15.5 MiB) TX bytes:16258892 (15.5 MiB)

sit0 Link encap:IPv6-in-IPv4

NOARP MTU:1480 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:0 (0.0 b) TX bytes:0 (0.0 b)

[root@node2 ~]# ifconfig -a

eth0 Link encap:Ethernet HWaddr 00:0C:29:63:2F:C8

inet addr:192.168.80.140 Bcast:192.168.80.255 Mask:255.255.255.0

inet6 addr: fe80::20c:29ff:fe63:2fc8/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:192 errors:0 dropped:0 overruns:0 frame:0

TX packets:127 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:24575 (23.9 KiB) TX bytes:21090 (20.5 KiB)

Interrupt:67 Base address:0x2024

eth1 Link encap:Ethernet HWaddr 00:0C:29:63:2F:D2

inet addr:10.10.10.2 Bcast:10.10.10.255 Mask:255.255.255.0

inet6 addr: fe80::20c:29ff:fe63:2fd2/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:503 errors:0 dropped:0 overruns:0 frame:0

TX packets:412 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:49154 (48.0 KiB) TX bytes:30896 (30.1 KiB)

Interrupt:67 Base address:0x20a4

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:16436 Metric:1

RX packets:9078 errors:0 dropped:0 overruns:0 frame:0

TX packets:9078 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:16350556 (15.5 MiB) TX bytes:16350556 (15.5 MiB)

sit0 Link encap:IPv6-in-IPv4

NOARP MTU:1480 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:0 (0.0 b) TX bytes:0 (0.0 b)

The hosts file

[root@node1 ~]# cat /etc/hosts

# Do not remove the following line, or various programs

# that require network functionality will fail.

127.0.0.1 localhost.localdomain localhost

::1 localhost6.localdomain6 localhost6

192.168.80.130 node1_public

192.168.80.140 node2_public

10.10.10.1 node1_private

10.10.10.2 node2_private

192.168.80.131 node1_vip

192.168.80.132 node2_vip

192.168.80.150 scan_vip

[root@node2 ~]# cat /etc/hosts

# Do not remove the following line, or various programs

# that require network functionality will fail.

127.0.0.1 localhost.localdomain localhost

::1 localhost6.localdomain6 localhost6

192.168.80.130 node1_public

192.168.80.140 node2_public

10.10.10.1 node1_private

10.10.10.2 node2_private

192.168.80.131 node1_vip

192.168.80.132 node2_vip

192.168.80.150 scan_vip
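Because every name in this setup is resolved through /etc/hosts, it can help to confirm that both nodes map each name to the same address. The sketch below assumes the plain hosts-file layout shown above; `hosts_ip` is a hypothetical helper name.

```shell
#!/bin/sh
# Print the IP address that hosts-format file $2 assigns to host name $1.
# Comment lines are skipped; nothing is printed if the name is absent.
hosts_ip() {
    awk -v name="$1" '
        /^[ \t]*#/ { next }
        { for (i = 2; i <= NF; i++) if ($i == name) { print $1; exit } }
    ' "$2"
}

# Example call: list the address of every cluster name on this node.
#   for n in node1_public node2_public node1_private node2_private \
#            node1_vip node2_vip scan_vip; do
#       echo "$n -> $(hosts_ip "$n" /etc/hosts)"
#   done
```

Running the loop on both nodes and comparing the output shows any entry that differs between them.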

2.7 Create the grid and oracle users and the required groups

[root@node1 ~]# groupadd oinstall

[root@node1 ~]# groupadd dba

[root@node1 ~]# groupadd oper

[root@node1 ~]# groupadd asmdba

[root@node1 ~]# groupadd asmadmin

[root@node1 ~]# groupadd asmoper

[root@node1 ~]# useradd -g oinstall -G asmdba,asmadmin,asmoper,dba grid

[root@node1 ~]# useradd -g oinstall -G dba,asmdba,oper oracle

[root@node2 ~]# groupadd oinstall

[root@node2 ~]# groupadd dba

[root@node2 ~]# groupadd oper

[root@node2 ~]# groupadd asmdba

[root@node2 ~]# groupadd asmadmin

[root@node2 ~]# groupadd asmoper

[root@node2 ~]# useradd -g oinstall -G asmdba,asmadmin,asmoper,dba grid

[root@node2 ~]# useradd -g oinstall -G dba,asmdba,oper oracle

[root@node2 ~]#

[root@node1 ~]# passwd grid

Changing password for user grid.

New UNIX password:

BAD PASSWORD: it is based on a dictionary word

Retype new UNIX password:

passwd: all authentication tokens updated successfully.

[root@node1 ~]# passwd oracle

Changing password for user oracle.

New UNIX password:

BAD PASSWORD: it is based on a dictionary word

Retype new UNIX password:

passwd: all authentication tokens updated successfully.

[root@node1 ~]#

[root@node2 ~]# passwd grid

Changing password for user grid.

New UNIX password:

BAD PASSWORD: it is based on a dictionary word

Retype new UNIX password:

passwd: all authentication tokens updated successfully.

[root@node2 ~]# passwd oracle

Changing password for user oracle.

New UNIX password:

BAD PASSWORD: it is based on a dictionary word

Retype new UNIX password:

passwd: all authentication tokens updated successfully.
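The users and groups are created in the same order on both nodes so that they receive the same numeric IDs. A quick way to confirm this is to compare the `id` output of each node. The sketch below parses that output; `uid_gid` is a hypothetical helper name.

```shell
#!/bin/sh
# Read one line of `id` output on stdin and print "uid:gid" as numbers.
uid_gid() {
    sed -n 's/^uid=\([0-9]*\)[^ ]* gid=\([0-9]*\).*/\1:\2/p'
}

# Example call (run on each node and compare the results by hand, or
# fetch the remote value over ssh once a login is possible):
#   id grid   | uid_gid
#   id oracle | uid_gid
```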

2.8 Set environment variables for the grid and oracle users

[grid@node1 ~]$ id

uid=500(grid) gid=500(oinstall) groups=500(oinstall),501(dba),503(asmdba),504(asmadmin),505(asmoper)

[grid@node1 ~]$ cat .bash_profile

# .bash_profile

# Get the aliases and functions

if [ -f ~/.bashrc ]; then

. ~/.bashrc

fi

# User specific environment and startup programs

PATH=$PATH:$HOME/bin

export PATH

export ORACLE_SID=+ASM1

export JAVA_HOME=/usr/local/java

export ORACLE_BASE=/u01/app/grid

export ORACLE_HOME=/u01/app/11.2.0/grid

export ORACLE_PATH=/u01/app/oracle/dba_scripts/common/sql

export TNS_ADMIN=$ORACLE_HOME/network/admin

export ORA_NLS11=$ORACLE_HOME/nls/data

export PATH=${JAVA_HOME}/bin:${PATH}:$HOME/bin:$ORACLE_HOME/bin:$PATH

export LD_LIBRARY_PATH=$ORACLE_HOME/lib:$ORACLE_HOME/oracm/lib

export CLASSPATH=$ORACLE_HOME/JRE

export CLASSPATH=${CLASSPATH}:$ORACLE_HOME/jlib

export CLASSPATH=${CLASSPATH}:$ORACLE_HOME/rdbms/jlib

export CLASSPATH=${CLASSPATH}:$ORACLE_HOME/network/jlib:$ORACLE_HOME/oracm/lib

umask 022

[grid@node2 ~]$ id

uid=500(grid) gid=500(oinstall) groups=500(oinstall),501(dba),503(asmdba),504(asmadmin),505(asmoper)

[grid@node2 ~]$ cat .bash_profile

# .bash_profile

# Get the aliases and functions

if [ -f ~/.bashrc ]; then

. ~/.bashrc

fi

# User specific environment and startup programs

PATH=$PATH:$HOME/bin

export PATH

export ORACLE_SID=+ASM2

export JAVA_HOME=/usr/local/java

export ORACLE_BASE=/u01/app/grid

export ORACLE_HOME=/u01/app/11.2.0/grid

export ORACLE_PATH=/u01/app/oracle/dba_scripts/common/sql

export TNS_ADMIN=$ORACLE_HOME/network/admin

export ORA_NLS11=$ORACLE_HOME/nls/data

export PATH=${JAVA_HOME}/bin:${PATH}:$HOME/bin:$ORACLE_HOME/bin:$PATH

export LD_LIBRARY_PATH=$ORACLE_HOME/lib:$ORACLE_HOME/oracm/lib

export CLASSPATH=$ORACLE_HOME/JRE

export CLASSPATH=${CLASSPATH}:$ORACLE_HOME/jlib

export CLASSPATH=${CLASSPATH}:$ORACLE_HOME/rdbms/jlib

export CLASSPATH=${CLASSPATH}:$ORACLE_HOME/network/jlib:$ORACLE_HOME/oracm/lib

umask 022

[oracle@node1 ~]$ cat .bash_profile

# .bash_profile

# Get the aliases and functions

if [ -f ~/.bashrc ]; then

. ~/.bashrc

fi

# User specific environment and startup programs

PATH=$PATH:$HOME/bin

export PATH

export ORACLE_SID=racdb1

export ORACLE_UNQNAME=racdb

export JAVA_HOME=/usr/local/java

export ORACLE_BASE=/u01/app/oracle

export ORACLE_HOME=$ORACLE_BASE/product/11.2.0/dbhome_1

export ORACLE_PATH=$ORACLE_BASE/dba_scripts/common/sql:$ORACLE_HOME/rdbms/admin

export TNS_ADMIN=$ORACLE_HOME/network/admin

export ORA_NLS11=$ORACLE_HOME/nls/data

export PATH=${JAVA_HOME}/bin:${PATH}:$HOME/bin:$ORACLE_HOME/bin:$PATH

export LD_LIBRARY_PATH=$ORACLE_HOME/lib

export LD_LIBRARY_PATH=${LD_LIBRARY_PATH}:$ORACLE_HOME/oracm/lib

export LD_LIBRARY_PATH=${LD_LIBRARY_PATH}:/lib:/usr/lib:/usr/local/lib

export CLASSPATH=$ORACLE_HOME/JRE

export CLASSPATH=${CLASSPATH}:$ORACLE_HOME/jlib

export CLASSPATH=${CLASSPATH}:$ORACLE_HOME/rdbms/jlib

export CLASSPATH=${CLASSPATH}:$ORACLE_HOME/network/jlib

umask 022

[oracle@node1 ~]$ id

uid=501(oracle) gid=500(oinstall) groups=500(oinstall),501(dba),502(oper),503(asmdba)

[oracle@node2 ~]$ cat .bash_profile

# .bash_profile

# Get the aliases and functions

if [ -f ~/.bashrc ]; then

. ~/.bashrc

fi

# User specific environment and startup programs

PATH=$PATH:$HOME/bin

export PATH

export ORACLE_SID=racdb2

export ORACLE_UNQNAME=racdb

export JAVA_HOME=/usr/local/java

export ORACLE_BASE=/u01/app/oracle

export ORACLE_HOME=$ORACLE_BASE/product/11.2.0/dbhome_1

export ORACLE_PATH=$ORACLE_BASE/dba_scripts/common/sql:$ORACLE_HOME/rdbms/admin

export TNS_ADMIN=$ORACLE_HOME/network/admin

export ORA_NLS11=$ORACLE_HOME/nls/data

export PATH=${JAVA_HOME}/bin:${PATH}:$HOME/bin:$ORACLE_HOME/bin:$PATH

export LD_LIBRARY_PATH=$ORACLE_HOME/lib

export LD_LIBRARY_PATH=${LD_LIBRARY_PATH}:$ORACLE_HOME/oracm/lib

export LD_LIBRARY_PATH=${LD_LIBRARY_PATH}:/lib:/usr/lib:/usr/local/lib

export CLASSPATH=$ORACLE_HOME/JRE

export CLASSPATH=${CLASSPATH}:$ORACLE_HOME/jlib

export CLASSPATH=${CLASSPATH}:$ORACLE_HOME/rdbms/jlib

export CLASSPATH=${CLASSPATH}:$ORACLE_HOME/network/jlib

umask 022

[oracle@node2 ~]$ id

uid=501(oracle) gid=500(oinstall) groups=500(oinstall),501(dba),502(oper),503(asmdba)

2.9 Configure SSH mutual trust for the grid user

2.9.1 Configure SSH mutual trust for the grid user

1) On node1

[root@node1 ~]# su - grid

[grid@node1 ~]$ mkdir .ssh

[grid@node1 ~]$ chmod 700 .ssh/

[grid@node1 ~]$ cd .ssh/

[grid@node1 .ssh]$ ssh-keygen -t rsa

Generating public/private rsa key pair.

Enter file in which to save the key (/home/grid/.ssh/id_rsa):

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/grid/.ssh/id_rsa.

Your public key has been saved in /home/grid/.ssh/id_rsa.pub.

The key fingerprint is:

28:e7:99:e4:70:21:39:da:89:b8:ac:2c:1a:0c:9f:f9 grid@node1

[grid@node1 .ssh]$ ssh-keygen -t dsa

Generating public/private dsa key pair.

Enter file in which to save the key (/home/grid/.ssh/id_dsa):

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/grid/.ssh/id_dsa.

Your public key has been saved in /home/grid/.ssh/id_dsa.pub.

The key fingerprint is:

45:4f:e5:8a:88:32:3a:ea:d3:8e:fd:7b:22:d5:11:4f grid@node1

2) On node2

[root@node2 ~]# su - grid

[grid@node2 ~]$ mkdir .ssh

[grid@node2 ~]$ chmod 700 .ssh/

[grid@node2 ~]$ cd .ssh/

[grid@node2 .ssh]$ ssh-keygen -t rsa

Generating public/private rsa key pair.

Enter file in which to save the key (/home/grid/.ssh/id_rsa):

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/grid/.ssh/id_rsa.

Your public key has been saved in /home/grid/.ssh/id_rsa.pub.

The key fingerprint is:

14:8a:89:d6:85:fd:47:2d:4c:fa:a3:0f:fe:a9:20:ad grid@node2

[grid@node2 .ssh]$ ssh-keygen -t dsa

Generating public/private dsa key pair.

Enter file in which to save the key (/home/grid/.ssh/id_dsa):

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/grid/.ssh/id_dsa.

Your public key has been saved in /home/grid/.ssh/id_dsa.pub.

The key fingerprint is:

51:93:35:a1:de:01:73:1d:4e:e2:a7:43:e6:3f:0a:7e grid@node2

3) Append the public keys to the authorized_keys file

[grid@node1 .ssh]$ cat *.pub >> authorized_keys

[grid@node1 .ssh]$ ls

authorized_keys id_dsa id_dsa.pub id_rsa id_rsa.pub

[grid@node2 .ssh]$ cat *.pub >> authorized_keys

[grid@node2 .ssh]$ ls

authorized_keys id_dsa id_dsa.pub id_rsa id_rsa.pub

4) Exchange and synchronize the keys

[grid@node1 .ssh]$ cat /etc/hosts

# Do not remove the following line, or various programs

# that require network functionality will fail.

127.0.0.1 localhost.localdomain localhost

::1 localhost6.localdomain6 localhost6

192.168.80.130 node1_public

192.168.80.140 node2_public

10.10.10.1 node1_private

10.10.10.2 node2_private

192.168.80.131 node1_vip

192.168.80.132 node2_vip

192.168.80.150 scan_vip

[grid@node1 .ssh]$ scp authorized_keys node2_public:/home/grid/.ssh/keys

The authenticity of host 'node2_public (192.168.80.140)' can't be established.

RSA key fingerprint is 11:6b:a6:f4:8f:3c:8d:7b:4a:10:cf:79:5c:ce:c9:d9.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'node2_public,192.168.80.140' (RSA) to the list of known hosts.

grid@node2_public's password:

Permission denied, please try again.

grid@node2_public's password:

authorized_keys 100% 992 1.0KB/s 00:00

[grid@node2 .ssh]$ cat keys >> authorized_keys

[grid@node2 .ssh]$ scp authorized_keys node1_public:/home/grid/.ssh/

The authenticity of host 'node1_public (192.168.80.130)' can't be established.

RSA key fingerprint is 11:6b:a6:f4:8f:3c:8d:7b:4a:10:cf:79:5c:ce:c9:d9.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'node1_public,192.168.80.130' (RSA) to the list of known hosts.

grid@node1_public's password:

authorized_keys 100% 1984 1.9KB/s 00:00
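The exchange above appends with `>>`, so repeating a step leaves duplicate entries in authorized_keys. A possible alternative is to merge the key files and drop repeated lines. This is a sketch under that assumption; `merge_authorized_keys` is a hypothetical helper, not a standard tool.

```shell
#!/bin/sh
# Merge the key files given after $1 into the authorized_keys file $1,
# keeping each distinct non-empty line once and preserving first-seen order.
merge_authorized_keys() {
    target=$1
    shift
    touch "$target"
    awk 'NF && !seen[$0]++' "$target" "$@" > "$target.tmp" &&
        mv "$target.tmp" "$target" &&
        chmod 600 "$target"
}

# Example call on node2, after node1's file has been copied over as "keys":
#   cd ~/.ssh && merge_authorized_keys authorized_keys id_rsa.pub id_dsa.pub keys
```

Because the merge is idempotent, it can be re-run safely if a copy step had to be repeated.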

2.9.2 Test SSH mutual trust for the grid user

On node1:

ssh node1_public

ssh node1_private

ssh node2_public

ssh node2_private

On node2:

ssh node1_public

ssh node1_private

ssh node2_public

ssh node2_private
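Once each host key has been accepted through the manual logins listed above, the same test can be repeated non-interactively. The sketch below uses OpenSSH's `BatchMode` option so that any password or host-key prompt counts as a failure; `check_ssh_trust` and the `SSH_CMD` override variable are hypothetical names introduced here.

```shell
#!/bin/sh
# Attempt a prompt-free login to every host given as an argument.
# SSH_CMD is an illustrative hook for substituting another command.
check_ssh_trust() {
    failures=0
    for host in "$@"; do
        if ${SSH_CMD:-ssh} -o BatchMode=yes -o ConnectTimeout=5 "$host" true; then
            echo "ok      $host"
        else
            echo "FAILED  $host"
            failures=$((failures + 1))
        fi
    done
    [ "$failures" -eq 0 ]
}

# Example call, run as grid (and later as oracle) on each node:
#   check_ssh_trust node1_public node1_private node2_public node2_private
```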

2.10 Configure SSH mutual trust for the oracle user

2.10.1 Configure SSH mutual trust for the oracle user

- Generate the key pairs on node1

[oracle@node1 ~]$ mkdir .ssh

[oracle@node1 ~]$ chmod 700 .ssh/

[oracle@node1 ~]$ cd .ssh/

[oracle@node1 .ssh]$ ssh-keygen -t rsa

Generating public/private rsa key pair.

Enter file in which to save the key (/home/oracle/.ssh/id_rsa):

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/oracle/.ssh/id_rsa.

Your public key has been saved in /home/oracle/.ssh/id_rsa.pub.

The key fingerprint is:

d1:65:e7:36:f2:45:69:d8:94:1c:82:b0:ef:84:fd:4f oracle@node1

[oracle@node1 .ssh]$ ssh-keygen -t dsa

Generating public/private dsa key pair.

Enter file in which to save the key (/home/oracle/.ssh/id_dsa):

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/oracle/.ssh/id_dsa.

Your public key has been saved in /home/oracle/.ssh/id_dsa.pub.

The key fingerprint is:

73:b5:b5:42:d7:f8:60:5b:d7:3b:ac:71:93:79:c1:e3 oracle@node1

- Generate the key pairs on node2

[oracle@node2 ~]$ mkdir .ssh

[oracle@node2 ~]$ chmod 700 .ssh/

[oracle@node2 ~]$ cd .ssh/

[oracle@node2 .ssh]$ ls

[oracle@node2 .ssh]$ ssh-keygen -t rsa

Generating public/private rsa key pair.

Enter file in which to save the key (/home/oracle/.ssh/id_rsa):

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/oracle/.ssh/id_rsa.

Your public key has been saved in /home/oracle/.ssh/id_rsa.pub.

The key fingerprint is:

89:39:79:c4:1f:09:e8:18:bb:50:b4:10:e3:5f:e8:c0 oracle@node2

[oracle@node2 .ssh]$ ssh-keygen -t dsa

Generating public/private dsa key pair.

Enter file in which to save the key (/home/oracle/.ssh/id_dsa):

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/oracle/.ssh/id_dsa.

Your public key has been saved in /home/oracle/.ssh/id_dsa.pub.

The key fingerprint is:

12:5e:74:19:dd:0a:88:97:46:79:fa:ed:0b:78:93:35 oracle@node2

- Append the public keys to the authorized_keys file

[oracle@node1 .ssh]$ cat *.pub >> authorized_keys

[oracle@node1 .ssh]$ ls

authorized_keys id_dsa id_dsa.pub id_rsa id_rsa.pub

[oracle@node2 .ssh]$ cat *.pub >> authorized_keys

[oracle@node2 .ssh]$ ls

authorized_keys id_dsa id_dsa.pub id_rsa id_rsa.pub

- Exchange and synchronize the keys

[oracle@node1 .ssh]$ scp authorized_keys node2_public:/home/oracle/.ssh/keys

The authenticity of host 'node2_public (192.168.80.140)' can't be established.

RSA key fingerprint is 11:6b:a6:f4:8f:3c:8d:7b:4a:10:cf:79:5c:ce:c9:d9.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'node2_public,192.168.80.140' (RSA) to the list of known hosts.

oracle@node2_public's password:

authorized_keys 100% 996 1.0KB/s 00:00

[oracle@node2 .ssh]$ cat keys >> authorized_keys

[oracle@node2 .ssh]$ ls

authorized_keys id_dsa id_dsa.pub id_rsa id_rsa.pub keys

[oracle@node2 .ssh]$ scp authorized_keys node1_public:/home/oracle/.ssh/

The authenticity of host 'node1_public (192.168.80.130)' can't be established.

RSA key fingerprint is 11:6b:a6:f4:8f:3c:8d:7b:4a:10:cf:79:5c:ce:c9:d9.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'node1_public,192.168.80.130' (RSA) to the list of known hosts.

oracle@node1_public's password:

authorized_keys 100% 1992 2.0KB/s 00:00

2.10.2 Test SSH mutual trust for the oracle user

On node1:

ssh node1_public

ssh node1_private

ssh node2_public

ssh node2_private

On node2:

ssh node1_public

ssh node1_private

ssh node2_public

ssh node2_private

2.11 Configure kernel parameters and Oracle-related shell limits

Edit "/etc/sysctl.conf", then load the settings with sysctl -p

[root@node1 ~]# sysctl -p

net.ipv4.ip_forward = 0

net.ipv4.conf.default.rp_filter = 1

net.ipv4.conf.default.accept_source_route = 0

kernel.sysrq = 0

kernel.core_uses_pid = 1

net.ipv4.tcp_syncookies = 1

kernel.msgmnb = 65536

kernel.msgmax = 65536

fs.aio-max-nr = 1048576

fs.file-max = 6815744

kernel.shmall = 2097152

kernel.shmmax = 5872025600

kernel.shmmni = 4096

kernel.sem = 250 32000 100 128

net.ipv4.ip_local_port_range = 9000 65500

net.core.rmem_default = 262144

net.core.rmem_max = 4194304

net.core.wmem_default = 262144

net.core.wmem_max = 1048586

[root@node2 ~]# sysctl -p

net.ipv4.ip_forward = 0

net.ipv4.conf.default.rp_filter = 1

net.ipv4.conf.default.accept_source_route = 0

kernel.sysrq = 0

kernel.core_uses_pid = 1

net.ipv4.tcp_syncookies = 1

kernel.msgmnb = 65536

kernel.msgmax = 65536

fs.aio-max-nr = 1048576

fs.file-max = 6815744

kernel.shmall = 2097152

kernel.shmmax = 5872025600

kernel.shmmni = 4096

kernel.sem = 250 32000 100 128

net.ipv4.ip_local_port_range = 9000 65500

net.core.rmem_default = 262144

net.core.rmem_max = 4194304

net.core.wmem_default = 262144

net.core.wmem_max = 1048586
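
The settings can be compared against the minimums that the Cluster Verification Utility lists in its "Required" column in section 3. The following is a minimal sketch under that assumption: check_min and check_all are hypothetical helper names, and the script only reads the file it is given.

```shell
#!/bin/sh
# check_min FILE KEY MIN (hypothetical helper)
# Succeeds when KEY is set in FILE and its first value is >= MIN.
check_min() {
    awk -F'[ \t]*=[ \t]*' -v k="$2" -v min="$3" '
        $1 == k { split($2, f, /[ \t]+/); found = 1; ok = (f[1] + 0 >= min + 0); exit }
        END { exit !(found && ok) }
    ' "$1"
}

# check_all [FILE]: test the single-valued parameters against the
# minimums shown by cluvfy; FILE defaults to /etc/sysctl.conf.
check_all() {
    local conf=${1:-/etc/sysctl.conf} rc=0 key min
    while read -r key min; do
        if check_min "$conf" "$key" "$min"; then
            echo "ok      $key"
        else
            echo "too low $key (need >= $min)"
            rc=1
        fi
    done <<'EOF'
fs.aio-max-nr 1048576
fs.file-max 6815744
kernel.shmall 2097152
kernel.shmmni 4096
net.core.rmem_default 262144
net.core.rmem_max 4194304
net.core.wmem_default 262144
net.core.wmem_max 1048576
EOF
    return "$rc"
}

# Example: check_all /etc/sysctl.conf
```

Multi-valued entries such as kernel.sem are only compared on their first field by check_min, so they are left out of the list here.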

Edit "/etc/security/limits.conf" (run on both nodes):

cat >> /etc/security/limits.conf <<EOF

grid soft nproc 2047

grid hard nproc 16384

grid soft nofile 1024

grid hard nofile 65536

oracle soft nproc 2047

oracle hard nproc 16384

oracle soft nofile 1024

oracle hard nofile 65536

EOF

Edit "/etc/pam.d/login" (run on both nodes):

cat >> /etc/pam.d/login <<EOF

session required pam_limits.so

EOF

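Edit "/etc/profile" (run on both nodes). The backslashes keep $USER and $SHELL from being expanded while the heredoc is written, so the variables reach the file literally:

cat >> /etc/profile <<EOF

if [ \$USER = "oracle" ] || [ \$USER = "grid" ]; then

    if [ \$SHELL = "/bin/ksh" ]; then

        ulimit -p 16384

        ulimit -n 65536

    else

        ulimit -u 16384 -n 65536

    fi

    umask 022

fi

EOF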
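
Because the heredoc delimiter EOF is unquoted, the shell that runs cat expands any unescaped variable before the text is written. A small demonstration of the difference follows; it writes to a scratch file from mktemp rather than to /etc/profile, and the names demo_heredoc_quoting and USER_DEMO are made up for the example.

```shell
#!/bin/sh
# Shows how variable references survive (or not) inside a here-document.
demo_heredoc_quoting() {
    local out
    out=$(mktemp) || return 1
    # Unquoted delimiter: $USER_DEMO is expanded now, \$USER_DEMO is not.
    cat > "$out" <<EOF
expanded: $USER_DEMO
escaped: \$USER_DEMO
EOF
    # Quoted delimiter: nothing is expanded, the text is written as-is.
    cat >> "$out" <<'EOF'
quoted: $USER_DEMO
EOF
    cat "$out"
    rm -f "$out"
}

USER_DEMO=root
demo_heredoc_quoting
```

The first line comes out as "expanded: root", while the other two keep the literal text $USER_DEMO. For /etc/profile, either escaping each variable or quoting the delimiter gives the literal form that is evaluated later at login.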

2.12 Disable SELinux

[root@node1 ~]# cat /etc/selinux/config

# This file controls the state of SELinux on the system.

# SELINUX= can take one of these three values:

# enforcing - SELinux security policy is enforced.

# permissive - SELinux prints warnings instead of enforcing.

# disabled - SELinux is fully disabled.

SELINUX=disabled

# SELINUXTYPE= type of policy in use. Possible values are:

# targeted - Only targeted network daemons are protected.

# strict - Full SELinux protection.

SELINUXTYPE=targeted

[root@node2 ~]# cat /etc/selinux/config

# This file controls the state of SELinux on the system.

# SELINUX= can take one of these three values:

# enforcing - SELinux security policy is enforced.

# permissive - SELinux prints warnings instead of enforcing.

# disabled - SELinux is fully disabled.

SELINUX=disabled

# SELINUXTYPE= type of policy in use. Possible values are:

# targeted - Only targeted network daemons are protected.

# strict - Full SELinux protection.

SELINUXTYPE=targeted
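
The setting in /etc/selinux/config is read at boot, so it describes the next boot rather than the running system (getenforce reports the current mode). A minimal sketch for reading the configured value on each node; the function name selinux_config_mode is an assumption for illustration.

```shell
#!/bin/sh
# selinux_config_mode [FILE]: print the SELINUX= value from the config file.
# Comment lines and the SELINUXTYPE= line do not match the pattern.
selinux_config_mode() {
    sed -n 's/^SELINUX=//p' "${1:-/etc/selinux/config}" | tail -n 1
}

# Example:
# [ "$(selinux_config_mode)" = disabled ] || echo "SELinux is not disabled in the config file"
```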

2.13 Configure time synchronization

[root@node1 ~]# cat /etc/ntp.conf

server 192.168.80.130

restrict 192.168.80.130 mask 255.255.255.255 nomodify notrap noquery

server 127.127.1.0

[root@node2 ~]# cat /etc/ntp.conf

server 192.168.80.130

restrict 192.168.80.130 mask 255.255.255.255 nomodify notrap noquery

[root@node1 ~]# cat /etc/sysconfig/ntpd

# Drop root to id 'ntp:ntp' by default.

OPTIONS="-x -u ntp:ntp -p /var/run/ntpd.pid"

# Set to 'yes' to sync hw clock after successful ntpdate

SYNC_HWCLOCK=yes

# Additional options for ntpdate

NTPDATE_OPTIONS=""

[root@node2 ~]# cat /etc/sysconfig/ntpd

# Drop root to id 'ntp:ntp' by default.

OPTIONS="-x -u ntp:ntp -p /var/run/ntpd.pid"

# Set to 'yes' to sync hw clock after successful ntpdate

SYNC_HWCLOCK=yes

# Additional options for ntpdate

NTPDATE_OPTIONS=""

[root@node1 ~]# service ntpd restart

Shutting down ntpd: [FAILED]

ntpd: Synchronizing with time server: [FAILED]

Starting ntpd: [ OK ]

[root@node1 ~]# chkconfig ntpd on

[root@node2 ~]# service ntpd restart

Shutting down ntpd: [FAILED]

ntpd: Synchronizing with time server: [FAILED]

Starting ntpd: [ OK ]

[root@node2 ~]# chkconfig ntpd on

Test:

[root@node2 ~]# ntpdate 192.168.80.130

15 Jan 10:32:39 ntpdate[10532]: step time server 192.168.80.130 offset 31726.584320 sec

[root@node2 ~]# date

Tue Jan 15 10:32:42 CST 2013
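
The -x flag in the OPTIONS line of /etc/sysconfig/ntpd tells ntpd to prefer slewing the clock over stepping it, which is the behaviour Oracle Clusterware expects when NTP is in use. A minimal sketch for confirming the flag on each node; the function name ntpd_has_slew is hypothetical.

```shell
#!/bin/sh
# ntpd_has_slew [FILE]: succeed if the OPTIONS line passes -x to ntpd.
# The flag must be delimited by a space or a double quote on both sides.
ntpd_has_slew() {
    grep -Eq '^OPTIONS=.*[" ]-x[" ]' "${1:-/etc/sysconfig/ntpd}"
}

# Example:
# ntpd_has_slew || echo "add -x to OPTIONS in /etc/sysconfig/ntpd and restart ntpd"
```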

2.14 Configure the ASM disks

2.14.1 Install the ASM packages

[root@node1 2.6.18-164.el5]# rpm -ivh *.rpm --force --nodeps

warning: oracleasm-2.6.18-164.el5-2.0.5-1.el5.i686.rpm: Header V3 DSA signature: NOKEY, key ID 1e5e0159

Preparing... ########################################### [100%]

1:oracleasm-support ########################################### [ 14%]

2:oracleasm-2.6.18-164.el########################################### [ 29%]

3:oracleasm-2.6.18-164.el########################################### [ 43%]

4:oracleasm-2.6.18-164.el########################################### [ 57%]

5:oracleasm-2.6.18-164.el########################################### [ 71%]

6:oracleasm-2.6.18-164.el########################################### [ 86%]

7:oracleasmlib ########################################### [100%]

[root@node2 2.6.18-164.el5]# rpm -ivh *.rpm --force --nodeps

warning: oracleasm-2.6.18-164.el5-2.0.5-1.el5.i686.rpm: Header V3 DSA signature: NOKEY, key ID 1e5e0159

Preparing... ########################################### [100%]

1:oracleasm-support ########################################### [ 14%]

2:oracleasm-2.6.18-164.el########################################### [ 29%]

3:oracleasm-2.6.18-164.el########################################### [ 43%]

4:oracleasm-2.6.18-164.el########################################### [ 57%]

5:oracleasm-2.6.18-164.el########################################### [ 71%]

6:oracleasm-2.6.18-164.el########################################### [ 86%]

7:oracleasmlib ########################################### [100%]

Installing open-iscsi

First download open-iscsi-2.0-871, then run the following on both nodes:

tar xzvf open-iscsi-2.0-871.tar.gz

cd open-iscsi-2.0-871

make

make install

rpm -ivh iscsi-initiator-utils-6.2.0.871-0.10.el5.i386.rpm

service iscsi start

iscsiadm -m discovery -t sendtargets -p 192.168.80.90

192.168.80.90:3260,1 iqn.2006-01.com.openfiler:tsn.09b20940df9d

iscsiadm -m node -T iqn.2006-01.com.openfiler:tsn.09b20940df9d -p 192.168.80.90 -l
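
The discovery and login steps can be chained so that the target name does not have to be copied by hand. A minimal sketch using only the iscsiadm options shown above; the function names discovered_iqns and login_all are assumptions, and login_all has to run as root on each node.

```shell
#!/bin/sh
# discovered_iqns: read "iscsiadm -m discovery" output on stdin and print
# the unique target names (the second whitespace-separated column).
discovered_iqns() {
    awk 'NF >= 2 { print $2 }' | sort -u
}

# login_all PORTAL: log in to every target that the portal advertises.
login_all() {
    local portal=$1 iqn
    iscsiadm -m discovery -t sendtargets -p "$portal" | discovered_iqns |
    while read -r iqn; do
        iscsiadm -m node -T "$iqn" -p "$portal" -l
    done
}

# Example: login_all 192.168.80.90
```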

[root@node1 Server]# fdisk -l

Disk /dev/sda: 32.2 GB, 32212254720 bytes

255 heads, 63 sectors/track, 3916 cylinders

Units = cylinders of 16065 * 512 = 8225280 bytes

Device Boot Start End Blocks Id System

/dev/sda1 * 1 13 104391 83 Linux

/dev/sda2 14 274 2096482+ 82 Linux swap / Solaris

/dev/sda3 275 3916 29254365 83 Linux

Disk /dev/sdb: 1073 MB, 1073741824 bytes

34 heads, 61 sectors/track, 1011 cylinders

Units = cylinders of 2074 * 512 = 1061888 bytes

Disk /dev/sdb doesn't contain a valid partition table

Disk /dev/sdc: 1073 MB, 1073741824 bytes

34 heads, 61 sectors/track, 1011 cylinders

Units = cylinders of 2074 * 512 = 1061888 bytes

Disk /dev/sdc doesn't contain a valid partition table

Disk /dev/sdd: 10.7 GB, 10737418240 bytes

64 heads, 32 sectors/track, 10240 cylinders

Units = cylinders of 2048 * 512 = 1048576 bytes

Disk /dev/sdd doesn't contain a valid partition table

Disk /dev/sde: 10.7 GB, 10737418240 bytes

64 heads, 32 sectors/track, 10240 cylinders

Units = cylinders of 2048 * 512 = 1048576 bytes

Disk /dev/sde doesn't contain a valid partition table

Disk /dev/sdf: 10.7 GB, 10737418240 bytes

64 heads, 32 sectors/track, 10240 cylinders

Units = cylinders of 2048 * 512 = 1048576 bytes

Disk /dev/sdf doesn't contain a valid partition table

2.14.2 Partition the shared disks

[root@node1 Server]# fdisk /dev/sdb

Device contains neither a valid DOS partition table, nor Sun, SGI or OSF disklabel

Building a new DOS disklabel. Changes will remain in memory only,

until you decide to write them. After that, of course, the previous

content won't be recoverable.

Warning: invalid flag 0x0000 of partition table 4 will be corrected by w(rite)

Command (m for help): n

Command action

e extended

p primary partition (1-4)

p

Partition number (1-4): 1

First cylinder (1-1011, default 1):

Using default value 1

Last cylinder or +size or +sizeM or +sizeK (1-1011, default 1011):

Using default value 1011

Command (m for help): w

The partition table has been altered!

Calling ioctl() to re-read partition table.

Syncing disks.

[root@node1 Server]# fdisk /dev/sdc

Device contains neither a valid DOS partition table, nor Sun, SGI or OSF disklabel

Building a new DOS disklabel. Changes will remain in memory only,

until you decide to write them. After that, of course, the previous

content won't be recoverable.

Warning: invalid flag 0x0000 of partition table 4 will be corrected by w(rite)

Command (m for help): n

Command action

e extended

p primary partition (1-4)

p

Partition number (1-4): 1

First cylinder (1-1011, default 1):

Using default value 1

Last cylinder or +size or +sizeM or +sizeK (1-1011, default 1011):

Using default value 1011

Command (m for help): w

The partition table has been altered!

Calling ioctl() to re-read partition table.

Syncing disks.

[root@node1 Server]# fdisk /dev/sdd

Device contains neither a valid DOS partition table, nor Sun, SGI or OSF disklabel

Building a new DOS disklabel. Changes will remain in memory only,

until you decide to write them. After that, of course, the previous

content won't be recoverable.

The number of cylinders for this disk is set to 10240.

There is nothing wrong with that, but this is larger than 1024,

and could in certain setups cause problems with:

1) software that runs at boot time (e.g., old versions of LILO)

2) booting and partitioning software from other OSs

(e.g., DOS FDISK, OS/2 FDISK)

Warning: invalid flag 0x0000 of partition table 4 will be corrected by w(rite)

Command (m for help): n

Command action

e extended

p primary partition (1-4)

p

Partition number (1-4): 1

First cylinder (1-10240, default 1):

Using default value 1

Last cylinder or +size or +sizeM or +sizeK (1-10240, default 10240):

Using default value 10240

Command (m for help): w

The partition table has been altered!

Calling ioctl() to re-read partition table.

Syncing disks.

[root@node1 Server]# fdisk /dev/sde

Device contains neither a valid DOS partition table, nor Sun, SGI or OSF disklabel

Building a new DOS disklabel. Changes will remain in memory only,

until you decide to write them. After that, of course, the previous

content won't be recoverable.

The number of cylinders for this disk is set to 10240.

There is nothing wrong with that, but this is larger than 1024,

and could in certain setups cause problems with:

1) software that runs at boot time (e.g., old versions of LILO)

2) booting and partitioning software from other OSs

(e.g., DOS FDISK, OS/2 FDISK)

Warning: invalid flag 0x0000 of partition table 4 will be corrected by w(rite)

Command (m for help): n

Command action

e extended

p primary partition (1-4)

p

Partition number (1-4): 1

First cylinder (1-10240, default 1):

Using default value 1

Last cylinder or +size or +sizeM or +sizeK (1-10240, default 10240):

Using default value 10240

Command (m for help): w

The partition table has been altered!

Calling ioctl() to re-read partition table.

Syncing disks.

[root@node1 Server]# fdisk /dev/sdf

Device contains neither a valid DOS partition table, nor Sun, SGI or OSF disklabel

Building a new DOS disklabel. Changes will remain in memory only,

until you decide to write them. After that, of course, the previous

content won't be recoverable.

The number of cylinders for this disk is set to 10240.

There is nothing wrong with that, but this is larger than 1024,

and could in certain setups cause problems with:

1) software that runs at boot time (e.g., old versions of LILO)

2) booting and partitioning software from other OSs

(e.g., DOS FDISK, OS/2 FDISK)

Warning: invalid flag 0x0000 of partition table 4 will be corrected by w(rite)

Command (m for help): n

Command action

e extended

p primary partition (1-4)

p

Partition number (1-4): 1

First cylinder (1-10240, default 1):

Using default value 1

Last cylinder or +size or +sizeM or +sizeK (1-10240, default 10240):

Using default value 10240

Command (m for help): w

The partition table has been altered!

Calling ioctl() to re-read partition table.

Syncing disks.
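
The five interactive fdisk sessions above all use the same answers (n, p, 1, two defaults, w), so they can be fed from a pipe. The following is a sketch only: fdisk_answers, partition_disks and the DRY_RUN switch are hypothetical names, and the default is a dry run because writing a partition table destroys whatever is on the disk.

```shell
#!/bin/sh
# Keystrokes for: new partition (n), primary (p), number 1,
# default first cylinder, default last cylinder, write (w).
fdisk_answers() {
    printf 'n\np\n1\n\n\nw\n'
}

# partition_disks DEVICE...: create one primary partition spanning each disk.
# With DRY_RUN=1 (the default) it only prints what it would run.
partition_disks() {
    local dev
    for dev in "$@"; do
        if [ "${DRY_RUN:-1}" = 1 ]; then
            echo "would run: fdisk $dev"
        else
            fdisk_answers | fdisk "$dev"
        fi
    done
}

# Example, as root on node1 only, after double-checking the device names:
# DRY_RUN=0 partition_disks /dev/sdb /dev/sdc /dev/sdd /dev/sde /dev/sdf
```

The disks are shared, so the partitioning is done once on node1 and the other node only needs to see the result.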

[root@node2 Server]# fdisk -l

Disk /dev/sda: 32.2 GB, 32212254720 bytes

255 heads, 63 sectors/track, 3916 cylinders

Units = cylinders of 16065 * 512 = 8225280 bytes

Device Boot Start End Blocks Id System

/dev/sda1 * 1 13 104391 83 Linux

/dev/sda2 14 274 2096482+ 82 Linux swap / Solaris

/dev/sda3 275 3916 29254365 83 Linux

Disk /dev/sdb: 1073 MB, 1073741824 bytes

34 heads, 61 sectors/track, 1011 cylinders

Units = cylinders of 2074 * 512 = 1061888 bytes

Device Boot Start End Blocks Id System

/dev/sdb1 1 1011 1048376+ 83 Linux

Disk /dev/sdc: 1073 MB, 1073741824 bytes

34 heads, 61 sectors/track, 1011 cylinders

Units = cylinders of 2074 * 512 = 1061888 bytes

Device Boot Start End Blocks Id System

/dev/sdc1 1 1011 1048376+ 83 Linux

Disk /dev/sdd: 10.7 GB, 10737418240 bytes

64 heads, 32 sectors/track, 10240 cylinders

Units = cylinders of 2048 * 512 = 1048576 bytes

Device Boot Start End Blocks Id System

/dev/sdd1 1 10240 10485744 83 Linux

Disk /dev/sde: 10.7 GB, 10737418240 bytes

64 heads, 32 sectors/track, 10240 cylinders

Units = cylinders of 2048 * 512 = 1048576 bytes

Device Boot Start End Blocks Id System

/dev/sde1 1 10240 10485744 83 Linux

Disk /dev/sdf: 10.7 GB, 10737418240 bytes

64 heads, 32 sectors/track, 10240 cylinders

Units = cylinders of 2048 * 512 = 1048576 bytes

Device Boot Start End Blocks Id System

/dev/sdf1 1 10240 10485744 83 Linux

2.14.3 Configure the ASM driver

[root@node1 Server]# /etc/init.d/oracleasm configure

Configuring the Oracle ASM library driver.

This will configure the on-boot properties of the Oracle ASM library

driver. The following questions will determine whether the driver is

loaded on boot and what permissions it will have. The current values

will be shown in brackets ('[]'). Hitting <ENTER> without typing an

answer will keep that current value. Ctrl-C will abort.

Default user to own the driver interface []: grid

Default group to own the driver interface []: asmadmin

Start Oracle ASM library driver on boot (y/n) [n]: y

Scan for Oracle ASM disks on boot (y/n) [y]: y

Writing Oracle ASM library driver configuration: done

Initializing the Oracle ASMLib driver: [ OK ]

Scanning the system for Oracle ASMLib disks: [ OK ]

[root@node2 Server]# /etc/init.d/oracleasm configure

Configuring the Oracle ASM library driver.

This will configure the on-boot properties of the Oracle ASM library

driver. The following questions will determine whether the driver is

loaded on boot and what permissions it will have. The current values

will be shown in brackets ('[]'). Hitting <ENTER> without typing an

answer will keep that current value. Ctrl-C will abort.

Default user to own the driver interface []: grid

Default group to own the driver interface []: asmadmin

Start Oracle ASM library driver on boot (y/n) [n]: y

Scan for Oracle ASM disks on boot (y/n) [y]: y

Writing Oracle ASM library driver configuration: done

Initializing the Oracle ASMLib driver: [ OK ]

Scanning the system for Oracle ASMLib disks: [ OK ]

2.14.4 Create the ASM disks

[root@node1 Server]# oracleasm createdisk CRS1 /dev/sdb1

Writing disk header: done

Instantiating disk: done

[root@node1 Server]# oracleasm createdisk CRS2 /dev/sdc1

Writing disk header: done

Instantiating disk: done

[root@node1 Server]# oracleasm createdisk DATA1 /dev/sdd1

Writing disk header: done

Instantiating disk: done

[root@node1 Server]# oracleasm createdisk DATA2 /dev/sde1

Writing disk header: done

Instantiating disk: done

[root@node1 Server]# oracleasm createdisk ARCHIVE /dev/sdf1

Writing disk header: done
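
The label-to-partition mapping used above can be kept in one place and applied in a loop. A minimal sketch, assuming the same five labels and devices; create_asm_disks and DRY_RUN are hypothetical names, and the default only prints the commands.

```shell
#!/bin/sh
# create_asm_disks NAME:DEVICE...
# Runs "oracleasm createdisk NAME DEVICE" for each pair.
# With DRY_RUN=1 (the default) the commands are printed, not executed.
create_asm_disks() {
    local pair name dev
    for pair in "$@"; do
        name=${pair%%:*}   # text before the first colon
        dev=${pair#*:}     # text after the first colon
        if [ "${DRY_RUN:-1}" = 1 ]; then
            echo "oracleasm createdisk $name $dev"
        else
            oracleasm createdisk "$name" "$dev" || return 1
        fi
    done
}

# Example, as root on node1 only:
# DRY_RUN=0 create_asm_disks CRS1:/dev/sdb1 CRS2:/dev/sdc1 \
#     DATA1:/dev/sdd1 DATA2:/dev/sde1 ARCHIVE:/dev/sdf1
```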

2.14.5 Scan for the disks on each node

[root@node1 Server]# /etc/init.d/oracleasm enable

Writing Oracle ASM library driver configuration: done

Initializing the Oracle ASMLib driver: [ OK ]

Scanning the system for Oracle ASMLib disks: [ OK ]

[root@node1 Server]# /etc/init.d/oracleasm scandisks

Scanning the system for Oracle ASMLib disks: [ OK ]

[root@node1 Server]# /etc/init.d/oracleasm listdisks

ARCHIVE

CRS1

CRS2

DATA1

DATA2

[root@node2 Server]# /etc/init.d/oracleasm enable

Writing Oracle ASM library driver configuration: done

Initializing the Oracle ASMLib driver: [ OK ]

Scanning the system for Oracle ASMLib disks: [ OK ]

[root@node2 Server]# /etc/init.d/oracleasm scandisks

Scanning the system for Oracle ASMLib disks: [ OK ]

[root@node2 Server]# /etc/init.d/oracleasm listdisks

ARCHIVE

CRS1

CRS2

DATA1

DATA2
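
Both nodes should list the same five disks. A minimal sketch that compares the listdisks output with the expected labels; the function name missing_asm_disks is an assumption for illustration.

```shell
#!/bin/sh
# missing_asm_disks NAME...: read "oracleasm listdisks" output on stdin and
# print every expected NAME that is absent. Returns non-zero if any is missing.
missing_asm_disks() {
    local listed name rc=0
    listed=$(cat)
    for name in "$@"; do
        # -x: whole-line match, -F: treat the name as a fixed string.
        if ! printf '%s\n' "$listed" | grep -qxF "$name"; then
            echo "$name"
            rc=1
        fi
    done
    return "$rc"
}

# Example, as root on each node:
# /etc/init.d/oracleasm listdisks | missing_asm_disks ARCHIVE CRS1 CRS2 DATA1 DATA2
```

If a label is reported as missing on node2, rerunning the scandisks step there is the first thing to try.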

2.15 Install the cvuqdisk package for Linux

[root@node1 Server]# cd /mnt/hgfs/oracle_11gr2020_linux_32bit/

[root@node1 oracle_11gr2020_linux_32bit]# ls

cdclient database deinstall examples gateways grid

[root@node1 oracle_11gr2020_linux_32bit]# cd grid/

[root@node1 grid]# ls

doc install readme.html response rpm runcluvfy.sh runInstaller sshsetup stage welcome.html

[root@node1 grid]# cd rpm/

[root@node1 rpm]# ls

cvuqdisk-1.0.9-1.rpm

[root@node1 rpm]# rpm -ivh cvuqdisk-1.0.9-1.rpm

Preparing... ########################################### [100%]

Using default group oinstall to install package

1:cvuqdisk ########################################### [100%]

[root@node2 Server]# cd /mnt/hgfs/oracle_11gr2020_linux_32bit/grid/

[root@node2 grid]# cd rpm/

[root@node2 rpm]# rpm -ivh cvuqdisk-1.0.9-1.rpm

Preparing... ########################################### [100%]

Using default group oinstall to install package

1:cvuqdisk ########################################### [100%]

3 Pre-installation checks

Verify the Oracle Clusterware environment:

./runcluvfy.sh stage -pre crsinst -fixup -n node1,node2 -verbose

Verify the hardware environment:

./runcluvfy.sh stage -post hwos -n node1,node2 -verbose
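
The verbose output runs to many hundreds of lines, so it helps to save it and pull out only the failing rows. A minimal sketch; the function name failed_checks and the log path /tmp/cluvfy_pre.log are assumptions made for the example.

```shell
#!/bin/sh
# failed_checks: read cluvfy output on stdin and print only the lines that
# end in "failed" (per-node rows and "Result: ... failed" summaries).
# Like grep, it returns non-zero when nothing failed.
failed_checks() {
    grep -E 'failed[[:space:]]*$'
}

# Example, as grid from the grid installation directory:
# ./runcluvfy.sh stage -pre crsinst -fixup -n node1,node2 -verbose \
#     | tee /tmp/cluvfy_pre.log | failed_checks
```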

[grid@node1 grid]$ ./runcluvfy.sh stage -pre crsinst -fixup -n node1,node2 -verbose

Performing pre-checks for cluster services setup

Checking node reachability...

Check: Node reachability from node "node1"

Destination Node Reachable?

------------------------------------ ------------------------

node1 yes

node2 yes

Result: Node reachability check passed from node "node1"

Checking user equivalence...

Check: User equivalence for user "grid"

Node Name Comment

------------------------------------ ------------------------

node2 passed

node1 passed

Result: User equivalence check passed for user "grid"

Checking node connectivity...

Checking hosts config file...

Node Name Status Comment

------------ ------------------------ ------------------------

node2 passed

node1 passed

Verification of the hosts config file successful

Interface information for node "node2"

Name IP Address Subnet Gateway Def. Gateway HW Address MTU

------ --------------- --------------- --------------- --------------- ----------------- ------

eth0 192.168.80.140 192.168.80.0 0.0.0.0 10.10.10.254 00:0C:29:63:2F:C8 1500

eth1 10.10.10.2 10.10.10.0 0.0.0.0 10.10.10.254 00:0C:29:63:2F:D2 1500

Interface information for node "node1"

Name IP Address Subnet Gateway Def. Gateway HW Address MTU

------ --------------- --------------- --------------- --------------- ----------------- ------

eth0 192.168.80.130 192.168.80.0 0.0.0.0 10.10.10.254 00:0C:29:DF:B0:D3 1500

eth1 10.10.10.1 10.10.10.0 0.0.0.0 10.10.10.254 00:0C:29:DF:B0:DD 1500

Check: Node connectivity of subnet "192.168.80.0"

Source Destination Connected?

------------------------------ ------------------------------ ----------------

node2[192.168.80.140] node1[192.168.80.130] yes

Result: Node connectivity passed for subnet "192.168.80.0" with node(s) node2,node1

Check: TCP connectivity of subnet "192.168.80.0"

Source Destination Connected?

------------------------------ ------------------------------ ----------------

node1:192.168.80.130 node2:192.168.80.140 passed

Result: TCP connectivity check passed for subnet "192.168.80.0"

Check: Node connectivity of subnet "10.10.10.0"

Source Destination Connected?

------------------------------ ------------------------------ ----------------

node2[10.10.10.2] node1[10.10.10.1] yes

Result: Node connectivity passed for subnet "10.10.10.0" with node(s) node2,node1

Check: TCP connectivity of subnet "10.10.10.0"

Source Destination Connected?

------------------------------ ------------------------------ ----------------

node1:10.10.10.1 node2:10.10.10.2 passed

Result: TCP connectivity check passed for subnet "10.10.10.0"

Interfaces found on subnet "10.10.10.0" that are likely candidates for VIP are:

node2 eth1:10.10.10.2

node1 eth1:10.10.10.1

Interfaces found on subnet "192.168.80.0" that are likely candidates for a private interconnect are:

node2 eth0:192.168.80.140

node1 eth0:192.168.80.130

Result: Node connectivity check passed

Checking ASMLib configuration.

Node Name Comment

------------------------------------ ------------------------

node2 passed

node1 passed

Result: Check for ASMLib configuration passed.

Check: Total memory

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 788.5859MB (807512.0KB) 1.5GB (1572864.0KB) failed

node1 788.5859MB (807512.0KB) 1.5GB (1572864.0KB) failed

Result: Total memory check failed

Check: Available memory

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 634.5859MB (649816.0KB) 50MB (51200.0KB) passed

node1 635.2852MB (650532.0KB) 50MB (51200.0KB) passed

Result: Available memory check passed

Check: Swap space

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 1.9994GB (2096472.0KB) 1.5GB (1572864.0KB) passed

node1 1.9994GB (2096472.0KB) 1.5GB (1572864.0KB) passed

Result: Swap space check passed

Check: Free disk space for "node2:/tmp"

Path Node Name Mount point Available Required Comment

---------------- ------------ ------------ ------------ ------------ ------------

/tmp node2 / 22.583GB 1GB passed

Result: Free disk space check passed for "node2:/tmp"

Check: Free disk space for "node1:/tmp"

Path Node Name Mount point Available Required Comment

---------------- ------------ ------------ ------------ ------------ ------------

/tmp node1 / 22.9425GB 1GB passed

Result: Free disk space check passed for "node1:/tmp"

Check: User existence for "grid"

Node Name Status Comment

------------ ------------------------ ------------------------

node2 exists(500) passed

node1 exists(500) passed

Checking for multiple users with UID value 500

Result: Check for multiple users with UID value 500 passed

Result: User existence check passed for "grid"

Check: Group existence for "oinstall"

Node Name Status Comment

------------ ------------------------ ------------------------

node2 exists passed

node1 exists passed

Result: Group existence check passed for "oinstall"

Check: Group existence for "dba"

Node Name Status Comment

------------ ------------------------ ------------------------

node2 exists passed

node1 exists passed

Result: Group existence check passed for "dba"

Check: Membership of user "grid" in group "oinstall" [as Primary]

Node Name User Exists Group Exists User in Group Primary Comment

---------------- ------------ ------------ ------------ ------------ ------------

node2 yes yes yes yes passed

node1 yes yes yes yes passed

Result: Membership check for user "grid" in group "oinstall" [as Primary] passed

Check: Membership of user "grid" in group "dba"

Node Name User Exists Group Exists User in Group Comment

---------------- ------------ ------------ ------------ ----------------

node2 yes yes yes passed

node1 yes yes yes passed

Result: Membership check for user "grid" in group "dba" passed

Check: Run level

Node Name run level Required Comment

------------ ------------------------ ------------------------ ----------

node2 5 3,5 passed

node1 5 3,5 passed

Result: Run level check passed

Check: Hard limits for "maximum open file descriptors"

Node Name Type Available Required Comment

---------------- ------------ ------------ ------------ ----------------

node2 hard 65536 65536 passed

node1 hard 65536 65536 passed

Result: Hard limits check passed for "maximum open file descriptors"

Check: Soft limits for "maximum open file descriptors"

Node Name Type Available Required Comment

---------------- ------------ ------------ ------------ ----------------

node2 soft 1024 1024 passed

node1 soft 1024 1024 passed

Result: Soft limits check passed for "maximum open file descriptors"

Check: Hard limits for "maximum user processes"

Node Name Type Available Required Comment

---------------- ------------ ------------ ------------ ----------------

node2 hard 16384 16384 passed

node1 hard 16384 16384 passed

Result: Hard limits check passed for "maximum user processes"

Check: Soft limits for "maximum user processes"

Node Name Type Available Required Comment

---------------- ------------ ------------ ------------ ----------------

node2 soft 2047 2047 passed

node1 soft 2047 2047 passed

Result: Soft limits check passed for "maximum user processes"

Check: System architecture

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 i686 x86 passed

node1 i686 x86 passed

Result: System architecture check passed

Check: Kernel version

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 2.6.18-164.el5 2.6.18 passed

node1 2.6.18-164.el5 2.6.18 passed

Result: Kernel version check passed

Check: Kernel parameter for "semmsl"

Node Name Configured Required Comment

------------ ------------------------ ------------------------ ----------

node2 250 250 passed

node1 250 250 passed

Result: Kernel parameter check passed for "semmsl"

Check: Kernel parameter for "semmns"

Node Name Configured Required Comment

------------ ------------------------ ------------------------ ----------

node2 32000 32000 passed

node1 32000 32000 passed

Result: Kernel parameter check passed for "semmns"

Check: Kernel parameter for "semopm"

Node Name Configured Required Comment

------------ ------------------------ ------------------------ ----------

node2 100 100 passed

node1 100 100 passed

Result: Kernel parameter check passed for "semopm"

Check: Kernel parameter for "semmni"

Node Name Configured Required Comment

------------ ------------------------ ------------------------ ----------

node2 128 128 passed

node1 128 128 passed

Result: Kernel parameter check passed for "semmni"

Check: Kernel parameter for "shmmax"

Node Name Configured Required Comment

------------ ------------------------ ------------------------ ----------

node2 1577058304 413446144 passed

node1 1577058304 413446144 passed

Result: Kernel parameter check passed for "shmmax"

Check: Kernel parameter for "shmmni"

Node Name Configured Required Comment

------------ ------------------------ ------------------------ ----------

node2 4096 4096 passed

node1 4096 4096 passed

Result: Kernel parameter check passed for "shmmni"

Check: Kernel parameter for "shmall"

Node Name Configured Required Comment

------------ ------------------------ ------------------------ ----------

node2 2097152 2097152 passed

node1 2097152 2097152 passed

Result: Kernel parameter check passed for "shmall"

Check: Kernel parameter for "file-max"

Node Name Configured Required Comment

------------ ------------------------ ------------------------ ----------

node2 6815744 6815744 passed

node1 6815744 6815744 passed

Result: Kernel parameter check passed for "file-max"

Check: Kernel parameter for "ip_local_port_range"

Node Name Configured Required Comment

------------ ------------------------ ------------------------ ----------

node2 between 9000 & 65500 between 9000 & 65500 passed

node1 between 9000 & 65500 between 9000 & 65500 passed

Result: Kernel parameter check passed for "ip_local_port_range"

Check: Kernel parameter for "rmem_default"

Node Name Configured Required Comment

------------ ------------------------ ------------------------ ----------

node2 262144 262144 passed

node1 262144 262144 passed

Result: Kernel parameter check passed for "rmem_default"

Check: Kernel parameter for "rmem_max"

Node Name Configured Required Comment

------------ ------------------------ ------------------------ ----------

node2 4194304 4194304 passed

node1 4194304 4194304 passed

Result: Kernel parameter check passed for "rmem_max"

Check: Kernel parameter for "wmem_default"

Node Name Configured Required Comment

------------ ------------------------ ------------------------ ----------

node2 262144 262144 passed

node1 262144 262144 passed

Result: Kernel parameter check passed for "wmem_default"

Check: Kernel parameter for "wmem_max"

Node Name Configured Required Comment

------------ ------------------------ ------------------------ ----------

node2 1048586 1048576 passed

node1 1048586 1048576 passed

Result: Kernel parameter check passed for "wmem_max"

Check: Kernel parameter for "aio-max-nr"

Node Name Configured Required Comment

------------ ------------------------ ------------------------ ----------

node2 1048576 1048576 passed

node1 1048576 1048576 passed

Result: Kernel parameter check passed for "aio-max-nr"

Check: Package existence for "make-3.81( i686)"

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 make-3.81-3.el5 make-3.81( i686) passed

node1 make-3.81-3.el5 make-3.81( i686) passed

Result: Package existence check passed for "make-3.81( i686)"

Check: Package existence for "binutils-2.17.50.0.6( i686)"

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 binutils-2.17.50.0.6-12.el5 binutils-2.17.50.0.6( i686) passed

node1 binutils-2.17.50.0.6-12.el5 binutils-2.17.50.0.6( i686) passed

Result: Package existence check passed for "binutils-2.17.50.0.6( i686)"

Check: Package existence for "gcc-4.1.2( i686)"

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 gcc-4.1.2-46.el5 gcc-4.1.2( i686) passed

node1 gcc-4.1.2-46.el5 gcc-4.1.2( i686) passed

Result: Package existence check passed for "gcc-4.1.2( i686)"

Check: Package existence for "gcc-c++-4.1.2( i686)"

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 gcc-c++-4.1.2-46.el5 gcc-c++-4.1.2( i686) passed

node1 gcc-c++-4.1.2-46.el5 gcc-c++-4.1.2( i686) passed

Result: Package existence check passed for "gcc-c++-4.1.2( i686)"

Check: Package existence for "libgomp-4.1.2( i686)"

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 libgomp-4.4.0-6.el5 libgomp-4.1.2( i686) passed

node1 libgomp-4.4.0-6.el5 libgomp-4.1.2( i686) passed

Result: Package existence check passed for "libgomp-4.1.2( i686)"

Check: Package existence for "libaio-0.3.106( i686)"

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 libaio-0.3.106-3.2 libaio-0.3.106( i686) passed

node1 libaio-0.3.106-3.2 libaio-0.3.106( i686) passed

Result: Package existence check passed for "libaio-0.3.106( i686)"

Check: Package existence for "glibc-2.5-24( i686)"

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 glibc-2.5-42 glibc-2.5-24( i686) passed

node1 glibc-2.5-42 glibc-2.5-24( i686) passed

Result: Package existence check passed for "glibc-2.5-24( i686)"

Check: Package existence for "compat-libstdc++-33-3.2.3( i686)"

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 compat-libstdc++-33-3.2.3-61 compat-libstdc++-33-3.2.3( i686) passed

node1 compat-libstdc++-33-3.2.3-61 compat-libstdc++-33-3.2.3( i686) passed

Result: Package existence check passed for "compat-libstdc++-33-3.2.3( i686)"

Check: Package existence for "elfutils-libelf-0.125( i686)"

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 elfutils-libelf-0.137-3.el5 elfutils-libelf-0.125( i686) passed

node1 elfutils-libelf-0.137-3.el5 elfutils-libelf-0.125( i686) passed

Result: Package existence check passed for "elfutils-libelf-0.125( i686)"

Check: Package existence for "elfutils-libelf-devel-0.125( i686)"

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 elfutils-libelf-devel-0.137-3.el5 elfutils-libelf-devel-0.125( i686) passed

node1 elfutils-libelf-devel-0.137-3.el5 elfutils-libelf-devel-0.125( i686) passed

Result: Package existence check passed for "elfutils-libelf-devel-0.125( i686)"

Check: Package existence for "glibc-common-2.5( i686)"

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 glibc-common-2.5-42 glibc-common-2.5( i686) passed

node1 glibc-common-2.5-42 glibc-common-2.5( i686) passed

Result: Package existence check passed for "glibc-common-2.5( i686)"

Check: Package existence for "glibc-devel-2.5( i686)"

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 glibc-devel-2.5-42 glibc-devel-2.5( i686) passed

node1 glibc-devel-2.5-42 glibc-devel-2.5( i686) passed

Result: Package existence check passed for "glibc-devel-2.5( i686)"

Check: Package existence for "glibc-headers-2.5( i686)"

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 glibc-headers-2.5-42 glibc-headers-2.5( i686) passed

node1 glibc-headers-2.5-42 glibc-headers-2.5( i686) passed

Result: Package existence check passed for "glibc-headers-2.5( i686)"

Check: Package existence for "libaio-devel-0.3.106( i686)"

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 libaio-devel-0.3.106-3.2 libaio-devel-0.3.106( i686) passed

node1 libaio-devel-0.3.106-3.2 libaio-devel-0.3.106( i686) passed

Result: Package existence check passed for "libaio-devel-0.3.106( i686)"

Check: Package existence for "libgcc-4.1.2( i686)"

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 libgcc-4.1.2-46.el5 libgcc-4.1.2( i686) passed

node1 libgcc-4.1.2-46.el5 libgcc-4.1.2( i686) passed

Result: Package existence check passed for "libgcc-4.1.2( i686)"

Check: Package existence for "libstdc++-4.1.2( i686)"

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 libstdc++-4.1.2-46.el5 libstdc++-4.1.2( i686) passed

node1 libstdc++-4.1.2-46.el5 libstdc++-4.1.2( i686) passed

Result: Package existence check passed for "libstdc++-4.1.2( i686)"

Check: Package existence for "libstdc++-devel-4.1.2( i686)"

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 libstdc++-devel-4.1.2-46.el5 libstdc++-devel-4.1.2( i686) passed

node1 libstdc++-devel-4.1.2-46.el5 libstdc++-devel-4.1.2( i686) passed

Result: Package existence check passed for "libstdc++-devel-4.1.2( i686)"

Check: Package existence for "sysstat-7.0.2( i686)"

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 sysstat-7.0.2-3.el5 sysstat-7.0.2( i686) passed

node1 sysstat-7.0.2-3.el5 sysstat-7.0.2( i686) passed

Result: Package existence check passed for "sysstat-7.0.2( i686)"

Check: Package existence for "ksh-20060214( i686)"

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 ksh-20080202-14.el5 ksh-20060214( i686) passed

node1 ksh-20080202-14.el5 ksh-20060214( i686) passed

Result: Package existence check passed for "ksh-20060214( i686)"

Checking for multiple users with UID value 0

Result: Check for multiple users with UID value 0 passed

Check: Current group ID

Result: Current group ID check passed

Starting Clock synchronization checks using Network Time Protocol(NTP)...

NTP Configuration file check started...

The NTP configuration file "/etc/ntp.conf" is available on all nodes

NTP Configuration file check passed

Checking daemon liveness...

Check: Liveness for "ntpd"

Node Name Running?

------------------------------------ ------------------------

node2 yes

node1 yes

Result: Liveness check passed for "ntpd"

Check for NTP daemon or service alive passed on all nodes

Checking NTP daemon command line for slewing option "-x"

Check: NTP daemon command line

Node Name Slewing Option Set?

------------------------------------ ------------------------

node2 yes

node1 yes

Result:

NTP daemon slewing option check passed

Checking NTP daemon's boot time configuration, in file "/etc/sysconfig/ntpd", for slewing option "-x"

Check: NTP daemon's boot time configuration

Node Name Slewing Option Set?

------------------------------------ ------------------------

node2 yes

node1 yes

Result:

NTP daemon's boot time configuration check for slewing option passed

Checking whether NTP daemon or service is using UDP port 123 on all nodes

Check for NTP daemon or service using UDP port 123

Node Name Port Open?

------------------------------------ ------------------------

node2 yes

node1 yes

NTP common Time Server Check started...

PRVF-5408 : NTP Time Server ".LOCL." is common only to the following nodes "node1"

PRVF-5416 : Query of NTP daemon failed on all nodes

Result: Clock synchronization check using Network Time Protocol(NTP) failed

Checking Core file name pattern consistency...

Core file name pattern consistency check passed.

Checking to make sure user "grid" is not in "root" group

Node Name Status Comment

------------ ------------------------ ------------------------

node2 does not exist passed

node1 does not exist passed

Result: User "grid" is not part of "root" group. Check passed

Check default user file creation mask

Node Name Available Required Comment

------------ ------------------------ ------------------------ ----------

node2 0022 0022 passed

node1 0022 0022 passed

Result: Default user file creation mask check passed

Checking consistency of file "/etc/resolv.conf" across nodes

Checking the file "/etc/resolv.conf" to make sure only one of domain and search entries is defined

File "/etc/resolv.conf" does not have both domain and search entries defined

Checking if domain entry in file "/etc/resolv.conf" is consistent across the nodes...

domain entry in file "/etc/resolv.conf" is consistent across nodes

Checking if search entry in file "/etc/resolv.conf" is consistent across the nodes...

search entry in file "/etc/resolv.conf" is consistent across nodes

Checking file "/etc/resolv.conf" to make sure that only one search entry is defined

All nodes have one search entry defined in file "/etc/resolv.conf"

Checking all nodes to make sure that search entry is "localdomain" as found on node "node2"

All nodes of the cluster have same value for 'search'

Checking DNS response time for an unreachable node

Node Name Status

------------------------------------ ------------------------

node2 passed

node1 passed

The DNS response time for an unreachable node is within acceptable limit on all nodes

File "/etc/resolv.conf" is consistent across nodes

Check: Time zone consistency

Result: Time zone consistency check passed

Starting check for Huge Pages Existence ...

Check for Huge Pages Existence passed

Starting check for Hardware Clock synchronization at shutdown ...

Check for Hardware Clock synchronization at shutdown passed

Pre-check for cluster services setup was unsuccessful on all the nodes.
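
The verbose output is long, and in the portion shown above the only failing check is the NTP common time server check (PRVF-5408 and PRVF-5416), which is enough to make the whole pre-check report "unsuccessful". The following is a minimal sketch for pulling just the problem lines out of a saved log. It assumes the cluvfy output was redirected to a file. The function name `cluvfy_failures` and the log file name `precheck.log` are hypothetical and not part of the Oracle tooling.

```shell
#!/bin/sh
# Print only the lines of a saved cluvfy log that signal a problem:
# PRVx-NNNN message codes (e.g. PRVF-5408) and lines containing
# "failed" or "unsuccessful". Lines for checks that passed are dropped.
# cluvfy_failures is a hypothetical helper, not an Oracle command.
cluvfy_failures() {
    # $1: path to a log file containing the cluvfy output
    grep -E 'PRV[A-Z]-[0-9]+|failed|unsuccessful' "$1"
}
```

Possible usage, assuming the pre-check was the `stage -pre crsinst` stage and was captured with `./runcluvfy.sh stage -pre crsinst -n node1,node2 -verbose > precheck.log 2>&1`: running `cluvfy_failures precheck.log` would then list the PRVF messages and the failed "Result:" lines, so the NTP configuration on both nodes can be corrected before the check is rerun.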

[oracle@node2 grid]$ ./runcluvfy.sh stage -post hwos -n node1,node2 -verbose

Performing post-checks for hardware and operating system setup

Checking node reachability...

Check: Node reachability from node "node2"

Destination Node Reachable?

------------------------------------ ------------------------

node1 yes

node2 yes

Result: Node reachability check passed from node "node2"

Checking user equivalence...

Check: User equivalence for user "oracle"

Node Name Comment

------------------------------------ ------------------------

node2 passed

node1 passed

Result: User equivalence check passed for user "oracle"

Checking node connectivity...

Checking hosts config file...

Node Name Status Comment

------------ ------------------------ ------------------------

node2 passed

node1 passed

Verification of the hosts config file successful

Interface information for node "node2"

Name   IP Address      Subnet          Gateway         Def. Gateway    HW Address        MTU

------ --------------- --------------- --------------- --------------- ----------------- ------

eth0   192.168.80.140  192.168.80.0    0.0.0.0         10.10.10.254    00:0C:29:63:2F:C8 1500

eth1   10.10.10.2      10.10.10.0      0.0.0.0         10.10.10.254    00:0C:29:63:2F:D2 1500

Interface information for node "node1"

Name   IP Address      Subnet          Gateway         Def. Gateway    HW Address        MTU

------ --------------- --------------- --------------- --------------- ----------------- ------

eth0   192.168.80.130  192.168.80.0    0.0.0.0         10.10.10.254    00:0C:29:DF:B0:D3 1500

eth1   10.10.10.1      10.10.10.0      0.0.0.0         10.10.10.254    00:0C:29:DF:B0:DD 1500

Check: Node connectivity of subnet "192.168.80.0"

Source Destination Connected?

------------------------------ ------------------------------ ----------------

node2[192.168.80.140] node1[192.168.80.130] yes

Result: Node connectivity passed for subnet "192.168.80.0" with node(s) node2,node1

Check: TCP connectivity of subnet "192.168.80.0"

Source Destination Connected?

------------------------------ ------------------------------ ----------------

node2:192.168.80.140 node1:192.168.80.130 passed

Result: TCP connectivity check passed for subnet "192.168.80.0"

Check: Node connectivity of subnet "10.10.10.0"

Source Destination Connected?

------------------------------ ------------------------------ ----------------

node2[10.10.10.2] node1[10.10.10.1] yes