I. Deployment preparation:

(1) On all Ceph cluster nodes (including the client), configure static hostname resolution:

[root@zxw9 ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.126.6 zxw6

192.168.126.7 zxw7

192.168.126.8 zxw8

192.168.126.9 zxw9
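The same entries have to be added on every node. A small idempotent helper avoids creating duplicate lines. The following is a minimal bash sketch. The function name `add_hosts_entries` is hypothetical, and the IP/hostname pairs are the ones from the example above.

```shell
# Hypothetical helper: append the cluster's static name-resolution entries
# to a hosts file, skipping any hostname that already has an entry.
add_hosts_entries() {
    local hosts_file="${1:-/etc/hosts}"
    local entry ip name
    # IP/hostname pairs taken from the /etc/hosts example above
    for entry in "192.168.126.6 zxw6" "192.168.126.7 zxw7" \
                 "192.168.126.8 zxw8" "192.168.126.9 zxw9"; do
        ip="${entry% *}"
        name="${entry#* }"
        # -w matches whole words only, so zxw6 does not match zxw60;
        # -s silences the error if the file does not exist yet
        if ! grep -qws -- "$name" "$hosts_file"; then
            echo "$ip $name" >> "$hosts_file"
        fi
    done
}
```

For example, run `add_hosts_entries /etc/hosts` as root on each node. Running it a second time leaves the file unchanged.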

(2) On all cluster nodes (including the client), create the cent user and set its password, then run the following commands:

useradd cent && echo "123" | passwd --stdin cent

echo -e 'Defaults:cent !requiretty\ncent ALL = (root) NOPASSWD:ALL' | tee /etc/sudoers.d/ceph

chmod 440 /etc/sudoers.d/ceph
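Below is a sketch of the same sudoers setup. It writes the two rules on separate lines with `printf`, which behaves more predictably than `echo -e` across shells. `write_ceph_sudoers` is a hypothetical helper and is not part of any Ceph tooling.

```shell
# Hypothetical helper: write the passwordless-sudo rule for a deploy user.
# The rule is two separate sudoers lines; printf emits the newline explicitly.
write_ceph_sudoers() {
    local user="${1:-cent}"
    local target="${2:-/etc/sudoers.d/ceph}"
    printf 'Defaults:%s !requiretty\n%s ALL = (root) NOPASSWD:ALL\n' \
        "$user" "$user" > "$target"
    # sudoers drop-in files are expected to be mode 0440
    chmod 440 "$target"
}
```

As root, run `write_ceph_sudoers cent /etc/sudoers.d/ceph`. Then check the syntax with `visudo -cf /etc/sudoers.d/ceph`.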

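(3) On the deploy node, switch to the cent user and set up passwordless (key-based) SSH login to every node, including the client node: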

[root@zxw9 ~]# su - cent

[cent@zxw9 ~]$

[cent@zxw9 ~]$ ssh-keygen

[cent@zxw9 ~]$ ssh-copy-id zxw6

[cent@zxw9 ~]$ ssh-copy-id zxw7

[cent@zxw9 ~]$ ssh-copy-id zxw8

(4) On the deploy node, as the cent user, create the following file in the cent user's home directory:

[cent@zxw9 ~]$ vim .ssh/config

Host zxw9

Hostname zxw9

User cent

Host zxw8

Hostname zxw8

User cent

Host zxw7

Hostname zxw7

User cent

Host zxw6

Hostname zxw6

User cent

[cent@zxw9 ~]$ chmod 600 .ssh/config
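The four stanzas differ only in the host name, so they can be generated. This is a minimal sketch. `gen_ssh_config` is a hypothetical helper, and it prints to standard output so you decide where the result goes.

```shell
# Hypothetical helper: print one Host stanza per node so that
# "ssh zxw6" (and ceph-deploy) log in as the given user automatically.
gen_ssh_config() {
    local user="$1" host
    shift
    for host in "$@"; do
        printf 'Host %s\n    Hostname %s\n    User %s\n\n' "$host" "$host" "$user"
    done
}
```

Example: `gen_ssh_config cent zxw9 zxw8 zxw7 zxw6 > ~/.ssh/config && chmod 600 ~/.ssh/config`. Note that this overwrites any existing config.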

II. Configure a China-based Ceph mirror on all nodes:

(1) Create the repo file:

vim /etc/yum.repos.d/ceph.repo

[ceph]

name = ceph1

baseurl = https://mirrors.aliyun.com/centos/7.6.1810/storage/x86_64/ceph-jewel/

enabled = 1

gpgcheck = 0
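The same repo file can be written non-interactively on every node. This sketch assumes the Aliyun URL above, and `write_ceph_repo` is hypothetical. Note that the yum option is spelled `enabled`.

```shell
# Hypothetical helper: write the Aliyun ceph-jewel repo definition
# (same content as above) into a yum repo directory.
write_ceph_repo() {
    local repo_dir="${1:-/etc/yum.repos.d}"
    cat > "$repo_dir/ceph.repo" <<'EOF'
[ceph]
name = ceph1
baseurl = https://mirrors.aliyun.com/centos/7.6.1810/storage/x86_64/ceph-jewel/
enabled = 1
gpgcheck = 0
EOF
}
```

As root, run `write_ceph_repo /etc/yum.repos.d && yum clean all && yum makecache`.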

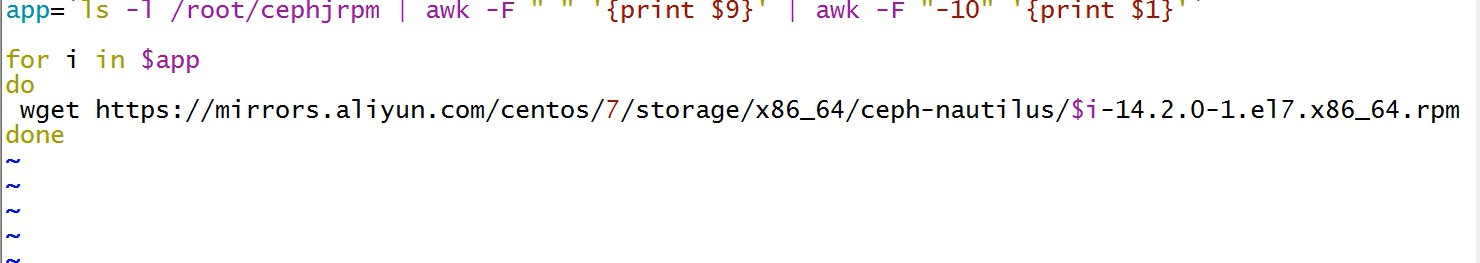

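(2) On every machine, download the following rpm packages from the China-based Ceph mirror https://mirrors.aliyun.com/centos/7.6.1810/storage/x86_64/ceph-jewel/.

Note: the package in the red box is the ceph-deploy rpm, which only needs to be installed on the deploy node. To download it, find the latest matching ceph-deploy-xxxxx.noarch.rpm under https://mirrors.aliyun.com/ceph/rpm-jewel/el7/noarch/.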

1 ceph-10.2.11-0.el7.x86_64.rpm

2 ceph-base-10.2.11-0.el7.x86_64.rpm

3 ceph-common-10.2.11-0.el7.x86_64.rpm

4 ceph-deploy-1.5.39-0.noarch.rpm

5 ceph-devel-compat-10.2.11-0.el7.x86_64.rpm

6 cephfs-java-10.2.11-0.el7.x86_64.rpm

7 ceph-fuse-10.2.11-0.el7.x86_64.rpm

8 ceph-libs-compat-10.2.11-0.el7.x86_64.rpm

9 ceph-mds-10.2.11-0.el7.x86_64.rpm

10 ceph-mon-10.2.11-0.el7.x86_64.rpm

11 ceph-osd-10.2.11-0.el7.x86_64.rpm

12 ceph-radosgw-10.2.11-0.el7.x86_64.rpm

13 ceph-resource-agents-10.2.11-0.el7.x86_64.rpm

14 ceph-selinux-10.2.11-0.el7.x86_64.rpm

15 ceph-test-10.2.11-0.el7.x86_64.rpm

16 libcephfs1-10.2.11-0.el7.x86_64.rpm

17 libcephfs1-devel-10.2.11-0.el7.x86_64.rpm

18 libcephfs_jni1-10.2.11-0.el7.x86_64.rpm

19 libcephfs_jni1-devel-10.2.11-0.el7.x86_64.rpm

20 librados2-10.2.11-0.el7.x86_64.rpm

21 librados2-devel-10.2.11-0.el7.x86_64.rpm

22 libradosstriper1-10.2.11-0.el7.x86_64.rpm

23 libradosstriper1-devel-10.2.11-0.el7.x86_64.rpm

24 librbd1-10.2.11-0.el7.x86_64.rpm

25 librbd1-devel-10.2.11-0.el7.x86_64.rpm

26 librgw2-10.2.11-0.el7.x86_64.rpm

27 librgw2-devel-10.2.11-0.el7.x86_64.rpm

28 python-ceph-compat-10.2.11-0.el7.x86_64.rpm

29 python-cephfs-10.2.11-0.el7.x86_64.rpm

30 python-rados-10.2.11-0.el7.x86_64.rpm

31 python-rbd-10.2.11-0.el7.x86_64.rpm

32 rbd-fuse-10.2.11-0.el7.x86_64.rpm

33 rbd-mirror-10.2.11-0.el7.x86_64.rpm

34 rbd-nbd-10.2.11-0.el7.x86_64.rpm
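You can generate the download URLs from the package names instead of clicking through the mirror. This sketch assumes every package above sits directly in the ceph-jewel directory with the `10.2.11-0.el7.x86_64` suffix. `ceph_rpm_urls` is hypothetical. It does not cover ceph-deploy, which is a noarch package from a different directory.

```shell
# Hypothetical helper: print the download URL of each Jewel rpm listed above.
# Assumes every package uses the 10.2.11-0.el7.x86_64 suffix on the Aliyun
# ceph-jewel mirror (ceph-deploy is a noarch rpm from a different directory).
CEPH_BASE_URL="https://mirrors.aliyun.com/centos/7.6.1810/storage/x86_64/ceph-jewel"
CEPH_VER="10.2.11-0.el7.x86_64"

ceph_rpm_urls() {
    local pkg
    for pkg in "$@"; do
        printf '%s/%s-%s.rpm\n' "$CEPH_BASE_URL" "$pkg" "$CEPH_VER"
    done
}
```

Example: `ceph_rpm_urls ceph ceph-base ceph-common ceph-mon ceph-osd | wget -i -`. The `wget -i -` option reads the URL list from standard input.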

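(3) Copy the downloaded rpms to all nodes and install them. ceph-deploy-xxxxx.noarch.rpm is only used on the deploy node and is not needed on the other nodes. The deploy node must also install all of the remaining rpms.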

[root@zxw9 cephjrpm]# mv ceph-deploy-1.5.39-0.noarch.rpm /root

[root@zxw9 ~]# yum localinstall ceph-deploy-1.5.39-0.noarch.rpm -y

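(4) Install ceph-deploy on the deploy node. As root, change into the directory holding the downloaded rpm packages and run:

yum localinstall -y ./*

(or, as the cent user, sudo yum install ceph-deploy)

Create the ceph working directory as the cent user. The ceph-deploy commands in the following steps are run from this directory:

mkdir ceph && cd ceph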

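Note: the installation may fail with the error shown below. There are two ways to handle it.

Fix 1:

Remove python-distribute and install again (or run yum remove python-setuptools -y).

Note: if you install from a network repo rather than from the rpms added above, every node must run yum install ceph ceph-radosgw -y.

Fix 2:

Install the dependency package python-distribute: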

root@bushu12:16:46~/cephjrpm# yum install python-distribute -y

Loaded plugins: fastestmirror, langpacks

Loading mirror speeds from cached hostfile

Package python-setuptools is obsoleted by python2-setuptools, trying to install python2-setuptools-22.0.5-1.el7.noarch instead

Resolving Dependencies

--> Running transaction check

---> Package python2-setuptools.noarch 0:22.0.5-1.el7 will be installed

--> Finished Dependency Resolution

Dependencies Resolved

=============================================================================================================================

Package Arch Version Repository Size

=============================================================================================================================

Installing:

python2-setuptools noarch 22.0.5-1.el7 openstack-ocata 485 k

Transaction Summary

=============================================================================================================================

Install 1 Package

Total download size: 485 k

Installed size: 1.8 M

Downloading packages:

python2-setuptools-22.0.5-1.el7.noarch.rpm | 485 kB 00:00:00

Running transaction check

Running transaction test

Transaction test succeeded

Running transaction

Installing : python2-setuptools-22.0.5-1.el7.noarch 1/1

Verifying : python2-setuptools-22.0.5-1.el7.noarch 1/1

Installed:

python2-setuptools.noarch 0:22.0.5-1.el7

Complete!

Install ceph-deploy-1.5.39-0.noarch.rpm again:

root@bushu12:22:12~/cephjrpm# yum localinstall ceph-deploy-1.5.39-0.noarch.rpm -y

Loaded plugins: fastestmirror, langpacks

Examining ceph-deploy-1.5.39-0.noarch.rpm: ceph-deploy-1.5.39-0.noarch

Marking ceph-deploy-1.5.39-0.noarch.rpm to be installed

Resolving Dependencies

--> Running transaction check

---> Package ceph-deploy.noarch 0:1.5.39-0 will be installed

--> Processing Dependency: python-distribute for package: ceph-deploy-1.5.39-0.noarch

Loading mirror speeds from cached hostfile

Package python-setuptools-0.9.8-7.el7.noarch is obsoleted by python2-setuptools-22.0.5-1.el7.noarch which is already installed

--> Finished Dependency Resolution

Error: Package: ceph-deploy-1.5.39-0.noarch (/ceph-deploy-1.5.39-0.noarch)

Requires: python-distribute

Available: python-setuptools-0.9.8-7.el7.noarch (base)

python-distribute = 0.9.8-7.el7

You could try using --skip-broken to work around the problem

You could try running: rpm -Va --nofiles --nodigest

Remove python2-setuptools-22.0.5-1.el7.noarch:

root@bushu12:25:30~/cephjrpm# yum remove python2-setuptools-22.0.5-1.el7.noarch -y

Loaded plugins: fastestmirror, langpacks

Resolving Dependencies

--> Running transaction check

---> Package python2-setuptools.noarch 0:22.0.5-1.el7 will be erased

--> Finished Dependency Resolution

Dependencies Resolved

=============================================================================================================================

Package Arch Version Repository Size

=============================================================================================================================

Removing:

python2-setuptools noarch 22.0.5-1.el7 @openstack-ocata 1.8 M

Transaction Summary

=============================================================================================================================

Remove 1 Package

Installed size: 1.8 M

Downloading packages:

Running transaction check

Running transaction test

Transaction test succeeded

Running transaction

Erasing : python2-setuptools-22.0.5-1.el7.noarch 1/1

Verifying : python2-setuptools-22.0.5-1.el7.noarch 1/1

Removed:

python2-setuptools.noarch 0:22.0.5-1.el7

Complete!

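The cause is the repo currently in use: the openstack-ocata repo (see the Repository column in the output above) supplies python2-setuptools-22.0.5-1.el7.noarch. After you fix the yum repos, the dependency resolves as long as the installed python-setuptools version is 0.9.8-7.el7. Move the openstack-ocata repo file out of the way (or delete it) as follows: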

root@dlp10:30:02/etc/yum.repos.d# mv rdo-release-yunwei.repo old/

root@dlp10:30:11/etc/yum.repos.d# ls

Centos7-Base-yunwei.repo ceph-yunwei.repo epel-testing.repo old

ceph.repo epel.repo epel-yunwei.repo


root@dlp10:30:12/etc/yum.repos.d# yum clean all

root@dlp10:30:12/etc/yum.repos.d# yum makecache
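You can also select the offending repo file by the repo id it defines, rather than by its file name. This minimal sketch assumes the repo id is `openstack-ocata`, as shown in the Repository column above. `park_repo` is hypothetical. Alternatively, `yum-config-manager --disable openstack-ocata` (from the yum-utils package) disables the repo without moving any files.

```shell
# Hypothetical helper: move every *.repo file that defines the given repo id
# (a "[repo-id]" section line) into an old/ subdirectory, as done by hand above.
park_repo() {
    local repo_dir="$1" repo_id="$2" f
    mkdir -p "$repo_dir/old"
    for f in "$repo_dir"/*.repo; do
        [ -e "$f" ] || continue   # glob matched nothing: no *.repo files
        # -x -F: the whole line must equal "[repo-id]" literally
        if grep -qxF "[$repo_id]" "$f"; then
            mv "$f" "$repo_dir/old/"
        fi
    done
}
```

Example: `park_repo /etc/yum.repos.d openstack-ocata && yum clean all && yum makecache`.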

root@bushu12:25:30~/cephjrpm# yum localinstall ceph-deploy-1.5.39-0.noarch.rpm -y

Loaded plugins: fastestmirror, langpacks

Examining ceph-deploy-1.5.39-0.noarch.rpm: ceph-deploy-1.5.39-0.noarch

Marking ceph-deploy-1.5.39-0.noarch.rpm to be installed

Resolving Dependencies

--> Running transaction check

---> Package ceph-deploy.noarch 0:1.5.39-0 will be installed

--> Processing Dependency: python-distribute for package: ceph-deploy-1.5.39-0.noarch

Loading mirror speeds from cached hostfile

--> Running transaction check

---> Package python-setuptools.noarch 0:0.9.8-7.el7 will be installed

--> Finished Dependency Resolution

Dependencies Resolved

=============================================================================================================================

Package Arch Version Repository Size

=============================================================================================================================

Installing:

ceph-deploy noarch 1.5.39-0 /ceph-deploy-1.5.39-0.noarch 1.3 M

Installing for dependencies:

python-setuptools noarch 0.9.8-7.el7 base 397 k

Transaction Summary

=============================================================================================================================

Install 1 Package (+1 Dependent package)

Total size: 1.6 M

Total download size: 397 k

Installed size: 3.2 M

Downloading packages:

python-setuptools-0.9.8-7.el7.noarch.rpm | 397 kB 00:00:00

Running transaction check

Running transaction test

Transaction test succeeded

Running transaction

Installing : python-setuptools-0.9.8-7.el7.noarch 1/2

Installing : ceph-deploy-1.5.39-0.noarch 2/2

Verifying : ceph-deploy-1.5.39-0.noarch 1/2

Verifying : python-setuptools-0.9.8-7.el7.noarch 2/2

Installed:

ceph-deploy.noarch 0:1.5.39-0

Dependency Installed:

python-setuptools.noarch 0:0.9.8-7.el7

Complete!

Check the version:

root@bushu12:55:58~/cephjrpm#ceph -v

ceph version 10.2.11 (e4b061b47f07f583c92a050d9e84b1813a35671e)

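(5) On the deploy node (as the cent user), configure the new cluster. Run these commands inside the ceph working directory:

ceph-deploy new node1 node2 node3

vim ./ceph.conf

Add the following settings. A default pool size of 1 keeps only one copy of each object, so there is no redundancy; use it only for a test cluster: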

osd_pool_default_size = 1

osd_pool_default_min_size = 1

mon_clock_drift_allowed = 2

mon_clock_drift_warn_backoff = 3
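You can also append these settings without opening an editor. This sketch assumes ceph.conf was just generated by `ceph-deploy new`, which writes only a `[global]` section, so appended lines land in `[global]`. `add_global_opts` is hypothetical. It only recognizes the underscore spelling of each option name, although Ceph also accepts spaces.

```shell
# Hypothetical helper: append the settings above to ceph.conf, skipping any
# key that is already present (underscore spelling only). Assumes the file
# ends inside its [global] section, as a fresh "ceph-deploy new" file does.
add_global_opts() {
    local conf="${1:-./ceph.conf}"
    local kv key
    for kv in "osd_pool_default_size = 1" \
              "osd_pool_default_min_size = 1" \
              "mon_clock_drift_allowed = 2" \
              "mon_clock_drift_warn_backoff = 3"; do
        key="${kv%% =*}"
        if ! grep -q "^[[:space:]]*${key}[[:space:]]*=" "$conf"; then
            echo "$kv" >> "$conf"
        fi
    done
}
```

Run `add_global_opts ./ceph.conf` in the ceph working directory before `ceph-deploy mon create-initial`.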

Optional parameters:

public_network = 192.168.254.0/24

cluster_network = 172.16.254.0/24

osd_pool_default_size = 3

osd_pool_default_min_size = 1

osd_pool_default_pg_num = 8

osd_pool_default_pgp_num = 8

osd_crush_chooseleaf_type = 1

[mon]

mon_clock_drift_allowed = 0.5

[osd]

osd_mkfs_type = xfs

osd_mkfs_options_xfs = -f

filestore_max_sync_interval = 5

filestore_min_sync_interval = 0.1

filestore_fd_cache_size = 655350

filestore_omap_header_cache_size = 655350

filestore_fd_cache_random = true

osd op threads = 8

osd disk threads = 4

filestore op threads = 8

max_open_files = 655350

(6) Install the Ceph packages on all nodes from the downloaded rpms.

Every node has the following packages:

root@rab116:13:59~/cephjrpm#ls

ceph-10.2.11-0.el7.x86_64.rpm ceph-resource-agents-10.2.11-0.el7.x86_64.rpm librbd1-10.2.11-0.el7.x86_64.rpm

ceph-base-10.2.11-0.el7.x86_64.rpm ceph-selinux-10.2.11-0.el7.x86_64.rpm librbd1-devel-10.2.11-0.el7.x86_64.rpm

ceph-common-10.2.11-0.el7.x86_64.rpm ceph-test-10.2.11-0.el7.x86_64.rpm librgw2-10.2.11-0.el7.x86_64.rpm

ceph-devel-compat-10.2.11-0.el7.x86_64.rpm libcephfs1-10.2.11-0.el7.x86_64.rpm librgw2-devel-10.2.11-0.el7.x86_64.rpm

cephfs-java-10.2.11-0.el7.x86_64.rpm libcephfs1-devel-10.2.11-0.el7.x86_64.rpm python-ceph-compat-10.2.11-0.el7.x86_64.rpm

ceph-fuse-10.2.11-0.el7.x86_64.rpm libcephfs_jni1-10.2.11-0.el7.x86_64.rpm python-cephfs-10.2.11-0.el7.x86_64.rpm

ceph-libs-compat-10.2.11-0.el7.x86_64.rpm libcephfs_jni1-devel-10.2.11-0.el7.x86_64.rpm python-rados-10.2.11-0.el7.x86_64.rpm

ceph-mds-10.2.11-0.el7.x86_64.rpm librados2-10.2.11-0.el7.x86_64.rpm python-rbd-10.2.11-0.el7.x86_64.rpm

ceph-mon-10.2.11-0.el7.x86_64.rpm librados2-devel-10.2.11-0.el7.x86_64.rpm rbd-fuse-10.2.11-0.el7.x86_64.rpm

ceph-osd-10.2.11-0.el7.x86_64.rpm libradosstriper1-10.2.11-0.el7.x86_64.rpm rbd-mirror-10.2.11-0.el7.x86_64.rpm

ceph-radosgw-10.2.11-0.el7.x86_64.rpm libradosstriper1-devel-10.2.11-0.el7.x86_64.rpm rbd-nbd-10.2.11-0.el7.x86_64.rpm

Install these packages on all nodes (including the client):

yum localinstall ./* -y

(7) From the deploy node, install the Ceph software on all nodes:

ceph-deploy install dlp node1 node2 node3

(8) Initialize the cluster from the deploy node (as the cent user):

ceph-deploy mon create-initial

(9)在osd节点prepare Object Storage Daemon :

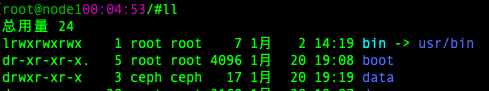

1 mkdir /data && chown ceph.ceph /data

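(10) On each node, partition the second disk (in the interactive fdisk session: n, p, 1, accept the defaults, then w to write the table). Then format the partition as xfs and mount it at /data: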

root@con116:45:22/#fdisk /dev/vdb

root@con116:45:22/#lsblk

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT

vda 252:0 0 40G 0 disk

├─vda1 252:1 0 512M 0 part /boot

└─vda2 252:2 0 39.5G 0 part

├─cl-root 253:0 0 35.5G 0 lvm /

└─cl-swap 253:1 0 4G 0 lvm [SWAP]

vdb 252:16 0 10G 0 disk

└─vdb1 252:17 0 10G 0 part

root@rab116:54:35/#mkfs -t xfs /dev/vdb1

root@rab116:54:50/#mount /dev/vdb1 /data/

root@rab116:56:39/#lsblk

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT

vda 252:0 0 40G 0 disk

├─vda1 252:1 0 512M 0 part /boot

└─vda2 252:2 0 39.5G 0 part

├─cl-root 253:0 0 35.5G 0 lvm /

└─cl-swap 253:1 0 4G 0 lvm [SWAP]

vdb 252:16 0 10G 0 disk

└─vdb1 252:17 0 10G 0 part /data
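The mount above does not survive a reboot. This sketch makes it persistent through /etc/fstab, keyed on the filesystem UUID rather than the device name. `add_fstab_entry` is hypothetical, and the `defaults 0 0` options are an assumption you may want to adjust.

```shell
# Hypothetical helper: make a mount persistent by adding an fstab line keyed
# on the filesystem UUID (device names such as vdb1 can change between boots).
add_fstab_entry() {
    local uuid="$1" mnt="$2" fstab="${3:-/etc/fstab}"
    local line="UUID=$uuid $mnt xfs defaults 0 0"
    # Skip if fstab already has an entry for this mount point (field 2)
    if ! awk -v m="$mnt" '$2 == m { found = 1 } END { exit (found ? 0 : 1) }' "$fstab"; then
        echo "$line" >> "$fstab"
    fi
}
```

As root, run `add_fstab_entry "$(blkid -s UUID -o value /dev/vdb1)" /data && mount -a`.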

(11) Create the OSD directory under /data/:

mkdir /data/osd

chown -R ceph:ceph /data/

chmod 750 /data/osd/

ln -s /data/osd /var/lib/ceph
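The same steps can be wrapped in a reusable helper. This sketch rests on one assumption: once the ceph-osd package is installed, /var/lib/ceph/osd may already exist as an empty directory. In that case `ln -s /data/osd /var/lib/ceph` fails with "File exists", so the helper removes such an empty directory first. `prep_osd_dir` is hypothetical.

```shell
# Hypothetical helper: create the OSD data directory and link it into place.
# Assumption: if the link path already exists as an EMPTY real directory
# (for example one created by the ceph-osd package), it is removed first so
# that ln does not place a nested link inside it.
prep_osd_dir() {
    local data_dir="$1" link_path="$2" owner="${3:-ceph:ceph}"
    mkdir -p "$data_dir"
    chmod 750 "$data_dir"
    if [ -d "$link_path" ] && [ ! -L "$link_path" ]; then
        rmdir "$link_path" || return 1   # rmdir refuses non-empty directories
    fi
    [ -L "$link_path" ] || ln -s "$data_dir" "$link_path"
    chown -R "$owner" "$data_dir"
}
```

As root on each OSD node, run `prep_osd_dir /data/osd /var/lib/ceph/osd`.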

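Note: before the prepare step, the disk must already carry an xfs filesystem and be mounted at /var/lib/ceph/osd (here through the /data/osd symlink). Both its owner and its group must be ceph.

List a node's disks: ceph-deploy disk list node1

Wipe a node's disk: ceph-deploy disk zap node1:/dev/vdb. This destroys all data on the disk, and zap works on a whole disk, not on a partition such as /dev/vdb1.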

(12) Prepare the Object Storage Daemons:

ceph-deploy osd prepare node1:/var/lib/ceph/osd node2:/var/lib/ceph/osd node3:/var/lib/ceph/osd

(13) Activate the Object Storage Daemons:

ceph-deploy osd activate node1:/var/lib/ceph/osd node2:/var/lib/ceph/osd node3:/var/lib/ceph/osd
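ceph-deploy 1.5.x takes OSD targets as HOST:DIR pairs, which becomes repetitive with many nodes. Here is a small sketch that expands a host list. `osd_targets` is hypothetical.

```shell
# Hypothetical helper: expand a list of hosts into the HOST:DIR arguments
# used by "ceph-deploy osd prepare/activate" above.
osd_targets() {
    local dir="$1" host
    shift
    for host in "$@"; do
        printf '%s:%s\n' "$host" "$dir"
    done
}
```

Example: `ceph-deploy osd prepare $(osd_targets /var/lib/ceph/osd node1 node2 node3)`, and the same for `osd activate`. The command substitution is deliberately left unquoted so that each pair becomes a separate argument.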

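(14) On the deploy node, transfer the config files (ceph.conf and the admin keyring) to all nodes:

ceph-deploy admin dlp node1 node2 node3

Then make the admin keyring readable on each node:

sudo chmod 644 /etc/ceph/ceph.client.admin.keyring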

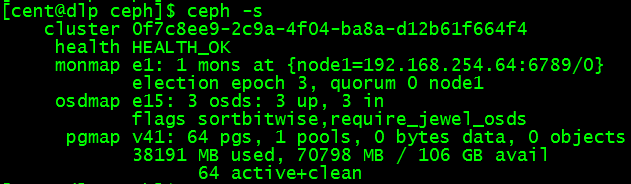

(15) Check the cluster status from any node in the Ceph cluster:

ceph -s
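The cluster may need a little time to settle right after activation. This sketch polls `ceph health` until it reports HEALTH_OK. The name `wait_for_health_ok`, the 300 s default timeout and the 5 s default interval are arbitrary choices, not Ceph defaults.

```shell
# Hypothetical helper: poll "ceph health" until it reports HEALTH_OK or the
# timeout (in seconds) expires. Returns 0 on HEALTH_OK, 1 on timeout.
wait_for_health_ok() {
    local timeout="${1:-300}" interval="${2:-5}" waited=0
    while [ "$waited" -lt "$timeout" ]; do
        if ceph health 2>/dev/null | grep -q '^HEALTH_OK'; then
            return 0
        fi
        sleep "$interval"
        waited=$((waited + interval))
    done
    return 1
}
```

Example: `wait_for_health_ok 300 5 && ceph -s`.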

III. Client setup:

(1) The client also needs the cent user:

useradd cent && echo "123" | passwd --stdin cent

echo -e 'Defaults:cent !requiretty\ncent ALL = (root) NOPASSWD:ALL' | tee /etc/sudoers.d/ceph

chmod 440 /etc/sudoers.d/ceph

On the deploy node, install Ceph on the client and push the configuration to it:

ceph-deploy install controller

ceph-deploy admin controller

(2) On the client, run:

sudo chmod 644 /etc/ceph/ceph.client.admin.keyring

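(3) On the client, configure an RBD block device:

Create an RBD image: rbd create disk01 --size 10G --image-feature layering (delete it with: rbd rm disk01). Only the layering feature is enabled because the kernel RBD client on CentOS 7 does not support the other features that Jewel turns on by default (exclusive-lock, object-map, fast-diff, deep-flatten); with them enabled, rbd map would fail.

List RBD images: rbd ls -l

Map the image: sudo rbd map disk01 (unmap: sudo rbd unmap disk01)

Show mapped images: rbd showmapped

Format disk01 with xfs: sudo mkfs.xfs /dev/rbd0

Mount it: sudo mount /dev/rbd0 /mnt

Verify that the mount succeeded: df -hT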
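The steps above assume the image is mapped as /dev/rbd0, which only holds for the first mapped image. This sketch looks the device up instead. It assumes the Jewel `rbd showmapped` column layout `id pool image snap device`. `rbd_device_for` is hypothetical.

```shell
# Hypothetical helper: print the /dev/rbdN device an image is mapped to,
# reading the "id pool image snap device" table printed by "rbd showmapped"
# (the header line is skipped).
rbd_device_for() {
    local image="$1"
    rbd showmapped | awk -v img="$image" 'NR > 1 && $3 == img { print $5; exit }'
}
```

Example: `dev=$(rbd_device_for disk01) && sudo mkfs.xfs "$dev" && sudo mount "$dev" /mnt`.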

(4) File system (CephFS) configuration:

On the deploy node, pick a node on which to create the MDS:

ceph-deploy mds create node1

Run the following on node1:

sudo chmod 644 /etc/ceph/ceph.client.admin.keyring

On the MDS node node1, create the cephfs_data and cephfs_metadata pools:

ceph osd pool create cephfs_data 128

ceph osd pool create cephfs_metadata 128
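The value 128 passed to `ceph osd pool create` is the pool's pg_num. The Ceph placement-group documentation gives a rough cluster-wide target: (number of OSDs * 100) / replica count, rounded up to the next power of two. With several pools, that total is usually shared among them. Below is a sketch of that arithmetic. `suggest_pg_num` is hypothetical.

```shell
# Rule of thumb from the Ceph placement-group docs:
#   total PGs = (number of OSDs * 100) / pool size (replica count),
# rounded up to the next power of two.
suggest_pg_num() {
    local osds="$1" size="$2"
    local target=$(( (osds * 100 + size - 1) / size ))   # ceiling division
    local pg=1
    while [ "$pg" -lt "$target" ]; do
        pg=$((pg * 2))
    done
    echo "$pg"
}
```

For example, `suggest_pg_num 3 3` prints 128 (3 OSDs, 3 replicas), and `suggest_pg_num 3 1` prints 512.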

Create the file system on top of the two pools:

ceph fs new cephfs cephfs_metadata cephfs_data

Show the file system and MDS status:

ceph fs ls

ceph mds stat

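Run the following on the client. Install ceph-fuse. It is optional for this walkthrough, because the mount below uses the kernel client; the FUSE equivalent is sudo ceph-fuse -m node1:6789 /mnt:

yum -y install ceph-fuse

Get the admin key:

ssh cent@node1 "sudo ceph-authtool -p /etc/ceph/ceph.client.admin.keyring" > admin.key

chmod 600 admin.key

Mount CephFS:

mount -t ceph node1:6789:/ /mnt -o name=admin,secretfile=admin.key

df -hT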
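To mount CephFS automatically at boot, the same options can go into /etc/fstab. This sketch prints such a line. It assumes the monitor listens on the default port 6789, as above, and that the secret file is given as an absolute path. `cephfs_fstab_line` is hypothetical. The `_netdev` option tells the init system to wait for the network before mounting.

```shell
# Hypothetical helper: print an /etc/fstab line for the kernel CephFS mount
# used above. _netdev delays the mount until the network is up.
cephfs_fstab_line() {
    local mon="$1" mnt="$2" secretfile="$3"
    printf '%s:6789:/ %s ceph name=admin,secretfile=%s,noatime,_netdev 0 2\n' \
        "$mon" "$mnt" "$secretfile"
}
```

Example (as root): `cephfs_fstab_line node1 /mnt /root/admin.key >> /etc/fstab`.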

Stop the ceph-mds service and remove the file system:

systemctl stop ceph-mds@node1

ceph mds fail 0

ceph fs rm cephfs --yes-i-really-mean-it

ceph osd lspools

Output: 0 rbd,1 cephfs_data,2 cephfs_metadata,

ceph osd pool delete cephfs_metadata cephfs_metadata --yes-i-really-really-mean-it
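Both CephFS pools can be removed in one go. This sketch uses `ceph osd pool delete`, which in Jewel requires the pool name twice plus `--yes-i-really-really-mean-it`. `delete_pools` is hypothetical, and it permanently destroys the pools' data.

```shell
# Hypothetical helper: delete each named pool. Jewel requires the pool name
# twice plus the confirmation flag. This permanently destroys the pool's data.
delete_pools() {
    local pool
    for pool in "$@"; do
        ceph osd pool delete "$pool" "$pool" --yes-i-really-really-mean-it || return 1
    done
}
```

Example: `delete_pools cephfs_data cephfs_metadata`.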

IV. Tear down the environment (run on the deploy node, inside the ceph working directory):

ceph-deploy purge dlp node1 node2 node3 controller

ceph-deploy purgedata dlp node1 node2 node3 controller

ceph-deploy forgetkeys

rm -rf ceph*