内容来源于官方 Longhorn 1.1.2 英文技术手册。

系列

- Longhorn 是什么?

- Longhorn 企业级云原生容器分布式存储解决方案设计架构和概念

- Longhorn 企业级云原生容器分布式存储-部署篇

- Longhorn 企业级云原生容器分布式存储-券(Volume)和节点(Node)

- Longhorn,企业级云原生容器分布式存储-K8S 资源配置示例

目录

- 设置

Prometheus和Grafana来监控Longhorn - 将

Longhorn指标集成到Rancher监控系统中 Longhorn监控指标- 支持

Kubelet Volume指标 Longhorn警报规则示例

设置 Prometheus 和 Grafana 来监控 Longhorn

概览

Longhorn 在 REST 端点 http://LONGHORN_MANAGER_IP:PORT/metrics 上以 Prometheus 文本格式原生公开指标。

有关所有可用指标的说明,请参阅 Longhorn's metrics。

您可以使用 Prometheus, Graphite, Telegraf 等任何收集工具来抓取这些指标,然后通过 Grafana 等工具将收集到的数据可视化。

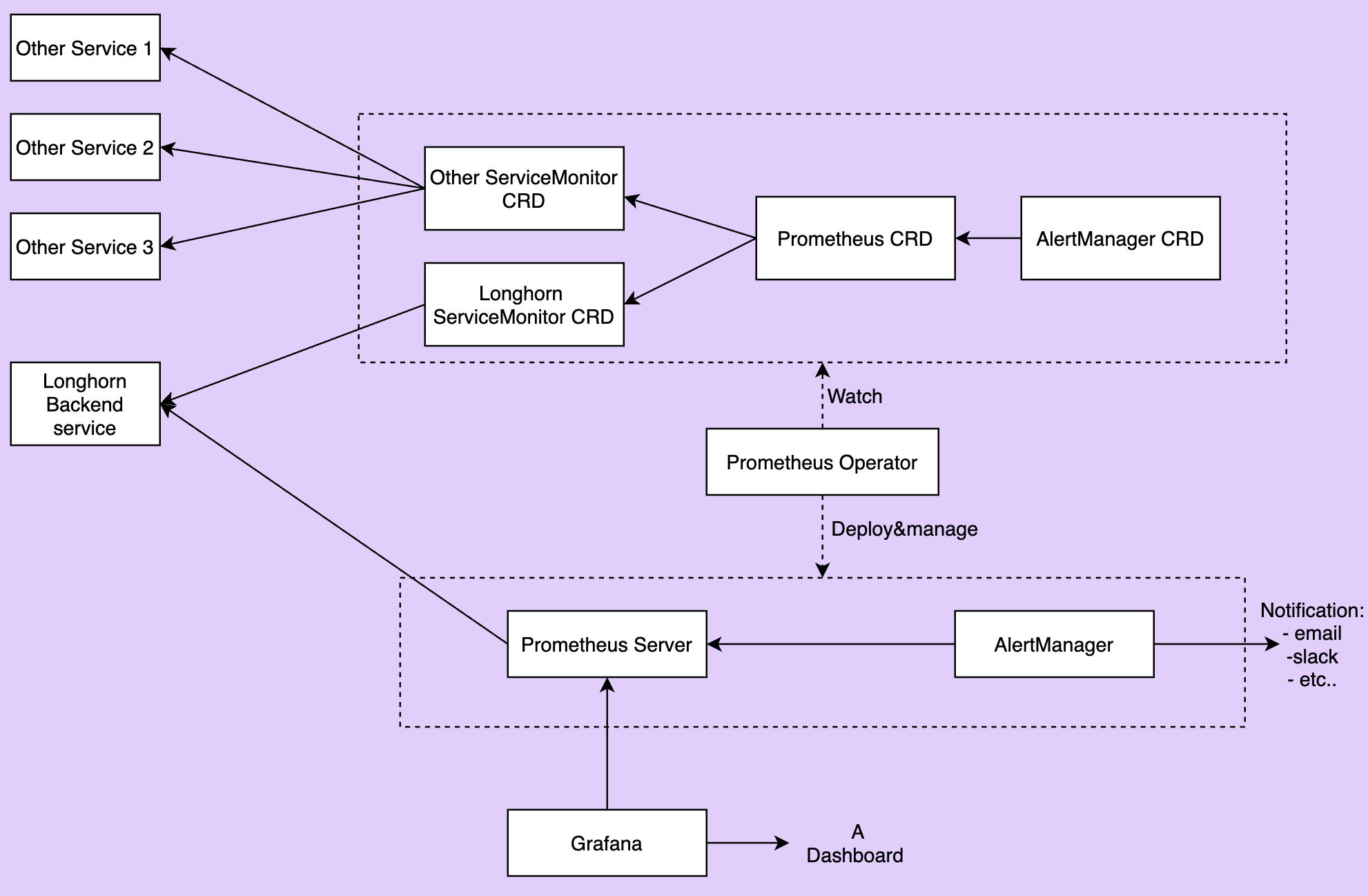

本文档提供了一个监控 Longhorn 的示例设置。监控系统使用 Prometheus 收集数据和警报,使用 Grafana 将收集的数据可视化/仪表板(visualizing/dashboarding)。 高级概述来看,监控系统包含:

Prometheus服务器从Longhorn指标端点抓取和存储时间序列数据。Prometheus还负责根据配置的规则和收集的数据生成警报。Prometheus服务器然后将警报发送到Alertmanager。AlertManager然后管理这些警报(alerts),包括静默(silencing)、抑制(inhibition)、聚合(aggregation)和通过电子邮件、呼叫通知系统和聊天平台等方法发送通知。Grafana向Prometheus服务器查询数据并绘制仪表板进行可视化。

下图描述了监控系统的详细架构。

上图中有 2 个未提及的组件:

- Longhorn 后端服务是指向

Longhorn manager pods集的服务。Longhorn的指标在端点http://LONGHORN_MANAGER_IP:PORT/metrics的Longhorn manager pods中公开。 - Prometheus operator 使在

Kubernetes上运行Prometheus变得非常容易。operator监视3个自定义资源:ServiceMonitor、Prometheus和AlertManager。当用户创建这些自定义资源时,Prometheus Operator会使用用户指定的配置部署和管理Prometheus server,AlerManager。

安装

按照此说明将所有组件安装到 monitoring 命名空间中。要将它们安装到不同的命名空间中,请更改字段 namespace: OTHER_NAMESPACE

创建 monitoring 命名空间

apiVersion: v1

kind: Namespace

metadata:

name: monitoring

安装 Prometheus Operator

部署 Prometheus Operator 及其所需的 ClusterRole、ClusterRoleBinding 和 Service Account。

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

labels:

app.kubernetes.io/component: controller

app.kubernetes.io/name: prometheus-operator

app.kubernetes.io/version: v0.38.3

name: prometheus-operator

namespace: monitoring

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: prometheus-operator

subjects:

- kind: ServiceAccount

name: prometheus-operator

namespace: monitoring

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

labels:

app.kubernetes.io/component: controller

app.kubernetes.io/name: prometheus-operator

app.kubernetes.io/version: v0.38.3

name: prometheus-operator

namespace: monitoring

rules:

- apiGroups:

- apiextensions.k8s.io

resources:

- customresourcedefinitions

verbs:

- create

- apiGroups:

- apiextensions.k8s.io

resourceNames:

- alertmanagers.monitoring.coreos.com

- podmonitors.monitoring.coreos.com

- prometheuses.monitoring.coreos.com

- prometheusrules.monitoring.coreos.com

- servicemonitors.monitoring.coreos.com

- thanosrulers.monitoring.coreos.com

resources:

- customresourcedefinitions

verbs:

- get

- update

- apiGroups:

- monitoring.coreos.com

resources:

- alertmanagers

- alertmanagers/finalizers

- prometheuses

- prometheuses/finalizers

- thanosrulers

- thanosrulers/finalizers

- servicemonitors

- podmonitors

- prometheusrules

verbs:

- '*'

- apiGroups:

- apps

resources:

- statefulsets

verbs:

- '*'

- apiGroups:

- ""

resources:

- configmaps

- secrets

verbs:

- '*'

- apiGroups:

- ""

resources:

- pods

verbs:

- list

- delete

- apiGroups:

- ""

resources:

- services

- services/finalizers

- endpoints

verbs:

- get

- create

- update

- delete

- apiGroups:

- ""

resources:

- nodes

verbs:

- list

- watch

- apiGroups:

- ""

resources:

- namespaces

verbs:

- get

- list

- watch

---

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

app.kubernetes.io/component: controller

app.kubernetes.io/name: prometheus-operator

app.kubernetes.io/version: v0.38.3

name: prometheus-operator

namespace: monitoring

spec:

replicas: 1

selector:

matchLabels:

app.kubernetes.io/component: controller

app.kubernetes.io/name: prometheus-operator

template:

metadata:

labels:

app.kubernetes.io/component: controller

app.kubernetes.io/name: prometheus-operator

app.kubernetes.io/version: v0.38.3

spec:

containers:

- args:

- --kubelet-service=kube-system/kubelet

- --logtostderr=true

- --config-reloader-image=jimmidyson/configmap-reload:v0.3.0

- --prometheus-config-reloader=quay.io/prometheus-operator/prometheus-config-reloader:v0.38.3

image: quay.io/prometheus-operator/prometheus-operator:v0.38.3

name: prometheus-operator

ports:

- containerPort: 8080

name: http

resources:

limits:

cpu: 200m

memory: 200Mi

requests:

cpu: 100m

memory: 100Mi

securityContext:

allowPrivilegeEscalation: false

nodeSelector:

beta.kubernetes.io/os: linux

securityContext:

runAsNonRoot: true

runAsUser: 65534

serviceAccountName: prometheus-operator

---

apiVersion: v1

kind: ServiceAccount

metadata:

labels:

app.kubernetes.io/component: controller

app.kubernetes.io/name: prometheus-operator

app.kubernetes.io/version: v0.38.3

name: prometheus-operator

namespace: monitoring

---

apiVersion: v1

kind: Service

metadata:

labels:

app.kubernetes.io/component: controller

app.kubernetes.io/name: prometheus-operator

app.kubernetes.io/version: v0.38.3

name: prometheus-operator

namespace: monitoring

spec:

clusterIP: None

ports:

- name: http

port: 8080

targetPort: http

selector:

app.kubernetes.io/component: controller

app.kubernetes.io/name: prometheus-operator

安装 Longhorn ServiceMonitor

Longhorn ServiceMonitor 有一个标签选择器 app: longhorn-manager 来选择 Longhorn 后端服务。

稍后,Prometheus CRD 可以包含 Longhorn ServiceMonitor,以便 Prometheus server 可以发现所有 Longhorn manager pods 及其端点。

apiVersion: monitoring.coreos.com/v1

kind: ServiceMonitor

metadata:

name: longhorn-prometheus-servicemonitor

namespace: monitoring

labels:

name: longhorn-prometheus-servicemonitor

spec:

selector:

matchLabels:

app: longhorn-manager

namespaceSelector:

matchNames:

- longhorn-system

endpoints:

- port: manager

安装和配置 Prometheus AlertManager

-

使用

3个实例创建一个高可用的Alertmanager部署:apiVersion: monitoring.coreos.com/v1 kind: Alertmanager metadata: name: longhorn namespace: monitoring spec: replicas: 3 -

除非提供有效配置,否则

Alertmanager实例将无法启动。有关 Alertmanager 配置的更多说明,请参见此处。下面的代码给出了一个示例配置:global: resolve_timeout: 5m route: group_by: [alertname] receiver: email_and_slack receivers: - name: email_and_slack email_configs: - to: <the email address to send notifications to> from: <the sender address> smarthost: <the SMTP host through which emails are sent> # SMTP authentication information. auth_username: <the username> auth_identity: <the identity> auth_password: <the password> headers: subject: 'Longhorn-Alert' text: |- {{ range .Alerts }} *Alert:* {{ .Annotations.summary }} - `{{ .Labels.severity }}` *Description:* {{ .Annotations.description }} *Details:* {{ range .Labels.SortedPairs }} • *{{ .Name }}:* `{{ .Value }}` {{ end }} {{ end }} slack_configs: - api_url: <the Slack webhook URL> channel: <the channel or user to send notifications to> text: |- {{ range .Alerts }} *Alert:* {{ .Annotations.summary }} - `{{ .Labels.severity }}` *Description:* {{ .Annotations.description }} *Details:* {{ range .Labels.SortedPairs }} • *{{ .Name }}:* `{{ .Value }}` {{ end }} {{ end }}将上述

Alertmanager配置保存在名为alertmanager.yaml的文件中,并使用kubectl从中创建一个secret。Alertmanager实例要求secret资源命名遵循alertmanager-{ALERTMANAGER_NAME}格式。

在上一步中,Alertmanager的名称是longhorn,所以secret名称必须是alertmanager-longhorn$ kubectl create secret generic alertmanager-longhorn --from-file=alertmanager.yaml -n monitoring -

为了能够查看

Alertmanager的Web UI,请通过Service公开它。一个简单的方法是使用NodePort类型的Service:apiVersion: v1 kind: Service metadata: name: alertmanager-longhorn namespace: monitoring spec: type: NodePort ports: - name: web nodePort: 30903 port: 9093 protocol: TCP targetPort: web selector: alertmanager: longhorn创建上述服务后,您可以通过节点的

IP和端口30903访问Alertmanager的web UI。使用上面的

NodePort服务进行快速验证,因为它不通过TLS连接进行通信。您可能希望将服务类型更改为ClusterIP,并设置一个Ingress-controller以通过TLS连接公开Alertmanager的web UI。

安装和配置 Prometheus server

-

创建定义警报条件的

PrometheusRule自定义资源。apiVersion: monitoring.coreos.com/v1 kind: PrometheusRule metadata: labels: prometheus: longhorn role: alert-rules name: prometheus-longhorn-rules namespace: monitoring spec: groups: - name: longhorn.rules rules: - alert: LonghornVolumeUsageCritical annotations: description: Longhorn volume {{$labels.volume}} on {{$labels.node}} is at {{$value}}% used for more than 5 minutes. summary: Longhorn volume capacity is over 90% used. expr: 100 * (longhorn_volume_usage_bytes / longhorn_volume_capacity_bytes) > 90 for: 5m labels: issue: Longhorn volume {{$labels.volume}} usage on {{$labels.node}} is critical. severity: critical有关如何定义警报规则的更多信息,请参见https://prometheus.io/docs/prometheus/latest/configuration/alerting_rules/#alerting-rules

-

如果激活了 RBAC 授权,则为

Prometheus Pod创建ClusterRole和ClusterRoleBinding:apiVersion: v1 kind: ServiceAccount metadata: name: prometheus namespace: monitoringapiVersion: rbac.authorization.k8s.io/v1beta1 kind: ClusterRole metadata: name: prometheus namespace: monitoring rules: - apiGroups: [""] resources: - nodes - services - endpoints - pods verbs: ["get", "list", "watch"] - apiGroups: [""] resources: - configmaps verbs: ["get"] - nonResourceURLs: ["/metrics"] verbs: ["get"]apiVersion: rbac.authorization.k8s.io/v1beta1 kind: ClusterRoleBinding metadata: name: prometheus roleRef: apiGroup: rbac.authorization.k8s.io kind: ClusterRole name: prometheus subjects: - kind: ServiceAccount name: prometheus namespace: monitoring -

创建

Prometheus自定义资源。请注意,我们在spec中选择了Longhorn服务监视器(service monitor)和Longhorn规则。apiVersion: monitoring.coreos.com/v1 kind: Prometheus metadata: name: prometheus namespace: monitoring spec: replicas: 2 serviceAccountName: prometheus alerting: alertmanagers: - namespace: monitoring name: alertmanager-longhorn port: web serviceMonitorSelector: matchLabels: name: longhorn-prometheus-servicemonitor ruleSelector: matchLabels: prometheus: longhorn role: alert-rules -

为了能够查看

Prometheus服务器的web UI,请通过Service公开它。一个简单的方法是使用NodePort类型的Service:apiVersion: v1 kind: Service metadata: name: prometheus namespace: monitoring spec: type: NodePort ports: - name: web nodePort: 30904 port: 9090 protocol: TCP targetPort: web selector: prometheus: prometheus创建上述服务后,您可以通过节点的

IP和端口30904访问Prometheus server的web UI。此时,您应该能够在

Prometheus server UI的目标和规则部分看到所有Longhorn manager targets以及Longhorn rules。使用上述

NodePortservice 进行快速验证,因为它不通过TLS连接进行通信。您可能希望将服务类型更改为ClusterIP,并设置一个Ingress-controller以通过TLS连接公开Prometheus server的web UI。

安装 Grafana

-

创建

Grafana数据源配置:apiVersion: v1 kind: ConfigMap metadata: name: grafana-datasources namespace: monitoring data: prometheus.yaml: |- { "apiVersion": 1, "datasources": [ { "access":"proxy", "editable": true, "name": "prometheus", "orgId": 1, "type": "prometheus", "url": "http://prometheus:9090", "version": 1 } ] } -

创建

Grafana部署:apiVersion: apps/v1 kind: Deployment metadata: name: grafana namespace: monitoring labels: app: grafana spec: replicas: 1 selector: matchLabels: app: grafana template: metadata: name: grafana labels: app: grafana spec: containers: - name: grafana image: grafana/grafana:7.1.5 ports: - name: grafana containerPort: 3000 resources: limits: memory: "500Mi" cpu: "300m" requests: memory: "500Mi" cpu: "200m" volumeMounts: - mountPath: /var/lib/grafana name: grafana-storage - mountPath: /etc/grafana/provisioning/datasources name: grafana-datasources readOnly: false volumes: - name: grafana-storage emptyDir: {} - name: grafana-datasources configMap: defaultMode: 420 name: grafana-datasources -

在

NodePort 32000上暴露Grafana:apiVersion: v1 kind: Service metadata: name: grafana namespace: monitoring spec: selector: app: grafana type: NodePort ports: - port: 3000 targetPort: 3000 nodePort: 32000使用上述

NodePort服务进行快速验证,因为它不通过TLS连接进行通信。您可能希望将服务类型更改为ClusterIP,并设置一个Ingress-controller以通过TLS连接公开Grafana。 -

使用端口

32000上的任何节点IP访问Grafana仪表板。默认凭据为:User: admin Pass: admin -

安装 Longhorn dashboard

进入

Grafana后,导入预置的面板:https://grafana.com/grafana/dashboards/13032有关如何导入

Grafana dashboard的说明,请参阅 https://grafana.com/docs/grafana/latest/reference/export_import/成功后,您应该会看到以下

dashboard:

将 Longhorn 指标集成到 Rancher 监控系统中

关于 Rancher 监控系统

使用 Rancher,您可以通过与领先的开源监控解决方案 Prometheus 的集成来监控集群节点、Kubernetes 组件和软件部署的状态和进程。

有关如何部署/启用 Rancher 监控系统的说明,请参见https://rancher.com/docs/rancher/v2.x/en/monitoring-alerting/

将 Longhorn 指标添加到 Rancher 监控系统

如果您使用 Rancher 来管理您的 Kubernetes 并且已经启用 Rancher 监控,您可以通过简单地部署以下 ServiceMonitor 将 Longhorn 指标添加到 Rancher 监控中:

apiVersion: monitoring.coreos.com/v1

kind: ServiceMonitor

metadata:

name: longhorn-prometheus-servicemonitor

namespace: longhorn-system

labels:

name: longhorn-prometheus-servicemonitor

spec:

selector:

matchLabels:

app: longhorn-manager

namespaceSelector:

matchNames:

- longhorn-system

endpoints:

- port: manager

创建 ServiceMonitor 后,Rancher 将自动发现所有 Longhorn 指标。

然后,您可以设置 Grafana 仪表板以进行可视化。

Longhorn 监控指标

Volume(卷)

| 指标名 | 说明 | 示例 |

|---|---|---|

| longhorn_volume_actual_size_bytes | 对应节点上卷的每个副本使用的实际空间 | longhorn_volume_actual_size_bytes{node="worker-2",volume="testvol"} 1.1917312e+08 |

| longhorn_volume_capacity_bytes | 此卷的配置大小(以 byte 为单位) | longhorn_volume_capacity_bytes{node="worker-2",volume="testvol"} 6.442450944e+09 |

| longhorn_volume_state | 本卷状态: 1=creating, 2=attached, 3=Detached, 4=Attaching, 5=Detaching, 6=Deleting | longhorn_volume_state{node="worker-2",volume="testvol"} 2 |

| longhorn_volume_robustness | 本卷的健壮性: 0=unknown, 1=healthy, 2=degraded, 3=faulted | longhorn_volume_robustness{node="worker-2",volume="testvol"} 1 |

Node(节点)

| 指标名 | 说明 | 示例 |

|---|---|---|

| longhorn_node_status | 该节点的状态: 1=true, 0=false | longhorn_node_status{condition="ready",condition_reason="",node="worker-2"} 1 |

| longhorn_node_count_total | Longhorn 系统中的节点总数 | longhorn_node_count_total 4 |

| longhorn_node_cpu_capacity_millicpu | 此节点上的最大可分配 CPU | longhorn_node_cpu_capacity_millicpu{node="worker-2"} 2000 |

| longhorn_node_cpu_usage_millicpu | 此节点上的 CPU 使用率 | longhorn_node_cpu_usage_millicpu{node="pworker-2"} 186 |

| longhorn_node_memory_capacity_bytes | 此节点上的最大可分配内存 | longhorn_node_memory_capacity_bytes{node="worker-2"} 4.031229952e+09 |

| longhorn_node_memory_usage_bytes | 此节点上的内存使用情况 | longhorn_node_memory_usage_bytes{node="worker-2"} 1.833582592e+09 |

| longhorn_node_storage_capacity_bytes | 本节点的存储容量 | longhorn_node_storage_capacity_bytes{node="worker-3"} 8.3987283968e+10 |

| longhorn_node_storage_usage_bytes | 该节点的已用存储 | longhorn_node_storage_usage_bytes{node="worker-3"} 9.060941824e+09 |

| longhorn_node_storage_reservation_bytes | 此节点上为其他应用程序和系统保留的存储空间 | longhorn_node_storage_reservation_bytes{node="worker-3"} 2.519618519e+10 |

Disk(磁盘)

| 指标名 | 说明 | 示例 |

|---|---|---|

| longhorn_disk_capacity_bytes | 此磁盘的存储容量 | longhorn_disk_capacity_bytes{disk="default-disk-8b28ee3134628183",node="worker-3"} 8.3987283968e+10 |

| longhorn_disk_usage_bytes | 此磁盘的已用存储空间 | longhorn_disk_usage_bytes{disk="default-disk-8b28ee3134628183",node="worker-3"} 9.060941824e+09 |

| longhorn_disk_reservation_bytes | 此磁盘上为其他应用程序和系统保留的存储空间 | longhorn_disk_reservation_bytes{disk="default-disk-8b28ee3134628183",node="worker-3"} 2.519618519e+10 |

Instance Manager(实例管理器)

| 指标名 | 说明 | 示例 |

|---|---|---|

| longhorn_instance_manager_cpu_usage_millicpu | 这个 longhorn 实例管理器的 CPU 使用率 | longhorn_instance_manager_cpu_usage_millicpu{instance_manager="instance-manager-e-2189ed13",instance_manager_type="engine",node="worker-2"} 80 |

| longhorn_instance_manager_cpu_requests_millicpu | 在这个 Longhorn 实例管理器的 kubernetes 中请求的 CPU 资源 | longhorn_instance_manager_cpu_requests_millicpu{instance_manager="instance-manager-e-2189ed13",instance_manager_type="engine",node="worker-2"} 250 |

| longhorn_instance_manager_memory_usage_bytes | 这个 longhorn 实例管理器的内存使用情况 | longhorn_instance_manager_memory_usage_bytes{instance_manager="instance-manager-e-2189ed13",instance_manager_type="engine",node="worker-2"} 2.4072192e+07 |

| longhorn_instance_manager_memory_requests_bytes | 这个 longhorn 实例管理器在 Kubernetes 中请求的内存 | longhorn_instance_manager_memory_requests_bytes{instance_manager="instance-manager-e-2189ed13",instance_manager_type="engine",node="worker-2"} 0 |

Manager(管理器)

| 指标名 | 说明 | 示例 |

|---|---|---|

| longhorn_manager_cpu_usage_millicpu | 这个 Longhorn Manager 的 CPU 使用率 | longhorn_manager_cpu_usage_millicpu{manager="longhorn-manager-5rx2n",node="worker-2"} 27 |

| longhorn_manager_memory_usage_bytes | 这个 Longhorn Manager 的内存使用情况 | longhorn_manager_memory_usage_bytes{manager="longhorn-manager-5rx2n",node="worker-2"} 2.6144768e+07 |

支持 Kubelet Volume 指标

关于 Kubelet Volume 指标

Kubelet 公开了以下指标:

kubelet_volume_stats_capacity_byteskubelet_volume_stats_available_byteskubelet_volume_stats_used_byteskubelet_volume_stats_inodeskubelet_volume_stats_inodes_freekubelet_volume_stats_inodes_used

这些指标衡量与 Longhorn 块设备内的 PVC 文件系统相关的信息。

它们与 longhorn_volume_* 指标不同,后者测量特定于 Longhorn 块设备(block device)的信息。

您可以设置一个监控系统来抓取 Kubelet 指标端点以获取 PVC 的状态并设置异常事件的警报,例如 PVC 即将耗尽存储空间。

一个流行的监控设置是 prometheus-operator/kube-prometheus-stack,,它抓取 kubelet_volume_stats_* 指标并为它们提供仪表板和警报规则。

Longhorn CSI 插件支持

在 v1.1.0 中,Longhorn CSI 插件根据 CSI spec 支持 NodeGetVolumeStats RPC。

这允许 kubelet 查询 Longhorn CSI 插件以获取 PVC 的状态。

然后 kubelet 在 kubelet_volume_stats_* 指标中公开该信息。

Longhorn 警报规则示例

我们在下面提供了几个示例 Longhorn 警报规则供您参考。请参阅此处获取所有可用 Longhorn 指标的列表并构建您自己的警报规则。

apiVersion: monitoring.coreos.com/v1

kind: PrometheusRule

metadata:

labels:

prometheus: longhorn

role: alert-rules

name: prometheus-longhorn-rules

namespace: monitoring

spec:

groups:

- name: longhorn.rules

rules:

- alert: LonghornVolumeActualSpaceUsedWarning

annotations:

description: The actual space used by Longhorn volume {{$labels.volume}} on {{$labels.node}} is at {{$value}}% capacity for

more than 5 minutes.

summary: The actual used space of Longhorn volume is over 90% of the capacity.

expr: (longhorn_volume_actual_size_bytes / longhorn_volume_capacity_bytes) * 100 > 90

for: 5m

labels:

issue: The actual used space of Longhorn volume {{$labels.volume}} on {{$labels.node}} is high.

severity: warning

- alert: LonghornVolumeStatusCritical

annotations:

description: Longhorn volume {{$labels.volume}} on {{$labels.node}} is Fault for

more than 2 minutes.

summary: Longhorn volume {{$labels.volume}} is Fault

expr: longhorn_volume_robustness == 3

for: 5m

labels:

issue: Longhorn volume {{$labels.volume}} is Fault.

severity: critical

- alert: LonghornVolumeStatusWarning

annotations:

description: Longhorn volume {{$labels.volume}} on {{$labels.node}} is Degraded for

more than 5 minutes.

summary: Longhorn volume {{$labels.volume}} is Degraded

expr: longhorn_volume_robustness == 2

for: 5m

labels:

issue: Longhorn volume {{$labels.volume}} is Degraded.

severity: warning

- alert: LonghornNodeStorageWarning

annotations:

description: The used storage of node {{$labels.node}} is at {{$value}}% capacity for

more than 5 minutes.

summary: The used storage of node is over 70% of the capacity.

expr: (longhorn_node_storage_usage_bytes / longhorn_node_storage_capacity_bytes) * 100 > 70

for: 5m

labels:

issue: The used storage of node {{$labels.node}} is high.

severity: warning

- alert: LonghornDiskStorageWarning

annotations:

description: The used storage of disk {{$labels.disk}} on node {{$labels.node}} is at {{$value}}% capacity for

more than 5 minutes.

summary: The used storage of disk is over 70% of the capacity.

expr: (longhorn_disk_usage_bytes / longhorn_disk_capacity_bytes) * 100 > 70

for: 5m

labels:

issue: The used storage of disk {{$labels.disk}} on node {{$labels.node}} is high.

severity: warning

- alert: LonghornNodeDown

annotations:

description: There are {{$value}} Longhorn nodes which have been offline for more than 5 minutes.

summary: Longhorn nodes is offline

expr: longhorn_node_total - (count(longhorn_node_status{condition="ready"}==1) OR on() vector(0))

for: 5m

labels:

issue: There are {{$value}} Longhorn nodes are offline

severity: critical

- alert: LonghornIntanceManagerCPUUsageWarning

annotations:

description: Longhorn instance manager {{$labels.instance_manager}} on {{$labels.node}} has CPU Usage / CPU request is {{$value}}% for

more than 5 minutes.

summary: Longhorn instance manager {{$labels.instance_manager}} on {{$labels.node}} has CPU Usage / CPU request is over 300%.

expr: (longhorn_instance_manager_cpu_usage_millicpu/longhorn_instance_manager_cpu_requests_millicpu) * 100 > 300

for: 5m

labels:

issue: Longhorn instance manager {{$labels.instance_manager}} on {{$labels.node}} consumes 3 times the CPU request.

severity: warning

- alert: LonghornNodeCPUUsageWarning

annotations:

description: Longhorn node {{$labels.node}} has CPU Usage / CPU capacity is {{$value}}% for

more than 5 minutes.

summary: Longhorn node {{$labels.node}} experiences high CPU pressure for more than 5m.

expr: (longhorn_node_cpu_usage_millicpu / longhorn_node_cpu_capacity_millicpu) * 100 > 90

for: 5m

labels:

issue: Longhorn node {{$labels.node}} experiences high CPU pressure.

severity: warning

在https://prometheus.io/docs/prometheus/latest/configuration/alerting_rules/#alerting-rules

查看有关如何定义警报规则的更多信息。

公众号:黑客下午茶