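Ceph preparation

Official documentation: http://docs.ceph.com/docs/master/rbd/rbd-openstack/

Official Chinese documentation: http://docs.ceph.org.cn/rbd/rbd-openstack/

Environment preparation: make sure the hosts file on every OpenStack node contains the hostnames of all Ceph cluster nodes, and that the hosts file on every Ceph node contains the hostnames of all OpenStack nodes.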

Create the storage pools

# ceph osd pool create volumes 128

# ceph osd pool create images 128

# ceph osd pool create backups 128

# ceph osd pool create vms 128
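The four pool-creation commands above can also be run as one loop. This is a minimal bash sketch, not part of the original procedure: `PG_NUM` and `DRY_RUN` are hypothetical variables introduced here. `PG_NUM` defaults to the 128 used above, and with the default `DRY_RUN=1` the script only prints the commands, so you can review them before rerunning with `DRY_RUN=0`.

```shell
#!/usr/bin/env bash
# Sketch: create the four pools used in this guide in one loop.
# PG_NUM (hypothetical variable) defaults to the 128 used above.
# DRY_RUN=1 (hypothetical, default) only prints each command; DRY_RUN=0 runs it.
set -euo pipefail

PG_NUM="${PG_NUM:-128}"
DRY_RUN="${DRY_RUN:-1}"

# Print or execute a command depending on DRY_RUN.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

create_pools() {
  local pool
  for pool in volumes images backups vms; do
    run ceph osd pool create "$pool" "$PG_NUM"
  done
}

create_pools
```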

Create Ceph users for OpenStack

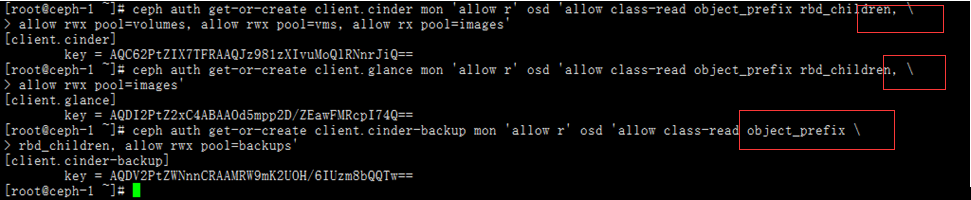

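If you have cephx authentication enabled, create new users for Nova/Cinder and for Glance (the third command also creates a user for Cinder Backup). The commands are: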

ceph auth get-or-create client.cinder mon 'allow r' osd 'allow class-read object_prefix rbd_children, allow rwx pool=volumes, allow rwx pool=vms, allow rx pool=images'

ceph auth get-or-create client.glance mon 'allow r' osd 'allow class-read object_prefix rbd_children, allow rwx pool=images'

ceph auth get-or-create client.cinder-backup mon 'allow r' osd 'allow class-read object_prefix rbd_children, allow rwx pool=backups'
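The `osd` capability strings above all follow one pattern: the shared `class-read object_prefix rbd_children` grant, then one `allow <perm> pool=<pool>` entry per pool. A minimal bash sketch that builds such a string, assuming a hypothetical helper `build_osd_caps` that takes `perm:pool` pairs (it is not a Ceph command):

```shell
#!/usr/bin/env bash
# Sketch: build the "osd" caps string used by `ceph auth get-or-create`
# from perm:pool pairs. build_osd_caps is a hypothetical helper.
set -euo pipefail

build_osd_caps() {
  # Every user in this guide starts with this grant (copied from above).
  local caps="allow class-read object_prefix rbd_children"
  local entry perm pool
  for entry in "$@"; do
    perm="${entry%%:*}"   # text before the first ':'
    pool="${entry#*:}"    # text after the first ':'
    caps="${caps}, allow ${perm} pool=${pool}"
  done
  printf '%s\n' "$caps"
}

# Reproduces the client.cinder osd caps from the command above.
build_osd_caps rwx:volumes rwx:vms rx:images
```

For example, `ceph auth get-or-create client.cinder mon 'allow r' osd "$(build_osd_caps rwx:volumes rwx:vms rx:images)"` should be equivalent to the client.cinder command above.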

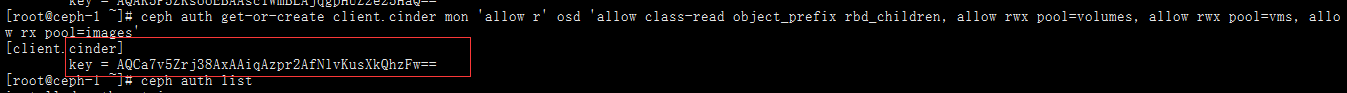

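Each command prints the keyring of the user it creates, as shown in the figure above. The keyring is what other nodes use to access the cluster.

After creating the users, run ceph auth list on a Ceph mon node to check that they are correct. If the output looks like the figure below, later calls to Ceph will fail with permission errors.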

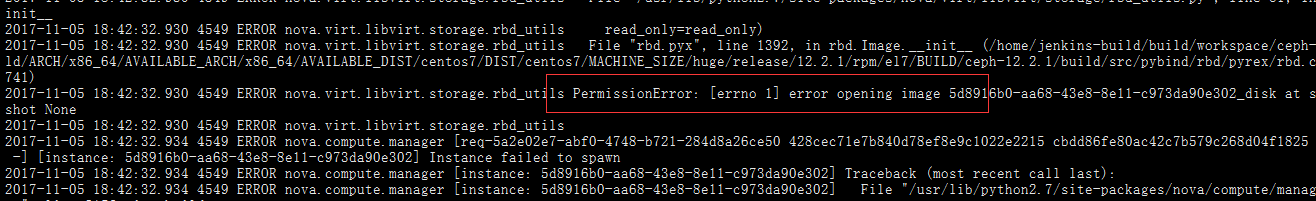
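Instead of reading the `ceph auth list` output by eye, the check can be scripted. A minimal sketch, assuming the keyring-style text that `ceph auth get client.<name>` prints (a line of the form `caps osd = "..."`); `osd_caps` and `has_pool_cap` are hypothetical helper names, and the matching is plain text comparison, not Ceph's own caps parser.

```shell
#!/usr/bin/env bash
# Sketch: verify a Ceph user's osd caps from keyring-style text, as printed
# by `ceph auth get client.<name>` (assumed line format:
#     caps osd = "allow ..., allow rwx pool=images").
# osd_caps and has_pool_cap are hypothetical helper names.
set -euo pipefail

# Read keyring text on stdin; print the osd caps value without its quotes.
osd_caps() {
  sed -n 's/^[[:space:]]*caps osd[[:space:]]*=[[:space:]]*"\(.*\)"[[:space:]]*$/\1/p'
}

# Succeed if caps string $1 contains exactly the grant "allow $2 pool=$3".
has_pool_cap() {
  local caps="$1" want="allow $2 pool=$3" grant
  local -a grants
  [ -n "$caps" ] || return 1
  # Split on commas; spaces are kept, so trim them from each grant below.
  IFS=',' read -r -a grants <<< "$caps"
  for grant in "${grants[@]}"; do
    grant="${grant#"${grant%%[![:space:]]*}"}"   # strip leading whitespace
    grant="${grant%"${grant##*[![:space:]]}"}"   # strip trailing whitespace
    if [ "$grant" = "$want" ]; then
      return 0
    fi
  done
  return 1
}
```

Typical use: `caps="$(ceph auth get client.glance | osd_caps)"` followed by `has_pool_cap "$caps" rwx images && echo "client.glance can write to images"`.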

Error message:

Example: the {your-glance-api-server} node

Copy the keyrings of client.cinder, client.glance and client.cinder-backup to the appropriate nodes and change their ownership:

ceph auth get-or-create client.glance | ssh {your-glance-api-server} sudo tee /etc/ceph/ceph.client.glance.keyring

ceph auth get-or-create client.glance | ssh controller sudo tee /etc/ceph/ceph.client.glance.keyring

ssh controller sudo chown glance:glance /etc/ceph/ceph.client.glance.keyring

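Alternatively, log in to the {your-glance-api-server} node and change the keyring's ownership there.

Note: the Ceph packages must already be installed on the controller node, i.e. the /etc/ceph directory must exist. Afterwards, the ceph.client.glance.keyring keyring will be present in /etc/ceph on controller.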

Example: the {your-volume-server} node

ceph auth get-or-create client.cinder | ssh {your-volume-server} sudo tee /etc/ceph/ceph.client.cinder.keyring

ceph auth get-or-create client.cinder | ssh computer-1 sudo tee /etc/ceph/ceph.client.cinder.keyring

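ssh computer-1 sudo chown cinder:cinder /etc/ceph/ceph.client.cinder.keyring

Alternatively, log in to the {your-volume-server} node and change the keyring's ownership there.

Note: the Ceph packages must already be installed on computer-1, i.e. the /etc/ceph directory must exist. Afterwards, the ceph.client.cinder.keyring keyring will be present in /etc/ceph on computer-1.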
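The per-node copy-and-chown steps above can be collected into one loop. A minimal sketch under these assumptions: the glance/controller and cinder/computer-1 pairs come from the examples above, `backup-node` is a hypothetical host name for cinder-backup, and `DRY_RUN` (default 1) is a hypothetical switch that only prints the commands.

```shell
#!/usr/bin/env bash
# Sketch: copy each client keyring to its node and fix ownership in one pass.
# glance -> controller and cinder -> computer-1 follow the examples above;
# "backup-node" is a hypothetical host for cinder-backup.
# DRY_RUN=1 (hypothetical, default) only prints the commands.
set -euo pipefail

DRY_RUN="${DRY_RUN:-1}"

# Each entry: <ceph user>:<target host>:<local owner of the keyring>
ENTRIES=(
  "glance:controller:glance"
  "cinder:computer-1:cinder"
  "cinder-backup:backup-node:cinder"
)

distribute_keyrings() {
  local entry user host owner keyring
  for entry in "${ENTRIES[@]}"; do
    IFS=':' read -r user host owner <<< "$entry"
    keyring="/etc/ceph/ceph.client.${user}.keyring"
    if [ "$DRY_RUN" = "1" ]; then
      echo "ceph auth get-or-create client.${user} | ssh ${host} sudo tee ${keyring}"
      echo "ssh ${host} sudo chown ${owner}:${owner} ${keyring}"
    else
      ceph auth get-or-create "client.${user}" | ssh "$host" sudo tee "$keyring" >/dev/null
      ssh "$host" sudo chown "${owner}:${owner}" "$keyring"
    fi
  done
}

distribute_keyrings
```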

Hosts running glance-api, cinder-volume, nova-compute or cinder-backup act as Ceph clients, and each of them needs the ceph.conf file. Copy the user keyring and ceph.conf to the glance-api node and the cinder-volume node:

ceph-deploy# ceph auth get-or-create client.ceph >> ceph.client.ceph.keyring

# scp ceph.client.ceph.keyring ceph.conf controller:/etc/ceph/

# scp ceph.client.ceph.keyring ceph.conf cinder-volume:/etc/ceph/

Integrating glance-api

install rbd

# yum install ceph-common python-rbd

Set keyring ownership (Glance reads the keyring of the user set in rbd_store_user, i.e. client.glance)

# chown glance:glance /etc/ceph/ceph.client.glance.keyring
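Wrong keyring ownership only shows up later as permission errors, so it can be worth checking right after the chown. A minimal sketch with a hypothetical helper `check_keyring_owner`; it uses GNU `stat -c` (as on CentOS), which BSD/macOS `stat` does not accept.

```shell
#!/usr/bin/env bash
# Sketch: verify that a keyring exists and has the expected user:group owner,
# e.g. glance:glance for /etc/ceph/ceph.client.glance.keyring.
# check_keyring_owner is a hypothetical helper; `stat -c` is GNU syntax.
set -euo pipefail

check_keyring_owner() {
  local file="$1" expected="$2" actual
  if [ ! -f "$file" ]; then
    echo "missing: $file" >&2
    return 1
  fi
  actual="$(stat -c '%U:%G' "$file")"
  if [ "$actual" != "$expected" ]; then
    echo "wrong owner for $file: $actual (expected $expected)" >&2
    return 1
  fi
  echo "ok: $file is owned by $actual"
}
```

Usage: `check_keyring_owner /etc/ceph/ceph.client.glance.keyring glance:glance`.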

vim /etc/glance/glance-api.conf and add the following parameters:

[DEFAULT]

verbose=True

show_image_direct_url=True

[glance_store]

default_store=rbd

stores = rbd

rbd_store_pool = images

# the user created above as client.glance; write only "glance"
rbd_store_user = glance

rbd_store_ceph_conf = /etc/ceph/ceph.conf

rbd_store_chunk_size = 8

filesystem_store_datadir=/var/lib/glance/images/

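Note: some guides online comment out this line. Tested on Liberty: whether or not it is commented out, images are no longer stored under /var/lib/glance/images; they are stored in the Ceph cluster's images pool instead.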
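To confirm the values Glance will actually read, a small INI reader helps. A minimal sketch, assuming a hypothetical `ini_get` helper: it skips lines starting with `#` or `;`, trims spaces around `=`, prints the last value of the key in the given section, and returns non-zero if the key is absent. It does not implement every oslo.config rule (multi-line values, for example).

```shell
#!/usr/bin/env bash
# Sketch: read one key from one section of an INI-style file such as
# /etc/glance/glance-api.conf. ini_get is a hypothetical helper.
# Exit status 1 means the key was not found in that section.
set -euo pipefail

ini_get() {
  local file="$1" section="$2" key="$3"
  awk -v section="$section" -v key="$key" '
    /^[ \t]*[#;]/ { next }                  # comment line
    /^[ \t]*\[.*\][ \t]*$/ {                # [section] header
      name = $0
      gsub(/^[ \t]*\[|\][ \t]*$/, "", name)
      in_section = (name == section)
      next
    }
    in_section && index($0, "=") > 0 {
      eq = index($0, "=")
      k = substr($0, 1, eq - 1)
      v = substr($0, eq + 1)
      gsub(/^[ \t]+|[ \t]+$/, "", k)
      gsub(/^[ \t]+|[ \t]+$/, "", v)
      if (k == key) { value = v; found = 1 }   # last occurrence wins
    }
    END { if (found) print value; else exit 1 }
  ' "$file"
}
```

With the settings above, `ini_get /etc/glance/glance-api.conf glance_store rbd_store_pool` should print `images`.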

restart glance service

systemctl restart openstack-glance-api.service

systemctl restart openstack-glance-registry.service
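One way to check that new images really land in Ceph after the restart: the Glance RBD store names each RBD image after the Glance image ID, so every ID Glance lists should also appear in the images pool. A minimal sketch with a hypothetical helper that compares the two lists:

```shell
#!/usr/bin/env bash
# Sketch: print Glance image IDs that have no RBD image of the same name.
# glance_ids_missing_in_rbd is a hypothetical helper; both arguments are
# newline-separated lists.
set -euo pipefail

glance_ids_missing_in_rbd() {
  local glance_ids="$1" rbd_names="$2"
  # Normalise both lists: one name per line, blank lines dropped, sorted.
  # comm -23 then prints the lines found only in the first list.
  comm -23 <(printf '%s\n' "$glance_ids" | sed '/^$/d' | sort -u) \
           <(printf '%s\n' "$rbd_names" | sed '/^$/d' | sort -u)
}
```

Usage: `glance_ids_missing_in_rbd "$(openstack image list -f value -c ID)" "$(rbd --id glance ls images)"` prints nothing when every image is in the pool. Images uploaded before the switch to rbd will still be reported, since they live in the old file store.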

Integrating cinder-volume

install ceph-common

yum install ceph-common python-rbd

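Set keyring ownership

Log in to the cinder node and change the keyring's ownership (cinder reads the keyring of the user set in rbd_user, i.e. client.cinder):

chown cinder:cinder /etc/ceph/ceph.client.cinder.keyring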

vim /etc/cinder/cinder.conf and add the following parameters:

[DEFAULT]

enabled_backends = ceph

[ceph]

volume_driver = cinder.volume.drivers.rbd.RBDDriver

volume_backend_name = ceph

rbd_pool = volumes

rbd_ceph_conf = /etc/ceph/ceph.conf

rbd_flatten_volume_from_snapshot = false

rbd_max_clone_depth = 5

rbd_store_chunk_size = 4

rados_connect_timeout = -1

glance_api_version = 2

# the user created above as client.cinder; write only "cinder"
rbd_user = cinder

rbd_secret_uuid = 457eb676-33da-42ec-9a8c-9293d545c337

restart cinder-volume service

# systemctl restart openstack-cinder-volume.service

Integrating compute

install

# yum install ceph-common python-rbd

vim /etc/nova/nova.conf and add the following parameters:

[cinder]

os_region_name = RegionOne

[libvirt]

images_type = rbd

images_rbd_pool = vms

images_rbd_ceph_conf = /etc/ceph/ceph.conf

disk_cachemodes="network=writeback"

# the user created above as client.cinder; write only "cinder"
rbd_user = cinder

rbd_secret_uuid = 457eb676-33da-42ec-9a8c-9293d545c337

inject_password = false

inject_key = false

inject_partition = -2

live_migration_flag="VIR_MIGRATE_UNDEFINE_SOURCE,VIR_MIGRATE_PEER2PEER,VIR_MIGRATE_LIVE,VIR_MIGRATE_PERSIST_DEST,VIR_MIGRATE_TUNNELLED"

hw_disk_discard = unmap

Copy ceph.conf from the Ceph cluster to /etc/ceph/ on the controller or compute node and change its ownership:

# scp ceph-node:/etc/ceph/ceph.conf /etc/ceph

# chown cinder:cinder /etc/ceph/ceph.conf

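Configure libvirt

uuidgen generates a new random UUID each time it runs; this guide uses the fixed value below, which is the rbd_secret_uuid already set in cinder.conf and nova.conf.

# uuidgen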

457eb676-33da-42ec-9a8c-9293d545c337

# cat > secret.xml <<EOF
<secret ephemeral='no' private='no'>
  <uuid>457eb676-33da-42ec-9a8c-9293d545c337</uuid>
  <usage type='ceph'>
    <name>client.cinder secret</name>
  </usage>
</secret>
EOF
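This guide uses one UUID in three places: `rbd_secret_uuid` in cinder.conf, `rbd_secret_uuid` in nova.conf, and `<uuid>` in secret.xml. A typo in any of them is easy to miss, so here is a minimal sketch of a consistency check. The helper names are hypothetical, and the extraction is plain `sed` pattern matching, not a real INI or XML parser.

```shell
#!/usr/bin/env bash
# Sketch: check that cinder.conf, nova.conf and secret.xml use the same UUID.
# conf_uuid, xml_uuid and check_secret_uuids are hypothetical helpers.
set -euo pipefail

# Print the first rbd_secret_uuid value found in an INI-style file.
conf_uuid() {
  sed -n 's/^[[:space:]]*rbd_secret_uuid[[:space:]]*=[[:space:]]*\([^[:space:]]*\).*$/\1/p' "$1" \
    | sed -n '1p'
}

# Print the value inside <uuid>...</uuid> from a libvirt secret XML file.
xml_uuid() {
  sed -n 's/.*<uuid>[[:space:]]*\([^<[:space:]]*\)[[:space:]]*<\/uuid>.*/\1/p' "$1" \
    | sed -n '1p'
}

# Args: cinder.conf nova.conf secret.xml
check_secret_uuids() {
  local a b c
  a="$(conf_uuid "$1")"
  b="$(conf_uuid "$2")"
  c="$(xml_uuid "$3")"
  if [ -n "$a" ] && [ "$a" = "$b" ] && [ "$b" = "$c" ]; then
    echo "ok: $a"
  else
    echo "mismatch: cinder=${a:-none} nova=${b:-none} secret=${c:-none}" >&2
    return 1
  fi
}
```

Usage: `check_secret_uuids /etc/cinder/cinder.conf /etc/nova/nova.conf secret.xml`.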

virsh secret-list    # lists the secrets defined so far

virsh secret-undefine 457eb676-33da-42ec-9a8c-9293d545c337    # undefines (effectively deletes) a previously defined secret

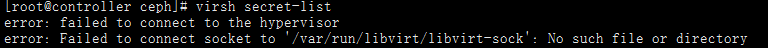

If you get an error like the one above, the libvirtd service is not running on this node. Start it and check its status:

systemctl start libvirtd.service

systemctl status libvirtd.service

# virsh secret-define --file secret.xml    # define the secret

Secret 457eb676-33da-42ec-9a8c-9293d545c337 created

# cat /etc/ceph/ceph.client.cinder.keyring

[client.cinder]

key = AQC62PtZIX7TFRAAQJz981zXIvuMoQlRNnrJiQ==

Set the secret's value to the client.cinder key shown in the keyring above:

virsh secret-set-value --secret 457eb676-33da-42ec-9a8c-9293d545c337 --base64 AQC62PtZIX7TFRAAQJz981zXIvuMoQlRNnrJiQ==

Secret value set

To save effort, read the key from the keyring file in the same command:

virsh secret-set-value --secret 457eb676-33da-42ec-9a8c-9293d545c337 --base64 $(cat /etc/ceph/ceph.client.cinder.keyring |grep key|awk -F ' ' '{print $3}')
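The one-liner above relies on `grep key` matching only the `key = ...` line. A slightly stricter sketch, with a hypothetical helper `keyring_key`, matches the `key` field exactly and fails when no key line is found, so an empty value is not handed to virsh. (The official Ceph guide instead reads the key with `ceph auth get-key client.cinder` on a node with admin access.)

```shell
#!/usr/bin/env bash
# Sketch: print the base64 key from a Ceph keyring file ("key = ..." line).
# keyring_key is a hypothetical helper; exit status 1 means no key line.
set -euo pipefail

keyring_key() {
  # Default awk field splitting handles the tab/space indentation.
  awk '$1 == "key" && $2 == "=" { print $3; found = 1; exit }
       END { if (!found) exit 1 }' "$1"
}
```

Usage: `key="$(keyring_key /etc/ceph/ceph.client.cinder.keyring)" && virsh secret-set-value --secret 457eb676-33da-42ec-9a8c-9293d545c337 --base64 "$key"`.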

restart nova-compute

systemctl restart openstack-nova-compute.service

At this point, restart the service that corresponds to each file modified above.

Run cinder service-list to check whether the ceph backend is up.
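The `cinder service-list` check can be scripted too. A minimal sketch, assuming the usual table layout (`| Binary | Host | Zone | Status | State | ...`) and that the backend appears as `<host>@ceph`, matching the `[ceph]` section enabled via `enabled_backends = ceph`; `ceph_volume_up` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Sketch: read `cinder service-list` output on stdin; succeed only if a
# cinder-volume service whose host ends in "@ceph" has State "up".
# Assumed columns: | Binary | Host | Zone | Status | State | ...
# ceph_volume_up is a hypothetical helper.
set -euo pipefail

ceph_volume_up() {
  awk -F '|' '
    {
      binary = $2; host = $3; state = $6
      gsub(/^ +| +$/, "", binary)
      gsub(/^ +| +$/, "", host)
      gsub(/^ +| +$/, "", state)
      if (binary == "cinder-volume" && host ~ /@ceph$/ && state == "up") ok = 1
    }
    END { exit (ok ? 0 : 1) }
  '
}
```

Usage: `cinder service-list | ceph_volume_up && echo "ceph backend is up"`.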