1. If the required software is not yet installed on your cluster, install it first.

For example, on Ubuntu Linux:

$ sudo apt-get install ssh

$ sudo apt-get install rsync
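To confirm that both tools ended up on the PATH, a small check like the one below can help. This is a sketch; `check_prereqs` is a hypothetical name, and it only checks that the commands exist, not that the SSH server is running.

```shell
# Hypothetical helper: print each command from the argument list that is
# not found on PATH; return 1 if any is missing, 0 otherwise.
check_prereqs() {
  local missing=0
  local cmd
  for cmd in "$@"; do
    if ! command -v "$cmd" >/dev/null 2>&1; then
      echo "$cmd"
      missing=1
    fi
  done
  return "$missing"
}
```

Usage: `check_prereqs ssh rsync || echo "install the commands listed above"`.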

2. To obtain a Hadoop distribution, download the latest stable release from one of the Apache mirror servers.

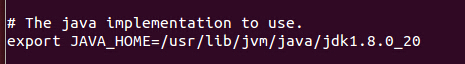
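The download and unpack steps can be scripted. The sketch below assumes version 2.6.0 (the version in the WordCount command of step 6) and the Apache archive layout `https://archive.apache.org/dist/hadoop/common/hadoop-VERSION/hadoop-VERSION.tar.gz`; a nearby mirror may be faster. Both function names are hypothetical, and the install target /usr/local/hadoop is taken from step 5.

```shell
# Hypothetical helper: build the download URL for a Hadoop release, assuming
# the Apache archive layout hadoop/common/hadoop-VERSION/hadoop-VERSION.tar.gz.
hadoop_tarball_url() {
  local version="$1"
  echo "https://archive.apache.org/dist/hadoop/common/hadoop-${version}/hadoop-${version}.tar.gz"
}

# Hypothetical helper: download the tarball, unpack it, move it to
# /usr/local/hadoop (assumed not to exist yet) and give the current user
# ownership so the daemons can write logs there. Needs wget and sudo.
install_hadoop() {
  local version="$1"
  wget "$(hadoop_tarball_url "$version")" &&
    tar -xzf "hadoop-${version}.tar.gz" &&
    sudo mv "hadoop-${version}" /usr/local/hadoop &&
    sudo chown -R "$USER" /usr/local/hadoop
}
```

Usage: `install_hadoop 2.6.0`.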

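3. Unpack the downloaded Hadoop distribution. Edit the conf/hadoop-env.sh file and, at a minimum, set JAVA_HOME to the root directory of your Java installation. (In Hadoop 2.x the configuration files live in etc/hadoop instead of conf.)

4. In the etc/hadoop directory, edit hadoop-env.sh and add the JDK installation directory.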
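The hadoop-env.sh edit can also be done without an editor. This sketch uses a hypothetical function `set_java_home`; the JDK path in the usage line, /usr/lib/jvm/java-7-openjdk-amd64, is only an example, so substitute your own. It rewrites an existing `export JAVA_HOME=` line, or appends one if there is none.

```shell
# Hypothetical helper: point JAVA_HOME in <conf_dir>/hadoop-env.sh at a JDK.
set_java_home() {
  local env_file="$1/hadoop-env.sh"
  local java_dir="$2"
  if grep -q '^export JAVA_HOME=' "$env_file"; then
    # Replace the existing assignment (write to a temp file, then move it back).
    sed "s|^export JAVA_HOME=.*|export JAVA_HOME=${java_dir}|" "$env_file" > "$env_file.tmp" &&
      mv "$env_file.tmp" "$env_file"
  else
    # No assignment yet: append one.
    echo "export JAVA_HOME=${java_dir}" >> "$env_file"
  fi
}
```

Usage: `set_java_home /usr/local/hadoop/etc/hadoop /usr/lib/jvm/java-7-openjdk-amd64`.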

5. Append the following environment variables to the end of /etc/bash.bashrc:

export HADOOP_INSTALL=/usr/local/hadoop

export PATH=$PATH:$HADOOP_INSTALL/bin

export PATH=$PATH:$HADOOP_INSTALL/sbin

export HADOOP_MAPRED_HOME=$HADOOP_INSTALL

export HADOOP_COMMON_HOME=$HADOOP_INSTALL

export HADOOP_HDFS_HOME=$HADOOP_INSTALL

export YARN_HOME=$HADOOP_INSTALL

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_INSTALL/lib/native

export HADOOP_OPTS="-Djava.library.path=$HADOOP_INSTALL/lib"
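Appending these lines by hand twice leaves duplicate entries. A minimal sketch of an idempotent append follows; `append_hadoop_env` is a hypothetical name, and it treats an existing `export HADOOP_INSTALL=` line as a sign that the block is already present.

```shell
# Hypothetical helper: append the Hadoop variables from step 5 to a shell rc
# file, skipping the append if HADOOP_INSTALL is already exported there.
append_hadoop_env() {
  local rc_file="$1"
  if grep -q '^export HADOOP_INSTALL=' "$rc_file" 2>/dev/null; then
    return 0
  fi
  # Quoted 'EOF' keeps $PATH and $HADOOP_INSTALL literal, so they are
  # expanded when the rc file is sourced, not now.
  cat >> "$rc_file" <<'EOF'
export HADOOP_INSTALL=/usr/local/hadoop
export PATH=$PATH:$HADOOP_INSTALL/bin
export PATH=$PATH:$HADOOP_INSTALL/sbin
export HADOOP_MAPRED_HOME=$HADOOP_INSTALL
export HADOOP_COMMON_HOME=$HADOOP_INSTALL
export HADOOP_HDFS_HOME=$HADOOP_INSTALL
export YARN_HOME=$HADOOP_INSTALL
export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_INSTALL/lib/native
export HADOOP_OPTS="-Djava.library.path=$HADOOP_INSTALL/lib"
EOF
}
```

Because /etc/bash.bashrc is owned by root, run this as root. Afterwards, open a new terminal (or run `source /etc/bash.bashrc`) and check that `hadoop version` works.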

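6. Verify the installation by running WordCount, one of the example programs bundled with Hadoop.

Under /usr/local/hadoop, create an input folder and put one or more text files in it.

From the hadoop directory, run bin/hadoop jar share/hadoop/mapreduce/sources/hadoop-mapreduce-examples-2.6.0-sources.jar org.apache.hadoop.examples.WordCount input output

The output directory must not exist before the job runs; the word counts are written to output/part-r-00000.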
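To check the WordCount result, you can compute the same counts without Hadoop. This is a sketch under two assumptions: `local_wordcount` is a hypothetical name, and awk's whitespace splitting only approximates the tokenizer used by the WordCount example (they can differ on unusual whitespace such as `\r`). It prints `word<TAB>count` lines in byte order, the same layout WordCount writes.

```shell
# Hypothetical helper: count words in every file of a directory without
# Hadoop, printing "word<TAB>count" lines sorted by byte order.
local_wordcount() {
  local dir="$1"
  # awk splits each line on runs of blanks; count[] accumulates per word.
  cat "$dir"/* | awk '{ for (i = 1; i <= NF; i++) count[$i]++ }
    END { for (w in count) printf "%s\t%d\n", w, count[w] }' | LC_ALL=C sort
}
```

After the job finishes in this standalone setup, `diff <(local_wordcount input) output/part-r-00000` (bash process substitution) should normally print nothing.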

Hadoop pseudo-distributed configuration

7. Configure core-site.xml, hdfs-site.xml and yarn-site.xml in etc/hadoop as follows:

core-site.xml

<configuration>

<property>

<name>hadoop.tmp.dir</name>

<value>/home/pc/hadoop/hadoopdata</value>

</property>

<property>

<name>fs.defaultFS</name>

<value>hdfs://localhost:9000</value>

</property>

</configuration>
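To double-check what a *-site.xml file actually contains, a rough lookup can help. This sketch assumes, as in the files shown here, that each `<name>` and `<value>` element sits on its own line; `conf_value` is a hypothetical name, and it is not an XML parser. Hadoop itself can report the value it resolves with `hdfs getconf -confKey KEY`.

```shell
# Hypothetical helper: print the <value> of the property whose <name> equals
# KEY in a Hadoop *-site.xml file (line-oriented, not a real XML parser).
conf_value() {
  local file="$1" key="$2"
  awk -v key="$key" '
    /<name>/ {
      n = $0
      sub(/.*<name>[ \t]*/, "", n); sub(/[ \t]*<\/name>.*/, "", n)
      found = (n == key)
    }
    found && /<value>/ {
      v = $0
      sub(/.*<value>[ \t]*/, "", v); sub(/[ \t]*<\/value>.*/, "", v)
      print v
      exit
    }
  ' "$file"
}
```

Usage: `conf_value /usr/local/hadoop/etc/hadoop/core-site.xml hadoop.tmp.dir`.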

yarn-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<!-- Site specific YARN configuration properties -->

</configuration>

hdfs-site.xml

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/home/pc/hadoop/hdfshadoopname</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/home/pc/hadoop/hdfshadoopdata</value>

</property>

<property>

<name>dfs.permissions</name>

<value>false</value>

</property>

</configuration>
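The local directories referenced in core-site.xml and hdfs-site.xml must be writable by the user who starts the daemons. Creating them in advance is one way to surface permission problems before formatting. In this sketch, `make_hadoop_dirs` is a hypothetical name, and /home/pc is the original author's home directory, so pass your own base path.

```shell
# Hypothetical helper: create the directories used for hadoop.tmp.dir,
# dfs.namenode.name.dir and dfs.datanode.data.dir under one base directory.
make_hadoop_dirs() {
  local base="${1:-/home/pc/hadoop}"
  mkdir -p "$base/hadoopdata" "$base/hdfshadoopname" "$base/hdfshadoopdata"
}
```

Usage: `make_hadoop_dirs "$HOME/hadoop"`, run as the user that will start Hadoop, with the XML values adjusted to match.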

8. Add localhost to the masters and slaves files:

sudo gedit /usr/local/hadoop/etc/hadoop/masters and add the line: localhost

sudo gedit /usr/local/hadoop/etc/hadoop/slaves and add the line: localhost
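On a machine without a desktop editor, the two files can be written directly. This sketch uses a hypothetical function `write_host_files`. It overwrites both files, which is fine for the single-node case here.

```shell
# Hypothetical helper: write "localhost" as the only host in the masters
# and slaves files of the given configuration directory.
write_host_files() {
  local conf_dir="$1"
  echo localhost > "$conf_dir/masters"
  echo localhost > "$conf_dir/slaves"
}
```

If the configuration directory is owned by root, run it as root, or write each file with `echo localhost | sudo tee /usr/local/hadoop/etc/hadoop/slaves`.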

9. Initialize (format) the HDFS file system:

bin/hdfs namenode -format

If formatting succeeds, the final lines of output include "Exiting with status 0"; "Exiting with status 1" means an error occurred.
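To check the result from a script instead of reading the log, you can extract the status number. This is a sketch; `format_status` is a hypothetical name, and it relies only on the "Exiting with status N" line described above. The format output is logged to stderr, hence the `2>&1` in the usage line.

```shell
# Hypothetical helper: read "hdfs namenode -format" output on stdin and print
# the N from the last "Exiting with status N" line (empty if none found).
format_status() {
  sed -n 's/.*Exiting with status \([0-9][0-9]*\).*/\1/p' | tail -n 1
}
```

Usage: `[ "$(bin/hdfs namenode -format 2>&1 | format_status)" = 0 ] && echo "format succeeded"`.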

Start Hadoop:

sbin/start-all.sh (deprecated in Hadoop 2.x), or sbin/start-dfs.sh followed by

sbin/start-yarn.sh

Stop Hadoop:

sbin/stop-all.sh, or correspondingly sbin/stop-dfs.sh and sbin/stop-yarn.sh
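After starting, the JDK's `jps` tool lists the running Java processes. A single-node setup like this one would normally show NameNode, DataNode, SecondaryNameNode, ResourceManager and NodeManager. The sketch below (hypothetical name `missing_daemons`) reports which of those five are absent from `jps` output.

```shell
# Hypothetical helper: read `jps` output ("PID Name" per line) on stdin and
# print each expected single-node daemon that does not appear in it.
missing_daemons() {
  local running
  running=$(awk '{ print $2 }')
  local d
  for d in NameNode DataNode SecondaryNameNode ResourceManager NodeManager; do
    if ! printf '%s\n' "$running" | grep -qx "$d"; then
      echo "$d"
    fi
  done
}
```

Usage: `jps | missing_daemons`. Empty output means all five daemons are up. For any name it prints, the matching log under /usr/local/hadoop/logs is the place to look.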

Open the web interfaces in a browser: http://localhost:8088/cluster/scheduler (YARN ResourceManager) and http://localhost:50070 (HDFS NameNode).
