A Kubernetes master node runs the following components:

+ kube-apiserver

+ kube-scheduler

+ kube-controller-manager

+ kube-nginx

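kube-apiserver, kube-scheduler, and kube-controller-manager all run as multiple instances:

- kube-scheduler and kube-controller-manager automatically elect one leader instance while the other instances stay blocked (standby). When the leader fails, a new leader is elected, which keeps the service available;

- kube-apiserver is stateless and is accessed through the kube-nginx proxy, which keeps the service available;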

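## High-availability scheme for kube-apiserver based on an nginx proxy

- kube-controller-manager and kube-scheduler on the control nodes are deployed as multiple instances, so they remain highly available as long as one instance is healthy;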

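- Pods inside the cluster access kube-apiserver through the K8S service domain name kubernetes; kube-dns resolves this name automatically, and the service is backed by the IPs of all kube-apiserver nodes, so this path is highly available as well;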

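- An nginx process runs on every node with all apiserver instances as its backends; nginx performs health checks and load balancing across them;

- kubelet, kube-proxy, controller-manager, and scheduler access kube-apiserver through the local nginx (listening on 127.0.0.1), which makes access to kube-apiserver highly available;

This chapter walks through using nginx layer-4 transparent proxying so that K8S nodes (both master and worker nodes) can reach kube-apiserver with high availability.

Note: unless stated otherwise, all operations in this document are performed on the zhangjun-k8s01 node, which then distributes files and runs commands remotely.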

For the CA certificate, see: https://www.cnblogs.com/deny/p/12259778.html

For the etcd cluster installation, see: https://www.cnblogs.com/deny/p/12260025.html

For the flannel cluster, see: https://www.cnblogs.com/deny/p/12260072.html

For deploying the kubectl command-line tool, see: https://www.cnblogs.com/deny/p/12260515.html

Node information:

+ zhangjun-k8s01: 192.168.1.201
+ zhangjun-k8s02: 192.168.1.202
+ zhangjun-k8s03: 192.168.1.203

The required variables are stored in /opt/k8s/bin/environment.sh:

    #!/usr/bin/bash

    # Encryption key required to generate the EncryptionConfig
    export ENCRYPTION_KEY=$(head -c 32 /dev/urandom | base64)

    # Array of the IPs of all cluster machines
    export NODE_IPS=(192.168.1.201 192.168.1.202 192.168.1.203)

    # Array of the hostnames matching each cluster IP
    export NODE_NAMES=(zhangjun-k8s01 zhangjun-k8s02 zhangjun-k8s03)

    # etcd cluster service endpoint list
    export ETCD_ENDPOINTS="https://192.168.1.201:2379,https://192.168.1.202:2379,https://192.168.1.203:2379"

    # IPs and ports used for communication between etcd cluster members
    export ETCD_NODES="zhangjun-k8s01=https://192.168.1.201:2380,zhangjun-k8s02=https://192.168.1.202:2380,zhangjun-k8s03=https://192.168.1.203:2380"

    # Address and port of the kube-apiserver reverse proxy (kube-nginx)
    export KUBE_APISERVER="https://127.0.0.1:8443"

    # Name of the network interface used for inter-node traffic
    export IFACE="ens33"

    # etcd data directory
    export ETCD_DATA_DIR="/data/k8s/etcd/data"

    # etcd WAL directory; an SSD partition, or a partition on a different disk from ETCD_DATA_DIR, is recommended
    export ETCD_WAL_DIR="/data/k8s/etcd/wal"

    # Data directory for the k8s components
    export K8S_DIR="/data/k8s/k8s"

    # docker data directory
    export DOCKER_DIR="/data/k8s/docker"

    ## The following parameters usually do not need to be changed

    # Token used for TLS Bootstrapping; it can be generated with: head -c 16 /dev/urandom | od -An -t x | tr -d ' '
    BOOTSTRAP_TOKEN="41f7e4ba8b7be874fcff18bf5cf41a7c"

    # Preferably use currently unused network ranges for the service CIDR and the Pod CIDR

    # Service CIDR: not routable before deployment, routable inside the cluster afterwards (guaranteed by kube-proxy)
    SERVICE_CIDR="10.254.0.0/16"

    # Pod CIDR, a /16 range is recommended: not routable before deployment, routable inside the cluster afterwards (guaranteed by flanneld)
    CLUSTER_CIDR="172.30.0.0/16"

    # Service port range (NodePort Range)
    export NODE_PORT_RANGE="30000-32767"

    # flanneld network configuration prefix
    export FLANNEL_ETCD_PREFIX="/kubernetes/network"

    # kubernetes service IP (usually the first IP in SERVICE_CIDR)
    export CLUSTER_KUBERNETES_SVC_IP="10.254.0.1"

    # Cluster DNS service IP (pre-allocated from SERVICE_CIDR)
    export CLUSTER_DNS_SVC_IP="10.254.0.2"

    # Cluster DNS domain (without the trailing dot)
    export CLUSTER_DNS_DOMAIN="cluster.local"

    # Add the binary directory /opt/k8s/bin to PATH
    export PATH=/opt/k8s/bin:$PATH
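The comment next to BOOTSTRAP_TOKEN describes how such a token can be generated. As a rough sketch of that step, the snippet below wraps the same pipeline in a function and checks that the result is 32 lowercase hex characters (16 random bytes). The function names `gen_bootstrap_token` and `is_valid_token` are made up for this illustration and are not part of the original scripts.

```bash
#!/usr/bin/env bash
# Sketch only: helper names are hypothetical, the pipeline is the one
# quoted in the environment.sh comment (with the newline stripped too).

gen_bootstrap_token() {
  # 16 random bytes rendered as hex words, with spaces and newline removed
  head -c 16 /dev/urandom | od -An -t x | tr -d ' \n'
}

is_valid_token() {
  # Succeeds only for exactly 32 lowercase hex characters
  [[ "$1" =~ ^[0-9a-f]{32}$ ]]
}

token=$(gen_bootstrap_token)
if is_valid_token "$token"; then
  echo "token looks well-formed"
else
  echo "unexpected token format: ${token}" >&2
fi
```

A check like this could run before the token is written into environment.sh, so that a truncated or mistyped value is caught early.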

## I. Deploy the master nodes

### 1. Download the binaries (v1.14.2 in this guide)

Download the binary tar file from the [CHANGELOG page](https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG.md) and extract it:

    cd /opt/k8s/work
    wget https://dl.k8s.io/v1.14.2/kubernetes-server-linux-amd64.tar.gz
    tar -xzvf kubernetes-server-linux-amd64.tar.gz
    cd kubernetes
    tar -xzvf kubernetes-src.tar.gz

Other download links:

    wget https://storage.googleapis.com/kubernetes-release/release/v1.14.2/kubernetes-node-linux-amd64.tar.gz
    wget https://storage.googleapis.com/kubernetes-release/release/v1.14.2/kubernetes-server-linux-amd64.tar.gz
    wget https://storage.googleapis.com/kubernetes-release/release/v1.14.2/kubernetes-client-linux-amd64.tar.gz
    wget https://storage.googleapis.com/kubernetes-release/release/v1.14.2/kubernetes.tar.gz

### 2. Copy the binaries to all master nodes

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for node_ip in ${NODE_IPS[@]}
      do
        echo ">>> ${node_ip}"
        scp kubernetes/server/bin/{apiextensions-apiserver,cloud-controller-manager,kube-apiserver,kube-controller-manager,kube-proxy,kube-scheduler,kubeadm,kubectl,kubelet,mounter} root@${node_ip}:/opt/k8s/bin/
        ssh root@${node_ip} "chmod +x /opt/k8s/bin/*"
      done
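The same "loop over NODE_IPS and run something over ssh" pattern recurs throughout this guide. One possible way to factor it out, shown only as a sketch, is a small wrapper with a dry-run mode. `for_each_node` and `DRY_RUN` are hypothetical names introduced here; the node IPs are the ones from environment.sh.

```bash
#!/usr/bin/env bash
# Hypothetical helper: run one remote command per node IP.
# With DRY_RUN=1 (the default here) it only prints what would be executed.

NODE_IPS=(192.168.1.201 192.168.1.202 192.168.1.203)
DRY_RUN=${DRY_RUN:-1}

for_each_node() {
  local node_ip
  for node_ip in "${NODE_IPS[@]}"; do
    echo ">>> ${node_ip}"
    if [[ "${DRY_RUN}" == "1" ]]; then
      # Print the command instead of running it
      echo "ssh root@${node_ip} $*"
    else
      ssh "root@${node_ip}" "$@"
    fi
  done
}

# Dry run of the chmod step shown above
for_each_node "chmod +x /opt/k8s/bin/*"
```

Running with `DRY_RUN=0` would execute the command on every node; the dry run is a cheap way to review what is about to be sent to each machine.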

## II. Install and configure the nginx proxy

### 1. Download and compile nginx

    yum install -y gcc gcc-c++ make

    cd /opt/k8s/work
    wget http://nginx.org/download/nginx-1.15.3.tar.gz
    tar -xzvf nginx-1.15.3.tar.gz
    cd /opt/k8s/work/nginx-1.15.3
    mkdir nginx-prefix
    ./configure --with-stream --without-http --prefix=$(pwd)/nginx-prefix --without-http_uwsgi_module --without-http_scgi_module --without-http_fastcgi_module

    make && make install

- `--with-stream`: enables layer-4 transparent forwarding (TCP proxy);

- `--without-xxx`: disables all other features, so that the resulting dynamically linked binary has minimal dependencies;

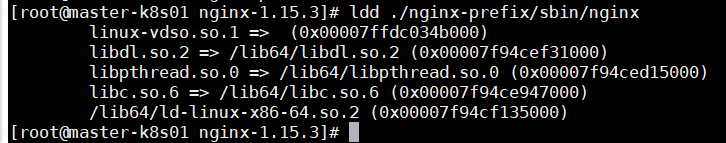

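Check the libraries that nginx links against dynamically:

    cd /opt/k8s/work/nginx-1.15.3
    ldd ./nginx-prefix/sbin/nginx

- Because only layer-4 transparent forwarding is enabled, the binary depends only on core operating-system libraries such as libc and not on others (such as libz or libssl), which makes it easy to deploy across operating-system versions.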

### 2. Install and deploy nginx

#### 1) Create the directory structure

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for node_ip in ${NODE_IPS[@]}
      do
        echo ">>> ${node_ip}"
        ssh root@${node_ip} "mkdir -p /opt/k8s/kube-nginx/{conf,logs,sbin}"
      done

#### 2) Copy the binary

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for node_ip in ${NODE_IPS[@]}
      do
        echo ">>> ${node_ip}"
        ssh root@${node_ip} "mkdir -p /opt/k8s/kube-nginx/{conf,logs,sbin}"
        scp /opt/k8s/work/nginx-1.15.3/nginx-prefix/sbin/nginx root@${node_ip}:/opt/k8s/kube-nginx/sbin/kube-nginx
        ssh root@${node_ip} "chmod a+x /opt/k8s/kube-nginx/sbin/*"
      done

- The binary is renamed to kube-nginx.

#### 3) Configure nginx to enable layer-4 transparent forwarding

    cd /opt/k8s/work
    cat > kube-nginx.conf << \EOF
    worker_processes 1;

    events {
        worker_connections  1024;
    }

    stream {
        upstream backend {
            hash $remote_addr consistent;
            server 192.168.1.201:6443        max_fails=3 fail_timeout=30s;
            server 192.168.1.202:6443        max_fails=3 fail_timeout=30s;
            server 192.168.1.203:6443        max_fails=3 fail_timeout=30s;
        }

        server {
            listen 127.0.0.1:8443;
            proxy_connect_timeout 1s;
            proxy_pass backend;
        }
    }
    EOF

- The heredoc delimiter is quoted (`\EOF`) so that the shell writes `$remote_addr` literally instead of expanding it to an empty string;

- Replace the server list in backend according to the actual kube-apiserver instances of your cluster.
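Since the backend server list has to match the actual apiserver instances, it can be derived from the node IP array rather than typed by hand. The following is a minimal sketch under that assumption; `gen_upstream_servers` is a hypothetical helper, and the port and `max_fails`/`fail_timeout` values simply mirror the configuration above.

```bash
#!/usr/bin/env bash
# Sketch: print one nginx upstream "server" line per apiserver IP.
# gen_upstream_servers is a hypothetical helper, not part of the guide.

NODE_IPS=(192.168.1.201 192.168.1.202 192.168.1.203)

gen_upstream_servers() {
  local port=$1
  shift
  local ip
  for ip in "$@"; do
    printf '        server %s:%s max_fails=3 fail_timeout=30s;\n' "$ip" "$port"
  done
}

# Emit the lines that would go inside "upstream backend { ... }"
gen_upstream_servers 6443 "${NODE_IPS[@]}"
```

The output can be pasted into the `upstream backend` block, or the function can be called while assembling kube-nginx.conf.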

#### 4) Distribute the configuration file

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for node_ip in ${NODE_IPS[@]}
      do
        echo ">>> ${node_ip}"
        scp kube-nginx.conf root@${node_ip}:/opt/k8s/kube-nginx/conf/kube-nginx.conf
      done

#### 5) Configure the systemd unit file

Create the kube-nginx systemd unit file:

    cd /opt/k8s/work
    cat > kube-nginx.service <<EOF
    [Unit]
    Description=kube-apiserver nginx proxy
    After=network.target
    After=network-online.target
    Wants=network-online.target

    [Service]
    Type=forking
    ExecStartPre=/opt/k8s/kube-nginx/sbin/kube-nginx -c /opt/k8s/kube-nginx/conf/kube-nginx.conf -p /opt/k8s/kube-nginx -t
    ExecStart=/opt/k8s/kube-nginx/sbin/kube-nginx -c /opt/k8s/kube-nginx/conf/kube-nginx.conf -p /opt/k8s/kube-nginx
    ExecReload=/opt/k8s/kube-nginx/sbin/kube-nginx -c /opt/k8s/kube-nginx/conf/kube-nginx.conf -p /opt/k8s/kube-nginx -s reload
    PrivateTmp=true
    Restart=always
    RestartSec=5
    StartLimitInterval=0
    LimitNOFILE=65536

    [Install]
    WantedBy=multi-user.target
    EOF

#### 6) Distribute the systemd unit file

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for node_ip in ${NODE_IPS[@]}
      do
        echo ">>> ${node_ip}"
        scp kube-nginx.service root@${node_ip}:/etc/systemd/system/
      done

#### 7) Start the kube-nginx service

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for node_ip in ${NODE_IPS[@]}
      do
        echo ">>> ${node_ip}"
        ssh root@${node_ip} "systemctl daemon-reload && systemctl enable kube-nginx && systemctl restart kube-nginx"
      done

#### 8) Check the running status of the kube-nginx service

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for node_ip in ${NODE_IPS[@]}
      do
        echo ">>> ${node_ip}"
        ssh root@${node_ip} "systemctl status kube-nginx |grep 'Active:'"
      done

- Make sure the status is `active (running)`; otherwise inspect the log with `journalctl -u kube-nginx` to find the cause.
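The status check above is read by eye. If it needs to be scripted, the `Active:` line can be tested directly. This is only a sketch: `is_active_running` is a hypothetical helper, and the sample status lines are invented for the illustration rather than captured from a real node.

```bash
#!/usr/bin/env bash
# Sketch: decide from the "Active:" line of `systemctl status` output
# whether a unit is running. The sample lines below are made-up examples.

is_active_running() {
  # Reads status text on stdin; succeeds if it reports active (running)
  grep -q 'Active: active (running)'
}

sample_ok='   Active: active (running) since Tue 2020-02-04 10:00:00 CST; 5s ago'
sample_bad='   Active: failed (Result: exit-code) since Tue 2020-02-04 10:00:00 CST; 5s ago'

for line in "$sample_ok" "$sample_bad"; do
  if printf '%s\n' "$line" | is_active_running; then
    echo "running"
  else
    echo "not running, check journalctl"
  fi
done
```

On a real node the input would come from `systemctl status kube-nginx` piped into the function.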

## III. Deploy a highly available kube-apiserver cluster

### 1. Create the kubernetes certificate and private key

#### 1) Create the certificate signing request

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    cat > kubernetes-csr.json <<EOF
    {
      "CN": "kubernetes",
      "hosts": [
        "127.0.0.1",
        "192.168.1.201",
        "192.168.1.202",
        "192.168.1.203",
        "${CLUSTER_KUBERNETES_SVC_IP}",
        "kubernetes",
        "kubernetes.default",
        "kubernetes.default.svc",
        "kubernetes.default.svc.cluster",
        "kubernetes.default.svc.cluster.local."
      ],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "ST": "BeiJing",
          "L": "BeiJing",
          "O": "k8s",
          "OU": "4Paradigm"
        }
      ]
    }
    EOF

- The hosts field lists the IPs and domain names authorized to use this certificate; here it contains the master node IPs plus the IP and domain names of the kubernetes service;

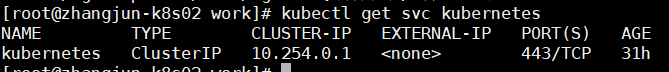

- The kubernetes service IP is created automatically by the apiserver and is usually the first IP of the range given by `--service-cluster-ip-range`. It can be retrieved later with `kubectl get svc kubernetes`.
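To make the "first IP of the service range" rule concrete, here is a small sketch that computes it from a CIDR in plain bash arithmetic. `first_service_ip` is a hypothetical helper written for this illustration; it assumes an IPv4 CIDR such as the SERVICE_CIDR value from environment.sh.

```bash
#!/usr/bin/env bash
# Sketch: compute network address + 1 for an IPv4 CIDR.
# first_service_ip is a hypothetical helper, not a kubectl feature.

first_service_ip() {
  local cidr=$1
  local base=${cidr%/*}      # e.g. 10.254.0.0
  local prefix=${cidr#*/}    # e.g. 16
  local o1 o2 o3 o4
  IFS=. read -r o1 o2 o3 o4 <<< "$base"

  # Pack the four octets into one 32-bit integer
  local ip=$(( (o1 << 24) | (o2 << 16) | (o3 << 8) | o4 ))
  # Build the netmask for the prefix length
  local mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  # Network address plus one
  local first=$(( (ip & mask) + 1 ))

  printf '%d.%d.%d.%d\n' \
    $(( (first >> 24) & 255 )) $(( (first >> 16) & 255 )) \
    $(( (first >> 8) & 255 ))  $(( first & 255 ))
}

first_service_ip "10.254.0.0/16"
```

For the SERVICE_CIDR used in this guide the result matches CLUSTER_KUBERNETES_SVC_IP, which is why that address appears in the certificate's hosts list.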

#### 2) Generate the certificate and private key

    cfssl gencert -ca=/opt/k8s/work/ca.pem \
      -ca-key=/opt/k8s/work/ca-key.pem \
      -config=/opt/k8s/work/ca-config.json \
      -profile=kubernetes kubernetes-csr.json | cfssljson -bare kubernetes
    ls kubernetes*pem

#### 3) Copy the generated certificate and private key to all master nodes

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for node_ip in ${NODE_IPS[@]}
      do
        echo ">>> ${node_ip}"
        ssh root@${node_ip} "mkdir -p /etc/kubernetes/cert"
        scp kubernetes*.pem root@${node_ip}:/etc/kubernetes/cert/
      done

### 2. Create the encryption configuration file

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    cat > encryption-config.yaml <<EOF
    kind: EncryptionConfig
    apiVersion: v1
    resources:
      - resources:
          - secrets
        providers:
          - aescbc:
              keys:
                - name: key1
                  secret: ${ENCRYPTION_KEY}
          - identity: {}
    EOF
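environment.sh derives ENCRYPTION_KEY from 32 random bytes encoded as base64. Before writing the key into encryption-config.yaml it may be worth confirming that it decodes back to 32 bytes. The sketch below assumes GNU coreutils `base64 -d`; `check_encryption_key` is a hypothetical name.

```bash
#!/usr/bin/env bash
# Sketch: verify that a base64 string decodes to exactly 32 bytes,
# the key length produced by `head -c 32 /dev/urandom | base64`.
# Assumes GNU coreutils base64 (-d to decode).

check_encryption_key() {
  local key=$1 len
  len=$(printf '%s' "$key" | base64 -d 2>/dev/null | wc -c)
  [[ $((len)) -eq 32 ]]
}

ENCRYPTION_KEY=$(head -c 32 /dev/urandom | base64)
if check_encryption_key "$ENCRYPTION_KEY"; then
  echo "ENCRYPTION_KEY decodes to 32 bytes"
else
  echo "ENCRYPTION_KEY has an unexpected length" >&2
fi
```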

Copy the encryption configuration file to the `/etc/kubernetes` directory on the master nodes:

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for node_ip in ${NODE_IPS[@]}
      do
        echo ">>> ${node_ip}"
        scp encryption-config.yaml root@${node_ip}:/etc/kubernetes/
      done

### 3. Create the audit policy file

#### 1) Create the audit policy file

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    cat > audit-policy.yaml <<EOF
    apiVersion: audit.k8s.io/v1beta1
    kind: Policy
    rules:
      # The following requests were manually identified as high-volume and low-risk, so drop them.
      - level: None
        resources:
          - group: ""
            resources:
              - endpoints
              - services
              - services/status
        users:
          - 'system:kube-proxy'
        verbs:
          - watch

      - level: None
        resources:
          - group: ""
            resources:
              - nodes
              - nodes/status
        userGroups:
          - 'system:nodes'
        verbs:
          - get

      - level: None
        namespaces:
          - kube-system
        resources:
          - group: ""
            resources:
              - endpoints
        users:
          - 'system:kube-controller-manager'
          - 'system:kube-scheduler'
          - 'system:serviceaccount:kube-system:endpoint-controller'
        verbs:
          - get
          - update

      - level: None
        resources:
          - group: ""
            resources:
              - namespaces
              - namespaces/status
              - namespaces/finalize
        users:
          - 'system:apiserver'
        verbs:
          - get

      # Don't log HPA fetching metrics.
      - level: None
        resources:
          - group: metrics.k8s.io
        users:
          - 'system:kube-controller-manager'
        verbs:
          - get
          - list

      # Don't log these read-only URLs.
      - level: None
        nonResourceURLs:
          - '/healthz*'
          - /version
          - '/swagger*'

      # Don't log events requests.
      - level: None
        resources:
          - group: ""
            resources:
              - events

      # node and pod status calls from nodes are high-volume and can be large, don't log responses for expected updates from nodes
      - level: Request
        omitStages:
          - RequestReceived
        resources:
          - group: ""
            resources:
              - nodes/status
              - pods/status
        users:
          - kubelet
          - 'system:node-problem-detector'
          - 'system:serviceaccount:kube-system:node-problem-detector'
        verbs:
          - update
          - patch

      - level: Request
        omitStages:
          - RequestReceived
        resources:
          - group: ""
            resources:
              - nodes/status
              - pods/status
        userGroups:
          - 'system:nodes'
        verbs:
          - update
          - patch

      # deletecollection calls can be large, don't log responses for expected namespace deletions
      - level: Request
        omitStages:
          - RequestReceived
        users:
          - 'system:serviceaccount:kube-system:namespace-controller'
        verbs:
          - deletecollection

      # Secrets, ConfigMaps, and TokenReviews can contain sensitive & binary data,
      # so only log at the Metadata level.
      - level: Metadata
        omitStages:
          - RequestReceived
        resources:
          - group: ""
            resources:
              - secrets
              - configmaps
          - group: authentication.k8s.io
            resources:
              - tokenreviews

      # Get responses can be large; skip them.
      - level: Request
        omitStages:
          - RequestReceived
        resources:
          - group: ""
          - group: admissionregistration.k8s.io
          - group: apiextensions.k8s.io
          - group: apiregistration.k8s.io
          - group: apps
          - group: authentication.k8s.io
          - group: authorization.k8s.io
          - group: autoscaling
          - group: batch
          - group: certificates.k8s.io
          - group: extensions
          - group: metrics.k8s.io
          - group: networking.k8s.io
          - group: policy
          - group: rbac.authorization.k8s.io
          - group: scheduling.k8s.io
          - group: settings.k8s.io
          - group: storage.k8s.io
        verbs:
          - get
          - list
          - watch

      # Default level for known APIs
      - level: RequestResponse
        omitStages:
          - RequestReceived
        resources:
          - group: ""
          - group: admissionregistration.k8s.io
          - group: apiextensions.k8s.io
          - group: apiregistration.k8s.io
          - group: apps
          - group: authentication.k8s.io
          - group: authorization.k8s.io
          - group: autoscaling
          - group: batch
          - group: certificates.k8s.io
          - group: extensions
          - group: metrics.k8s.io
          - group: networking.k8s.io
          - group: policy
          - group: rbac.authorization.k8s.io
          - group: scheduling.k8s.io
          - group: settings.k8s.io
          - group: storage.k8s.io

      # Default level for all other requests.
      - level: Metadata
        omitStages:
          - RequestReceived
    EOF

#### 2) Distribute the audit policy file

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for node_ip in ${NODE_IPS[@]}
      do
        echo ">>> ${node_ip}"
        scp audit-policy.yaml root@${node_ip}:/etc/kubernetes/audit-policy.yaml
      done

### 4. Create the certificate used later to access metrics-server

#### 1) Create the certificate signing request

    cat > proxy-client-csr.json <<EOF
    {
      "CN": "aggregator",
      "hosts": [],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "ST": "BeiJing",
          "L": "BeiJing",
          "O": "k8s",
          "OU": "4Paradigm"
        }
      ]
    }
    EOF

- The CN must appear in the `--requestheader-allowed-names` flag of kube-apiserver; otherwise later metrics requests fail with a permission error.

#### 2) Generate the certificate and private key

    cfssl gencert -ca=/etc/kubernetes/cert/ca.pem \
      -ca-key=/etc/kubernetes/cert/ca-key.pem \
      -config=/etc/kubernetes/cert/ca-config.json \
      -profile=kubernetes proxy-client-csr.json | cfssljson -bare proxy-client
    ls proxy-client*.pem

#### 3) Copy the generated certificate and private key to all master nodes

    source /opt/k8s/bin/environment.sh
    for node_ip in ${NODE_IPS[@]}
      do
        echo ">>> ${node_ip}"
        scp proxy-client*.pem root@${node_ip}:/etc/kubernetes/cert/
      done

### 5. Create the kube-apiserver systemd files

#### 1) Create the kube-apiserver systemd unit template file

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    cat > kube-apiserver.service.template <<EOF
    [Unit]
    Description=Kubernetes API Server
    Documentation=https://github.com/GoogleCloudPlatform/kubernetes
    After=network.target

    [Service]
    WorkingDirectory=${K8S_DIR}/kube-apiserver
    ExecStart=/opt/k8s/bin/kube-apiserver \\
      --advertise-address=##NODE_IP## \\
      --default-not-ready-toleration-seconds=360 \\
      --default-unreachable-toleration-seconds=360 \\
      --feature-gates=DynamicAuditing=true \\
      --max-mutating-requests-inflight=2000 \\
      --max-requests-inflight=4000 \\
      --default-watch-cache-size=200 \\
      --delete-collection-workers=2 \\
      --encryption-provider-config=/etc/kubernetes/encryption-config.yaml \\
      --etcd-cafile=/etc/kubernetes/cert/ca.pem \\
      --etcd-certfile=/etc/kubernetes/cert/kubernetes.pem \\
      --etcd-keyfile=/etc/kubernetes/cert/kubernetes-key.pem \\
      --etcd-servers=${ETCD_ENDPOINTS} \\
      --bind-address=##NODE_IP## \\
      --secure-port=6443 \\
      --tls-cert-file=/etc/kubernetes/cert/kubernetes.pem \\
      --tls-private-key-file=/etc/kubernetes/cert/kubernetes-key.pem \\
      --insecure-port=0 \\
      --audit-dynamic-configuration \\
      --audit-log-maxage=15 \\
      --audit-log-maxbackup=3 \\
      --audit-log-maxsize=100 \\
      --audit-log-truncate-enabled \\
      --audit-log-path=${K8S_DIR}/kube-apiserver/audit.log \\
      --audit-policy-file=/etc/kubernetes/audit-policy.yaml \\
      --profiling \\
      --anonymous-auth=false \\
      --client-ca-file=/etc/kubernetes/cert/ca.pem \\
      --enable-bootstrap-token-auth \\
      --requestheader-allowed-names="aggregator" \\
      --requestheader-client-ca-file=/etc/kubernetes/cert/ca.pem \\
      --requestheader-extra-headers-prefix="X-Remote-Extra-" \\
      --requestheader-group-headers=X-Remote-Group \\
      --requestheader-username-headers=X-Remote-User \\
      --service-account-key-file=/etc/kubernetes/cert/ca.pem \\
      --authorization-mode=Node,RBAC \\
      --runtime-config=api/all=true \\
      --enable-admission-plugins=NodeRestriction \\
      --allow-privileged=true \\
      --apiserver-count=3 \\
      --event-ttl=168h \\
      --kubelet-certificate-authority=/etc/kubernetes/cert/ca.pem \\
      --kubelet-client-certificate=/etc/kubernetes/cert/kubernetes.pem \\
      --kubelet-client-key=/etc/kubernetes/cert/kubernetes-key.pem \\
      --kubelet-https=true \\
      --kubelet-timeout=10s \\
      --proxy-client-cert-file=/etc/kubernetes/cert/proxy-client.pem \\
      --proxy-client-key-file=/etc/kubernetes/cert/proxy-client-key.pem \\
      --service-cluster-ip-range=${SERVICE_CIDR} \\
      --service-node-port-range=${NODE_PORT_RANGE} \\
      --logtostderr=true \\
      --v=2
    Restart=on-failure
    RestartSec=10
    Type=notify
    LimitNOFILE=65536

    [Install]
    WantedBy=multi-user.target
    EOF

- Inside the unquoted heredoc, `\\` is written so that a single `\` line continuation ends up in the generated unit file;

- `--advertise-address`: the IP that the apiserver advertises (the backend node IP of the kubernetes service);

- `--default-*-toleration-seconds`: thresholds related to node failures;

- `--max-*-requests-inflight`: maximum thresholds for in-flight requests;

- `--etcd-*`: certificates for accessing etcd and the etcd server addresses;

- `--encryption-provider-config`: the configuration used to encrypt secrets stored in etcd;

- `--bind-address`: the IP that https listens on; it must not be `127.0.0.1`, otherwise the secure port 6443 cannot be reached from outside;

- `--secure-port`: the https listening port;

- `--insecure-port=0`: disables the insecure http port (8080);

- `--tls-*-file`: the certificate and private key used by the apiserver;

- `--audit-*`: parameters for the audit policy and the audit log files;

- `--client-ca-file`: verifies the certificates presented by clients (kube-controller-manager, kube-scheduler, kubelet, kube-proxy, etc.);

- `--enable-bootstrap-token-auth`: enables token authentication for kubelet bootstrap;

- `--requestheader-*`: parameters for the kube-apiserver aggregator layer, needed by proxy-client and HPA;

- `--requestheader-client-ca-file`: the CA that signed the certificate specified by `--proxy-client-cert-file` and `--proxy-client-key-file`; used when the metric aggregator is enabled;

- `--requestheader-allowed-names`: must not be empty; a comma-separated list of CN names of the `--proxy-client-cert-file` certificate, set to "aggregator" here;

- `--service-account-key-file`: the public key file used to verify ServiceAccount tokens; it is paired with the private key file given by `--service-account-private-key-file` of kube-controller-manager;

- `--runtime-config=api/all=true`: enables all API versions, such as autoscaling/v2alpha1;

- `--authorization-mode=Node,RBAC`, `--anonymous-auth=false`: enables the Node and RBAC authorization modes and rejects unauthorized requests;

- `--enable-admission-plugins`: enables some plugins that are disabled by default;

- `--allow-privileged`: allows running privileged containers;

- `--apiserver-count=3`: the number of apiserver instances;

- `--event-ttl`: how long events are retained;

- `--kubelet-*`: when specified, the kubelet APIs are accessed over https; RBAC rules must be defined for the user of the certificate (the user of the kubernetes*.pem certificate above is kubernetes), otherwise access to the kubelet API is reported as unauthorized;

- `--proxy-client-*`: the certificate the apiserver uses to access metrics-server;

- `--service-cluster-ip-range`: the Service Cluster IP range;

- `--service-node-port-range`: the NodePort port range;

If the kube-apiserver machine does **not** run kube-proxy, the `--enable-aggregator-routing=true` flag must be added as well;

Notes:

1. The CA certificate specified by requestheader-client-ca-file must have both client auth and server auth usages;

2. If `--requestheader-allowed-names` is not empty and the CN of the `--proxy-client-cert-file` certificate is not among the allowed names, later requests for node or pod metrics fail with:

       [root@zhangjun-k8s01 1.8+]# kubectl top nodes
       Error from server (Forbidden): nodes.metrics.k8s.io is forbidden: User "aggregator" cannot list resource "nodes" in API group "metrics.k8s.io" at the cluster scope
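The condition in note 2 can be spelled out as a small check: a CN passes if the allowed-names list is empty or contains it. The sketch below follows that condition as stated; `cn_in_allowed_names` is a hypothetical helper and does not inspect any real certificate.

```bash
#!/usr/bin/env bash
# Sketch of the rule in note 2: the CN must be in the comma-separated
# allowed-names list unless that list is empty.
# cn_in_allowed_names is a hypothetical helper for illustration only.

cn_in_allowed_names() {
  local cn=$1 allowed=$2
  # Empty list: the "not empty" precondition of the note does not hold
  [[ -z "$allowed" ]] && return 0

  local names name
  IFS=, read -ra names <<< "$allowed"
  for name in "${names[@]}"; do
    [[ "$name" == "$cn" ]] && return 0
  done
  return 1
}

if cn_in_allowed_names "aggregator" "aggregator"; then
  echo "CN aggregator is allowed"
fi
```

In practice the CN would be read from proxy-client.pem and the list from the `--requestheader-allowed-names` value in the unit file.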

#### 2) Create the kube-apiserver systemd unit file for each node

Substitute the variables in the template file to generate a systemd unit file for each node:

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for (( i=0; i < 3; i++ ))
      do
        sed -e "s/##NODE_NAME##/${NODE_NAMES[i]}/" -e "s/##NODE_IP##/${NODE_IPS[i]}/" kube-apiserver.service.template > kube-apiserver-${NODE_IPS[i]}.service
      done
    ls kube-apiserver*.service

- NODE_NAMES and NODE_IPS are bash arrays of the same length, holding the node names and the corresponding IPs.
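Because the loop indexes both arrays with the same counter, a length mismatch would silently produce wrong or empty substitutions. A guard along the following lines could run first; `check_node_arrays` is a hypothetical name, and the arrays repeat the values from environment.sh.

```bash
#!/usr/bin/env bash
# Sketch: verify that NODE_IPS and NODE_NAMES have the same length
# before pairing them by index. check_node_arrays is hypothetical.

NODE_IPS=(192.168.1.201 192.168.1.202 192.168.1.203)
NODE_NAMES=(zhangjun-k8s01 zhangjun-k8s02 zhangjun-k8s03)

check_node_arrays() {
  if (( ${#NODE_IPS[@]} != ${#NODE_NAMES[@]} )); then
    echo "NODE_IPS has ${#NODE_IPS[@]} entries but NODE_NAMES has ${#NODE_NAMES[@]}" >&2
    return 1
  fi
}

if check_node_arrays; then
  # Iterate over the real array length instead of a hard-coded 3
  for (( i=0; i < ${#NODE_IPS[@]}; i++ )); do
    echo "${NODE_NAMES[i]} -> ${NODE_IPS[i]}"
  done
fi
```

Looping up to `${#NODE_IPS[@]}` also avoids having to edit the loop bound when nodes are added.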

#### 3) Distribute the generated systemd unit files

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for node_ip in ${NODE_IPS[@]}
      do
        echo ">>> ${node_ip}"
        scp kube-apiserver-${node_ip}.service root@${node_ip}:/etc/systemd/system/kube-apiserver.service
      done

- The file is renamed to kube-apiserver.service.

#### 4) Start the kube-apiserver service

    source /opt/k8s/bin/environment.sh
    for node_ip in ${NODE_IPS[@]}
      do
        echo ">>> ${node_ip}"
        ssh root@${node_ip} "mkdir -p ${K8S_DIR}/kube-apiserver"
        ssh root@${node_ip} "systemctl daemon-reload && systemctl enable kube-apiserver && systemctl restart kube-apiserver"
      done

- The working directory must be created before the service is started.

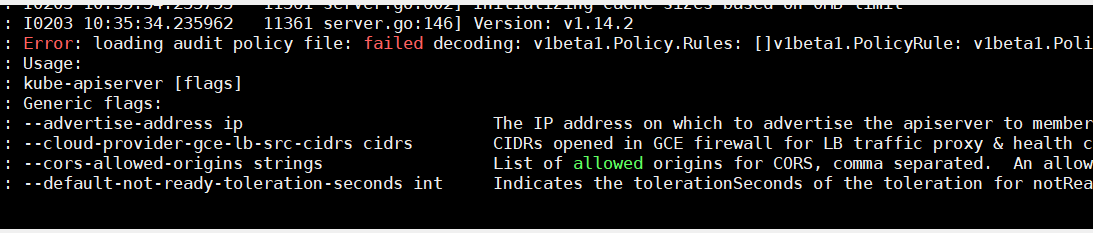

The service may fail to start. One possible cause is that the kube-apiserver machine does not run kube-proxy, in which case the `--enable-aggregator-routing=true` flag also has to be added to kube-apiserver.service;

If it still fails, audit-policy.yaml can be replaced with the following:

    # Log all requests at the Metadata level.
    apiVersion: audit.k8s.io/v1
    kind: Policy
    rules:
    - level: Metadata

#### 5) Check the running status of kube-apiserver

    source /opt/k8s/bin/environment.sh
    for node_ip in ${NODE_IPS[@]}
      do
        echo ">>> ${node_ip}"
        ssh root@${node_ip} "systemctl status kube-apiserver |grep 'Active:'"
      done

Make sure the status is `active (running)`; otherwise inspect the log with `journalctl -u kube-apiserver` to find the cause.

### 6. Check the status

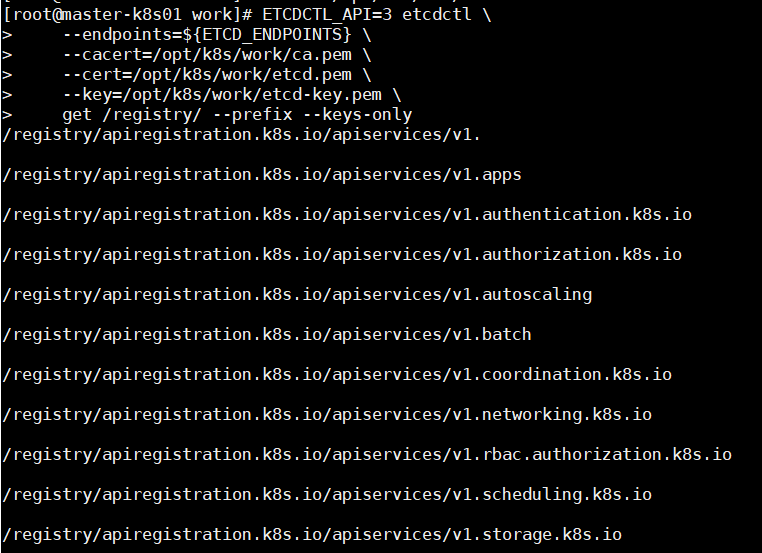

#### 1) Print the data that kube-apiserver has written to etcd

    source /opt/k8s/bin/environment.sh
    ETCDCTL_API=3 etcdctl \
      --endpoints=${ETCD_ENDPOINTS} \
      --cacert=/opt/k8s/work/ca.pem \
      --cert=/opt/k8s/work/etcd.pem \
      --key=/opt/k8s/work/etcd-key.pem \
      get /registry/ --prefix --keys-only

#### 2) Check the cluster information

``` bash
$ kubectl cluster-info
Kubernetes master is running at https://127.0.0.1:8443

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

$ kubectl get all --all-namespaces
NAMESPACE   NAME                 TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)   AGE
default     service/kubernetes   ClusterIP   10.254.0.1   <none>        443/TCP   12m

$ kubectl get componentstatuses
NAME                 STATUS      MESSAGE                                                                                     ERROR
controller-manager   Unhealthy   Get http://127.0.0.1:10252/healthz: dial tcp 127.0.0.1:10252: connect: connection refused
scheduler            Unhealthy   Get http://127.0.0.1:10251/healthz: dial tcp 127.0.0.1:10251: connect: connection refused
etcd-0               Healthy     {"health":"true"}
etcd-2               Healthy     {"health":"true"}
etcd-1               Healthy     {"health":"true"}
```

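1. If a kubectl command prints the following error, the `~/.kube/config` file in use is wrong; switch to the correct account and run the command again:

`The connection to the server localhost:8080 was refused - did you specify the right host or port?`

2. When `kubectl get componentstatuses` runs, the apiserver sends its requests to 127.0.0.1 by default. When controller-manager and scheduler run in cluster mode, they may **not be on the same machine** as kube-apiserver; their status is then reported as Unhealthy even though they are actually working **normally**.

#### 3) Check the ports kube-apiserver listens on

    [root@zhangjun-k8s02 work]# netstat -lnpt|grep kube-ap
    tcp        0      0 192.168.1.202:6443      0.0.0.0:*               LISTEN      903/kube-apiserver

- 6443: the secure port that receives https requests; every request is authenticated and authorized;

- Because the insecure port is disabled, 8080 is not listened on;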

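### 7. Grant kube-apiserver permission to access the kubelet API

When commands such as kubectl exec, run, and logs are executed, the apiserver forwards the request to the https port of the kubelet. The RBAC rule below authorizes the user name of the certificate used by the apiserver (kubernetes.pem, CN: kubernetes) to access the kubelet API:

    kubectl create clusterrolebinding kube-apiserver:kubelet-apis --clusterrole=system:kubelet-api-admin --user kubernetes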

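## IV. Deploy a highly available kube-controller-manager cluster

This chapter describes how to deploy a highly available kube-controller-manager cluster.

The cluster has 3 nodes. After startup, a leader node is chosen through competitive election while the other nodes stay blocked. When the leader becomes unavailable, the blocked nodes hold another election to choose a new leader, which keeps the service available.

To secure communication, this document first generates an x509 certificate and private key. kube-controller-manager uses the certificate in the following two cases:

- Communicating with the secure port of kube-apiserver;

- Serving prometheus-format metrics on the **secure port** (https, 10252);

Note: unless stated otherwise, all operations in this document are **performed on the zhangjun-k8s01 node**, which then distributes files and runs commands remotely.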

### 1. Create the kube-controller-manager certificate and private key

#### 1) Create the certificate signing request

    cd /opt/k8s/work
    cat > kube-controller-manager-csr.json <<EOF
    {
        "CN": "system:kube-controller-manager",
        "key": {
            "algo": "rsa",
            "size": 2048
        },
        "hosts": [
          "127.0.0.1",
          "192.168.1.201",
          "192.168.1.202",
          "192.168.1.203"
        ],
        "names": [
          {
            "C": "CN",
            "ST": "BeiJing",
            "L": "BeiJing",
            "O": "system:kube-controller-manager",
            "OU": "4Paradigm"
          }
        ]
    }
    EOF

- The hosts list contains the IPs of **all** kube-controller-manager nodes;

- CN and O are both `system:kube-controller-manager`; the built-in Kubernetes ClusterRoleBinding `system:kube-controller-manager` grants kube-controller-manager the permissions it needs.

#### 2) Generate the certificate and private key

    cd /opt/k8s/work
    cfssl gencert -ca=/opt/k8s/work/ca.pem \
      -ca-key=/opt/k8s/work/ca-key.pem \
      -config=/opt/k8s/work/ca-config.json \
      -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager
    ls kube-controller-manager*pem

#### 3) Distribute the generated certificate and private key to all master nodes

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for node_ip in ${NODE_IPS[@]}
      do
        echo ">>> ${node_ip}"
        scp kube-controller-manager*.pem root@${node_ip}:/etc/kubernetes/cert/
      done

### 2. Create and distribute the kubeconfig file

kube-controller-manager uses a kubeconfig file to access the apiserver. The file provides the apiserver address, the embedded CA certificate, and the kube-controller-manager certificate:

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    kubectl config set-cluster kubernetes \
      --certificate-authority=/opt/k8s/work/ca.pem \
      --embed-certs=true \
      --server=${KUBE_APISERVER} \
      --kubeconfig=kube-controller-manager.kubeconfig

    kubectl config set-credentials system:kube-controller-manager \
      --client-certificate=kube-controller-manager.pem \
      --client-key=kube-controller-manager-key.pem \
      --embed-certs=true \
      --kubeconfig=kube-controller-manager.kubeconfig

    kubectl config set-context system:kube-controller-manager \
      --cluster=kubernetes \
      --user=system:kube-controller-manager \
      --kubeconfig=kube-controller-manager.kubeconfig

    kubectl config use-context system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

Distribute the kubeconfig to all master nodes:

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for node_ip in ${NODE_IPS[@]}
      do
        echo ">>> ${node_ip}"
        scp kube-controller-manager.kubeconfig root@${node_ip}:/etc/kubernetes/
      done

### 3. Configure the kube-controller-manager systemd unit

#### 1) Create the kube-controller-manager systemd unit template file

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    cat > kube-controller-manager.service.template <<EOF
    [Unit]
    Description=Kubernetes Controller Manager
    Documentation=https://github.com/GoogleCloudPlatform/kubernetes

    [Service]
    WorkingDirectory=${K8S_DIR}/kube-controller-manager
    ExecStart=/opt/k8s/bin/kube-controller-manager \\
      --profiling \\
      --cluster-name=kubernetes \\
      --controllers=*,bootstrapsigner,tokencleaner \\
      --kube-api-qps=1000 \\
      --kube-api-burst=2000 \\
      --leader-elect \\
      --use-service-account-credentials \\
      --concurrent-service-syncs=2 \\
      --bind-address=##NODE_IP## \\
      --secure-port=10252 \\
      --tls-cert-file=/etc/kubernetes/cert/kube-controller-manager.pem \\
      --tls-private-key-file=/etc/kubernetes/cert/kube-controller-manager-key.pem \\
      --port=0 \\
      --authentication-kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig \\
      --client-ca-file=/etc/kubernetes/cert/ca.pem \\
      --requestheader-allowed-names="" \\
      --requestheader-client-ca-file=/etc/kubernetes/cert/ca.pem \\
      --requestheader-extra-headers-prefix="X-Remote-Extra-" \\
      --requestheader-group-headers=X-Remote-Group \\
      --requestheader-username-headers=X-Remote-User \\
      --authorization-kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig \\
      --cluster-signing-cert-file=/etc/kubernetes/cert/ca.pem \\
      --cluster-signing-key-file=/etc/kubernetes/cert/ca-key.pem \\
      --experimental-cluster-signing-duration=876000h \\
      --horizontal-pod-autoscaler-sync-period=10s \\
      --concurrent-deployment-syncs=10 \\
      --concurrent-gc-syncs=30 \\
      --node-cidr-mask-size=24 \\
      --service-cluster-ip-range=${SERVICE_CIDR} \\
      --pod-eviction-timeout=6m \\
      --terminated-pod-gc-threshold=10000 \\
      --root-ca-file=/etc/kubernetes/cert/ca.pem \\
      --service-account-private-key-file=/etc/kubernetes/cert/ca-key.pem \\
      --kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig \\
      --logtostderr=true \\
      --v=2
    Restart=on-failure
    RestartSec=5

    [Install]
    WantedBy=multi-user.target
    EOF

- `--port=0`: disables the insecure (http) port; the `--address` flag then has no effect, while `--bind-address` does;

- `--secure-port=10252`, `--bind-address=##NODE_IP##`: serve https /metrics requests on port 10252 of the node IP (a value of `0.0.0.0` would listen on all network interfaces instead);

- `--kubeconfig`: path of the kubeconfig file that kube-controller-manager uses to connect to and authenticate with kube-apiserver;

- `--authentication-kubeconfig` and `--authorization-kubeconfig`: kube-controller-manager uses them to connect to the apiserver in order to authenticate and authorize client requests. `kube-controller-manager` no longer uses `--tls-ca-file` to verify the client certificates of https metrics requests. If these two kubeconfig flags are not set, client requests to the kube-controller-manager https port are rejected (insufficient permissions).

- `--cluster-signing-*-file`: signs the certificates created by TLS Bootstrap;

- `--experimental-cluster-signing-duration`: the validity period of TLS Bootstrap certificates;

- `--root-ca-file`: the CA certificate placed into the ServiceAccount of containers, used to verify the kube-apiserver certificate;

- `--service-account-private-key-file`: the private key file that signs ServiceAccount tokens; it must be paired with the public key file given by `--service-account-key-file` of kube-apiserver;

- `--service-cluster-ip-range`: the Service Cluster IP range; it must match the flag of the same name in kube-apiserver;

- `--leader-elect=true`: cluster mode with leader election enabled; the node elected as leader does the work while the other nodes stay blocked;

- `--controllers=*,bootstrapsigner,tokencleaner`: the list of enabled controllers; tokencleaner automatically cleans up expired Bootstrap tokens;

- `--horizontal-pod-autoscaler-*`: custom metrics parameters, supporting autoscaling/v2alpha1;

- `--tls-cert-file`, `--tls-private-key-file`: the server certificate and private key used when serving metrics over https;

- `--use-service-account-credentials=true`: each controller inside kube-controller-manager uses its own serviceaccount to access kube-apiserver;

#### 2) Create the kube-controller-manager systemd unit file for each node

Substitute the variables in the template file to create a systemd unit file for each node:

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for (( i=0; i < 3; i++ ))
      do
        sed -e "s/##NODE_NAME##/${NODE_NAMES[i]}/" -e "s/##NODE_IP##/${NODE_IPS[i]}/" kube-controller-manager.service.template > kube-controller-manager-${NODE_IPS[i]}.service
      done
    ls kube-controller-manager*.service

- NODE_NAMES and NODE_IPS are bash arrays of the same length, holding the node names and the corresponding IPs.

#### 3) Distribute to all master nodes

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for node_ip in ${NODE_IPS[@]}
      do
        echo ">>> ${node_ip}"
        scp kube-controller-manager-${node_ip}.service root@${node_ip}:/etc/systemd/system/kube-controller-manager.service
      done

- The file is renamed to kube-controller-manager.service;

### 4. Start the kube-controller-manager service

    source /opt/k8s/bin/environment.sh
    for node_ip in ${NODE_IPS[@]}
      do
        echo ">>> ${node_ip}"
        ssh root@${node_ip} "mkdir -p ${K8S_DIR}/kube-controller-manager"
        ssh root@${node_ip} "systemctl daemon-reload && systemctl enable kube-controller-manager && systemctl restart kube-controller-manager"
      done

- The working directory must be created before the service is started;

#### 1) Check the running status of the service

    source /opt/k8s/bin/environment.sh
    for node_ip in ${NODE_IPS[@]}
      do
        echo ">>> ${node_ip}"
        ssh root@${node_ip} "systemctl status kube-controller-manager|grep Active"
      done

Make sure the status is `active (running)`; otherwise inspect the log with `journalctl -u kube-controller-manager` to find the cause.

kube-controller-manager listens on port 10252 and accepts https requests:

    $ sudo netstat -lnpt | grep kube-cont
    tcp        0      0 172.27.137.240:10252    0.0.0.0:*               LISTEN      108977/kube-control

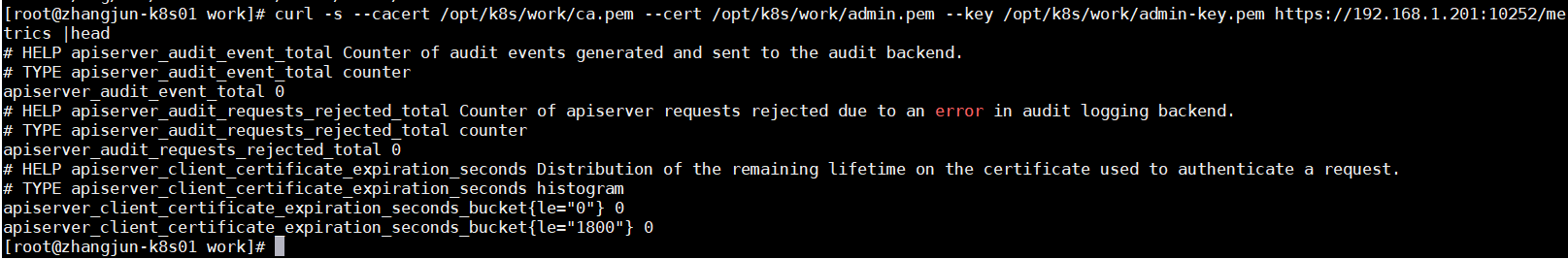

#### 2) View the exported metrics

Note: run the following command on a kube-controller-manager node.

    curl -s --cacert /opt/k8s/work/ca.pem \
      --cert /opt/k8s/work/admin.pem \
      --key /opt/k8s/work/admin-key.pem \
      https://192.168.1.201:10252/metrics |head

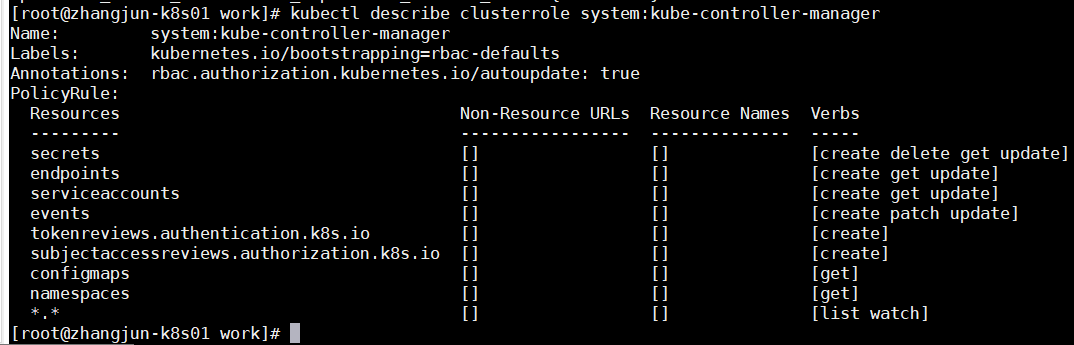

3)kube-controller-manager 的权限

ClusteRole `system:kube-controller-manager` 的权限很小,只能创建 secret、serviceaccount 等资源对象,各 controller 的权限分散到 ClusterRole `system:controller:XXX` 中:

kubectl describe clusterrole system:kube-controller-manager

需要在 kube-controller-manager 的启动参数中添加 `--use-service-account-credentials=true` 参数,这样 main controller 会为各 controller 创建对应的 ServiceAccount XXX-controller。内置的 ClusterRoleBinding system:controller:XXX 将赋予各 XXX-controller ServiceAccount 对应的 ClusterRole system:controller:XXX 权限。

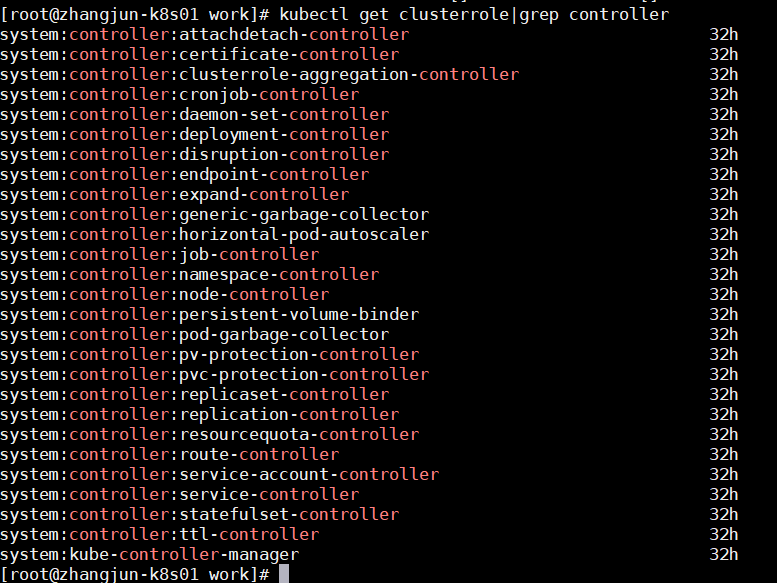

kubectl get clusterrole|grep controller

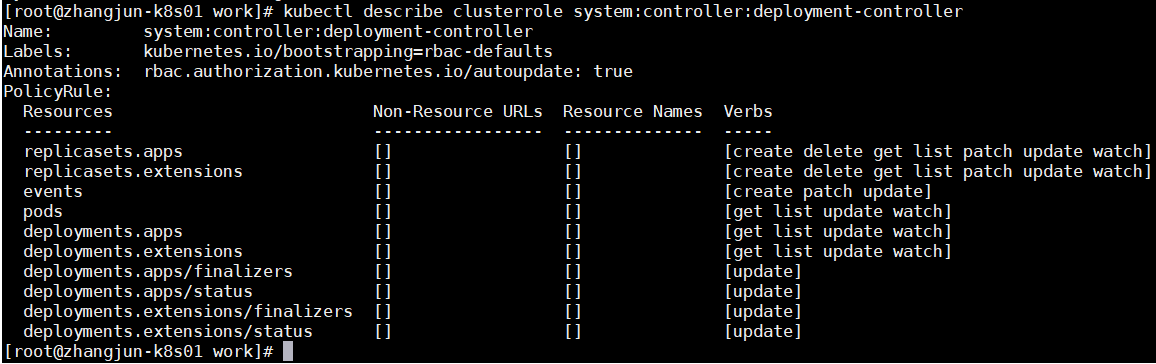

以 deployment controller 为例:

kubectl describe clusterrole system:controller:deployment-controller

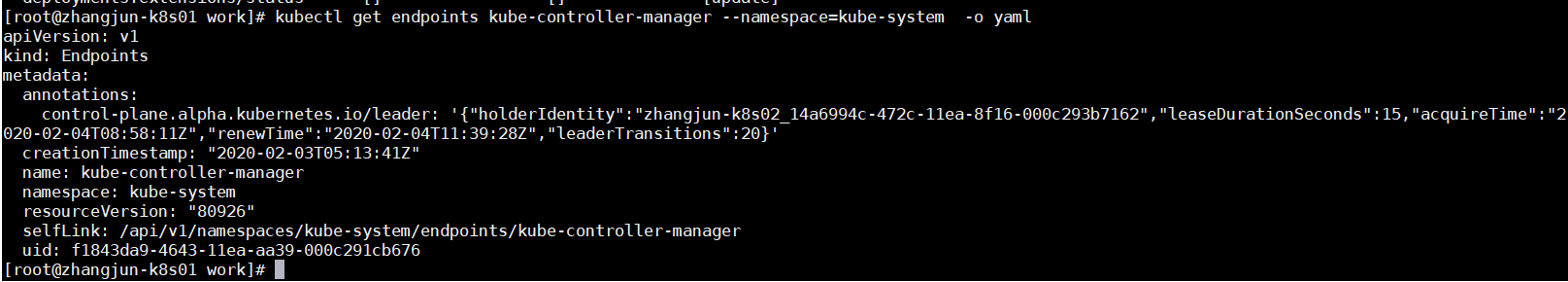

4)查看当前的 leader

kubectl get endpoints kube-controller-manager --namespace=kube-system -o yaml

可见,当前的 leader 为 zhangjun-k8s02 节点。
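The leader is recorded in an annotation on the endpoints object as a small JSON document with a `holderIdentity` field. As a sketch of how the node name could be pulled out of that output, the snippet below parses a sample annotation line. The sample text is invented for illustration, and the assumption that `holderIdentity` has the form `<hostname>_<unique id>` should be checked against the real output of the command above.

```bash
#!/usr/bin/env bash
# Sketch: extract the leader's hostname from endpoints YAML.
# Assumes holderIdentity looks like "<hostname>_<unique id>"; the sample
# annotation below is made up, not captured from a real cluster.

leader_from_endpoints_yaml() {
  # Reads YAML on stdin and prints the hostname part of holderIdentity
  sed -n 's/.*"holderIdentity":"\([^"_]*\)[_"].*/\1/p' | head -n 1
}

sample="    control-plane.alpha.kubernetes.io/leader: '{\"holderIdentity\":\"zhangjun-k8s02_00000000-0000-0000-0000-000000000000\",\"leaseDurationSeconds\":15}'"

printf '%s\n' "$sample" | leader_from_endpoints_yaml
```

Against a live cluster, the input would be the output of the `kubectl get endpoints ... -o yaml` command shown above.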

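## V. Deploy a highly available kube-scheduler cluster

The cluster has 3 nodes. After startup, a leader node is chosen through competitive election while the other nodes stay blocked. When the leader becomes unavailable, the remaining nodes hold another election to choose a new leader, which keeps the service available.

To secure communication, this document first generates an x509 certificate and private key. kube-scheduler uses the certificate in the following two cases:

- Communicating with the secure port of kube-apiserver;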

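- Serving prometheus-format metrics on the **secure port** (https, 10259, as set by `--secure-port` in the systemd unit; port 10251 in the configuration file is the plain http health and metrics address);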

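Note: unless stated otherwise, all operations in this document are **performed on the zhangjun-k8s01 node**, which then distributes files and runs commands remotely.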

### 1. Create the kube-scheduler certificate and private key

#### 1) Create the certificate signing request

    cd /opt/k8s/work
    cat > kube-scheduler-csr.json <<EOF
    {
        "CN": "system:kube-scheduler",
        "hosts": [
          "127.0.0.1",
          "192.168.1.201",
          "192.168.1.202",
          "192.168.1.203"
        ],
        "key": {
            "algo": "rsa",
            "size": 2048
        },
        "names": [
          {
            "C": "CN",
            "ST": "BeiJing",
            "L": "BeiJing",
            "O": "system:kube-scheduler",
            "OU": "4Paradigm"
          }
        ]
    }
    EOF

- The hosts list contains the IPs of **all** kube-scheduler nodes;

- CN and O are both `system:kube-scheduler`; the built-in Kubernetes ClusterRoleBinding `system:kube-scheduler` grants kube-scheduler the permissions it needs;

#### 2) Generate the certificate and private key

    cd /opt/k8s/work
    cfssl gencert -ca=/opt/k8s/work/ca.pem \
      -ca-key=/opt/k8s/work/ca-key.pem \
      -config=/opt/k8s/work/ca-config.json \
      -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler
    ls kube-scheduler*pem

#### 3) Distribute the generated certificate and private key to all master nodes

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for node_ip in ${NODE_IPS[@]}
      do
        echo ">>> ${node_ip}"
        scp kube-scheduler*.pem root@${node_ip}:/etc/kubernetes/cert/
      done

2、创建和分发 kubeconfig 文件

kube-scheduler 使用 kubeconfig 文件访问 apiserver,该文件提供了 apiserver 地址、嵌入的 CA 证书和 kube-scheduler 证书:

cd /opt/k8s/work source /opt/k8s/bin/environment.sh kubectl config set-cluster kubernetes --certificate-authority=/opt/k8s/work/ca.pem --embed-certs=true --server=${KUBE_APISERVER} --kubeconfig=kube-scheduler.kubeconfig kubectl config set-credentials system:kube-scheduler --client-certificate=kube-scheduler.pem --client-key=kube-scheduler-key.pem --embed-certs=true --kubeconfig=kube-scheduler.kubeconfig kubectl config set-context system:kube-scheduler --cluster=kubernetes --user=system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig kubectl config use-context system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

Distribute the kubeconfig to all master nodes:

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for node_ip in "${NODE_IPS[@]}"
      do
        echo ">>> ${node_ip}"
        scp kube-scheduler.kubeconfig root@${node_ip}:/etc/kubernetes/
      done

3. Create the kube-scheduler configuration file

1) Create the template file

    cd /opt/k8s/work
    cat >kube-scheduler.yaml.template <<EOF
    apiVersion: kubescheduler.config.k8s.io/v1alpha1
    kind: KubeSchedulerConfiguration
    bindTimeoutSeconds: 600
    clientConnection:
      burst: 200
      kubeconfig: "/etc/kubernetes/kube-scheduler.kubeconfig"
      qps: 100
    enableContentionProfiling: false
    enableProfiling: true
    hardPodAffinitySymmetricWeight: 1
    healthzBindAddress: ##NODE_IP##:10251
    leaderElection:
      leaderElect: true
    metricsBindAddress: ##NODE_IP##:10251
    EOF

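- `--kubeconfig`: path of the kubeconfig file that kube-scheduler uses to connect to and authenticate against kube-apiserver (set in the template as `clientConnection.kubeconfig`);

- `--leader-elect=true`: cluster mode with leader election enabled (set in the template as `leaderElection.leaderElect`); the instance elected leader does the work and the other instances stay blocked;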

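Substitute the variables in the template file:

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for (( i=0; i < 3; i++ ))
      do
        sed -e "s/##NODE_NAME##/${NODE_NAMES[i]}/" -e "s/##NODE_IP##/${NODE_IPS[i]}/" kube-scheduler.yaml.template > kube-scheduler-${NODE_IPS[i]}.yaml
      done
    ls kube-scheduler*.yaml

- NODE_NAMES and NODE_IPS are bash arrays of the same length, holding the node names and the matching IPs. The template only contains `##NODE_IP##`, so the `##NODE_NAME##` substitution is a no-op here;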
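The substitution loop can also be written so that it follows the number of IPs passed in instead of a fixed count, and so that it fails when a placeholder is left unreplaced. This is a sketch under assumptions, not the article's own script: `render_per_node` is a hypothetical name, and it only handles the `##NODE_IP##` placeholder.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not from the original article): render one file per
# node from a template containing ##NODE_IP##, and fail if any ##...##
# placeholder is still present in the result.
# Usage: render_per_node <template> <output-prefix> <output-suffix> <ip>...
render_per_node() {
  local template="$1" prefix="$2" suffix="$3"; shift 3
  local ip out
  for ip in "$@"; do
    out="${prefix}${ip}${suffix}"
    sed -e "s/##NODE_IP##/${ip}/g" "${template}" > "${out}"
    # Any remaining ##NAME## token means the template has a variable
    # this helper does not know about.
    if grep -q '##[A-Z_]*##' "${out}"; then
      echo "unreplaced placeholder in ${out}" >&2
      return 1
    fi
  done
}
```

For the configuration file in this step, a call might be `render_per_node kube-scheduler.yaml.template kube-scheduler- .yaml "${NODE_IPS[@]}"`, which produces the same `kube-scheduler-<ip>.yaml` file names as the loop above.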

2) Distribute the kube-scheduler configuration file to all master nodes

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for node_ip in "${NODE_IPS[@]}"
      do
        echo ">>> ${node_ip}"
        scp kube-scheduler-${node_ip}.yaml root@${node_ip}:/etc/kubernetes/kube-scheduler.yaml
      done

- The file is renamed to kube-scheduler.yaml on the target node

4. Create the kube-scheduler systemd unit file

1) Create the template file

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    cat > kube-scheduler.service.template <<EOF
    [Unit]
    Description=Kubernetes Scheduler
    Documentation=https://github.com/GoogleCloudPlatform/kubernetes

    [Service]
    WorkingDirectory=${K8S_DIR}/kube-scheduler
    ExecStart=/opt/k8s/bin/kube-scheduler \\
      --config=/etc/kubernetes/kube-scheduler.yaml \\
      --bind-address=##NODE_IP## \\
      --secure-port=10259 \\
      --port=0 \\
      --tls-cert-file=/etc/kubernetes/cert/kube-scheduler.pem \\
      --tls-private-key-file=/etc/kubernetes/cert/kube-scheduler-key.pem \\
      --authentication-kubeconfig=/etc/kubernetes/kube-scheduler.kubeconfig \\
      --client-ca-file=/etc/kubernetes/cert/ca.pem \\
      --requestheader-allowed-names="" \\
      --requestheader-client-ca-file=/etc/kubernetes/cert/ca.pem \\
      --requestheader-extra-headers-prefix="X-Remote-Extra-" \\
      --requestheader-group-headers=X-Remote-Group \\
      --requestheader-username-headers=X-Remote-User \\
      --authorization-kubeconfig=/etc/kubernetes/kube-scheduler.kubeconfig \\
      --logtostderr=true \\
      --v=2
    Restart=always
    RestartSec=5
    StartLimitInterval=0

    [Install]
    WantedBy=multi-user.target
    EOF

- Inside the unquoted heredoc, `\\` writes a literal `\`, so the line continuations survive in the generated unit file; `${K8S_DIR}` is expanded when the template is written;

2) Create and distribute the kube-scheduler systemd unit file for each node

Substitute the variables in the template file to create a systemd unit file for each node:

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for (( i=0; i < 3; i++ ))
      do
        sed -e "s/##NODE_NAME##/${NODE_NAMES[i]}/" -e "s/##NODE_IP##/${NODE_IPS[i]}/" kube-scheduler.service.template > kube-scheduler-${NODE_IPS[i]}.service
      done
    ls kube-scheduler*.service

- NODE_NAMES and NODE_IPS are bash arrays of the same length, holding the node names and the matching IPs

3) Distribute the systemd unit file to all master nodes

    cd /opt/k8s/work
    source /opt/k8s/bin/environment.sh
    for node_ip in "${NODE_IPS[@]}"
      do
        echo ">>> ${node_ip}"
        scp kube-scheduler-${node_ip}.service root@${node_ip}:/etc/systemd/system/kube-scheduler.service
      done

- The file is renamed to kube-scheduler.service on the target node

5. Start the kube-scheduler service

    source /opt/k8s/bin/environment.sh
    for node_ip in "${NODE_IPS[@]}"
      do
        echo ">>> ${node_ip}"
        ssh root@${node_ip} "mkdir -p ${K8S_DIR}/kube-scheduler"
        ssh root@${node_ip} "systemctl daemon-reload && systemctl enable kube-scheduler && systemctl restart kube-scheduler"
      done

- The working directory must be created before the service is started

1) Check the service status

    source /opt/k8s/bin/environment.sh
    for node_ip in "${NODE_IPS[@]}"
      do
        echo ">>> ${node_ip}"
        ssh root@${node_ip} "systemctl status kube-scheduler|grep Active"
      done

Make sure the status is `active (running)`. If it is not, check the logs to find the cause:

    journalctl -u kube-scheduler

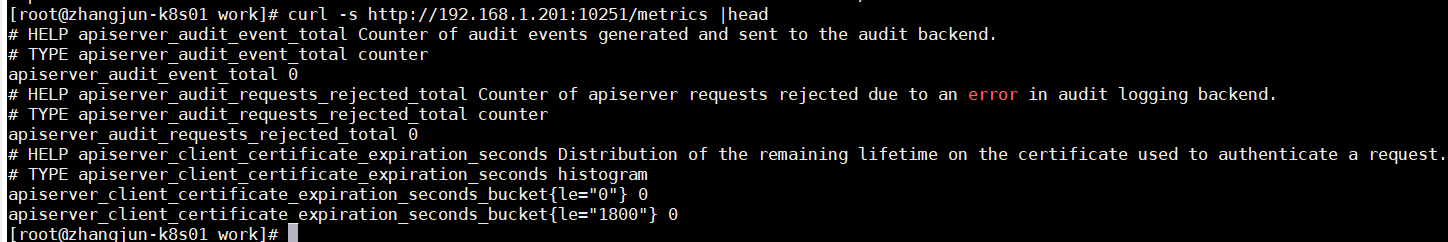

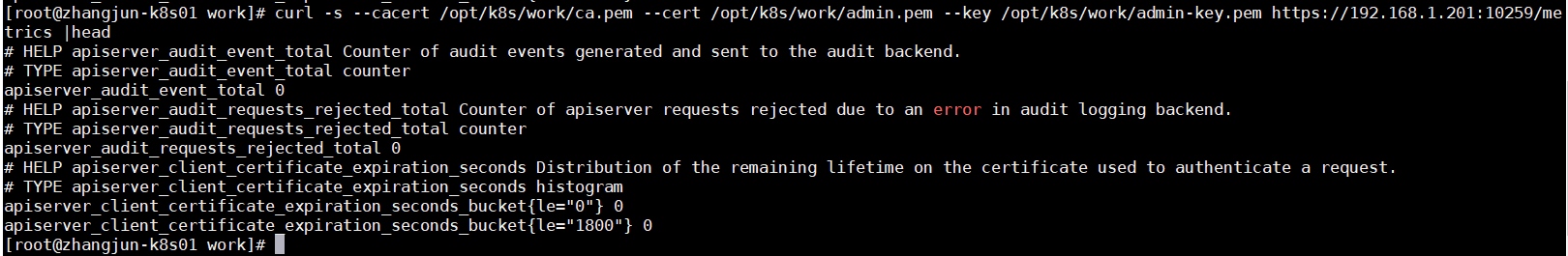
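Right after `systemctl restart`, a service can take a moment to reach `active (running)`, so a single status check may report a transient state. The following sketch shows one way to poll a few times before giving up. The `retry` function is a hypothetical helper and not part of the original steps; the attempt count and delay are arbitrary example values.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not from the original article): run a command up to
# <attempts> times, sleeping <delay> seconds between tries, and succeed as
# soon as the command succeeds.
# Usage: retry <attempts> <delay-seconds> <command> [args...]
retry() {
  local attempts="$1" delay="$2"; shift 2
  local n=1
  until "$@"; do
    if (( n >= attempts )); then
      return 1   # every attempt failed
    fi
    n=$(( n + 1 ))
    sleep "${delay}"
  done
}

# Example (assumes the same root SSH access used elsewhere in this document):
#   retry 5 2 ssh root@192.168.1.201 systemctl is-active --quiet kube-scheduler \
#     || echo "kube-scheduler is not active on 192.168.1.201"
```

`systemctl is-active --quiet` exits with status 0 only when the unit is active, which makes it a convenient condition for this kind of loop.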

2) View the exported metrics

Note: run the following commands on a kube-scheduler node

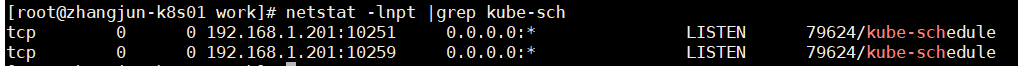

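kube-scheduler listens on ports 10251 and 10259:

- 10251: accepts http requests; insecure port, no authentication or authorization required;

- 10259: accepts https requests; secure port, authentication and authorization required;

Both ports serve `/metrics` and `/healthz`.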

    netstat -lnpt |grep kube-sch

    curl -s http://192.168.1.201:10251/metrics |head

    curl -s --cacert /opt/k8s/work/ca.pem \
      --cert /opt/k8s/work/admin.pem \
      --key /opt/k8s/work/admin-key.pem \
      https://192.168.1.201:10259/metrics |head

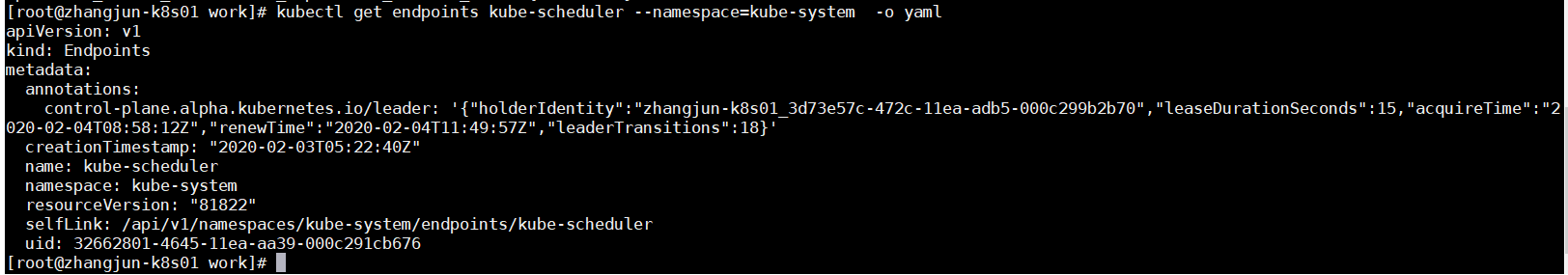
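The port check can be scripted rather than read off the `netstat` output by eye. The sketch below is an illustration under assumptions: `ports_listening` is a hypothetical name, and it expects listener output on stdin in which each local address ends in `:<port>` followed by whitespace, as `netstat -lnpt` prints it.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not from the original article): read listener output
# (for example from `netstat -lnpt`) on stdin and check that every given
# port appears as ":<port>" followed by whitespace.
ports_listening() {
  local listing port
  listing="$(cat)"
  for port in "$@"; do
    if ! grep -Eq ":${port}[[:space:]]" <<<"${listing}"; then
      echo "not listening: ${port}" >&2
      return 1
    fi
  done
}

# Example, run on a kube-scheduler node:
#   netstat -lnpt | grep kube-sch | ports_listening 10251 10259 && echo "both ports up"
```

Requiring whitespace after the port number keeps a shorter number such as 1025 from matching inside 10251.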

3) View the current leader

    kubectl get endpoints kube-scheduler --namespace=kube-system -o yaml

The output shows that the current leader is the zhangjun-k8s01 node.
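In the YAML output, the leader is recorded in the `control-plane.alpha.kubernetes.io/leader` annotation as a small JSON document whose `holderIdentity` field starts with the node name. The sketch below pulls that value out so it can be used in scripts. The function names are hypothetical, and it assumes the annotation is printed on a single line and that the identity has the form `<hostname>_<suffix>`.

```shell
#!/usr/bin/env bash
# Hypothetical helpers (not from the original article).
# leader_identity: read `kubectl get endpoints ... -o yaml` output on stdin
# and print the holderIdentity from the leader-election annotation.
# Assumption: the annotation and its JSON value are on one line.
leader_identity() {
  sed -n 's/.*control-plane\.alpha\.kubernetes\.io\/leader:.*"holderIdentity":"\([^"]*\)".*/\1/p'
}

# leader_node: print only the part of the identity before the first "_",
# assumed here to be the node's hostname.
leader_node() {
  leader_identity | cut -d_ -f1
}

# Example:
#   kubectl get endpoints kube-scheduler --namespace=kube-system -o yaml | leader_node
```

Stopping kube-scheduler on the reported node and running the command again is a simple way to watch a new leader being elected.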