Start Azkaban

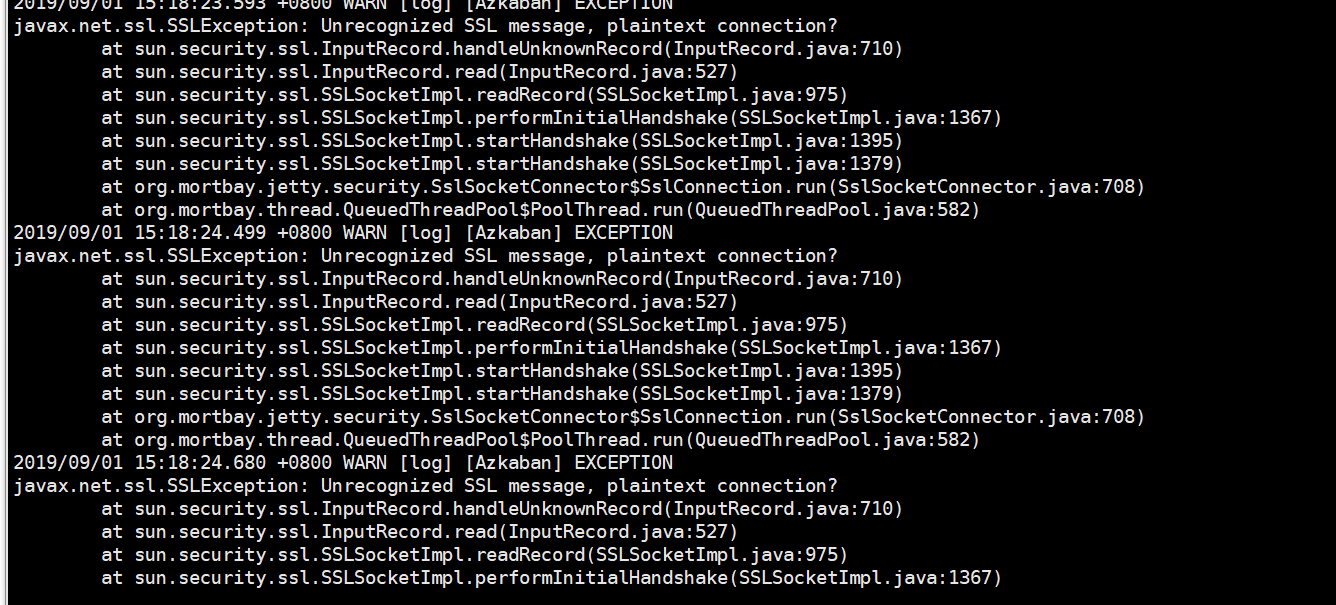

After starting the server and the executor, opening Azkaban in the browser fails, and the log reports this error:

        at sun.security.ssl.InputRecord.handleUnknownRecord(InputRecord.java:710)
        at sun.security.ssl.InputRecord.read(InputRecord.java:527)
        at sun.security.ssl.SSLSocketImpl.readRecord(SSLSocketImpl.java:975)
        at sun.security.ssl.SSLSocketImpl.performInitialHandshake(SSLSocketImpl.java:1367)
        at sun.security.ssl.SSLSocketImpl.startHandshake(SSLSocketImpl.java:1395)
        at sun.security.ssl.SSLSocketImpl.startHandshake(SSLSocketImpl.java:1379)
        at org.mortbay.jetty.security.SslSocketConnector$SslConnection.run(SslSocketConnector.java:708)
        at org.mortbay.thread.QueuedThreadPool$PoolThread.run(QueuedThreadPool.java:582)
    2019/09/01 15:18:24.499 +0800 WARN [log] [Azkaban] EXCEPTION
    javax.net.ssl.SSLException: Unrecognized SSL message, plaintext connection?
        at sun.security.ssl.InputRecord.handleUnknownRecord(InputRecord.java:710)
        at sun.security.ssl.InputRecord.read(InputRecord.java:527)
        at sun.security.ssl.SSLSocketImpl.readRecord(SSLSocketImpl.java:975)
        at sun.security.ssl.SSLSocketImpl.performInitialHandshake(SSLSocketImpl.java:1367)
        at sun.security.ssl.SSLSocketImpl.startHandshake(SSLSocketImpl.java:1395)
        at sun.security.ssl.SSLSocketImpl.startHandshake(SSLSocketImpl.java:1379)
        at org.mortbay.jetty.security.SslSocketConnector$SslConnection.run(SslSocketConnector.java:708)
        at org.mortbay.thread.QueuedThreadPool$PoolThread.run(QueuedThreadPool.java:582)
    2019/09/01 15:18:24.680 +0800 WARN [log] [Azkaban] EXCEPTION
    javax.net.ssl.SSLException: Unrecognized SSL message, plaintext connection?
        at sun.security.ssl.InputRecord.handleUnknownRecord(InputRecord.java:710)
        at sun.security.ssl.InputRecord.read(InputRecord.java:527)
        at sun.security.ssl.SSLSocketImpl.readRecord(SSLSocketImpl.java:975)
        at sun.security.ssl.SSLSocketImpl.performInitialHandshake(SSLSocketImpl.java:1367)
        at sun.security.ssl.SSLSocketImpl.startHandshake(SSLSocketImpl.java:1395)
        at sun.security.ssl.SSLSocketImpl.startHandshake(SSLSocketImpl.java:1379)
        at org.mortbay.jetty.security.SslSocketConnector$SslConnection.run(SslSocketConnector.java:708)
        at org.mortbay.thread.QueuedThreadPool$PoolThread.run(QueuedThreadPool.java:582)
    2019/09/01 15:18:24.809 +0800 WARN [log] [Azkaban] EXCEPTION
    javax.net.ssl.SSLException: Unrecognized SSL message, plaintext connection?
        at sun.security.ssl.InputRecord.handleUnknownRecord(InputRecord.java:710)
        at sun.security.ssl.InputRecord.read(InputRecord.java:527)
        at sun.security.ssl.SSLSocketImpl.readRecord(SSLSocketImpl.java:975)
        at sun.security.ssl.SSLSocketImpl.performInitialHandshake(SSLSocketImpl.java:1367)
        at sun.security.ssl.SSLSocketImpl.startHandshake(SSLSocketImpl.java:1395)
        at sun.security.ssl.SSLSocketImpl.startHandshake(SSLSocketImpl.java:1379)
        at org.mortbay.jetty.security.SslSocketConnector$SslConnection.run(SslSocketConnector.java:708)
        at org.mortbay.thread.QueuedThreadPool$PoolThread.run(QueuedThreadPool.java:582)
    2019/09/01 15:26:14.746 +0800 WARN [log] [Azkaban] EXCEPTION
    javax.net.ssl.SSLHandshakeException: Remote host closed connection during handshake
        at sun.security.ssl.SSLSocketImpl.readRecord(SSLSocketImpl.java:994)
        at sun.security.ssl.SSLSocketImpl.performInitialHandshake(SSLSocketImpl.java:1367)
        at sun.security.ssl.SSLSocketImpl.startHandshake(SSLSocketImpl.java:1395)
        at sun.security.ssl.SSLSocketImpl.startHandshake(SSLSocketImpl.java:1379)
        at org.mortbay.jetty.security.SslSocketConnector$SslConnection.run(SslSocketConnector.java:708)
        at org.mortbay.thread.QueuedThreadPool$PoolThread.run(QueuedThreadPool.java:582)
    Caused by: java.io.EOFException: SSL peer shut down incorrectly
        at sun.security.ssl.InputRecord.read(InputRecord.java:505)
        at sun.security.ssl.SSLSocketImpl.readRecord(SSLSocketImpl.java:975)
        ... 5 more

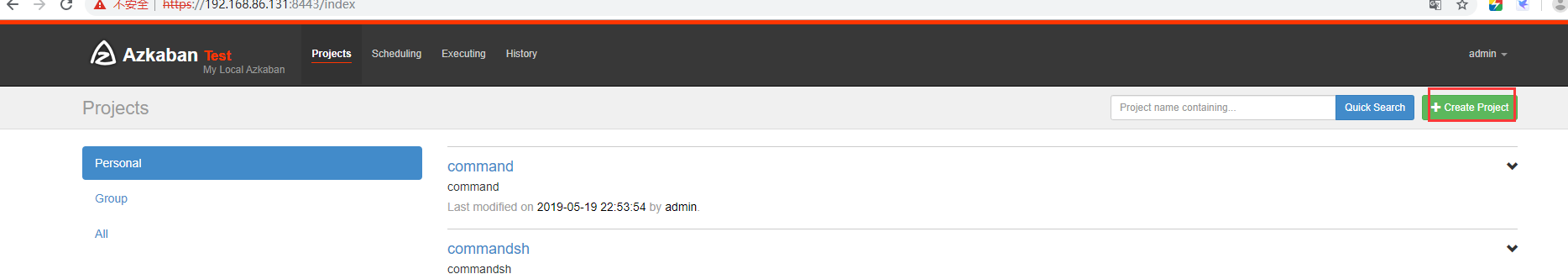

This problem is actually easy to solve. Google Chrome is recommended for opening the page. The address is https://192.168.86.131:8443/ (that is, https://your-ip:8443).

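Note that the page would not open earlier because the address used http. To be clear: http will not work here, you must use https. Then click Advanced in the browser warning and allow access to the unsafe address, because the browser treats this address as insecure by default.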
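The http versus https mix-up can also be checked from a terminal before opening the browser. The following is only a minimal sketch, assuming `curl` is installed; the helper names `azkaban_url` and `probe_azkaban` are invented for illustration. The `-k` option makes curl skip certificate verification, which roughly mirrors accepting the browser warning.

```shell
# Build the HTTPS address of the Azkaban web server.
# azkaban_url and probe_azkaban are hypothetical helper names.
azkaban_url() {
    host=$1
    port=${2:-8443}   # default to 8443, the port used in this walkthrough
    printf 'https://%s:%s/\n' "$host" "$port"
}

# Print the HTTP status code returned over HTTPS.
# -k skips certificate verification, -s silences progress output,
# -o /dev/null discards the body, -w prints only the status code.
probe_azkaban() {
    curl -k -s -o /dev/null -w '%{http_code}\n' "$(azkaban_url "$1" "$2")"
}

# Example (needs a running Azkaban web server):
# probe_azkaban 192.168.86.131 8443
```

If the same probe is sent to an `http://` address on the SSL port, the server side would be expected to log the "plaintext connection?" exception shown above.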

Write the upload.job script

    # upload.job
    type=command
    command=bash uploadFile2Hdfs.sh

Write the uploadFile2Hdfs.sh script

    #!/bin/bash

    # set java env
    export JAVA_HOME=/opt/modules/jdk1.8.0_65
    export JRE_HOME=${JAVA_HOME}/jre
    export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib
    export PATH=${JAVA_HOME}/bin:$PATH

    # set hadoop env
    export HADOOP_HOME=/opt/modules/hadoop-2.6.0
    export PATH=${HADOOP_HOME}/bin:${HADOOP_HOME}/sbin:$PATH

    # Problems with version 1:
    #   - The file is uploaded to the Hadoop cluster, but the original file is still there. How to handle that?
    #   - The log files are all named xxxx.log1, so a second upload fails because the file already exists on HDFS. How to handle that?
    # How the problems of version 1 are solved:
    #   1. First move the files to upload into a staging directory
    #   2. While moving them, rename the files according to a fixed pattern
    #      /export/software/hadoop.log1   /export/data/click_log/xxxxx_click_log_{date}

    # directory where the log files are stored
    log_src_dir=/home/hadoop/logs/log/

    # staging directory for files waiting to be uploaded
    log_toupload_dir=/home/hadoop/logs/toupload/

    # yesterday's date, split into year, month and day
    day_01=`date -d'-1 day' +%Y-%m-%d`
    syear=`date --date=$day_01 +%Y`
    smonth=`date --date=$day_01 +%m`
    sday=`date --date=$day_01 +%d`

    #echo $day_01
    #echo $syear
    #echo $smonth
    #echo $sday

    # root HDFS path the log files are uploaded to
    hdfs_root_dir=/data/clickLog/$syear/$smonth/$sday
    hadoop fs -mkdir -p $hdfs_root_dir

    # print environment information
    echo "envs: hadoop_home: $HADOOP_HOME"

    # read the log directory and check whether there are files to upload
    echo "log_src_dir:"$log_src_dir
    ls $log_src_dir | while read fileName
    do
        if [[ "$fileName" == access.log ]]; then
        # if [ "access.log" = "$fileName" ];then
            date=`date +%Y_%m_%d_%H_%M_%S`
            # move the file to the staging directory and rename it
            echo "moving $log_src_dir$fileName to $log_toupload_dir"xxxxx_click_log_$fileName"$date"
            mv $log_src_dir$fileName $log_toupload_dir"xxxxx_click_log_$fileName"$date
            # append the path of the staged file to a list file named willDoing
            echo $log_toupload_dir"xxxxx_click_log_$fileName"$date >> $log_toupload_dir"willDoing."$date
        fi
    done

    # find the willDoing list files that have not been processed yet
    ls $log_toupload_dir | grep will | grep -v "_COPY_" | grep -v "_DONE_" | while read line
    do
        echo "toupload is in file:"$line
        # rename the list file willDoing to willDoing_COPY_
        mv $log_toupload_dir$line $log_toupload_dir$line"_COPY_"
        # read the list file; each entry is the path of one file to upload
        # (the inner variable is named path so it does not shadow the outer line)
        cat $log_toupload_dir$line"_COPY_" | while read path
        do
            echo "putting...$path to hdfs path.....$hdfs_root_dir"
            hadoop fs -put $path $hdfs_root_dir
        done
        # mark the list file as done
        mv $log_toupload_dir$line"_COPY_" $log_toupload_dir$line"_DONE_"
    done
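The script builds the HDFS target directory from yesterday's date with three separate `date` calls. As a rough sketch of the same idea, the helper below derives the `root/YYYY/MM/DD` path from a single date string using only shell parameter expansion. The name `partition_dir` is invented here, and the sketch assumes the date is formatted as `YYYY-MM-DD`, which is what `date +%Y-%m-%d` prints.

```shell
# Turn a root path and a YYYY-MM-DD date into root/YYYY/MM/DD.
# partition_dir is a hypothetical helper, not part of the original script.
partition_dir() {
    root=${1%/}          # drop one trailing slash, if any
    ymd=$2
    year=${ymd%%-*}      # text before the first '-'
    rest=${ymd#*-}       # text after the first '-'
    month=${rest%%-*}
    day=${rest#*-}
    printf '%s/%s/%s/%s\n' "$root" "$year" "$month" "$day"
}

# Example with GNU date, as used in the script:
# hdfs_root_dir=$(partition_dir /data/clickLog "$(date -d '-1 day' +%Y-%m-%d)")
```

Keeping the path logic in one function makes it easier to reuse the same directory layout in the later cleaning and Hive steps, which compute it again.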

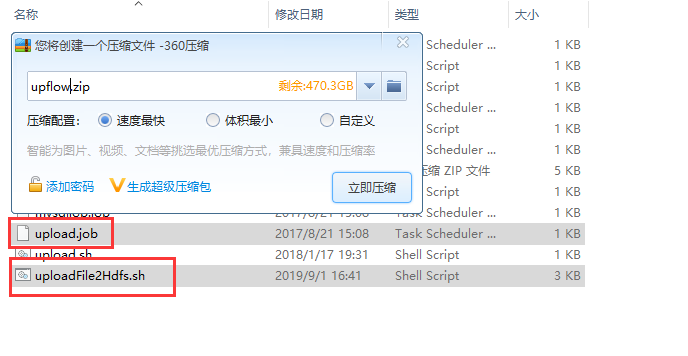

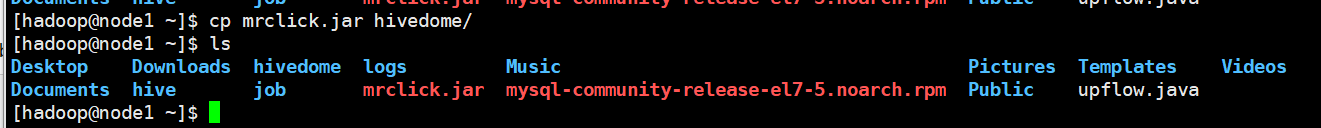

Then package these two scripts into a zip file
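On a Linux machine the same archive can be produced from the command line. This is only a sketch, assuming the Info-ZIP `zip` utility is installed and both files are in the current directory; `package_flow` is a hypothetical helper name, and upflow.zip is the archive name selected later in the upload step.

```shell
# Zip the given files into an archive, refusing to run if any file is missing.
# package_flow is a hypothetical helper name.
package_flow() {
    archive=$1
    shift
    for f in "$@"; do
        if [ ! -f "$f" ]; then
            echo "missing file: $f" >&2
            return 1
        fi
    done
    # -j stores only the file names, without directory paths
    zip -j "$archive" "$@"
}

# Example:
# package_flow upflow.zip upload.job uploadFile2Hdfs.sh
```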

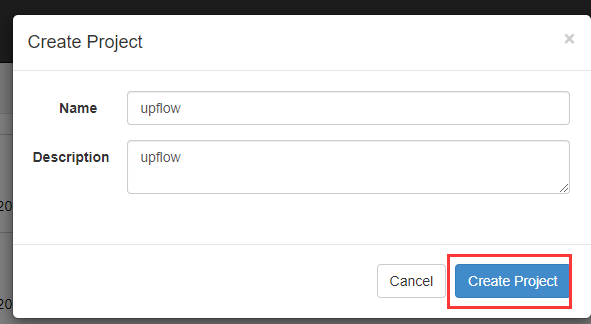

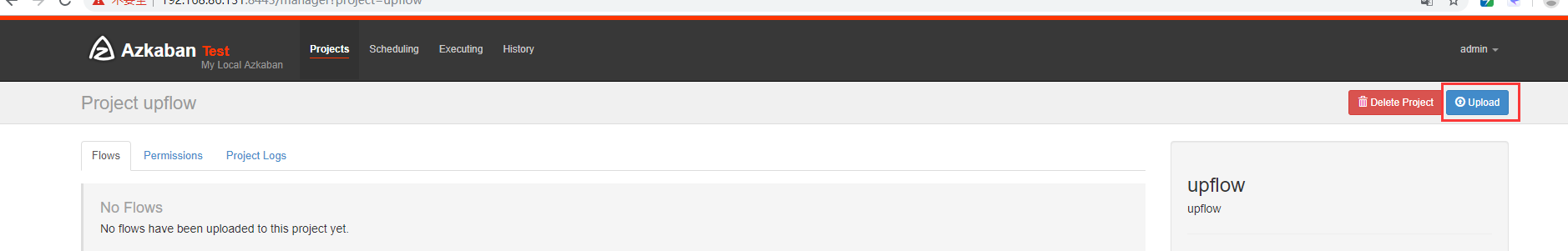

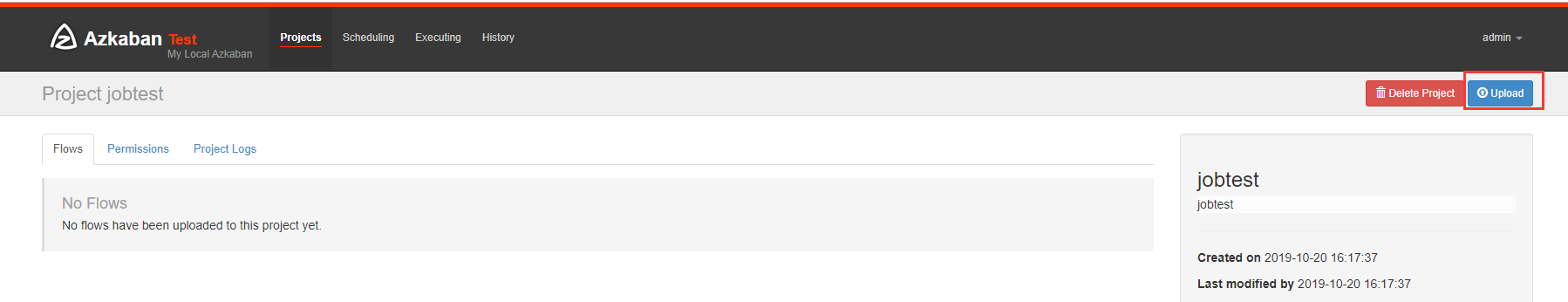

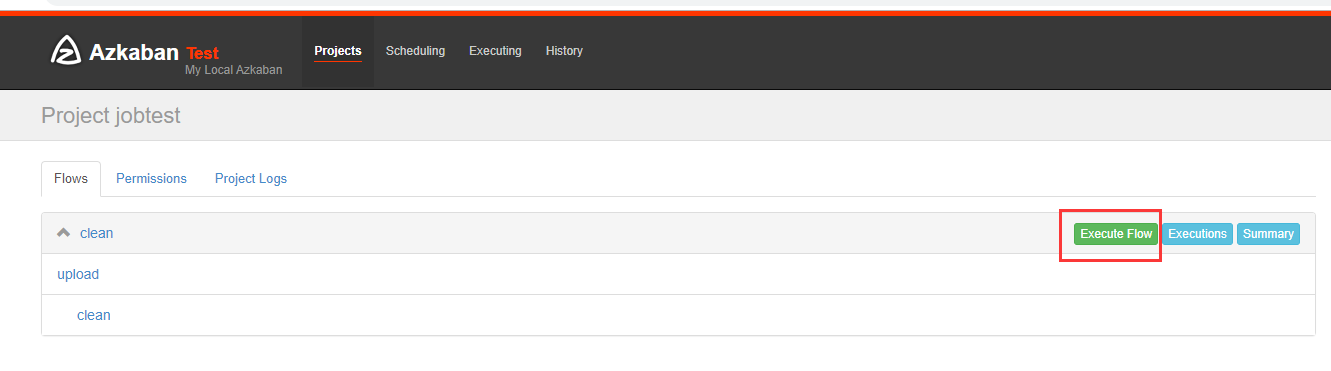

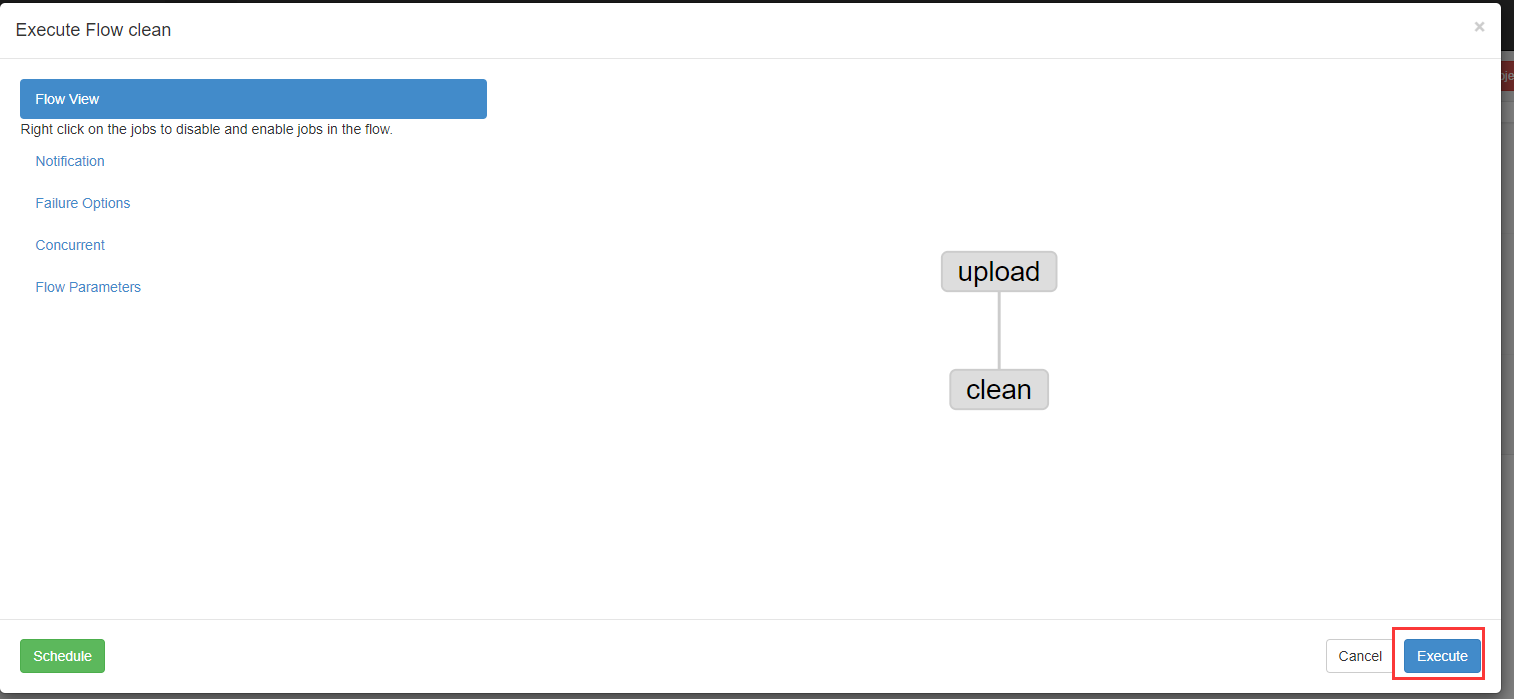

Go to the Azkaban login page

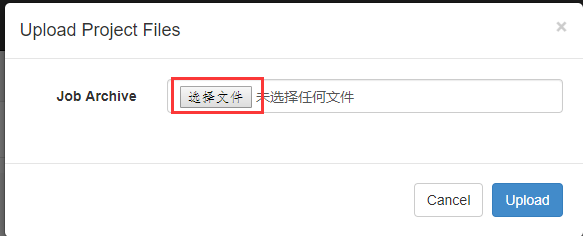

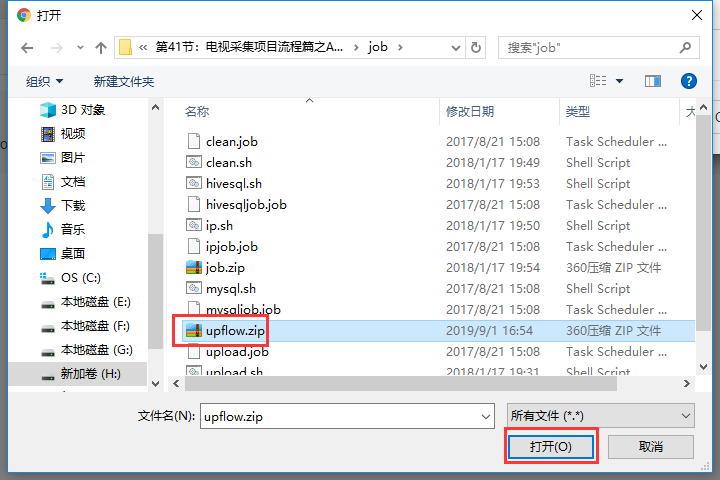

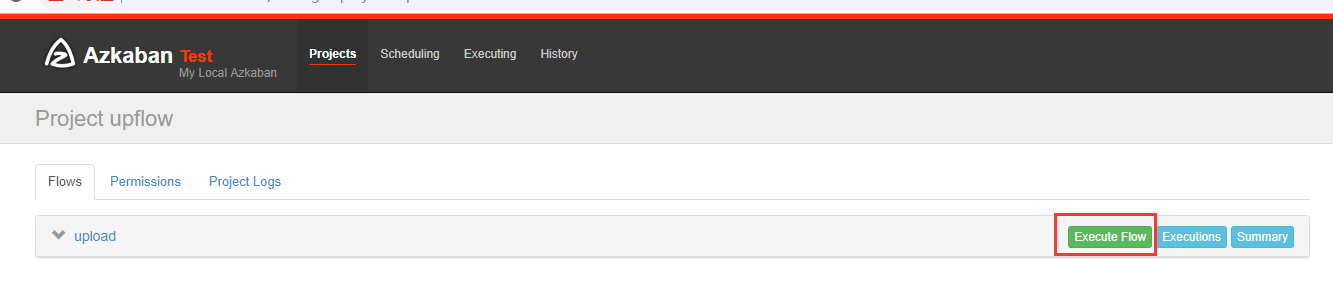

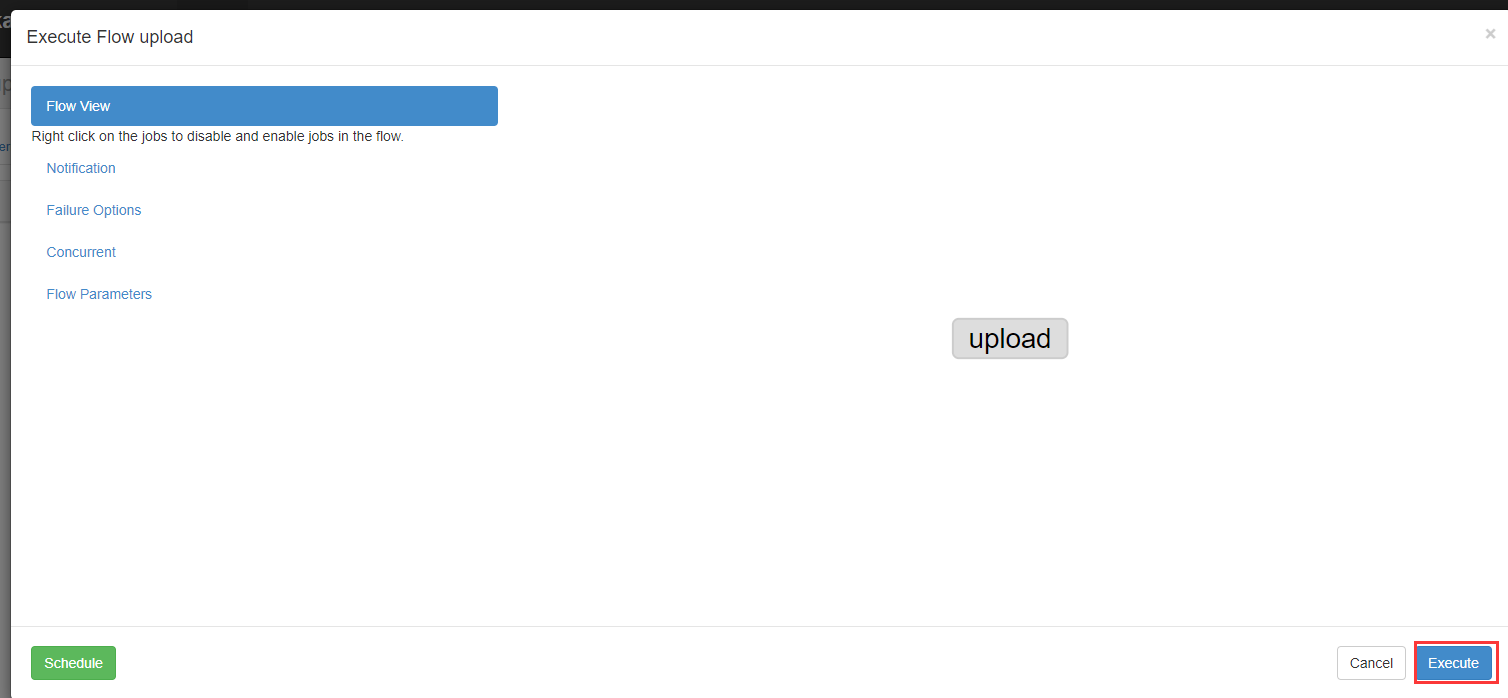

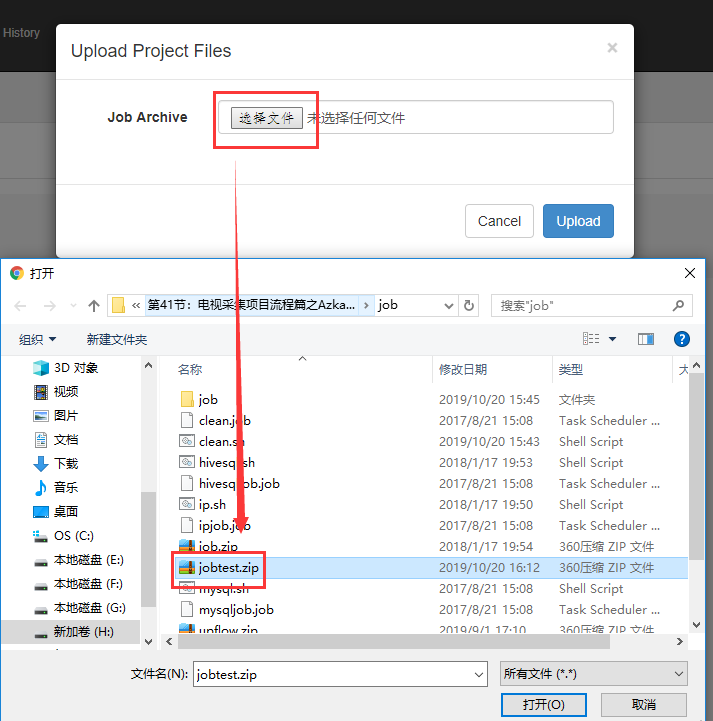

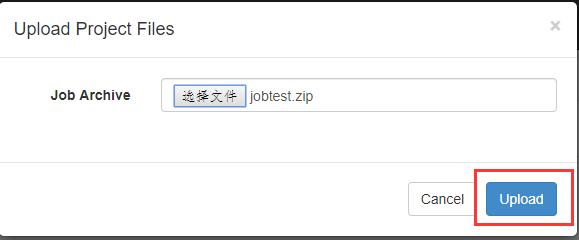

Select the upflow.zip file that was just packaged

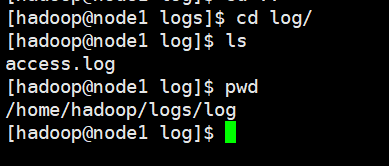

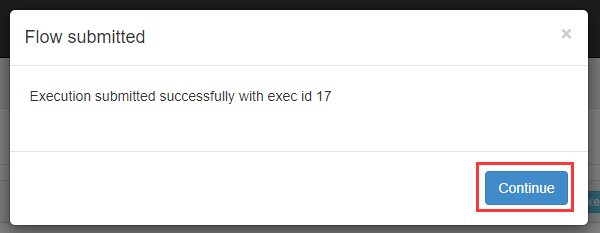

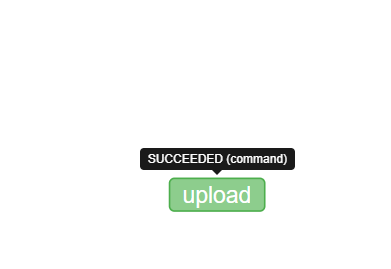

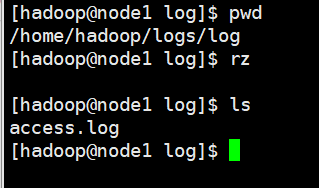

Now upload the log file to the cluster

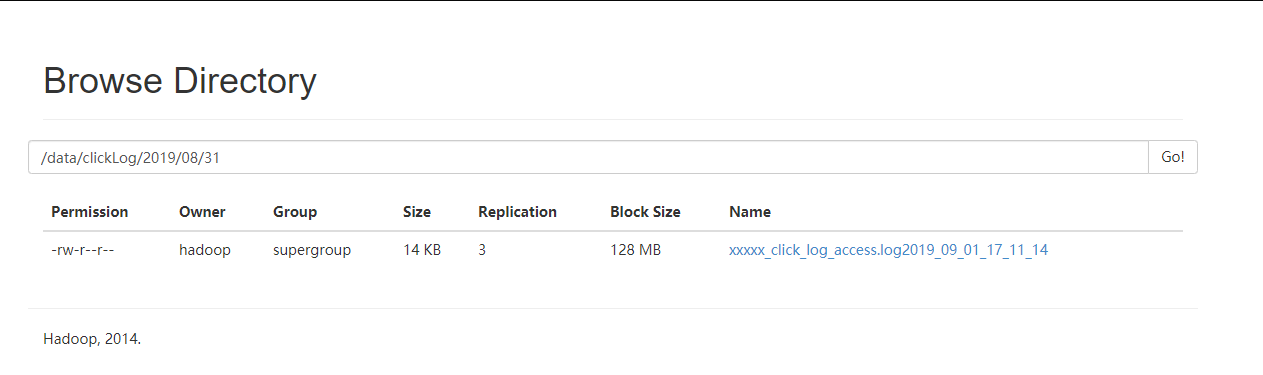

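Check the HDFS directory to confirm that the file was uploaded successfully

The log file on the cluster node's local disk is gone as well, because the script moved it out of the log directory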

Write the clean.job script

    # clean.job
    type=command
    dependencies=upload
    command=bash clean.sh

Write the clean.sh script

    #!/bin/bash

    # set java env
    export JAVA_HOME=/opt/modules/jdk1.8.0_65
    export JRE_HOME=${JAVA_HOME}/jre
    export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib
    export PATH=${JAVA_HOME}/bin:$PATH

    # set hadoop env
    export HADOOP_HOME=/opt/modules/hadoop-2.6.0
    export PATH=${HADOOP_HOME}/bin:${HADOOP_HOME}/sbin:$PATH

    log_local_dir=/home/hadoop/flume/
    #log_hdfs_dir=/test/2017/7/

    # yesterday's date, split into year, month and day
    day_01=`date -d'-1 day' +%Y-%m-%d`
    syear=`date --date=$day_01 +%Y`
    smonth=`date --date=$day_01 +%m`
    sday=`date --date=$day_01 +%d`

    #echo $day_01
    #echo $syear
    #echo $smonth
    #echo $sday

    # input directory written by the upload job
    log_hdfs_dir=/data/clickLog/$syear/$smonth/$sday
    #echo $log_hdfs_dir

    # driver class of the cleaning job and its output directory
    click_log_clean=com.it19gong.clickLog.AccessLogDriver
    clean_dir=/cleaup/$syear/$smonth/$sday

    # print the command that is about to run (same jar as the actual call below)
    echo "hadoop jar /home/hadoop/hivedome/mrclick.jar $click_log_clean $log_hdfs_dir $clean_dir"

    # remove any previous output first; a MapReduce job normally fails
    # if its output directory already exists
    hadoop fs -rm -r -f $clean_dir
    hadoop jar /home/hadoop/hivedome/mrclick.jar $click_log_clean $log_hdfs_dir $clean_dir

Write the upload.job script (same as before)

    # upload.job
    type=command
    command=bash uploadFile2Hdfs.sh

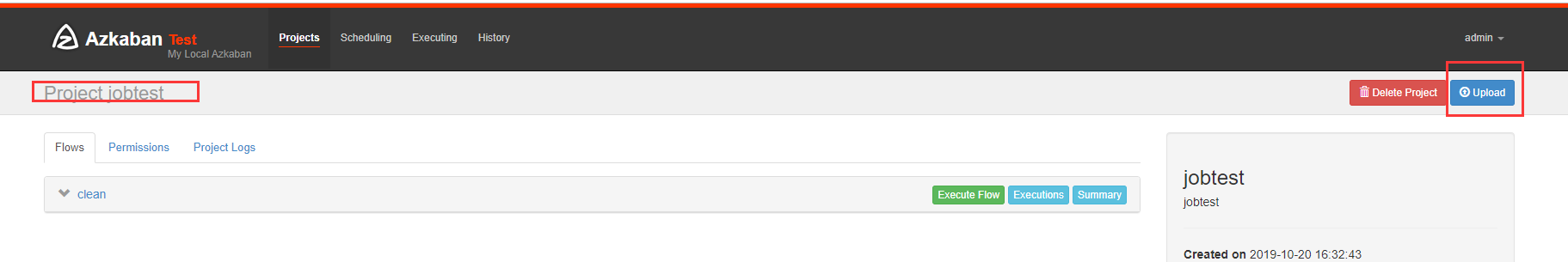

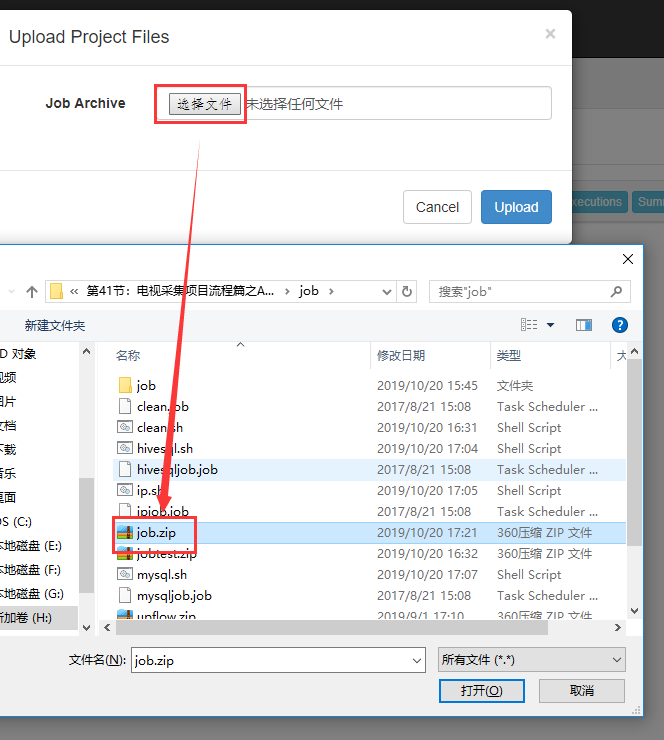

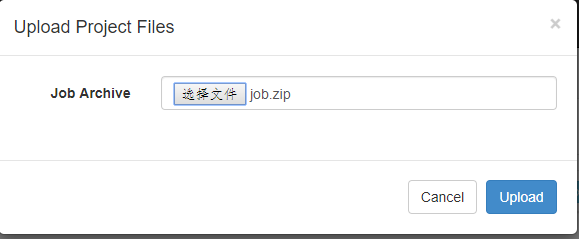

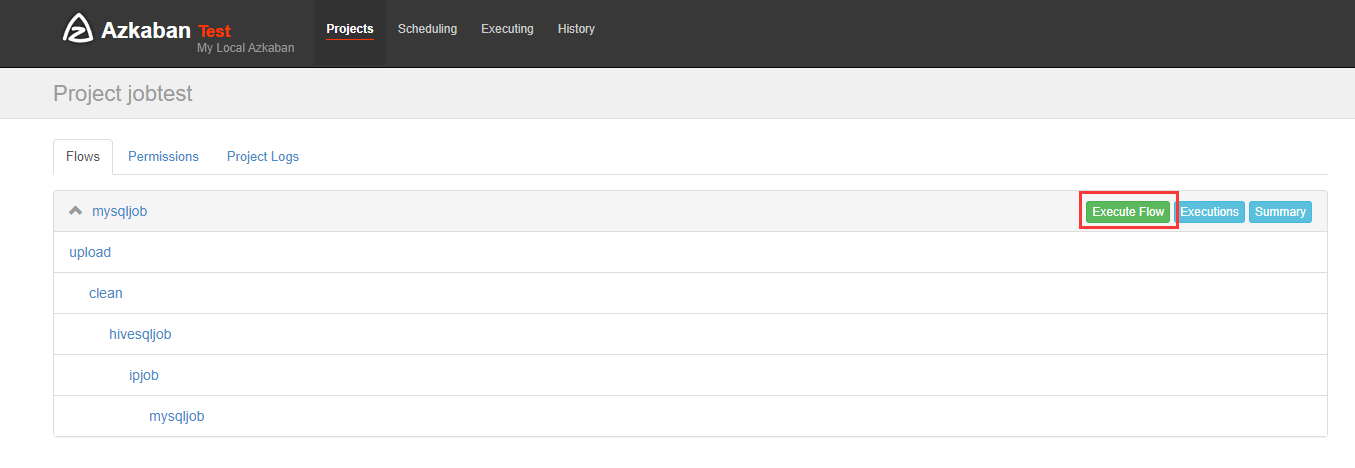

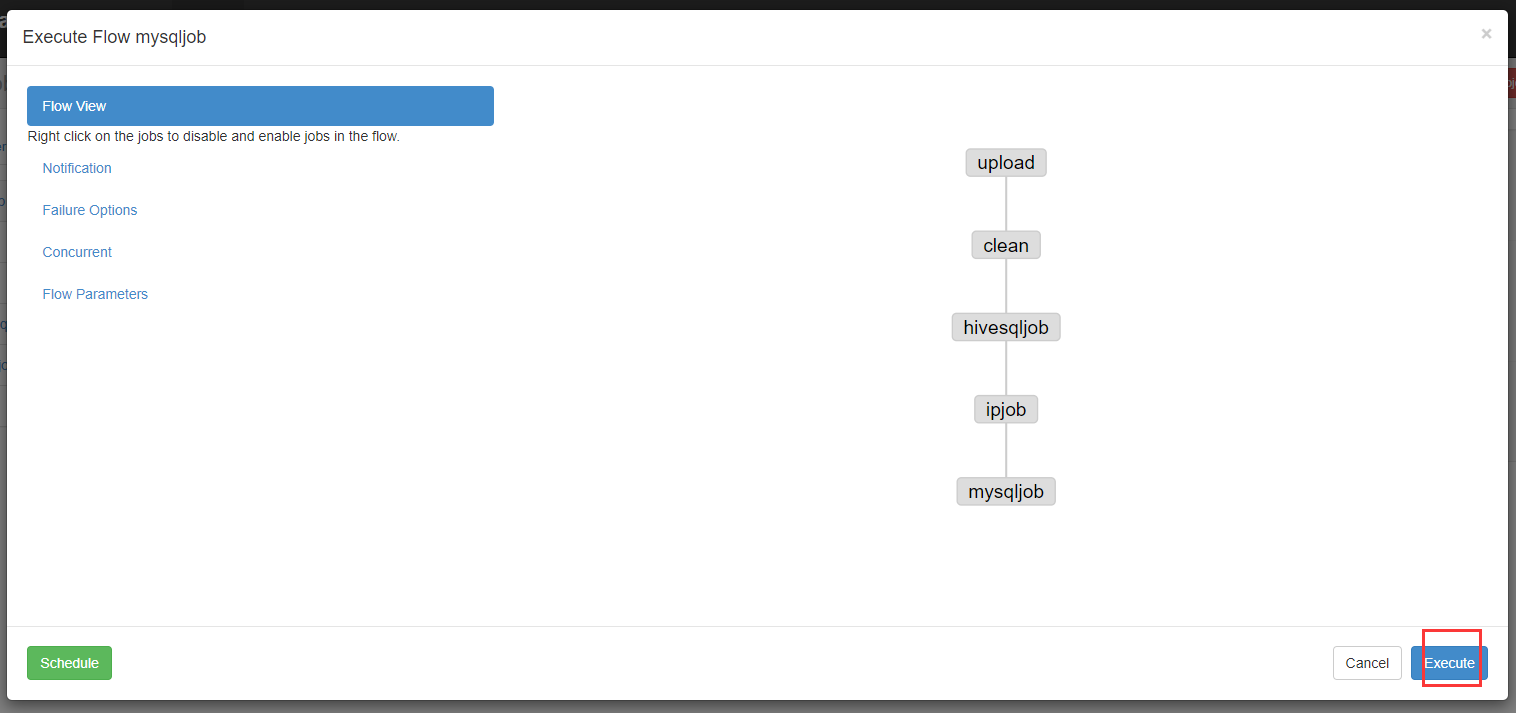

Then package these four scripts

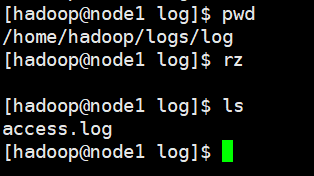

Upload the data file

Write the hivesql.sh script

    #!/bin/bash

    # set java env
    export JAVA_HOME=/opt/modules/jdk1.8.0_65
    export JRE_HOME=${JAVA_HOME}/jre
    export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib
    export PATH=${JAVA_HOME}/bin:$PATH

    # set hadoop env
    export HADOOP_HOME=/opt/modules/hadoop-2.6.0
    export PATH=${HADOOP_HOME}/bin:${HADOOP_HOME}/sbin:$PATH

    # set hive env
    export HIVE_HOME=/opt/modules/hive
    export PATH=${HIVE_HOME}/bin:$PATH

    log_local_dir=/home/hadoop/flume/
    #log_hdfs_dir=/test/2017/7/

    # yesterday's date, split into year, month and day
    day_01=`date -d'-1 day' +%Y-%m-%d`
    syear=`date --date=$day_01 +%Y`
    smonth=`date --date=$day_01 +%m`
    sday=`date --date=$day_01 +%d`

    #echo $day_01
    #echo $syear
    #echo $smonth
    #echo $sday

    log_hdfs_dir=/data/clickLog/$syear/$smonth/$sday
    #echo $log_hdfs_dir

    click_log_clean=com.it19gong.clickLog.AccessLogDriver
    clean_dir=/cleaup/$syear/$smonth/$sday

    # load the cleaned output into the Hive table mydb2.access
    HQL_origin="load data inpath '$clean_dir' into table mydb2.access"
    #HQL_origin="create external table db2.access(ip string,day string,url string,upflow string) row format delimited fields terminated by ',' location '$clean_dir'"
    #echo $HQL_origin

    hive -e "$HQL_origin"

Write the hivesqljob.job script

    # hivesqljob.job
    type=command
    dependencies=clean
    command=bash hivesql.sh

Write the ip.sh script

    #!/bin/bash

    # set java env
    export JAVA_HOME=/opt/modules/jdk1.8.0_65
    export JRE_HOME=${JAVA_HOME}/jre
    export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib
    export PATH=${JAVA_HOME}/bin:$PATH

    # set hadoop env
    export HADOOP_HOME=/opt/modules/hadoop-2.6.0
    export PATH=${HADOOP_HOME}/bin:${HADOOP_HOME}/sbin:$PATH

    # set hive env
    export HIVE_HOME=/opt/modules/hive
    export PATH=${HIVE_HOME}/bin:$PATH

    log_local_dir=/home/hadoop/flume/
    #log_hdfs_dir=/test/2017/7/

    # yesterday's date, split into year, month and day
    day_01=`date -d'-1 day' +%Y-%m-%d`
    syear=`date --date=$day_01 +%Y`
    smonth=`date --date=$day_01 +%m`
    sday=`date --date=$day_01 +%d`

    #echo $day_01
    #echo $syear
    #echo $smonth
    #echo $sday

    log_hdfs_dir=/data/clickLog/$syear/$smonth/$sday
    #echo $log_hdfs_dir

    click_log_clean=com.it19gong.clickLog.AccessLogDriver
    clean_dir=/cleaup/$syear/$smonth/$sday

    # total upflow per ip, largest first, written into mydb2.upflow
    HQL_origin="insert into mydb2.upflow select ip,sum(upflow) as sum from mydb2.access group by ip order by sum desc "
    #echo $HQL_origin

    hive -e "$HQL_origin"
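To sanity-check what the query in ip.sh should produce, the same aggregation can be run locally on a small sample file. This is a sketch under assumptions, not part of the original workflow: it assumes comma-separated lines in the column order ip, day, url, upflow (taken from the commented-out `create external table` statement in hivesql.sh), and `sum_upflow_by_ip` is a made-up helper name.

```shell
# Sum the upflow column (field 4) per ip (field 1) of a comma-separated
# file and print "ip,total" sorted by total, largest first.
# sum_upflow_by_ip is a hypothetical helper for local sanity checks.
sum_upflow_by_ip() {
    awk -F',' '{ total[$1] += $4 } END { for (ip in total) print ip "," total[ip] }' "$1" |
        sort -t',' -k2,2nr
}

# Example:
# sum_upflow_by_ip sample_access.csv
```

Comparing this output with the rows in `mydb2.upflow` for the same input is a quick way to notice a wrong delimiter or column order.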

Write the ipjob.job script

    # ipjob.job
    type=command
    dependencies=hivesqljob
    command=bash ip.sh

Write the mysql.sh script

    #!/bin/bash

    # set java env
    export JAVA_HOME=/opt/modules/jdk1.8.0_65
    export JRE_HOME=${JAVA_HOME}/jre
    export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib
    export PATH=${JAVA_HOME}/bin:$PATH

    # set hadoop env
    export HADOOP_HOME=/opt/modules/hadoop-2.6.0
    export PATH=${HADOOP_HOME}/bin:${HADOOP_HOME}/sbin:$PATH

    # set hive env
    export HIVE_HOME=/opt/modules/hive
    export PATH=${HIVE_HOME}/bin:$PATH

    # set sqoop env
    export SQOOP_HOME=/opt/modules/sqoop
    export PATH=${SQOOP_HOME}/bin:$PATH

    # export the Hive table mydb2.upflow to the MySQL table upflow
    sqoop export \
        --connect jdbc:mysql://node1:3306/userdb \
        --username sqoop \
        --password sqoop \
        --table upflow \
        --export-dir /user/hive/warehouse/mydb2.db/upflow \
        --input-fields-terminated-by ','

Write the mysqljob.job script

    # mysqljob.job
    type=command
    dependencies=ipjob
    command=bash mysql.sh
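The job files form a chain: upload, clean, hivesqljob, ipjob, mysqljob. Each `dependencies=` value has to match the name of another .job file without the extension, so a mismatch between a file name and a dependency name is easy to introduce. The following sketch checks this before packaging; `check_job_deps` is a hypothetical helper, and it assumes each property sits on its own line in the .job file.

```shell
# Check that every name listed in a dependencies= line has a matching
# <name>.job file in the same directory.
# check_job_deps is a hypothetical helper name.
check_job_deps() {
    dir=$1
    status=0
    for job in "$dir"/*.job; do
        [ -f "$job" ] || continue
        # take the value after 'dependencies=' and split it on commas
        deps=$(sed -n 's/^dependencies=//p' "$job" | tr ',' ' ')
        for dep in $deps; do
            if [ ! -f "$dir/$dep.job" ]; then
                echo "$(basename "$job"): missing dependency $dep" >&2
                status=1
            fi
        done
    done
    return $status
}

# Example, run in the directory that holds the .job files:
# check_job_deps . && echo "all dependencies resolved"
```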

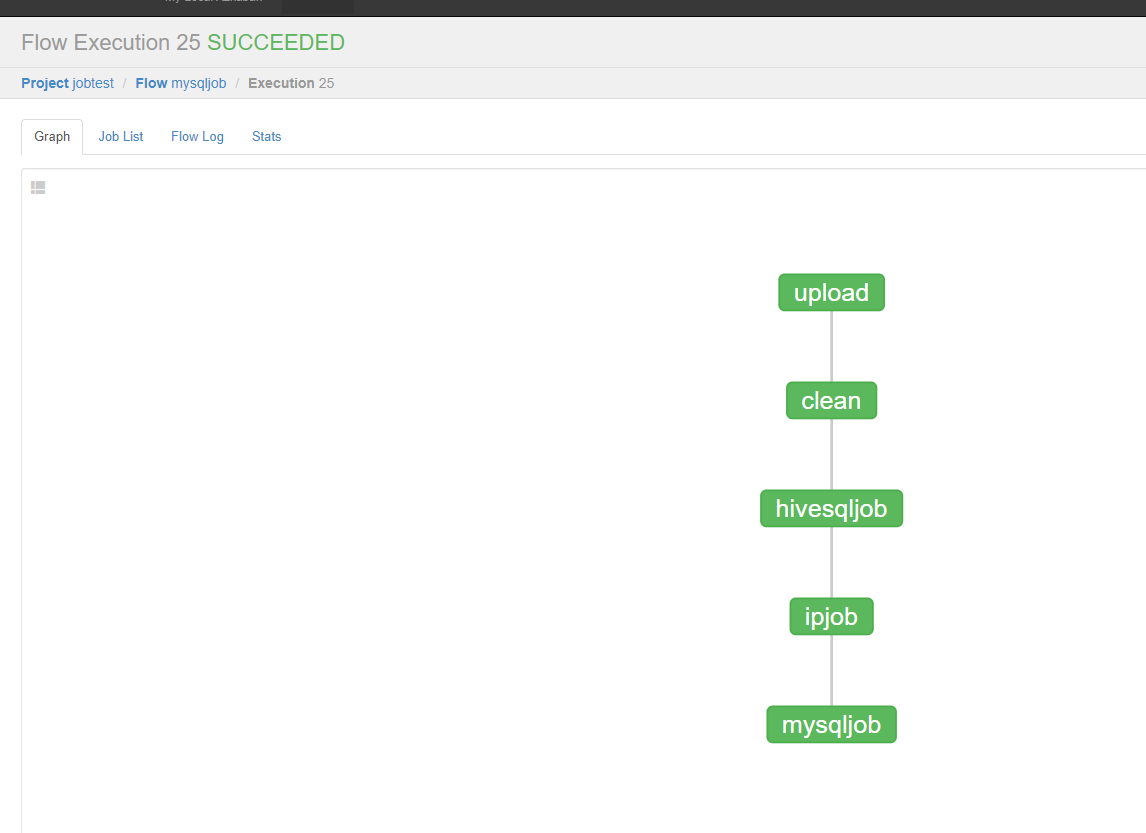

Package everything as job.zip

Upload the log file

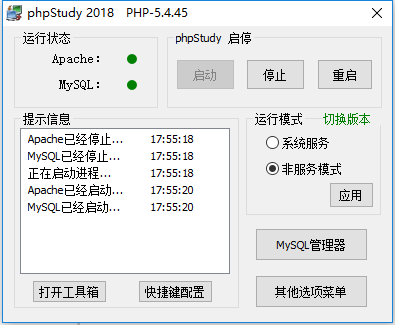

Open the locally installed phpStudy

Open the page http://www.echart.com/ in the browser