NLP FROM SCRATCH: CLASSIFYING NAMES WITH A CHARACTER-LEVEL RNN

The original comes from the official PyTorch tutorials.

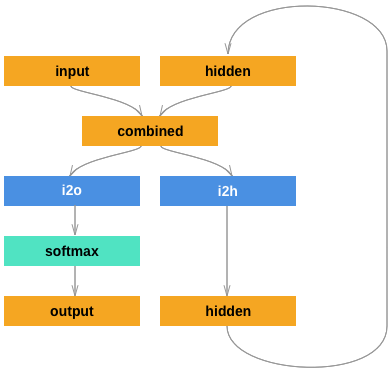

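The article implements a basic character-level RNN to classify words. It deliberately avoids PyTorch's ready-made RNN modules in order to show how an RNN actually works.

The model reads a word as a sequence of characters. At each step it outputs a prediction and a hidden state, and the hidden state is fed into the next step. The prediction from the final step is used to classify the word.

The data consists of a few thousand surnames from 18 languages of origin. Given a surname, the model should predict which language it comes from.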

$ python predict.py Hinton

(-0.47) Scottish

(-1.52) English

(-3.57) Irish

$ python predict.py Schmidhuber

(-0.19) German

(-2.48) Czech

(-2.68) Dutch

Recommended reading

PyTorch-related:

- https://pytorch.org/ For installation instructions

- Deep Learning with PyTorch: A 60 Minute Blitz to get started with PyTorch in general

- Learning PyTorch with Examples for a wide and deep overview

- PyTorch for Former Torch Users if you are a former Lua Torch user

RNN-related:

- The Unreasonable Effectiveness of Recurrent Neural Networks shows a bunch of real life examples

- Understanding LSTM Networks is about LSTMs specifically but also informative about RNNs in general

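Preparing the data

The data/names directory contains 18 files named [Language].txt. Each file holds many names, one per line. Most are already romanized, but they still need to be normalized from Unicode to plain ASCII.

The end result is a dictionary that maps each language to the list of its names: {Language: [names...]}.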
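
To make the normalization step concrete, here is a minimal standalone sketch of how NFD decomposition separates a base letter from its combining accent, and what the resulting dictionary looks like. The in-memory `raw_files` contents and the names `to_ascii` and `example_lines` are made up for illustration; they are not part of the tutorial's data or code.

```python
import string
import unicodedata

ALLOWED = string.ascii_letters + " .,;'"

def to_ascii(name):
    # NFD splits an accented letter into a base letter plus combining marks.
    decomposed = unicodedata.normalize("NFD", name)
    # Drop combining marks (category "Mn") and anything outside ALLOWED.
    return "".join(
        ch for ch in decomposed
        if unicodedata.category(ch) != "Mn" and ch in ALLOWED
    )

# "Ś" decomposes into "S" followed by a combining acute accent.
categories = [unicodedata.category(ch) for ch in unicodedata.normalize("NFD", "Ś")]
ascii_name = to_ascii("Ślusàrski")

# Hypothetical in-memory stand-ins for two data/names/[Language].txt files.
raw_files = {
    "Polish": "Ślusàrski\nNowak\n",
    "French": "Béringer\nDubois\n",
}
example_lines = {
    language: [to_ascii(line) for line in text.strip().split("\n")]
    for language, text in raw_files.items()
}
print(categories, ascii_name)
print(example_lines)
```

The dictionary has the same {Language: [names...]} shape that the rest of the article relies on.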

from __future__ import unicode_literals, print_function, division
from io import open
import glob
import os

def findFiles(path): return glob.glob(path)

print(findFiles('data/names/*.txt'))

import unicodedata
import string

all_letters = string.ascii_letters + " .,;'"
n_letters = len(all_letters)

# Turn a Unicode string to plain ASCII, thanks to https://stackoverflow.com/a/518232/2809427
def unicodeToAscii(s):
    return ''.join(
        c for c in unicodedata.normalize('NFD', s)
        if unicodedata.category(c) != 'Mn'
        and c in all_letters
    )

print(unicodeToAscii('Ślusàrski'))

# Build the category_lines dictionary, a list of names per language
category_lines = {}
all_categories = []

# Read a file and split into lines
def readLines(filename):
    lines = open(filename, encoding='utf-8').read().strip().split('\n')
    return [unicodeToAscii(line) for line in lines]

for filename in findFiles('data/names/*.txt'):
    category = os.path.splitext(os.path.basename(filename))[0]
    all_categories.append(category)
    lines = readLines(filename)
    category_lines[category] = lines

n_categories = len(all_categories)

Output:

['data/names/French.txt', 'data/names/Czech.txt', 'data/names/Dutch.txt', 'data/names/Polish.txt', 'data/names/Scottish.txt', 'data/names/Chinese.txt', 'data/names/English.txt', 'data/names/Italian.txt', 'data/names/Portuguese.txt', 'data/names/Japanese.txt', 'data/names/German.txt', 'data/names/Russian.txt', 'data/names/Korean.txt', 'data/names/Arabic.txt', 'data/names/Greek.txt', 'data/names/Vietnamese.txt', 'data/names/Spanish.txt', 'data/names/Irish.txt']

Slusarski

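We now have category_lines, a dictionary that maps each language name to the list of names read from that language's file. We also keep all_categories (the list of languages) and n_categories (their count) for later use.

Turning names into tensors

Now that all the names are organized, we need to turn them into tensors to make any use of them.

We use one-hot encoding directly: a single letter is a 1 x n_letters tensor, and a whole word is a line_length x 1 x n_letters tensor.

The extra dimension of size 1 is there because PyTorch assumes everything comes in batches; here we simply use a batch size of 1.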
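
The index arithmetic behind the one-hot encoding can be checked without any tensors. The sketch below uses plain nested lists in place of torch tensors; the helper names `one_hot` and `line_to_nested` are illustrative only.

```python
import string

all_letters = string.ascii_letters + " .,;'"
n_letters = len(all_letters)  # 52 ASCII letters + 5 punctuation characters

def one_hot(letter):
    # A row of zeros with a single 1.0 at the letter's position in all_letters.
    # Note: str.find returns -1 for unknown characters, which would silently
    # mark the last slot, so names are assumed to be ASCII-normalized already.
    row = [0.0] * n_letters
    row[all_letters.find(letter)] = 1.0
    return row

def line_to_nested(line):
    # Nested lists with shape line_length x 1 x n_letters (batch size 1).
    return [[one_hot(letter)] for letter in line]

encoded = line_to_nested("Jones")
shape = (len(encoded), len(encoded[0]), len(encoded[0][0]))
j_index = all_letters.find("J")  # 26 lowercase letters come first, then A..J
print(n_letters, j_index, shape)
```

The position of "J" and the resulting shape agree with the tensor output printed by the torch version.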

import torch

# Find letter index from all_letters, e.g. "a" = 0
def letterToIndex(letter):
    return all_letters.find(letter)

# Just for demonstration, turn a letter into a <1 x n_letters> Tensor
def letterToTensor(letter):
    tensor = torch.zeros(1, n_letters)
    tensor[0][letterToIndex(letter)] = 1
    return tensor

# Turn a line into a <line_length x 1 x n_letters>,
# or an array of one-hot letter vectors
def lineToTensor(line):
    tensor = torch.zeros(len(line), 1, n_letters)
    for li, letter in enumerate(line):
        tensor[li][0][letterToIndex(letter)] = 1
    return tensor

print(letterToTensor('J'))

print(lineToTensor('Jones').size())

Output:

tensor([[0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0.,

0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 1.,

0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0.,

0., 0., 0.]])

torch.Size([5, 1, 57])

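Creating the network

Before autograd, creating a recurrent neural network in Torch involved cloning the parameters of a layer over several timesteps. The layers held the hidden state and gradients, which are now handled entirely by the computation graph itself. This means you can implement an RNN in a very "pure" way, as regular feed-forward layers.

This RNN module (mostly copied from the PyTorch for Torch users tutorial) is just two linear layers that operate on the input and the hidden state, with a LogSoftmax layer after the output.

Note: in my own experiments I modified the model somewhat and obtained slightly better training results. The model below is the official one; my model is at the end of the article.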

import torch.nn as nn

class RNN(nn.Module):
    def __init__(self, input_size, hidden_size, output_size):
        super(RNN, self).__init__()

        self.hidden_size = hidden_size

        self.i2h = nn.Linear(input_size + hidden_size, hidden_size)
        self.i2o = nn.Linear(input_size + hidden_size, output_size)
        self.softmax = nn.LogSoftmax(dim=1)

    def forward(self, input, hidden):
        combined = torch.cat((input, hidden), 1)
        hidden = self.i2h(combined)
        output = self.i2o(combined)
        output = self.softmax(output)
        return output, hidden

    def initHidden(self):
        return torch.zeros(1, self.hidden_size)

n_hidden = 128
rnn = RNN(n_letters, n_hidden, n_categories)

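To run a step of this network we pass an input (in this case the tensor for the current letter) and the previous hidden state (initialized to zeros at first). We get back the output (the log-probability of each language, since the last layer is LogSoftmax) and the next hidden state (which we keep for the next step).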

input = letterToTensor('A')
hidden = torch.zeros(1, n_hidden)

output, next_hidden = rnn(input, hidden)
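
The data flow of a single step can also be traced without any framework. The following is a toy sketch, not the tutorial's implementation: it uses plain lists, tiny made-up sizes, and random weights, and the names `linear`, `log_softmax`, `rnn_step`, and `params` are illustrative. It mirrors the structure of the module above: concatenate input and hidden state, then apply one linear map for the next hidden state and another, followed by log-softmax, for the output.

```python
import math
import random

def linear(weights, bias, vector):
    # One dense layer: weights is a list of rows, each as long as vector.
    return [sum(w * x for w, x in zip(row, vector)) + b
            for row, b in zip(weights, bias)]

def log_softmax(scores):
    # Numerically stable log-softmax over a flat list of scores.
    peak = max(scores)
    log_total = peak + math.log(sum(math.exp(s - peak) for s in scores))
    return [s - log_total for s in scores]

def rnn_step(params, letter_vector, hidden):
    combined = letter_vector + hidden  # concatenation, like torch.cat on dim 1
    new_hidden = linear(params["i2h_w"], params["i2h_b"], combined)
    output = log_softmax(linear(params["i2o_w"], params["i2o_b"], combined))
    return output, new_hidden

def random_matrix(rng, rows, cols):
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

rng = random.Random(0)
n_in, n_hid, n_out = 4, 3, 2  # toy sizes, chosen only for readability
params = {
    "i2h_w": random_matrix(rng, n_hid, n_in + n_hid), "i2h_b": [0.0] * n_hid,
    "i2o_w": random_matrix(rng, n_out, n_in + n_hid), "i2o_b": [0.0] * n_out,
}

hidden = [0.0] * n_hid  # zeroed initial hidden state
for letter_index in [0, 2, 1]:  # a three-letter "word" over a 4-letter alphabet
    letter_vector = [0.0] * n_in
    letter_vector[letter_index] = 1.0
    output, hidden = rnn_step(params, letter_vector, hidden)

print(output, hidden)
```

As in the module above, the hidden update here is purely linear, with no squashing nonlinearity such as tanh between steps.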

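For efficiency we do not want to create a new tensor at every step, so we use lineToTensor instead of letterToTensor and take slices. This could be optimized further by precomputing batches of tensors.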

input = lineToTensor('Albert')
hidden = torch.zeros(1, n_hidden)

output, next_hidden = rnn(input[0], hidden)
print(output)

Output:

tensor([[-2.9504, -2.8402, -2.9195, -2.9136, -2.9799, -2.8207, -2.8258, -2.8399,

-2.9098, -2.8815, -2.8313, -2.8628, -3.0440, -2.8689, -2.9391, -2.8381,

-2.9202, -2.8717]], grad_fn=<LogSoftmaxBackward>)

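Training

Preparing for training

Before going into training we should write a few helper functions. The first interprets the output of the network, which we know to be a log-likelihood for each category. We can use Tensor.topk to get the index of the greatest value: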

def categoryFromOutput(output):
    top_n, top_i = output.topk(1)
    category_i = top_i[0].item()
    return all_categories[category_i], category_i

print(categoryFromOutput(output))

Output:

('Chinese', 5)
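
As a quick sanity check, the values of the output tensor printed earlier can be copied by hand into a plain list. The sketch below confirms three things: they behave like log-probabilities (their exponentials sum to roughly 1), the largest one sits at index 5, and an untrained network is close to a uniform guess over 18 classes, whose log-probability is ln(1/18).

```python
import math

# Values copied from the printed output tensor (rounded to 4 decimals there).
log_probs = [
    -2.9504, -2.8402, -2.9195, -2.9136, -2.9799, -2.8207, -2.8258, -2.8399,
    -2.9098, -2.8815, -2.8313, -2.8628, -3.0440, -2.8689, -2.9391, -2.8381,
    -2.9202, -2.8717,
]

total_probability = sum(math.exp(v) for v in log_probs)
best_index = max(range(len(log_probs)), key=lambda i: log_probs[i])
uniform_log_prob = math.log(1 / len(log_probs))

print(round(total_probability, 3), best_index, round(uniform_log_prob, 4))
```

Index 5 corresponds to 'Chinese' in the file order listed earlier, so the guess at this point reflects the random initialization rather than anything learned.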

We will also want a quick way to get a random training example (a name and its language):

import random

def randomChoice(l):
    return l[random.randint(0, len(l) - 1)]

def randomTrainingExample():
    category = randomChoice(all_categories)
    line = randomChoice(category_lines[category])
    category_tensor = torch.tensor([all_categories.index(category)], dtype=torch.long)
    line_tensor = lineToTensor(line)
    return category, line, category_tensor, line_tensor

for i in range(10):
    category, line, category_tensor, line_tensor = randomTrainingExample()
    print('category =', category, '/ line =', line)

Output:

category = Italian / line = Pastore

category = Arabic / line = Toma

category = Irish / line = Tracey

category = Portuguese / line = Lobo

category = Arabic / line = Sleiman

category = Polish / line = Sokolsky

category = English / line = Farr

category = Polish / line = Winogrodzki

category = Russian / line = Adoratsky

category = Dutch / line = Robert

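Training the network

Now all it takes to train this network is to show it a large number of examples, have it make guesses, and tell it when it is wrong.

For the loss function, nn.NLLLoss is appropriate, since the last layer of the RNN is nn.LogSoftmax.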

criterion = nn.NLLLoss()
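
Why the two fit together can be shown with a small worked example. For a single sample, the negative log-likelihood loss is simply the negated log-probability that the model assigned to the correct class. The sketch below is framework-free; the scores are made up, and `log_softmax` and `nll_loss` are illustrative helpers rather than the PyTorch functions themselves.

```python
import math

def log_softmax(scores):
    # Numerically stable log-softmax over a flat list of scores.
    peak = max(scores)
    log_total = peak + math.log(sum(math.exp(s - peak) for s in scores))
    return [s - log_total for s in scores]

def nll_loss(log_probs, target_index):
    # Negative log-likelihood of the target class for one sample.
    return -log_probs[target_index]

scores = [2.0, 0.5, -1.0]  # hypothetical raw scores for three classes
log_probs = log_softmax(scores)

loss_when_right = nll_loss(log_probs, 0)  # target is the top-scoring class
loss_when_wrong = nll_loss(log_probs, 2)  # target is the lowest-scoring class
print(loss_when_right, loss_when_wrong)
```

The loss is small when the target class already has high probability and grows as that probability shrinks, which is the signal the training loop needs.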

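Each training iteration will:

- Create the input and target tensors

- Create a zeroed initial hidden state

- Read each letter in

- Keep the hidden state for the next letter

- Compare the final output to the target

- Back-propagate

- Return the output and the loss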

learning_rate = 0.005 # If you set this too high, it might explode. If too low, it might not learn

def train(category_tensor, line_tensor):
    hidden = rnn.initHidden()

    rnn.zero_grad()

    for i in range(line_tensor.size()[0]):
        output, hidden = rnn(line_tensor[i], hidden)

    loss = criterion(output, category_tensor)
    loss.backward()

    # Add parameters' gradients to their values, multiplied by learning rate
    # (the older positional form add_(-learning_rate, grad) is deprecated)
    for p in rnn.parameters():
        p.data.add_(p.grad.data, alpha=-learning_rate)

    return output, loss.item()

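Now we just have to run that with a large number of examples. Since the train function returns both the output and the loss, we can print its guesses and keep track of the loss for plotting. Since there are thousands of examples, we print only every print_every examples and record the average loss over every plot_every examples.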

import time
import math

n_iters = 100000
print_every = 5000
plot_every = 1000

# Keep track of losses for plotting
current_loss = 0
all_losses = []

def timeSince(since):
    now = time.time()
    s = now - since
    m = math.floor(s / 60)
    s -= m * 60
    return '%dm %ds' % (m, s)

start = time.time()

for iter in range(1, n_iters + 1):
    category, line, category_tensor, line_tensor = randomTrainingExample()
    output, loss = train(category_tensor, line_tensor)
    current_loss += loss

    # Print iter number, loss, name and guess
    if iter % print_every == 0:
        guess, guess_i = categoryFromOutput(output)
        correct = '✓' if guess == category else '✗ (%s)' % category
        print('%d %d%% (%s) %.4f %s / %s %s' % (iter, iter / n_iters * 100, timeSince(start), loss, line, guess, correct))

    # Add current loss avg to list of losses
    if iter % plot_every == 0:
        all_losses.append(current_loss / plot_every)
        current_loss = 0

Output:

5000 5% (0m 12s) 3.1806 Olguin / Irish ✗ (Spanish)

10000 10% (0m 21s) 2.1254 Dubnov / Russian ✓

15000 15% (0m 29s) 3.1001 Quirke / Polish ✗ (Irish)

20000 20% (0m 38s) 0.9191 Jiang / Chinese ✓

25000 25% (0m 46s) 2.3233 Marti / Italian ✗ (Spanish)

30000 30% (0m 54s) nan Amari / Russian ✗ (Arabic)

35000 35% (1m 3s) nan Gudojnik / Russian ✓

40000 40% (1m 11s) nan Finn / Russian ✗ (Irish)

45000 45% (1m 20s) nan Napoliello / Russian ✗ (Italian)

50000 50% (1m 28s) nan Clark / Russian ✗ (Irish)

55000 55% (1m 37s) nan Roijakker / Russian ✗ (Dutch)

60000 60% (1m 46s) nan Kalb / Russian ✗ (Arabic)

65000 65% (1m 54s) nan Hanania / Russian ✗ (Arabic)

70000 70% (2m 3s) nan Theofilopoulos / Russian ✗ (Greek)

75000 75% (2m 11s) nan Pakulski / Russian ✗ (Polish)

80000 80% (2m 20s) nan Thistlethwaite / Russian ✗ (English)

85000 85% (2m 29s) nan Shadid / Russian ✗ (Arabic)

90000 90% (2m 37s) nan Finnegan / Russian ✗ (Irish)

95000 95% (2m 46s) nan Brannon / Russian ✗ (Irish)

100000 100% (2m 54s) nan Gomulka / Russian ✗ (Polish)

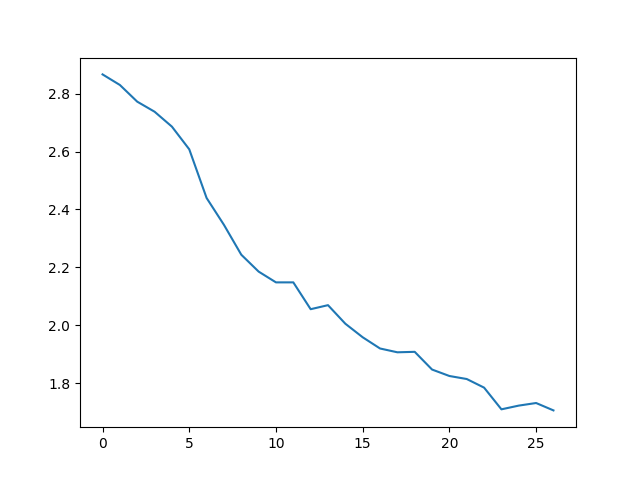
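
In the log above the loss becomes nan from iteration 30000 onward, which is what a diverging run looks like. One common mitigation, which is not part of the original tutorial and is shown here only as an assumption-laden sketch, is to clip the global gradient norm before the update step. The framework-free example below uses made-up parameters and gradients; `clip_by_global_norm` and `sgd_step` are illustrative helpers. In PyTorch the built-in counterpart is torch.nn.utils.clip_grad_norm_, called between loss.backward() and the parameter update.

```python
import math

def clip_by_global_norm(gradients, max_norm):
    # Compute the L2 norm over all gradient entries together, then scale
    # every gradient by the same factor so the global norm is at most max_norm.
    total_norm = math.sqrt(sum(g * g for grad in gradients for g in grad))
    scale = min(1.0, max_norm / (total_norm + 1e-6))
    clipped = [[g * scale for g in grad] for grad in gradients]
    return clipped, total_norm

def sgd_step(parameters, gradients, learning_rate):
    # Plain gradient descent: p <- p - learning_rate * g
    return [[p - learning_rate * g for p, g in zip(param, grad)]
            for param, grad in zip(parameters, gradients)]

parameters = [[0.5, -0.5], [1.0]]   # hypothetical parameter groups
gradients = [[30.0, -40.0], [0.0]]  # hypothetical exploding gradient, norm 50

clipped, norm_before = clip_by_global_norm(gradients, max_norm=5.0)
norm_after = math.sqrt(sum(g * g for grad in clipped for g in grad))
updated = sgd_step(parameters, clipped, learning_rate=0.005)
print(norm_before, norm_after, updated)
```

Whether clipping alone is enough for this model is not something the log can tell us; lowering the learning rate is the other knob the code comment already points to.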

Plotting the results

Plotting the historical loss from all_losses shows how the network is learning:

import matplotlib.pyplot as plt
import matplotlib.ticker as ticker

plt.figure()
plt.plot(all_losses)

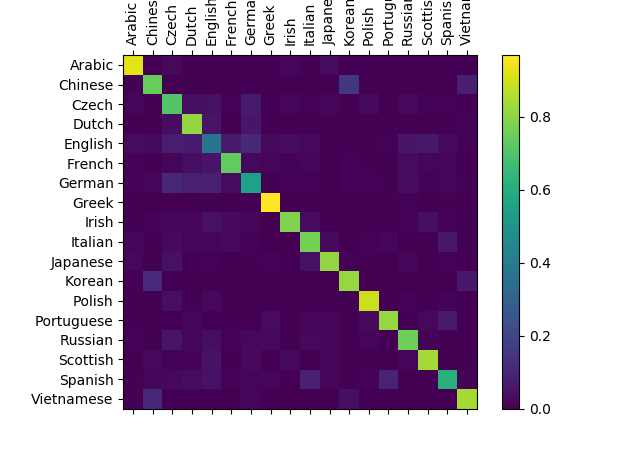

Evaluating the results

# Keep track of correct guesses in a confusion matrix
confusion = torch.zeros(n_categories, n_categories)
n_confusion = 10000

# Just return an output given a line
def evaluate(line_tensor):
    hidden = rnn.initHidden()

    for i in range(line_tensor.size()[0]):
        output, hidden = rnn(line_tensor[i], hidden)

    return output

# Go through a bunch of examples and record which are correctly guessed
for i in range(n_confusion):
    category, line, category_tensor, line_tensor = randomTrainingExample()
    output = evaluate(line_tensor)
    guess, guess_i = categoryFromOutput(output)
    category_i = all_categories.index(category)
    confusion[category_i][guess_i] += 1

# Normalize by dividing every row by its sum
for i in range(n_categories):
    confusion[i] = confusion[i] / confusion[i].sum()

# Set up plot
fig = plt.figure()
ax = fig.add_subplot(111)
cax = ax.matshow(confusion.numpy())
fig.colorbar(cax)

# Set up axes
ax.set_xticklabels([''] + all_categories, rotation=90)
ax.set_yticklabels([''] + all_categories)

# Force label at every tick
ax.xaxis.set_major_locator(ticker.MultipleLocator(1))
ax.yaxis.set_major_locator(ticker.MultipleLocator(1))

# sphinx_gallery_thumbnail_number = 2
plt.show()

This way of presenting accuracy is worth learning: it is very intuitive. I evaluated my own model the same way.
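
To see what the normalization in the evaluation code does, here is a small framework-free sketch with made-up counts. Rows are the true categories and columns are the guesses, so after row normalization the diagonal holds the per-category recall. The helper names and the `counts` matrix are hypothetical. Unlike the tensor version, this sketch guards against a category that was never sampled, where dividing by the row sum would otherwise produce nan.

```python
def normalize_rows(counts):
    # Divide each row by its sum; leave all-zero rows as zeros.
    normalized = []
    for row in counts:
        total = sum(row)
        normalized.append([c / total if total else 0.0 for c in row])
    return normalized

def overall_accuracy(counts):
    # Fraction of all samples that landed on the diagonal.
    correct = sum(counts[i][i] for i in range(len(counts)))
    total = sum(sum(row) for row in counts)
    return correct / total

# Hypothetical raw counts for three categories; the third was never sampled.
counts = [
    [8, 2, 0],
    [1, 6, 3],
    [0, 0, 0],
]

normalized = normalize_rows(counts)
accuracy = overall_accuracy(counts)
print(normalized, accuracy)
```

Bright off-diagonal cells in the plotted matrix correspond to large off-diagonal entries here, that is, pairs of languages the model tends to confuse.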

Trying nn.LSTM or nn.GRU, together with somewhat more complex layers, can give better results.
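
As a starting point for that experiment, the following is a minimal sketch of a GRU-based classifier that accepts the same line_length x 1 x n_letters input. It is an assumption about how one might wire it up, not the modified model mentioned earlier: the class name `GRUClassifier` and the layer layout are hypothetical, the sizes are hard-coded to match the article (57 letters, 128 hidden units, 18 categories), and the network is untrained.

```python
import torch
import torch.nn as nn

class GRUClassifier(nn.Module):
    def __init__(self, input_size, hidden_size, output_size):
        super().__init__()
        # nn.GRU expects input of shape (seq_len, batch, input_size) by default.
        self.gru = nn.GRU(input_size, hidden_size)
        self.out = nn.Linear(hidden_size, output_size)
        self.log_softmax = nn.LogSoftmax(dim=1)

    def forward(self, line_tensor):
        # h_n has shape (num_layers, batch, hidden_size); the hidden state
        # starts at zeros when no initial state is passed.
        _, h_n = self.gru(line_tensor)
        return self.log_softmax(self.out(h_n[-1]))

n_letters, n_hidden, n_categories = 57, 128, 18
model = GRUClassifier(n_letters, n_hidden, n_categories)

# One-hot encode "Jones" by hand: J=35, o=14, n=13, e=4, s=18 in all_letters.
line_tensor = torch.zeros(5, 1, n_letters)
for position, letter_index in enumerate([35, 14, 13, 4, 18]):
    line_tensor[position, 0, letter_index] = 1.0

log_probs = model(line_tensor)
print(log_probs.shape)
```

Because the module consumes the whole sequence in one call, the per-letter Python loop from the train and evaluate functions is no longer needed; the output still pairs with nn.NLLLoss as before.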