1. Mark the quantities to display in the code

For what each TensorBoard function does and how to use it, see: https://www.cnblogs.com/lyc-seu/p/8647792.html

```python
# Written for the TensorFlow 1.x graph API (tf.placeholder, tf.Session)
import os

import numpy as np
import tensorflow as tf

# Set the current working directory (logs/ below is created relative to it)
os.chdir(r'H:\Notepad\Tensorflow')


def add_layer(inputs, in_size, out_size, n_layer, activation_function=None):
    layer_name = 'layer%s' % n_layer
    with tf.name_scope(layer_name):
        Weights = tf.Variable(tf.random_normal([in_size, out_size]), name='W')
        biases = tf.Variable(tf.zeros([1, out_size]) + 0.1, name='b')
        Wx_plus_b = tf.add(tf.matmul(inputs, Weights), biases)
        if activation_function is None:
            outputs = Wx_plus_b
        else:
            outputs = activation_function(Wx_plus_b)
        # histogram shows how a variable's distribution changes during training
        tf.summary.histogram(layer_name + '/weights', Weights)
        tf.summary.histogram(layer_name + '/biases', biases)
        tf.summary.histogram(layer_name + '/outputs', outputs)
    return outputs


# Data: y = 5x^2 - 0.5 plus Gaussian noise, 300 samples of shape (300, 1)
x_data = np.linspace(-1, 1, 300)[:, np.newaxis]
noise = np.random.normal(0, 0.05, x_data.shape)
y_data = 5 * np.square(x_data) - 0.5 + noise

# Inputs
with tf.name_scope('inputs'):
    xs = tf.placeholder(tf.float32, [None, 1], name='x_input')
    ys = tf.placeholder(tf.float32, [None, 1], name='y_input')

# Three-layer network
l1 = add_layer(xs, 1, 10, 1, activation_function=tf.nn.relu)
l2 = add_layer(l1, 10, 10, 2, activation_function=tf.nn.relu)
prediction = add_layer(l2, 10, 1, 3, activation_function=None)

# Loss and training
with tf.name_scope('loss'):
    loss = tf.reduce_mean(tf.reduce_sum(tf.square(ys - prediction), axis=1))
    # scalar records a single value (here the loss) at each logged step
    tf.summary.scalar('loss-haha', loss)
with tf.name_scope('train'):
    train_step = tf.train.GradientDescentOptimizer(0.1).minimize(loss)

# Run
init = tf.global_variables_initializer()
# merge_all combines every summary op into one op, so a single sess.run
# produces all summaries; the FileWriter below is what writes them to disk
merged = tf.summary.merge_all()

with tf.Session() as sess:
    sess.run(init)
    # FileWriter saves the graph to the given directory; its add_summary()
    # method appends the training-time summaries to the same event file
    writer = tf.summary.FileWriter("logs/", sess.graph)  # writes the Graph
    for i in range(10000):
        sess.run(train_step, feed_dict={xs: x_data, ys: y_data})
        if i % 50 == 0:
            result = sess.run(merged, feed_dict={xs: x_data, ys: y_data})
            writer.add_summary(result, i)
    writer.close()  # flush any pending events to disk
```
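The loss in the code first sums the squared errors along axis 1 (the output dimension) and then averages over the batch. As a minimal sketch of that arithmetic, here it is in NumPy. The helper name `loss_like_tf` and the toy values are made up for illustration. Because the network has a single output, the sum over axis 1 is trivial and the result is the ordinary mean squared error.

```python
import numpy as np


def loss_like_tf(ys, prediction):
    """NumPy mirror of tf.reduce_mean(tf.reduce_sum(tf.square(ys - prediction), axis=1))."""
    per_sample = np.sum(np.square(ys - prediction), axis=1)  # one value per row (sample)
    return np.mean(per_sample)  # average over the batch


ys = np.array([[1.0], [2.0]])          # toy targets, shape (2, 1)
prediction = np.array([[0.0], [0.0]])  # toy predictions, shape (2, 1)
print(loss_like_tf(ys, prediction))    # (1 + 4) / 2 = 2.5
```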

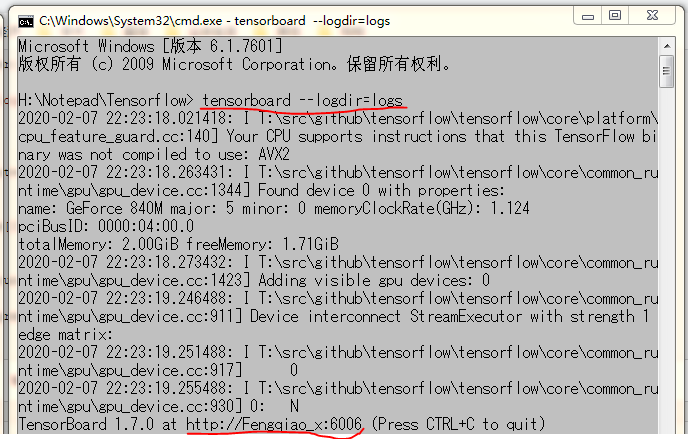

2. Open cmd in the directory that contains the logs folder and run `tensorboard --logdir=logs`.

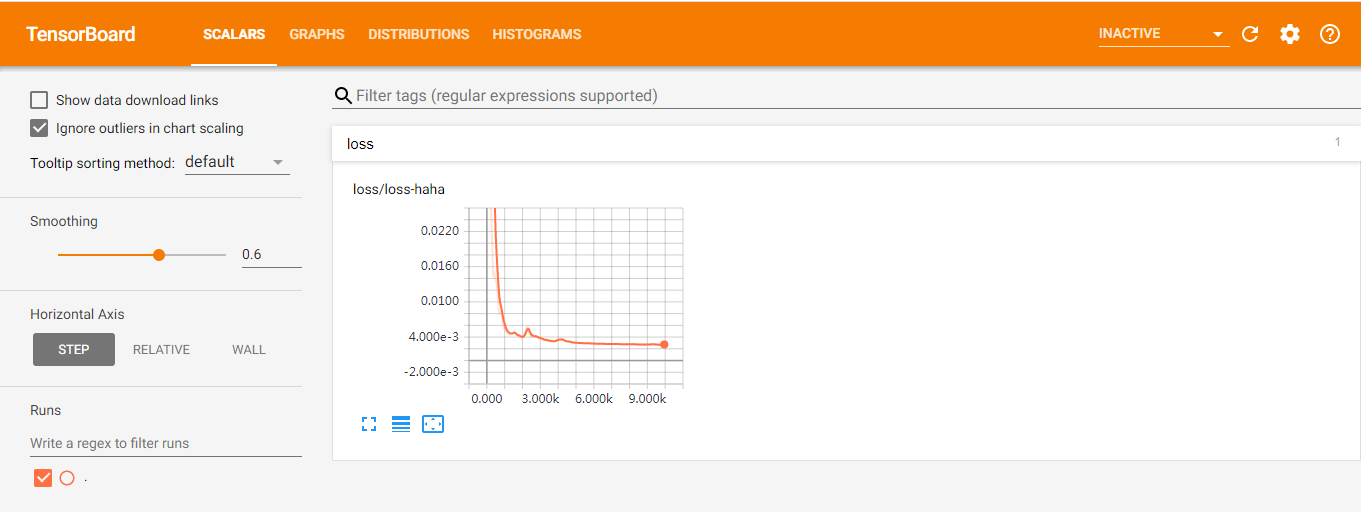
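If TensorBoard starts but shows no data, a common cause is that `--logdir` does not point to the folder the FileWriter wrote to. `tf.summary.FileWriter` names its files `events.out.tfevents.*`, so a small standard-library check can confirm that such files exist before you launch TensorBoard. The helper `find_event_files` below is hypothetical and not part of TensorBoard. The relative path `logs` assumes you run it from the same directory as the command above.

```python
import glob
import os


def find_event_files(logdir):
    """Return event files under logdir (searched recursively), sorted by path."""
    pattern = os.path.join(logdir, "**", "events.out.tfevents.*")
    return sorted(glob.glob(pattern, recursive=True))


if __name__ == "__main__":
    files = find_event_files("logs")
    if files:
        print("Found %d event file(s); run: tensorboard --logdir=logs" % len(files))
    else:
        print("No event files under logs/; check the FileWriter path and working directory")
```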

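3. In Google Chrome, open the URL printed in cmd: http://Fengqiao_x:6006 (the host name in this URL is the author's machine; on your own machine, http://localhost:6006 usually works as well, since 6006 is TensorBoard's default port).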