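Introduction

It has been a while since my last post. My company is building a distributed call-chain tracing system that uses HBase, so I am recording the cluster setup process here.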

1. Cluster layout:

| IP | Hostname | Role |
| --- | --- | --- |
| 192.168.6.130 | node1.jacky.com | master |
| 192.168.6.131 | node2.jacky.com | slave |
| 192.168.6.132 | node3.jacky.com | slave |

2. Installation files:

[root@node1 software]# ll

total 464336

-rw-r--r--. 1 root root 214092195 Sep 12 17:54 hadoop-2.7.3.tar.gz

-rw-r--r--. 1 root root 104584366 Sep 14 14:08 hbase-1.2.5-bin.tar.gz

-rwxr-xr-x. 1 root root 140393310 Sep 16 13:51 jdk-8u11-linux-x64.rpm

-rw-r--r--. 1 root root 16402010 Oct 25 2016 zookeeper-3.4.5.tar.gz

Note: HBase depends on a Hadoop environment (it stores its data on HDFS), a ZooKeeper ensemble, and a JDK, so all three must be installed before HBase.

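3. Preparing the CentOS 7.0 environment for Hadoop

3.1. Edit the hosts file on all three machines to map IPs to hostnames

[root@node1 jacky]# vim /etc/hosts

Append the following to the end of the file:

192.168.6.130 node1.jacky.com

192.168.6.131 node2.jacky.com

192.168.6.132 node3.jacky.com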
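Because the same three entries go onto every machine, it can help to script the edit so that running it twice does not duplicate lines. The following is a minimal sketch, not part of the original setup: `add_host_entry` is a hypothetical helper name, and the check only looks for the hostname as a whole word anywhere in the file.

```shell
#!/bin/sh
# add_host_entry FILE IP NAME
# Append "IP NAME" to a hosts-style file unless NAME is already present.
# Hypothetical helper for illustration; not part of any system tool.
add_host_entry() {
    file=$1 ip=$2 name=$3
    # -w matches NAME as a whole word, -F treats it as a literal string
    if grep -qwF -- "$name" "$file" 2>/dev/null; then
        return 0
    fi
    printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Usage (as root, on each of the three machines):
#   add_host_entry /etc/hosts 192.168.6.130 node1.jacky.com
#   add_host_entry /etc/hosts 192.168.6.131 node2.jacky.com
#   add_host_entry /etc/hosts 192.168.6.132 node3.jacky.com
```

The helper never rewrites an existing mapping, so a wrong IP already in the file still has to be corrected by hand.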

3.2. Set the hostname on all three machines

On 192.168.6.130, edit /etc/hostname so that it reads:

[root@node1 jacky]# cat /etc/hostname

node1.jacky.com

Likewise, set the hostnames of the other two machines to node2.jacky.com and node3.jacky.com.

3.3. Configure passwordless SSH login from 192.168.6.130 to 192.168.6.131 and 192.168.6.132

Steps:

- Generate a public/private key pair

- Copy the public key to a file named authorized_keys

[root@node1 ~]# ssh-keygen

Generating public/private rsa key pair.

Enter file in which to save the key (/root/.ssh/id_rsa):

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:pvR6iWfppGPSFZlAqP35/6DEtGTvaMY64otThWoBTuk root@localhost.localdomain

The key's randomart image is:

+---[RSA 2048]----+

| . o. |

|.o . . |

|+. o . . o |

| Eo o . + |

| o o..S. |

| o ..oO.o |

| . . ..=*oo |

| ..o *=@+ . |

| .oo=+@+.o.. |

+----[SHA256]-----+

[root@node1 .ssh]# cp id_rsa.pub authorized_keys

[root@node1 .ssh]# chmod 600 authorized_keys # restrict the file to its owner; with sshd's default StrictModes, a group- or world-writable authorized_keys is ignored

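Notes:

authorized_keys: holds the public keys that are allowed to log in without a password; the public keys of multiple machines are recorded in this file

id_rsa: the generated private key file

id_rsa.pub: the generated public key file

known_hosts: the list of known host public keys

[root@node1 .ssh]# ssh-copy-id -i root@node1.jacky.com # copy the key to this machine itself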

[root@node1 .ssh]# ssh-copy-id -i root@node2.jacky.com

[root@node1 .ssh]# ssh-copy-id -i root@node3.jacky.com
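The three ssh-copy-id calls can be wrapped in a loop, and the result can be checked without risking a hidden password prompt. The sketch below is an assumption-laden illustration: `run`, `copy_keys`, `verify_keys`, and the `DRY_RUN` switch are hypothetical names, while `BatchMode` and `ConnectTimeout` are standard OpenSSH client options.

```shell
#!/bin/sh
# Hosts of this cluster; adjust to your own environment.
NODES="node1.jacky.com node2.jacky.com node3.jacky.com"

# run CMD...: execute the command, or only print it when DRY_RUN=1.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Push the default public key to every node (asks for each password once).
copy_keys() {
    for host in $NODES; do
        run ssh-copy-id "root@$host" || return 1
    done
}

# BatchMode=yes makes ssh fail instead of prompting, so a zero exit
# status means key-based login actually works for that host.
verify_keys() {
    for host in $NODES; do
        run ssh -o BatchMode=yes -o ConnectTimeout=5 "root@$host" true || return 1
    done
}

# Usage on node1:
#   DRY_RUN=1; copy_keys     # preview the commands
#   DRY_RUN=0; copy_keys && verify_keys && echo "passwordless SSH OK"
```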

3.4. Configure the Hadoop environment variables

[root@node1 software]# vim /etc/profile

# hadoop

export HADOOP_HOME=/usr/local/hadoop-2.7.3

export HADOOP_MAPRED_HOME=$HADOOP_HOME

export HADOOP_COMMON_HOME=$HADOOP_HOME

export HADOOP_HDFS_HOME=$HADOOP_HOME

export YARN_HOME=$HADOOP_HOME

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

export PATH=$PATH:$HADOOP_HOME/sbin:$HADOOP_HOME/bin

export HADOOP_INSTALL=$HADOOP_HOME

Run source /etc/profile to apply the changes.

[root@node1 software]# source /etc/profile

4. Hadoop configuration

4.1. Upload the Hadoop archive

[root@node1 software]# ll

total 464336

-rw-r--r--. 1 root root 214092195 Sep 12 17:54 hadoop-2.7.3.tar.gz

-rw-r--r--. 1 root root 104584366 Sep 14 14:08 hbase-1.2.5-bin.tar.gz

-rwxr-xr-x. 1 root root 140393310 Sep 16 13:51 jdk-8u11-linux-x64.rpm

-rw-r--r--. 1 root root 16402010 Oct 25 2016 zookeeper-3.4.5.tar.gz

[root@node1 software]# pwd

/usr/software

[root@node1 software]#

Note: I uploaded hadoop-2.7.3.tar.gz to /usr/software. It then has to be extracted to /usr/local so that it matches the HADOOP_HOME of /usr/local/hadoop-2.7.3 set above.

4.2. Configure hadoop-env.sh

# The java implementation to use.

export JAVA_HOME=/usr/java/jdk1.8.0_11

4.3. Configure yarn-env.sh

export JAVA_HOME=/usr/java/jdk1.8.0_11

4.4. Edit the slaves file to list the master's worker nodes

With this file in place, running start-all.sh from the sbin directory on the master alone starts the DataNode and NodeManager on every slave.

[root@node1 hadoop]# cat slaves

node2.jacky.com

node3.jacky.com

4.5. Edit the core Hadoop configuration file, core-site.xml

<configuration>

<!-- File system used by Hadoop: the built-in HDFS -->

<property>

<name>fs.defaultFS</name>

<value>hdfs://node1.jacky.com:9000</value>

</property>

<!-- Hadoop data directory -->

<property>

<name>hadoop.tmp.dir</name>

<value>/usr/local/hadoop-2.7.3/tmp</value>

</property>

</configuration>

Note: the directory /usr/local/hadoop-2.7.3/tmp is one I created by hand.
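A misspelled property name in these XML files is silently ignored, so it is worth reading a value back after editing. Once Hadoop is on the PATH, `hdfs getconf -confKey fs.defaultFS` does this against the live configuration. The sketch below is a dependency-free alternative under stated assumptions: `get_prop` is a hypothetical helper, and it relies on the simple layout used in this post, where each name and value tag sits on its own line. It is not a general XML parser.

```shell
#!/bin/sh
# get_prop FILE NAME
# Print the <value> that follows <name>NAME</name> in a Hadoop-style
# configuration file. Prints nothing if the property is not found.
get_prop() {
    awk -v want="$2" '
        /<name>/ {
            # remember the most recent property name
            name = $0
            sub(/.*<name>/, "", name); sub(/<\/name>.*/, "", name)
        }
        /<value>/ && name == want {
            # first value after the wanted name: strip the tags and stop
            val = $0
            sub(/.*<value>/, "", val); sub(/<\/value>.*/, "", val)
            print val
            exit
        }
    ' "$1"
}

# Usage:
#   get_prop /usr/local/hadoop-2.7.3/etc/hadoop/core-site.xml fs.defaultFS
```

An empty result for a key you believe you set usually points to a typo in the name tag.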

4.6. Edit hdfs-site.xml

<configuration>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>node1.jacky.com:50090</value>

</property>

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<property>

<name>dfs.name.dir</name>

<value>/usr/local/hadoop-2.7.3/hadoop/name</value>

</property>

<property>

<name>dfs.data.dir</name>

<value>/usr/local/hadoop-2.7.3/hadoop/data</value>

</property>

<property>

<name>dfs.webhdfs.enabled</name>

<value>true</value>

</property>

</configuration>
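One thing to watch in this file is dfs.replication. HDFS places each replica of a block on a different DataNode, so a replication factor larger than the number of DataNodes cannot be met and the blocks are reported as under-replicated. With two workers in the slaves file and a factor of 3, that is the situation here; a value of 2 would match this cluster. The following sketch compares the two numbers; `count_workers` and `check_replication` are hypothetical helper names.

```shell
#!/bin/sh
# count_workers FILE: number of non-empty, non-comment lines in a slaves file.
count_workers() {
    grep -cv -e '^[[:space:]]*$' -e '^[[:space:]]*#' "$1"
}

# check_replication REPLICATION SLAVES_FILE
# Return non-zero when the replication factor exceeds the DataNode count.
check_replication() {
    workers=$(count_workers "$2")
    if [ "$1" -gt "$workers" ]; then
        echo "dfs.replication=$1 but only $workers DataNodes: blocks will be under-replicated"
        return 1
    fi
    echo "dfs.replication=$1 fits $workers DataNodes"
}

# Usage:
#   check_replication 3 /usr/local/hadoop-2.7.3/etc/hadoop/slaves
```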

4.7. Edit mapred-site.xml

Note: Hadoop 2.7.3 ships only mapred-site.xml.template, so copy it to mapred-site.xml first.

<configuration>

<!-- Run MapReduce on the YARN cluster -->

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.jobhistory.address</name>

<value>node1.jacky.com:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>node1.jacky.com:19888</value>

</property>

</configuration>

4.8. Edit yarn-site.xml

<configuration>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>

<value>org.apache.hadoop.mapred.ShuffleHandler</value>

</property>

<!-- Addresses of the YARN master (ResourceManager) -->

<property>

<name>yarn.resourcemanager.address</name>

<value>node1.jacky.com:8032</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>node1.jacky.com:8030</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>node1.jacky.com:8031</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address</name>

<value>node1.jacky.com:8033</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address</name>

<value>node1.jacky.com:8088</value>

</property>

</configuration>

4.9. Copy the configured Hadoop directory from the master to node2.jacky.com and node3.jacky.com

[root@node1 local]# scp -r hadoop-2.7.3 root@node2.jacky.com:/usr/local/

[root@node1 local]# scp -r hadoop-2.7.3 root@node3.jacky.com:/usr/local/

5. Start Hadoop

5.1. Format the NameNode

[root@node1 hadoop-2.7.3]# hdfs namenode -format
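Formatting is meant to be done once. Re-formatting a NameNode that already holds data discards the existing namespace and assigns a new cluster ID, after which the old DataNodes no longer register. A small guard can make the step safe to re-run. This is only a sketch: `is_formatted` and `format_once` are hypothetical helpers, and the check relies on the fact that a formatted NameNode directory contains current/VERSION.

```shell
#!/bin/sh
# is_formatted NAME_DIR: succeed if the NameNode directory already holds metadata.
is_formatted() {
    [ -f "$1/current/VERSION" ]
}

# format_once NAME_DIR: run the format only against a fresh directory.
format_once() {
    if is_formatted "$1"; then
        echo "already formatted: $1 (skipping)"
    else
        hdfs namenode -format
    fi
}

# Usage on the master, with the name directory from hdfs-site.xml:
#   format_once /usr/local/hadoop-2.7.3/hadoop/name
```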

5.2. Start the Hadoop daemons

[root@node1 sbin]# start-all.sh

5.3. Use jps to check that Hadoop is running on all three machines

192.168.6.130

[root@node1 sbin]# jps

7969 QuorumPeerMain

25113 NameNode

25483 ResourceManager

73116 Jps

25311 SecondaryNameNode

192.168.6.131

[root@node2 jacky]# jps

43986 Jps

60437 DataNode

12855 QuorumPeerMain

60621 NodeManager

192.168.6.132

[root@node2 jacky]# jps

43986 Jps

60437 DataNode

12855 QuorumPeerMain

60621 NodeManager

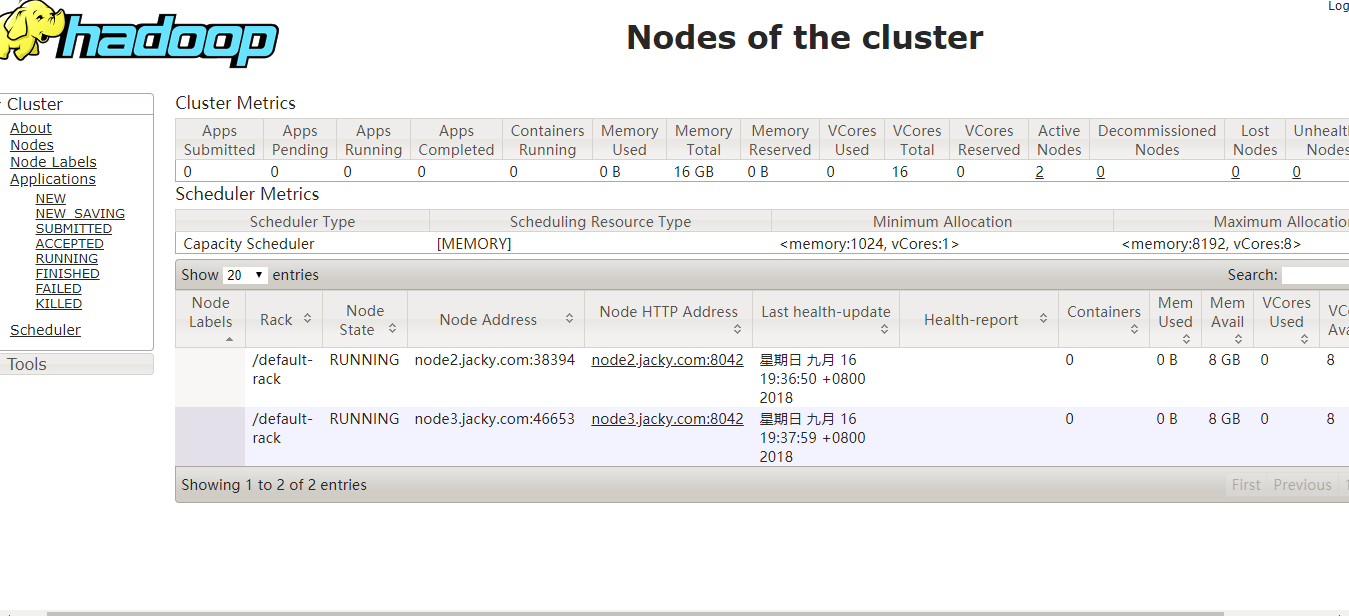
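Reading jps output by eye on three machines is easy to get wrong, so the comparison can be scripted. The sketch below reads jps output on standard input and prints the expected daemons that are absent; `missing_daemons` is a hypothetical helper name, and the daemon lists in the usage lines simply mirror the roles used in this post.

```shell
#!/bin/sh
# missing_daemons "Name1 Name2 ..."
# Read `jps` output ("PID Name" per line) on stdin and print every
# expected daemon name that does not appear.
missing_daemons() {
    seen=$(awk '{ print $2 }')
    for d in $1; do
        # -x requires a whole-line match, so NameNode does not match SecondaryNameNode
        printf '%s\n' "$seen" | grep -qxF -- "$d" || printf '%s\n' "$d"
    done
}

# Usage on the master:
#   jps | missing_daemons "NameNode SecondaryNameNode ResourceManager"
# Usage on a slave:
#   jps | missing_daemons "DataNode NodeManager"
```

No output means every listed daemon is running.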

5.4. Verify through the web UIs

http://192.168.6.130:8088/cluster/nodes

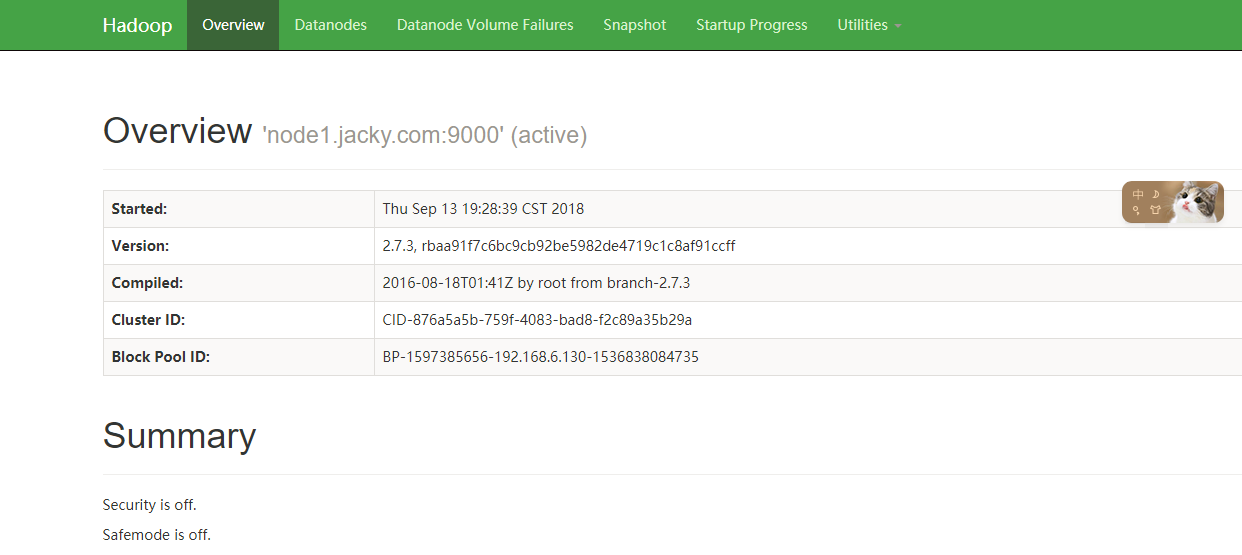

Check whether the DataNodes have started:

http://192.168.6.130:50070/

At this point the fully distributed hadoop-2.7.3 cluster is up and running. Next comes the HBase setup.

6. Setting up the fully distributed HBase cluster

6.1. Upload the archive to /usr/software and extract it to /usr/local

[root@node2 software]# ll

total 327232

-rw-r--r--. 1 root root 214092195 Sep 12 17:54 hadoop-2.7.3.tar.gz

-rw-r--r--. 1 root root 104584366 Sep 14 15:59 hbase-1.2.5-bin.tar.gz

-rw-r--r--. 1 root root 16402010 Oct 25 2016 zookeeper-3.4.5.tar.gz

[root@node2 software]# pwd

/usr/software

[root@node2 software]# tar -xvzf hbase-1.2.5-bin.tar.gz -C /usr/local/

6.2. Configure the HBase environment variables with vim /etc/profile

#hbase

export HBASE_HOME=/usr/local/hbase-1.2.5

export PATH=$HBASE_HOME/bin:$PATH

Run source /etc/profile to apply the changes.

6.3. Create the tmp directory

[root@node2 hbase-1.2.5]# mkdir -p /usr/local/hbase-1.2.5/tmp

6.4. Configure hbase-env.sh

export JAVA_HOME=/usr/java/jdk1.8.0_11

# Extra Java CLASSPATH elements. Optional.

export HBASE_CLASSPATH=/usr/local/hadoop-2.7.3/etc/hadoop

# Tell HBase whether it should manage it's own instance of Zookeeper or not.

export HBASE_MANAGES_ZK=false # the default is true; false means HBase uses the separately installed ZooKeeper

6.5. Configure hbase-site.xml

<configuration>

<property>

<name>hbase.rootdir</name>

<value>hdfs://node1.jacky.com:9000/hbase</value>

</property>

<property>

<name>hbase.master</name>

<value>node1.jacky.com</value>

</property>

<property>

<name>hbase.cluster.distributed</name>

<value>true</value>

</property>

<property>

<name>hbase.tmp.dir</name>

<value>/usr/local/hbase-1.2.5/tmp</value>

</property>

<property>

<name>hbase.zookeeper.property.clientPort</name>

<value>2181</value>

</property>

<property>

<name>hbase.zookeeper.quorum</name>

<value>node1.jacky.com,node2.jacky.com,node3.jacky.com</value>

</property>

<property>

<name>hbase.zookeeper.property.dataDir</name>

<value>/usr/local/zookeeper-3.4.5/data</value>

</property>

<property>

<name>zookeeper.session.timeout</name>

<value>60000000</value>

</property>

<property>

<name>dfs.support.append</name>

<value>true</value>

</property>

</configuration>
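The value of hbase.rootdir has to point at the same NameNode address as fs.defaultFS in core-site.xml (here hdfs://node1.jacky.com:9000); if the host or port differs, HBase cannot reach its root directory on HDFS. The check below is a minimal sketch of that comparison, with `rootdir_matches` as a hypothetical helper name.

```shell
#!/bin/sh
# rootdir_matches DEFAULT_FS ROOTDIR
# Succeed when ROOTDIR is a path on the file system named by DEFAULT_FS.
rootdir_matches() {
    fs=${1%/}    # drop one trailing slash, if any
    case $2 in
        "$fs"/*) return 0 ;;
        *)       return 1 ;;
    esac
}

# Usage with the values from this post:
#   rootdir_matches hdfs://node1.jacky.com:9000 hdfs://node1.jacky.com:9000/hbase \
#       && echo "hbase.rootdir is on fs.defaultFS"
```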

6.6. Configure the regionservers file, which simply lists the master's worker nodes

node2.jacky.com

node3.jacky.com

6.7. Use scp to distribute the configured HBase directory to the other machines

scp -r hbase-1.2.5 root@node2.jacky.com:/usr/local

scp -r hbase-1.2.5 root@node3.jacky.com:/usr/local

7. Start HBase; this only needs to be run on the master

[root@node1 bin]# ./start-hbase.sh

node3.jacky.com: starting zookeeper, logging to /usr/local/hbase-1.2.5/bin/../logs/hbase-root-zookeeper-node3.jacky.com.out

node2.jacky.com: starting zookeeper, logging to /usr/local/hbase-1.2.5/bin/../logs/hbase-root-zookeeper-node2.jacky.com.out

node1.jacky.com: starting zookeeper, logging to /usr/local/hbase-1.2.5/bin/../logs/hbase-root-zookeeper-node1.jacky.com.out

starting master, logging to /usr/local/hbase-1.2.5/logs/hbase-jacky-master-node1.jacky.com.out

node2.jacky.com: starting regionserver, logging to /usr/local/hbase-1.2.5/bin/../logs/hbase-root-regionserver-node2.jacky.com.out

node3.jacky.com: starting regionserver, logging to /usr/local/hbase-1.2.5/bin/../logs/hbase-root-regionserver-node3.jacky.com.out

node2.jacky.com: Java HotSpot(TM) 64-Bit Server VM warning: ignoring option PermSize=128m; support was removed in 8.0

node2.jacky.com: Java HotSpot(TM) 64-Bit Server VM warning: ignoring option MaxPermSize=128m; support was removed in 8.0

[root@node1 bin]#

7.1. Check the HBase processes with jps

192.168.6.130

[root@node1 bin]# jps

7969 QuorumPeerMain

74560 HMaster

25113 NameNode

25483 ResourceManager

25311 SecondaryNameNode

75006 Jps

192.168.6.131

[root@node2 local]# jps

60437 DataNode

12855 QuorumPeerMain

47227 HRegionServer

60621 NodeManager

47902 Jps

192.168.6.132

[root@node2 local]# jps

60437 DataNode

12855 QuorumPeerMain

47227 HRegionServer

60621 NodeManager

47902 Jps

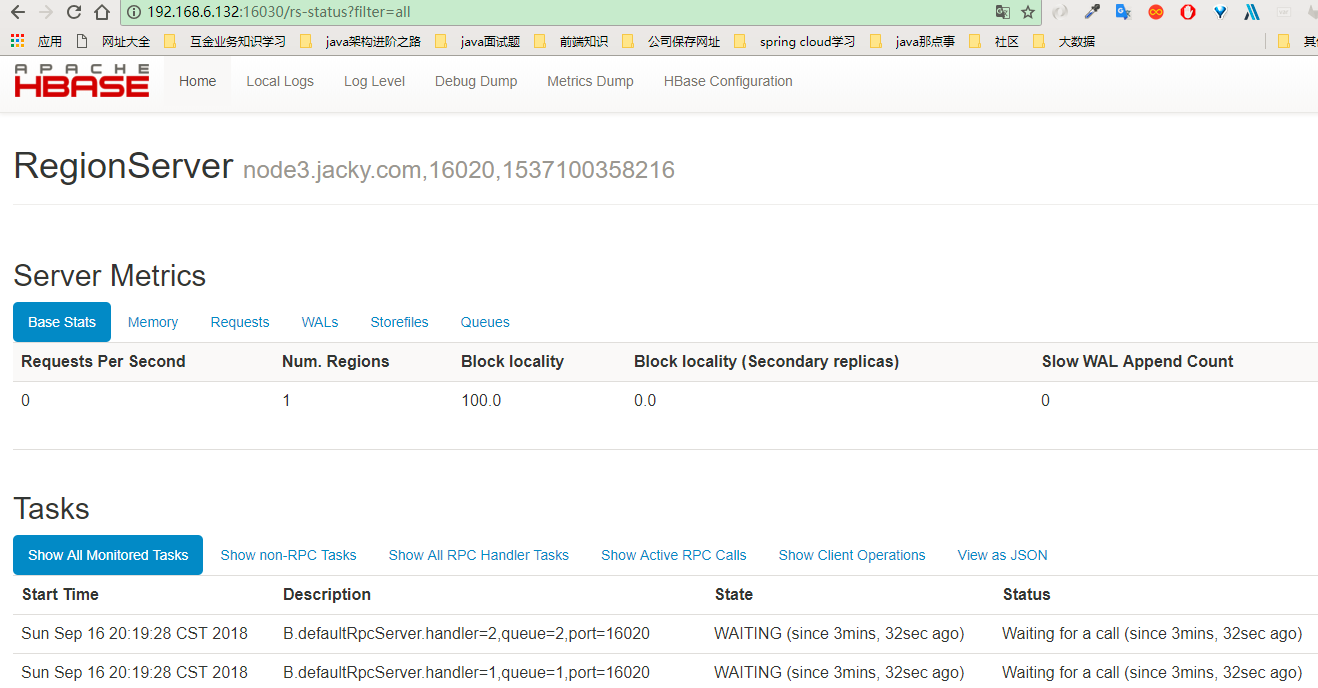
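Running processes do not prove that reads and writes work, so a short round trip through the HBase shell is a useful extra check. The sketch below only generates the shell commands; piping them into `hbase shell` runs them. The helper name `smoke_script`, the table name, and the column family `cf` are placeholders chosen for illustration.

```shell
#!/bin/sh
# smoke_script TABLE
# Print HBase shell commands that create a table, write one cell,
# read it back, and then remove the table again.
smoke_script() {
    t=$1
    cat <<EOF
create '$t', 'cf'
put '$t', 'row1', 'cf:a', 'value1'
get '$t', 'row1'
disable '$t'
drop '$t'
EOF
}

# Usage on the master, assuming hbase is on the PATH:
#   smoke_script smoke_test | hbase shell
```

If the get prints the cell that was just written, the HMaster, the RegionServers, ZooKeeper, and HDFS are all cooperating.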

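7.2. Verify by opening the web UI

In HBase 1.x the Master web UI listens on port 16010 by default, so on this cluster it should be reachable at http://192.168.6.130:16010/ unless the port has been changed.

With that, the fully distributed HBase cluster setup is complete.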
