步骤

一、创建maven工程并导入jar包

<properties>

<scala.version>2.11.8</scala.version>

<spark.version>2.2.0</spark.version>

</properties>

<dependencies>

<dependency>

<groupId>org.scala-lang</groupId>

<artifactId>scala-library</artifactId>

<version>${scala.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_2.11</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-sql_2.11</artifactId>

<version>${spark.version}</version>

</dependency>

<!-- https://mvnrepository.com/artifact/org.apache.spark/spark-streaming -->

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-streaming_2.11</artifactId>

<version>2.2.0</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>2.7.5</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-hive_2.11</artifactId>

<version>2.2.0</version>

</dependency>

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>5.1.38</version>

</dependency>

</dependencies>

<build>

<sourceDirectory>src/main/scala</sourceDirectory>

<testSourceDirectory>src/test/scala</testSourceDirectory>

<plugins>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-compiler-plugin</artifactId>

<version>3.0</version>

<configuration>

<source>1.8</source>

<target>1.8</target>

<encoding>UTF-8</encoding>

<!-- <verbal>true</verbal>-->

</configuration>

</plugin>

<plugin>

<groupId>net.alchim31.maven</groupId>

<artifactId>scala-maven-plugin</artifactId>

<version>3.2.0</version>

<executions>

<execution>

<goals>

<goal>compile</goal>

<goal>testCompile</goal>

</goals>

<configuration>

<args>

<arg>-dependencyfile</arg>

<arg>${project.build.directory}/.scala_dependencies</arg>

</args>

</configuration>

</execution>

</executions>

</plugin>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-shade-plugin</artifactId>

<version>3.1.1</version>

<executions>

<execution>

<phase>package</phase>

<goals>

<goal>shade</goal>

</goals>

<configuration>

<filters>

<filter>

<artifact>*:*</artifact>

<excludes>

<exclude>META-INF/*.SF</exclude>

<exclude>META-INF/*.DSA</exclude>

<exclude>META-INF/*.RSA</exclude>

</excludes>

</filter>

</filters>

<transformers>

<transformer implementation="org.apache.maven.plugins.shade.resource.ManifestResourceTransformer">

<mainClass></mainClass>

</transformer>

</transformers>

</configuration>

</execution>

</executions>

</plugin>

</plugins>

</build>

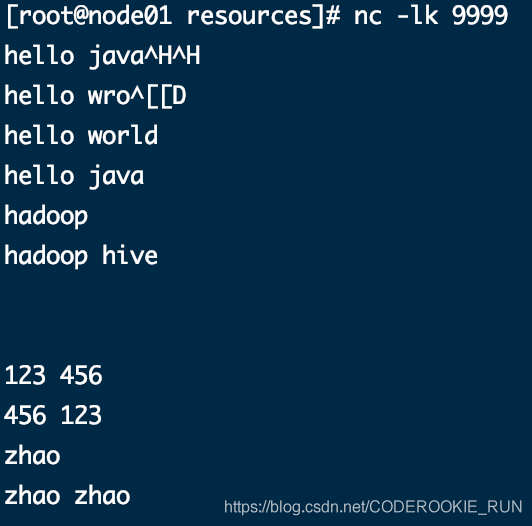

二、安装并启动生产者

在node01安装nc工具

yum -y install nc

使用nc工具向指定端口发送数据

nc -lk 9999

三、开发SparkStreaming代码

import org.apache.spark.streaming.dstream.{DStream, ReceiverInputDStream}

import org.apache.spark.{SparkConf, SparkContext}

import org.apache.spark.streaming.{Seconds, StreamingContext}

object WordCountTest {

def main(args: Array[String]): Unit = {

//获取SparkConf

val sparkConf: SparkConf = new SparkConf().setAppName("Streaming_WordCountTest").setMaster("local[4]").set("spark.driver.host", "localhost")

//获取SparkContext

val sparkContext: SparkContext = new SparkContext(sparkConf)

//设置日志级别

sparkContext.setLogLevel("WARN")

//获取StreamingContext 需要两个参数 SparkContext和duration,后者就是间隔时间

val streamContext: StreamingContext = new StreamingContext(sparkContext, Seconds(5))

//从socket获取数据

val stream: ReceiverInputDStream[String] = streamContext.socketTextStream("node01", 9999)

//对数据进行计数操作

val result: DStream[(String, Int)] = stream.flatMap(x => x.split(" ")).map((_, 1)).reduceByKey(_ + _)

//输出数据

result.print()

//启动程序

streamContext.start()

streamContext.awaitTermination()

}

}

四、查看结果

nc工具发送的数据

控制台结果

-----------------------------------------

Time: 1586852050000 ms

-------------------------------------------

(hive,1)

(wro,1)

(hadoop,2)

(hello,4)

(java,1)

(ja,1)

(world,1)

-------------------------------------------

Time: 1586852055000 ms

-------------------------------------------

-------------------------------------------

Time: 1586852060000 ms

-------------------------------------------

20/04/14 16:14:23 WARN RandomBlockReplicationPolicy: Expecting 1 replicas with only 0 peer/s.

20/04/14 16:14:23 WARN BlockManager: Block input-0-1586852063400 replicated to only 0 peer(s) instead of 1 peers

20/04/14 16:14:24 WARN RandomBlockReplicationPolicy: Expecting 1 replicas with only 0 peer/s.

20/04/14 16:14:24 WARN BlockManager: Block input-0-1586852064000 replicated to only 0 peer(s) instead of 1 peers

-------------------------------------------

Time: 1586852065000 ms

-------------------------------------------

(,2)

20/04/14 16:14:29 WARN RandomBlockReplicationPolicy: Expecting 1 replicas with only 0 peer/s.

20/04/14 16:14:29 WARN BlockManager: Block input-0-1586852069600 replicated to only 0 peer(s) instead of 1 peers

-------------------------------------------

Time: 1586852070000 ms

-------------------------------------------

(456,1)

(123,1)

20/04/14 16:14:31 WARN RandomBlockReplicationPolicy: Expecting 1 replicas with only 0 peer/s.

20/04/14 16:14:31 WARN BlockManager: Block input-0-1586852071200 replicated to only 0 peer(s) instead of 1 peers

20/04/14 16:14:34 WARN RandomBlockReplicationPolicy: Expecting 1 replicas with only 0 peer/s.

20/04/14 16:14:34 WARN BlockManager: Block input-0-1586852073800 replicated to only 0 peer(s) instead of 1 peers

-------------------------------------------

Time: 1586852075000 ms

-------------------------------------------

(zhao,1)

(456,1)

(123,1)

20/04/14 16:14:36 WARN RandomBlockReplicationPolicy: Expecting 1 replicas with only 0 peer/s.

20/04/14 16:14:36 WARN BlockManager: Block input-0-1586852076200 replicated to only 0 peer(s) instead of 1 peers

-------------------------------------------

Time: 1586852080000 ms

-------------------------------------------

(zhao,2)

-------------------------------------------

Time: 1586852085000 ms

-------------------------------------------

-------------------------------------------

Time: 1586852090000 ms

-------------------------------------------