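Introduction: a Ceph cluster named ceph and a single-node Ceph cluster named backup have already been created. The goal is to make the data of the two clusters synchronize so that the setup can be used for backup and recovery.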

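I. Configure mutual access between the clusters

1.1 Install rbd-mirror

rbd-mirror is a relatively new daemon that synchronizes images from one cluster to another.

For one-way synchronization, it only needs to be installed on the backup cluster.

For two-way synchronization, it must be installed on both clusters.

rbd-mirror needs to connect to both the local and the remote cluster.

Each cluster only needs to run one rbd-mirror process, and the package must be installed manually.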

[root@ceph5 ceph]# yum install rbd-mirror

1.2 Exchange configuration files

ceph cluster -----> backup cluster

[root@ceph2 ceph]# scp /etc/ceph/ceph.conf /etc/ceph/ceph.client.admin.keyring ceph5:/etc/ceph/

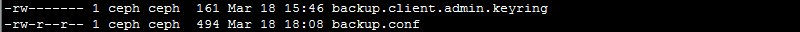

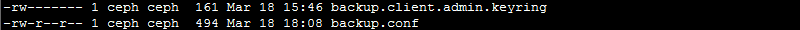

[root@ceph5 ceph]# ll -h

[root@ceph5 ceph]# chown ceph.ceph -R ./

[root@ceph5 ceph]# ll

[root@ceph5 ceph]# ceph -s --cluster ceph

      cluster:
        id:     35a91e48-8244-4e96-a7ee-980ab989d20d
        health: HEALTH_OK

      services:
        mon: 3 daemons, quorum ceph2,ceph3,ceph4
        mgr: ceph4(active), standbys: ceph2, ceph3
        osd: 9 osds: 9 up, 9 in

      data:
        pools:   2 pools, 192 pgs
        objects: 119 objects, 177 MB
        usage:   1500 MB used, 133 GB / 134 GB avail
        pgs:     192 active+clean

1.3 Copy the configuration files from the secondary to the primary

[root@ceph5 ceph]# scp /etc/ceph/backup.conf /etc/ceph/backup.client.admin.keyring ceph2:/etc/ceph/

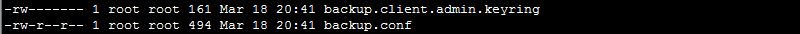

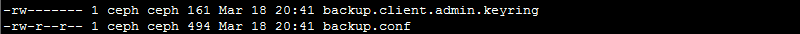

[root@ceph2 ceph]# ll

[root@ceph2 ceph]# chown -R ceph.ceph /etc/ceph/

[root@ceph2 ceph]# ll

[root@ceph2 ceph]# ceph -s --cluster backup

      cluster:
        id:     51dda18c-7545-4edb-8ba9-27330ead81a7
        health: HEALTH_OK

      services:
        mon: 1 daemons, quorum ceph5
        mgr: ceph5(active)
        osd: 3 osds: 3 up, 3 in

      data:
        pools:   1 pools, 64 pgs
        objects: 5 objects, 133 bytes
        usage:   5443 MB used, 40603 MB / 46046 MB avail
        pgs:     64 active+clean
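The reason the files are copied in both directions is that `--cluster NAME` makes the client tools look for `/etc/ceph/NAME.conf` and, for `client.admin`, the keyring `/etc/ceph/NAME.client.admin.keyring`. The following is a minimal sketch that lists those paths for both cluster names and reports whether they are readable on the current host. The helper name `cluster_files` is made up for illustration, and the default `/etc/ceph` layout is assumed.

```shell
# Print the config and admin keyring paths that "--cluster NAME" resolves to
# under the default /etc/ceph layout. cluster_files is a hypothetical helper.
cluster_files() {
    name=$1
    dir=${2:-/etc/ceph}
    printf '%s\n' "$dir/$name.conf" "$dir/$name.client.admin.keyring"
}

# Report which of the expected files are present for both cluster names.
for cluster in ceph backup; do
    for f in $(cluster_files "$cluster"); do
        if [ -r "$f" ]; then
            echo "ok       $f"
        else
            echo "missing  $f"
        fi
    done
done
```

Running it on ceph2 and on ceph5 after the copies should report all four files as present; a missing line points at the `scp` step that still needs to be done.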

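II. Configure mirroring in pool mode

Add the setting below to the cluster configuration file so that new images are created with the features that mirroring needs. For one-way synchronization, change it only on the primary cluster; for two-way synchronization, change it on both clusters. (If only one-way backup is enabled, mirroring mode does not need to be enabled on the backup cluster.)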

rbd_default_features = 125

Alternatively, leave the configuration file unchanged and specify the features to enable when creating each image.
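The value 125 is a bitmask of image features. As a rough sketch, assuming the standard RBD feature bit values (layering=1, striping=2, exclusive-lock=4, object-map=8, fast-diff=16, deep-flatten=32, journaling=64), the mask can be decoded like this. The function name `decode_rbd_features` is made up for illustration and is not part of the rbd CLI.

```shell
# Decode an rbd_default_features bitmask into feature names.
# decode_rbd_features is a hypothetical helper, not part of the rbd CLI.
decode_rbd_features() {
    mask=$1
    names=""
    for pair in 1:layering 2:striping 4:exclusive-lock 8:object-map \
                16:fast-diff 32:deep-flatten 64:journaling; do
        bit=${pair%%:*}
        name=${pair#*:}
        # Keep the name when the corresponding bit is set in the mask.
        if [ $(( mask & bit )) -ne 0 ]; then
            names="${names:+$names, }$name"
        fi
    done
    printf '%s\n' "$names"
}

decode_rbd_features 125
```

The point of 125 is that it includes journaling (64), which journal-based mirroring requires, together with exclusive-lock (4), which journaling depends on.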

2.1 Create the mirror pool

[root@ceph2 ceph]# ceph osd pool create rbdmirror 32 32

[root@ceph5 ceph]# ceph osd pool create rbdmirror 32 32

[root@ceph2 ceph]# yum -y install rbd-mirror

[root@ceph2 ceph]# rbd mirror pool enable rbdmirror pool --cluster ceph

[root@ceph2 ceph]# rbd mirror pool enable rbdmirror pool --cluster backup

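2.2 Add the peer cluster

The ceph and backup clusters must be registered as peers of each other so that each rbd-mirror process can find the pool on its peer cluster.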

[root@ceph2 ceph]# rbd mirror pool peer add rbdmirror client.admin@ceph --cluster backup

[root@ceph2 ceph]# rbd mirror pool peer add rbdmirror client.admin@backup --cluster ceph
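The two commands above are symmetric: each cluster registers the other one as its peer. A small dry-run sketch makes the pattern explicit by printing the pair of commands for any pool and pair of cluster names without executing them. The helper name `peer_add_commands` is hypothetical.

```shell
# Print (without running) the two peer-add commands needed for two-way
# mirroring of one pool. peer_add_commands is a hypothetical helper.
peer_add_commands() {
    pool=$1
    cluster_a=$2
    cluster_b=$3
    user=${4:-client.admin}
    # Each cluster registers the *other* cluster as its peer.
    echo "rbd mirror pool peer add $pool $user@$cluster_b --cluster $cluster_a"
    echo "rbd mirror pool peer add $pool $user@$cluster_a --cluster $cluster_b"
}

peer_add_commands rbdmirror ceph backup
```

For one-way synchronization only the command that runs against the backup cluster would be needed, since that is where rbd-mirror runs.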

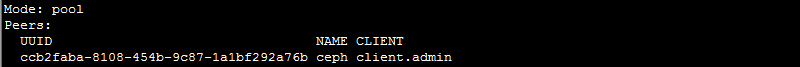

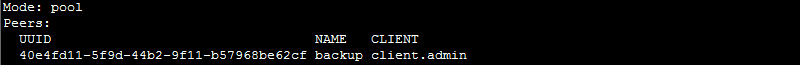

[root@ceph2 ceph]# rbd mirror pool info --pool=rbdmirror --cluster backup

[root@ceph2 ceph]# rbd mirror pool info --pool=rbdmirror --cluster ceph

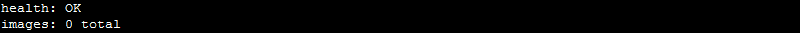

[root@ceph2 ceph]# rbd mirror pool status rbdmirror

[root@ceph2 ceph]# rbd mirror pool status rbdmirror --cluster backup

2.3 Create an RBD image and enable journaling

Create an image named test in the rbdmirror pool.

[root@ceph2 ceph]# rbd create --size 1G rbdmirror/test

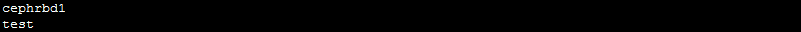

[root@ceph2 ceph]# rbd ls rbdmirror

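Listing the pool on the secondary returns nothing. The image has not been synchronized because the journaling feature is not enabled on it and the rbd-mirror daemon has not been started on the secondary.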

[root@ceph2 ceph]# rbd ls rbdmirror --cluster backup

[root@ceph2 ceph]# rbd info rbdmirror/test

    rbd image 'test':
        size 1024 MB in 256 objects
        order 22 (4096 kB objects)
        block_name_prefix: rbd_data.fbcd643c9869
        format: 2
        features: layering, exclusive-lock, object-map, fast-diff, deep-flatten
        flags:
        create_timestamp: Mon Mar 18 21:06:48 2019

[root@ceph2 ceph]# rbd feature enable rbdmirror/test journaling

[root@ceph2 ceph]# rbd info rbdmirror/test

    rbd image 'test':
        size 1024 MB in 256 objects
        order 22 (4096 kB objects)
        block_name_prefix: rbd_data.fbcd643c9869
        format: 2
        features: layering, exclusive-lock, object-map, fast-diff, deep-flatten, journaling
        flags:
        create_timestamp: Mon Mar 18 21:06:48 2019
        journal: fbcd643c9869
        mirroring state: enabled
        mirroring global id: 0f6993f2-8c34-4c49-a546-61fcb452ff40
        mirroring primary: true    # true on the primary
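In pool mode, whether an image is mirrored comes down to two fields of `rbd info`: `features` must contain journaling, and `mirroring primary` tells which side owns the image. A minimal sketch for pulling a single field out of that output follows. The helper name `rbd_info_field` is made up for illustration, and the sample text is an excerpt of the output shown above.

```shell
# Extract one "key: value" field from `rbd info` text read on stdin.
# rbd_info_field is a hypothetical helper, not part of the rbd CLI.
rbd_info_field() {
    awk -v key="$1" -F': ' '
        { sub(/^[ \t]+/, "") }          # drop leading indentation
        $1 == key { print $2; exit }
    '
}

# Excerpt of the `rbd info rbdmirror/test` output from this section.
sample="rbd image 'test':
    features: layering, exclusive-lock, object-map, fast-diff, deep-flatten, journaling
    journal: fbcd643c9869
    mirroring state: enabled
    mirroring primary: true"

printf '%s\n' "$sample" | rbd_info_field "mirroring primary"

# Check that journaling is among the enabled features.
case "$(printf '%s\n' "$sample" | rbd_info_field features)" in
    *journaling*) echo "journaling enabled" ;;
    *)            echo "journaling missing: the image will not be mirrored" ;;
esac
```

Against a live cluster the same function can be fed directly, for example `rbd info rbdmirror/test | rbd_info_field "mirroring primary"`.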

2.4 Start the mirror process on the secondary

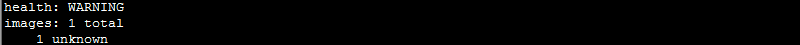

[root@ceph2 ceph]# rbd mirror pool status rbdmirror

2.5 Start manually in the foreground for debugging

[root@ceph5 ceph]# rbd-mirror -d --setuser ceph --setgroup ceph --cluster backup -i admin

    2019-03-18 21:12:39.521126 7f3a7f7c5340 0 set uid:gid to 1001:1001 (ceph:ceph)
    2019-03-18 21:12:39.521150 7f3a7f7c5340 0 ceph version 12.2.1-40.el7cp (c6d85fd953226c9e8168c9abe81f499d66cc2716) luminous (stable), process (unknown), pid 451
    2019-03-18 21:12:39.544922 7f3a7f7c5340 1 mgrc service_daemon_register rbd-mirror.admin metadata {arch=x86_64,ceph_version=ceph version 12.2.1-40.el7cp (c6d85fd953226c9e8168c9abe81f499d66cc2716) luminous (stable),cpu=QEMU Virtual CPU version 1.5.3,distro=rhel,distro_description=Red Hat Enterprise Linux Server 7.4 (Maipo),distro_version=7.4,hostname=ceph5,instance_id=4150,kernel_description=#1 SMP Thu Dec 28 14:23:39 EST 2017,kernel_version=3.10.0-693.11.6.el7.x86_64,mem_swap_kb=0,mem_total_kb=1883532,os=Linux}
    2019-03-18 21:12:42.743067 7f3a6bfff700 -1 rbd::mirror::ImageReplayer: 0x7f3a50016c70 [2/0f6993f2-8c34-4c49-a546-61fcb452ff40] handle_init_remote_journaler: image_id=1037140e0f76, m_client_meta.image_id=1037140e0f76, client.state=connected

[root@ceph5 ceph]# systemctl start ceph-rbd-mirror@admin

[root@ceph5 ceph]# ps aux|grep rbd

ceph 520 1.6 1.5 1202736 29176 ? Ssl 21:13 0:00 /usr/bin/rbd-mirror -f --cluster backup --id admin --setuser ceph --setgroup ceph

2.6 Verify on the secondary

Check that the image has been synchronized:

[root@ceph5 ceph]# rbd ls rbdmirror

[root@ceph5 ceph]# rbd info rbdmirror/test

    rbd image 'test':
        size 1024 MB in 256 objects
        order 22 (4096 kB objects)
        block_name_prefix: rbd_data.1037140e0f76
        format: 2
        features: layering, exclusive-lock, object-map, fast-diff, deep-flatten, journaling
        flags:
        create_timestamp: Mon Mar 18 21:12:41 2019
        journal: 1037140e0f76
        mirroring state: enabled
        mirroring global id: 0f6993f2-8c34-4c49-a546-61fcb452ff40
        mirroring primary: false    # false on the secondary

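At this point the setup is effectively one-way synchronization.

2.7 Configure two-way synchronization

Start rbd-mirror manually on the primary: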

[root@ceph2 ceph]# /usr/bin/rbd-mirror -f --cluster ceph --id admin --setuser ceph --setgroup ceph -d

[root@ceph2 ceph]# systemctl restart ceph-rbd-mirror@admin

[root@ceph2 ceph]# ps aux|grep rbd

ceph 348407 1.0 0.4 1611988 17704 ? Ssl 21:19 0:00 /usr/bin/rbd-mirror -f --cluster ceph --id admin --setuser ceph --setgroup ceph

With the secondary now acting as the primary, create an image on it:

[root@ceph5 ceph]# rbd create --size 1G rbdmirror/ceph5-rbd --image-feature journaling --image-feature exclusive-lock

[root@ceph5 ceph]# rbd info rbdmirror/ceph5-rbd

    rbd image 'ceph5-rbd':
        size 1024 MB in 256 objects
        order 22 (4096 kB objects)
        block_name_prefix: rbd_data.103e74b0dc51
        format: 2
        features: exclusive-lock, journaling
        flags:
        create_timestamp: Mon Mar 18 21:21:43 2019
        journal: 103e74b0dc51
        mirroring state: enabled
        mirroring global id: c41dc1d8-43e5-44eb-ba3f-87dbb17f0d15
        mirroring primary: true

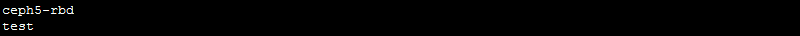

[root@ceph5 ceph]# rbd ls rbdmirror

For this image, the original primary now acts as the secondary:

[root@ceph2 ceph]# rbd info rbdmirror/ceph5-rbd

    rbd image 'ceph5-rbd':
        size 1024 MB in 256 objects
        order 22 (4096 kB objects)
        block_name_prefix: rbd_data.fbe541a7c4c9
        format: 2
        features: exclusive-lock, journaling
        flags:
        create_timestamp: Mon Mar 18 21:21:43 2019
        journal: fbe541a7c4c9
        mirroring state: enabled
        mirroring global id: c41dc1d8-43e5-44eb-ba3f-87dbb17f0d15
        mirroring primary: false

View the pool-level synchronization status:

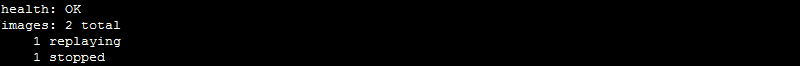

[root@ceph2 ceph]# rbd mirror pool status --pool=rbdmirror

View the status of a single image:

[root@ceph2 ceph]# rbd mirror image status rbdmirror/test

    test:
      global_id:   0f6993f2-8c34-4c49-a546-61fcb452ff40
      state:       up+stopped
      description: local image is primary
      last_update: 2019-03-18 21:25:48
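The `state` field combines two pieces of information joined by `+`: whether an rbd-mirror daemon is reporting for the image, and what the replayer is doing. `up+stopped` looks alarming but is expected here, because the description says the local image is primary and a primary image has nothing to replay. The sketch below splits the state and gives a rough reading of it. The helper name `describe_mirror_state` is hypothetical and the readings are a simplification, not an exhaustive list of states.

```shell
# Split a mirror image state such as "up+stopped" into its two halves and
# give a rough reading of it. describe_mirror_state is a hypothetical helper,
# and the readings are a simplification.
describe_mirror_state() {
    state=$1
    daemon=${state%%+*}     # part before "+": whether rbd-mirror is reporting
    replay=${state#*+}      # part after "+": what the replayer is doing
    case "$daemon+$replay" in
        up+replaying) echo "non-primary image, replaying the peer's journal" ;;
        up+stopped)   echo "replay stopped, expected when the local image is primary" ;;
        up+syncing)   echo "initial image sync in progress" ;;
        down+*)       echo "rbd-mirror daemon is not reporting for this image" ;;
        *)            echo "needs attention: $state" ;;
    esac
}

describe_mirror_state "up+stopped"
```

Running the same status command with `--cluster backup` for the test image would be expected to show a replaying state instead, since that side holds the non-primary copy.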

III. Two-way synchronization at the image level

Mirror the existing image cephrbd1 in the rbd pool at the image level.

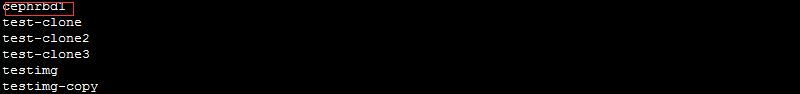

[root@ceph2 ceph]# rbd ls

3.1 Enable image-mode mirroring on the rbd pool

[root@ceph2 ceph]# rbd mirror pool enable rbd image --cluster ceph

[root@ceph2 ceph]# rbd mirror pool enable rbd image --cluster backup

3.2 Add peers

[root@ceph2 ceph]# rbd mirror pool peer add rbd client.admin@ceph --cluster backup

[root@ceph2 ceph]# rbd mirror pool peer add rbd client.admin@backup --cluster ceph

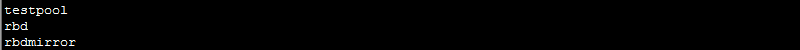

[root@ceph2 ceph]# ceph osd pool ls

[root@ceph2 ceph]# rbd info cephrbd1

    rbd image 'cephrbd1':
        size 2048 MB in 512 objects
        order 22 (4096 kB objects)
        block_name_prefix: rbd_data.fb2074b0dc51
        format: 2
        features: layering
        flags:
        create_timestamp: Sun Mar 17 21:23:52 2019

3.3 Enable journaling

[root@ceph2 ceph]# rbd feature enable cephrbd1 exclusive-lock

[root@ceph2 ceph]# rbd feature enable cephrbd1 journaling

[root@ceph2 ceph]# rbd info cephrbd1

    rbd image 'cephrbd1':
        size 2048 MB in 512 objects
        order 22 (4096 kB objects)
        block_name_prefix: rbd_data.fb2074b0dc51
        format: 2
        features: layering, exclusive-lock, journaling
        flags:
        create_timestamp: Sun Mar 17 21:23:52 2019
        journal: fb2074b0dc51
        mirroring state: disabled

3.4 Enable mirroring on the image

[root@ceph2 ceph]# rbd mirror image enable rbd/cephrbd1

[root@ceph2 ceph]# rbd info rbd/cephrbd1

    rbd image 'cephrbd1':
        size 2048 MB in 512 objects
        order 22 (4096 kB objects)
        block_name_prefix: rbd_data.fb2074b0dc51
        format: 2
        features: layering, exclusive-lock, journaling
        flags:
        create_timestamp: Sun Mar 17 21:23:52 2019
        journal: fb2074b0dc51
        mirroring state: enabled
        mirroring global id: cfa19d96-1c41-47e3-bae0-0b9acfdcb72d
        mirroring primary: true

[root@ceph2 ceph]# rbd ls

3.5 Verify on the secondary

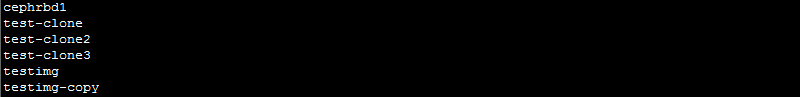

[root@ceph5 ceph]# rbd ls

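Synchronization succeeded.

If the primary fails, the secondary can be promoted to primary. In a lab environment, this can be simulated by demoting the primary first and then promoting the secondary.

3.6 Demote and promote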

[root@ceph2 ceph]# rbd mirror image demote rbd/cephrbd1

    Image demoted to non-primary

[root@ceph2 ceph]# rbd info cephrbd1

    rbd image 'cephrbd1':
        size 2048 MB in 512 objects
        order 22 (4096 kB objects)
        block_name_prefix: rbd_data.fb2074b0dc51
        format: 2
        features: layering, exclusive-lock, journaling
        flags:
        create_timestamp: Sun Mar 17 21:23:52 2019
        journal: fb2074b0dc51
        mirroring state: enabled
        mirroring global id: cfa19d96-1c41-47e3-bae0-0b9acfdcb72d
        mirroring primary: false

[root@ceph5 ceph]# rbd mirror image promote cephrbd1

    Image promoted to primary

[root@ceph5 ceph]# rbd info cephrbd1

    rbd image 'cephrbd1':
        size 2048 MB in 512 objects
        order 22 (4096 kB objects)
        block_name_prefix: rbd_data.1043836c40e
        format: 2
        features: layering, exclusive-lock, journaling
        flags:
        create_timestamp: Mon Mar 18 21:38:48 2019
        journal: 1043836c40e
        mirroring state: enabled
        mirroring global id: cfa19d96-1c41-47e3-bae0-0b9acfdcb72d
        mirroring primary: true    # now primary; clients can mount the image from this cluster
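The order matters in this sequence: demoting first means there is a short window with no primary, while promoting first would leave both sides primary. As an illustrative sketch, the pair of `mirroring primary` values reported by `rbd info` on the two clusters can be classified as follows. The helper name `primary_pair_state` is made up, and the wording of the readings is an assumption rather than output of any Ceph tool.

```shell
# Classify the pair of "mirroring primary" values reported by `rbd info`
# on the two clusters. primary_pair_state is a hypothetical helper.
primary_pair_state() {
    case "$1,$2" in
        true,false)  echo "first cluster is primary" ;;
        false,true)  echo "second cluster is primary" ;;
        false,false) echo "no primary: demoted, waiting for a promote" ;;
        true,true)   echo "both primary: split-brain risk" ;;
        *)           echo "unexpected values: $1 $2" ;;
    esac
}

# The sequence in this section, as (ceph, backup) pairs:
primary_pair_state true false    # before the demote
primary_pair_state false false   # after the demote on ceph2
primary_pair_state false true    # after the promote on ceph5
```

In a real failure the old primary cannot be demoted first, which is why a planned switch like the one above is the safer way to rehearse the procedure.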

3.7 Promote and demote at the pool level

[root@ceph2 ceph]# rbd mirror pool demote rbdmirror

    Demoted 1 mirrored images

[root@ceph2 ceph]# rbd info rbdmirror/ceph5-rbd

    rbd image 'ceph5-rbd':
        size 1024 MB in 256 objects
        order 22 (4096 kB objects)
        block_name_prefix: rbd_data.fbe541a7c4c9
        format: 2
        features: exclusive-lock, journaling
        flags:
        create_timestamp: Mon Mar 18 21:21:43 2019
        journal: fbe541a7c4c9
        mirroring state: enabled
        mirroring global id: c41dc1d8-43e5-44eb-ba3f-87dbb17f0d15
        mirroring primary: false

[root@ceph5 ceph]# rbd mirror pool promote rbdmirror

    Promoted 1 mirrored images

[root@ceph5 ceph]# rbd ls rbdmirror

    ceph5-rbd
    test

[root@ceph5 ceph]# rbd info rbdmirror/ceph5-rbd

    rbd image 'ceph5-rbd':
        size 1024 MB in 256 objects
        order 22 (4096 kB objects)
        block_name_prefix: rbd_data.103e74b0dc51
        format: 2
        features: exclusive-lock, journaling
        flags:
        create_timestamp: Mon Mar 18 21:21:43 2019
        journal: 103e74b0dc51
        mirroring state: enabled
        mirroring global id: c41dc1d8-43e5-44eb-ba3f-87dbb17f0d15
        mirroring primary: true

[root@ceph5 ceph]# rbd info rbdmirror/test

    rbd image 'test':
        size 1024 MB in 256 objects
        order 22 (4096 kB objects)
        block_name_prefix: rbd_data.1037140e0f76
        format: 2
        features: layering, exclusive-lock, object-map, fast-diff, deep-flatten, journaling
        flags:
        create_timestamp: Mon Mar 18 21:12:41 2019
        journal: 1037140e0f76
        mirroring state: enabled
        mirroring global id: 0f6993f2-8c34-4c49-a546-61fcb452ff40
        mirroring primary: true

3.8 Remove mirroring

[root@ceph2 ceph]# rbd mirror pool info --pool=rbdmirror

    Mode: pool
    Peers:
      UUID                                 NAME   CLIENT
      40e4fd11-5f9d-44b2-9f11-b57968be62cf backup client.admin

[root@ceph2 ceph]# rbd mirror pool info --pool=rbdmirror --cluster backup

    Mode: pool
    Peers:
      UUID                                 NAME   CLIENT
      ccb2faba-8108-454b-9c87-1a1bf292a76b ceph   client.admin

[root@ceph5 ceph]# rbd mirror pool peer remove --pool=rbdmirror 761872b4-03d8-4f0a-972e-dc61575a785f --cluster backup
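The UUID passed to `peer remove` has to be the one that `rbd mirror pool info` reports for the cluster the command runs against, so it is worth copying it from fresh output rather than from an earlier session. A minimal sketch for extracting it by peer name follows, assuming the tabular output format shown above. The helper name `peer_uuid` is hypothetical.

```shell
# Print the UUID of the peer with the given cluster name from
# `rbd mirror pool info` text on stdin. peer_uuid is a hypothetical helper
# and assumes the tabular UUID / NAME / CLIENT layout shown in this article.
peer_uuid() {
    awk -v name="$1" '$2 == name && $1 ~ /^[0-9a-f-]+$/ && length($1) == 36 { print $1 }'
}

# Sample taken from the `rbd mirror pool info --pool=rbdmirror` output above.
sample="Mode: pool
Peers:
  UUID                                 NAME   CLIENT
  40e4fd11-5f9d-44b2-9f11-b57968be62cf backup client.admin"

printf '%s\n' "$sample" | peer_uuid backup
```

On a live cluster the function would read from `rbd mirror pool info --pool=rbdmirror` directly; an empty result means no peer with that name is registered.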

[root@ceph5 ceph]# rbd mirror image disable rbd/cephrbd1 --force

[root@ceph5 ceph]# rbd info cephrbd1

    rbd image 'cephrbd1':
        size 2048 MB in 512 objects
        order 22 (4096 kB objects)
        block_name_prefix: rbd_data.1043836c40e
        format: 2
        features: layering, exclusive-lock, journaling
        flags:
        create_timestamp: Mon Mar 18 21:38:48 2019
        journal: 1043836c40e
        mirroring state: disabled    # mirroring is now disabled for this image

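The backup configuration for mutual access between the Ceph clusters is complete!

Blogger's note: the content of this article comes mainly from teacher Yan Wei of Yutian Education; I carried out the experiments and verified the operations myself. Readers who wish to repost it should contact Yutian Education (http://www.yutianedu.com/) for official permission, or obtain permission from Mr. Yan (https://www.cnblogs.com/breezey/) himself. Thank you!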