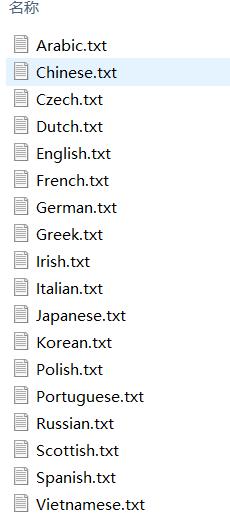

数据处理

数据可以从传送门下载。

这些数据包括了18个国家的名字,我们的任务是根据这些数据训练模型,使得模型可以判断出名字是哪个国家的。

一开始,我们需要对名字进行一些处理,因为不同国家的文字可能会有一些区别。

在这里最好先了解一下Unicode:可以看看:Unicode的文本处理二三事

import os

import glob

import unicodedata

all_letters = string.ascii_letters + " .,;'" # string.ascii_letters的作用是生成所有的英文字母

n_letters = len(all_letters)

def find_files(path):

"""

:param path:文件路径

:return: 文件列表地址

"""

return glob.glob(path) # glob模块提供了一个函数用于从目录通配符搜索中生成文件列表:

def unicode_to_ascii(str):

"""

:param str:名字

:return:返回均采用NFD编码方式的名字

"""

return ''.join(

c for c in unicodedata.normalize('NFD', str) # 对文字采用相同的编码方式

if unicodedata.category(c) != 'Mn' and c in all_letters

)

def read_lines(files_list):

"""

读取每个文件的内容

:param files_list:文件所在地址列表

:return:{国家:名字列表}

"""

category_lines = {}

all_categories = []

for file in files_list:

# os.path.splitext:分割路径,返回路径名和文件扩展名的元组

# os.path.basename:返回文件名

category = os.path.splitext(os.path.basename(file))[0]

line = [unicode_to_ascii(line) for line in open(file)]

all_categories.append(category)

category_lines[category] = line

return all_categories, category_lines

# print(all_categories)

# print(category_lines['Chinese'])

接下来我们要对单词进行编码,这里使用独热编码方式, 在上述代码中已经生成了all_letters的字符串,对于名字中的每个字母,我们只需令其在all_letters中所在的索引位为0即可。

这样每个名字的size就是[ len(name),1,len(all_letters) ]。

def get_index(letter):

"""

:param letter: 字母

:return: 字母索引

"""

return all_letters.find(letter)

def letter_to_tensor(letter):

"""

将字母转换成张量

:param letter:字母

:return: 张量

"""

tensor = torch.zeros(1, n_letters)

tensor[0][get_index(letter)] = 1

return tensor.to(device) # 将tensor放到cuda上

def word_to_tensor(word):

"""

将单词转换成张量

:param word: 单词

:return: 张量

"""

tensor = torch.zeros(len(word), 1, n_letters)

for i, letter in enumerate(word):

tensor[i][0][get_index(letter)] = 1

return tensor.to(device)

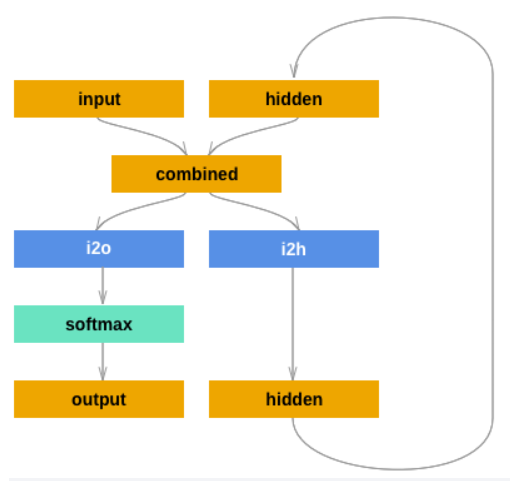

模型构建

因为是入门级的学习,这里也是只使用了最简单的RNN,只包含了一个隐藏层。

这里将隐藏层的维度定为128。

class RNN(nn.Module):

def __init__(self, input_size, hidden_size, output_size):

super(RNN, self).__init__()

self.input_size = input_size

self.hidden_size = hidden_size

self.output_size = output_size

self.i2h = nn.Linear(input_size + self.hidden_size, self.hidden_size)

self.i2o = nn.Linear(input_size + self.hidden_size, self.output_size) # 这里写成self.i2o = nn.Linear(self.hidden_size, self.output_size)也是可以的,forward传入的就得是hidden的值

self.softmax = nn.LogSoftmax(dim=1) # dim=1表示对第1维度的数据进行logsoftmax操作

def forward(self, input, hidden):

tmp = torch.cat((input, hidden), 1)

hidden = self.i2h(tmp)

output = self.i2o(tmp)

output = self.softmax(output)

return output, hidden

def init_hidden(self): # 隐藏层初始化0操作

return torch.zeros(1, self.hidden_size).to(device)

最后输出的结果是属于18个国家的概率值,下面的函数就是从中挑选出概率最大的国家。

def category_from_output(output):

top_n, top_i = output.topk(1)

category_i = top_i[0].item()

return all_categories[category_i], category_i

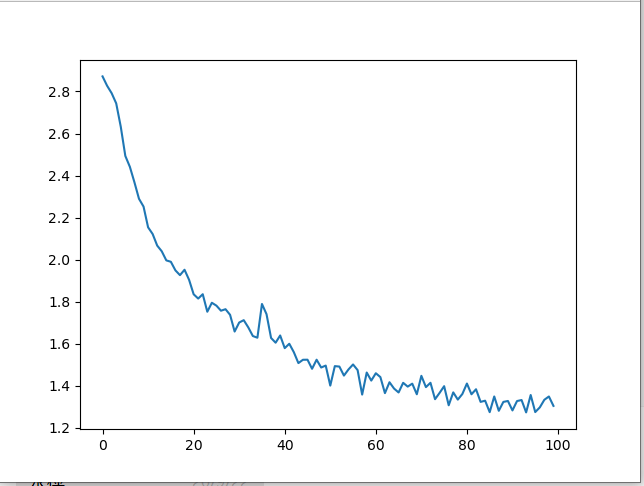

模型训练

模型的训练采用随机梯度下降,每次随机选择一个数据来进行训练。下面的代码实现的功能是先随机选择一个国家,再从该国家中随机选择一个名字。

def random_choice(obj):

return obj[random.randint(0, len(obj)-1)]

def random_training_example():

category = random_choice(all_categories)

word = random_choice(category_lines[category])

category_tensor = torch.tensor([all_categories.index(category)], dtype=torch.long).to(device)

word_tensor = word_to_tensor(word)

return category, word, category_tensor, word_tensor

具体的训练代码如下:

def train():

rnn = RNN(n_letters, n_hidden, n_categories).to(device) # 创建模型

loss = nn.NLLLoss() # 定义损失函数

lr = 0.005 # 学习率

epoch_num = 100000 # 迭代次数

current_loss = 0 # 累计损失

all_losses = [] # 记录损失,后续画图

for epoch in range(epoch_num):

epoch_start_time = time.time() # 记录一次迭代的时间

category, word, category_tensor, word_tensor = random_training_example() # 随机选择一条训练数据

rnn.zero_grad() # 梯度清零,这和optimizer.zero_grad()是等价的

hidden = rnn.init_hidden() # 初始化隐藏层

for i in range(word_tensor.size()[0]):

output, hidden = rnn(word_tensor[i].to(device), hidden.to(device))

train_loss = loss(output, category_tensor)

train_loss.backward()

for p in rnn.parameters():

p.data.add_(p.grad.data, alpha=-lr)

current_loss += train_loss.item()

if epoch % 5000 == 0: # 每迭代5000次输出信息

guess, guess_i = category_from_output(output)

correct = '√' if guess == category else '×(%s)' % category

print('%d %d%% %2.4f sec(s) %.4f %s / %s %s' %

(epoch, epoch / epoch_num * 100, time.time() - epoch_start_time, train_loss.item(), word, guess, correct))

if (epoch+1) % 1000 == 0: # 每迭代1000次,记录下该1000次的平均损失

all_losses.append(current_loss / 1000)

current_loss = 0

plt.figure() # 画出损失变化图

plt.plot(all_losses)

plt.show()

最终的损失变化图为:

完整代码

import string

import os

import glob

import unicodedata

import torch

import torch.nn as nn

import random

import time

import matplotlib.pyplot as plt

import matplotlib.ticker as ticker

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

all_letters = string.ascii_letters + " .,;'" # string.ascii_letters的作用是生成所有的英文字母

n_letters = len(all_letters)

n_hidden = 128

n_categories = 18

class RNN(nn.Module):

def __init__(self, input_size, hidden_size, output_size):

super(RNN, self).__init__()

self.input_size = input_size

self.hidden_size = hidden_size

self.output_size = output_size

self.i2h = nn.Linear(input_size + self.hidden_size, self.hidden_size)

self.i2o = nn.Linear(input_size + self.hidden_size, self.output_size) # 这里写成self.i2o = nn.Linear(self.hidden_size, self.output_size)也是可以的,forward传入的就得是hidden的值

self.softmax = nn.LogSoftmax(dim=1) # dim=1表示对第1维度的数据进行logsoftmax操作

def forward(self, input, hidden):

tmp = torch.cat((input, hidden), 1)

hidden = self.i2h(tmp)

output = self.i2o(tmp)

output = self.softmax(output)

return output, hidden

def init_hidden(self): # 隐藏层初始化0操作

return torch.zeros(1, self.hidden_size).to(device)

def find_files(path):

"""

:param path:文件路径

:return: 文件列表地址

"""

return glob.glob(path) # glob模块提供了一个函数用于从目录通配符搜索中生成文件列表:

def unicode_to_ascii(str):

"""

:param str:名字

:return:返回均采用NFD编码方式的名字

"""

return ''.join(

c for c in unicodedata.normalize('NFD', str) # 对文字采用相同的编码方式

if unicodedata.category(c) != 'Mn' and c in all_letters

)

def read_lines(files_list):

"""

读取每个文件的内容

:param files_list:文件所在地址列表

:return:{国家:名字列表}

"""

category_lines = {}

all_categories = []

for file in files_list:

# os.path.splitext:分割路径,返回路径名和文件扩展名的元组

# os.path.basename:返回文件名

category = os.path.splitext(os.path.basename(file))[0]

line = [unicode_to_ascii(line) for line in open(file)]

all_categories.append(category)

category_lines[category] = line

return all_categories, category_lines

# print(all_categories)

# print(category_lines['Chinese'])

def get_index(letter):

"""

:param letter: 字母

:return: 字母索引

"""

return all_letters.find(letter)

def letter_to_tensor(letter):

"""

将字母转换成张量

:param letter:字母

:return: 张量

"""

tensor = torch.zeros(1, n_letters)

tensor[0][get_index(letter)] = 1

return tensor.to(device) # 将tensor放到cuda上

def word_to_tensor(word):

"""

将单词转换成张量

:param word: 单词

:return: 张量

"""

tensor = torch.zeros(len(word), 1, n_letters)

for i, letter in enumerate(word):

tensor[i][0][get_index(letter)] = 1

return tensor.to(device)

def random_choice(obj):

return obj[random.randint(0, len(obj)-1)]

def random_training_example():

category = random_choice(all_categories)

word = random_choice(category_lines[category])

category_tensor = torch.tensor([all_categories.index(category)], dtype=torch.long).to(device)

word_tensor = word_to_tensor(word)

return category, word, category_tensor, word_tensor

def category_from_output(output):

top_n, top_i = output.topk(1)

category_i = top_i[0].item()

return all_categories[category_i], category_i

def train():

rnn = RNN(n_letters, n_hidden, n_categories).to(device) # 创建模型

loss = nn.NLLLoss() # 定义损失函数

lr = 0.005 # 学习率

epoch_num = 100000 # 迭代次数

current_loss = 0 # 累计损失

all_losses = [] # 记录损失,后续画图

for epoch in range(epoch_num):

epoch_start_time = time.time() # 记录一次迭代的时间

category, word, category_tensor, word_tensor = random_training_example() # 随机选择一条训练数据

rnn.zero_grad() # 梯度清零,这和optimizer.zero_grad()是等价的

hidden = rnn.init_hidden() # 初始化隐藏层

for i in range(word_tensor.size()[0]):

output, hidden = rnn(word_tensor[i].to(device), hidden.to(device))

train_loss = loss(output, category_tensor)

train_loss.backward()

for p in rnn.parameters():

p.data.add_(p.grad.data, alpha=-lr)

current_loss += train_loss.item()

if epoch % 5000 == 0: # 每迭代5000次输出信息

guess, guess_i = category_from_output(output)

correct = '√' if guess == category else '×(%s)' % category

print('%d %d%% %2.4f sec(s) %.4f %s / %s %s' %

(epoch, epoch / epoch_num * 100, time.time() - epoch_start_time, train_loss.item(), word, guess, correct))

if (epoch+1) % 1000 == 0: # 每迭代1000次,记录下该1000次的平均损失

all_losses.append(current_loss / 1000)

current_loss = 0

plt.figure() # 画出损失变化图

plt.plot(all_losses)

plt.show()

if __name__ == '__main__':

files_list = find_files('./names/*.txt')

all_categories, category_lines = read_lines(files_list)

train()