No preamble, straight to the good stuff!

Everything below comes from the official documentation:

http://kafka.apache.org/documentation/

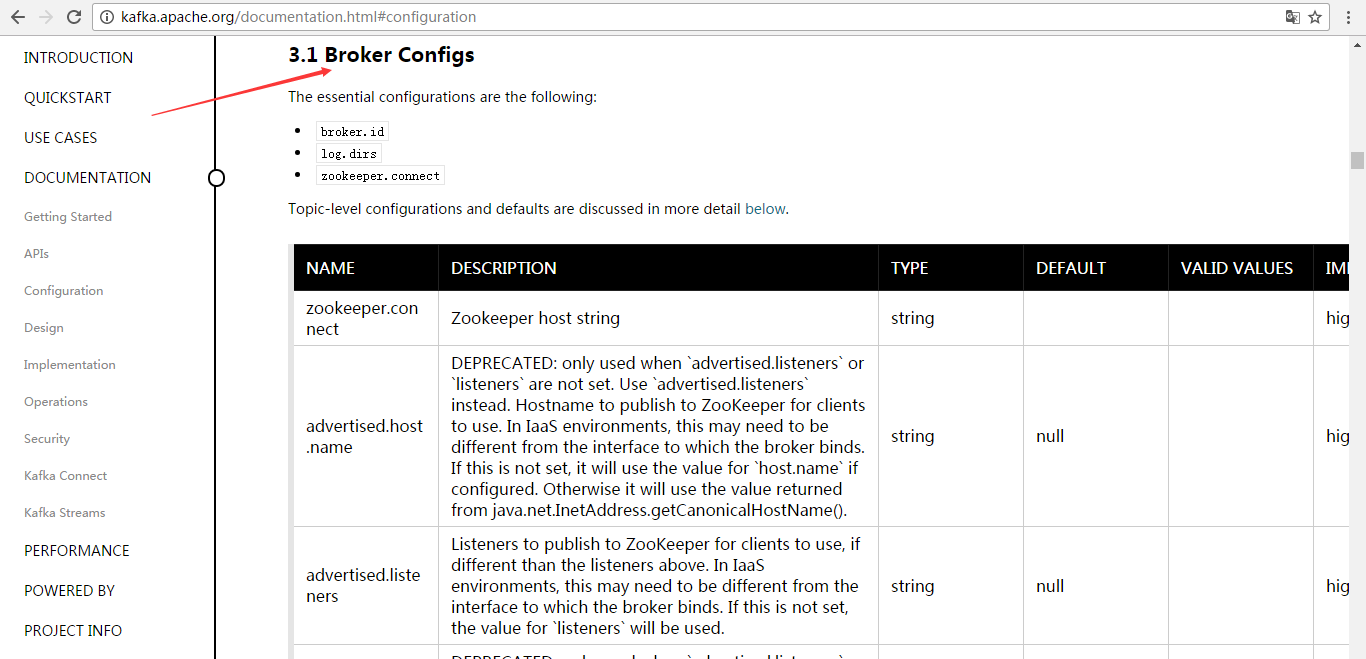

3.1 Broker Configs


The essential configurations are the following:

- `broker.id`
- `log.dirs`
- `zookeeper.connect`
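As a quick illustration of the three essential settings, here is a minimal Python sketch that parses a `server.properties`-style text and reports which of them are missing. The helper names (`parse_properties`, `missing_essentials`) and the sample values are assumptions made for this example; they are not part of Kafka.

```python
# Hypothetical helper, not part of Kafka: check a properties text for the
# three essential broker settings named above.
ESSENTIAL_KEYS = ("broker.id", "log.dirs", "zookeeper.connect")


def parse_properties(text):
    """Parse simple key=value lines, skipping blank lines and '#' comments."""
    props = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if sep:
            props[key.strip()] = value.strip()
    return props


def missing_essentials(props):
    """Return the essential keys that are absent from the parsed properties."""
    return [key for key in ESSENTIAL_KEYS if key not in props]


# Illustrative values only; adjust them for a real deployment.
SAMPLE = """
# minimal broker configuration
broker.id=0
log.dirs=/tmp/kafka-logs
zookeeper.connect=localhost:2181
"""

sample_props = parse_properties(SAMPLE)
print(missing_essentials(sample_props))
```

The parser handles only plain `key=value` lines, which is enough for a sanity check but is not a full Java properties parser.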


Topic-level configurations and defaults are discussed in more detail below.


| NAME | DESCRIPTION | TYPE | DEFAULT | VALID VALUES | IMPORTANCE |
|---|---|---|---|---|---|
| zookeeper.connect | Zookeeper host string | string | | | high |
| advertised.host.name | DEPRECATED: only used when `advertised.listeners` or `listeners` are not set. Use `advertised.listeners` instead. Hostname to publish to ZooKeeper for clients to use. In IaaS environments, this may need to be different from the interface to which the broker binds. If this is not set, it will use the value for `host.name` if configured. Otherwise it will use the value returned from java.net.InetAddress.getCanonicalHostName(). | string | null | | high |
| advertised.listeners | Listeners to publish to ZooKeeper for clients to use, if different than the listeners above. In IaaS environments, this may need to be different from the interface to which the broker binds. If this is not set, the value for `listeners` will be used. | string | null | | high |
| advertised.port | DEPRECATED: only used when `advertised.listeners` or `listeners` are not set. Use `advertised.listeners` instead. The port to publish to ZooKeeper for clients to use. In IaaS environments, this may need to be different from the port to which the broker binds. If this is not set, it will publish the same port that the broker binds to. | int | null | | high |
| auto.create.topics.enable | Enable auto creation of topic on the server | boolean | true | | high |
| auto.leader.rebalance.enable | Enables auto leader balancing. A background thread checks and triggers leader balance if required at regular intervals | boolean | true | | high |
| background.threads | The number of threads to use for various background processing tasks | int | 10 | [1,...] | high |
| broker.id | The broker id for this server. If unset, a unique broker id will be generated. To avoid conflicts between ZooKeeper-generated broker ids and user-configured broker ids, generated broker ids start from reserved.broker.max.id + 1. | int | -1 | | high |
| compression.type | Specify the final compression type for a given topic. This configuration accepts the standard compression codecs ('gzip', 'snappy', 'lz4'). It additionally accepts 'uncompressed' which is equivalent to no compression; and 'producer' which means retain the original compression codec set by the producer. | string | producer | | high |
| delete.topic.enable | Enables delete topic. Delete topic through the admin tool will have no effect if this config is turned off | boolean | false | | high |
| host.name | DEPRECATED: only used when `listeners` is not set. Use `listeners` instead. Hostname of broker. If this is set, it will only bind to this address. If this is not set, it will bind to all interfaces | string | "" | | high |
| leader.imbalance.check.interval.seconds | The frequency with which the partition rebalance check is triggered by the controller | long | 300 | | high |
| leader.imbalance.per.broker.percentage | The ratio of leader imbalance allowed per broker. The controller would trigger a leader balance if it goes above this value per broker. The value is specified in percentage. | int | 10 | | high |
| listeners | Listener List - Comma-separated list of URIs we will listen on and the listener names. If the listener name is not a security protocol, listener.security.protocol.map must also be set. Specify hostname as 0.0.0.0 to bind to all interfaces. Leave hostname empty to bind to default interface. Examples of legal listener lists: `PLAINTEXT://myhost:9092,SSL://:9091` and `CLIENT://0.0.0.0:9092,REPLICATION://localhost:9093` | string | null | | high |
| log.dir | The directory in which the log data is kept (supplemental for log.dirs property) | string | /tmp/kafka-logs | | high |
| log.dirs | The directories in which the log data is kept. If not set, the value in log.dir is used | string | null | | high |
| log.flush.interval.messages | The number of messages accumulated on a log partition before messages are flushed to disk | long | 9223372036854775807 | [1,...] | high |
| log.flush.interval.ms | The maximum time in ms that a message in any topic is kept in memory before flushed to disk. If not set, the value in log.flush.scheduler.interval.ms is used | long | null | | high |
| log.flush.offset.checkpoint.interval.ms | The frequency with which we update the persistent record of the last flush which acts as the log recovery point | int | 60000 | [0,...] | high |
| log.flush.scheduler.interval.ms | The frequency in ms that the log flusher checks whether any log needs to be flushed to disk | long | 9223372036854775807 | | high |
| log.retention.bytes | The maximum size of the log before deleting it | long | -1 | | high |
| log.retention.hours | The number of hours to keep a log file before deleting it (in hours), tertiary to log.retention.ms property | int | 168 | | high |
| log.retention.minutes | The number of minutes to keep a log file before deleting it (in minutes), secondary to log.retention.ms property. If not set, the value in log.retention.hours is used | int | null | | high |
| log.retention.ms | The number of milliseconds to keep a log file before deleting it (in milliseconds). If not set, the value in log.retention.minutes is used | long | null | | high |
| log.roll.hours | The maximum time before a new log segment is rolled out (in hours), secondary to log.roll.ms property | int | 168 | [1,...] | high |
| log.roll.jitter.hours | The maximum jitter to subtract from logRollTimeMillis (in hours), secondary to log.roll.jitter.ms property | int | 0 | [0,...] | high |
| log.roll.jitter.ms | The maximum jitter to subtract from logRollTimeMillis (in milliseconds). If not set, the value in log.roll.jitter.hours is used | long | null | | high |
| log.roll.ms | The maximum time before a new log segment is rolled out (in milliseconds). If not set, the value in log.roll.hours is used | long | null | | high |
| log.segment.bytes | The maximum size of a single log file | int | 1073741824 | [14,...] | high |
| log.segment.delete.delay.ms | The amount of time to wait before deleting a file from the filesystem | long | 60000 | [0,...] | high |
| message.max.bytes | The maximum size of message that the server can receive | int | 1000012 | [0,...] | high |
| min.insync.replicas | When a producer sets acks to "all" (or "-1"), min.insync.replicas specifies the minimum number of replicas that must acknowledge a write for the write to be considered successful. If this minimum cannot be met, then the producer will raise an exception (either NotEnoughReplicas or NotEnoughReplicasAfterAppend). When used together, min.insync.replicas and acks allow you to enforce greater durability guarantees. A typical scenario would be to create a topic with a replication factor of 3, set min.insync.replicas to 2, and produce with acks of "all". This will ensure that the producer raises an exception if a majority of replicas do not receive a write. | int | 1 | [1,...] | high |
| num.io.threads | The number of io threads that the server uses for carrying out network requests | int | 8 | [1,...] | high |
| num.network.threads | The number of network threads that the server uses for handling network requests | int | 3 | [1,...] | high |
| num.recovery.threads.per.data.dir | The number of threads per data directory to be used for log recovery at startup and flushing at shutdown | int | 1 | [1,...] | high |
| num.replica.fetchers | Number of fetcher threads used to replicate messages from a source broker. Increasing this value can increase the degree of I/O parallelism in the follower broker. | int | 1 | | high |
| offset.metadata.max.bytes | The maximum size for a metadata entry associated with an offset commit | int | 4096 | | high |
| offsets.commit.required.acks | The required acks before the commit can be accepted. In general, the default (-1) should not be overridden | short | -1 | | high |
| offsets.commit.timeout.ms | Offset commit will be delayed until all replicas for the offsets topic receive the commit or this timeout is reached. This is similar to the producer request timeout. | int | 5000 | [1,...] | high |
| offsets.load.buffer.size | Batch size for reading from the offsets segments when loading offsets into the cache. | int | 5242880 | [1,...] | high |
| offsets.retention.check.interval.ms | Frequency at which to check for stale offsets | long | 600000 | [1,...] | high |
| offsets.retention.minutes | Log retention window in minutes for offsets topic | int | 1440 | [1,...] | high |
| offsets.topic.compression.codec | Compression codec for the offsets topic - compression may be used to achieve "atomic" commits | int | 0 | | high |
| offsets.topic.num.partitions | The number of partitions for the offset commit topic (should not change after deployment) | int | 50 | [1,...] | high |
| offsets.topic.replication.factor | The replication factor for the offsets topic (set higher to ensure availability). To ensure that the effective replication factor of the offsets topic is the configured value, the number of alive brokers has to be at least the replication factor at the time of the first request for the offsets topic. If not, either the offsets topic creation will fail or it will get a replication factor of min(alive brokers, configured replication factor) | short | 3 | [1,...] | high |
| offsets.topic.segment.bytes | The offsets topic segment bytes should be kept relatively small in order to facilitate faster log compaction and cache loads | int | 104857600 | [1,...] | high |
| port | DEPRECATED: only used when `listeners` is not set. Use `listeners` instead. The port to listen and accept connections on | int | 9092 | | high |
| queued.max.requests | The number of queued requests allowed before blocking the network threads | int | 500 | [1,...] | high |
| quota.consumer.default | DEPRECATED: Used only when dynamic default quotas are not configured for `<user>`, `<client-id>` or `<user, client-id>` in Zookeeper. Any consumer distinguished by clientId/consumer group will get throttled if it fetches more bytes than this value per-second | long | 9223372036854775807 | [1,...] | high |
| quota.producer.default | DEPRECATED: Used only when dynamic default quotas are not configured for `<user>`, `<client-id>` or `<user, client-id>` in Zookeeper. Any producer distinguished by clientId will get throttled if it produces more bytes than this value per-second | long | 9223372036854775807 | [1,...] | high |
| replica.fetch.min.bytes | Minimum bytes expected for each fetch response. If not enough bytes, wait up to replicaMaxWaitTimeMs | int | 1 | | high |
| replica.fetch.wait.max.ms | Max wait time for each fetcher request issued by follower replicas. This value should always be less than the replica.lag.time.max.ms at all times to prevent frequent shrinking of ISR for low throughput topics | int | 500 | | high |
| replica.high.watermark.checkpoint.interval.ms | The frequency with which the high watermark is saved out to disk | long | 5000 | | high |
| replica.lag.time.max.ms | If a follower hasn't sent any fetch requests or hasn't consumed up to the leader's log end offset for at least this time, the leader will remove the follower from the ISR | long | 10000 | | high |
| replica.socket.receive.buffer.bytes | The socket receive buffer for network requests | int | 65536 | | high |
| replica.socket.timeout.ms | The socket timeout for network requests. Its value should be at least replica.fetch.wait.max.ms | int | 30000 | | high |
| request.timeout.ms | The configuration controls the maximum amount of time the client will wait for the response of a request. If the response is not received before the timeout elapses the client will resend the request if necessary or fail the request if retries are exhausted. | int | 30000 | | high |
| socket.receive.buffer.bytes | The SO_RCVBUF buffer of the socket server sockets. If the value is -1, the OS default will be used. | int | 102400 | | high |
| socket.request.max.bytes | The maximum number of bytes in a socket request | int | 104857600 | [1,...] | high |
| socket.send.buffer.bytes | The SO_SNDBUF buffer of the socket server sockets. If the value is -1, the OS default will be used. | int | 102400 | | high |
| unclean.leader.election.enable | Indicates whether to enable replicas not in the ISR set to be elected as leader as a last resort, even though doing so may result in data loss | boolean | true | | high |
| zookeeper.connection.timeout.ms | The max time that the client waits to establish a connection to zookeeper. If not set, the value in zookeeper.session.timeout.ms is used | int | null | | high |
| zookeeper.session.timeout.ms | Zookeeper session timeout | int | 6000 | | high |
| zookeeper.set.acl | Set client to use secure ACLs | boolean | false | | high |
| broker.id.generation.enable | Enable automatic broker id generation on the server. When enabled the value configured for reserved.broker.max.id should be reviewed. | boolean | true | | medium |
| broker.rack | Rack of the broker. This will be used in rack aware replication assignment for fault tolerance. Examples: `RACK1`, `us-east-1d` | string | null | | medium |
| connections.max.idle.ms | Idle connections timeout: the server socket processor threads close the connections that idle more than this | long | 600000 | | medium |
| controlled.shutdown.enable | Enable controlled shutdown of the server | boolean | true | | medium |
| controlled.shutdown.max.retries | Controlled shutdown can fail for multiple reasons. This determines the number of retries when such failure happens | int | 3 | | medium |
| controlled.shutdown.retry.backoff.ms | Before each retry, the system needs time to recover from the state that caused the previous failure (Controller fail over, replica lag etc). This config determines the amount of time to wait before retrying. | long | 5000 | | medium |
| controller.socket.timeout.ms | The socket timeout for controller-to-broker channels | int | 30000 | | medium |
| default.replication.factor | Default replication factor for automatically created topics | int | 1 | | medium |
| fetch.purgatory.purge.interval.requests | The purge interval (in number of requests) of the fetch request purgatory | int | 1000 | | medium |
| group.max.session.timeout.ms | The maximum allowed session timeout for registered consumers. Longer timeouts give consumers more time to process messages in between heartbeats at the cost of a longer time to detect failures. | int | 300000 | | medium |
| group.min.session.timeout.ms | The minimum allowed session timeout for registered consumers. Shorter timeouts result in quicker failure detection at the cost of more frequent consumer heartbeating, which can overwhelm broker resources. | int | 6000 | | medium |
| inter.broker.listener.name | Name of listener used for communication between brokers. If this is unset, the listener name is defined by security.inter.broker.protocol. It is an error to set this and security.inter.broker.protocol properties at the same time. | string | null | | medium |
| inter.broker.protocol.version | Specify which version of the inter-broker protocol will be used. This is typically bumped after all brokers were upgraded to a new version. Examples of valid values are: 0.8.0, 0.8.1, 0.8.1.1, 0.8.2, 0.8.2.0, 0.8.2.1, 0.9.0.0, 0.9.0.1. Check ApiVersion for the full list. | string | 0.10.2-IV0 | | medium |
| log.cleaner.backoff.ms | The amount of time to sleep when there are no logs to clean | long | 15000 | [0,...] | medium |
| log.cleaner.dedupe.buffer.size | The total memory used for log deduplication across all cleaner threads | long | 134217728 | | medium |
| log.cleaner.delete.retention.ms | How long delete records are retained | long | 86400000 | | medium |
| log.cleaner.enable | Enable the log cleaner process to run on the server. Should be enabled if using any topics with a cleanup.policy=compact including the internal offsets topic. If disabled those topics will not be compacted and continually grow in size. | boolean | true | | medium |
| log.cleaner.io.buffer.load.factor | Log cleaner dedupe buffer load factor. The percentage full the dedupe buffer can become. A higher value will allow more log to be cleaned at once but will lead to more hash collisions | double | 0.9 | | medium |
| log.cleaner.io.buffer.size | The total memory used for log cleaner I/O buffers across all cleaner threads | int | 524288 | [0,...] | medium |
| log.cleaner.io.max.bytes.per.second | The log cleaner will be throttled so that the sum of its read and write i/o will be less than this value on average | double | 1.7976931348623157E308 | | medium |
| log.cleaner.min.cleanable.ratio | The minimum ratio of dirty log to total log for a log to be eligible for cleaning | double | 0.5 | | medium |
| log.cleaner.min.compaction.lag.ms | The minimum time a message will remain uncompacted in the log. Only applicable for logs that are being compacted. | long | 0 | | medium |
| log.cleaner.threads | The number of background threads to use for log cleaning | int | 1 | [0,...] | medium |
| log.cleanup.policy | The default cleanup policy for segments beyond the retention window. A comma separated list of valid policies. Valid policies are: "delete" and "compact" | list | delete | [compact, delete] | medium |
| log.index.interval.bytes | The interval with which we add an entry to the offset index | int | 4096 | [0,...] | medium |
| log.index.size.max.bytes | The maximum size in bytes of the offset index | int | 10485760 | [4,...] | medium |
| log.message.format.version | Specify the message format version the broker will use to append messages to the logs. The value should be a valid ApiVersion. Some examples are: 0.8.2, 0.9.0.0, 0.10.0; check ApiVersion for more details. By setting a particular message format version, the user is certifying that all the existing messages on disk are smaller than or equal to the specified version. Setting this value incorrectly will cause consumers with older versions to break as they will receive messages with a format that they don't understand. | string | 0.10.2-IV0 | | medium |
| log.message.timestamp.difference.max.ms | The maximum difference allowed between the timestamp when a broker receives a message and the timestamp specified in the message. If log.message.timestamp.type=CreateTime, a message will be rejected if the difference in timestamp exceeds this threshold. This configuration is ignored if log.message.timestamp.type=LogAppendTime. | long | 9223372036854775807 | [0,...] | medium |
| log.message.timestamp.type | Define whether the timestamp in the message is message create time or log append time. The value should be either `CreateTime` or `LogAppendTime` | string | CreateTime | [CreateTime, LogAppendTime] | medium |
| log.preallocate | Whether to preallocate the file when creating a new segment. If you are using Kafka on Windows, you probably need to set it to true. | boolean | false | | medium |
| log.retention.check.interval.ms | The frequency in milliseconds that the log cleaner checks whether any log is eligible for deletion | long | 300000 | [1,...] | medium |
| max.connections.per.ip | The maximum number of connections we allow from each ip address | int | 2147483647 | [1,...] | medium |
| max.connections.per.ip.overrides | Per-ip or hostname overrides to the default maximum number of connections | string | "" | | medium |
| num.partitions | The default number of log partitions per topic | int | 1 | [1,...] | medium |
| principal.builder.class | The fully qualified name of a class that implements the PrincipalBuilder interface, which is currently used to build the Principal for connections with the SSL SecurityProtocol. | class | org.apache.kafka.common.security.auth.DefaultPrincipalBuilder | | medium |
| producer.purgatory.purge.interval.requests | The purge interval (in number of requests) of the producer request purgatory | int | 1000 | | medium |
| replica.fetch.backoff.ms | The amount of time to sleep when fetch partition error occurs. | int | 1000 | [0,...] | medium |
| replica.fetch.max.bytes | The number of bytes of messages to attempt to fetch for each partition. This is not an absolute maximum: if the first message in the first non-empty partition of the fetch is larger than this value, the message will still be returned to ensure that progress can be made. The maximum message size accepted by the broker is defined via message.max.bytes (broker config) or max.message.bytes (topic config). | int | 1048576 | [0,...] | medium |
| replica.fetch.response.max.bytes | Maximum bytes expected for the entire fetch response. This is not an absolute maximum: if the first message in the first non-empty partition of the fetch is larger than this value, the message will still be returned to ensure that progress can be made. The maximum message size accepted by the broker is defined via message.max.bytes (broker config) or max.message.bytes (topic config). | int | 10485760 | [0,...] | medium |
| reserved.broker.max.id | Max number that can be used for a broker.id | int | 1000 | [0,...] | medium |
| sasl.enabled.mechanisms | The list of SASL mechanisms enabled in the Kafka server. The list may contain any mechanism for which a security provider is available. Only GSSAPI is enabled by default. | list | GSSAPI | | medium |
| sasl.kerberos.kinit.cmd | Kerberos kinit command path. | string | /usr/bin/kinit | | medium |
| sasl.kerberos.min.time.before.relogin | Login thread sleep time between refresh attempts. | long | 60000 | | medium |
| sasl.kerberos.principal.to.local.rules | A list of rules for mapping from principal names to short names (typically operating system usernames). The rules are evaluated in order and the first rule that matches a principal name is used to map it to a short name. Any later rules in the list are ignored. By default, principal names of the form {username}/{hostname}@{REALM} are mapped to {username}. For more details on the format please see security authorization and acls. | list | DEFAULT | | medium |
| sasl.kerberos.service.name | The Kerberos principal name that Kafka runs as. This can be defined either in Kafka's JAAS config or in Kafka's config. | string | null | | medium |
| sasl.kerberos.ticket.renew.jitter | Percentage of random jitter added to the renewal time. | double | 0.05 | | medium |
| sasl.kerberos.ticket.renew.window.factor | Login thread will sleep until the specified window factor of time from last refresh to ticket's expiry has been reached, at which time it will try to renew the ticket. | double | 0.8 | | medium |
| sasl.mechanism.inter.broker.protocol | SASL mechanism used for inter-broker communication. Default is GSSAPI. | string | GSSAPI | | medium |
| security.inter.broker.protocol | Security protocol used to communicate between brokers. Valid values are: PLAINTEXT, SSL, SASL_PLAINTEXT, SASL_SSL. It is an error to set this and inter.broker.listener.name properties at the same time. | string | PLAINTEXT | | medium |
| ssl.cipher.suites | A list of cipher suites. This is a named combination of authentication, encryption, MAC and key exchange algorithm used to negotiate the security settings for a network connection using TLS or SSL network protocol. By default all the available cipher suites are supported. | list | null | | medium |
| ssl.client.auth | Configures the Kafka broker to request client authentication. The common settings are `required`, `requested`, and `none`. | string | none | [required, requested, none] | medium |
| ssl.enabled.protocols | The list of protocols enabled for SSL connections. | list | TLSv1.2,TLSv1.1,TLSv1 | | medium |
| ssl.key.password | The password of the private key in the key store file. This is optional for client. | password | null | | medium |
| ssl.keymanager.algorithm | The algorithm used by key manager factory for SSL connections. Default value is the key manager factory algorithm configured for the Java Virtual Machine. | string | SunX509 | | medium |
| ssl.keystore.location | The location of the key store file. This is optional for client and can be used for two-way authentication for client. | string | null | | medium |
| ssl.keystore.password | The store password for the key store file. This is optional for client and only needed if ssl.keystore.location is configured. | password | null | | medium |
| ssl.keystore.type | The file format of the key store file. This is optional for client. | string | JKS | | medium |
| ssl.protocol | The SSL protocol used to generate the SSLContext. Default setting is TLS, which is fine for most cases. Allowed values in recent JVMs are TLS, TLSv1.1 and TLSv1.2. SSL, SSLv2 and SSLv3 may be supported in older JVMs, but their usage is discouraged due to known security vulnerabilities. | string | TLS | | medium |
| ssl.provider | The name of the security provider used for SSL connections. Default value is the default security provider of the JVM. | string | null | | medium |
| ssl.trustmanager.algorithm | The algorithm used by trust manager factory for SSL connections. Default value is the trust manager factory algorithm configured for the Java Virtual Machine. | string | PKIX | | medium |
| ssl.truststore.location | The location of the trust store file. | string | null | | medium |
| ssl.truststore.password | The password for the trust store file. | password | null | | medium |
| ssl.truststore.type | The file format of the trust store file. | string | JKS | | medium |
| authorizer.class.name | The authorizer class that should be used for authorization | string | "" | | low |
| create.topic.policy.class.name | The create topic policy class that should be used for validation. The class should implement the `org.apache.kafka.server.policy.CreateTopicPolicy` interface. | class | null | | low |
| listener.security.protocol.map | Map between listener names and security protocols. This must be defined for the same security protocol to be usable in more than one port or IP. For example, we can separate internal and external traffic even if SSL is required for both. Concretely, we could define listeners with names INTERNAL and EXTERNAL and this property as: `INTERNAL:SSL,EXTERNAL:SSL`. As shown, key and value are separated by a colon and map entries are separated by commas. Each listener name should only appear once in the map. | string | SSL:SSL,SASL_PLAINTEXT:SASL_PLAINTEXT,TRACE:TRACE,SASL_SSL:SASL_SSL,PLAINTEXT:PLAINTEXT | | low |
| metric.reporters | A list of classes to use as metrics reporters. Implementing the MetricReporter interface allows plugging in classes that will be notified of new metric creation. The JmxReporter is always included to register JMX statistics. | list | "" | | low |
| metrics.num.samples | The number of samples maintained to compute metrics. | int | 2 | [1,...] | low |
| metrics.recording.level | The highest recording level for metrics. | string | INFO | | low |
| metrics.sample.window.ms | The window of time a metrics sample is computed over. | long | 30000 | [1,...] | low |
| quota.window.num | The number of samples to retain in memory for client quotas | int | 11 | [1,...] | low |
| quota.window.size.seconds | The time span of each sample for client quotas | int | 1 | [1,...] | low |
| replication.quota.window.num | The number of samples to retain in memory for replication quotas | int | 11 | [1,...] | low |
| replication.quota.window.size.seconds | The time span of each sample for replication quotas | int | 1 | [1,...] | low |
| ssl.endpoint.identification.algorithm | The endpoint identification algorithm to validate server hostname using server certificate. | string | null | | low |
| ssl.secure.random.implementation | The SecureRandom PRNG implementation to use for SSL cryptography operations. | string | null | | low |
| zookeeper.sync.time.ms | How far a ZK follower can be behind a ZK leader | int | 2000 | | low |

More details about broker configuration can be found in the scala class kafka.server.KafkaConfig.
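Several rows in the broker table describe fallback chains: `log.retention.ms` falls back to `log.retention.minutes`, which in turn falls back to `log.retention.hours` (default 168). The following Python sketch models that documented precedence. The function name `effective_retention_ms` is an assumption for illustration, and the code is a reading of the table rather than Kafka's actual implementation.

```python
# Hypothetical model of the documented precedence:
# log.retention.ms > log.retention.minutes > log.retention.hours.
DEFAULT_RETENTION_HOURS = 168  # default listed for log.retention.hours


def effective_retention_ms(props):
    """Return the retention time in milliseconds implied by the given settings."""
    if props.get("log.retention.ms") is not None:
        return int(props["log.retention.ms"])
    if props.get("log.retention.minutes") is not None:
        return int(props["log.retention.minutes"]) * 60 * 1000
    hours = int(props.get("log.retention.hours", DEFAULT_RETENTION_HOURS))
    return hours * 60 * 60 * 1000


print(effective_retention_ms({}))  # 604800000, i.e. 168 hours
print(effective_retention_ms({"log.retention.minutes": "30",
                              "log.retention.hours": "1"}))  # 1800000
```

The same pattern applies to the `log.roll.ms` / `log.roll.hours` and `log.roll.jitter.ms` / `log.roll.jitter.hours` pairs described in the table.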

Topic-level configuration

Configurations pertinent to topics have both a server default as well as an optional per-topic override. If no per-topic configuration is given, the server default is used. The override can be set at topic creation time by giving one or more --config options. This example creates a topic named my-topic with a custom max message size and flush rate:

> bin/kafka-topics.sh --zookeeper localhost:2181 --create --topic my-topic --partitions 1 --replication-factor 1 --config max.message.bytes=64000 --config flush.messages=1
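The override-or-default rule described above can be sketched in a few lines of Python. The mapping from topic-level names to server default properties is taken from the topic-level table in this document; the function name `effective_topic_config` and the dictionaries are assumptions for illustration and do not reflect Kafka's internal code.

```python
# Topic-level config name -> broker property that supplies its default
# (pairs copied from the topic-level table in this document).
SERVER_DEFAULT_PROPERTY = {
    "cleanup.policy": "log.cleanup.policy",
    "delete.retention.ms": "log.cleaner.delete.retention.ms",
    "flush.messages": "log.flush.interval.messages",
    "max.message.bytes": "message.max.bytes",
}


def effective_topic_config(name, topic_overrides, broker_config):
    """Use the per-topic override if present, else the server default."""
    if name in topic_overrides:
        return topic_overrides[name]
    return broker_config[SERVER_DEFAULT_PROPERTY[name]]


# Broker defaults as listed in the broker table.
broker_config = {
    "log.cleanup.policy": "delete",
    "log.cleaner.delete.retention.ms": 86400000,
    "log.flush.interval.messages": 9223372036854775807,
    "message.max.bytes": 1000012,
}
# Overrides from the my-topic creation example.
topic_overrides = {"max.message.bytes": 64000, "flush.messages": 1}

print(effective_topic_config("max.message.bytes", topic_overrides, broker_config))
print(effective_topic_config("cleanup.policy", topic_overrides, broker_config))
```

Here `max.message.bytes` resolves to the override (64000), while `cleanup.policy` has no override and falls back to the broker's `log.cleanup.policy`.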

Overrides can also be changed or set later using the alter configs command. This example updates the max message size for my-topic:

> bin/kafka-configs.sh --zookeeper localhost:2181 --entity-type topics --entity-name my-topic --alter --add-config max.message.bytes=128000

To check the overrides set on the topic, run:

> bin/kafka-configs.sh --zookeeper localhost:2181 --entity-type topics --entity-name my-topic --describe

To remove an override, run:

> bin/kafka-configs.sh --zookeeper localhost:2181 --entity-type topics --entity-name my-topic --alter --delete-config max.message.bytes
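The three `kafka-configs.sh` invocations above differ only in their trailing flags. As a small sketch, the helper below assembles the argument list for each case in Python, using only the flags shown in the commands above. The function name `kafka_configs_cmd` and its `action` values are assumptions for illustration, and `shlex.join` requires Python 3.8 or later.

```python
import shlex


def kafka_configs_cmd(topic, action, config=None, zookeeper="localhost:2181"):
    """Build the kafka-configs.sh argument list for a topic-level override.

    action is one of "add", "describe" or "delete" (hypothetical labels).
    """
    base = [
        "bin/kafka-configs.sh", "--zookeeper", zookeeper,
        "--entity-type", "topics", "--entity-name", topic,
    ]
    if action == "describe":
        return base + ["--describe"]
    if action in ("add", "delete"):
        if not config:
            raise ValueError("config is required for add and delete")
        flag = "--add-config" if action == "add" else "--delete-config"
        return base + ["--alter", flag, config]
    raise ValueError("unknown action: " + action)


print(shlex.join(kafka_configs_cmd("my-topic", "add", "max.message.bytes=128000")))
print(shlex.join(kafka_configs_cmd("my-topic", "describe")))
print(shlex.join(kafka_configs_cmd("my-topic", "delete", "max.message.bytes")))
```

Returning a list rather than a single string makes it straightforward to pass the result to `subprocess.run` without shell quoting issues.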

The following are the topic-level configurations. The server's default configuration for this property is given under the Server Default Property heading. A given server default config value only applies to a topic if it does not have an explicit topic config override.

| NAME | DESCRIPTION | TYPE | DEFAULT | VALID VALUES | SERVER DEFAULT PROPERTY | IMPORTANCE |

|---|---|---|---|---|---|---|

| cleanup.policy | A string that is either "delete" or "compact". This string designates the retention policy to use on old log segments. The default policy ("delete") will discard old segments when their retention time or size limit has been reached. The "compact" setting will enable log compaction on the topic. | list | delete | [compact, delete] | log.cleanup.policy | medium |

| compression.type | Specify the final compression type for a given topic. This configuration accepts the standard compression codecs ('gzip', 'snappy', lz4). It additionally accepts 'uncompressed' which is equivalent to no compression; and 'producer' which means retain the original compression codec set by the producer. | string | producer | [uncompressed, snappy, lz4, gzip, producer] | compression.type | medium |

| delete.retention.ms | The amount of time to retain delete tombstone markers for log compacted topics. This setting also gives a bound on the time in which a consumer must complete a read if they begin from offset 0 to ensure that they get a valid snapshot of the final stage (otherwise delete tombstones may be collected before they complete their scan). | long | 86400000 | [0,...] | log.cleaner.delete.retention.ms | medium |

| file.delete.delay.ms | The time to wait before deleting a file from the filesystem | long | 60000 | [0,...] | log.segment.delete.delay.ms | medium |

| flush.messages | This setting allows specifying an interval at which we will force an fsync of data written to the log. For example if this was set to 1 we would fsync after every message; if it were 5 we would fsync after every five messages. In general we recommend you not set this and use replication for durability and allow the operating system's background flush capabilities as it is more efficient. This setting can be overridden on a per-topic basis (see the per-topic configuration section). | long | 9223372036854775807 | [0,...] | log.flush.interval.messages | medium |

| flush.ms | This setting allows specifying a time interval at which we will force an fsync of data written to the log. For example if this was set to 1000 we would fsync after 1000 ms had passed. In general we recommend you not set this and use replication for durability and allow the operating system's background flush capabilities as it is more efficient. | long | 9223372036854775807 | [0,...] | log.flush.interval.ms | medium |

| follower.replication.throttled.replicas | A list of replicas for which log replication should be throttled on the follower side. The list should describe a set of replicas in the form [PartitionId]:[BrokerId],[PartitionId]:[BrokerId],... or alternatively the wildcard '*' can be used to throttle all replicas for this topic. | list | "" | validated by kafka.server.ThrottledReplicaListValidator | follower.replication.throttled.replicas | medium |

| index.interval.bytes | This setting controls how frequently Kafka adds an index entry to its offset index. The default setting ensures that we index a message roughly every 4096 bytes. More indexing allows reads to jump closer to the exact position in the log but makes the index larger. You probably don't need to change this. | int | 4096 | [0,...] | log.index.interval.bytes | medium |

| leader.replication.throttled.replicas | A list of replicas for which log replication should be throttled on the leader side. The list should describe a set of replicas in the form [PartitionId]:[BrokerId],[PartitionId]:[BrokerId],... or alternatively the wildcard '*' can be used to throttle all replicas for this topic. | list | "" | validated by kafka.server.ThrottledReplicaListValidator | leader.replication.throttled.replicas | medium |

| max.message.bytes | This is the largest message size Kafka will allow to be appended. Note that if you increase this size you must also increase your consumers' fetch size so they can fetch messages this large. | int | 1000012 | [0,...] | message.max.bytes | medium |

| message.format.version | Specify the message format version the broker will use to append messages to the logs. The value should be a valid ApiVersion. Some examples are: 0.8.2, 0.9.0.0, 0.10.0; check ApiVersion for more details. By setting a particular message format version, the user is certifying that all the existing messages on disk are of a version smaller than or equal to the specified version. Setting this value incorrectly will cause consumers with older versions to break as they will receive messages with a format that they don't understand. | string | 0.10.2-IV0 | | log.message.format.version | medium |

| message.timestamp.difference.max.ms | The maximum difference allowed between the timestamp when a broker receives a message and the timestamp specified in the message. If message.timestamp.type=CreateTime, a message will be rejected if the difference in timestamp exceeds this threshold. This configuration is ignored if message.timestamp.type=LogAppendTime. | long | 9223372036854775807 | [0,...] | log.message.timestamp.difference.max.ms | medium |

| message.timestamp.type | Define whether the timestamp in the message is message create time or log append time. The value should be either `CreateTime` or `LogAppendTime`. | string | CreateTime | | log.message.timestamp.type | medium |

| min.cleanable.dirty.ratio | This configuration controls how frequently the log compactor will attempt to clean the log (assuming log compaction is enabled). By default we will avoid cleaning a log where more than 50% of the log has been compacted. This ratio bounds the maximum space wasted in the log by duplicates (at 50% at most 50% of the log could be duplicates). A higher ratio will mean fewer, more efficient cleanings but will mean more wasted space in the log. | double | 0.5 | [0,...,1] | log.cleaner.min.cleanable.ratio | medium |

| min.compaction.lag.ms | The minimum time a message will remain uncompacted in the log. Only applicable for logs that are being compacted. | long | 0 | [0,...] | log.cleaner.min.compaction.lag.ms | medium |

| min.insync.replicas | When a producer sets acks to "all" (or "-1"), min.insync.replicas specifies the minimum number of replicas that must acknowledge a write for the write to be considered successful. If this minimum cannot be met, then the producer will raise an exception (either NotEnoughReplicas or NotEnoughReplicasAfterAppend). When used together, min.insync.replicas and acks allow you to enforce greater durability guarantees. A typical scenario would be to create a topic with a replication factor of 3, set min.insync.replicas to 2, and produce with acks of "all". This will ensure that the producer raises an exception if a majority of replicas do not receive a write. | int | 1 | [1,...] | min.insync.replicas | medium |

| preallocate | Whether to preallocate the file when creating a new log segment. | boolean | false | | log.preallocate | medium |

| retention.bytes | This configuration controls the maximum size a log can grow to before we will discard old log segments to free up space if we are using the "delete" retention policy. By default there is no size limit, only a time limit. | long | -1 | | log.retention.bytes | medium |

| retention.ms | This configuration controls the maximum time we will retain a log before we will discard old log segments to free up space if we are using the "delete" retention policy. This represents an SLA on how soon consumers must read their data. | long | 604800000 | | log.retention.ms | medium |

| segment.bytes | This configuration controls the segment file size for the log. Retention and cleaning is always done a file at a time so a larger segment size means fewer files but less granular control over retention. | int | 1073741824 | [14,...] | log.segment.bytes | medium |

| segment.index.bytes | This configuration controls the size of the index that maps offsets to file positions. We preallocate this index file and shrink it only after log rolls. You generally should not need to change this setting. | int | 10485760 | [0,...] | log.index.size.max.bytes | medium |

| segment.jitter.ms | The maximum random jitter subtracted from the scheduled segment roll time to avoid thundering herds of segment rolling | long | 0 | [0,...] | log.roll.jitter.ms | medium |

| segment.ms | This configuration controls the period of time after which Kafka will force the log to roll even if the segment file isn't full to ensure that retention can delete or compact old data. | long | 604800000 | [0,...] | log.roll.ms | medium |

| unclean.leader.election.enable | Indicates whether to enable replicas not in the ISR set to be elected as leader as a last resort, even though doing so may result in data loss. | boolean | true | | unclean.leader.election.enable | medium |
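
To make the table's types, defaults, and valid ranges concrete, here is a minimal Python sketch that checks per-topic overrides before they are applied. It is not part of Kafka: the names `TOPIC_CONFIG_SPECS`, `validate_override`, `effective_config`, and `acks_all_write_accepted` are hypothetical, the spec covers only a handful of rows copied from the table, and the last function is a deliberately simplified model of the `min.insync.replicas` rule described above.

```python
# Illustrative subset of the topic-level table: name -> (type, default, min, max).
# None means the table lists no bound on that side.
TOPIC_CONFIG_SPECS = {
    "delete.retention.ms": (int, 86400000, 0, None),
    "max.message.bytes": (int, 1000012, 0, None),
    "min.cleanable.dirty.ratio": (float, 0.5, 0, 1),
    "min.insync.replicas": (int, 1, 1, None),
    "segment.bytes": (int, 1073741824, 14, None),
}


def validate_override(name, value):
    """Return value if it matches the table's type and range, else raise ValueError."""
    if name not in TOPIC_CONFIG_SPECS:
        raise ValueError(f"unknown topic config: {name}")
    expected_type, _default, low, high = TOPIC_CONFIG_SPECS[name]
    # A double accepts ints too; bool is excluded because it subclasses int.
    allowed = (int, float) if expected_type is float else (int,)
    if isinstance(value, bool) or not isinstance(value, allowed):
        raise ValueError(f"{name} expects {expected_type.__name__}, got {value!r}")
    if low is not None and value < low:
        raise ValueError(f"{name}={value} is below the minimum {low}")
    if high is not None and value > high:
        raise ValueError(f"{name}={value} is above the maximum {high}")
    return value


def effective_config(overrides):
    """Start from the table defaults and apply validated per-topic overrides."""
    config = {name: spec[1] for name, spec in TOPIC_CONFIG_SPECS.items()}
    for name, value in overrides.items():
        config[name] = validate_override(name, value)
    return config


def acks_all_write_accepted(in_sync_replicas, min_insync_replicas):
    """Simplified model: an acks=all write needs at least min.insync.replicas in sync."""
    return in_sync_replicas >= min_insync_replicas
```

For the typical scenario in the `min.insync.replicas` row (replication factor 3, `min.insync.replicas` set to 2), `acks_all_write_accepted(2, 2)` is true with one replica out of sync, while `acks_all_write_accepted(1, 2)` is false with two out of sync, which is the case where the producer would see an exception.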