prometheus组件介绍

1.Prometheus Server: 用于收集和存储时间序列数据。

2.Client Library: 客户端库,检测应用程序代码,当Prometheus抓取实例的HTTP端点时,客户端库会将所有跟踪的metrics指标的当前状态发送到prometheus server端。

3.Exporters: prometheus支持多种exporter,通过exporter可以采集metrics数据,然后发送到prometheus server端

4.Alertmanager: 从 Prometheus server 端接收到 alerts 后,会进行去重,分组,并路由到相应的接收方,发出报警,常见的接收方式有:电子邮件,微信,钉钉, slack等。

5.Grafana:监控仪表盘

6.pushgateway: 各个目标主机可上报数据到pushgatewy,然后prometheus server统一从pushgateway拉取数据。

Prometheus server由三个部分组成,Retrieval,Storage,PromQL

Retrieval负责在活跃的target主机上抓取监控指标数据

Storage存储主要是把采集到的数据存储到磁盘中

PromQL是Prometheus提供的查询语言模块。

prometheus工作流程:

1. Prometheus server可定期从活跃的(up)目标主机上(target)拉取监控指标数据,目标主机的监控数据可通过配置静态job或者服务发现的方式被prometheus server采集到,这种方式默认的pull方式拉取指标;也可通过pushgateway把采集的数据上报到prometheus server中;还可通过一些组件自带的exporter采集相应组件的数据;

2.Prometheus server把采集到的监控指标数据保存到本地磁盘或者数据库;

3.Prometheus采集的监控指标数据按时间序列存储,通过配置报警规则,把触发的报警发送到alertmanager

4.Alertmanager通过配置报警接收方,发送报警到邮件,微信或者钉钉等

5.Prometheus 自带的web ui界面提供PromQL查询语言,可查询监控数据

6.Grafana可接入prometheus数据源,把监控数据以图形化形式展示出

node-exporter是什么?

采集机器(物理机、虚拟机、云主机等)的监控指标数据,能够采集到的指标包括CPU, 内存,磁盘,网络,文件数等信息。

安装node-exporter组件,在k8s集群的master1节点操作

cat >node-export.yaml <<EOF

apiVersion: apps/v1

kind: DaemonSet

metadata:

name: node-exporter

namespace: monitor-sa

labels:

name: node-exporter

spec:

selector:

matchLabels:

name: node-exporter

template:

metadata:

labels:

name: node-exporter

spec:

hostPID: true

hostIPC: true

hostNetwork: true

containers:

- name: node-exporter

image: prom/node-exporter:v0.16.0

ports:

- containerPort: 9100

resources:

requests:

cpu: 0.15

securityContext:

privileged: true

args:

- --path.procfs

- /host/proc

- --path.sysfs

- /host/sys

- --collector.filesystem.ignored-mount-points

- '"^/(sys|proc|dev|host|etc)($|/)"'

volumeMounts:

- name: dev

mountPath: /host/dev

- name: proc

mountPath: /host/proc

- name: sys

mountPath: /host/sys

- name: rootfs

mountPath: /rootfs

tolerations:

- key: "node-role.kubernetes.io/master"

operator: "Exists"

effect: "NoSchedule"

volumes:

- name: proc

hostPath:

path: /proc

- name: dev

hostPath:

path: /dev

- name: sys

hostPath:

path: /sys

- name: rootfs

hostPath:

path: /

EOF

#通过kubectl apply更新node-exporter

kubectl apply -f node-export.yaml

#查看node-exporter是否部署成功

kubectl get pods -n monitor-sa

显示如下,看到pod的状态都是running,说明部署成功

NAME READY STATUS RESTARTS AGE node-exporter-9qpkd 1/1 Running 0 89s node-exporter-zqmnk 1/1 Running 0 89s

通过node-exporter采集数据

curl http://主机ip:9100/metrics

#node-export默认的监听端口是9100,可以看到当前主机获取到的所有监控数据,截取一部分,如下

k8s集群中部署prometheus

1.创建namespace、sa账号,在k8s集群的master节点操作

#创建一个monitor-sa的名称空间

kubectl create ns monitor-sa

#创建一个sa账号

kubectl create serviceaccount monitor -n monitor-sa

#把sa账号monitor通过clusterrolebing绑定到clusterrole上

kubectl create clusterrolebinding monitor-clusterrolebinding -n monitor-sa --clusterrole=cluster-admin --serviceaccount=monitor-sa:monitor

2.创建数据目录

#在k8s集群的任何一个node节点操作,因为我的k8s集群只有一个node节点node1,所以我在node1上操作如下命令:

mkdir /data

chmod 777 /data/

3.安装prometheus,以下步骤均在在k8s集群的master1节点操作

1)创建一个configmap存储卷,用来存放prometheus配置信息

cat >prometheus-cfg.yaml <<EOF

---

kind: ConfigMap

apiVersion: v1

metadata:

labels:

app: prometheus

name: prometheus-config

namespace: monitor-sa

data:

prometheus.yml: |

global:

scrape_interval: 15s

scrape_timeout: 10s

evaluation_interval: 1m

scrape_configs:

- job_name: 'kubernetes-node'

kubernetes_sd_configs:

- role: node

relabel_configs:

- source_labels: [__address__]

regex: '(.*):10250'

replacement: '${1}:9100'

target_label: __address__

action: replace

- action: labelmap

regex: __meta_kubernetes_node_label_(.+)

- job_name: 'kubernetes-node-cadvisor'

kubernetes_sd_configs:

- role: node

scheme: https

tls_config:

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

relabel_configs:

- action: labelmap

regex: __meta_kubernetes_node_label_(.+)

- target_label: __address__

replacement: kubernetes.default.svc:443

- source_labels: [__meta_kubernetes_node_name]

regex: (.+)

target_label: __metrics_path__

replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor

- job_name: 'kubernetes-apiserver'

kubernetes_sd_configs:

- role: endpoints

scheme: https

tls_config:

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

relabel_configs:

- source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]

action: keep

regex: default;kubernetes;https

- job_name: 'kubernetes-service-endpoints'

kubernetes_sd_configs:

- role: endpoints

relabel_configs:

- source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]

action: keep

regex: true

- source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]

action: replace

target_label: __scheme__

regex: (https?)

- source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]

action: replace

target_label: __metrics_path__

regex: (.+)

- source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]

action: replace

target_label: __address__

regex: ([^:]+)(?::d+)?;(d+)

replacement: $1:$2

- action: labelmap

regex: __meta_kubernetes_service_label_(.+)

- source_labels: [__meta_kubernetes_namespace]

action: replace

target_label: kubernetes_namespace

- source_labels: [__meta_kubernetes_service_name]

action: replace

target_label: kubernetes_name

EOF

注意:通过上面命令生成的promtheus-cfg.yaml文件会有一些问题,$1和$2这种变量在文件里没有,需要在k8s的master1节点打开promtheus-cfg.yaml文件,手动把$1和$2这种变量写进文件里,promtheus-cfg.yaml文件需要手动修改部分如下:

22行的replacement: ':9100'变成replacement: '${1}:9100'

42行的replacement: /api/v1/nodes//proxy/metrics/cadvisor变成 replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor

73行的replacement: 变成replacement: $1:$2

#通过kubectl apply更新configmap

kubectl apply -f prometheus-cfg.yaml

2)通过deployment部署prometheus

cat >prometheus-deploy.yaml <<EOF

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: prometheus-server

namespace: monitor-sa

labels:

app: prometheus

spec:

replicas: 1

selector:

matchLabels:

app: prometheus

component: server

#matchExpressions:

#- {key: app, operator: In, values: [prometheus]}

#- {key: component, operator: In, values: [server]}

template:

metadata:

labels:

app: prometheus

component: server

annotations:

prometheus.io/scrape: 'false'

spec:

nodeName: node1

serviceAccountName: monitor

containers:

- name: prometheus

image: prom/prometheus:v2.2.1

imagePullPolicy: IfNotPresent

command:

- prometheus

- --config.file=/etc/prometheus/prometheus.yml

- --storage.tsdb.path=/prometheus

- --storage.tsdb.retention=720h

ports:

- containerPort: 9090

protocol: TCP

volumeMounts:

- mountPath: /etc/prometheus/prometheus.yml

name: prometheus-config

subPath: prometheus.yml

- mountPath: /prometheus/

name: prometheus-storage-volume

volumes:

- name: prometheus-config

configMap:

name: prometheus-config

items:

- key: prometheus.yml

path: prometheus.yml

mode: 0644

- name: prometheus-storage-volume

hostPath:

path: /data

type: Directory

EOF

注意:在上面的prometheus-deploy.yaml文件有个nodeName字段,这个就是用来指定创建的这个prometheus的pod调度到哪个节点上,我们这里让nodeName=node1,也即是让pod调度到node1节点上,因为node1节点我们创建了数据目录/data,所以大家记住:你在k8s集群的哪个节点创建/data,就让pod调度到哪个节点。

#通过kubectl apply更新prometheus

kubectl apply -f prometheus-deploy.yaml

#查看prometheus是否部署成功

kubectl get pods -n monitor-sa

显示如下,可看到pod状态是running,说明prometheus部署成功

NAME READY STATUS RESTARTS AGE node-exporter-9qpkd 1/1 Running 0 76m node-exporter-zqmnk 1/1 Running 0 76m prometheus-server-85dbc6c7f7-nsg94 1/1 Running 0 6m7

3)给prometheus pod创建一个service

cat > prometheus-svc.yaml << EOF

---

apiVersion: v1

kind: Service

metadata:

name: prometheus

namespace: monitor-sa

labels:

app: prometheus

spec:

type: NodePort

ports:

- port: 9090

targetPort: 9090

protocol: TCP

selector:

app: prometheus

component: server

EOF

#通过kubectl apply 更新service

kubectl apply -f prometheus-svc.yaml

#查看service在物理机映射的端口

kubectl get svc -n monitor-sa

显示如下:

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE prometheus NodePort 10.96.45.93 <none> 9090:31043/TCP 50s

通过上面可以看到service在宿主机上映射的端口是31043,这样我们访问k8s集群的master1节点的ip:31043,就可以访问到prometheus的web ui界面了

#访问prometheus web ui界面

火狐浏览器输入如下地址:

http://192.168.0.6:31043/graph

可看到如下页面:

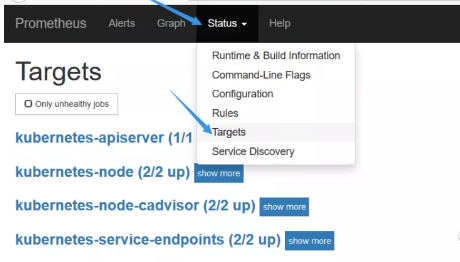

#点击页面的Status->Targets,可看到如下,说明我们配置的服务发现可以正常采集数据

#为了每次修改配置文件可以热加载prometheus,也就是不停止prometheus,就可以使配置生效,如修改prometheus-cfg.yaml,想要使配置生效可用如下热加载命令:

curl -X POST http://10.244.1.66:9090/-/reload

#10.244.1.66是prometheus的pod的ip地址,如何查看prometheus的pod ip,可用如下命令:

kubectl get pods -n monitor-sa -o wide | grep prometheus

显示如下, 10.244.1.7就是prometheus的ip

prometheus-server-85dbc6c7f7-nsg94 1/1 Running 0 29m 10.244.1.7 node

#热加载速度比较慢,可以暴力重启prometheus,如修改上面的prometheus-cfg.yaml文件之后,可执行如下强制删除:

kubectl delete -f prometheus-cfg.yaml

kubectl delete -f prometheus-deploy.yaml

然后再通过apply更新:

kubectl apply -f prometheus-cfg.yaml

kubectl apply -f prometheus-deploy.yaml

注意:

线上最好热加载,暴力删除可能造成监控数据的丢失