Task:

Continue learning TensorFlow: convolutional neural networks

Problems:

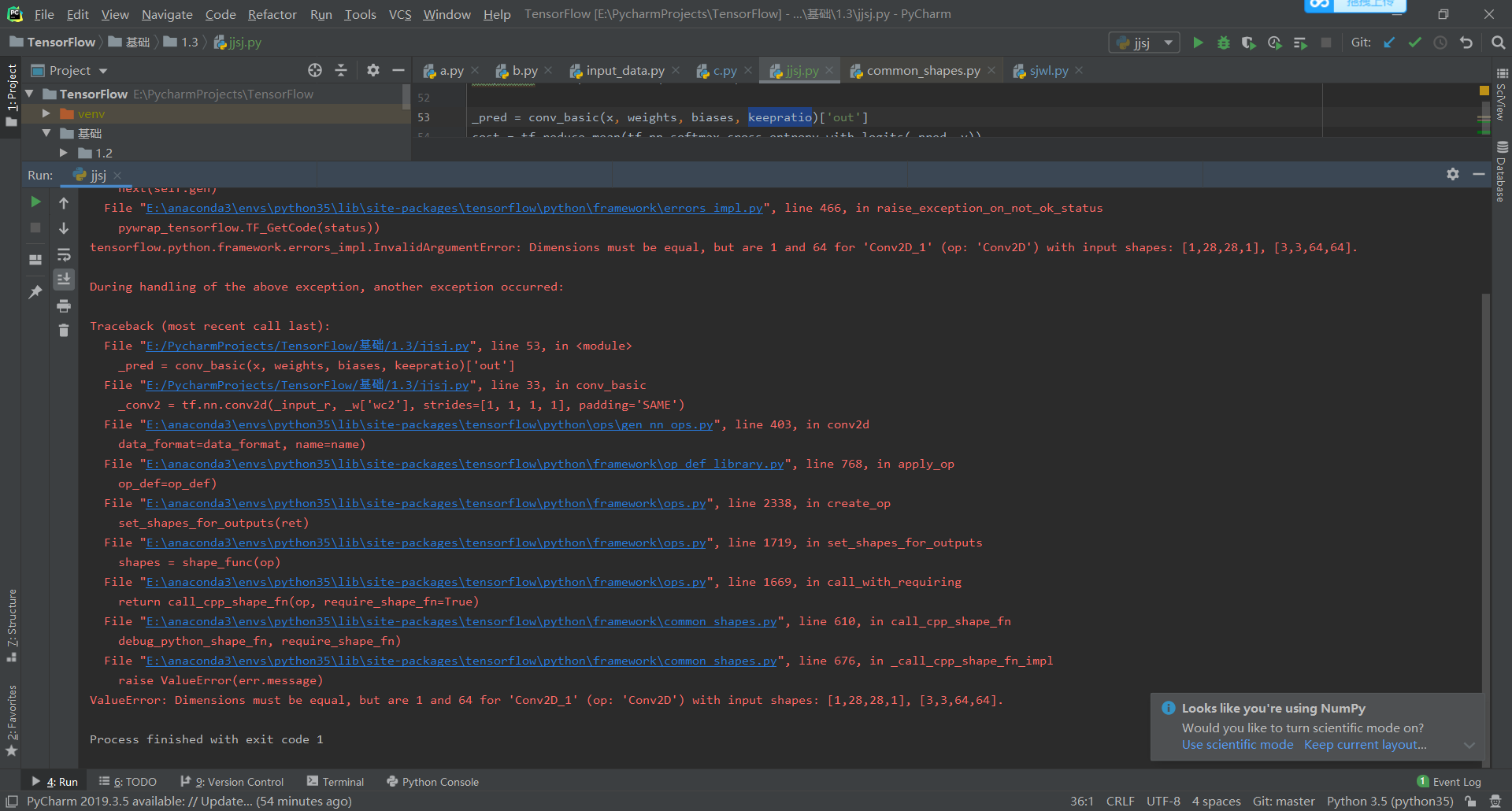

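The error location shows that this is a parameter problem: `wc2` was set incorrectly. The shape of the second convolution filter should be [3, 3, 64, 128], not [3, 3, 64, 64], because `bc2` has 128 entries and `wd1` expects 7 * 7 * 128 inputs.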
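The mismatch can be checked with plain shape arithmetic, without running TensorFlow. The following is a minimal sketch, assuming 28x28x1 inputs, stride-1 convolutions and 2x2 stride-2 max pooling with 'SAME' padding, as in the source code below. The helper names `same_out` and `flat_features` are hypothetical and only used for illustration.

```python
import math


def same_out(size, stride):
    """Spatial output size under 'SAME' padding: ceil(size / stride)."""
    return math.ceil(size / stride)


def flat_features(wc1, wc2, height=28, width=28, channels=1):
    """Trace conv (stride 1) + max pool (stride 2) twice; return the flattened size."""
    h, w, c = height, width, channels
    for name, (_kh, _kw, c_in, c_out) in (("wc1", wc1), ("wc2", wc2)):
        if c_in != c:
            raise ValueError("%s expects %d input channels, got %d" % (name, c_in, c))
        h, w = same_out(h, 1), same_out(w, 1)  # convolution, stride 1
        h, w = same_out(h, 2), same_out(w, 2)  # max pooling, stride 2
        c = c_out                              # filter count becomes the new depth
    return h * w * c


wd1_rows = 7 * 7 * 128  # first dimension of 'wd1' in the source code

print(flat_features([3, 3, 1, 64], [3, 3, 64, 128]) == wd1_rows)  # True
print(flat_features([3, 3, 1, 64], [3, 3, 64, 64]) == wd1_rows)   # False: 7*7*64 = 3136
```

With the wrong filter shape the second layer produces only 64 feature maps, which no longer matches the 128-entry bias `bc2` or the 7 * 7 * 128 rows of `wd1`.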

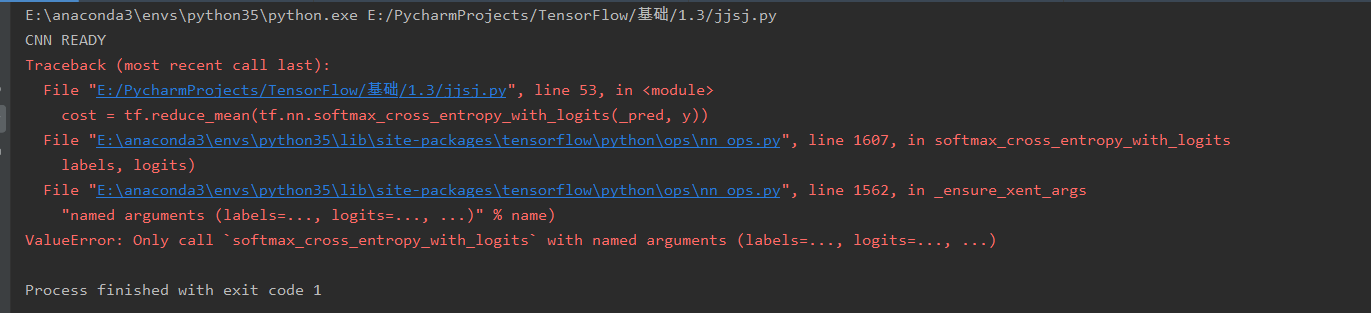

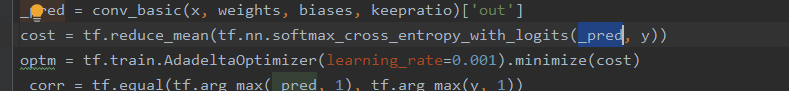

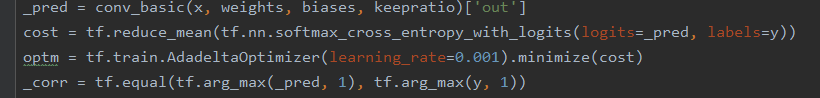

The way this method is called has changed; the call needs to be rewritten in the form given in the error message.

Source code:

    import tensorflow as tf
    from tensorflow.examples.tutorials.mnist import input_data

    # Convolution-layer and fully connected-layer parameters
    n_input = 784
    n_output = 10
    weights = {
        # [3, 3, 1, 64]: filter height, filter width, input depth, number of output feature maps
        'wc1': tf.Variable(tf.random_normal([3, 3, 1, 64], stddev=0.1)),
        # 64: input depth, 128: output depth
        'wc2': tf.Variable(tf.random_normal([3, 3, 64, 128], stddev=0.1)),
        'wd1': tf.Variable(tf.random_normal([7 * 7 * 128, 1024], stddev=0.1)),
        'wd2': tf.Variable(tf.random_normal([1024, n_output], stddev=0.1)),
    }
    biases = {
        'bc1': tf.Variable(tf.random_normal([64], stddev=0.1)),
        'bc2': tf.Variable(tf.random_normal([128], stddev=0.1)),
        'bd1': tf.Variable(tf.random_normal([1024], stddev=0.1)),
        'bd2': tf.Variable(tf.random_normal([n_output], stddev=0.1)),
    }


    # Feature-map computation: convolution + pooling, then flattening to a vector
    def conv_basic(_input, _w, _b, _keepratio):
        # Reshape the flat 784-vector into a 28x28 single-channel image
        _input_r = tf.reshape(_input, shape=[-1, 28, 28, 1])
        # First convolution + pooling block
        _conv1 = tf.nn.conv2d(_input_r, _w['wc1'], strides=[1, 1, 1, 1], padding='SAME')
        _conv1 = tf.nn.relu(tf.nn.bias_add(_conv1, _b['bc1']))
        _pool1 = tf.nn.max_pool(_conv1, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')
        _pool_dr1 = tf.nn.dropout(_pool1, _keepratio)
        # Second convolution + pooling block
        _conv2 = tf.nn.conv2d(_pool_dr1, _w['wc2'], strides=[1, 1, 1, 1], padding='SAME')
        _conv2 = tf.nn.relu(tf.nn.bias_add(_conv2, _b['bc2']))
        _pool2 = tf.nn.max_pool(_conv2, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')
        _pool_dr2 = tf.nn.dropout(_pool2, _keepratio)
        # Flatten to a vector whose length matches the first dimension of 'wd1'
        _dense1 = tf.reshape(_pool_dr2, [-1, _w['wd1'].get_shape().as_list()[0]])
        _fc1 = tf.nn.relu(tf.add(tf.matmul(_dense1, _w['wd1']), _b['bd1']))
        _fc_dr1 = tf.nn.dropout(_fc1, _keepratio)
        _out = tf.add(tf.matmul(_fc_dr1, _w['wd2']), _b['bd2'])
        out = {'input_r': _input_r, 'conv1': _conv1, 'pool1': _pool1, 'pool_dr1': _pool_dr1,
               'conv2': _conv2, 'pool2': _pool2, 'pool_dr2': _pool_dr2, 'dense1': _dense1,
               'fc1': _fc1, 'fc_dr1': _fc_dr1, 'out': _out}
        return out


    print('CNN READY')

    x = tf.placeholder(tf.float32, [None, n_input])
    y = tf.placeholder(tf.float32, [None, n_output])
    keepratio = tf.placeholder(tf.float32)

    _pred = conv_basic(x, weights, biases, keepratio)['out']
    cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=_pred, labels=y))
    optm = tf.train.AdadeltaOptimizer(learning_rate=0.001).minimize(cost)
    # tf.argmax replaces the deprecated tf.arg_max
    _corr = tf.equal(tf.argmax(_pred, 1), tf.argmax(y, 1))
    accr = tf.reduce_mean(tf.cast(_corr, tf.float32))
    init = tf.global_variables_initializer()
    print('GRAPH READY')

    # Load the data
    mnist = input_data.read_data_sets('data/', one_hot=True)

    sess = tf.Session()
    sess.run(init)

    training_epochs = 15
    batch_size = 16
    display_step = 1
    for epoch in range(training_epochs):
        avg_cost = 0.
        total_batch = 10
        for i in range(total_batch):
            batch_xs, batch_ys = mnist.train.next_batch(batch_size)
            # Train with dropout (keep ratio 0.7), then measure the cost without dropout
            sess.run(optm, feed_dict={x: batch_xs, y: batch_ys, keepratio: 0.7})
            avg_cost += sess.run(cost, feed_dict={x: batch_xs, y: batch_ys, keepratio: 1.}) / total_batch
        if epoch % display_step == 0:
            print("Epoch: %03d/%03d cost: %.9f" % (epoch, training_epochs, avg_cost))
            # Accuracy on the last training batch of this epoch
            train_acc = sess.run(accr, feed_dict={x: batch_xs, y: batch_ys, keepratio: 1.})
            print(" Training accuracy: %.3f" % (train_acc))
    print("OPTIMIZATION FINISHED")

References:

https://blog.csdn.net/qq_36447181/article/details/80279802