数据准备:

放在一个txt文件中

hadoop hadoop

mapreduce

yyy yyy

zzz

hello hello hello

环境准备:

首先要下载好hadoop的windows版本。在D:hadoop-2.7.2sharehadoopmapreduce目录下可以看到官方示例的代码,我们仿照这个自己写一下。

要写的有三部分,Mapper,Reducer,Driver

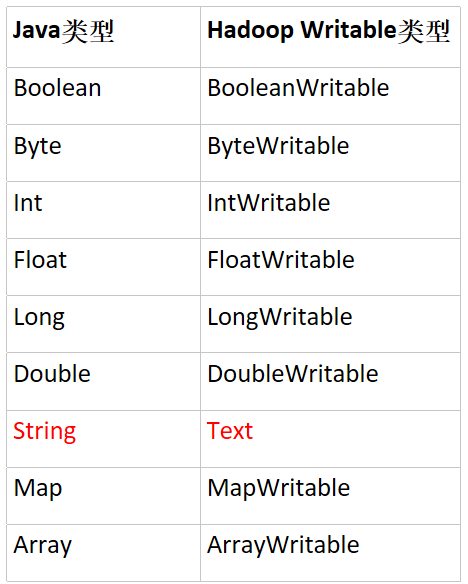

在MapReduce中,基础类型都被包装了。

1 在Mapper中把数据分割,传给Reducer。Mapper<LongWritable, Text,Text, IntWritable>中的参数表示输入类型,值。输出类型,值。

package cn.yjw.wordcount;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class WcMapper extends Mapper<LongWritable, Text,Text, IntWritable> {

private Text word = new Text();

private IntWritable one = new IntWritable(1);

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

//拿到每一行数据

String line = value.toString();

//分割

String words[] = line.split(" ");

//输出

for (String w : words) {

word.set(w);

context.write(word,one);

}

}

}

2 在Reducer中做处理。大部分处理都被容器做了,导致做完也不知道怎么就对了。

package cn.yjw.wordcount;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class WcReducer extends Reducer<Text, IntWritable,Text,IntWritable> {

private IntWritable total = new IntWritable();

@Override

protected void reduce(Text key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {

int sum = 0;

//统计数量

for (IntWritable value : values) {

sum += value.get();

}

//输出

total.set(sum);

context.write(key,total);

}

}

3 写一些配置在Driver中

package cn.yjw.wordcount;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class WcDriver {

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

//1 获取Job

Job job = Job.getInstance(new Configuration());

//2 设置类路径

job.setJarByClass(WcDriver.class);

//3 设置Mapper和Reducer路径

job.setMapperClass(WcMapper.class);

job.setReducerClass(WcReducer.class);

//4 设置Mapper和Reducer的输出类型

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(IntWritable.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(IntWritable.class);

//5 设置接受的文件,输出的文件。注意导包别导错了

FileInputFormat.setInputPaths(job,new Path(args[0]));//外部参数1

FileOutputFormat.setOutputPath(job,new Path(args[1]));//外部参数2

//6 提交Job

boolean flag = job.waitForCompletion(true);

System.exit(flag ? 0 : 1);

}

}

4 pom.xml

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>cn.yjw</groupId>

<artifactId>mapreducewordcount</artifactId>

<version>1.0-SNAPSHOT</version>

<dependencies>

<dependency>

<groupId>junit</groupId>

<artifactId>junit</artifactId>

<version>RELEASE</version>

</dependency>

<dependency>

<groupId>org.apache.logging.log4j</groupId>

<artifactId>log4j-core</artifactId>

<version>2.8.2</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-common</artifactId>

<version>2.7.2</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>2.7.2</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-hdfs</artifactId>

<version>2.7.2</version>

</dependency>

<dependency>

<groupId>jdk.tools</groupId>

<artifactId>jdk.tools</artifactId>

<version>1.8</version>

<scope>system</scope>

<systemPath>${JAVA_HOME}/lib/tools.jar</systemPath>

</dependency>

</dependencies>

</project>

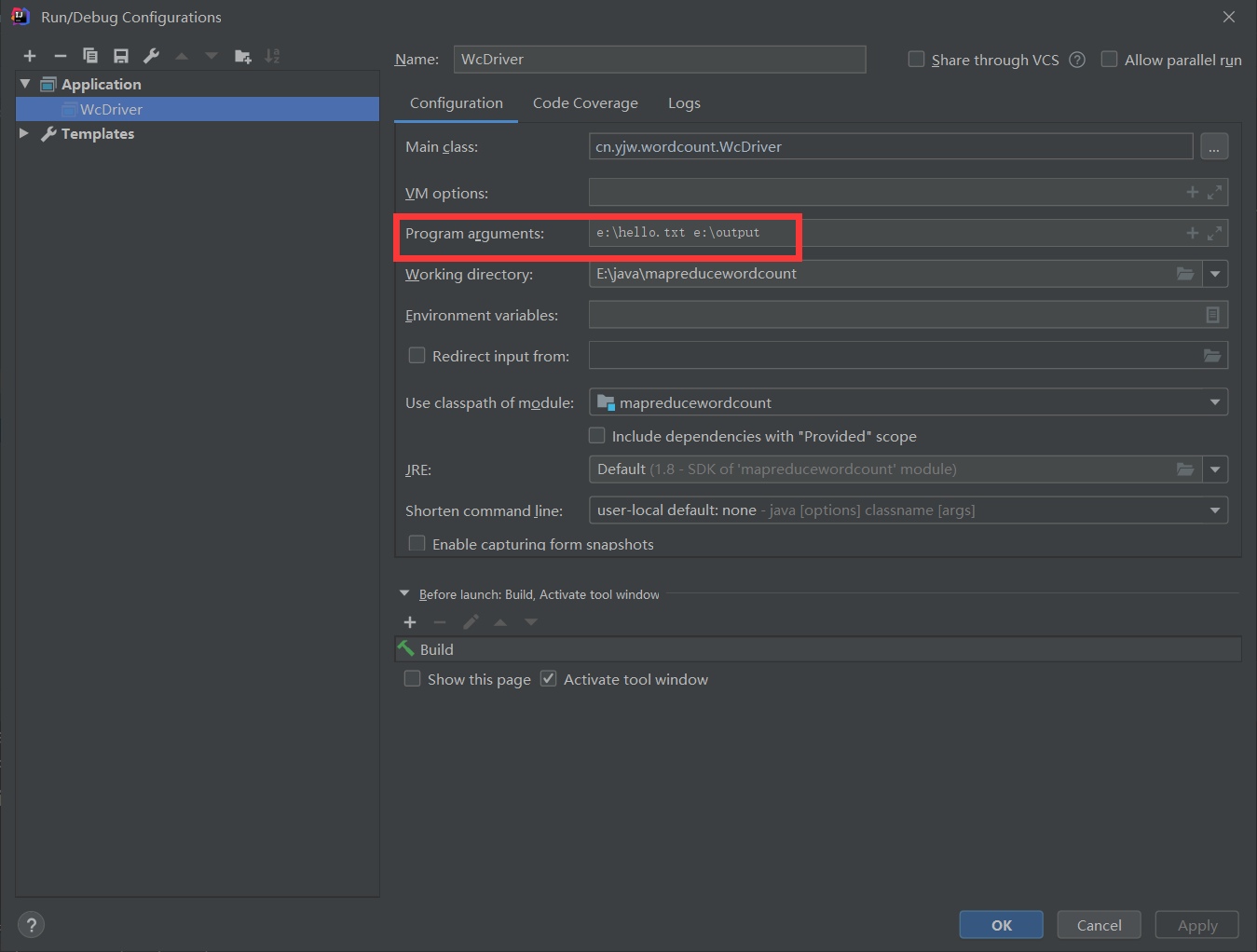

最后配置一下参数即可。

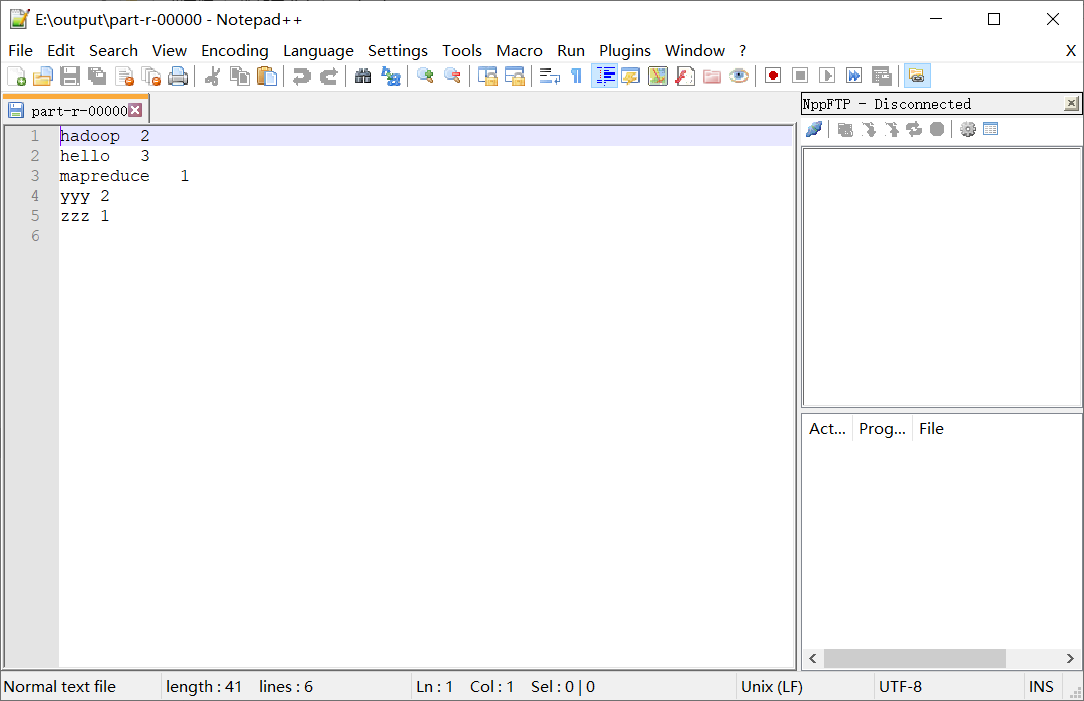

结果: