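Deadlock

A deadlock is a situation in which two or more processes or threads, while executing, wait on one another because they are competing for resources; without outside intervention, none of them can make progress. The system is then said to be in a deadlock state (or to have deadlocked), and the processes that wait on each other forever are called deadlocked processes. The following program deadlocks.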

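    from threading import Thread, Lock
    import time


    class MyRLock(Thread):
        # Two separate locks shared by every instance.
        mexty = Lock()   # lock A
        mexty2 = Lock()  # lock B

        def run(self):
            self.func()
            self.func2()

        def func(self):
            # Acquire A first, then B.
            self.mexty.acquire()
            print('%s acquired lock A' % self.name)
            self.mexty2.acquire()
            print('%s acquired lock B' % self.name)
            self.mexty2.release()
            self.mexty.release()

        def func2(self):
            # Acquire B first, then A (the opposite order).
            self.mexty2.acquire()
            print('%s acquired lock B' % self.name)
            time.sleep(0.5)
            self.mexty.acquire()
            print('%s acquired lock A' % self.name)
            self.mexty.release()
            self.mexty2.release()


    if __name__ == '__main__':
        for i in range(10):
            t = MyRLock()
            t.start()

Result: the program deadlocks and hangs. Thread-1 holds lock B and waits for lock A, while Thread-2 holds lock A and waits for lock B.

    # Thread-1 acquired lock A
    # Thread-1 acquired lock B
    # Thread-1 acquired lock B
    # Thread-2 acquired lock A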
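A common way to avoid this kind of deadlock, independent of the lock type, is to make every thread acquire the locks in the same order. The following is a minimal sketch of that idea, not the approach used in the rest of this article; the names `lock_a`, `lock_b`, `step`, `worker`, and `run_workers` are made up for illustration.

```python
from threading import Thread, Lock

lock_a = Lock()
lock_b = Lock()
finished = []  # names of the steps that completed


def step(name):
    # Every code path takes lock_a first and lock_b second,
    # so no thread can hold B while waiting for A.
    with lock_a:
        with lock_b:
            finished.append(name)


def worker(name):
    step(name + "-first")
    step(name + "-second")


def run_workers(count=10):
    """Start `count` workers and report whether all of them finished."""
    threads = [Thread(target=worker, args=("worker-%d" % i,)) for i in range(count)]
    for t in threads:
        t.start()
    for t in threads:
        t.join(timeout=5)  # a deadlocked thread would still be alive afterwards
    return all(not t.is_alive() for t in threads)


if __name__ == "__main__":
    print("all workers finished:", run_workers())
```

Because the acquisition order is fixed, the circular wait that caused the hang above cannot form.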

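Recursive lock

One fix is a recursive lock. To let the same thread request the same resource more than once, Python provides the reentrant lock RLock.

An RLock internally maintains a Lock and a counter variable. The counter records the number of acquire calls, so the owning thread can acquire the resource repeatedly. Other threads can obtain the resource only after the owning thread has released every acquire. If the example above uses a single RLock in place of the two Lock objects, it no longer deadlocks. This works because both names then refer to the same RLock object, so a thread that holds it keeps all other threads out until it has finished; two separate RLock objects acquired in opposite orders could still deadlock. The difference between the two lock types is that a recursive lock can be acquired several times in a row by the thread that holds it, whereas a mutex can be acquired only once.

Each acquire on a recursive lock increments the counter by 1 and each release decrements it by 1; another thread can acquire the lock only when the counter is 0.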

    from threading import Thread, RLock
    import time


    class MyRLock(Thread):
        # Both names refer to the same RLock object.
        mexty = mexty2 = RLock()

        def run(self):
            self.func()
            self.func2()

        def func(self):
            self.mexty.acquire()
            print('%s acquired lock A' % self.name)
            self.mexty2.acquire()  # same thread, same lock: the counter goes to 2
            print('%s acquired lock B' % self.name)
            self.mexty2.release()
            self.mexty.release()

        def func2(self):
            self.mexty2.acquire()
            print('%s acquired lock B' % self.name)
            time.sleep(0.5)
            self.mexty.acquire()
            print('%s acquired lock A' % self.name)
            self.mexty.release()
            self.mexty2.release()


    if __name__ == '__main__':
        for i in range(10):
            t = MyRLock()
            t.start()
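The difference between the two lock types can also be checked inside a single thread. The sketch below uses a non-blocking acquire so that the plain Lock fails instead of hanging; `can_reacquire` is a hypothetical helper written for this illustration.

```python
from threading import Lock, RLock


def can_reacquire(lock):
    """Return True if the current thread can acquire `lock` a second time."""
    lock.acquire()
    try:
        # blocking=False returns immediately instead of waiting forever.
        got_it = lock.acquire(blocking=False)
        if got_it:
            lock.release()  # undo the second acquire
        return got_it
    finally:
        lock.release()  # undo the first acquire


if __name__ == "__main__":
    print("Lock :", can_reacquire(Lock()))
    print("RLock:", can_reacquire(RLock()))
```

The plain Lock refuses the second acquire, while the RLock accepts it because the calling thread already owns it.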

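Semaphore

A semaphore is also a kind of lock. With a semaphore of size 5, up to five tasks can hold it at the same time, whereas a mutex lets only one task at a time hold the lock. If a mutex is like housemates competing for the single toilet in a shared flat, a semaphore is like a crowd of passers-by competing for a public toilet with several stalls: several people can use it at once, but its capacity is fixed, and that capacity is the size of the semaphore.

Explanation

    # A Semaphore manages an internal counter.
    # Each call to acquire() decrements the counter by 1;
    # each call to release() increments it by 1.
    # The counter can never go below 0; when it is 0, acquire() blocks the thread until another thread calls release().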

    from threading import Thread, Semaphore, current_thread
    import time
    import random

    sm = Semaphore(3)  # at most 3 threads may hold the semaphore at once


    def func():
        with sm:
            print('%s is seated' % current_thread().name)
            time.sleep(random.randint(1, 3))


    if __name__ == '__main__':
        for i in range(10):
            s = Thread(target=func)
            s.start()

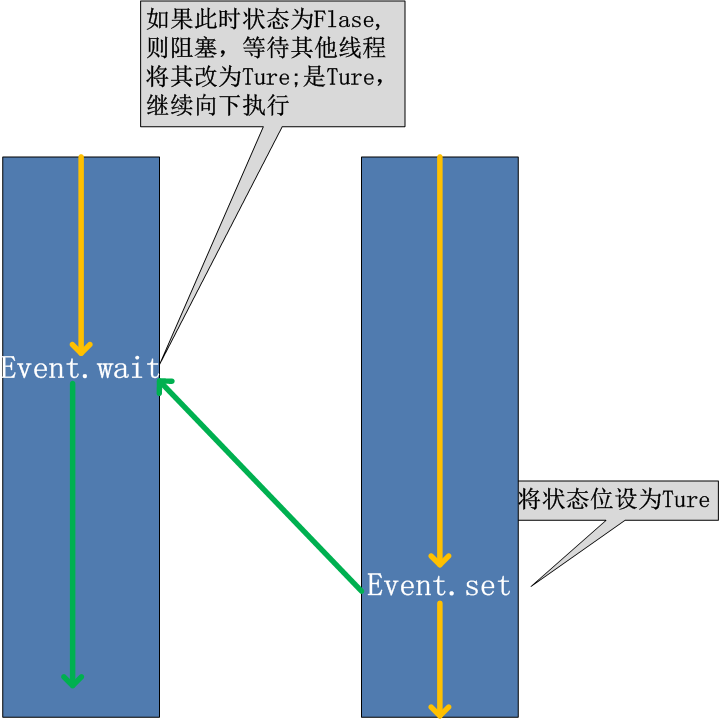
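To check that the semaphore really caps concurrency, one option is to record how many threads are inside the guarded section at the same time. This is a rough sketch under that assumption; `measure_peak` and the `state` dictionary are illustrative names, and the short sleep only simulates work.

```python
from threading import Thread, Semaphore, Lock
import time


def measure_peak(limit=3, workers=10):
    """Run `workers` threads through a Semaphore(limit) and return the peak concurrency."""
    sm = Semaphore(limit)
    guard = Lock()  # protects the counters below
    state = {"inside": 0, "peak": 0}

    def task():
        with sm:
            with guard:
                state["inside"] += 1
                state["peak"] = max(state["peak"], state["inside"])
            time.sleep(0.05)  # simulate some work while holding the semaphore
            with guard:
                state["inside"] -= 1

    threads = [Thread(target=task) for _ in range(workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return state["peak"]


if __name__ == "__main__":
    print("peak concurrency:", measure_peak())
```

The reported peak should never exceed the semaphore size, no matter how many threads are started.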

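Event

A key property of threads is that each one runs independently and its state is unpredictable. If other threads in a program need to decide what to do next based on the state of some thread, synchronization becomes tricky. The Event object in the threading library addresses this. An Event object contains a signal flag that threads can set, which lets threads wait for some event to happen. Initially the flag is false. A thread that waits on an Event whose flag is false blocks until the flag becomes true. A thread that sets the flag to true wakes up all threads waiting on that Event. A thread that waits on an Event whose flag is already true does not block and simply continues.

    # from threading import Event
    # event.is_set(): return the state of the event's flag (isSet() is a deprecated alias);
    # event.wait(): block the thread if event.is_set() is False;
    # event.set(): set the flag to True; all blocked threads become ready and wait for the OS to schedule them;
    # event.clear(): reset the flag to False.

E.wait() accepts a timeout in seconds; once the timeout elapses, the thread stops waiting and continues.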

    from threading import Thread, Event
    import time

    E = Event()


    def student(name):
        print('student %s is in class' % name)
        E.wait()  # or E.wait(1) to stop waiting after 1 second
        print('student %s leaves class' % name)


    def teacher(name):
        print('teacher %s is teaching' % name)
        time.sleep(3)
        print('teacher %s is ending class' % name)
        E.set()


    if __name__ == '__main__':
        s = Thread(target=student, args=('stu',))
        s2 = Thread(target=student, args=('stu2',))
        s3 = Thread(target=student, args=('stu3',))
        t = Thread(target=teacher, args=('ysg',))
        lis = [s, s2, s3, t]
        for i in lis:
            i.start()

Result:

    # With E.wait()
    # student stu is in class
    # student stu2 is in class
    # student stu3 is in class
    # teacher ysg is teaching
    # teacher ysg is ending class
    # student stu2 leaves class
    # student stu3 leaves class
    # student stu leaves class

    # With E.wait(1)
    # student stu is in class
    # student stu2 is in class
    # student stu3 is in class
    # teacher ysg is teaching
    # student stu leaves class
    # student stu2 leaves class
    # student stu3 leaves class
    # teacher ysg is ending class
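The two runs above differ only in whether the wait times out. `Event.wait(timeout)` also reports which case occurred through its return value: True if the flag was set, False if the timeout elapsed first. A small sketch, where `wait_for` and `demo` are illustrative helpers and the short delays stand in for real work:

```python
from threading import Event, Timer


def wait_for(event, timeout):
    # wait() returns True when the flag is set, False on timeout.
    return "set" if event.wait(timeout) else "timed out"


def demo():
    results = []
    e = Event()

    # Nobody sets the flag, so this wait gives up after 0.05 s.
    results.append(wait_for(e, 0.05))

    # Another thread sets the flag after 0.05 s, well before the 2 s timeout.
    t = Timer(0.05, e.set)
    t.start()
    results.append(wait_for(e, 2))
    t.join()

    # clear() resets the flag to False.
    e.clear()
    results.append(e.is_set())
    return results


if __name__ == "__main__":
    print(demo())
```

Checking the return value lets a waiting thread tell "the event happened" apart from "I stopped waiting".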

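Simulating several clients connecting to a server, with a limit on the number of connection attempts

    from threading import Thread, Event, current_thread
    import time

    E = Event()


    def client():
        n = 0
        while not E.is_set():
            print('%s is connecting' % current_thread().name)
            E.wait(0.5)  # wait up to 0.5 s for the server check to finish
            n += 1
            if n == 3:
                print('retry limit exceeded')
                return
        print('connected')


    def check():
        # Check whether the server is running.
        print('%s is checking' % current_thread().name)
        time.sleep(5)
        E.set()
        print('check finished')


    if __name__ == '__main__':
        for i in range(3):
            c = Thread(target=client)
            c.start()
        # Start the checker as well; otherwise nothing ever sets the event.
        # With the 5 s delay in check(), each client gives up after 3 attempts.
        s = Thread(target=check)
        s.start()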

Timer

A timer runs an operation after a given number of seconds.

    from threading import Timer


    def func(name):
        print('hello %s' % name)


    if __name__ == '__main__':
        t = Timer(2, func, args=('ysg',))  # call func('ysg') after 2 seconds
        t.start()
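A Timer that has not fired yet can be stopped with `cancel()`. The sketch below starts two timers and cancels one of them; `run_timers` and the `fired` list are illustrative names, and the 0.05 s interval is chosen only to keep the example fast.

```python
from threading import Timer


def run_timers():
    fired = []  # records which timers actually ran their function

    kept = Timer(0.05, fired.append, args=("kept",))
    cancelled = Timer(0.05, fired.append, args=("cancelled",))
    kept.start()
    cancelled.start()

    # cancel() only has an effect while the timer is still waiting.
    cancelled.cancel()

    # Timer is a Thread subclass, so join() waits for it to finish.
    kept.join()
    cancelled.join()
    return fired


if __name__ == "__main__":
    print(run_timers())
```

Only the timer that was left alone runs its function.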

A small verification-code example

    from threading import Timer
    import random


    class Timers:
        def __init__(self):
            super().__init__()
            self.myTimer()

        def myTimer(self):
            # Generate a new code, print it, and schedule the next refresh in 5 seconds.
            self.info = self.make_timer()
            print(self.info)
            self.t = Timer(5, self.myTimer)
            self.t.start()

        def make_timer(self, num=6):
            # Build a code of `num` characters, each a random digit or uppercase letter.
            lis = ''
            for i in range(num):
                s1 = str(random.randint(0, 9))
                s2 = chr(random.randint(65, 90))
                lis += random.choice([s1, s2])
            return lis

        def run(self):
            while True:
                res = input('>>>').strip()
                if res == self.info:
                    print('verification succeeded')
                    self.t.cancel()  # stop refreshing the code
                    break


    t = Timers()
    t.run()

Thread queue

The queue module is especially useful in threaded programming when information must be exchanged safely between multiple threads.

It provides three queue classes.

class queue.Queue(maxsize=0)  # queue: first in, first out (FIFO)

    import queue

    q = queue.Queue(3)
    q.put('hello')
    q.put(123)
    q.put([1, 2, 3])
    # q.put(456, block=False)  # the queue holds at most 3 items; putting another raises queue.Full
    print(q.get())
    print(q.get())
    print(q.get())
    # print(q.get(block=False))  # the queue is already empty; getting again raises queue.Empty

    # Output (first in, first out):
    # hello
    # 123
    # [1, 2, 3]

class queue.LifoQueue(maxsize=0)  # stack: last in, first out (LIFO)

Note: it is used in the same way as Queue.

    import queue

    q = queue.LifoQueue(3)
    q.put('hello')
    q.put(123)
    q.put([1, 2, 3])
    print(q.get())
    print(q.get())
    print(q.get())

    # Output (last in, first out):
    # [1, 2, 3]
    # 123
    # hello

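class queue.PriorityQueue(maxsize=0)  # priority queue: items are stored with a priority

    import queue

    q = queue.PriorityQueue(3)
    # Each item is a (priority, data) tuple; the lowest priority number is retrieved first.
    q.put((10, 'hello'))
    q.put((50, 123))
    q.put((45, [1, 2, 3]))
    print(q.get())
    print(q.get())
    print(q.get())

    # Output (retrieved in priority order):
    # (10, 'hello')
    # (45, [1, 2, 3])
    # (50, 123)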
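The queue examples above run in a single thread. To illustrate the "exchange between threads" use case, here is a minimal producer/consumer sketch. The function names and the use of `None` as an end-of-data marker are assumptions made for this example, not something the queue module prescribes.

```python
import queue
from threading import Thread


def producer(q, items):
    for item in items:
        q.put(item)  # blocks while the queue is full
    q.put(None)      # hypothetical sentinel: tells the consumer to stop


def consumer(q, out):
    while True:
        item = q.get()  # blocks while the queue is empty
        if item is None:
            break
        out.append(item * 2)


def run_pipeline(items):
    q = queue.Queue(maxsize=3)
    out = []
    threads = [
        Thread(target=producer, args=(q, items)),
        Thread(target=consumer, args=(q, out)),
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return out


if __name__ == "__main__":
    print(run_pipeline([1, 2, 3, 4, 5]))
```

The queue handles the locking internally, so neither function needs an explicit Lock.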

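Process pools & thread pools

When we first learn multiprocessing or multithreading, we are eager to build concurrent socket communication on top of it. The fatal flaw of that approach is that the number of processes or threads the server starts grows with the number of concurrent clients, which puts enormous pressure on the server host and can even bring it down. We therefore have to cap the number of processes or threads the server starts so that the machine runs within a load it can bear. This is what process pools and thread pools are for. A process pool, for example, is a pool that holds processes; it is still multiprocessing underneath, only with a limit on the number of processes started.

Note: ProcessPoolExecutor and ThreadPoolExecutor are used in exactly the same way.

Explanation

    # Official docs: https://docs.python.org/dev/library/concurrent.futures.html
    # The concurrent.futures module provides a high-level interface for asynchronous calls.
    # ThreadPoolExecutor: thread pool, provides asynchronous calls
    # ProcessPoolExecutor: process pool, provides asynchronous calls
    # Both implement the same interface, which is defined by the abstract Executor class.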

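Basic methods

1. submit(fn, *args, **kwargs)

Submit a task asynchronously; returns a Future.

2. map(func, *iterables, timeout=None, chunksize=1)

Replaces a for loop that calls submit; returns an iterator over the results in input order.

3. shutdown(wait=True)

Equivalent to pool.close() + pool.join() on a multiprocessing pool.

wait=True: block until all tasks in the pool have finished and their resources have been reclaimed.

wait=False: return immediately without waiting for the tasks in the pool to finish.

Regardless of the value of wait, the program as a whole does not exit until all pending tasks have finished.

submit and map must be called before shutdown.

4. result(timeout=None)

A Future method: get the result of the task.

5. add_done_callback(fn)

A Future method: register a callback function.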
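The five methods can be exercised together in a few lines. This is a sketch rather than a recommended pattern; `square` and `demo_executor` are illustrative names, and a thread pool is used only because it needs no `__main__` guard.

```python
from concurrent.futures import ThreadPoolExecutor


def square(n):
    return n * n


def demo_executor():
    callback_results = []  # filled in by the done-callback

    # Leaving the with-block calls shutdown(wait=True).
    with ThreadPoolExecutor(max_workers=3) as pool:
        future = pool.submit(square, 4)            # 1. submit returns a Future
        # 5. the callback receives the Future, not the raw return value
        future.add_done_callback(lambda f: callback_results.append(f.result()))
        single = future.result(timeout=5)          # 4. result blocks until done
        mapped = list(pool.map(square, range(5)))  # 2. map yields results in input order

    # 3. after shutdown, submitting new work is rejected
    try:
        pool.submit(square, 1)
        rejected = False
    except RuntimeError:
        rejected = True

    return single, mapped, callback_results, rejected


if __name__ == "__main__":
    print(demo_executor())
```

Using the executor as a context manager is a convenient way to make sure shutdown is always called.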

Process pool

Introduction

    # The ProcessPoolExecutor class is an Executor subclass that uses a pool of processes to execute calls asynchronously. ProcessPoolExecutor uses the multiprocessing module, which allows it to side-step the Global Interpreter Lock but also means that only picklable objects can be executed and returned.
    # class concurrent.futures.ProcessPoolExecutor(max_workers=None, mp_context=None)
    # An Executor subclass that executes calls asynchronously using a pool of at most max_workers processes. If max_workers is None or not given, it will default to the number of processors on the machine. If max_workers is lower or equal to 0, then a ValueError will be raised.

Code example:

    from concurrent.futures import ProcessPoolExecutor
    import os
    import time
    import random


    def func():
        print('process is running, pid: %s' % os.getpid())
        time.sleep(random.randint(1, 3))


    if __name__ == '__main__':
        p = ProcessPoolExecutor(5)
        for i in range(10):
            p.submit(func)
        p.shutdown()  # equivalent to pool.close() + pool.join() on a multiprocessing pool
        print('main')

Result: only 5 distinct pids are used.

    # process is running, pid: 14440
    # process is running, pid: 16928
    # process is running, pid: 14056
    # process is running, pid: 1788
    # process is running, pid: 23436
    #
    # process is running, pid: 14440
    # process is running, pid: 16928
    #
    # process is running, pid: 14056
    # process is running, pid: 1788
    # process is running, pid: 23436
    #
    # main

Thread pool

    # ThreadPoolExecutor is an Executor subclass that uses a pool of threads to execute calls asynchronously.
    # class concurrent.futures.ThreadPoolExecutor(max_workers=None, thread_name_prefix='')
    # An Executor subclass that uses a pool of at most max_workers threads to execute calls asynchronously.
    # Changed in version 3.5: If max_workers is None or not given, it will default to the number of processors on the machine, multiplied by 5, assuming that ThreadPoolExecutor is often used to overlap I/O instead of CPU work and the number of workers should be higher than the number of workers for ProcessPoolExecutor.
    # New in version 3.6: The thread_name_prefix argument was added to allow users to control the threading.Thread names for worker threads created by the pool for easier debugging.

Code example:

    from concurrent.futures import ThreadPoolExecutor
    from threading import current_thread
    import os
    import time
    import random


    def func():
        print('%s is running, pid: %s' % (current_thread().name, os.getpid()))
        time.sleep(random.randint(1, 3))


    if __name__ == '__main__':
        t = ThreadPoolExecutor(5)
        for i in range(10):
            t.submit(func)
        t.shutdown()
        print('main')

Result: only 5 distinct thread names are used.

    # ThreadPoolExecutor-0_0 is running, pid: 16976
    # ThreadPoolExecutor-0_1 is running, pid: 16976
    # ThreadPoolExecutor-0_2 is running, pid: 16976
    # ThreadPoolExecutor-0_3 is running, pid: 16976
    # ThreadPoolExecutor-0_4 is running, pid: 16976
    #
    # ThreadPoolExecutor-0_2 is running, pid: 16976
    # ThreadPoolExecutor-0_1 is running, pid: 16976
    # ThreadPoolExecutor-0_0 is running, pid: 16976
    #
    # ThreadPoolExecutor-0_4 is running, pid: 16976
    # ThreadPoolExecutor-0_1 is running, pid: 16976
    # main

The map method

    from concurrent.futures import ThreadPoolExecutor
    from threading import current_thread
    import time
    import random


    def func(n):
        print('%s is running' % current_thread().name)
        time.sleep(random.randint(1, 3))
        return n ** 2


    if __name__ == '__main__':
        p = ThreadPoolExecutor(5)
        # for i in range(10):
        #     p.submit(func, i)
        p.map(func, range(1, 10))  # map replaces the for loop + submit
        print('main')
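The example above discards what `map` returns. The return value is an iterator that yields the results in the order of the inputs, even when later tasks finish first. A small sketch to illustrate this; `slow_square` and `ordered_results` are hypothetical names, and the sleep is arranged so that smaller inputs finish last.

```python
from concurrent.futures import ThreadPoolExecutor
import time


def slow_square(n):
    # Smaller inputs sleep longer, so they complete after larger ones.
    time.sleep(0.05 * (5 - n))
    return n ** 2


def ordered_results():
    with ThreadPoolExecutor(5) as pool:
        # list() consumes the iterator and waits for every task.
        return list(pool.map(slow_square, range(1, 5)))


if __name__ == "__main__":
    print(ordered_results())
```

The results come back in input order rather than completion order.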

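Asynchronous calls and the callback mechanism

A function can be attached to each task submitted to a process pool or thread pool. It is triggered automatically when the task finishes and receives the task's Future object as its argument; calling result() on that object yields the task's return value. Such a function is called a callback.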

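Synchronous call

    from concurrent.futures import ThreadPoolExecutor
    from threading import current_thread
    import time
    import random


    def guahao():
        # "guahao" = registration step: take the temperature ("tiwen").
        print('%s step 1: taking temperature' % current_thread().name)
        time.sleep(random.randint(1, 3))
        res = random.randint(36, 42)
        return {'Name': current_thread().name, 'tiwen': res}


    def wenzhen(info):
        # "wenzhen" = consultation step: report the temperature.
        name = info['Name']
        tiwen = info['tiwen']
        print('%s has a temperature of %s' % (name, tiwen))


    if __name__ == '__main__':
        t = ThreadPoolExecutor(10)
        for i in range(10):
            # result() blocks until this task finishes, so the tasks run one after another.
            res = t.submit(guahao).result()
            wenzhen(res)
        print('main')

Result: each person has to finish taking their temperature before the next person can start.

    # ThreadPoolExecutor-0_0 step 1: taking temperature
    # ThreadPoolExecutor-0_0 has a temperature of 39
    # ThreadPoolExecutor-0_0 step 1: taking temperature
    # ThreadPoolExecutor-0_0 has a temperature of 40
    # ThreadPoolExecutor-0_1 step 1: taking temperature
    # ThreadPoolExecutor-0_1 has a temperature of 36
    # ThreadPoolExecutor-0_0 step 1: taking temperature
    # ThreadPoolExecutor-0_0 has a temperature of 42
    # ThreadPoolExecutor-0_2 step 1: taking temperature
    # ThreadPoolExecutor-0_2 has a temperature of 40
    # ThreadPoolExecutor-0_1 step 1: taking temperature
    # ThreadPoolExecutor-0_1 has a temperature of 40
    # ThreadPoolExecutor-0_3 step 1: taking temperature
    # ThreadPoolExecutor-0_3 has a temperature of 41
    # ThreadPoolExecutor-0_0 step 1: taking temperature
    # ThreadPoolExecutor-0_0 has a temperature of 39
    # ThreadPoolExecutor-0_4 step 1: taking temperature
    # ThreadPoolExecutor-0_4 has a temperature of 37
    # ThreadPoolExecutor-0_2 step 1: taking temperature
    # ThreadPoolExecutor-0_2 has a temperature of 39
    # main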

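Asynchronous call

    from concurrent.futures import ThreadPoolExecutor
    from threading import current_thread
    import time
    import random


    def guahao():
        # "guahao" = registration step: take the temperature ("tiwen").
        print('%s step 1: taking temperature' % current_thread().name)
        time.sleep(random.randint(1, 3))
        res = random.randint(36, 42)
        return {'Name': current_thread().name, 'tiwen': res}


    def wenzhen(res):
        # The callback receives the Future; result() returns guahao's return value.
        info = res.result()
        name = info['Name']
        tiwen = info['tiwen']
        print('%s has a temperature of %s' % (name, tiwen))


    if __name__ == '__main__':
        t = ThreadPoolExecutor(10)
        for i in range(10):
            t.submit(guahao).add_done_callback(wenzhen)
        print('main')

Result: nobody waits for the previous person. Everyone takes their temperature at the same time, and each one reports their own temperature once it has been measured.

    # ThreadPoolExecutor-0_0 step 1: taking temperature
    # ThreadPoolExecutor-0_1 step 1: taking temperature
    # ThreadPoolExecutor-0_2 step 1: taking temperature
    # ThreadPoolExecutor-0_3 step 1: taking temperature
    # ThreadPoolExecutor-0_4 step 1: taking temperature
    # ThreadPoolExecutor-0_5 step 1: taking temperature
    # ThreadPoolExecutor-0_6 step 1: taking temperature
    # ThreadPoolExecutor-0_7 step 1: taking temperature
    # ThreadPoolExecutor-0_8 step 1: taking temperature
    # ThreadPoolExecutor-0_9 step 1: taking temperature
    # main
    # ThreadPoolExecutor-0_0 has a temperature of 36
    # ThreadPoolExecutor-0_2 has a temperature of 39
    # ThreadPoolExecutor-0_5 has a temperature of 36
    # ThreadPoolExecutor-0_6 has a temperature of 41
    # ThreadPoolExecutor-0_9 has a temperature of 41
    # ThreadPoolExecutor-0_3 has a temperature of 42
    # ThreadPoolExecutor-0_4 has a temperature of 38
    # ThreadPoolExecutor-0_8 has a temperature of 38
    # ThreadPoolExecutor-0_1 has a temperature of 40
    # ThreadPoolExecutor-0_7 has a temperature of 38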