When running HBase, beyond pre-splitting regions and designing rowkeys sensibly, it is also common to tune the system's built-in parameters.

1. Heap memory tuning

hbase-site.xml

    <!-- Total size of all memstores on a RegionServer. Exceeding it triggers a flush to disk.
         Defaults to 40% of the heap. A RegionServer-level flush blocks client writes. -->
    <property>
      <name>hbase.regionserver.global.memstore.size</name>
      <value></value>
      <description>Maximum size of all memstores in a region server before new
        updates are blocked and flushes are forced. Defaults to 40% of heap (0.4).
        Updates are blocked and flushes are forced until size of all memstores
        in a region server hits hbase.regionserver.global.memstore.size.lower.limit.
        The default value in this configuration has been intentionally left empty in order to
        honor the old hbase.regionserver.global.memstore.upperLimit property if present.
      </description>
    </property>
    <!-- Think of this as a safety setting. Sometimes the cluster's write load is very high and
         the write rate keeps exceeding the flush rate; in that case the memstore should not grow
         past a safe bound. Writes are then blocked until the memstore returns to a "manageable"
         size, which by default is heap size * 0.4 * 0.95. In other words, once a
         RegionServer-level flush is triggered, client writes stay blocked until the total
         memstore size on that RegionServer drops to heap size * 0.4 * 0.95. -->
    <property>
      <name>hbase.regionserver.global.memstore.size.lower.limit</name>
      <value></value>
      <description>Maximum size of all memstores in a region server before flushes are forced.
        Defaults to 95% of hbase.regionserver.global.memstore.size (0.95).
        A 100% value for this value causes the minimum possible flushing to occur when updates are
        blocked due to memstore limiting.
        The default value in this configuration has been intentionally left empty in order to
        honor the old hbase.regionserver.global.memstore.lowerLimit property if present.
      </description>
    </property>

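Bigger is not automatically better for this parameter. If the memory is set very large, then once writes back up to the blocking threshold, the amount of data that must be flushed to get back to the normal level (heap size * 0.4 * 0.95) grows as well, so clients stay blocked for longer.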
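The arithmetic above can be sketched in a few lines of Python. This is only an illustration: the function name `memstore_thresholds` and the sample heap sizes are made up for the example, and the fractions 0.4 and 0.95 are the defaults quoted in the descriptions above.

```python
GIB = 1024 ** 3

def memstore_thresholds(heap_bytes, upper_fraction=0.4, lower_fraction=0.95):
    """Return (blocking threshold, resume threshold) in bytes.

    upper_fraction mirrors hbase.regionserver.global.memstore.size,
    lower_fraction mirrors hbase.regionserver.global.memstore.size.lower.limit.
    """
    upper = heap_bytes * upper_fraction
    lower = upper * lower_fraction
    return upper, lower

# Hypothetical heap sizes, chosen only to show how the gap scales.
for heap_gib in (8, 32):
    upper, lower = memstore_thresholds(heap_gib * GIB)
    to_flush = upper - lower
    print(f"heap={heap_gib} GiB: block at {upper / GIB:.2f} GiB, "
          f"resume at {lower / GIB:.2f} GiB, "
          f"flush at least {to_flush / GIB:.2f} GiB to unblock")
```

The amount that has to be flushed before writes resume grows linearly with the heap, which is why a larger setting can mean a longer blocked period.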

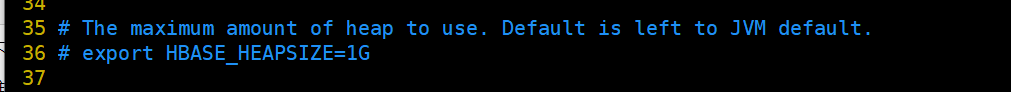

To change the heap size itself, edit hbase-env.sh.

2. Raise the DataNode's limit on concurrently open files

hdfs-site.xml

    <!-- HBase usually works on a large number of files at the same time. Depending on the
         cluster's size and workload, set this to 4096 or higher. -->
    <property>
      <name>dfs.datanode.max.transfer.threads</name>
      <value>4096</value>
      <description>
        Specifies the maximum number of threads to use for transferring data
        in and out of the DN.
      </description>
    </property>

3. Lengthen the wait time for high-latency data operations

hdfs-site.xml

    <!-- If a given operation has very high latency, the socket needs to wait longer. Consider
         raising this value (default 60000 ms) so that the socket does not time out. Note that,
         per the description below, this timeout applies to image transfer. -->
    <property>
      <name>dfs.image.transfer.timeout</name>
      <value>60000</value>
      <description>
        Socket timeout for image transfer in milliseconds. This timeout and the related
        dfs.image.transfer.bandwidthPerSec parameter should be configured such
        that normal image transfer can complete successfully.
        This timeout prevents client hangs when the sender fails during
        image transfer. This is socket timeout during image transfer.
      </description>
    </property>

4. Improve write efficiency (by enabling compression)

mapred-site.xml

    <!-- The values shown are the defaults. Set mapreduce.map.output.compress to true to
         actually enable compression of map output. -->
    <property>
      <name>mapreduce.map.output.compress</name>
      <value>false</value>
      <description>Should the outputs of the maps be compressed before being
        sent across the network. Uses SequenceFile compression.
      </description>
    </property>
    <property>
      <name>mapreduce.map.output.compress.codec</name>
      <value>org.apache.hadoop.io.compress.DefaultCodec</value>
      <description>If the map outputs are compressed, how should they be
        compressed?
      </description>
    </property>
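To get a feel for why compressing map output reduces the data sent across the network, here is a small sketch using Python's `zlib` module. It does not run Hadoop; it only applies DEFLATE compression (the family of algorithm behind `DefaultCodec`, as far as I know) to a made-up, highly repetitive payload. Real map output will compress better or worse depending on the data.

```python
import zlib

# Hypothetical "map output": tab-separated records with many repeated keys.
raw = b"".join(b"user_%04d\tclick\t1\n" % (i % 50) for i in range(10_000))

compressed = zlib.compress(raw)

# Compression is lossless: decompressing gives back the original bytes.
assert zlib.decompress(compressed) == raw

ratio = len(compressed) / len(raw)
print(f"raw={len(raw)} bytes, compressed={len(compressed)} bytes, ratio={ratio:.3f}")
```

The trade-off is extra CPU time spent compressing and decompressing, so the benefit depends on whether the job is bound by network and disk or by CPU.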

5. Set the number of RPC listeners

hbase-site.xml

    <!-- Number of RPC listener instances a RegionServer starts by default, i.e. the number of
         threads it has for handling I/O requests. Increase this value when there are many
         clients or many read/write requests. -->
    <property>
      <name>hbase.regionserver.handler.count</name>
      <value>30</value>
      <description>Count of RPC Listener instances spun up on RegionServers.
        Same property is used by the Master for count of master handlers.
      </description>
    </property>

6. Tune the HStore file size

hbase-site.xml

    <!-- Maximum HStoreFile size. When one column family of a region grows past this size, the
         region is split. If you run MapReduce jobs over HBase, you can lower this value: one
         region maps to one map task, so an oversized region makes its map task run too long. -->
    <property>
      <name>hbase.hregion.max.filesize</name>
      <value>10737418240</value>
      <description>
        Maximum HStoreFile size. If any one of a column families' HStoreFiles has
        grown to exceed this value, the hosting HRegion is split in two.
      </description>
    </property>
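As a rough illustration of how this value affects the number of map tasks, the sketch below estimates a lower bound on the region count for a table of a given size. It assumes a single column family, regions filled right up to the limit, and one map task per region as stated above; the helper name `min_regions` and the table size are hypothetical. Real clusters usually have more regions than this, since freshly split regions are well below the limit.

```python
GIB = 1024 ** 3
TIB = 1024 ** 4

def min_regions(table_bytes, max_filesize=10737418240):
    """Lower bound on regions (and thus map tasks) for a table of table_bytes.

    max_filesize mirrors hbase.hregion.max.filesize (default shown: 10 GiB).
    """
    # Integer ceiling division, never less than one region.
    return max(1, -(-table_bytes // max_filesize))

table = 1 * TIB  # hypothetical table size
print(min_regions(table))            # with the 10 GiB value shown above
print(min_regions(table, 2 * GIB))   # with a smaller, hypothetical 2 GiB limit
```

Lowering the limit yields more, smaller regions and therefore more, shorter map tasks, at the cost of more regions for the cluster to manage.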

7. Increase the read and write caches

hbase-site.xml

    <!-- Size of the HBase client's write buffer, i.e. how much the client batches before
         submitting to the server. The buffer takes memory on both the client and the server.
         A larger buffer means fewer RPCs but more memory used. -->
    <property>
      <name>hbase.client.write.buffer</name>
      <value>2097152</value>
      <description>Default size of the HTable client write buffer in bytes.
        A bigger buffer takes more memory -- on both the client and server
        side since server instantiates the passed write buffer to process
        it -- but a larger buffer size reduces the number of RPCs made.
        For an estimate of server-side memory-used, evaluate
        hbase.client.write.buffer * hbase.regionserver.handler.count
      </description>
    </property>
    <!-- Number of rows the client caches when running an HBase scan. A small value means more
         RPCs; a large value uses more memory. -->
    <property>
      <name>hbase.client.scanner.caching</name>
      <value>2147483647</value>
      <description>Number of rows that we try to fetch when calling next
        on a scanner if it is not served from (local, client) memory. This configuration
        works together with hbase.client.scanner.max.result.size to try and use the
        network efficiently. The default value is Integer.MAX_VALUE so that
        the network will fill the chunk size defined by hbase.client.scanner.max.result.size
        rather than be limited by a particular number of rows since the size of rows varies
        table to table. If you know ahead of time that you will not require more than a certain
        number of rows from a scan, this configuration should be set to that row limit via
        Scan#setCaching. Higher caching values will enable faster scanners but will eat up more
        memory and some calls of next may take longer and longer times when the cache is empty.
        Do not set this value such that the time between invocations is greater than the scanner
        timeout; i.e. hbase.client.scanner.timeout.period
      </description>
    </property>
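The two trade-offs above can be put into numbers. The sketch below evaluates the server-side estimate given in the description (hbase.client.write.buffer * hbase.regionserver.handler.count) and a simplified count of scan RPCs for a given caching value. The helper names and the row counts are made up for illustration, and the scan estimate deliberately ignores hbase.client.scanner.max.result.size, which can also cap each batch.

```python
MIB = 1024 ** 2

def server_write_buffer_bytes(write_buffer=2097152, handler_count=30):
    """Server-side memory estimate: write buffer size times handler count."""
    return write_buffer * handler_count

def scan_rpcs(total_rows, caching):
    """Simplified number of next() round trips to fetch total_rows rows."""
    # Integer ceiling division: a partial final batch still costs one RPC.
    return -(-total_rows // caching)

# Using the values shown in this article: 2 MiB buffer, 30 handlers.
print(server_write_buffer_bytes() / MIB, "MiB")

# Hypothetical scan over one million rows with different caching values.
for caching in (100, 1000, 10000):
    print(caching, scan_rpcs(1_000_000, caching))
```

Doubling the write buffer doubles the server-side estimate, while a tenfold increase in scanner caching cuts the round trips roughly tenfold, each at the cost of more memory per request.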