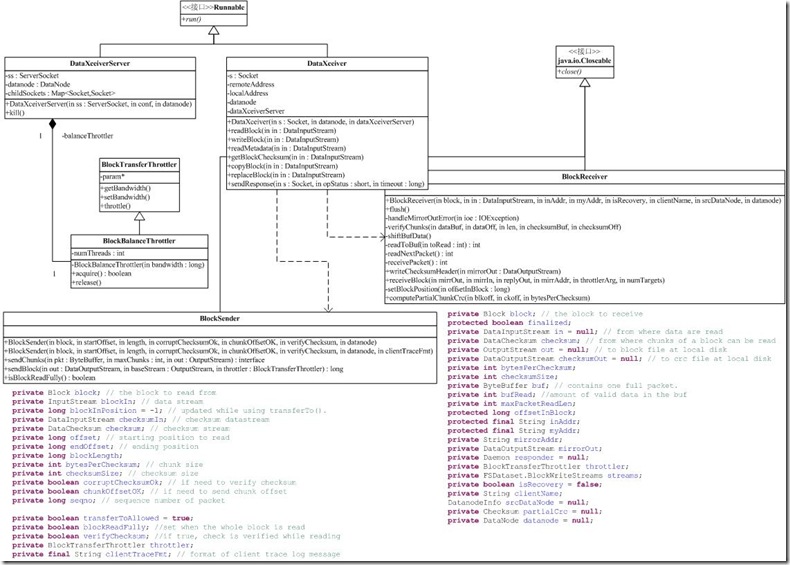

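Sending and receiving data blocks on the DataNode does not use the RPC mechanism introduced earlier. The reason is simple: RPC is a command-style interface, whereas the data path of a DataNode is usually stream-oriented. DataXceiverServer and DataXceiver implement this streaming mechanism. DataXceiver in turn relies on two helper classes, BlockSender and BlockReceiver. The class diagram is shown in image 1 above.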

DataXceiverServer

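DataXceiverServer is relatively simple. It creates a ServerSocket to accept requests; for every accepted connection it creates a DataXceiver to handle the request and stores the Socket in a Map named childSockets. It also creates a BlockBalanceThrottler object to limit the number of DataXceivers doing balancing work and to throttle their traffic. Most of the logic lives in the run function.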
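The accept loop described above can be sketched roughly as follows. This is not the Hadoop source: the class name `AcceptLoopSketch` and the `handler` callback (standing in for DataXceiver) are hypothetical, and only the `childSockets` map name comes from the text.

```java
import java.io.IOException;
import java.net.ServerSocket;
import java.net.Socket;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.function.Consumer;

// Hypothetical stand-in for DataXceiverServer: one thread per accepted connection.
final class AcceptLoopSketch implements Runnable {
    private final ServerSocket serverSocket;
    private final Consumer<Socket> handler; // plays the role of DataXceiver
    // Open connections, mirroring the childSockets map mentioned in the text.
    final Map<Socket, Socket> childSockets = new ConcurrentHashMap<>();

    AcceptLoopSketch(ServerSocket serverSocket, Consumer<Socket> handler) {
        this.serverSocket = serverSocket;
        this.handler = handler;
    }

    @Override
    public void run() {
        while (!serverSocket.isClosed()) {
            try {
                Socket s = serverSocket.accept();
                childSockets.put(s, s);
                Thread worker = new Thread(() -> {
                    try {
                        handler.accept(s);
                    } finally {
                        // Always forget and close the socket when the handler ends.
                        childSockets.remove(s);
                        try { s.close(); } catch (IOException ignored) { }
                    }
                });
                worker.setDaemon(true);
                worker.start();
            } catch (IOException e) {
                // accept() fails once the server socket is closed; leave the loop then.
                if (serverSocket.isClosed()) {
                    return;
                }
            }
        }
    }
}
```

Closing the ServerSocket from another thread is what ends the loop in this sketch.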

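A few parameters are worth mentioning here:

dfs.datanode.max.xcievers: the maximum number of DataXceivers a node may run; the default is 256. Too many of them can exhaust memory.

dfs.block.size: the block size is needed here to estimate whether the current partition still has enough free space.

dfs.balance.bandwidthPerSec: the network bandwidth used to copy blocks between nodes when running start-balancer.sh; the default is 1 MB/s.

BlockBalanceThrottler (which extends BlockTransferThrottler) performs the bandwidth control.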
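The post does not show how the throttler works internally, so the following is only a minimal sketch of one common approach, period-based bandwidth throttling. The class name `ThrottlerSketch`, the 500 ms period and the field names are assumptions for illustration, not the Hadoop implementation.

```java
// Hypothetical period-based throttler: callers block once the byte budget
// of the current period is used up.
final class ThrottlerSketch {
    private final long periodMillis = 500; // assumed accounting period
    private final long bytesPerPeriod;
    private long periodStart = System.currentTimeMillis();
    private long reserve; // bytes still allowed in the current period

    ThrottlerSketch(long bytesPerSecond) {
        this.bytesPerPeriod = bytesPerSecond * periodMillis / 1000;
        this.reserve = bytesPerPeriod;
    }

    // Call after sending numBytes; blocks until the budget is positive again.
    synchronized void throttle(long numBytes) throws InterruptedException {
        if (numBytes <= 0) {
            return;
        }
        reserve -= numBytes;
        while (reserve <= 0) {
            long now = System.currentTimeMillis();
            long periodEnd = periodStart + periodMillis;
            if (now < periodEnd) {
                wait(periodEnd - now); // sleep out the rest of this period
            } else {
                periodStart = now;     // start a new period with a fresh budget
                reserve += bytesPerPeriod;
            }
        }
    }
}
```

A sender would call `throttle(n)` after each chunk of `n` bytes, so a value such as dfs.balance.bandwidthPerSec would map onto the constructor argument.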

DataXceiver

The main flow of DataXceiver is in its run function:

    public void run() {
      DataInputStream in = null;
      try {
        in = new DataInputStream(
            new BufferedInputStream(NetUtils.getInputStream(s), SMALL_BUFFER_SIZE));
        short version = in.readShort();  // 2-byte protocol version
        byte op = in.readByte();         // 1-byte opcode
        // Refuse the request if this node already runs too many DataXceivers.
        int curXceiverCount = datanode.getXceiverCount();
        if (curXceiverCount > dataXceiverServer.maxXceiverCount) {
          throw new IOException("xceiverCount " + curXceiverCount
              + " exceeds the limit of concurrent xcievers "
              + dataXceiverServer.maxXceiverCount);
        }
        switch (op) {
        case DataTransferProtocol.OP_READ_BLOCK:
          readBlock(in);
          break;
        case DataTransferProtocol.OP_WRITE_BLOCK:
          writeBlock(in);
          break;
        case DataTransferProtocol.OP_READ_METADATA:
          readMetadata(in);
          break;
        case DataTransferProtocol.OP_REPLACE_BLOCK: // for balancing purpose; send to a destination
          replaceBlock(in);
          break;
        case DataTransferProtocol.OP_COPY_BLOCK:
          copyBlock(in);
          break;
        case DataTransferProtocol.OP_BLOCK_CHECKSUM: // get the checksum of a block
          getBlockChecksum(in);
          break;
        default:
          throw new IOException("Unknown opcode " + op + " in data stream");
        }
      } catch (Throwable t) {
        // Error handling is elided in this excerpt.
      } finally {
        // Cleanup (closing the stream and the socket) is elided in this excerpt.
      }
    }

1. As the code above shows, every client request starts with this header:

    +-----------------+-----------+
    | 2 bytes version | 1 byte OP |
    +-----------------+-----------+

2. OP supports six operations, all defined in DataTransferProtocol:

    public static final byte OP_WRITE_BLOCK = (byte) 80;
    public static final byte OP_READ_BLOCK = (byte) 81;
    public static final byte OP_READ_METADATA = (byte) 82;
    public static final byte OP_REPLACE_BLOCK = (byte) 83;
    public static final byte OP_COPY_BLOCK = (byte) 84;
    public static final byte OP_BLOCK_CHECKSUM = (byte) 85;
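To make the 3-byte header and the opcode table concrete, here is a small self-contained sketch that reads the header from a stream and names the operation. The class `RequestHeaderSketch` and its methods are hypothetical helpers; the opcode values are the ones listed above, and the version value is not validated here.

```java
import java.io.DataInputStream;
import java.io.IOException;
import java.io.InputStream;

// Hypothetical helper, not part of Hadoop: decodes "2 bytes version | 1 byte OP".
final class RequestHeaderSketch {
    static final byte OP_WRITE_BLOCK = (byte) 80;
    static final byte OP_READ_BLOCK = (byte) 81;
    static final byte OP_READ_METADATA = (byte) 82;
    static final byte OP_REPLACE_BLOCK = (byte) 83;
    static final byte OP_COPY_BLOCK = (byte) 84;
    static final byte OP_BLOCK_CHECKSUM = (byte) 85;

    static String describe(InputStream raw) throws IOException {
        DataInputStream in = new DataInputStream(raw);
        short version = in.readShort(); // 2 bytes, big-endian
        byte op = in.readByte();        // 1 byte opcode
        return "version=" + version + " op=" + opName(op);
    }

    static String opName(byte op) throws IOException {
        switch (op) {
            case OP_WRITE_BLOCK:    return "OP_WRITE_BLOCK";
            case OP_READ_BLOCK:     return "OP_READ_BLOCK";
            case OP_READ_METADATA:  return "OP_READ_METADATA";
            case OP_REPLACE_BLOCK:  return "OP_REPLACE_BLOCK";
            case OP_COPY_BLOCK:     return "OP_COPY_BLOCK";
            case OP_BLOCK_CHECKSUM: return "OP_BLOCK_CHECKSUM";
            default:
                throw new IOException("Unknown opcode " + op + " in data stream");
        }
    }
}
```

Because DataInputStream reads big-endian values, the bytes `00 0E 51` decode as version 14 with opcode 81 (OP_READ_BLOCK); the version number in this example is arbitrary.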

Read and write are the most complex of these operations, so let us start with the simpler ones.

OP_READ_METADATA reads the metadata of a block. The client request header looks like this:

    +-----------------+-----------------+
    | 8 byte Block ID | 8 byte genstamp |
    +-----------------+-----------------+

The response looks like this:

    +---------------+---------------------------+
    | 1 byte status | 4 byte length of metadata |
    +---------------+---------------------------+
    | meta data     | 0                         |
    +---------------+---------------------------+
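As an illustration of the OP_READ_METADATA exchange, the sketch below encodes the 16-byte request and decodes a response in the layout drawn above. `ReadMetadataSketch` is a hypothetical class. Two details are assumptions, because the diagram does not state them: a status of 0 means success, and the trailing 0 is a 4-byte integer.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.IOException;

// Hypothetical codec for the OP_READ_METADATA request and response bodies.
final class ReadMetadataSketch {
    static final byte STATUS_SUCCESS = 0; // assumption: 0 means success

    // Request body: 8-byte block ID followed by 8-byte generation stamp.
    static byte[] encodeRequest(long blockId, long genStamp) throws IOException {
        ByteArrayOutputStream buf = new ByteArrayOutputStream();
        DataOutputStream out = new DataOutputStream(buf);
        out.writeLong(blockId);
        out.writeLong(genStamp);
        out.flush();
        return buf.toByteArray();
    }

    // Response: status, metadata length, metadata bytes, end marker 0.
    static byte[] decodeResponse(byte[] response) throws IOException {
        DataInputStream in = new DataInputStream(new ByteArrayInputStream(response));
        byte status = in.readByte();
        if (status != STATUS_SUCCESS) {
            throw new IOException("Unexpected status " + status);
        }
        int length = in.readInt();
        if (length < 0) {
            throw new IOException("Negative metadata length " + length);
        }
        byte[] meta = new byte[length];
        in.readFully(meta);
        int end = in.readInt(); // assumption: the trailing 0 is a 4-byte int
        if (end != 0) {
            throw new IOException("Missing end marker, got " + end);
        }
        return meta;
    }
}
```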

OP_REPLACE_BLOCK replaces a block and is mainly used for load balancing. DataXceiver receives a block and writes it to disk, then notifies the namenode so that the source block can be deleted. The client request header looks like this:

    +-----------------+-----------------+
    | 8 byte Block ID | 8 byte genstamp |
    +-----------------+-----------------+
    | 4 byte length   | source node id  |
    +-----------------+-----------------+
    | source data node                  |
    +-----------------------------------+

The processing goes as follows: send a copy-block request (OP_COPY_BLOCK) to the source datanode, receive its response, create a BlockReceiver to receive the block data, and finally notify the namenode that the block has been received.

OP_COPY_BLOCK copies a block and is also mainly used for load balancing. It sends the block data to the datanode that issued the request; DataXceiver creates a BlockSender object to send the data. The request header looks like this:

    +-----------------+-----------------+
    | 8 byte Block ID | 8 byte genstamp |
    +-----------------+-----------------+

OP_BLOCK_CHECKSUM returns the checksum of a block: an MD5 digest computed over all of the block's checksums. The client request header looks like this:

    +-----------------+-----------------+
    | 8 byte Block ID | 8 byte genstamp |
    +-----------------+-----------------+

The response looks like this:

    +----------------------+----------------------+
    | 2 byte status        | 4 byte bytes per CRC |
    +----------------------+----------------------+
    | 8 byte CRC per block | 16 byte md5 digest   |
    +----------------------+----------------------+
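The idea of "an MD5 digest over all of the block's checksums" can be sketched as below. This is an illustration under stated assumptions, not the DataNode code: it assumes one CRC32 value per `bytesPerCrc` bytes of data, written as 4 big-endian bytes, and the class `BlockChecksumSketch` is hypothetical. A real DataNode would read stored checksums rather than recompute them from the data.

```java
import java.nio.ByteBuffer;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.zip.CRC32;

// Hypothetical illustration of "bytes per CRC", "CRC per block" and the MD5 digest.
final class BlockChecksumSketch {
    // Number of CRC values needed to cover dataLength bytes.
    static long crcPerBlock(long dataLength, int bytesPerCrc) {
        return (dataLength + bytesPerCrc - 1) / bytesPerCrc;
    }

    // MD5 over the concatenated per-chunk CRC32 values (assumed 4 bytes each).
    static byte[] md5OfChecksums(byte[] data, int bytesPerCrc)
            throws NoSuchAlgorithmException {
        MessageDigest md5 = MessageDigest.getInstance("MD5");
        CRC32 crc = new CRC32();
        for (int off = 0; off < data.length; off += bytesPerCrc) {
            int len = Math.min(bytesPerCrc, data.length - off);
            crc.reset();
            crc.update(data, off, len);
            md5.update(ByteBuffer.allocate(4).putInt((int) crc.getValue()).array());
        }
        return md5.digest(); // always 16 bytes, matching the response layout
    }
}
```

Comparing such digests lets a client check whether two replicas hold the same data without transferring the blocks themselves.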

OP_READ_BLOCK reads block data. DataXceiver creates a BlockSender object to send the data to the client. The client request header looks like this:

    +---------------------+-----------------+
    | 8 byte Block ID     | 8 byte genstamp |
    +---------------------+-----------------+
    | 8 byte start offset | 8 byte length   |
    +---------------------+-----------------+
    | 4 byte length       | <DFSClient id>  |
    +---------------------+-----------------+
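Putting the common header and the OP_READ_BLOCK fields together, a client-side encoder might look like the sketch below. `ReadBlockRequestSketch` is hypothetical, and it follows the diagram literally: the client id is written as a 4-byte length followed by UTF-8 bytes, which is an assumption about the string encoding and may differ from what a real client sends.

```java
import java.io.ByteArrayOutputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.nio.charset.StandardCharsets;

// Hypothetical encoder for a complete OP_READ_BLOCK request.
final class ReadBlockRequestSketch {
    static final byte OP_READ_BLOCK = (byte) 81;

    static byte[] encode(short version, long blockId, long genStamp,
                         long startOffset, long length, String clientName)
            throws IOException {
        ByteArrayOutputStream buf = new ByteArrayOutputStream();
        DataOutputStream out = new DataOutputStream(buf);
        out.writeShort(version);      // common header: 2-byte version
        out.writeByte(OP_READ_BLOCK); // common header: 1-byte opcode
        out.writeLong(blockId);
        out.writeLong(genStamp);
        out.writeLong(startOffset);   // where in the block to start reading
        out.writeLong(length);        // how many bytes to read
        byte[] name = clientName.getBytes(StandardCharsets.UTF_8);
        out.writeInt(name.length);    // 4-byte length, as drawn in the diagram
        out.write(name);
        out.flush();
        return buf.toByteArray();
    }
}
```

With a 6-byte client name the request is 3 + 32 + 4 + 6 = 45 bytes long.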

OP_WRITE_BLOCK writes block data. DataXceiver creates a BlockReceiver object to receive the data from the client. The client request header looks like this:

    +--------------------+-----------------------+
    | 8 byte Block ID    | 8 byte genstamp       |
    +--------------------+-----------------------+
    | 4 byte num of datanodes in entire pipeline |
    +--------------------+-----------------------+
    | 1 byte is recovery | 4 byte length         |
    +--------------------+-----------------------+
    | <DFSClient id>     | 1 byte has src node   |
    +--------------------+-----------------------+
    | src datanode info  | 4 byte num of targets |
    +--------------------+-----------------------+
    | target datanodes                           |
    +--------------------------------------------+

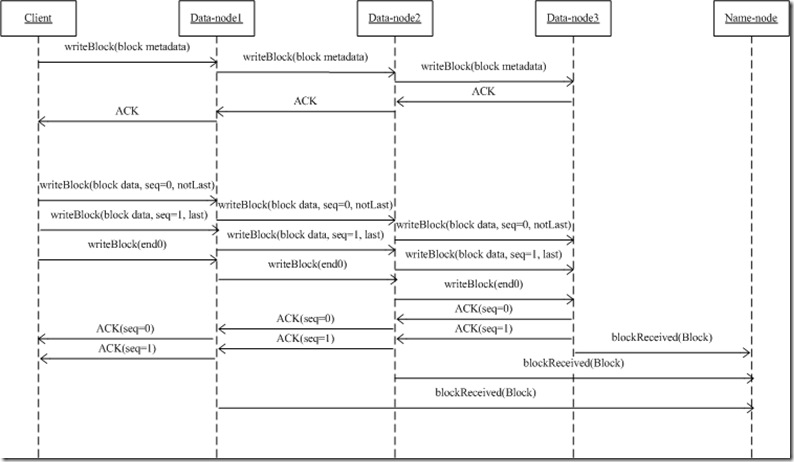

写块数据是一个比较复杂的操作,一个简单的时序图可以示意下:

这个过程其实还是挺复杂的,代码没看的很仔细
