Training a Neural Network, Part 2

# Assumes the TensorFlow 1.x graph-mode API (tf.placeholder, tf.train).
import numpy as np
import tensorflow as tf
import matplotlib.pyplot as plt
import input_data

# one_hot=True encodes each label as a one-hot (0/1) vector
mnist = input_data.read_data_sets('data/', one_hot=True)

# Network topology: 784 inputs -> 256 -> 128 -> 10 classes
n_hidden_1 = 256
n_hidden_2 = 128
n_input = 784
n_classes = 10

# Inputs and outputs
x = tf.placeholder("float", [None, n_input])
y = tf.placeholder("float", [None, n_classes])

# Network parameters
stddev = 0.1
weights = {
    'w1': tf.Variable(tf.random.normal([n_input, n_hidden_1], stddev=stddev)),
    'w2': tf.Variable(tf.random.normal([n_hidden_1, n_hidden_2], stddev=stddev)),
    'out': tf.Variable(tf.random.normal([n_hidden_2, n_classes], stddev=stddev))
}
biases = {
    # the key must be 'b1' to match the lookup in multilayer_perceptron
    'b1': tf.Variable(tf.random.normal([n_hidden_1])),
    'b2': tf.Variable(tf.random.normal([n_hidden_2])),
    'out': tf.Variable(tf.random.normal([n_classes]))
}
print("NETWORK READY")

def multilayer_perceptron(_X, _weights, _biases):
    # Two sigmoid hidden layers, then a linear output layer that returns logits
    layer_1 = tf.nn.sigmoid(tf.add(tf.matmul(_X, _weights['w1']), _biases['b1']))
    layer_2 = tf.nn.sigmoid(tf.add(tf.matmul(layer_1, _weights['w2']), _biases['b2']))
    return tf.matmul(layer_2, _weights['out']) + _biases['out']

# Prediction
pred = multilayer_perceptron(x, weights, biases)

# Loss and optimizer. TensorFlow's built-in cross-entropy function
# requires labels and logits to be passed as keyword arguments.
cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=y, logits=pred))
optm = tf.train.GradientDescentOptimizer(learning_rate=0.01).minimize(cost)

# Accuracy: fraction of samples whose predicted class matches the label
corr = tf.equal(tf.argmax(pred, 1), tf.argmax(y, 1))
accr = tf.reduce_mean(tf.cast(corr, "float"))

# Variable initializer
init = tf.compat.v1.global_variables_initializer()
print("FUNCTIONS READY")
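To check what the graph above is meant to compute without depending on any particular TensorFlow version, here is a minimal NumPy sketch of the same forward pass, loss, and accuracy. It is only an illustration under assumptions: the helper names `sigmoid`, `forward`, and `softmax_cross_entropy` are my own, the batch is random data rather than MNIST, and no training step is included.

```python
import numpy as np

def sigmoid(z):
    """Element-wise logistic function."""
    return 1.0 / (1.0 + np.exp(-z))

def forward(x, weights, biases):
    """Return logits for a batch x of shape (batch, n_input)."""
    layer_1 = sigmoid(x @ weights['w1'] + biases['b1'])
    layer_2 = sigmoid(layer_1 @ weights['w2'] + biases['b2'])
    return layer_2 @ weights['out'] + biases['out']

def softmax_cross_entropy(logits, labels):
    """Mean cross-entropy between one-hot labels and softmax(logits)."""
    # Subtract the row-wise max for numerical stability before exponentiating
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return float(-(labels * log_probs).sum(axis=1).mean())

# Same layer sizes and weight stddev as the TensorFlow code
n_input, n_hidden_1, n_hidden_2, n_classes = 784, 256, 128, 10
stddev = 0.1
rng = np.random.default_rng(0)

weights = {
    'w1': rng.normal(0.0, stddev, (n_input, n_hidden_1)),
    'w2': rng.normal(0.0, stddev, (n_hidden_1, n_hidden_2)),
    'out': rng.normal(0.0, stddev, (n_hidden_2, n_classes)),
}
biases = {
    'b1': rng.normal(size=n_hidden_1),
    'b2': rng.normal(size=n_hidden_2),
    'out': rng.normal(size=n_classes),
}

# Hypothetical stand-in batch: 4 random "images" with random one-hot labels
batch = rng.random((4, n_input))
labels = np.eye(n_classes)[rng.integers(0, n_classes, size=4)]

logits = forward(batch, weights, biases)
loss = softmax_cross_entropy(logits, labels)
accuracy = float((logits.argmax(axis=1) == labels.argmax(axis=1)).mean())
print(logits.shape, loss, accuracy)
```

The dictionary keys used inside `forward` have to match the keys the parameters are stored under, exactly as in the TensorFlow version, and the logits should come out with shape `(batch, n_classes)`.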

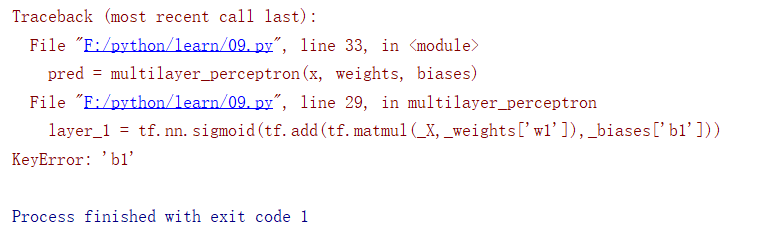

I ran into an error today and have not resolved it yet.
