Versions used for this setup:

Ubuntu 14.04.1 LTS, 64-bit desktop edition

hadoop-2.2.0.tar.gz

jdk-7u67-linux-x64.tar.gz

scala-2.10.4.tgz

spark-1.1.0-bin-hadoop2.4.tgz

Scala configuration:

Extract scala-2.10.4:

tar -zxvf scala-2.10.4.tgz
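The exports in this guide point at /home/ubuntu/scala and /home/ubuntu/spark, while the archives typically unpack into versioned directories such as scala-2.10.4, so a rename step is needed somewhere. A minimal sketch of one way to do it follows; `install_archive` is a hypothetical helper, not part of Scala or Spark, and the paths in the usage comments assume the archives were downloaded to /home/ubuntu.

```shell
# install_archive ARCHIVE TARGET_DIR (hypothetical helper)
# Unpacks ARCHIVE next to TARGET_DIR, then renames the archive's
# top-level directory to TARGET_DIR. TARGET_DIR must not exist yet.
install_archive() {
  local archive="$1" target="$2"
  local parent top
  parent="$(dirname "$target")"
  # First path component of the first entry, e.g. "scala-2.10.4".
  top="$(tar -tzf "$archive" | head -n 1 | cut -d/ -f1)"
  tar -zxf "$archive" -C "$parent"
  mv "$parent/$top" "$target"
}

# Usage (assumed download location):
#   install_archive /home/ubuntu/scala-2.10.4.tgz /home/ubuntu/scala
#   install_archive /home/ubuntu/spark-1.1.0-bin-hadoop2.4.tgz /home/ubuntu/spark
```

Reading the directory name from the archive avoids hard-coding it, at the cost of assuming the archive has a single top-level directory.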

Edit the configuration with sudo vi /etc/profile and add:

#scala settings

export SCALA_HOME=/home/ubuntu/scala

export PATH=$SCALA_HOME/bin:$PATH

Spark configuration:

Extract spark-1.1.0

Edit the configuration with sudo vi /etc/profile and add:

#spark settings

export SPARK_HOME=/home/ubuntu/spark

export PATH=$SPARK_HOME/bin:$PATH

Save the file and reload the configuration:

source /etc/profile
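After reloading the profile, it can help to confirm that the exported variables resolve to real directories before moving on. The sketch below is one way to check; `check_home` is a hypothetical helper introduced only for illustration.

```shell
# check_home NAME (hypothetical helper)
# Prints OK if the exported variable NAME points at an existing
# directory, and a warning otherwise.
check_home() {
  dir="$(printenv "$1" || true)"
  if [ -n "$dir" ] && [ -d "$dir" ]; then
    echo "OK: $1=$dir"
  else
    echo "WARN: $1 is unset or not a directory"
  fi
}

check_home SCALA_HOME
check_home SPARK_HOME
```

If both lines report OK, running `scala -version` is a further quick check that the PATH entry works.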

Go to the spark/conf directory.

Add to spark-env.sh:

export SCALA_HOME=/home/ubuntu/scala

export JAVA_HOME=/home/ubuntu/jdk

export SPARK_MASTER_IP=master

export SPARK_WORKER_MEMORY=1000m

Add to slaves:

slave1

Start Spark (from the spark directory):

sbin/start-all.sh

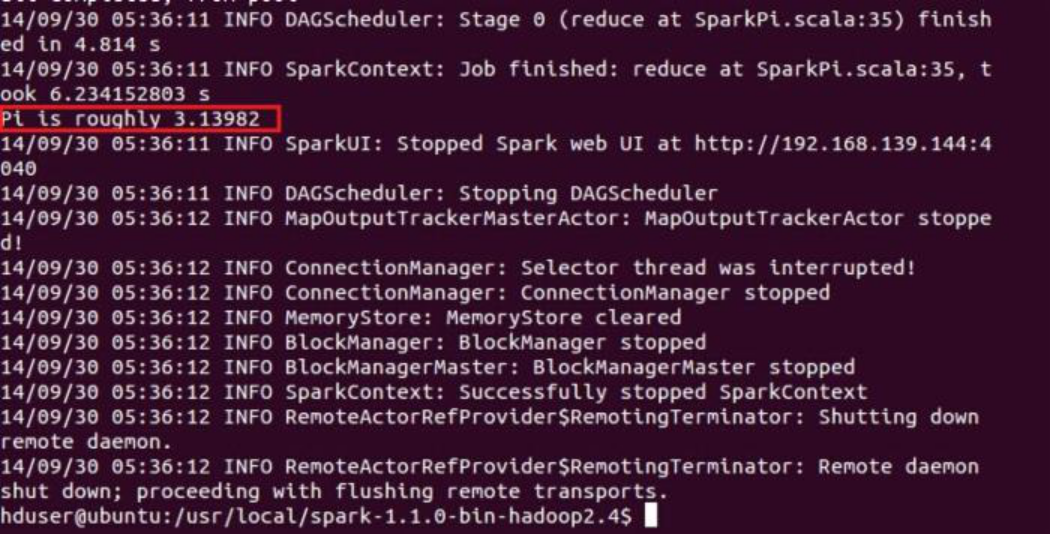
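One way to confirm the daemons came up is the JDK's `jps` tool, which lists running JVMs as `<pid> <MainClass>`; the standalone master appears as Master and each worker as Worker. The sketch below assumes `jps` is on the PATH, and `daemon_running` is a hypothetical helper, not a Spark command.

```shell
# daemon_running NAME (hypothetical helper)
# Reads `jps` output on stdin and succeeds if a JVM whose main
# class is exactly NAME is listed.
daemon_running() {
  awk -v name="$1" '$2 == name { found = 1 } END { exit !found }'
}

# On the master node:
#   jps | daemon_running Master && echo "Master is up"
# On slave1:
#   jps | daemon_running Worker && echo "Worker is up"
```

With default settings the master also serves a web UI on port 8080 (here that would be http://master:8080), where registered workers should be listed.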

Test whether Spark was installed successfully:

bin/run-example SparkPi
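SparkPi prints its estimate on a line that begins with "Pi is roughly", surrounded by a fair amount of log output. A small sketch for pulling out just the number follows; `extract_pi` is a hypothetical helper, and the usage comment assumes the logs go to stderr, as they do with Spark's default logging setup.

```shell
# extract_pi (hypothetical helper)
# Reads program output on stdin and prints only the value from the
# "Pi is roughly ..." line.
extract_pi() {
  sed -n 's/^Pi is roughly //p'
}

# Usage from the spark directory; 10 is the optional number of slices:
#   bin/run-example SparkPi 10 2>/dev/null | extract_pi
```

The estimate is computed by random sampling, so the printed value differs slightly from run to run.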