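I am responsible for building our company's log analysis platform, and I had long wanted to bring CDN logs into it. I finally got this working a few days ago, so here is exactly how I did it: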

1. Collecting the logs

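Tencent Cloud CDN logs are generally refreshed once an hour, so at any given moment you can only download log data that is at least an hour old. In my experience, though, the previous hour's log is sometimes not available yet, so to be safe the script downloads the log from two hours ago. To download the logs, fetch the log list through the Tencent Cloud API and then download the files it links to.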
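The two-hour safety margin can be sketched as below. This is a minimal illustration rather than part of the script's interface: the function name `log_hour` and the `lag_hours` parameter are assumptions introduced here, and the date format is the one the collection script passes as `startDate`/`endDate`.

```python
from datetime import datetime, timedelta


def log_hour(now, lag_hours=2):
    """Return the hour `lag_hours` before `now` as "YYYY-MM-DD HH:00:00"."""
    target = now - timedelta(hours=lag_hours)
    # Minutes and seconds are fixed to 00 because logs are published per hour.
    return target.strftime("%Y-%m-%d %H:00:00")


if __name__ == "__main__":
    print(log_hour(datetime(2017, 2, 23, 14, 30)))
```

Subtracting a `timedelta` instead of decrementing the hour number keeps the result valid across midnight, when the date also has to roll back.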

Tencent Cloud log download API: https://www.qcloud.com/document/product/228/8087

Log collection script:

    [root@BJVM-2-181 bin]# cat get_cdn_log.py
    #!/usr/bin/env python
    # coding=utf-8
    import base64
    import gzip
    import hashlib
    import hmac
    import os
    import random
    import time
    from datetime import datetime, timedelta

    import requests


    class Sign(object):
        def __init__(self, secretId, secretKey):
            self.secretId = secretId
            self.secretKey = secretKey

        # Build the request signature
        def make(self, requestHost, requestUri, params, method='GET'):
            srcStr = method.upper() + requestHost + requestUri + '?' + "&".join(
                k.replace("_", ".") + "=" + str(params[k]) for k in sorted(params.keys()))
            # Encode explicitly so the HMAC call works on both Python 2 and 3
            hashed = hmac.new(self.secretKey.encode('utf-8'), srcStr.encode('utf-8'), hashlib.sha1)
            return base64.b64encode(hashed.digest()).decode('ascii')


    class CdnHelper(object):
        SecretId = 'AKIDLsldjflsdjflsdjflsdjfpGSO5XoGiY9'
        SecretKey = 'SeaHjSDFLJSLDFJQIuFJ7rMiz0lGV'
        requestHost = 'cdn.api.qcloud.com'
        requestUri = '/v2/index.php'

        def __init__(self, host, startDate, endDate):
            self.host = host
            self.startDate = startDate
            self.endDate = endDate
            self.params = {
                'Timestamp': int(time.time()),
                'Action': 'GetCdnLogList',
                'SecretId': CdnHelper.SecretId,
                'Nonce': random.randint(10000000, 99999999),
                'host': self.host,
                'startDate': self.startDate,
                'endDate': self.endDate
            }
            self.params['Signature'] = Sign(CdnHelper.SecretId, CdnHelper.SecretKey).make(
                CdnHelper.requestHost, CdnHelper.requestUri, self.params)
            self.url = 'https://%s%s' % (CdnHelper.requestHost, CdnHelper.requestUri)

        def GetCdnLogList(self):
            ret = requests.get(self.url, params=self.params)
            return ret.json()


    class GZipTool(object):
        """
        Compress and decompress gzip files
        """
        def __init__(self, bufSize=1024 * 8):
            self.bufSize = bufSize
            self.fin = None
            self.fout = None

        def compress(self, src, dst):
            self.fin = open(src, 'rb')
            self.fout = gzip.open(dst, 'wb')
            self.__in2out()

        def decompress(self, gzFile, dst):
            self.fin = gzip.open(gzFile, 'rb')
            self.fout = open(dst, 'wb')
            self.__in2out()

        def __in2out(self):
            # Copy in fixed-size chunks, then close both files
            while True:
                buf = self.fin.read(self.bufSize)
                if len(buf) < 1:
                    break
                self.fout.write(buf)
            self.fin.close()
            self.fout.close()


    def download(link, name):
        try:
            r = requests.get(link)
            # Treat HTTP errors as failures instead of saving the error page
            r.raise_for_status()
            with open(name, 'wb') as f:
                f.write(r.content)
            return True
        except Exception:
            return False


    def writelog(src, dst):
        # Append to a file named by day: drop the two-digit hour from the timestamp prefix
        prefix, rest = dst.split('-', 1)
        dst = prefix[:-2] + '-' + rest
        with open(src, 'r') as f1:
            with open(dst, 'a+') as f2:
                for line in f1:
                    f2.write(line)


    if __name__ == '__main__':
        # startDate = "2017-02-23 12:00:00"
        # endDate = "2017-02-23 12:00:00"
        # Previous hour:
        # startDate = endDate = time.strftime('%Y-%m-%d ', time.localtime()) + str(time.localtime().tm_hour-1) + ":00:00"
        # Two hours ago, to be safe
        tm = datetime.now() + timedelta(hours=-2)
        startDate = endDate = tm.strftime("%Y-%m-%d %H:00:00")
        # hosts = ['userface.51img1.com']
        hosts = [
            'pfcdn.xxx.com',
            'pecdn.xxx.com',
            'pdcdn.xxx.com',
            'pccdn.xxx.com',
            'pbcdn.xxx.com',
            'pacdn.xxx.com',
            'p9cdn.xxx.com',
            'p8cdn.xxx.com',
            'p7cdn.xxx.com',
        ]
        for host in hosts:
            try:
                obj = CdnHelper(host, startDate, endDate)
                ret = obj.GetCdnLogList()
                link = ret['data']['list'][0]['link']
                name = ret['data']['list'][0]['name']
                # File name the downloaded archive is saved under
                gzip_name = '/data/logs/cdn/cdn_log_temp/' + name + '.gz'
                # File name after decompression
                local_name = '/data/logs/cdn/cdn_log_temp/' + name + '.log'
                # File the hourly log is appended to
                real_path = '/data/logs/cdn/' + name + '.log'
                print("%s %s" % (local_name, real_path))
                status = download(link, gzip_name)
                if status:
                    try:
                        GZipTool().decompress(gzip_name, local_name)
                        writelog(local_name, real_path)
                        # os.remove(gzip_name)
                        os.remove(local_name)
                    except Exception:
                        continue
            except Exception as e:
                print(e)
                continue

Add it to cron so that it runs once an hour:

    # CDN logs
    30 */1 * * * /usr/bin/python /root/bin/get_cdn_log.py > /dev/null 2>&1

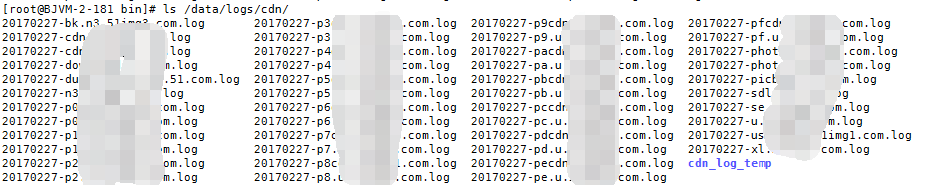

The screenshot above shows the decompressed logs: one file per domain, split by day.
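How an hourly log name maps to its per-day file can be sketched as follows. This assumes the names returned by the log list look like `<YYYYMMDDHH>-<domain>`, which is inferred from the slicing in `writelog` rather than confirmed by the API documentation; `daily_log_path` and its `log_dir` parameter are hypothetical names used only for this illustration.

```python
def daily_log_path(hourly_name, log_dir="/data/logs/cdn"):
    """Map an hourly log name to the per-day file it is appended to."""
    # Assumption: hourly_name has the form "<YYYYMMDDHH>-<domain>".
    stamp, domain = hourly_name.split("-", 1)
    day = stamp[:-2]  # drop the two-digit hour, keeping YYYYMMDD
    return "%s/%s-%s.log" % (log_dir, day, domain)


if __name__ == "__main__":
    print(daily_log_path("2017022312-pfcdn.xxx.com"))
```

Splitting on the first hyphen only means a domain that itself contains a hyphen is kept whole.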

2. Filebeat configuration (see the official documentation for what each option means)

    [root@BJ-2-11 bin]# cat /usr/local/app/filebeat-1.2.3-x86_64/nginx-php.yml
    filebeat:
      prospectors:
        - paths:
            - /data/logs/cdn/*.log
          document_type: cdn-log
          input_type: log
          #tail_files: true
          multiline:
            negate: true
            match: after
    output:
      logstash:
        hosts: ["10.80.2.181:5048", "10.80.2.182:5048"]
    shipper:
    logging:
      files:

3. Logstash configuration

Log format:

20170227152116 61.135.234.125 cdn.xxx.com /game/2017/201701/20170121/57037f7fc1a0dde9091d4fe6502a6c53.jpg 17769 22 26 200 http://www.xxx.com/ 5 "Mozilla/4.0 (compatible; MSIE 8.0; Windows NT 6.1; Trident/4.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; NetworkBench/7.0.0.282-5004888-124025)" "(null)" GET HTTP/1.1 hit

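Each log line contains, in order: request time, IP of the client accessing the domain, domain accessed, requested file path, bytes transferred for this request, province, ISP, HTTP status code, referer, request-time (milliseconds), User-Agent, range, HTTP method, HTTP protocol version, and cache hit/miss.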
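To make the field order concrete, here is a minimal Python sketch that splits one line into the fifteen fields. It is only an illustration and not part of the pipeline: the field names follow the grok captures in the Logstash configuration, `parse_cdn_line` is a hypothetical helper, the User-Agent in the sample is shortened, and `protocol` here holds the whole `HTTP/1.1` token.

```python
import re

# (field name, regex) pairs in log order.
_FIELDS = [
    ("timestamp", r"\d{14}"),
    ("client_ip", r"\S+"),
    ("server_name", r"\S+"),
    ("request", r"\S+"),
    ("bytes", r"\d+"),
    ("province", r"-?\d+"),
    ("operator", r"-?\d+"),
    ("status", r"\d{3}"),
    ("referrer", r"\S+"),
    ("request_time", r"\d+"),
    ("agent", r'"[^"]*"'),
    ("range", r'"[^"]*"'),
    ("method", r"\S+"),
    ("protocol", r"\S+"),
    ("cache", r"\S+"),
]
LINE_RE = re.compile(
    "^" + " ".join("(?P<%s>%s)" % (name, rx) for name, rx in _FIELDS) + "$"
)

SAMPLE = (
    "20170227152116 61.135.234.125 cdn.xxx.com "
    "/game/2017/201701/20170121/57037f7fc1a0dde9091d4fe6502a6c53.jpg "
    "17769 22 26 200 http://www.xxx.com/ 5 "
    '"Mozilla/4.0 (compatible; MSIE 8.0; Windows NT 6.1)" "(null)" '
    "GET HTTP/1.1 hit"
)


def parse_cdn_line(line):
    """Return a dict of the 15 fields, or None if the line does not match."""
    m = LINE_RE.match(line.strip())
    if m is None:
        return None
    record = m.groupdict()
    # The User-Agent and range fields are wrapped in double quotes in the log.
    for key in ("agent", "range"):
        record[key] = record[key][1:-1]
    return record


if __name__ == "__main__":
    rec = parse_cdn_line(SAMPLE)
    print(rec["client_ip"], rec["status"], rec["cache"])
```

A regular expression is used instead of plain whitespace splitting because the User-Agent contains spaces and is only delimited by its surrounding quotes.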

Configuration file:

    # /usr/local/app/logstash-2.3.4/conf.d/logstash.conf
    input {
      beats {
        port => 5048
        host => "0.0.0.0"
      }
    }

    filter {
      .....(omitted)
      else if [type] == "cdn-log" {
        grok {
          patterns_dir => ["./patterns"]
          # Quotes and parentheses around the range field are escaped so the
          # pattern matches the literal "(null)" in the sample line
          match => { "message" => "%{DATESTAMP_EVENTLOG:timestamp} %{IPORHOST:client_ip} %{IPORHOST:server_name} %{NOTSPACE:request} %{NUMBER:bytes} %{NUMBER:province} %{NUMBER:operator} %{NUMBER:status} (?:%{URI:referrer}|%{WORD:referrer}) %{NUMBER:request_time} %{QS:agent} \"\(%{WORD:range}\)\" %{WORD:method} HTTP/%{NUMBER:protocol} %{WORD:cache}" }
        }
        date {
          match => [ "timestamp", "yyyyMMddHHmmss" ]
          target => "@timestamp"
        }
        alter {
          # Map numeric province and ISP codes to names
          condrewrite => [
            "province", "22", "北京",
            "province", "86", "内蒙古",
            "province", "146", "山西",
            "province", "1069", "河北",
            "province", "1077", "天津",
            "province", "119", "宁夏",
            "province", "152", "陕西",
            "province", "1208", "甘肃",
            "province", "1467", "青海",
            "province", "1468", "新疆",
            "province", "145", "黑龙江",
            "province", "1445", "吉林",
            "province", "1464", "辽宁",
            "province", "2", "福建",
            "province", "120", "江苏",
            "province", "121", "安徽",
            "province", "122", "山东",
            "province", "1050", "上海",
            "province", "1442", "浙江",
            "province", "182", "河南",
            "province", "1135", "湖北",
            "province", "1465", "江西",
            "province", "1466", "湖南",
            "province", "118", "贵州",
            "province", "153", "云南",
            "province", "1051", "重庆",
            "province", "1068", "四川",
            "province", "1155", "西藏",
            "province", "4", "广东",
            "province", "173", "广西",
            "province", "1441", "海南",
            "province", "0", "其他",
            "province", "1", "港澳台",
            "province", "-1", "海外",
            "operator", "2", "中国电信",
            "operator", "26", "中国联通",
            "operator", "38", "教育网",
            "operator", "43", "长城宽带",
            "operator", "1046", "中国移动",
            "operator", "3947", "中国铁通",
            "operator", "-1", "海外运营商",
            "operator", "0", "其他运营商"
          ]
        }
      }
    } # filter

    output {
      if "_grokparsefailure" in [tags] {
        file {
          path => "/var/log/logstash/grokparsefailure-%{[type]}-%{+YYYY.MM.dd}.log"
        }
      }
      ......(omitted)
      else if [type] == "cdn-log" {
        elasticsearch {
          hosts => ["10.80.2.13:9200","10.80.2.14:9200","10.80.2.15:9200","10.80.2.16:9200"]
          sniffing => true
          manage_template => true
          template_overwrite => true
          template_name => "cdn"
          template => "/usr/local/app/logstash-2.3.4/templates/cdn.json"
          index => "%{[type]}-%{+YYYY.MM.dd}"
          document_type => "%{[type]}"
        }
      }
      ......(omitted)
    } # output
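What the `date` and `alter` filters do to each event can be approximated in Python as below. This is a sketch for understanding only, not how Logstash implements it: `enrich` is a hypothetical function, the two lookup tables are short excerpts of the mapping in the configuration, and time zones are ignored.

```python
# -*- coding: utf-8 -*-
from datetime import datetime

# Excerpts of the code-to-name mappings from the condrewrite list.
PROVINCES = {"22": "北京", "4": "广东", "1050": "上海", "0": "其他"}
OPERATORS = {"2": "中国电信", "26": "中国联通", "1046": "中国移动"}


def enrich(event):
    """Return a copy of `event` with a parsed timestamp and readable names."""
    out = dict(event)
    # Same layout as the date filter's "yyyyMMddHHmmss" pattern.
    out["@timestamp"] = datetime.strptime(event["timestamp"], "%Y%m%d%H%M%S")
    # Codes without a mapping are left unchanged, as condrewrite would do.
    out["province"] = PROVINCES.get(event["province"], event["province"])
    out["operator"] = OPERATORS.get(event["operator"], event["operator"])
    return out


if __name__ == "__main__":
    e = enrich({"timestamp": "20170227152116", "province": "22", "operator": "26"})
    print(e["@timestamp"].isoformat(), e["province"], e["operator"])
```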

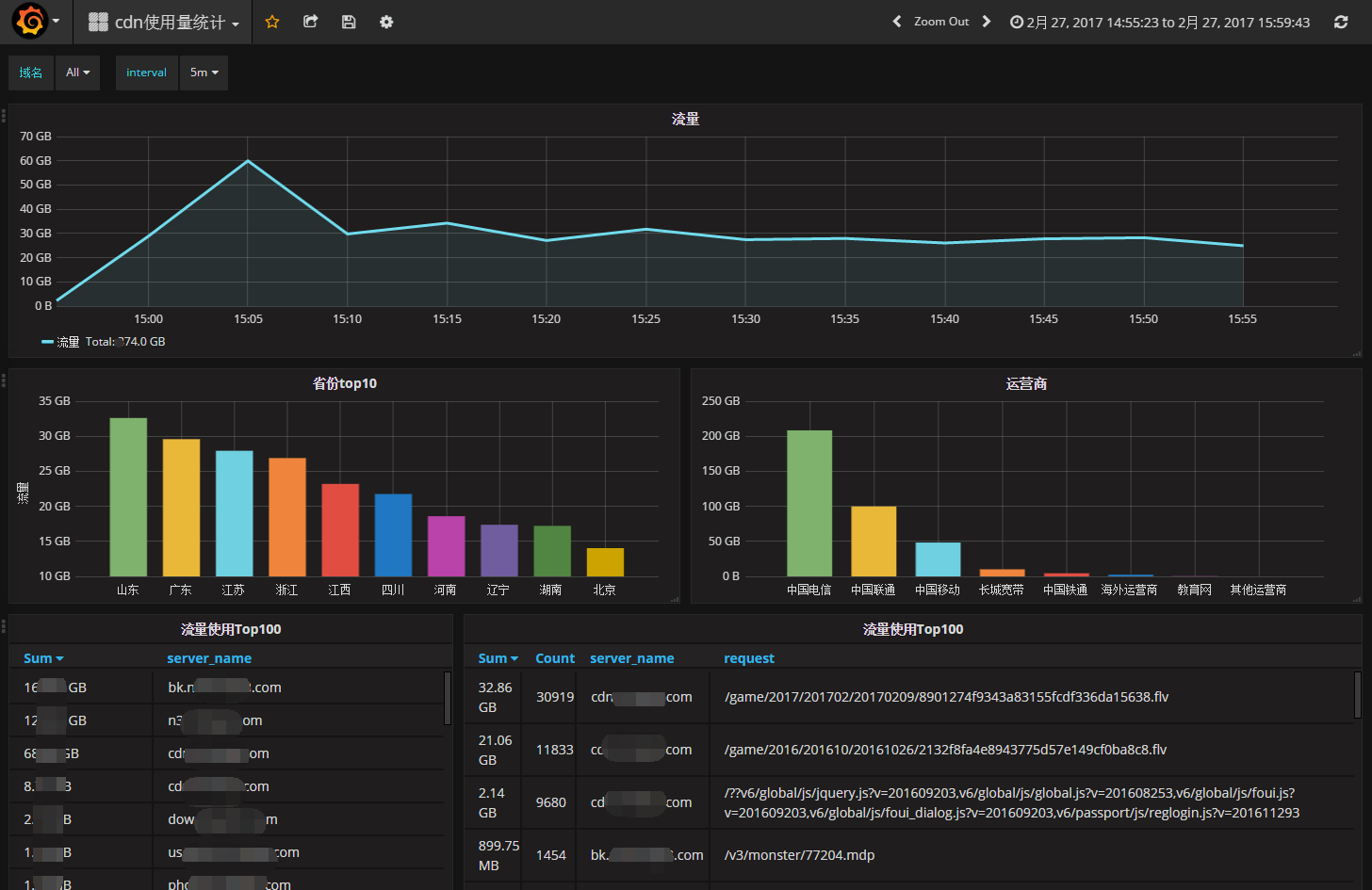

4. Results (one hour of data)

CDN usage dashboard

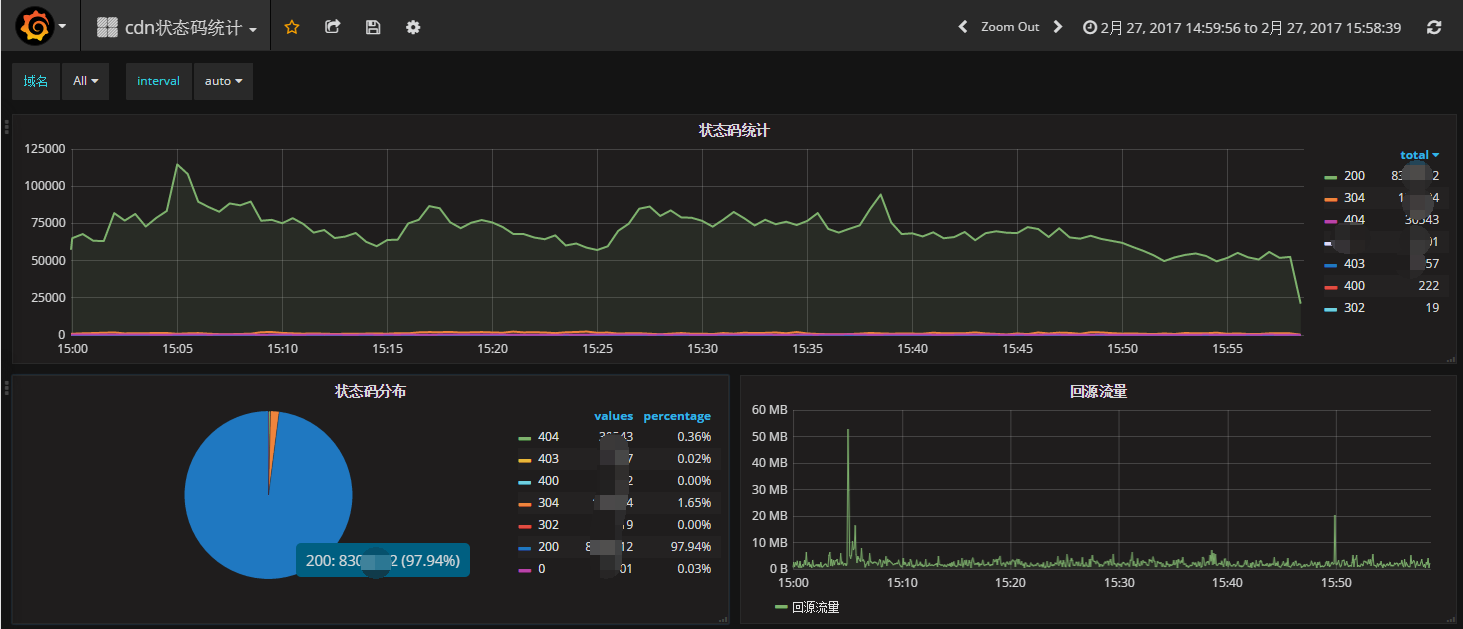

CDN access statistics

Status code statistics