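The functional requirements are: 1. Data collection: periodically crawl the web for hot words related to the information field.

2. Data cleaning: clean the hot-word data, then use automatic classification and counting to generate a catalog of hot words in the information field.

3. Hot-word explanation: automatically add a Chinese explanation to each hot word (with reference to Baidu Baike or Wikipedia).

4. Hot-word references: tag recent articles or news that cite each hot word and generate a directory of hyperlinks that users can click to visit.

5. Data visualization: ① show the hot words as a word cloud or hot-word chart; ② use a relationship graph to show how closely the hot words are related.

6. Data report: export the full hot-word catalog and the explanations as a report in WORD format.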

This post completes part of step 1: scraping the titles and contents of the recommended news on cnblogs (博客园) into a text file.

Approach: inspecting the site shows a regular pattern in the URLs from one listing page to the next.

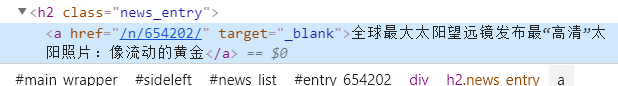

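Changing the page parameter in the URL selects a different listing page. In addition, the href of each entry on a listing page is the link to that news item's detail page.

The script therefore loops over these href links and fetches each linked page to get the full article. The code is as follows: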

import requests
from lxml import etree


def getDetail(href, title):
    """Fetch one news detail page and append its title and body text to news.txt."""
    print(title)
    # Cookie copied from a logged-in browser session and sent with every detail request.
    head = {

'cookie':'_ga=GA1.2.617656226.1563849568; __gads=ID=c19014f666d039b5:T=1563849576:S=ALNI_MZBAhutXhG60zo7dVhXyhwkfl_XzQ; UM_distinctid=16cacb45b0c180-0745b471080efa-7373e61-144000-16cacb45b0d6de; __utmz=226521935.1571044157.1.1.utmcsr=baidu|utmccn=(organic)|utmcmd=organic; __utma=226521935.617656226.1563849568.1571044157.1571044156.1; SyntaxHighlighter=python; .Cnblogs.AspNetCore.Cookies=CfDJ8Nf-Z6tqUPlNrwu2nvfTJEgfH-Wr7LrYHIrX6zFY2UqlCesxMAsEz9JpAIbaPlpJgugnPrXvs5KuTOPnzbk1pa_VZIVlfx1x5ufN55Z8sb63ACHlNKd4JMqI93TE2ONBD5KSWd-ryP2Tq1WfI9e_uTiJIIO9vlm54pfLY0fIReGGtqJkQ5E90ahfHtJeDTgM1RHXRieqriLUIXRciu-3QYwk8x5vLZfJIEUMO5g_seeG6G6FW2kbd6Uw3BfRkkIi-g2O_LSlBqj0DdbJFlNmd-TnPmckz5AENnX9f3SPVVhfmg7zINi4G2SSUcYWSvtVqdUtQ8o9vbBKosXoFOTUNH17VXX_IX8V0ODbs8qQfCkPFaDjS8RWSRkW9KDPOmXyqrtHvRXgGRydee52XJ1N8V-Mu0atT0zMwqzblDj2PDahV1R0Y7nBvzIy8uit15vGtR_r0gRFmFSt3ftTkk63zZixWgK7uZ5BsCMZJdhqpMSgLkDETjau0Qe1vqtLvDGOuBZBkznlzmTa-oZ7D6LrDhHJubRpCICUGRb5SB6WcbaxwOqE1um40OSyila-PgwySA; .CNBlogsCookie=9F86E25644BC936FAE04158D0531CF8F01D604657A302F62BA92F3EB0D7BE317FDE7525EFE154787036095256D48863066CB19BB91ADDA7932BCC3A2B13F6F098FC62FDA781E0FBDC55280B73670A89AE57E1CA5E1269FC05B8FFA0DD6048B0363AF0F08; _gid=GA1.2.1435993629.1581088378; __utmc=66375729; __utmz=66375729.1581151594.2.2.utmcsr=cnblogs.com|utmccn=(referral)|utmcmd=referral|utmcct=/; __utma=66375729.617656226.1563849568.1581151593.1581161200.3; __utmb=66375729.6.10.1581161200'

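    }
    url2 = "https://news.cnblogs.com" + href
    r2 = requests.get(url2, headers=head)
    html = r2.content.decode("utf-8")
    html1 = etree.HTML(html)
    # The article body lives in <div id="news_body">.
    content1 = html1.xpath('//div[@id="news_body"]')
    if len(content1) == 0:
        print("Abnormal page: no news body found")
    else:
        # string(.) concatenates all text nodes under the div.
        content2 = content1[0].xpath('string(.)')
        # Remove line breaks and spaces so that each article fits on a single line.
        # The exact characters were garbled in the original listing; '\r', '\n', ' '
        # and the em space (the "&emsp" noted by the author) are assumed here.
        content = content2.replace('\r', '').replace('\n', '').replace(' ', '').replace('\u2003', '')
        print(content)
        # Append "title content" as one line; the with-block closes the file.
        with open("news.txt", "a+", encoding='utf-8') as f:
            f.write(title + ' ' + content + '\n')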

if __name__ == '__main__':
    for i in range(0, 100):
        print("***********************************")
        print(i)
        page = i + 1
        url = "https://news.cnblogs.com/n/recommend?page=" + str(page)
        r = requests.get(url)
        html = r.content.decode("utf-8")
        print("Status code:", r.status_code)
        html1 = etree.HTML(html)
        # Each entry is an <h2 class="news_entry"> whose <a> holds the link and the title.
        href = html1.xpath('//h2[@class="news_entry"]/a/@href')
        title = html1.xpath('//h2[@class="news_entry"]/a/text()')
        print(len(href))
        # Pair links with titles; zip avoids an IndexError when a page has fewer than 18 entries.
        for link, name in zip(href, title):
            getDetail(link, name)
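
The pagination and link-extraction steps of the script can also be isolated into small functions that are checked against a local HTML string before any request is sent. The following is only a sketch under assumptions: the names `listing_url`, `parse_listing` and `SAMPLE` are hypothetical and not part of the original script, and the sample markup merely imitates the `h2.news_entry` structure that the XPath in the script relies on.

```python
from lxml import etree

BASE_URL = "https://news.cnblogs.com"


def listing_url(page):
    """Return the URL of the given (1-based) recommended-news listing page."""
    return f"{BASE_URL}/n/recommend?page={page}"


def parse_listing(html):
    """Return (absolute_url, title) pairs for every news entry found in the HTML."""
    tree = etree.HTML(html)
    pairs = []
    for link in tree.xpath('//h2[@class="news_entry"]/a'):
        href = link.get("href")
        title = (link.text or "").strip()
        if href:  # skip entries without a link
            pairs.append((BASE_URL + href, title))
    return pairs


# Hypothetical markup that imitates the structure of a listing page.
SAMPLE = """
<div>
  <h2 class="news_entry"><a href="/n/1/">First</a></h2>
  <h2 class="news_entry"><a href="/n/2/">Second</a></h2>
</div>
"""

if __name__ == "__main__":
    print(listing_url(3))
    for url, title in parse_listing(SAMPLE):
        print(url, title)
```

Reading the link and the title from the same `<a>` element keeps the two values paired, which is the same reason the loop over entries should not assume a fixed count per page.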

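The scraped output looks as follows (excerpt): each line of the file holds one article, with the title and the content separated by a space.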

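Summary: during scraping I ran into the site's anti-scraping measures. Too many consecutive requests were detected, after which the site redirected straight to the login page. After some research, adding a cookie to the request headers (head) solved the problem.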
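
The redirect to the login page can also be detected in code instead of by eye. The sketch below is an assumption-laden illustration, not what the original script does: the substrings in `LOGIN_MARKERS` are guesses at what the login URL contains, the pause between requests is an added precaution, and `make_session`, `looks_like_login` and `fetch` are hypothetical names.

```python
import time

import requests

# Assumed substrings of the login page URL; adjust after checking a real redirect.
LOGIN_MARKERS = ("account.cnblogs.com", "/signin")


def make_session(cookie):
    """Create a session that sends the given cookie header with every request."""
    session = requests.Session()
    session.headers.update({"cookie": cookie})
    return session


def looks_like_login(final_url):
    """Return True if the URL reached after redirects appears to be the login page."""
    return any(marker in final_url for marker in LOGIN_MARKERS)


def fetch(session, url, delay=1.0):
    """Wait briefly, fetch the page, and fail loudly on a login redirect."""
    time.sleep(delay)  # spacing out requests is an assumption, not a tested fix
    resp = session.get(url, timeout=10)
    if looks_like_login(resp.url):
        raise RuntimeError("Redirected to the login page; the cookie may have expired")
    resp.raise_for_status()
    return resp.content.decode("utf-8")
```

A session built with `make_session` reuses one connection and one set of headers for both the listing pages and the detail pages, so the cookie only has to be set in a single place.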