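I. Background

Once production web servers sit behind a load-balanced cluster, checking the logs under each Tomcat instance one by one becomes tedious. ELK gives us a quick way to view those logs in one place.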

II. Environment

CentOS 7 and JDK 8. This guide uses ELK 5.0.0 (that is, Elasticsearch-5.0.0, Logstash-5.0.0, and kibana-5.0.0). Installing Elasticsearch 6.2.0 follows the same steps, which I have tested myself.

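III. Notes

1. ELK requires JDK 8 or later.

2. Stop the firewall: systemctl stop firewalld.service

3. Elasticsearch must not be started as root. It performs a security check at startup and refuses to run as root, so you need to create a separate user for it.

4. Past releases can be downloaded from the official site: https://www.elastic.co/downloads/past-releases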

IV. Installing Elasticsearch

1. Log in to CentOS as root, create the elk group, create the elk user as a member of that group, and then set a password for the elk user.

[root@iZuf6a50pk1lwxxkn0qr6tZ ~]# groupadd elk

[root@iZuf6a50pk1lwxxkn0qr6tZ ~]# useradd -g elk elk

[root@iZuf6a50pk1lwxxkn0qr6tZ ~]# passwd elk

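Changing password for user elk.

New password:

Retype new password:

passwd: all authentication tokens updated successfully.

2. Go to /usr/local, create an elk directory, and give the elk user ownership of it. Elasticsearch can be downloaded from the official site in a browser or fetched directly with wget; wget is used here.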

cd /usr/local

mkdir elk

chown -R elk:elk /usr/local/elk # give the elk user ownership

cd elk

wget https://artifacts.elastic.co/downloads/elasticsearch/elasticsearch-5.0.0.tar.gz

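Extract Elasticsearch: tar -zxvf elasticsearch-5.0.0.tar.gz

If you downloaded and extracted the archive as root, run chown -R elk:elk /usr/local/elk again afterwards so that the extracted files also belong to the elk user.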

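3. As root, go to /usr/local/elk/elasticsearch-5.0.0/config, edit elasticsearch.yml, and change the following parameters:

cluster.name: nmtx-cluster # name of the cluster

node.name: node-1 # name of this node

path.data: /data/elk/elasticsearch-data # directory where ES stores its data

path.logs: /data/elk/elasticsearch-logs # directory where ES stores its logs

network.host: 0.0.0.0

http.port: 9200 # HTTP port to expose

Create the /data/elk directory if it does not exist yet (mkdir -p /data/elk), then give the elk user ownership of it: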

chown -R elk:elk /data/elk

4. As root, edit /etc/sysctl.conf and append the line vm.max_map_count=262144 at the end, then apply it with sysctl -p /etc/sysctl.conf. Without this setting, ES fails to start with the following error:

max virtual memory areas vm.max_map_count [65530] is too low, increase to at least [262144]
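Before starting ES, it can help to confirm that the kernel setting actually took effect. The following is a minimal sketch and not part of the original setup: the function names meets_minimum and check_max_map_count are hypothetical, and it assumes a Linux host where /proc/sys/vm/max_map_count is readable.

```shell
#!/bin/sh
# Hypothetical helper: succeed when the current value ($1) is at least
# the required minimum ($2).
meets_minimum() {
    [ "$1" -ge "$2" ]
}

# Report whether vm.max_map_count satisfies the 262144 minimum quoted in
# the error message. Assumes Linux; falls back to 0 when the /proc entry
# cannot be read.
check_max_map_count() {
    current=$(cat /proc/sys/vm/max_map_count 2>/dev/null || echo 0)
    if meets_minimum "$current" 262144; then
        echo "vm.max_map_count=$current (ok)"
    else
        echo "vm.max_map_count=$current (too low, need at least 262144)"
    fi
}

check_max_map_count
```

If the script reports a value that is too low, the line in /etc/sysctl.conf was probably not applied yet, and running sysctl -p /etc/sysctl.conf again as root is the first thing to try.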

5. As root, edit /etc/security/limits.conf and add or modify the following lines:

* soft nproc 65536

* hard nproc 65536

* soft nofile 65536

* hard nofile 65536

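The new limits only apply to new login sessions, so log in as elk again after editing the file. Without these settings, ES reports the following error: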

max file descriptors [4096] for elasticsearch process is too low, increase to at least [65536]
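To check that the new limit is visible to the elk user, something like the following sketch can be run from a fresh elk login shell. The helper names limit_ok and report_nofile_limit are hypothetical; the only command it relies on is the shell's built-in ulimit -n, which may print either a number or the word unlimited.

```shell
#!/bin/sh
# Hypothetical helper: succeed when a ulimit value ($1, either "unlimited"
# or a number) is at least the required minimum ($2).
limit_ok() {
    case "$1" in
        unlimited) return 0 ;;
        *) [ "$1" -ge "$2" ] ;;
    esac
}

# Print the open-file limit of the current shell next to the 65536 value
# configured in limits.conf.
report_nofile_limit() {
    value=$(ulimit -n)
    if limit_ok "$value" 65536; then
        echo "ulimit -n = $value (ok)"
    else
        echo "ulimit -n = $value (below 65536)"
    fi
}

report_nofile_limit
```

If the value is still 4096 or similar, the session most likely predates the change to limits.conf.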

6. Switch to the elk user and start ES. The -d flag runs it in the background; once steps 4 and 5 are done, startup should normally go smoothly.

/usr/local/elk/elasticsearch-5.0.0/bin/elasticsearch -d

7. To verify that ES started successfully, run the following command:

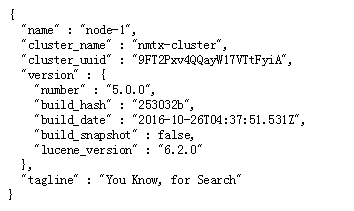

curl -XGET 'localhost:9200/?pretty'

If you see output like the following, ES has started successfully:

{

"name" : "node-1",

"cluster_name" : "nmtx-cluster",

"cluster_uuid" : "WdX1nqBPQJCPQniObzbUiQ",

"version" : {

"number" : "5.0.0",

"build_hash" : "253032b",

"build_date" : "2016-10-26T04:37:51.531Z",

"build_snapshot" : false,

"lucene_version" : "6.2.0"

},

"tagline" : "You Know, for Search"

}

Alternatively, open 192.168.1.66:9200 in a browser.
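Because ES is started in the background, the curl check can fail simply because the node is not ready yet. A small retry loop, sketched below, polls the same root endpoint until it answers. The names wait_until and es_is_up are hypothetical, and the attempt count and delay are arbitrary values chosen for illustration.

```shell
#!/bin/sh
# Hypothetical helper: run a probe command up to max_attempts times,
# sleeping delay seconds after each failure. Succeeds as soon as the
# probe succeeds, fails if every attempt fails.
# Usage: wait_until max_attempts delay command [args...]
wait_until() {
    max_attempts=$1
    delay=$2
    shift 2
    attempt=1
    while [ "$attempt" -le "$max_attempts" ]; do
        if "$@" >/dev/null 2>&1; then
            return 0
        fi
        attempt=$((attempt + 1))
        sleep "$delay"
    done
    return 1
}

# Probe the root endpoint used above. curl -f turns HTTP error statuses
# into a non-zero exit code, and -s silences the progress output.
es_is_up() {
    curl -sf "http://localhost:9200/?pretty"
}

# Example (not executed here): try up to 30 times, 2 seconds apart.
# wait_until 30 2 es_is_up && echo "Elasticsearch is up"
```

Note that with curl -f an authentication error (HTTP 401) also counts as a failure, so on a secured cluster the probe would need credentials.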

However, on version 6 with the X-Pack security plugin enabled, the same request may return the following instead:

    [elk@localhost bin]$ curl -XGET 'localhost:9200/?pretty'
    {
      "error" : {
        "root_cause" : [
          {
            "type" : "security_exception",
            "reason" : "missing authentication token for REST request [/?pretty]",
            "header" : {
              "WWW-Authenticate" : "Basic realm=\"security\" charset=\"UTF-8\""
            }
          }
        ],
        "type" : "security_exception",
        "reason" : "missing authentication token for REST request [/?pretty]",
        "header" : {
          "WWW-Authenticate" : "Basic realm=\"security\" charset=\"UTF-8\""
        }
      },
      "status" : 401
    }

In that case, pass a username and password with the request, for example:

    [elk@localhost bin]$ curl --user elastic:changeme -XGET 'localhost:9200/_cat/health?v&pretty'
    epoch      timestamp cluster        status node.total node.data shards pri relo init unassign pending_tasks max_task_wait_time active_shards_percent
    1556865276 06:34:36  my-application green           1         1      1   1    0    0        0             0                  -                100.0%

V. Installing Logstash

1. Download Logstash 5.0.0, again with wget, and extract it:

cd /usr/local/elk

wget https://artifacts.elastic.co/downloads/logstash/logstash-5.0.0.tar.gz

tar -zxvf logstash-5.0.0.tar.gz

2. Create a new file, /usr/local/elk/logstash-5.0.0/config/logstash.conf, and add the following content:

    input {
      tcp {
        port => 4567
        mode => "server"
        codec => json_lines
      }
    }

    filter {
    }

    output {
      elasticsearch {
        hosts => ["192.168.1.66:9200"]
        # cluster => "nmtx-cluster"  # left commented out: the HTTP-based elasticsearch output in Logstash 5.x is not expected to accept a cluster option
        index => "operation-%{+YYYY.MM.dd}"
      }
      stdout {
        codec => rubydebug
      }
    }

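A Logstash configuration file is built from three sections (filter may be left empty, as it is here):

- input{}: collects the logs. It can read from files, read from Redis, or open a port so that the applications producing the logs write directly to Logstash. This guide uses the last approach.

- filter{}: filters the collected logs and defines the fields to display based on the filtered result.

- output{}: sends the filtered logs to Elasticsearch, a file, Redis, and so on. In production you can remove stdout to avoid printing every log event to the console.

3. Start Logstash in the foreground first, to check that it starts successfully: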

/usr/local/elk/logstash-5.0.0/bin/logstash -f /usr/local/elk/logstash-5.0.0/config/logstash.conf

If the console shows output like the following, Logstash has started successfully:

Sending Logstash logs to /elk/logstash-5.0.0/logs which is now configured via log4j2.properties.

[2018-03-11T12:12:14,588][INFO ][logstash.inputs.tcp ] Starting tcp input listener {:address=>"0.0.0.0:4567"}

[2018-03-11T12:12:14,892][INFO ][logstash.outputs.elasticsearch] Elasticsearch pool URLs updated {:changes=>{:removed=>[], :added=>["http://localhost:9200"]}}

[2018-03-11T12:12:14,894][INFO ][logstash.outputs.elasticsearch] Using mapping template from {:path=>nil}

[2018-03-11T12:12:15,425][INFO ][logstash.outputs.elasticsearch] Attempting to install template {:manage_template=>{"template"=>"logstash-*", "version"=>50001, "settings"=>{"index.refresh_interval"=>"5s"}, "mappings"=>{"_default_"=>{"_all"=>{"enabled"=>true, "norms"=>false}, "dynamic_templates"=>[{"message_field"=>{"path_match"=>"message", "match_mapping_type"=>"string", "mapping"=>{"type"=>"text", "norms"=>false}}}, {"string_fields"=>{"match"=>"*", "match_mapping_type"=>"string", "mapping"=>{"type"=>"text", "norms"=>false, "fields"=>{"keyword"=>{"type"=>"keyword"}}}}}], "properties"=>{"@timestamp"=>{"type"=>"date", "include_in_all"=>false}, "@version"=>{"type"=>"keyword", "include_in_all"=>false}, "geoip"=>{"dynamic"=>true, "properties"=>{"ip"=>{"type"=>"ip"}, "location"=>{"type"=>"geo_point"}, "latitude"=>{"type"=>"half_float"}, "longitude"=>{"type"=>"half_float"}}}}}}}}

[2018-03-11T12:12:15,445][INFO ][logstash.outputs.elasticsearch] Installing elasticsearch template to _template/logstash

[2018-03-11T12:12:15,729][INFO ][logstash.outputs.elasticsearch] New Elasticsearch output {:class=>"LogStash::Outputs::ElasticSearch", :hosts=>["localhost:9200"]}

[2018-03-11T12:12:15,732][INFO ][logstash.pipeline ] Starting pipeline {"id"=>"main", "pipeline.workers"=>4, "pipeline.batch.size"=>125, "pipeline.batch.delay"=>5, "pipeline.max_inflight"=>500}

[2018-03-11T12:12:15,735][INFO ][logstash.pipeline ] Pipeline main started

[2018-03-11T12:12:15,768][INFO ][logstash.agent ] Successfully started Logstash API endpoint {:port=>9600}

Press Ctrl+C to stop Logstash, then start it in the background:

nohup /usr/local/elk/logstash-5.0.0/bin/logstash -f /usr/local/elk/logstash-5.0.0/config/logstash.conf > /data/elk/logstash-log.file 2>&1 &
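With Logstash listening on port 4567, the tcp input can be smoke-tested from the shell before any application is wired up. The sketch below builds a one-line JSON event, as the json_lines codec expects, and pipes it to the port. The function names make_event and send_event are hypothetical, and it assumes nc (netcat) is installed. The escaping only covers backslashes and double quotes, which is enough for simple test messages but not for arbitrary input.

```shell
#!/bin/sh
# Hypothetical helper: wrap a message in a one-line JSON object, escaping
# backslashes and double quotes so the line stays valid JSON.
make_event() {
    escaped=$(printf '%s' "$1" | sed -e 's/\\/\\\\/g' -e 's/"/\\"/g')
    printf '{"message":"%s"}\n' "$escaped"
}

# Send one event to the Logstash tcp input. Assumes nc (netcat) is
# available; host and port default to the values used in this guide.
# Usage: send_event message [host] [port]
send_event() {
    make_event "$1" | nc "${2:-192.168.1.66}" "${3:-4567}"
}

# Example (not executed here):
# send_event "hello from the shell"
```

If Logstash is still running in the foreground with the stdout output enabled, the event should appear on its console in rubydebug format. Some netcat variants keep the connection open after the input ends, in which case Ctrl+C closes it.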

VI. Installing Kibana

1. Download Kibana with wget and extract it:

cd /usr/local/elk

wget https://artifacts.elastic.co/downloads/kibana/kibana-5.0.0-linux-x86_64.tar.gz

tar zxvf kibana-5.0.0-linux-x86_64.tar.gz

2. Edit /usr/local/elk/kibana-5.0.0-linux-x86_64/config/kibana.yml and set the following:

server.port: 5601

server.host: "192.168.1.66"

elasticsearch.url: "http://192.168.1.66:9200"

kibana.index: ".kibana"

3. Start Kibana in the background:

nohup /usr/local/elk/kibana-5.0.0-linux-x86_64/bin/kibana > /data/elk/kibana-log.file 2>&1 &

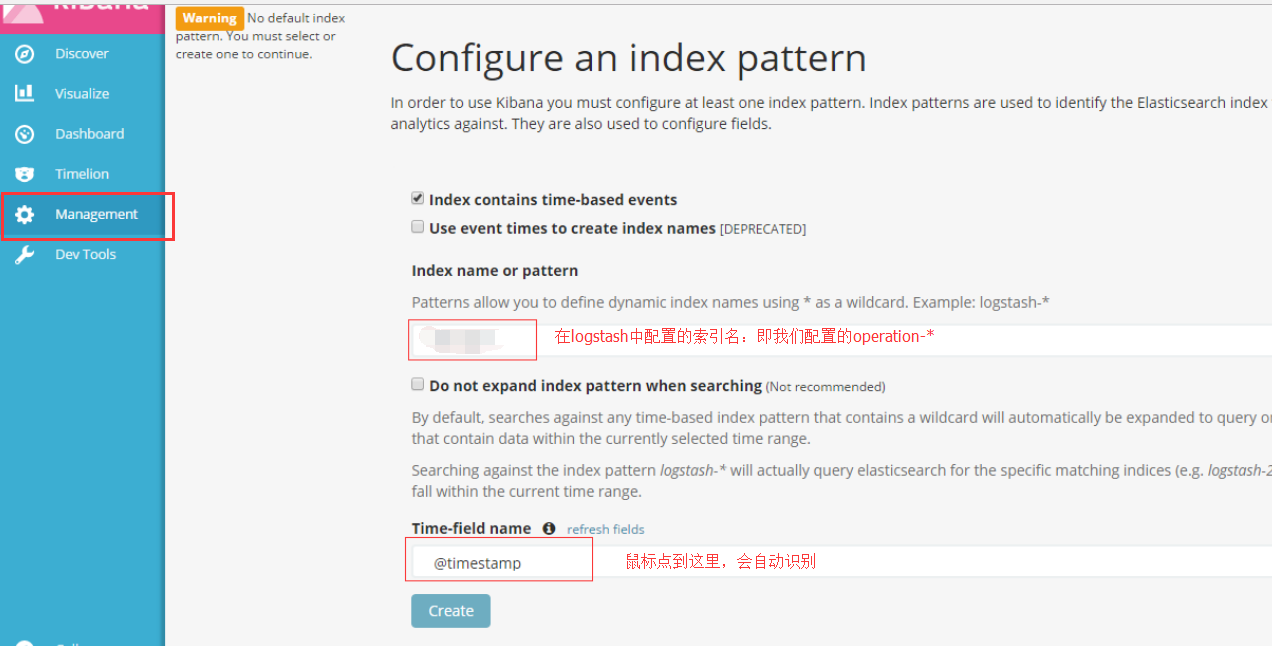

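4. Open x.x.x.x:5601 in a browser and configure the index pattern as shown in the screenshot. Since the Logstash output writes to operation-%{+YYYY.MM.dd}, a pattern such as operation-* matches the daily indices.

VII. Writing logs to Logstash with Logback

1. Create a new Spring Boot project and add the dependency needed to send logs to Logstash:

    <!-- logstash -->
    <dependency>
        <groupId>net.logstash.logback</groupId>
        <artifactId>logstash-logback-encoder</artifactId>
        <version>4.11</version>
    </dependency>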

2. Add a logback.xml file with the following content:

    <!-- Logback configuration. See http://logback.qos.ch/manual/index.html -->
    <configuration scan="true" scanPeriod="10 seconds">
        <include resource="org/springframework/boot/logging/logback/base.xml" />

        <appender name="INFO_FILE" class="ch.qos.logback.core.rolling.RollingFileAppender">
            <File>${LOG_PATH}/info.log</File>
            <rollingPolicy class="ch.qos.logback.core.rolling.TimeBasedRollingPolicy">
                <fileNamePattern>${LOG_PATH}/info-%d{yyyyMMdd}.log.%i</fileNamePattern>
                <timeBasedFileNamingAndTriggeringPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedFNATP">
                    <maxFileSize>500MB</maxFileSize>
                </timeBasedFileNamingAndTriggeringPolicy>
                <maxHistory>2</maxHistory>
            </rollingPolicy>
            <layout class="ch.qos.logback.classic.PatternLayout">
                <Pattern>%d{yyyy-MM-dd HH:mm:ss.SSS} [%thread] %-5level %logger{36} -%msg%n</Pattern>
            </layout>
        </appender>

        <appender name="ERROR_FILE" class="ch.qos.logback.core.rolling.RollingFileAppender">
            <filter class="ch.qos.logback.classic.filter.ThresholdFilter">
                <level>ERROR</level>
            </filter>
            <File>${LOG_PATH}/error.log</File>
            <rollingPolicy class="ch.qos.logback.core.rolling.TimeBasedRollingPolicy">
                <fileNamePattern>${LOG_PATH}/error-%d{yyyyMMdd}.log.%i</fileNamePattern>
                <timeBasedFileNamingAndTriggeringPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedFNATP">
                    <maxFileSize>500MB</maxFileSize>
                </timeBasedFileNamingAndTriggeringPolicy>
                <maxHistory>2</maxHistory>
            </rollingPolicy>
            <layout class="ch.qos.logback.classic.PatternLayout">
                <Pattern>%d{yyyy-MM-dd HH:mm:ss.SSS} [%thread] %-5level %logger{36} -%msg%n</Pattern>
            </layout>
        </appender>

        <appender name="STDOUT" class="ch.qos.logback.core.ConsoleAppender">
            <encoder>
                <pattern>%d{HH:mm:ss.SSS} [%thread] %-5level %logger{36} - %msg%n</pattern>
            </encoder>
        </appender>

        <!-- IP and port of the Logstash tcp input -->
        <appender name="LOGSTASH" class="net.logstash.logback.appender.LogstashTcpSocketAppender">
            <destination>192.168.1.66:4567</destination>
            <encoder charset="UTF-8" class="net.logstash.logback.encoder.LogstashEncoder" />
        </appender>

        <root level="INFO">
            <appender-ref ref="INFO_FILE" />
            <appender-ref ref="ERROR_FILE" />
            <appender-ref ref="STDOUT" />
            <!-- write logs to Logstash -->
            <appender-ref ref="LOGSTASH" />
        </root>

        <logger name="org.springframework.boot" level="INFO"/>
    </configuration>

3. In application.properties, point Spring Boot at the logback.xml file we just added:

# logging

logging.config=classpath:logback.xml

logging.path=/data/springboot-log

4. Create a test class like the following:

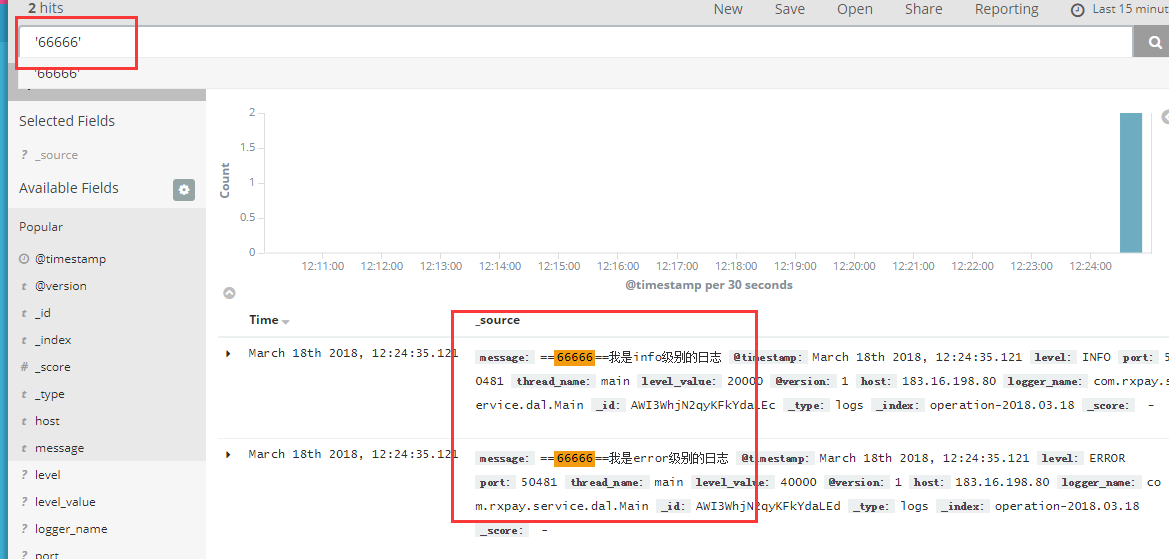

    import org.junit.Test;
    import org.junit.runner.RunWith;
    import org.slf4j.Logger;
    import org.slf4j.LoggerFactory;
    import org.springframework.boot.test.context.SpringBootTest;
    import org.springframework.test.context.junit4.SpringRunner;

    @RunWith(SpringRunner.class)
    @SpringBootTest
    public class LogTest {

        // Logger named after this test class
        public static final Logger logger = LoggerFactory.getLogger(LogTest.class);

        @Test
        public void testLog() {
            // The ==66666== marker makes these two events easy to find in Kibana
            logger.info("==66666=={}", "this is an info-level log");
            logger.error("==66666=={}", "this is an error-level log");
        }
    }

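Open 192.168.1.66:5601 in a browser, select Discover in the left-hand menu, type '66666' into the search box shown in the screenshot, and press Enter. (A note on quoting: in the Lucene query syntax that Kibana uses, double quotes request an exact phrase match; without them the input is matched as ordinary analyzed terms.) If the results include the log lines that LogTest just wrote, Logback is successfully writing logs to Logstash.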
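The same check can be made without Kibana by querying Elasticsearch directly. The sketch below uses the URI search form (_search?q=...) against the daily operation-* indices written by the Logstash output. The function names search_url and search_logs are hypothetical, and the URL builder assumes the query term needs no URL encoding, which holds for a plain marker such as 66666.

```shell
#!/bin/sh
# Hypothetical helper: build a URI-search URL for the daily indices that
# the Logstash output writes (operation-YYYY.MM.dd).
# Usage: search_url host:port query
search_url() {
    printf 'http://%s/operation-*/_search?q=%s&pretty\n' "$1" "$2"
}

# Run the search with curl; host:port defaults to the one in this guide.
# Usage: search_logs query [host:port]
search_logs() {
    curl -s "$(search_url "${2:-192.168.1.66:9200}" "$1")"
}

# Example (not executed here):
# search_logs 66666
```

If the test events reached Elasticsearch, the response should list them under hits. An empty result usually points back at the Logstash input or at the destination configured in logback.xml.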