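Goal: use the Scrapy framework to crawl every top-level category and subcategory on the Sina news navigation page, the article links inside each subcategory, and the news content of each linked page, then save the results to local disk.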

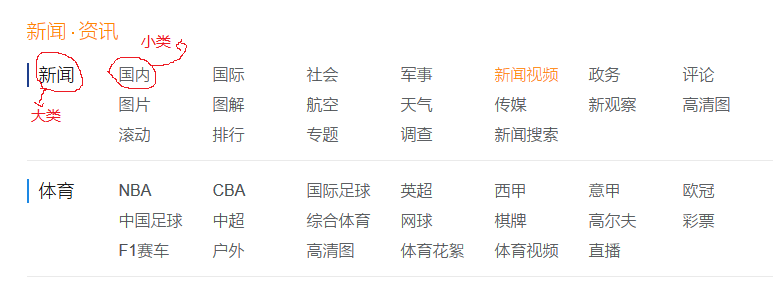

The top-level categories and subcategories are shown below:

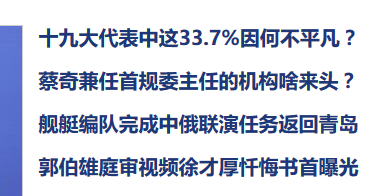

Clicking the 国内 (Domestic) subcategory opens the following page (partial screenshot):

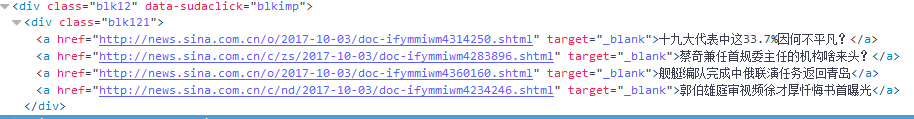

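Inspecting the page elements gives the article links inside the subcategory, as shown below:

Once we have the article links, we can send requests to fetch the content of each news page.

First, create the Scrapy project: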

    # Create the project
    scrapy startproject sinaNews

    # Create the spider (run from inside the sinaNews project directory)
    scrapy genspider sina "sina.com.cn"

Step 1. Create the item file for the fields to be scraped:

    # -*- coding: utf-8 -*-
    import scrapy


    class SinanewsItem(scrapy.Item):
        # Title and URL of the top-level category
        parentTitle = scrapy.Field()
        parentUrls = scrapy.Field()

        # Title and URL of the subcategory
        subTitle = scrapy.Field()
        subUrls = scrapy.Field()

        # Directory path where the subcategory's articles are stored
        subFilename = scrapy.Field()

        # An article link found on the subcategory page
        sonUrls = scrapy.Field()

        # Article headline and body text
        head = scrapy.Field()
        content = scrapy.Field()

Step 2. Write the spider file:

    # -*- coding: utf-8 -*-
    import os

    import scrapy

    from sinaNews.items import SinanewsItem


    class SinaSpider(scrapy.Spider):
        name = "sina"
        allowed_domains = ["sina.com.cn"]
        start_urls = ['http://news.sina.com.cn/guide/']

        def parse(self, response):
            items = []

            # URLs and titles of all top-level categories
            parentUrls = response.xpath('//div[@id="tab01"]/div/h3/a/@href').extract()
            parentTitle = response.xpath('//div[@id="tab01"]/div/h3/a/text()').extract()

            # URLs and titles of all subcategories
            subUrls = response.xpath('//div[@id="tab01"]/div/ul/li/a/@href').extract()
            subTitle = response.xpath('//div[@id="tab01"]/div/ul/li/a/text()').extract()

            # Walk through every top-level category
            for i in range(0, len(parentTitle)):
                # Directory path and name for this top-level category
                parentFilename = "./Data/" + parentTitle[i]

                # Create the directory if it does not exist
                if not os.path.exists(parentFilename):
                    os.makedirs(parentFilename)

                # Walk through every subcategory
                for j in range(0, len(subUrls)):
                    item = SinanewsItem()

                    # Store the top-level category's title and URL
                    item['parentTitle'] = parentTitle[i]
                    item['parentUrls'] = parentUrls[i]

                    # True if the subcategory URL starts with the URL of this
                    # top-level category (e.g. sports.sina.com.cn and
                    # sports.sina.com.cn/nba)
                    if_belong = subUrls[j].startswith(item['parentUrls'])

                    # If it belongs here, store it under this category's directory
                    if if_belong:
                        subFilename = parentFilename + '/' + subTitle[j]

                        # Create the directory if it does not exist
                        if not os.path.exists(subFilename):
                            os.makedirs(subFilename)

                        # Store the subcategory's URL, title and directory path
                        item['subUrls'] = subUrls[j]
                        item['subTitle'] = subTitle[j]
                        item['subFilename'] = subFilename

                        items.append(item)

            # Request every subcategory URL; the response, together with the
            # item carried in meta, is handed to the second_parse callback
            for item in items:
                yield scrapy.Request(url=item['subUrls'], meta={'meta_1': item}, callback=self.second_parse)

        # Handle each subcategory page and request the article links it contains
        def second_parse(self, response):
            # Retrieve the item carried in the meta of this response
            meta_1 = response.meta['meta_1']

            # Collect every link on the subcategory page
            sonUrls = response.xpath('//a/@href').extract()

            items = []
            for i in range(0, len(sonUrls)):
                # True if the link starts with the top-level category URL
                # and ends with .shtml
                if_belong = sonUrls[i].endswith('.shtml') and sonUrls[i].startswith(meta_1['parentUrls'])

                # If it belongs to this category, copy the fields into a single
                # item so they travel together
                if if_belong:
                    item = SinanewsItem()
                    item['parentTitle'] = meta_1['parentTitle']
                    item['parentUrls'] = meta_1['parentUrls']
                    item['subUrls'] = meta_1['subUrls']
                    item['subTitle'] = meta_1['subTitle']
                    item['subFilename'] = meta_1['subFilename']
                    item['sonUrls'] = sonUrls[i]
                    items.append(item)

            # Request every article link; the response, together with the
            # item carried in meta, is handed to the detail_parse callback
            for item in items:
                yield scrapy.Request(url=item['sonUrls'], meta={'meta_2': item}, callback=self.detail_parse)

        # Parse an article page and extract its headline and body text
        def detail_parse(self, response):
            item = response.meta['meta_2']
            head = response.xpath('//h1[@id="main_title"]/text()').extract_first()
            content_list = response.xpath('//div[@id="artibody"]/p/text()').extract()

            # Join the text of all <p> tags into one string
            content = "".join(content_list)

            item['head'] = head
            item['content'] = content

            yield item
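The two `if_belong` checks in the spider are plain string-prefix and string-suffix tests, so they can be tried out without running a crawl. The sketch below is only an illustration: the helper names `group_by_parent` and `article_links` and all sample URLs are hypothetical and do not appear in the project.

```python
def group_by_parent(parent_urls, sub_urls):
    """Map each parent URL to the subcategory URLs that start with it."""
    return {
        parent: [sub for sub in sub_urls if sub.startswith(parent)]
        for parent in parent_urls
    }


def article_links(hrefs, parent_url):
    """Keep only links that start with parent_url and end with .shtml."""
    return [
        href for href in hrefs
        if href.startswith(parent_url) and href.endswith(".shtml")
    ]


# Hypothetical sample data, for illustration only
parents = ["http://sports.sina.com.cn/", "http://news.sina.com.cn/"]
subs = [
    "http://sports.sina.com.cn/nba/",
    "http://news.sina.com.cn/china/",
    "http://sports.sina.com.cn/csl/",
]
grouped = group_by_parent(parents, subs)

hrefs = [
    "http://sports.sina.com.cn/nba/2017/doc-example.shtml",
    "http://sports.sina.com.cn/nba/",           # not an article page
    "http://blog.sina.com.cn/some-post.shtml",  # different category
    "javascript:void(0)",                       # not a URL at all
]
links = article_links(hrefs, "http://sports.sina.com.cn/")
```

Note that a prefix test like this silently drops relative links and links hosted on a different subdomain, which is the same trade-off the spider makes.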

Step 3. Write the pipelines file:

    # -*- coding: utf-8 -*-


    class SinanewsPipeline(object):
        def process_item(self, item, spider):
            sonUrls = item['sonUrls']

            # The file name is the middle part of the article URL (without the
            # leading "http://" and the trailing ".shtml"), with / replaced
            # by _, saved as a .txt file
            filename = sonUrls[7:-6].replace('/', '_')
            filename += ".txt"

            # Write the article body into the subcategory's directory
            with open(item['subFilename'] + '/' + filename, 'w', encoding='utf-8') as fp:
                fp.write(item['content'])

            return item
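The slice `[7:-6]` in the pipeline assumes that every article URL begins with `http://` (7 characters) and ends with `.shtml` (6 characters). The sketch below reproduces that rule as a standalone function and, as a hypothetical alternative that is not part of the original project, derives the same name with `urllib.parse.urlparse` so that the result does not depend on the length of the scheme. The function names and the sample URL are made up for illustration.

```python
from urllib.parse import urlparse


def filename_by_slice(url):
    """Same rule as the pipeline: drop 'http://' and '.shtml' by position."""
    return url[7:-6].replace("/", "_") + ".txt"


def filename_by_parse(url):
    """Hypothetical variant: build the name from host and path, any scheme."""
    parts = urlparse(url)
    path = parts.path
    if path.endswith(".shtml"):
        path = path[: -len(".shtml")]
    return (parts.netloc + path).replace("/", "_") + ".txt"


# Hypothetical article URL, for illustration only
url = "http://news.sina.com.cn/china/2017-01-01/doc-abc123.shtml"
name_slice = filename_by_slice(url)
name_parse = filename_by_parse(url)
name_https = filename_by_parse("https" + url[4:])  # same page over https
```

For an `http://` URL both functions agree; the parsed variant also yields the same name when the link uses `https://`.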

Step 4. Configure the settings file:

    # Enable the item pipeline
    ITEM_PIPELINES = {
        'sinaNews.pipelines.SinanewsPipeline': 300,
    }

Run the spider:

    scrapy crawl sina

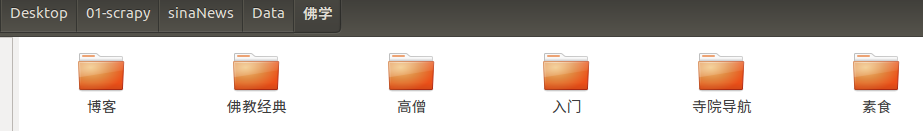

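The result looks like this:

Open the Data directory under the working directory to see the top-level category folders.

Open a top-level category folder to see its subcategory folders:

Open a subcategory folder to see the saved articles: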
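Instead of opening folders by hand, the saved output can also be summarized with a few lines of Python. This is a minimal sketch that assumes the `Data/<category>/<subcategory>/*.txt` layout produced above; the helper name `count_articles` is hypothetical, and the example builds a small stand-in tree in a temporary directory rather than reading real crawl output.

```python
import os
import tempfile


def count_articles(root):
    """Return {directory relative to root: number of .txt files in it}."""
    counts = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        txt_files = [name for name in filenames if name.endswith(".txt")]
        if txt_files:
            counts[os.path.relpath(dirpath, root)] = len(txt_files)
    return counts


# Build a tiny stand-in tree with hypothetical folder and file names
with tempfile.TemporaryDirectory() as root:
    sub_dir = os.path.join(root, "news", "domestic")
    os.makedirs(sub_dir)
    for name in ("a.txt", "b.txt"):
        with open(os.path.join(sub_dir, name), "w", encoding="utf-8") as fp:
            fp.write("sample")
    summary = count_articles(root)
```

After a real crawl, calling `count_articles("./Data")` from the working directory would give the number of articles saved per subcategory folder.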