3.8.1 Hidden Layers

3.8.2 Activation Functions

import torch

import matplotlib.pylab as plt

import numpy as np

import xiaobei_pytorch as xb

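def xyplot(x_vals, y_vals, name):
    # Plot y_vals against x_vals; detach() drops autograd tracking so the tensors can be converted to NumPy
    xb.set_figsize(figsize=(5, 2.5))
    xb.plt.plot(x_vals.detach().numpy(), y_vals.detach().numpy())
    xb.plt.xlabel('x')
    # The y-axis label is name + '(x)', so pass the bare function name
    xb.plt.ylabel(name + '(x)')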

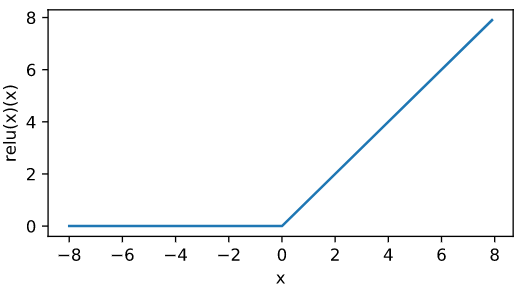

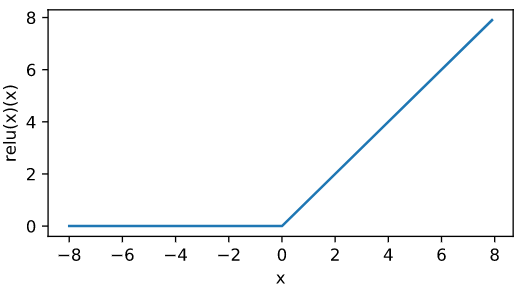

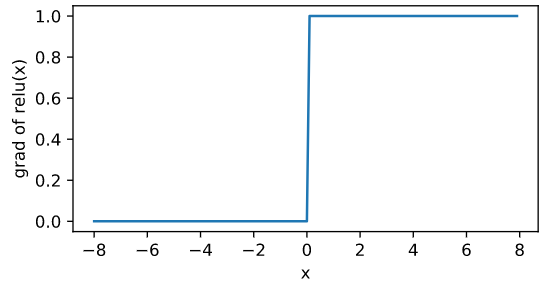

ReLU function

x = torch.arange(-8,8,0.1,requires_grad=True)

y = x.relu()

xyplot(x, y, 'relu')  # xyplot appends '(x)' to the name itself

# print(y)

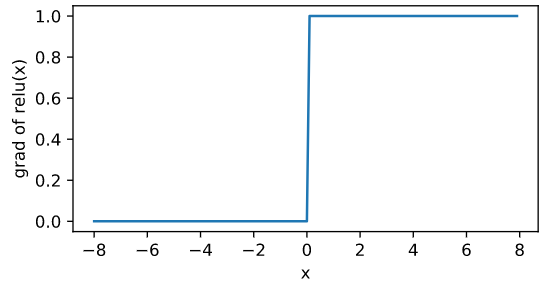

# backward() without arguments only works on a scalar, so reduce y with sum() first; summing does not change the per-element gradients

y.sum().backward()

xyplot(x,x.grad,'grad of relu')

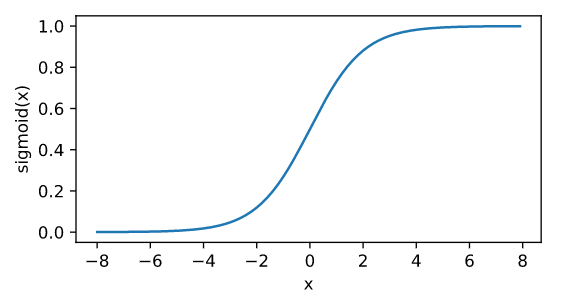

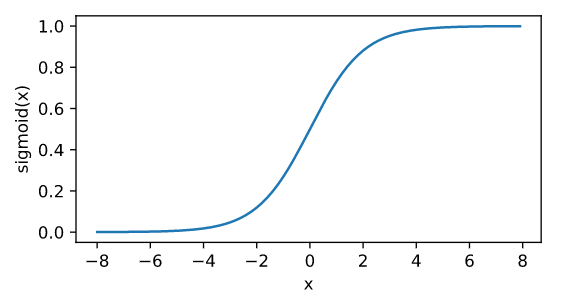

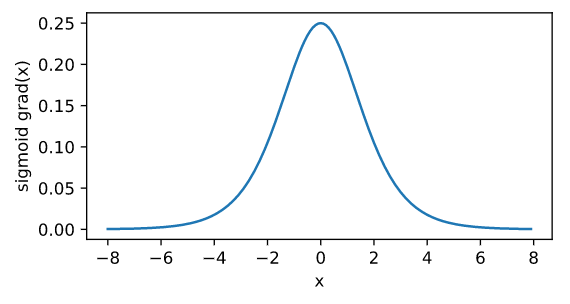
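The claim that summing does not change the gradient can be checked on a small input. The sketch below is only an illustration; the four-element tensor is an assumption made for the example and is not part of the notes above. It compares `y.sum().backward()` with passing a vector of ones to `backward()`, which should give the same result because each `y[i]` depends only on `x[i]`.

```python
import torch

# Small illustrative input; 0 is left out because ReLU is not differentiable there.
x = torch.tensor([-2.0, -0.5, 0.5, 2.0], requires_grad=True)

# Route 1: reduce to a scalar with sum(), then call backward().
y = x.relu()
y.sum().backward()
grad_from_sum = x.grad.clone()

# Route 2: keep y as a vector and pass ones as the upstream gradient.
x.grad.zero_()   # clear the gradient stored by route 1
y = x.relu()     # rebuild the graph; the first backward() freed it
y.backward(torch.ones_like(y))
grad_from_ones = x.grad.clone()

# Both routes give the elementwise ReLU derivative: 0 for x < 0, 1 for x > 0.
print(grad_from_sum)
print(grad_from_ones)
```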

Sigmoid function

- $\text{sigmoid}(x) = \frac{1}{1+\exp(-x)}$

y = x.sigmoid()

xyplot(x,y,'sigmoid')

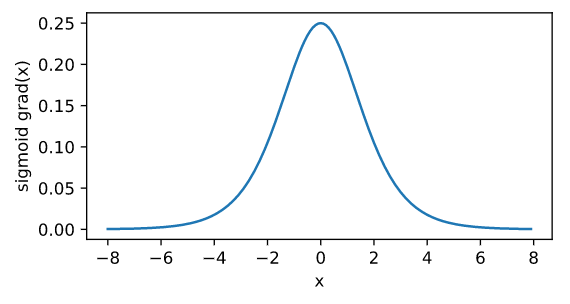

x.grad.zero_()  # zero the previous gradient first; otherwise gradients accumulate

y.sum().backward()

xyplot(x,x.grad,'sigmoid grad')

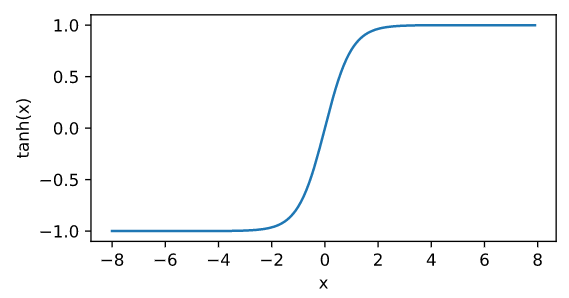

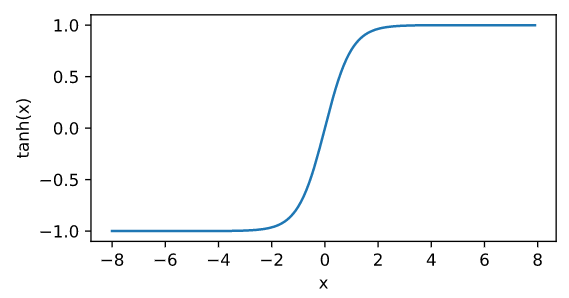
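Two points from this part can be verified numerically: the sigmoid gradient has the closed form $\text{sigmoid}(x)(1-\text{sigmoid}(x))$, and `x.grad` accumulates across `backward()` calls unless it is zeroed. The following is a minimal sketch under the assumption of a three-element input chosen only for illustration.

```python
import torch

x = torch.tensor([-2.0, 0.0, 3.0], requires_grad=True)

# First pass: gradient of sigmoid computed by autograd.
x.sigmoid().sum().backward()
first = x.grad.clone()

# Closed form for comparison: sigmoid'(x) = sigmoid(x) * (1 - sigmoid(x)).
with torch.no_grad():
    s = x.sigmoid()
    closed_form = s * (1 - s)

# Second pass WITHOUT zeroing: the new gradient is added to the stored one.
x.sigmoid().sum().backward()
accumulated = x.grad.clone()

# Zero in place, then a third pass gives the plain gradient again.
x.grad.zero_()
x.sigmoid().sum().backward()
after_zero = x.grad.clone()

print(first, accumulated, after_zero)
```

Here `accumulated` should be twice `first`, which is why the notes call `x.grad.zero_()` before each new `backward()`.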

Tanh function

- $\tanh(x) = \frac{1-\exp(-2x)}{1+\exp(-2x)}$

y = x.tanh()

xyplot(x,y,'tanh')

x.grad.zero_()

y.sum().backward()

xyplot(x,x.grad,'tanh grad')
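The tanh formula and the shape of its gradient curve can also be sanity-checked without PyTorch. This sketch uses only the standard library; the helper names `tanh_from_exp`, `tanh_grad`, and `central_difference` are hypothetical and introduced here for illustration. It compares the exponential form with `math.tanh`, and the known derivative $1-\tanh^2(x)$ with a finite-difference estimate.

```python
import math

def tanh_from_exp(x: float) -> float:
    """tanh(x) written as (1 - exp(-2x)) / (1 + exp(-2x))."""
    e = math.exp(-2.0 * x)
    return (1.0 - e) / (1.0 + e)

def tanh_grad(x: float) -> float:
    """Closed-form derivative of tanh: 1 - tanh(x)^2."""
    return 1.0 - math.tanh(x) ** 2

def central_difference(f, x: float, h: float = 1e-5) -> float:
    """Numerical derivative estimate (f(x+h) - f(x-h)) / (2h)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)

for x in (-2.0, -0.5, 0.0, 0.5, 2.0):
    # The exponential form agrees with the library implementation.
    assert math.isclose(tanh_from_exp(x), math.tanh(x), abs_tol=1e-12)
    # The closed-form gradient agrees with the numerical estimate.
    assert math.isclose(tanh_grad(x), central_difference(math.tanh, x), abs_tol=1e-8)
```

The gradient peaks at 1 when $x = 0$ and decays toward 0 as $|x|$ grows, which matches the bell-shaped curve that `xyplot(x, x.grad, 'tanh grad')` draws.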

3.8.3 Multilayer Perceptron

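- The number of hidden layers and the number of hidden units in each hidden layer are both hyperparameters of a multilayer perceptron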

- Classification: apply softmax to the outputs and use the cross-entropy loss

- Regression: the output layer has a single output; use the squared loss
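The points above can be sketched with `torch.nn`. The layer sizes, batch size, and the helper `make_mlp` below are assumptions chosen for illustration, not values from these notes. Note that `nn.CrossEntropyLoss` applies log-softmax internally, so the network outputs raw scores, and that `nn.MSELoss` is the mean squared error without the factor of 1/2 that some textbook definitions of squared loss include.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Hypothetical sizes; num_hiddens (and the number of hidden layers) are hyperparameters.
num_inputs, num_hiddens, num_classes = 20, 16, 3
batch_size = 8

def make_mlp(num_outputs: int) -> nn.Sequential:
    """One hidden layer with ReLU, followed by a linear output layer."""
    return nn.Sequential(
        nn.Linear(num_inputs, num_hiddens),
        nn.ReLU(),
        nn.Linear(num_hiddens, num_outputs),
    )

X = torch.randn(batch_size, num_inputs)

# Classification: one output per class, softmax + cross-entropy loss.
clf = make_mlp(num_classes)
labels = torch.randint(0, num_classes, (batch_size,))
logits = clf(X)
clf_loss = nn.CrossEntropyLoss()(logits, labels)

# Regression: a single output, squared-error loss.
reg = make_mlp(1)
targets = torch.randn(batch_size, 1)
preds = reg(X)
reg_loss = nn.MSELoss()(preds, targets)

print(logits.shape, clf_loss.item())
print(preds.shape, reg_loss.item())
```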