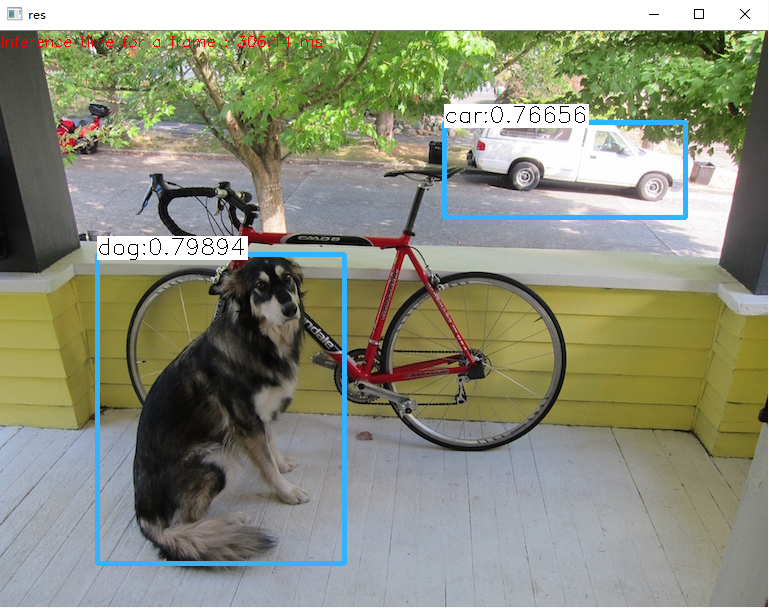

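Since version 3.4, OpenCV has supported darknet models, so YOLO can be run directly through OpenCV. Running on the same CPU, the speedup is reported to be more than tenfold over darknet itself; see the official OpenCV website for details.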

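This post mainly tests the YOLOv2 model. The `thresh` value in the final region section of yolov2-tiny-voc.cfg needs to be set lower. Otherwise, detections scoring below that threshold are filtered out before your code sees them, and setting a lower threshold in the code has no effect.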
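To check which threshold a given cfg file will impose before running anything, the value can be read straight from the file. The following is a minimal sketch using only the standard library. The helpers `trim`, `regionThresh`, `regionThreshFromText` and `effectiveThreshold` are hypothetical names made up for this illustration, not OpenCV or darknet APIs, and the parser only handles plain `key = value` lines.

```cpp
#include <cassert>
#include <cctype>
#include <cstddef>
#include <istream>
#include <sstream>
#include <string>

// Hypothetical helper: strips leading and trailing whitespace (including '\r').
static std::string trim(const std::string& s) {
    std::size_t b = 0, e = s.size();
    while (b < e && std::isspace(static_cast<unsigned char>(s[b]))) ++b;
    while (e > b && std::isspace(static_cast<unsigned char>(s[e - 1]))) --e;
    return s.substr(b, e - b);
}

// Hypothetical helper: scans darknet-style cfg text and returns the `thresh`
// value of the last [region] section, or -1 if no such value is present.
float regionThresh(std::istream& cfg) {
    std::string line, section;
    float thresh = -1.0f;
    while (std::getline(cfg, line)) {
        line = trim(line);
        if (line.empty() || line[0] == '#') continue;      // blank line or comment
        if (line[0] == '[') { section = line; continue; }  // section header
        std::size_t eq = line.find('=');
        if (section == "[region]" && eq != std::string::npos &&
            trim(line.substr(0, eq)) == "thresh") {
            thresh = std::stof(trim(line.substr(eq + 1)));
        }
    }
    return thresh;
}

// Convenience overload for cfg content that is already in memory.
float regionThreshFromText(const std::string& text) {
    std::istringstream in(text);
    return regionThresh(in);
}

// Illustration of the point above: a code-side threshold only takes effect
// when it is stricter than the one in the cfg file.
float effectiveThreshold(float cfgThresh, float codeThresh) {
    return codeThresh > cfgThresh ? codeThresh : cfgThresh;
}
```

For a real file, open it with `std::ifstream` and pass the stream to `regionThresh`. If the returned value is higher than the threshold you plan to use in code, lower it in the cfg first.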

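YOLOv3 models run as well, but during testing I could not find a confidence-threshold setting in the cfg file. I don't need YOLOv3 for now, so I left it at that. Pointers from anyone who knows are welcome.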

The tests also showed that the box scores differ from the ones darknet produces. I don't know why; see the related question on the OpenCV Q&A forum.

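There are already plenty of tutorials online, so here is just a simple demo. The code was copied from GitHub and only tidied up; once the environment is set up, it should run as is.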

Environment:

- Windows 10

- OpenCV 4.0 (prebuilt binaries)

- Visual Studio 2015

```cpp
#include <fstream>
#include <sstream>
#include <iostream>
#include <cmath>
#include <io.h>      // _access (Windows only)
#include <opencv2/dnn.hpp>
#include <opencv2/imgproc.hpp>
#include <opencv2/highgui.hpp>
#include <vector>

using namespace std;
using namespace cv;
using namespace dnn;

vector<string> classes;

vector<String> getOutputsNames(Net& net)
{
    static vector<String> names;
    if (names.empty())
    {
        // Get the indices of the output layers, i.e. the layers with unconnected outputs
        vector<int> outLayers = net.getUnconnectedOutLayers();

        // Get the names of all the layers in the network
        vector<String> layersNames = net.getLayerNames();

        // Get the names of the output layers in names
        names.resize(outLayers.size());
        for (size_t i = 0; i < outLayers.size(); ++i)
            names[i] = layersNames[outLayers[i] - 1];
    }
    return names;
}

void drawPred(int classId, float conf, int left, int top, int right, int bottom, Mat& frame)
{
    // Draw a rectangle displaying the bounding box
    rectangle(frame, Point(left, top), Point(right, bottom), Scalar(255, 178, 50), 3);

    // Get the label for the class name and its confidence
    string label = format("%.5f", conf);
    if (!classes.empty())
    {
        CV_Assert(classId < (int)classes.size());
        label = classes[classId] + ":" + label;
    }

    // Display the label at the top of the bounding box
    int baseLine;
    Size labelSize = getTextSize(label, FONT_HERSHEY_SIMPLEX, 0.5, 1, &baseLine);
    top = max(top, labelSize.height);
    rectangle(frame, Point(left, top - round(1.5 * labelSize.height)),
              Point(left + round(1.5 * labelSize.width), top + baseLine),
              Scalar(255, 255, 255), FILLED);
    putText(frame, label, Point(left, top), FONT_HERSHEY_SIMPLEX, 0.75, Scalar(0, 0, 0), 1);
}

void postprocess(Mat& frame, const vector<Mat>& outs, float confThreshold, float nmsThreshold)
{
    vector<int> classIds;
    vector<float> confidences;
    vector<Rect> boxes;

    for (size_t i = 0; i < outs.size(); ++i)
    {
        // Scan through all the bounding boxes output from the network and keep only the
        // ones with high confidence scores. Assign the box's class label as the class
        // with the highest score for the box.
        float* data = (float*)outs[i].data;
        for (int j = 0; j < outs[i].rows; ++j, data += outs[i].cols)
        {
            Mat scores = outs[i].row(j).colRange(5, outs[i].cols);
            Point classIdPoint;
            double confidence;
            // Get the value and location of the maximum score
            minMaxLoc(scores, 0, &confidence, 0, &classIdPoint);
            if (confidence > confThreshold)
            {
                int centerX = (int)(data[0] * frame.cols);
                int centerY = (int)(data[1] * frame.rows);
                int width = (int)(data[2] * frame.cols);
                int height = (int)(data[3] * frame.rows);
                int left = centerX - width / 2;
                int top = centerY - height / 2;

                classIds.push_back(classIdPoint.x);
                confidences.push_back((float)confidence);
                boxes.push_back(Rect(left, top, width, height));
            }
        }
    }

    // Perform non maximum suppression to eliminate redundant overlapping boxes with
    // lower confidences
    vector<int> indices;
    NMSBoxes(boxes, confidences, confThreshold, nmsThreshold, indices);
    for (size_t i = 0; i < indices.size(); ++i)
    {
        int idx = indices[i];
        Rect box = boxes[idx];
        drawPred(classIds[idx], confidences[idx], box.x, box.y,
                 box.x + box.width, box.y + box.height, frame);
    }
}

int main()
{
    // Backslashes in Windows paths must be escaped inside string literals
    string names_file = "E:\\programing\\VSProject\\object_detect_opencv\\model\\yolov2_tiny\\voc.names";
    String model_def = "E:\\programing\\VSProject\\object_detect_opencv\\model\\yolov2_tiny\\yolov2-tiny-voc.cfg";
    String weights = "E:\\programing\\VSProject\\object_detect_opencv\\model\\yolov2_tiny\\yolov2-tiny-voc.weights";

    int in_w, in_h;
    double thresh = 0.35;
    double nms_thresh = 0.25;
    in_w = in_h = 416;

    string img_path = "E:\\programing\\VSProject\\object_detect_opencv\\test_images\\dog.jpg";

    // Read class names, one per line
    ifstream ifs(names_file.c_str());
    string line;
    while (getline(ifs, line)) classes.push_back(line);

    // Init model
    Net net = readNetFromDarknet(model_def, weights);
    net.setPreferableBackend(DNN_BACKEND_OPENCV);
    net.setPreferableTarget(DNN_TARGET_CPU);

    // Read image and forward
    Mat frame, blob;
    if ((_access(img_path.c_str(), 0)) == -1)
    {
        cerr << "file: " << img_path.c_str() << " not exist" << endl;
        return -1;
    }
    frame = imread(img_path);

    // Create a 4D blob from a frame.
    blobFromImage(frame, blob, 1 / 255.0, Size(in_w, in_h), Scalar(), true, false);

    // Recover the preprocessed image from the blob (not used below)
    vector<Mat> mat_blob;
    imagesFromBlob(blob, mat_blob);

    // Sets the input to the network
    net.setInput(blob);

    // Runs the forward pass to get output of the output layers
    vector<Mat> outs;
    net.forward(outs, getOutputsNames(net));

    postprocess(frame, outs, thresh, nms_thresh);

    // Report the inference time on the image
    vector<double> layersTimes;
    double freq = getTickFrequency() / 1000;
    double t = net.getPerfProfile(layersTimes) / freq;
    string label = format("Inference time for a frame : %.2f ms", t);
    putText(frame, label, Point(0, 15), FONT_HERSHEY_SIMPLEX, 0.5, Scalar(0, 0, 255));

    imshow("res", frame);
    waitKey(0);
    return 0;
}
```
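The box decoding inside `postprocess` is easier to follow in isolation. Below is a minimal sketch of that one step without any OpenCV dependency, assuming each output row is laid out as `[centerX, centerY, width, height, objectness, class scores...]` with box values normalized to the image size, which is how the demo reads it. `Detection` and `decodeRow` are hypothetical names introduced only for this illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical plain struct standing in for cv::Rect plus class metadata.
struct Detection {
    int classId;
    float confidence;
    int left, top, width, height;  // pixel coordinates in the original image
};

// Decodes one output row laid out as
//   [centerX, centerY, width, height, objectness, score_0, score_1, ...]
// Returns false when the best class score does not exceed confThreshold.
bool decodeRow(const std::vector<float>& row, int imgW, int imgH,
               float confThreshold, Detection& out) {
    assert(row.size() > 5);  // need at least one class score

    // Best class score among columns 5..end, the same range the demo
    // selects with colRange(5, cols).
    std::size_t best = 5;
    for (std::size_t k = 6; k < row.size(); ++k)
        if (row[k] > row[best]) best = k;
    if (!(row[best] > confThreshold)) return false;

    // Scale the normalized center/size to pixels, then convert the
    // center-based box to a top-left based one.
    int centerX = static_cast<int>(row[0] * imgW);
    int centerY = static_cast<int>(row[1] * imgH);
    out.width = static_cast<int>(row[2] * imgW);
    out.height = static_cast<int>(row[3] * imgH);
    out.left = centerX - out.width / 2;
    out.top = centerY - out.height / 2;
    out.classId = static_cast<int>(best - 5);
    out.confidence = row[best];
    return true;
}
```

As in the demo, the objectness value in column 4 is not used for filtering here; only the per-class scores are compared against the threshold.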