Problem description:

Storm version: 1.2.2; Kafka version: 2.11.

While using Storm to consume data from Kafka, the following error occurred.

    [root@node01 jars]# /opt/storm-1.2.2/bin/storm jar MyProject-1.0-SNAPSHOT-jar-with-dependencies.jar com.suhaha.storm.storm122_kafka211_demo02.KafkaTopoDemo stormkafka
    SLF4J: Class path contains multiple SLF4J bindings.
    SLF4J: Found binding in [jar:file:/opt/storm-1.2.2/lib/log4j-slf4j-impl-2.8.2.jar!/org/slf4j/impl/StaticLoggerBinder.class]
    SLF4J: Found binding in [jar:file:/data/jars/MyProject-1.0-SNAPSHOT-jar-with-dependencies.jar!/org/slf4j/impl/StaticLoggerBinder.class]
    SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
    SLF4J: Actual binding is of type [org.apache.logging.slf4j.Log4jLoggerFactory]
    Running: /usr/java/jdk1.8.0_181/bin/java -client -Ddaemon.name= -Dstorm.options= -Dstorm.home=/opt/storm-1.2.2 -Dstorm.log.dir=/opt/storm-1.2.2/logs -Djava.library.path=/usr/local/lib:/opt/local/lib:/usr/lib -Dstorm.conf.file= -cp /opt/storm-1.2.2/*:/opt/storm-1.2.2/lib/*:/opt/storm-1.2.2/extlib/*:MyProject-1.0-SNAPSHOT-jar-with-dependencies.jar:/opt/storm-1.2.2/conf:/opt/storm-1.2.2/bin -Dstorm.jar=MyProject-1.0-SNAPSHOT-jar-with-dependencies.jar -Dstorm.dependency.jars= -Dstorm.dependency.artifacts={} com.suhaha.storm.storm122_kafka211_demo02.KafkaTopoDemo stormkafka
    SLF4J: Class path contains multiple SLF4J bindings.
    SLF4J: Found binding in [jar:file:/opt/storm-1.2.2/lib/log4j-slf4j-impl-2.8.2.jar!/org/slf4j/impl/StaticLoggerBinder.class]
    SLF4J: Found binding in [jar:file:/data/jars/MyProject-1.0-SNAPSHOT-jar-with-dependencies.jar!/org/slf4j/impl/StaticLoggerBinder.class]
    SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
    SLF4J: Actual binding is of type [org.apache.logging.slf4j.Log4jLoggerFactory]
    1329 [main] INFO o.a.s.k.s.KafkaSpoutConfig - Setting Kafka consumer property 'auto.offset.reset' to 'earliest' to ensure at-least-once processing
    1338 [main] INFO o.a.s.k.s.KafkaSpoutConfig - Setting Kafka consumer property 'enable.auto.commit' to 'false', because the spout does not support auto-commit
    【run on cluster】
    1617 [main] WARN o.a.s.u.Utils - STORM-VERSION new 1.2.2 old null
    1699 [main] INFO o.a.s.StormSubmitter - Generated ZooKeeper secret payload for MD5-digest: -9173161025072727826:-6858502481790933429
    1857 [main] WARN o.a.s.u.NimbusClient - Using deprecated config nimbus.host for backward compatibility. Please update your storm.yaml so it only has config nimbus.seeds
    1917 [main] INFO o.a.s.u.NimbusClient - Found leader nimbus : node01:6627
    1947 [main] INFO o.a.s.s.a.AuthUtils - Got AutoCreds []
    1948 [main] WARN o.a.s.u.NimbusClient - Using deprecated config nimbus.host for backward compatibility. Please update your storm.yaml so it only has config nimbus.seeds
    1950 [main] INFO o.a.s.u.NimbusClient - Found leader nimbus : node01:6627
    1998 [main] INFO o.a.s.StormSubmitter - Uploading dependencies - jars...
    1998 [main] INFO o.a.s.StormSubmitter - Uploading dependencies - artifacts...
    1998 [main] INFO o.a.s.StormSubmitter - Dependency Blob keys - jars : [] / artifacts : []
    2021 [main] INFO o.a.s.StormSubmitter - Uploading topology jar MyProject-1.0-SNAPSHOT-jar-with-dependencies.jar to assigned location: /var/storm/nimbus/inbox/stormjar-ce16c5f2-db05-4d0c-8c55-01512ed64ee7.jar
    3832 [main] INFO o.a.s.StormSubmitter - Successfully uploaded topology jar to assigned location: /var/storm/nimbus/inbox/stormjar-ce16c5f2-db05-4d0c-8c55-01512ed64ee7.jar
    3832 [main] INFO o.a.s.StormSubmitter - Submitting topology stormkafka in distributed mode with conf {"storm.zookeeper.topology.auth.scheme":"digest","storm.zookeeper.topology.auth.payload":"-9173161025072727826:-6858502481790933429","topology.workers":1,"topology.debug":true}
    3832 [main] WARN o.a.s.u.Utils - STORM-VERSION new 1.2.2 old 1.2.2
    5588 [main] WARN o.a.s.StormSubmitter - Topology submission exception: Component: [mybolt] subscribes from non-existent stream: [default] of component [kafka_spout]
    Exception in thread "main" java.lang.RuntimeException: InvalidTopologyException(msg:Component: [mybolt] subscribes from non-existent stream: [default] of component [kafka_spout])
        at org.apache.storm.StormSubmitter.submitTopologyAs(StormSubmitter.java:273)
        at org.apache.storm.StormSubmitter.submitTopology(StormSubmitter.java:387)
        at org.apache.storm.StormSubmitter.submitTopology(StormSubmitter.java:159)
        at com.suhaha.storm.storm122_kafka211_demo02.KafkaTopoDemo.main(KafkaTopoDemo.java:47)
    Caused by: InvalidTopologyException(msg:Component: [mybolt] subscribes from non-existent stream: [default] of component [kafka_spout])
        at org.apache.storm.generated.Nimbus$submitTopology_result$submitTopology_resultStandardScheme.read(Nimbus.java:8070)
        at org.apache.storm.generated.Nimbus$submitTopology_result$submitTopology_resultStandardScheme.read(Nimbus.java:8047)
        at org.apache.storm.generated.Nimbus$submitTopology_result.read(Nimbus.java:7981)
        at org.apache.storm.thrift.TServiceClient.receiveBase(TServiceClient.java:86)
        at org.apache.storm.generated.Nimbus$Client.recv_submitTopology(Nimbus.java:306)
        at org.apache.storm.generated.Nimbus$Client.submitTopology(Nimbus.java:290)
        at org.apache.storm.StormSubmitter.submitTopologyInDistributeMode(StormSubmitter.java:326)
        at org.apache.storm.StormSubmitter.submitTopologyAs(StormSubmitter.java:260)
        ... 3 more

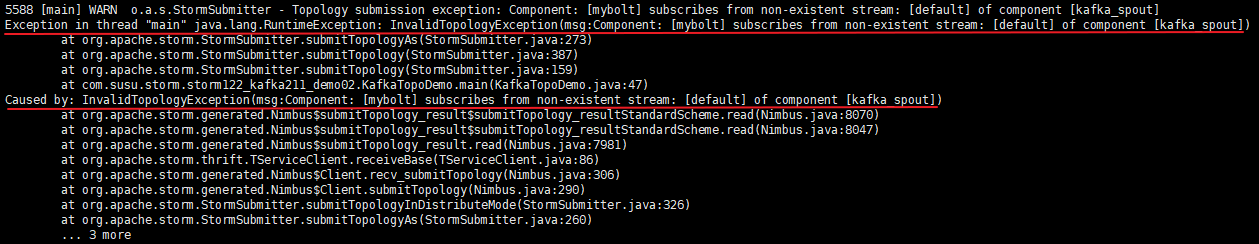

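The screenshot above shows the error.

The error means that when the mybolt component consumes messages from the kafka_spout component, the default stream it subscribes to does not exist.

The error is caused by a mistake in the code, which is listed below.

1) The KafkaTopoDemo class (entry point with the main method, plus the KafkaSpout configuration)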

     1  package com.suhaha.storm.storm122_kafka211_demo02;
     2
     3  import org.apache.kafka.clients.consumer.ConsumerConfig;
     4  import org.apache.storm.Config;
     5  import org.apache.storm.LocalCluster;
     6  import org.apache.storm.StormSubmitter;
     7  import org.apache.storm.generated.AlreadyAliveException;
     8  import org.apache.storm.generated.AuthorizationException;
     9  import org.apache.storm.generated.InvalidTopologyException;
    10  import org.apache.storm.kafka.spout.*;
    11  import org.apache.storm.topology.TopologyBuilder;
    12  import org.apache.storm.tuple.Fields;
    13  import org.apache.storm.tuple.Values;
    14  import org.apache.storm.kafka.spout.KafkaSpoutRetryExponentialBackoff.TimeInterval;
    15  import static org.apache.storm.kafka.spout.KafkaSpoutConfig.FirstPollOffsetStrategy.EARLIEST;
    16
    17  /**
    18   * @author suhaha
    19   * @create 2019-04-28 00:44
    20   * @comment Consume Kafka data with Storm
    21   */
    22
    23  public class KafkaTopoDemo {
    24      public static void main(String[] args) {
    25          final TopologyBuilder topologybuilder = new TopologyBuilder();
    26          // Simple, unreliable spout
    27          // topologybuilder.setSpout("kafka_spout", new KafkaSpout<>(KafkaSpoutConfig.builder("node01:9092,node02:9092,node03:9092", "topic01").build()));
    28
    29          // Detailed spout setup; a helper method builds the KafkaSpoutConfig
    30          topologybuilder.setSpout("kafka_spout", new KafkaSpout<String,String>(newKafkaSpoutConfig("topic01")));
    31
    32          topologybuilder.setBolt("mybolt", new MyBolt("/tmp/storm_test.log")).shuffleGrouping("kafka_spout"); // <-- subscribes to the default stream
    33
    34          // The topology is set up above; now configure Storm
    35          Config stormConf=new Config();
    36          stormConf.setNumWorkers(1);
    37          stormConf.setDebug(true);
    38
    39          if (args != null && args.length > 0) { // submit to the cluster
    40              System.out.println("【run on cluster】");
    41
    42              try {
    43                  StormSubmitter.submitTopology(args[0], stormConf, topologybuilder.createTopology());
    44              } catch (AlreadyAliveException e) {
    45                  e.printStackTrace();
    46              } catch (InvalidTopologyException e) {
    47                  e.printStackTrace();
    48              } catch (AuthorizationException e) {
    49                  e.printStackTrace();
    50              }
    51              System.out.println("Submission finished");
    52
    53          } else { // submit locally
    54              System.out.println("【run on local】");
    55              LocalCluster lc = new LocalCluster();
    56              lc.submitTopology("storm_kafka", stormConf, topologybuilder.createTopology());
    57          }
    58      }
    59
    60
    61      /**
    62       * KafkaSpoutConfig setup
    63       */
    64      private static KafkaSpoutConfig<String,String> newKafkaSpoutConfig(String topic) {
    65          ByTopicRecordTranslator<String, String> trans = new ByTopicRecordTranslator<>(
    66                  (r) -> new Values(r.topic(), r.partition(), r.offset(), r.key(), r.value()),
    67                  new Fields("topic", "partition", "offset", "key", "value"), "stream1"); // <-- stream id is set to stream1
    68          // bootstrap servers and topic
    69          return KafkaSpoutConfig.builder("node01:9092,node02:9092,node03:9092", topic)
    70                  .setProp(ConsumerConfig.GROUP_ID_CONFIG, "kafkaSpoutTestGroup_" + System.nanoTime()) // Kafka consumer group property "group.id"
    71                  .setProp(ConsumerConfig.MAX_PARTITION_FETCH_BYTES_CONFIG, 200)
    72                  .setRecordTranslator(trans) // how the spout turns a Kafka consumer message into a tuple, and which stream the tuple is emitted to
    73                  .setRetry(getRetryService()) // retry policy
    74                  .setOffsetCommitPeriodMs(10_000)
    75                  .setFirstPollOffsetStrategy(EARLIEST) // where to start consuming from
    76                  .setMaxUncommittedOffsets(250)
    77                  .build();
    78      }
    79
    80      /**
    81       * Retry policy setup
    82       */
    83      protected static KafkaSpoutRetryService getRetryService() {
    84          return new KafkaSpoutRetryExponentialBackoff(TimeInterval.microSeconds(500),
    85                  TimeInterval.milliSeconds(2), Integer.MAX_VALUE, TimeInterval.seconds(10));
    86      }
    87  }

2) The bolt class (unrelated to the problem)

     1  package com.suhaha.storm.storm122_kafka211_demo02;
     2
     3  import org.apache.storm.task.OutputCollector;
     4  import org.apache.storm.task.TopologyContext;
     5  import org.apache.storm.topology.IRichBolt;
     6  import org.apache.storm.topology.OutputFieldsDeclarer;
     7  import org.apache.storm.tuple.Tuple;
     8  import java.io.FileWriter;
     9  import java.io.IOException;
    10  import java.util.Map;
    11
    12  /**
    13   * @author suhaha
    14   * @create 2019-04-28 01:05
    15   * @comment The logic in this bolt is very simple: it reads each field from input and prints it,
    16   *          and also writes the data to the file given by path (note that the file ends up on the worker that runs this bolt task,
    17   *          not on the nimbus server, and not necessarily on the server from which the storm job was submitted)
    18   */
    19
    20  public class MyBolt implements IRichBolt {
    21      private FileWriter fileWriter = null;
    22      String path = null;
    23
    24      @Override
    25      public void prepare(Map stormConf, TopologyContext context, OutputCollector collector) {
    26          try {
    27              fileWriter = new FileWriter(path);
    28          } catch (IOException e) {
    29              e.printStackTrace();
    30          }
    31      }
    32
    33      /**
    34       * Constructor
    35       * @param path
    36       */
    37      public MyBolt(String path) {
    38          this.path = path;
    39      }
    40
    41
    42      @Override
    43      public void execute(Tuple input) {
    44          System.out.println(input);
    45          try {
    46              /**
    47               * Read the fields from input
    48               */
    49              System.out.println("=========================");
    50              String topic = input.getString(0);
    51              System.out.println("index 0 --> " + topic); //topic
    52              System.out.println("topic --> " + input.getStringByField("topic"));
    53
    54              System.out.println("-------------------------");
    55              System.out.println("index 1 --> " + input.getInteger(1)); //partition
    56              Integer partition = input.getIntegerByField("partition");
    57              System.out.println("partition-> " + partition);
    58
    59              System.out.println("-------------------------");
    60              Long offset = input.getLong(2);
    61              System.out.println("index 2 --> " + offset); //offset
    62              System.out.println("offset----> " +input.getLongByField("offset"));
    63
    64              System.out.println("-------------------------");
    65              String key = input.getString(3);
    66              System.out.println("index 3 --> " + key); //key
    67              System.out.println("key-------> " + input.getStringByField("key"));
    68
    69              System.out.println("-------------------------");
    70              String value = input.getString(4);
    71              System.out.println("index 4 --> " + value); //value
    72              System.out.println("value--> " + input.getStringByField("value"));
    73
    74              String info = "topic: " + topic + ", partition: " +partition + ", offset: " + offset + ", key: " + key +", value: " + value + " ";
    75              System.out.println("info = " + info);
    76              fileWriter.write(info);
    77              fileWriter.flush();
    78          } catch (Exception e) {
    79              e.printStackTrace();
    80          }
    81      }
    82
    83      @Override
    84      public void cleanup() {
    85          // TODO Auto-generated method stub
    86      }
    87
    88      @Override
    89      public void declareOutputFields(OutputFieldsDeclarer declarer) {
    90          // TODO Auto-generated method stub
    91      }
    92
    93      @Override
    94      public Map<String, Object> getComponentConfiguration() {
    95          // TODO Auto-generated method stub
    96          return null;
    97      }
    98  }

The mistake is in the KafkaTopoDemo class, on lines 32 and 67 of the listing above.

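The cause is as follows. When the RecordTranslator is configured (line 67), the stream id is set to stream1. When the bolt is registered on the TopologyBuilder (line 32), the grouping is declared as shuffleGrouping("kafka_spout"). The error has nothing to do with the grouping strategy itself, only with how it is declared: shuffleGrouping(String componentId) makes mybolt read from the upstream component's default stream, whereas the code sets the stream id of the upstream component (kafka_spout) to stream1. The topology is therefore rejected with InvalidTopologyException(msg:Component: [mybolt] subscribes from non-existent stream: [default] of component [kafka_spout]), which says that the default stream does not exist.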
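To make the mismatch concrete, here is a minimal plain-Java sketch of the kind of check that rejects the topology. It is a simplified stand-in and not Storm's actual implementation: the class StreamSubscriptionSketch and its methods declareStream and validate are hypothetical names invented for this illustration. Only the component ids, the stream ids, and the wording of the error message are taken from the log above.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

/** Simplified model of stream subscription checking (hypothetical, not Storm code). */
public class StreamSubscriptionSketch {
    // The stream id used when a grouping names only the upstream component.
    static final String DEFAULT_STREAM_ID = "default";

    // component id -> stream ids that the component emits to
    private final Map<String, Set<String>> declaredStreams = new HashMap<>();
    // each entry is {subscriber, upstream component, upstream stream}
    private final List<String[]> subscriptions = new ArrayList<>();

    void declareStream(String componentId, String streamId) {
        declaredStreams.computeIfAbsent(componentId, k -> new HashSet<>()).add(streamId);
    }

    // One-argument form: falls back to the default stream.
    void shuffleGrouping(String subscriber, String componentId) {
        shuffleGrouping(subscriber, componentId, DEFAULT_STREAM_ID);
    }

    // Two-argument form: the caller names the stream explicitly.
    void shuffleGrouping(String subscriber, String componentId, String streamId) {
        subscriptions.add(new String[] {subscriber, componentId, streamId});
    }

    /** Returns a message for the first invalid subscription, or null if all are valid. */
    String validate() {
        for (String[] s : subscriptions) {
            Set<String> streams =
                    declaredStreams.getOrDefault(s[1], Collections.<String>emptySet());
            if (!streams.contains(s[2])) {
                return "Component: [" + s[0] + "] subscribes from non-existent stream: ["
                        + s[2] + "] of component [" + s[1] + "]";
            }
        }
        return null;
    }

    public static void main(String[] args) {
        // Same wiring as the failing topology: the spout emits to stream1 only,
        // while the bolt subscribes without naming a stream.
        StreamSubscriptionSketch broken = new StreamSubscriptionSketch();
        broken.declareStream("kafka_spout", "stream1");
        broken.shuffleGrouping("mybolt", "kafka_spout");
        System.out.println(broken.validate());

        // Naming the stream explicitly makes the subscription valid.
        StreamSubscriptionSketch explicit = new StreamSubscriptionSketch();
        explicit.declareStream("kafka_spout", "stream1");
        explicit.shuffleGrouping("mybolt", "kafka_spout", "stream1");
        System.out.println(explicit.validate());
    }
}
```

In this model the first topology fails because the one-argument grouping silently fills in the default stream id, while the second passes because the subscribed stream id matches the one the spout declares.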

The fix is to declare the grouping for mybolt with shuffleGrouping(String componentId, String streamId) and explicitly specify stream1 as the stream to read from. With that change the error no longer occurs.

    topologybuilder.setBolt("mybolt", new MyBolt("/tmp/storm_test.log")).shuffleGrouping("kafka_spout", "stream1");