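I. Introduction

This example uses scrapy-splash to scrape news items from the 36Kr website for a given set of keywords.

Given keywords: 个性化 (personalization); 融合 (convergence); 电视 (television)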
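As a rough sketch of how a semicolon-separated keyword string can become a list of search URLs, the snippet below splits the string, appends each keyword to a base URL, and later recovers the keyword from the last path segment. It uses the standard-library `configparser` in place of the author's `IniFile` helper. The base URL `http://example.com/search/` is a placeholder assumption, not the site's actual search address, and the percent-encoding step is an addition for safety.

```python
from configparser import ConfigParser
from urllib.parse import quote, unquote

# Hypothetical configuration: the section and option names follow the spider,
# but the search URL is a placeholder, not the real search endpoint.
CONFIG_TEXT = """
[section]
information_keywords = 个性化;融合;电视

[kr36]
websearchurl = http://example.com/search/
"""


def build_start_urls(config_text):
    """Return one search URL per keyword found in the configuration text."""
    parser = ConfigParser()
    parser.read_string(config_text)
    keywords = parser.get("section", "information_keywords").split(";")
    base = parser.get("kr36", "websearchurl")
    # Percent-encode each keyword so the URL stays valid for non-ASCII text.
    return [base + quote(word.strip()) for word in keywords if word.strip()]


def keyword_from_url(url):
    """Recover the keyword from the last path segment of a search URL."""
    return unquote(url.rsplit("/", 1)[1])


start_urls = build_start_urls(CONFIG_TEXT)
print([keyword_from_url(url) for url in start_urls])
```

Carrying the keyword in the URL keeps each request self-describing, which is why the spider can later pass it along in `meta`.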

The following information is scraped:

1. News title

2. News link

3. News date

4. News source

II. Site Information

III. Data Scraping

The scraping steps below are based on the site information above.

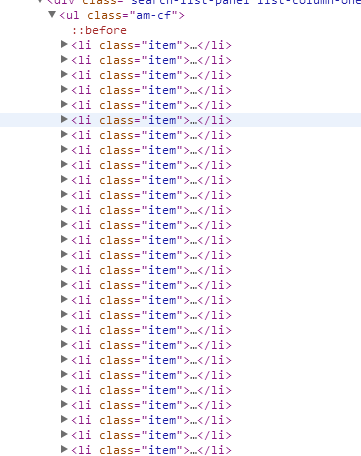

1. Scrape the list of entries first

Code: sels = site.xpath('//li[@class="item"]')
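The same kind of selection can be tried offline. The sketch below uses the standard library's `xml.etree.ElementTree` as a stand-in for Scrapy's `Selector`, since its limited XPath subset supports attribute predicates such as `[@class='item']`. The sample markup is invented for illustration, and a real rendered page would need a proper HTML parser.

```python
import xml.etree.ElementTree as ET

# Hypothetical, simplified markup standing in for a rendered result page.
SAMPLE_PAGE = """
<ul>
  <li class="item"><a href="/p/1.html">first</a></li>
  <li class="item"><a href="/p/2.html">second</a></li>
  <li class="ad"><a href="/ad.html">sponsored</a></li>
</ul>
"""


def select_items(markup):
    """Return every <li class="item"> element, mirroring //li[@class="item"]."""
    root = ET.fromstring(markup.strip())
    return root.findall(".//li[@class='item']")


items = select_items(SAMPLE_PAGE)
print(len(items))
```

Each returned element plays the role of `sel` in the later steps: the link, date, and title checks are all evaluated relative to one list entry.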

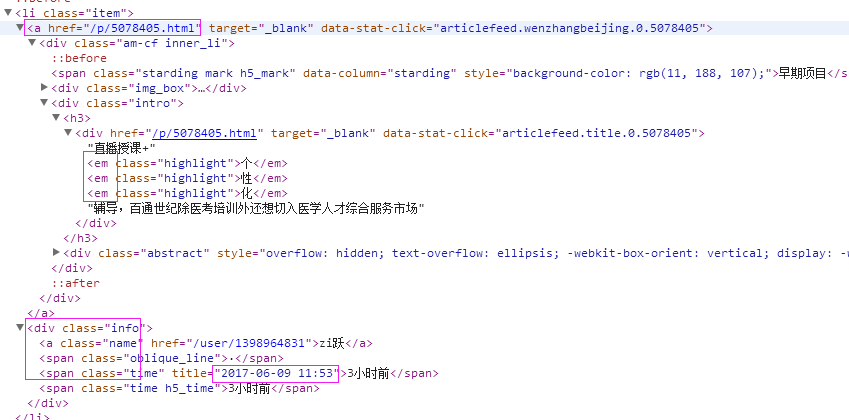

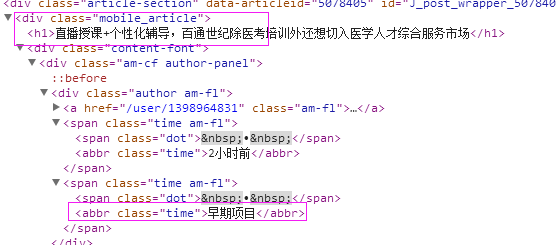

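2. Scrape the title

On the list page, first use the title and date to decide whether an entry is one you need. If it is, follow the entry's link to scrape the source; if not, skip it.

Code (run on the entry's detail page): titles = site.xpath('//div[@class="mobile_article"]/h1/text()')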
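The filtering decision described here can be sketched independently of Scrapy. The example below assumes each list entry has already been reduced to a dictionary with `title`, `date`, and `url` keys; that shape, the function name `wanted_entries`, and the sample entries are all hypothetical.

```python
from datetime import date


def wanted_entries(entries, keyword, today):
    """Yield entries whose title mentions the keyword and that are dated today."""
    for entry in entries:
        if keyword in entry["title"] and entry["date"] == today:
            yield entry


# Hypothetical list-page entries.
ENTRIES = [
    {"title": "智能电视的个性化推荐", "date": date(2017, 4, 15), "url": "/p/1.html"},
    {"title": "电视行业回顾", "date": date(2017, 4, 10), "url": "/p/2.html"},
    {"title": "手机新品发布", "date": date(2017, 4, 15), "url": "/p/3.html"},
]

selected = list(wanted_entries(ENTRIES, "电视", today=date(2017, 4, 15)))
print([entry["url"] for entry in selected])
```

Filtering on the list page first avoids rendering detail pages that would be discarded anyway, which matters when every request goes through Splash.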

3. Scrape the link

Code: url = 'http://36kr.com' + str(sel.xpath('.//a/@href')[0].extract())
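String concatenation works when every `href` is site-relative. As a slightly more defensive sketch, the standard library's `urljoin` also copes with links that are already absolute. The article path used here is made up for illustration.

```python
from urllib.parse import urljoin

BASE_URL = "http://36kr.com"


def absolute_url(href):
    """Resolve an href against the site root, leaving absolute URLs unchanged."""
    return urljoin(BASE_URL, href)


# Hypothetical article paths.
print(absolute_url("/p/5070000.html"))
print(absolute_url("http://36kr.com/p/5070000.html"))
```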

4. Scrape the date

Code: dates = sel.xpath('.//div[@class="info"]/span[2]/@title')
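Once the `title` attribute text is available, the date still has to be pulled out and compared with today. The sketch below assumes the attribute contains exactly one `YYYY-MM-DD` date, possibly followed by a time; the sample string is hypothetical. It swaps the `time.mktime` arithmetic used in the full spider for `datetime.date` subtraction, which counts calendar days directly.

```python
import re
from datetime import date, datetime

DATE_PATTERN = re.compile(r"\d{4}-\d{1,2}-\d{1,2}")


def extract_date(text):
    """Return the single date found in text, or None if there is not exactly one."""
    matches = DATE_PATTERN.findall(text)
    if len(matches) != 1:
        return None
    return datetime.strptime(matches[0], "%Y-%m-%d").date()


def days_between(left, right):
    """Return how many days the left date is after the right date."""
    return (left - right).days


def is_published_on(text, day):
    """Return True when the date embedded in text equals the given day."""
    found = extract_date(text)
    return found is not None and days_between(day, found) == 0


# Hypothetical attribute value.
print(is_published_on("2017-04-15 10:30:00", date(2017, 4, 15)))
```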

5. Scrape the source

Code: sources = site.xpath('//div[@class="author am-fl"]/span[2]/abbr/text()')
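Not every article is guaranteed to carry a source, so the lookup should tolerate a missing node. The sketch below again uses `xml.etree.ElementTree` as a stand-in for Scrapy's `Selector`, with invented markup, and returns `None` when the second `span` or its `abbr` child is absent.

```python
import xml.etree.ElementTree as ET

# ElementTree's XPath subset supports the attribute and position predicates used here.
SOURCE_PATH = ".//div[@class='author am-fl']/span[2]/abbr"


def extract_source(markup):
    """Return the source name from the author block, or None if it is missing."""
    root = ET.fromstring(markup.strip())
    node = root.find(SOURCE_PATH)
    if node is None or node.text is None:
        return None
    return node.text.strip()


# Hypothetical detail-page fragments.
WITH_SOURCE = """
<html><body>
  <div class="author am-fl">
    <span>Editor</span>
    <span><abbr>Example Media</abbr></span>
  </div>
</body></html>
"""
WITHOUT_SOURCE = """
<html><body>
  <div class="author am-fl"><span>Editor</span></div>
</body></html>
"""

print(extract_source(WITH_SOURCE))
print(extract_source(WITHOUT_SOURCE))
```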

IV. Complete Code

    # -*- coding: utf-8 -*-
    import os
    import re
    import time

    from scrapy.selector import Selector
    from scrapy.spiders import Spider
    from scrapy_splash import SplashRequest

    import IniFile
    from splash_test.items import SplashTestItem


    class kr36Spider(Spider):
        name = 'kr36'

        # Read the search keywords and the search URL from the configuration file.
        configfile = os.path.join(os.getcwd(), 'splash_testspiderssetting.conf')
        cf = IniFile.ConfigFile(configfile)
        information_keywords = cf.GetValue("section", "information_keywords")
        information_wordlist = information_keywords.split(';')
        websearchurl = cf.GetValue("kr36", "websearchurl")
        start_urls = []
        for word in information_wordlist:
            print(websearchurl + word)
            start_urls.append(websearchurl + word)

        # Each request has to be wrapped in a SplashRequest.
        def start_requests(self):
            for url in self.start_urls:
                index = url.rfind('/')
                yield SplashRequest(url,
                                    self.parse,
                                    args={'wait': '2'},
                                    meta={'keyword': url[index + 1:]})

        def Comapre_to_days(self, leftdate, rightdate):
            '''
            Compare two date strings and return how many days the left date
            is after the right date.
            :param leftdate: format: 2017-04-15
            :param rightdate: format: 2017-04-15
            :return: number of days
            '''
            l_time = time.mktime(time.strptime(leftdate, '%Y-%m-%d'))
            r_time = time.mktime(time.strptime(rightdate, '%Y-%m-%d'))
            result = int(l_time - r_time) // 86400
            return result

        def date_isValid(self, strDateText):
            # An entry is valid only if it contains exactly one date and that date is today.
            currentDate = time.strftime('%Y-%m-%d')
            datePattern = re.compile(r'\d{4}-\d{1,2}-\d{1,2}')
            strDate = re.findall(datePattern, strDateText)
            if len(strDate) == 1:
                if self.Comapre_to_days(currentDate, strDate[0]) == 0:
                    return True, currentDate
            return False, ''

        def parse(self, response):
            site = Selector(response)
            sels = site.xpath('//li[@class="item"]')
            for sel in sels:
                dates = sel.xpath('.//div[@class="info"]/span[2]/@title')
                if len(dates) == 0:
                    # Skip entries that carry no date at all.
                    continue
                flag, date = self.date_isValid(dates[0].extract())
                # If there is no <em> tag, the title does not contain the search
                # keyword, so the entry is filtered out right here.
                titles = sel.xpath('.//div[@class="intro"]/h3/div/em')
                if flag and len(titles) > 0:
                    url = 'http://36kr.com' + str(sel.xpath('.//a/@href')[0].extract())
                    yield SplashRequest(url,
                                        self.parse_item,
                                        args={'wait': '1'},
                                        meta={'date': date, 'url': url,
                                              'keyword': response.meta['keyword']})

        def parse_item(self, response):
            site = Selector(response)
            titles = site.xpath('//div[@class="mobile_article"]/h1/text()')
            if len(titles) > 0:
                it = SplashTestItem()
                it['title'] = titles[0].extract()
                it['url'] = response.meta['url']
                it['date'] = response.meta['date']
                it['keyword'] = response.meta['keyword']
                sources = site.xpath('//div[@class="author am-fl"]/span[2]/abbr/text()')
                if len(sources) > 0:
                    it['source'] = sources[0].extract()
                return it
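For the spider above to route its requests through Splash, the project's settings.py also needs the scrapy-splash middlewares enabled. The fragment below follows the configuration described in the scrapy-splash README. The address `http://localhost:8050` is an assumption about where the Splash instance is running and should be adjusted to the actual deployment.

```python
# settings.py fragment for scrapy-splash.
# Assumption: a Splash instance is listening on localhost:8050.
SPLASH_URL = 'http://localhost:8050'

# Downloader middlewares that send requests through Splash and handle its cookies.
DOWNLOADER_MIDDLEWARES = {
    'scrapy_splash.SplashCookiesMiddleware': 723,
    'scrapy_splash.SplashMiddleware': 725,
    'scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware': 810,
}

# Spider middleware that avoids storing duplicate Splash arguments.
SPIDER_MIDDLEWARES = {
    'scrapy_splash.SplashDeduplicateArgsMiddleware': 100,
}

# Duplicate filter and cache storage that take Splash arguments into account.
DUPEFILTER_CLASS = 'scrapy_splash.SplashAwareDupeFilter'
HTTPCACHE_STORAGE = 'scrapy_splash.SplashAwareFSCacheStorage'
```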